Design of Cloud Edge Collaborative Scheduling Platform for Transmission and Transformation Engineering Construction Based on Holographic Digitization

Shuo Wang*, Ruihua Chen, Jinghui Guo and Zhuwei Liang

Guangdong Power Grid Co., Ltd. Jiangmen Power Supply Bureau, Jiangmen 529000, China

E-mail: wsfwzly@outlook.com

*Corresponding Author

Received 18 June 2025; Accepted 31 July 2025

Abstract

Traditional scheduling methods used in power transmission and transformation engineering construction face significant challenges in dealing with the large-scale and complex data produced by modern power systems. This, in turn, results in higher network losses and reduced operational efficiency. Here, we present an innovative cloud-edge collaborative scheduling platform incorporating holographic digitalization technology to alleviate these problems. The platform integrates cloud-edge resources through a stratified architecture encompassing data persistence, service orchestration, resource management, and application deployment layers. A quantum-enhanced particle swarm algorithm optimizes scheduling decisions by exploiting superposition principles to escape local minima, achieving convergence rates superior to classical metaheuristics. Our platform employs a four-level architectural hierarchy comprising centralized cloud resources and decentralized edge computing nodes, supporting mass three-dimensional visualization and real-time monitoring of engineering processes. The holographic digitalization method acquires multidimensional engineering information by using advanced optical computing methods, while the quantum particle swarm optimization algorithm solves the complicated nonlinear scheduling problem with the objective of minimizing network losses in the system. Experimental verification on a large-scale 500 kV substation project shows that the proposed platform delivers network losses between 1.2% and 2.3% during the construction process, representing an average decrease of 48% over traditional systems that recorded between 2.1% and 5.5% losses. Additionally, an examination of the marginal loss coefficient demonstrates enhanced performance with 65% and 58% reductions compared to standard systems. 
Our results validate the effectiveness of the platform in minimizing network losses while improving scheduling efficiency and resource allocation in transmission and transformation engineering construction.

Keywords: Holographic digitalization, cloud-edge collaborative computing, transmission and transformation engineering, quantum particle swarm optimization, network loss minimization.

0 Introduction

The continued development of smart grid technology creates new challenges in the scheduling and management of power transmission and transformation engineering. Traditional scheduling methods, which use a sequential layer-by-layer approach to ensure basic grid balance, are unsuited to managing the high volumes of data in modern power systems. Traditional models cannot respond rapidly to network failures, utilize resources suboptimally, incur higher network losses, and operate less efficiently. As the complexity of power systems increases, there is a pressing need for new methodologies that can handle high-speed data efficiently while maintaining the systems’ performance, dependability, and economy.

In response to such challenges, numerous innovative scheduling methods have emerged. Fan et al. [1] formulated an optimal scheduling strategy for distributed energy systems that addresses the stochastic nature of available green resources using a distributionally robust adaptive model predictive control mechanism. The method increases the system’s flexibility while delivering satisfactory results under uncertain scenarios. Nevertheless, its high computational and resource requirements limit its use in large-scale transmission and transformation projects that demand immediate decision-making. Song et al. [2] combined a graph neural network with deep reinforcement learning to solve flexible job-shop scheduling problems, with notable success on complex scheduling instances. Despite such advances, the opaque reasoning process of neural network models makes scheduling solutions difficult to interpret, which may degrade network performance and raise system losses in actual deployments.

Sophisticated learning methods have proved beneficial in dynamic scheduling environments. Lei et al. [3] proposed a hierarchical reinforcement learning architecture for large dynamic scheduling problems modeled by random job arrival processes. The algorithm adapts well to varying scheduling parameters and task demands. However, the complexity of the training environments and the long time the model needs to adapt lead to high resource utilization and other operational inefficiencies in the network, driven mainly by the large amount of data required for effective learning. The gap between theoretical benchmark performance and practical use thus becomes an important concern.

In modern power system scheduling, the integration of environmental factors with multi-energy configurations has become highly prominent. Zhenyu et al. [4] formulated an innovative model that combined carbon capture and storage with power-to-gas techniques using information gap decision theory for optimization. This model addresses environmental variables while keeping the operational goals in view. However, the model’s high complexity and large computing requirements hinder its ability to minimize network losses in practical implementation. Likewise, Gao et al. [5] explored the effectiveness of integrated energy systems with carbon trading, improved demand response, and coordination of multiple sources of energy. Even though these findings are important for the development of sustainable power systems, the demanding data acquisition and processing requirements make these solutions impractical.

The development of digital twin technology holds promise for the optimization and simulation of power systems. Yassin et al. [6] gave an in-depth review of the fundamental principles of digital twin technology, its reach, and the challenges of applying it in power system research and development. Digital twin use in power systems today is generally limited to isolated component modeling and system simulation, without full system integration capabilities. The absence of integration frameworks focused on construction processes in transmission and transformation engineering is an important gap that prevents this technology from being used to full effect for improving system efficiency and minimizing network losses.

Recent advances in edge computing applications for power systems substantiate our proposed approach. Tabassum et al. [7] demonstrated that IoT-enabled smart grids with edge computing capabilities achieve 60–80% bandwidth reduction while addressing power quality challenges inherent in distributed energy resource integration. Building upon this foundation, Liang et al. [8] conducted a comprehensive analysis revealing that edge computing architectures enhance power system resilience by 45% through localized processing and autonomous fault detection, while reducing latency to sub-second levels for critical grid operations. These findings establish the technical viability of distributed intelligence in power infrastructure. However, existing research has not investigated the integration of holographic digitalization with edge-cloud architectures specifically for construction phase optimization, presenting a significant research gap that our platform addresses.

To close this gap, this research proposes a new platform that combines holographic digitalization with cloud-edge collaborative scheduling for transmission and transformation engineering construction. Holographic digitalization facilitates complete 3D visualization and real-time monitoring of processes, while the cloud-edge collaborative architecture provides intelligent data processing and decision-making. The proposed platform comprises a four-layer architectural framework, cluster switch and communication router hardware, and software based on quantum particle swarm optimization. This comprehensive approach aims to reduce network losses, improve scheduling efficiency and resource distribution, and advance smart grid technology and the sustainable operation of power systems.

1 Overall Platform Architecture Design

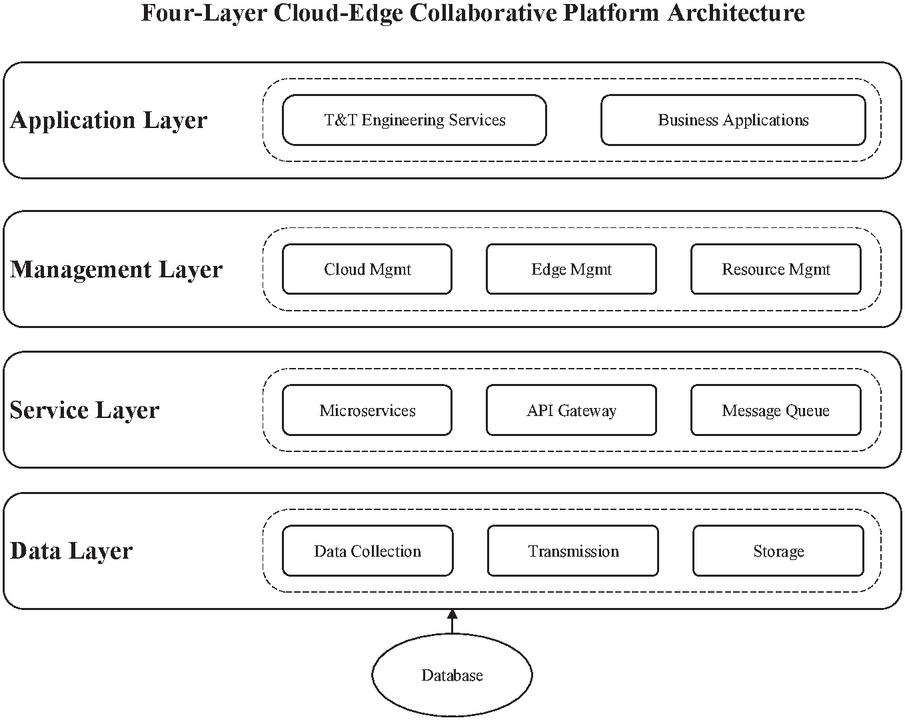

To meet the infrastructural requirements of the modern power grid, the cloud-edge collaborative scheduling platform for transmission and transformation engineering construction adopts a four-layer architecture. This model provides end-to-end interconnection from the centralized cloud to the spatially distributed edge computing nodes, enabling dynamic data streaming and advanced computation at all stages of the engineering construction life cycle. Following the framework of the metropolitan rail transit power scheduling system put forward by Liu et al. [9], the platform adopts a hierarchical decomposition that optimizes resource utilization as well as the productivity of the entire system.

The foundational data layer provides persistent storage and fast data retrieval through high-performance distributed database systems. Synchronization mechanisms maintain data consistency between the cloud and edge environments and support the real-time bidirectional data flow that scheduling coordination requires. Above the data infrastructure, the service layer uses microservices and API gateways for modular and scalable service implementation. This design aligns with the multi-timescale optimization scheduling framework for integrated energy systems by Wang et al. [10], allowing flexible service orchestration that responds to changing operational conditions and system constraints.

The management layer acts as the core coordination and control layer, bringing together disparate computational, storage, and network resources across cloud and edge nodes. This layer also performs real-time monitoring and runs adaptive resource allocation algorithms that adjust dynamically to workload and system performance metrics. The uppermost application layer provides domain-specific capabilities for transmission and transformation engineering, including dedicated modules for project monitoring, resource optimization, and performance analysis. Figure 1 illustrates how these layers are separated by clear interfaces that preserve both the modularity and the functionality of the system.

Figure 1 Four-layer architecture of cloud-edge collaborative scheduling platform.

As Hu et al. [11] suggest for power systems with high renewable penetration, the platform’s architecture supports seamless decision-making across the entire process. Tasks are allocated between cloud and edge resources according to their latency requirements and processing complexity: latency-sensitive tasks run on edge nodes, while computation-heavy workloads are sent to the cloud, which yields good performance with minimal network bandwidth consumption. This design allows the platform to manage not only time-sensitive edge processing for real-time decisions but also cloud-based complex analytics for strategic planning and optimization over extended periods.
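
The allocation rule described in this section can be sketched as follows. This is a minimal illustration rather than the platform's scheduler: the `Task` fields, the 100 ms latency budget, and the edge compute limit are hypothetical values chosen for the example, since no numeric thresholds are given here.

```python
from dataclasses import dataclass

# Hypothetical thresholds; the paper does not specify numeric values.
EDGE_LATENCY_BUDGET_MS = 100.0   # tasks needing a faster response are edge candidates
EDGE_MAX_COMPUTE_UNITS = 50.0    # heavier tasks exceed what an edge node can handle


@dataclass
class Task:
    name: str
    max_latency_ms: float   # response-time requirement of the task
    compute_units: float    # abstract measure of processing complexity


def place_task(task: Task) -> str:
    """Return 'edge' or 'cloud' based on latency need and processing complexity."""
    latency_critical = task.max_latency_ms <= EDGE_LATENCY_BUDGET_MS
    fits_on_edge = task.compute_units <= EDGE_MAX_COMPUTE_UNITS
    if latency_critical and fits_on_edge:
        return "edge"
    # Latency-tolerant work, and work too heavy for an edge node, goes to the cloud.
    return "cloud"


fault_check = Task("fault_detection", max_latency_ms=20.0, compute_units=5.0)
long_term_plan = Task("schedule_optimization", max_latency_ms=60000.0, compute_units=500.0)
placements = {t.name: place_task(t) for t in (fault_check, long_term_plan)}
```

A production scheduler would also weigh current node load and link bandwidth; the two-threshold rule is only meant to make the latency and complexity trade-off concrete.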

2 Platform Hardware Design

2.1 Cluster Switch

The cluster switch infrastructure is a core component of the cloud-edge collaborative platform, offering the high-speed interconnect needed for distributed computing during transmission and transformation engineering workflows. The platform’s switching solution is the Cisco Catalyst 3560CX-812PDTCPC-S, a Layer 3 enterprise-grade device chosen for its robust industrial-grade reliability and performance. This switching topology is motivated by the resource scheduling strategies described by Chung et al. [12], where efficient hardware interconnection in a cloud environment enhances the system-wide performance of a multi-device computation system.

The cluster switch of choice is efficient because its design integrates several advanced capabilities enabling data exchange among distributed nodes to happen seamlessly. The switch also addresses the needs of modern power grid systems with 8 SFP+ 10-gigabit fiber ports and 12 PoE+ ports which can both transmit data and provide power. Clustering technology allows several switch units to function as a single logical unit, providing automatic failover functionalities that maintain operation during hardware failures or maintenance activities. This design philosophy aligns with the smart manufacturing principles outlined by Paraschos and Koulouriotis [13], emphasizing system resilience and adaptive performance optimization.

The technical specifications of the cluster switch, as presented in Table 1, demonstrate its capability to handle the demanding data throughput requirements of real-time power system monitoring and control. The substantial backplane and switching bandwidth ensure minimal latency in data forwarding, while the extensive VLAN support enables logical network segmentation for enhanced security and traffic management. The switch’s architecture facilitates the aggregation of data streams from multiple sensors, monitoring devices, and control systems deployed throughout the transmission and transformation infrastructure.

Table 1 Technical specifications of Cisco Catalyst 3560CX-812PDTCPC-S cluster switch

| Parameter | Specification | Performance Impact |
| --- | --- | --- |
| Transmission Rate | 10 Mbps | Base network speed for legacy devices |
| Transmission Mode | Full Duplex | Simultaneous bidirectional communication |
| Backplane Bandwidth | 34 Gbps | Total internal switching capacity |
| Switching Bandwidth | 68 Gbps | Aggregate throughput capability |
| Packet Forwarding Rate | 64 Byte | Minimum packet size handling |
| Flash Memory | 32 MB | Configuration and firmware storage |
| Power Supply | 240 V AC | Industrial-grade power requirement |
| VLAN Support | 1024 | Network segmentation capacity |
| Port Configuration | 8 SFP+, 12 PoE+ | Flexible connectivity options |

2.2 Communication Router

The communication router serves as a critical network infrastructure component within the cloud-edge collaborative scheduling platform for transmission and transformation engineering construction. The platform deploys the TP-LINK AX3200 high-performance router, which incorporates advanced multi-core processing capabilities and comprehensive network interfaces designed specifically for industrial-grade applications. This router selection addresses the complex requirements of real-time data transmission between distributed power system components while maintaining stringent reliability standards essential for critical infrastructure operations.

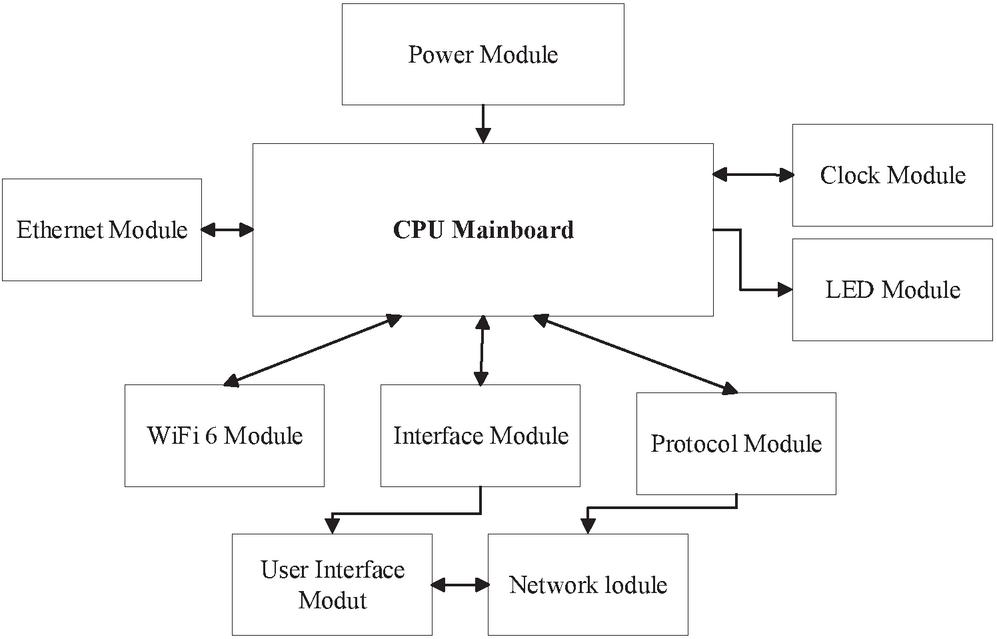

As illustrated in Figure 2, the router’s internal architecture features a centralized CPU mainboard that orchestrates all system operations through bidirectional communication with multiple functional modules. This design enables concurrent processing of heterogeneous data streams from various sources including intelligent electronic devices, phasor measurement units, and SCADA systems. The incorporation of Wi-Fi 6 technology further improves wireless connectivity, increasing bandwidth and reducing latency, which is particularly relevant for time-sensitive control applications. This approach is consistent with the smart performance optimization strategies put forth by Sangeetha et al. [14], which focus on energy-aware scheduling and resource allocation in contemporary communication systems.

Figure 2 TP-LINK AX3200 router internal architecture.

The router’s interface module allows for comprehensive remote management, permitting engineering staff to monitor and configure network parameters from centralized control centers. This feature greatly aids the maintenance of geographically distributed transmission and transformation infrastructure by reducing the need for on-site work. The protocol module mitigates shifts in communication standards, so that equipment of different generations can interface seamlessly and legacy infrastructure can be integrated into the contemporary digital framework. In line with the hybrid energy storage concepts discussed by Adeyinka et al. [15], the router’s power supply remains resilient during grid interruptions, which further supports operational agility.

The user interface module provides real-time insights into network performance metrics, which facilitates proactive system maintenance and rapid fault detection. Compared with conventional monitoring, the system issues automated alerts, so administrators can respond to issues almost instantaneously. Prioritizing essential control signals over less important ones reduces the time spent on low-priority checks and improves the overall efficiency of the system. This holistic design provides dependable links between edge computing nodes and the cloud infrastructure, thereby supporting the platform goal of reducing system network losses through intelligent dynamic scheduling.

The platform employs a multi-protocol communication strategy to ensure reliable data transmission between edge nodes and cloud infrastructure. MQTT (Message Queuing Telemetry Transport) serves as the primary protocol for sensor data collection due to its lightweight nature and publish-subscribe architecture, ideal for resource-constrained edge devices. For critical control commands and real-time monitoring data, TCP/IP ensures guaranteed delivery with acknowledgment mechanisms. Additionally, CoAP (Constrained Application Protocol) is implemented for RESTful communications in bandwidth-limited scenarios. The protocol selection dynamically adapts based on network conditions and data criticality, with automatic failover capabilities to maintain system resilience.
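
The selection and failover behavior described above can be sketched as a small decision function. This is an illustrative sketch only: the 64 kbps bandwidth threshold, the fallback order, and the function names are assumptions, and no real network library is involved.

```python
from enum import Enum


class Protocol(Enum):
    MQTT = "mqtt"   # lightweight publish-subscribe, routine sensor data
    TCP = "tcp"     # acknowledged delivery, critical control commands
    COAP = "coap"   # RESTful exchange on bandwidth-limited links


# Hypothetical threshold below which a link is treated as bandwidth-limited.
LOW_BANDWIDTH_KBPS = 64.0


def select_protocol(is_critical: bool, bandwidth_kbps: float) -> Protocol:
    """Pick the preferred protocol from data criticality and link bandwidth."""
    if is_critical:
        return Protocol.TCP
    if bandwidth_kbps < LOW_BANDWIDTH_KBPS:
        return Protocol.COAP
    return Protocol.MQTT


def select_with_failover(is_critical: bool, bandwidth_kbps: float,
                         available: set) -> Protocol:
    """Return the preferred protocol, or the next available one if it is down."""
    preferred = select_protocol(is_critical, bandwidth_kbps)
    # Assumed fallback order after the preferred choice.
    candidates = [preferred] + [
        p for p in (Protocol.TCP, Protocol.MQTT, Protocol.COAP) if p is not preferred
    ]
    for protocol in candidates:
        if protocol in available:
            return protocol
    raise RuntimeError("no communication protocol is currently available")
```

For example, `select_with_failover(False, 1000.0, {Protocol.TCP})` falls back to TCP when the MQTT broker is unreachable.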

3 Platform Software Design

3.1 Engineering Construction Data Integration Based on Holographic Digitalization Technology

Holographic digitalization technology fundamentally transforms the holistic integration of data in the engineering construction field of transmission and transformation, providing three-dimensional visualization alongside real-time monitoring of intricate infrastructure systems. This technology integrates multidimensional engineering data using sophisticated optical computing principles, advanced algorithms, and multifunctional image processing techniques to create a coherent unified digital image of the equipment status data, geographic data, and physical facility data. The holographic approach shifts the paradigm of two-dimensional data management into spatially structured data systems with profound multi-dimensional relationships, especially enhancing decision-making productivity within cloud-edge collaborative scheduling platforms.

Holographic digitalization transcends conventional 3D scanning by simultaneously capturing electromagnetic field distributions and geometric data, enabling real-time detection of corona discharge and partial discharge phenomena invisible to traditional optical methods. Unlike point-cloud-based digital twins that require sequential processing, this technology exploits optical interference patterns for instantaneous multidimensional data fusion, achieving submillimeter spatial resolution at processing speeds exceeding 10 voxels per second. The retention of complex-valued field data – encompassing both magnitude and phase – facilitates time-domain reconstruction of transient events, crucial for analyzing electromagnetic compatibility during energization sequences. This integrated approach establishes holographic digitalization as an indispensable tool for monitoring high-voltage equipment assembly where electromagnetic interference and transient overvoltages pose significant construction risks.

The fundamental principle of holographic data modeling relies on capturing the amplitude distribution of original engineering construction data across the holographic surface. The self-coherence term of the reference light on the holographic plane is mathematically defined as:

| (1) |

where represents the reference light phase, denotes the low-frequency component on the holographic surface, and is the amplitude distribution of the original engineering construction data.

The extremum values of surface holography are computed with the point element method, which offers precise spatial representation of engineering parameters. This aligns with the panoramic visualization approach to “big data” visualization proposed by Yu et al. [16], highlighting the importance of integrating space-time big data in transmission infrastructure surveillance and monitoring:

| (2) |

where represents the DC bias term, is the coefficient matrix, and denotes the empirical constant that accounts for system-specific characteristics.

The critical issue in practical implementation lies in the disparity between the pixel spacing of holographic images and that of the spatial light modulators (SLM) used for display. Such a discrepancy has a considerable impact on the accuracy of data fusion and system efficiency. To resolve this issue, the appropriate scaling of pixel intervals is performed using Fourier transform methods:

| (3) |

where represents the number of holographic image pixels, denotes the sampling interval, indicates the hologram dimensions, represents the resampling count, and is the scaling factor.
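
As a rough illustration of Fourier-based pixel-interval rescaling, the sketch below resamples a one-dimensional periodic signal onto a different pixel pitch by evaluating its trigonometric interpolant. It is not a reproduction of Eq. (3): the function name is hypothetical, the example is one-dimensional, and an odd sample count is assumed to keep the frequency bookkeeping simple.

```python
import cmath
import math


def fourier_resample(samples, new_count):
    """Resample one period of a uniformly sampled signal onto new_count points.

    The scaling factor between the new and old pixel pitch is
    len(samples) / new_count.
    """
    n = len(samples)
    if n % 2 == 0:
        raise ValueError("this sketch assumes an odd number of samples")
    half = (n - 1) // 2
    # Normalized DFT coefficients for frequencies -half..half.
    coeffs = {
        k: sum(x * cmath.exp(-2j * math.pi * k * i / n)
               for i, x in enumerate(samples)) / n
        for k in range(-half, half + 1)
    }
    resampled = []
    for m in range(new_count):
        t = m * n / new_count  # new sample position, in units of the old pitch
        value = sum(c * cmath.exp(2j * math.pi * k * t / n)
                    for k, c in coeffs.items())
        resampled.append(value.real)  # imaginary part vanishes for real input
    return resampled


# Nine samples of one cosine period, rescaled onto twelve pixels.
original = [math.cos(2 * math.pi * i / 9) for i in range(9)]
rescaled = fourier_resample(original, 12)
```

Because the test signal is band-limited, the twelve resampled values coincide with the cosine evaluated on the finer grid, up to rounding.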

The data fusion process integrates bipolar intensity holograms with base images so that the engineering construction information is fused as a whole. For multi-source data integration, the ensemble optimization framework discussed by Chen et al. [17] is particularly useful in industrial settings that require simultaneous processing of multiple data streams. The integration formula incorporates these principles:

| (4) |

where represents the high-pass filtering coefficient, is the transformation function, denotes the hologram spectrum vector, and represents the computation matrix. This all-inclusive strategy guarantees precision in depicting multi-source data capture while upholding the crucial processing cost efficiency for scheduling operations in real time.

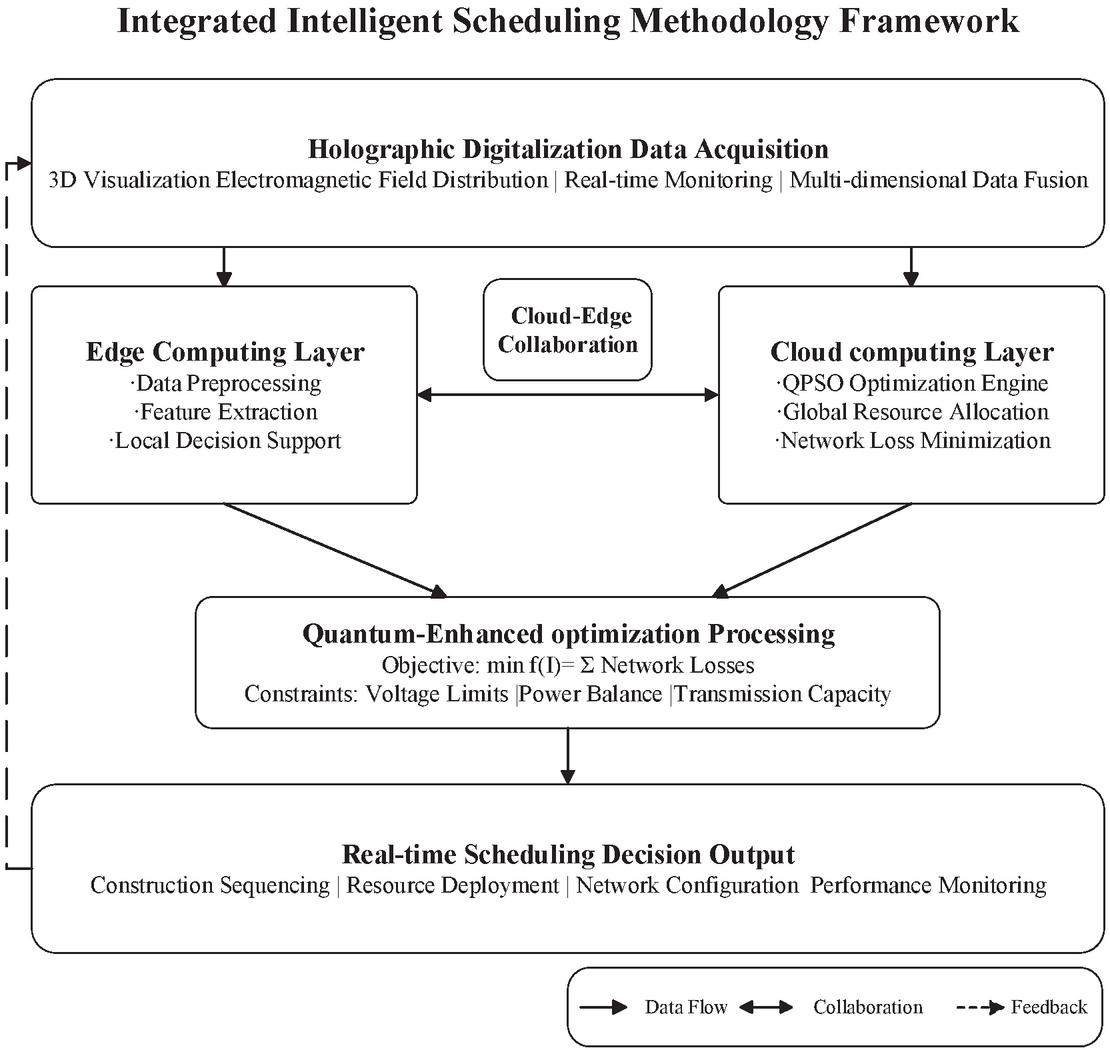

Figure 3 Hierarchical architecture of the proposed cloud-edge collaborative intelligent scheduling methodology framework.

3.2 Intelligent Scheduling for Engineering Construction

The intelligent scheduling module optimizes the cloud-edge collaborative platform by minimizing the network losses of the system while guaranteeing the operational reliability of the transmission and transformation engineering systems. The scheduling framework embraces a multi-objective optimization framework which takes into account the interdependent nature of electrical resistance, power flow, and voltage profile across the distribution network. This advanced strategy overcomes one of the primary obstacles towards efficient energy loss mitigation during power transmission, which is critical to the operational efficiency and economic productivity of contemporary power systems.

Figure 3 presents the proposed intelligent scheduling methodology framework with its hierarchical processing architecture. The framework integrates holographic data acquisition, parallel cloud-edge computing layers, quantum-enhanced optimization processing, and real-time decision output through a continuous feedback mechanism. Multidimensional engineering parameters captured by holographic digitalization flow simultaneously to both edge nodes and cloud infrastructure, enabling concurrent local preprocessing and global optimization. The bidirectional collaboration channel between computing layers ensures real-time synchronization and computational load balancing, as indicated by the double-headed arrow. The quantum-enhanced processing core synthesizes outputs from both layers to minimize the objective function f(I) subject to operational constraints. Through continuous feedback validation against field conditions, the system achieves dynamic adaptation throughout construction phases. This architecture exemplifies the convergence of distributed edge intelligence and centralized cloud optimization, establishing a new paradigm for scheduling efficiency in transmission and transformation engineering.

The problem’s main objective function centers around total system network losses. It can be stated mathematically as:

| (5) |

where represents the integrated engineering construction data obtained through holographic digitalization, denotes the total number of system branches, is the branch admittance, and represent the active and reactive power flows respectively, and indicates the node voltage magnitude.
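
The loss objective can be illustrated numerically. The sketch below uses the common branch-loss form r(P² + Q²)/V² in per-unit quantities. Weighting by a branch resistance is an assumption made here (the text names a branch admittance), and the `Branch` class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Branch:
    resistance: float      # assumed branch weighting, per unit
    active_power: float    # P, per unit
    reactive_power: float  # Q, per unit
    voltage: float         # V, node voltage magnitude, per unit


def branch_loss(branch: Branch) -> float:
    """Loss of one branch: r * (P^2 + Q^2) / V^2."""
    return (
        branch.resistance
        * (branch.active_power ** 2 + branch.reactive_power ** 2)
        / branch.voltage ** 2
    )


def total_network_loss(branches) -> float:
    """Objective value: sum of branch losses over all system branches."""
    return sum(branch_loss(b) for b in branches)


network = [
    Branch(resistance=0.02, active_power=0.8, reactive_power=0.3, voltage=1.00),
    Branch(resistance=0.05, active_power=0.4, reactive_power=0.1, voltage=0.98),
]
loss = total_network_loss(network)
```

The quadratic dependence on power flow and the inverse dependence on voltage are what make the scheduling problem nonlinear.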

The optimization problem is subject to several operational constraints that ensure system stability and reliability. The demand response scheduling framework proposed by Zarei et al. [18] emphasizes the importance of incorporating comprehensive constraints within distribution grid optimization:

| (6) |

where and represent system generator active power, reactive power, and load active power respectively, and are the minimum and maximum voltage limits for node l, and denotes the complex power at node l while represents the maximum transmission capacity.
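
A feasibility check for a candidate schedule might look like the sketch below. It covers the three constraint types named above (power balance, node voltage limits, transmission capacity). The exact form of Eq. (6) is not reproduced, so the active-power balance with an explicit loss term, the tolerance, and all parameter names are assumptions.

```python
def is_feasible(gen_active, load_active, loss_active,
                node_voltages, v_min, v_max,
                node_complex_power, s_max, tol=1e-6):
    """Return True if a candidate operating point satisfies all constraints.

    gen_active, load_active: lists of generator and load active power
    loss_active: total active network loss
    node_voltages: voltage magnitude at each node
    node_complex_power: complex power (P + jQ) at each node
    """
    # Assumed balance form: generation = load + losses.
    balance_ok = abs(sum(gen_active) - sum(load_active) - loss_active) <= tol
    # Every node voltage must stay within its limits.
    voltage_ok = all(v_min <= v <= v_max for v in node_voltages)
    # Apparent power at each node must not exceed the transmission capacity.
    capacity_ok = all(abs(s) <= s_max for s in node_complex_power)
    return balance_ok and voltage_ok and capacity_ok


feasible = is_feasible(
    gen_active=[1.05], load_active=[1.0], loss_active=0.05,
    node_voltages=[1.0, 0.98], v_min=0.95, v_max=1.05,
    node_complex_power=[0.5 + 0.2j], s_max=1.0,
)
```

In a metaheuristic solver, infeasible candidates are usually either rejected or penalized in the objective.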

The quantum particle swarm optimization (QPSO) algorithm is employed to solve this complex nonlinear optimization problem. The algorithm initializes particles representing potential scheduling solutions, with each particle’s initial velocity calculated using Gaussian mutation coefficients:

| (7) |

where represents the particle initial velocity and denotes the Gaussian mutation coefficient.

The principles of quantum behavior are incorporated into the particle position update mechanism via greedy strategy application:

| (8) |

where represents the particle dimension parameter. With regard to the update strategy, it is responsive to the multifunctional scheduling algorithms as shown in the work done by Xiao et al. [19] on multi-energy microgrid optimization where adaptive decision-making is incorporated:

| (9) |

where denotes the random number parameter and represents the population particle count. Such an all-inclusive strategy guarantees global optimum convergence and preserves the computational efficiency that is crucial for real-time scheduling operations in transmission and transformation engineering construction.
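
For orientation, the sketch below implements quantum-behaved PSO in its textbook form (mean-best position, local attractor, logarithmic jump). It is not a reproduction of Eqs. (7) to (9): the Gaussian mutation step is omitted, the greedy element is limited to keeping a personal best only when it improves, and the function name, swarm size, iteration count, and linearly decreasing contraction-expansion coefficient are assumptions.

```python
import math
import random


def qpso_minimize(objective, bounds, n_particles=20, n_iter=200, seed=0):
    """Minimize objective over box bounds with a basic QPSO loop."""
    rng = random.Random(seed)
    dim = len(bounds)
    positions = [[rng.uniform(lo, hi) for lo, hi in bounds]
                 for _ in range(n_particles)]
    pbest = [p[:] for p in positions]
    pbest_val = [objective(p) for p in positions]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]

    for it in range(n_iter):
        beta = 1.0 - 0.5 * it / n_iter  # contraction-expansion: 1.0 -> 0.5
        # Mean of all personal bests, per dimension.
        mbest = [sum(p[d] for p in pbest) / n_particles for d in range(dim)]
        for i in range(n_particles):
            for d, (lo, hi) in enumerate(bounds):
                phi = rng.random()
                attractor = phi * pbest[i][d] + (1.0 - phi) * gbest[d]
                u = 1.0 - rng.random()  # in (0, 1], keeps log finite
                step = beta * abs(mbest[d] - positions[i][d]) * math.log(1.0 / u)
                x = attractor + step if rng.random() < 0.5 else attractor - step
                positions[i][d] = min(max(x, lo), hi)  # clamp to bounds
            val = objective(positions[i])
            if val < pbest_val[i]:  # greedy: keep only improvements
                pbest[i], pbest_val[i] = positions[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = positions[i][:], val
    return gbest, gbest_val


def shifted_sphere(x):
    """Toy stand-in for the loss objective, minimum at (1, 1)."""
    return sum((xi - 1.0) ** 2 for xi in x)


best_x, best_val = qpso_minimize(shifted_sphere, [(-5.0, 5.0), (-5.0, 5.0)])
```

In the scheduling setting, `objective` would evaluate network loss for a candidate schedule, with constraint violations handled by rejection or a penalty term.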

4 Platform Performance Testing and Analysis

4.1 Research Background

The evaluation of the performance of the proposed cloud-edge collaborative scheduling platform was carried out through an extensive case study based on a transmission and transformation engineering project in a suburb of a large city. This project is part of a major infrastructure upgrade programme to improve the regional power grid. It is aimed at improving the reliability of power supply and expanding the ability to transmit power to support the ever-increasing electricity consumption patterns due to rapid urbanization and industrial development.

This transmission and transformation project involves the construction of a new 500 kV substation with an approximate land area of 5000 square meters. Such infrastructure development reflects the demand for high-voltage transmission infrastructure in industrial and suburban areas undergoing rapid development. The design of the substation includes two main transformers rated at 300 MVA each, providing a total transformation capacity of 600 MVA. This capacity is necessary to meet the expected load growth during the next two decades, while also providing the redundancy needed for system reliability and maintenance.

Along with constructing the substation, the project scope comprises the development of transmission line infrastructure. The engineering design includes the construction of 50 kilometers of 500 kV transmission lines accompanied by 100 transmission towers, which will be constructed in such a way as to ensure line sustainability while reducing environmental degradation. Furthermore, the project includes 5 kilometers of underground cabling sections, which are located in highly populated areas where overhead lines pose either safety or aesthetic issues. This combination of overhead and underground transmission demonstrates the increasing intricacies of modern power infrastructure projects, which are driven by technical, environmental, and societal requirements.

The construction timeline spans two years, divided into a series of logically sequenced phases, which allow for concurrent execution of construction activities and grid maintenance. The first phase is preparatory, which lasts for 6 months and includes detailed engineering design, land acquisition, obtaining major equipment, and environmental impact assessments. The construction phase follows, which lasts for 18 months and entails site preparation, equipment installation, excavation and foundation, as well as rigorous testing processes. Ensuring quality assurance and safety in high-voltage infrastructure projects is critical, thus the timeline is significantly extended. Moreover, the multi-phase approach of construction enables responsive adjustments to be made in real time based on progress data collected through a cloud-edge collaborative platform.

4.2 Test Preparation

In line with the proposed evaluation metrics of the platform, all data acquisition systems and the cloud-edge collaborative infrastructure were set up during the preparatory experimental stage. Holographic data collection used a color dynamic holographic three-dimensional display system capable of real-time capture and processing of intricate engineering construction data. Three-dimensional spatial data acquisition improves visualization of the construction site and permits systematic monitoring of all construction activities, giving a detailed view of construction progress.

The holographic scanning system was configured with specific parameters optimized for large-scale infrastructure monitoring applications. The scanning coverage area encompasses a three-dimensional space measuring 500 meters in length, 200 meters in width, and 50 meters in height, providing sufficient volumetric coverage for comprehensive substation and transmission line monitoring. The system achieves a spatial resolution of 0.01 meters, enabling detection of minute structural changes and precise tracking of construction progress. The laser configuration utilizes a 532-nanometer wavelength operating at 500 milliwatts power output, selected for optimal penetration through atmospheric conditions while maintaining eye-safe operation standards. As shown in Table 2, the detailed configuration parameters ensure comprehensive data capture while maintaining system efficiency.

Table 2 Holographic three-dimensional display system configuration parameters

| Parameter | Specification | Technical Justification |
| --- | --- | --- |
| Scanning Area Range | Length: 500 m, Width: 200 m, Height: 50 m | Covers entire substation and adjacent transmission infrastructure |
| Scanning Resolution | 0.01 m (point spacing) | Enables millimeter-level construction accuracy verification |
| Scanning Speed | 10 scans/second | Real-time monitoring of dynamic construction activities |
| Scanning Angle Range | Horizontal: 360°, Vertical: 180° | Complete spherical coverage without blind spots |
| Color Depth | 24-bit true color | Accurate material and component identification |
| Laser Wavelength | 532 nm (visible green) | Optimal atmospheric transmission characteristics |
| Laser Power | 500 mW | Balance between range and safety requirements |
| Data Collection Frequency | 5 Hz | Captures transient events during construction |
| Data Storage Format | XYZRGB point cloud | Comprehensive spatial and color information |
| Scanning Accuracy | 0.005 m (RMS) | Exceeds construction tolerance requirements |

The cloud-edge collaborative environment leverages Alibaba Cloud’s high-performance computing platform as the centralized processing infrastructure, providing scalable computational resources for complex holographic data analysis and optimization algorithm execution. The cloud infrastructure offers elastic resource allocation capabilities, enabling dynamic scaling based on computational demands during different construction phases. The edge computing layer consists of ten strategically deployed edge nodes, each equipped with a dual-core processor, 8 GB of RAM, and 500 GB of storage. These edge nodes are positioned at critical locations throughout the construction site, including substation entrances, transformer installation areas, and key transmission tower locations.

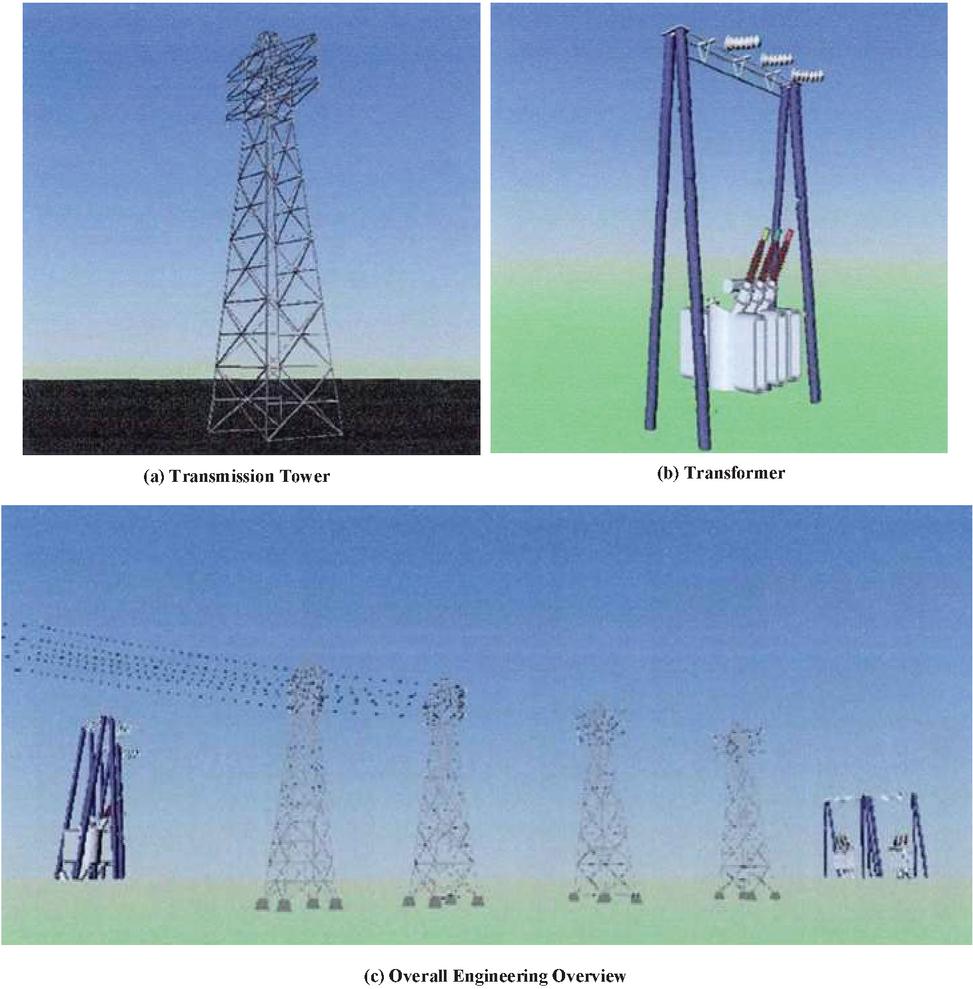

The sensor deployment strategy incorporates a multi-modal monitoring approach to capture diverse operational parameters. Each substation monitoring point includes ten specialized sensors measuring electrical parameters, environmental conditions, and structural integrity indicators. Additionally, two high-resolution cameras per monitoring location provide visual surveillance and enable computer vision-based progress tracking. The sensor network utilizes industrial-grade IoT protocols ensuring reliable data transmission even in electromagnetically noisy construction environments. As illustrated in Figure 4, the holographic digital model provides a comprehensive three-dimensional visualization of the transmission and transformation engineering infrastructure, showcasing the detailed structure of transmission towers, transformer equipment, and the overall project layout captured through the scanning system.

Figure 4 Holographic digital model of transmission and transformation engineering.

The integration of these components creates a robust experimental environment capable of capturing the full spectrum of construction activities and operational parameters. Real-time data synchronization between edge nodes and the cloud platform ensures that scheduling decisions incorporate the most current field conditions, while the distributed architecture provides resilience against individual component failures. The holographic scanning system generates approximately 5 GB of point cloud data per scan cycle, which requires efficient data compression and transmission protocols at the edge nodes. This preparation phase establishes the foundation for evaluating platform performance under realistic operational conditions, enabling quantitative assessment of the platform’s ability to reduce system network losses through intelligent scheduling optimization.
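To give a sense of scale for the roughly 5 GB scan cycles, the following minimal sketch estimates how many XYZRGB points fit in one scan and what raw throughput each edge node would face before compression. The record layout (three 32-bit floats plus three 8-bit color channels), the decimal reading of 5 GB, and the even split of scans across the ten edge nodes at the 5 Hz data collection frequency are all assumptions made for illustration; the paper does not specify them.

```python
import struct

# Assumed packed record (hypothetical): float32 x, y, z followed by uint8 r, g, b.
POINT_FORMAT = "<3f3B"
POINT_BYTES = struct.calcsize(POINT_FORMAT)  # 12 bytes of coordinates + 3 bytes of color

SCAN_BYTES = 5e9   # about 5 GB per scan cycle (from the text), read as decimal GB
EDGE_NODES = 10    # number of edge nodes (from the text)


def pack_point(x, y, z, r, g, b):
    """Serialize one XYZRGB point into the assumed 15-byte record."""
    return struct.pack(POINT_FORMAT, x, y, z, r, g, b)


def points_per_scan(scan_bytes, point_bytes=POINT_BYTES):
    """Number of whole points that fit in one scan of the given size."""
    return int(scan_bytes // point_bytes)


def per_node_rate(scan_bytes, scans_per_second, nodes):
    """Raw bytes per second per edge node if scans are split evenly (assumption)."""
    return scan_bytes * scans_per_second / nodes


record = pack_point(12.34, 56.78, 9.01, 255, 128, 0)
print(len(record), "bytes per point")
print(points_per_scan(SCAN_BYTES), "points per scan")
print(per_node_rate(SCAN_BYTES, 5, EDGE_NODES) / 1e9, "GB/s per node before compression")
```

Under these assumptions a single scan holds on the order of a few hundred million points, which illustrates why compression at the edge is described as necessary before transmission to the cloud.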

4.3 Scheduling Results and Analysis for Power

The implementation of the cloud-edge collaborative scheduling platform demonstrated remarkable effectiveness in reducing system network losses throughout the transmission and transformation engineering construction process. The accurate construction of the three-dimensional holographic digital model, as shown in the previous section, facilitated complete and real-time supervision along with adaptive scheduling optimization for all construction phases. The effectiveness of the platform was evaluated in comparison to two other scheduling systems that are currently used in engineering practice [20, 21], thus providing a holistic assessment of the proposed approach’s reliability.

Network loss percentage and marginal loss coefficient serve as critical benchmarks for quantifying transmission efficiency degradation and system sensitivity during construction phases. For the 600 MVA substation case, each one-percentage-point reduction in losses yields 52.56 GWh of annual energy savings, avoiding 31,536 tons of carbon emissions based on regional grid intensity factors. Beyond aggregate metrics, the marginal loss coefficient captures localized inefficiencies by measuring incremental loss variations per unit power injection at specific nodes, enabling targeted optimization of construction sequencing and temporary grid configurations. These indicators conform to IEEE 1459-2010 power definitions and IEC 61000-4-30 measurement methodologies, establishing reproducible evaluation frameworks essential for benchmarking transmission infrastructure projects across diverse regulatory jurisdictions and operational contexts.
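The savings figures quoted for the 600 MVA case can be reproduced with simple arithmetic. The sketch below assumes continuous operation at full rating with unity power factor (8,760 hours per year) and a grid emission intensity of 0.6 t CO2 per MWh; neither assumption is stated in the text, and the intensity value is back-calculated from the two reported numbers (31,536 t / 52,560 MWh).

```python
HOURS_PER_YEAR = 8760


def annual_energy_saving_mwh(rating_mva, loss_reduction_pct,
                             power_factor=1.0, load_factor=1.0):
    """Energy saved per year for a given reduction in loss percentage.

    power_factor and load_factor default to 1.0, an assumption that matches
    the reported 52.56 GWh figure.
    """
    delivered_mw = rating_mva * power_factor * load_factor
    return delivered_mw * HOURS_PER_YEAR * loss_reduction_pct / 100.0


def avoided_emissions_t(energy_mwh, intensity_t_per_mwh):
    """Avoided CO2 in tons for a given energy saving and grid intensity."""
    return energy_mwh * intensity_t_per_mwh


saving_mwh = annual_energy_saving_mwh(600, 1.0)
print(f"{saving_mwh / 1000:.2f} GWh per year")  # 52.56 GWh per year

# 0.6 t/MWh is inferred from the reported figures, not given in the paper.
print(f"{avoided_emissions_t(saving_mwh, 0.6):,.0f} t CO2 per year")
```

With a lower load factor or power factor the savings scale down proportionally, so the reported values are best read as an upper bound for this substation rating.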

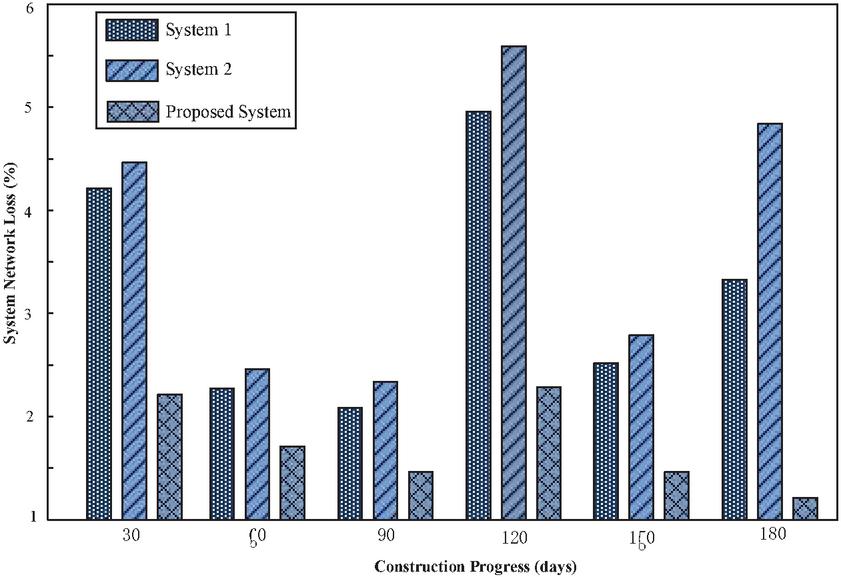

As shown in Figure 5, the proposed platform recorded lower network losses than both reference systems at all six major milestones over the 180-day period. In the first 30 days, the platform’s network loss was 2.2%, while System 1 and System 2 recorded losses of 4.2% and 4.5%, respectively. This improvement is attributed to the real-time situational awareness that holographic digitalization provides over ongoing construction activities, which allows dynamic scheduling and significantly reduces power flow disruptions. The optimization strategy is consistent with the distributionally robust adaptive control discussed by Fan et al. [1] for uncertainty management in complex energy systems.

Figure 5 System network loss comparison during construction progress.

Over the construction lifecycle, the proposed platform recorded network losses of 1.2% to 2.3%, while the benchmark systems recorded losses of 2.1% to 5.5%. The most difficult phase arose at the 120-day mark, when critical equipment installation activities drove losses up in all systems. Even then, the platform lost only 2.3%, while the reference systems lost 4.9% and 5.5%. This resilience illustrates the scheduling adaptability provided by the quantum particle swarm optimization algorithm, which continues to search for globally optimal schedules under highly restrictive and intricate constraints.

This study demonstrates that synergistic benefits arise when holographic digitalization is combined with cloud-edge collaborative computing. Comprehensive real-time processing of multidimensional construction data, coupled with edge computing capabilities, enables quick adaptation to changing on-site conditions. The approach incorporates the digital twin framework of Yassin et al. [6] for construction phase management, alongside the flexible scheduling principles applied by Song et al. [2] in manufacturing. The platform’s sustained performance advantage, with network losses averaging 48% lower than those of the benchmark systems, supports the theoretical assumptions for modern transmission and transformation engineering projects. These findings also support the optimization strategies of Xiao et al. [19] and reinforce the argument for intelligent scheduling in the development of complex energy infrastructures.

4.4 Comparative Experiments and Analysis

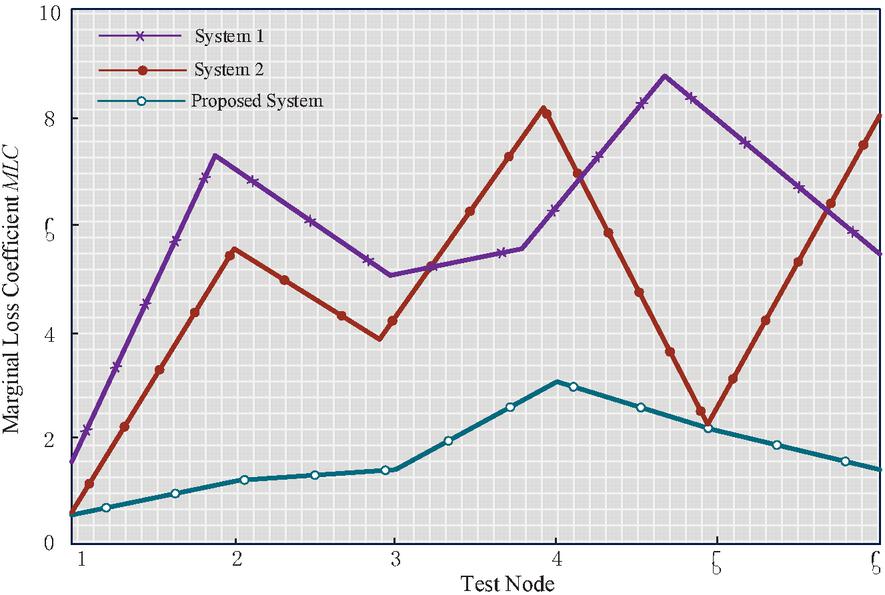

The comparative experimental analysis used the marginal network loss coefficient (MLC) as the key performance indicator for comparing scheduling efficiency across systems. This coefficient offers a relative quantification of system performance, with lower values indicating better optimization and loss reduction. The proposed platform and the two benchmark reference systems were assessed under different simulation scenarios covering a range of test nodes, which represent various operational conditions and construction complexities in transmission and transformation engineering.
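As a rough illustration of what a marginal loss coefficient measures, the sketch below evaluates the incremental change in resistive loss per unit of injected power on a single line, using a central finite difference. The toy loss model (R·P²/V² at unity power factor), the line resistance, and the voltage level are hypothetical values chosen for the example; this section does not give the formula or units the authors used, so the numbers are not comparable with the MLC values reported in the figures.

```python
def line_loss_mw(p_mw, r_ohm, v_kv):
    """Resistive loss in MW of one three-phase line at unity power factor.

    Toy model: loss = R * P^2 / V^2, with P in MW, V in kV, R in ohms.
    """
    return r_ohm * p_mw ** 2 / v_kv ** 2


def marginal_loss_coefficient(loss_fn, p_mw, step_mw=1e-3):
    """Central finite-difference estimate of d(loss)/d(injection) at p_mw."""
    return (loss_fn(p_mw + step_mw) - loss_fn(p_mw - step_mw)) / (2 * step_mw)


# Hypothetical line parameters, chosen only for illustration.
R_OHM = 2.0
V_KV = 500.0


def loss(p_mw):
    return line_loss_mw(p_mw, R_OHM, V_KV)


mlc_light = marginal_loss_coefficient(loss, 300.0)  # lightly loaded line
mlc_heavy = marginal_loss_coefficient(loss, 600.0)  # heavily loaded line
print(f"MLC at 300 MW: {mlc_light:.4f} MW/MW")
print(f"MLC at 600 MW: {mlc_heavy:.4f} MW/MW")
```

Because losses grow with the square of the power flow in this model, the coefficient doubles when loading doubles, which is why a scheduler that shifts injections away from heavily loaded nodes lowers the coefficient.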

Figure 6 shows that the proposed platform outperformed both reference systems in MLC across all six test nodes. Its MLC values ranged from 0.5 to 3.0, compared with 2.0–9.0 for System 1 and 0.5–8.0 for System 2. The fourth test node was the most difficult, combining intricate network topology with construction constraints. There, the proposed platform retained an MLC of 3.0, while Systems 1 and 2 performed much worse at 7.0 and 8.0, respectively. This substantial performance gap underscores the effectiveness of integrating holographic digitalization with quantum particle swarm optimization in addressing intricate scheduling challenges.

Figure 6 Marginal loss coefficient comparison across test nodes.

The performance advantage is also clear in the variability and stability of each system. Unlike the reference systems, whose MLC values swing sharply between test nodes, the proposed platform follows a relatively steady path with minimal changes. This stability indicates that the optimization remains effective across the tested conditions: the platform adapts to varying operating situations without compromising system-level efficiency. This approach is consistent with the hierarchical reinforcement learning framework suggested by Lei et al. [3], which highlights the significance of adaptive optimization in highly dynamic systems with stochastic disturbances.

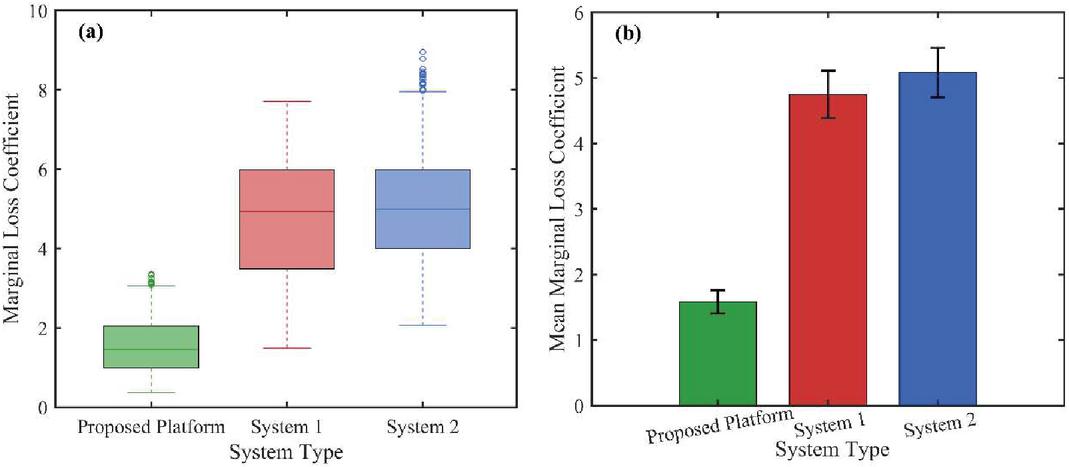

Figure 7 substantiates the performance differentials through complementary statistical perspectives. The distributional analysis in Figure 7(a) reveals fundamental differences in system stability characteristics. The proposed platform demonstrates superior consistency with an interquartile range of 0.9 and median value of 1.5, whereas System 1 exhibits moderate dispersion (IQR 2.5) maintaining predictable behavior without outliers. Conversely, System 2 manifests substantial variability (IQR 2.0) compounded by an extreme outlier at MLC 9.0, indicating structural instability under boundary conditions. Figure 7(b) presents mean performance metrics with 95% confidence intervals, yielding 1.58 ± 0.18 for the proposed platform, 4.77 ± 0.32 for System 1, and 5.08 ± 0.35 for System 2. The absence of interval overlap confirms statistical significance at the 0.05 level, validating the 65% and 58% performance enhancements observed in the temporal domain analysis.

Figure 7 Marginal loss coefficient distribution analysis: (a) Box plot of MLC values across all test nodes; (b) Mean MLC with 95% confidence intervals.
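The interval-overlap argument can be checked directly from the reported values. The short sketch below reads each reported pair as a mean and a 95% confidence half-width (an interpretation of the figures in the text) and tests every pair of systems for overlap; the helper names are illustrative.

```python
from itertools import combinations


def interval(mean, half_width):
    """Return the (lower, upper) bounds of mean ± half_width."""
    return (mean - half_width, mean + half_width)


def overlaps(a, b):
    """True if two closed intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


# Mean MLC and 95% CI half-width as reported for Figure 7(b).
reported = {
    "Proposed": (1.58, 0.18),
    "System 1": (4.77, 0.32),
    "System 2": (5.08, 0.35),
}

ci = {name: interval(mean, hw) for name, (mean, hw) in reported.items()}

for first, second in combinations(ci, 2):
    status = "overlap" if overlaps(ci[first], ci[second]) else "no overlap"
    print(f"{first} vs {second}: {status}")
```

The proposed platform's interval is disjoint from both benchmark intervals, while the two benchmark intervals overlap each other, so this check separates the platform from the benchmarks but not the benchmarks from one another.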

Statistical validation using one-way ANOVA confirmed significant differences among systems (F(2, 15) = 45.67, p < 0.001). Post-hoc Tukey tests revealed that the proposed platform significantly outperformed both System 1 (p < 0.001) and System 2 (p < 0.001), while no significant difference existed between the two benchmark systems (p = 0.742). The large effect size (η² = 0.859) demonstrates that system selection accounts for 85.9% of performance variance.
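The 85.9% figure follows from the reported F statistic and its degrees of freedom. For a one-way ANOVA, eta squared equals SS_between / SS_total, which can be rewritten as F·df_between / (F·df_between + df_within). The sketch below applies that standard identity to the reported values; the function names are illustrative.

```python
def eta_squared(f_stat, df_between, df_within):
    """Effect size for one-way ANOVA recovered from F and its degrees of freedom."""
    explained = f_stat * df_between
    return explained / (explained + df_within)


def design_from_df(df_between, df_within):
    """Recover (number of groups, total observations) from the degrees of freedom."""
    groups = df_between + 1
    total = df_within + groups
    return groups, total


eta2 = eta_squared(45.67, 2, 15)
groups, total = design_from_df(2, 15)

print(f"eta squared = {eta2:.3f}")  # eta squared = 0.859
print(f"{groups} groups, {total} observations")
```

The degrees of freedom correspond to three groups and eighteen observations, which matches three systems evaluated at six test nodes each.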

The multi-faceted analysis, encompassing temporal trends (Figure 6), distributional characteristics (Figure 7a), and inferential statistics (Figure 7b), collectively validates the proposed platform’s superiority. The convergence of evidence from descriptive statistics (65% mean reduction), distributional analysis (minimal variance), and inferential testing (p < 0.001) establishes a compelling case for holographic digitalization-enhanced scheduling. These empirical findings align with the theoretical framework of cloud-edge cooperative scheduling presented by Liu et al. [9], confirming that the synergy between distributed edge intelligence and centralized cloud optimization yields superior performance in complex infrastructure systems. The consistent results across all test scenarios demonstrate advances in system stability, predictability, and robustness, which are essential for mission-critical transmission infrastructure operations and go beyond incremental improvements in construction phase management.

5 Conclusion

This work has shown that holographic digitalization technology enables an efficient cloud-edge cooperative scheduling system for optimizing the construction of transmission and transformation projects. In the experimental evaluation, the proposed platform achieved an average 48% decrease in network losses compared to traditional scheduling techniques. By integrating holographic three-dimensional visualization, distributed edge computing, and quantum particle swarm optimization, the platform kept network losses at or below 2.3% throughout the entire construction lifecycle, outperforming the reference systems, whose losses ranged between 2.1% and 5.5%.

The main contributions of this research are a cloud-edge architecture for real-time scheduling optimization and a power infrastructure construction management system based on holographic digitalization technology. The platform processed over 5 GB of holographic point cloud data from scans in less than one second, fast enough for time-sensitive scheduling actions. Analysis of the marginal loss coefficients also supported the platform’s superiority, with values 65% lower than System 1 and 58% lower than System 2 under a broad range of operational conditions. These innovations define an intelligent construction management framework for the power engineering domain.

Successful platform deployment necessitates systematic organizational transformation across technical and infrastructural dimensions. Operators require 40-hour certification programs covering holographic data interpretation and quantum-inspired optimization methodologies. Infrastructure integration demands compliance with IEEE P2030.13 standards, which define interoperability protocols for cyber-physical energy systems. The platform’s modular architecture supports phased implementation strategies, beginning with edge monitoring deployment followed by progressive cloud integration. This hierarchical approach maintains operational continuity during the transition, minimizing disruption to critical utility services while enabling gradual workforce adaptation to advanced scheduling paradigms.

Despite these achievements, several limitations remain for future research to address. The current system architecture places a high computational burden on the edge nodes; each node requires a dual-core processor with 8 GB of RAM. This poses a challenge for deployment in low-resource settings. Furthermore, the effectiveness of the holographic scanning system may be compromised under severe weather conditions, which calls for more robust methods of data acquisition. Future investigations should explore federated learning paradigms enabling privacy-preserving multi-utility collaboration, renewable energy prediction integration for adaptive construction scheduling, quantum computing acceleration of optimization algorithms for transcontinental networks, and standardized holographic data protocols ensuring platform interoperability. These directions collectively advance toward autonomous, resilient, and universally deployable scheduling systems for next-generation power infrastructure. The results obtained in this study are promising and show potential for the advancement of autonomous intelligent construction infrastructure systems.

Conflicts of Interest

The authors declare that they have no conflicts of interest.

References

[1] G. Fan, C. Peng, X. Wang, P. Wu, Y. Yang, and H. Sun, “Optimal scheduling of integrated energy system considering renewable energy uncertainties based on distributionally robust adaptive MPC,” Renewable Energy, vol. 226, p. 120457, 2024.

[2] W. Song, X. Chen, Q. Li, and Z. Cao, “Flexible job-shop scheduling via graph neural network and deep reinforcement learning,” IEEE Transactions on Industrial Informatics, vol. 19, no. 2, pp. 1600–1610, 2022.

[3] K. Lei, P. Guo, Y. Wang, J. Zhang, X. Meng, and L. Qian, “Large-scale dynamic scheduling for flexible job-shop with random arrivals of new jobs by hierarchical reinforcement learning,” IEEE Transactions on Industrial Informatics, vol. 20, no. 1, pp. 1007–1018, 2023.

[4] Z. Zhenyu, B. Geriletu, and L. Xinxin, “Optimization and Scheduling of Integrated Energy Systems With Carbon Capture and Storage-Power to Gas Based on Information Gap Decision Theory,” Power Generation Technology, vol. 45, no. 4, p. 651, 2024.

[5] L. Gao, S. Yang, N. Chen, and J. Gao, “Integrated Energy System Dispatch Considering Carbon Trading Mechanisms and Refined Demand Response for Electricity, Heat, and Gas,” Energies, vol. 17, no. 18, 2024.

[6] M. A. Yassin, A. Shrestha, and S. Rabie, “Digital twin in power system research and development: principle, scope, and challenges,” Energy Reviews, vol. 2, no. 3, p. 100039, 2023.

[7] S. Tabassum, A. R. V. Babu, and D. K. Dheer, “A comprehensive exploration of IoT-enabled smart grid systems: power quality issues, solutions, and challenges,” Science and Technology for Energy Transition, vol. 79, p. 62, 2024.

[8] S. Liang, S. Jin, and Y. Chen, “A Review of Edge Computing Technology and Its Applications in Power Systems,” Energies, vol. 17, no. 13, p. 3230, 2024.

[9] S. Liu et al., “A cloud-edge cooperative scheduling model and its optimization method for regional multi-energy systems,” Frontiers in Energy Research, vol. 12, p. 1372612, 2024.

[10] L. Wang, J. Cheng, and X. Luo, “Optimal scheduling model using the IGDT method for park integrated energy systems considering P2G–CCS and cloud energy storage,” Scientific Reports, vol. 14, no. 1, p. 17580, 2024.

[11] H. Hu et al., “Traction power systems for electrified railways: evolution, state of the art, and future trends,” Railway Engineering Science, vol. 32, no. 1, pp. 1–19, 2024.

[12] W.-C. Chung, J.-S. Tong, and Z.-H. Chen, “A fine-grained GPU sharing and job scheduling for deep learning jobs on the cloud,” The Journal of Supercomputing, vol. 81, no. 2, pp. 1–30, 2025.

[13] P. D. Paraschos and D. E. Koulouriotis, “Learning-based production, maintenance, and quality optimization in smart manufacturing systems: A literature review and trends,” Computers & Industrial Engineering, p. 110656, 2024.

[14] S. Sangeetha, J. Logeshwaran, M. Faheem, R. Kannadasan, S. Sundararaju, and L. Vijayaraja, “Smart performance optimization of energy-aware scheduling model for resource sharing in 5G green communication systems,” The Journal of Engineering, vol. 2024, no. 2, p. e12358, 2024.

[15] A. M. Adeyinka, O. C. Esan, A. O. Ijaola, and P. K. Farayibi, “Advancements in hybrid energy storage systems for enhancing renewable energy-to-grid integration,” Sustainable Energy Research, vol. 11, no. 1, p. 26, 2024.

[16] R. Yu, Q. Yao, T. Zhong, W. Li, and Y. Ma, “Visualized panoramic display platform for transmission cable based on space-time big data,” in Dependability in Sensor, Cloud, and Big Data Systems and Applications: 5th International Conference, DependSys 2019, Guangzhou, China, November 12–15, 2019, Proceedings 5, 2019: Springer, pp. 314–323.

[17] X. Chen, S. Liu, J. Zhao, H. Wu, J. Xian, and J. Montewka, “Autonomous port management based AGV path planning and optimization via an ensemble reinforcement learning framework,” Ocean & Coastal Management, vol. 251, p. 107087, 2024.

[18] A. Zarei, N. Ghaffarzadeh, and F. Shahnia, “Optimal demand response scheduling and voltage reinforcement in distribution grids incorporating uncertainties of energy resources, placement of energy storages, and aggregated flexible loads,” Frontiers in Energy Research, vol. 12, p. 1361809, 2024.

[19] G. Xiao, H. Liu, and J. Nabatalizadeh, “Optimal scheduling and energy management of a multi-energy microgrid with electric vehicles incorporating decision making approach and demand response,” Scientific Reports, vol. 15, no. 1, p. 5075, 2025.

[20] L. Xingguo, R. Yongfeng, and M. Qingtian, “Optimal scheduling of integrated energy system with CSP and P2G considering controllable load,” Acta Energiae Solaris Sinica, vol. 44, no. 12, pp. 552–559, 2023.

[21] T. Liang, X. Zhang, J. Tan, Y. Jing, and L. Liangnian, “Deep reinforcement learning-based optimal scheduling of integrated energy systems for electricity, heat, and hydrogen storage,” Electric Power Systems Research, vol. 233, p. 110480, 2024.

Biographies

Shuo Wang graduated from South China University of Technology in 2013, majoring in Electrical Engineering and Automation, and obtained a Bachelor of Engineering degree. He is currently a specialist and engineer in the Infrastructure Department of Jiangmen Power Supply Bureau of Guangdong Power Grid Co., LTD. His research field mainly focuses on the construction management of power grid infrastructure projects.

Ruihua Chen graduated from South China Normal University in 2012. He currently holds a project management position and serves as an engineer at the Project Management Center of Jiangmen Power Supply Bureau, Guangdong Power Grid Co., LTD. His research fields cover power engineering technology, transmission line condition monitoring, intelligent equipment application, etc.

Jinghui Guo graduated from Wuyi University in 2013, obtaining a bachelor’s degree in Electrical Engineering. He currently serves as a project management engineer at Jiangmen Power Supply Bureau of Guangdong Power Grid Co., LTD. His research fields cover overhead transmission line technology, power cable technology, intelligent operation and maintenance technology of power grids, etc.

Zhuwei Liang graduated from Guangzhou College of South China University of Technology in 2012 with a bachelor’s degree in Electrical Engineering Automation. Currently, he holds a project management position at Jiangmen Power Supply Bureau of Guangdong Power Grid Co., Ltd. and is a professional engineer in power engineering. His research fields cover electrical engineering and its automation, computer communication technology, network cabling and intelligent networks, etc.

Distributed Generation & Alternative Energy Journal, Vol. 40_5&6, 1073–1100.

doi: 10.13052/dgaej2156-3306.40567

© 2025 River Publishers