Modeling of Real Time Traffic Flow Monitoring System Using Deep Learning and Unmanned Aerial Vehicles

Sachin Upadhye1, S. Neelakandan2,*, K. Thangaraj3, D. Vijendra Babu4, N. Arulkumar5 and Kashif Qureshi6

1Department of Computer Application, Shri Ramdeobaba College of Engineering and Management, Nagpur

2Department of CSE, R.M.K Engineering College, Chennai, India

3Department of IT, Sona College of Technology, Salem, India

4School of Electronics Engineering, Vellore Institute of Technology, Vellore – 632 014, Tamil Nadu, India

5Department of Data Science and Statistics, CHRIST (Deemed to be University), Bangalore, India

6Department of CSE, Sanskriti University Mathura, Uttar Pradesh, India

E-mail: upadhyesd@rknec.edu; snksnk17@gmail.com; thangarajkesavan@sonatech.ac.in; drdvijendrababu@gmail.com; itsprofarul@gmail.com; srk1521@gmail.com

*Corresponding Author

Received 13 September 2021; Accepted 22 November 2021; Publication 15 November 2022

Abstract

Intelligent video surveillance using unmanned aerial vehicles (UAVs) has grown considerably in the transportation sector in recent years. Real-time collection of traffic videos by UAVs is useful for monitoring traffic flow and road conditions. Since traffic jams have become common in urban areas, artificial intelligence (AI) based recognition techniques are needed to achieve effective traffic flow monitoring. In addition, a traffic flow monitoring system can help traffic managers initiate efficient dispersal actions. Therefore, this study designs a real-time traffic flow monitoring system using deep learning (DL) and UAVs, called RTTFM-DL. The proposed RTTFM-DL technique aims to detect vehicles, count vehicles, estimate speed, and determine traffic flow. An efficient vehicle detection model is proposed using the Faster Regional Convolutional Neural Network (Faster RCNN) with a Residual Network (ResNet). A detection-line based vehicle counting approach, which relies on the overlap ratio, is also designed. Finally, traffic flow is monitored based on the estimated vehicle count and vehicle speed. To validate the performance of the RTTFM-DL technique, a series of experiments is carried out and the results are examined from several aspects. The experimental outcomes show that the RTTFM-DL technique outperforms recent techniques, reaching an accuracy of 0.975.

Keywords: Deep learning, traffic flow monitoring, unmanned aerial vehicles, video surveillance, vehicle counting, speed estimation.

1 Introduction

In recent times, unmanned aerial vehicles (UAVs) have begun to reshape the field of traffic data gathering, unleashing opportunities to advance the theory and practice of modeling, collecting, and understanding traffic on the basis of large video streams [1]. This is due to developments in the technical specifications of UAVs, their levels of automation, and advances in security systems and telemetry [2]. Furthermore, computer vision (CV) is currently at the forefront of machine learning; various methods and pretrained DL networks exist to analyze large numbers of images with strong detection capabilities. Among the benefits of UAV-based systems are their stability and flexibility. They can transmit information from the air in real time while continuing their observation task [3]. Observation is arguably the field in which UAVs are most useful. The use of UAVs has become a common trend in many high-altitude tasks, such as checking crops, exploring topography, monitoring traffic, and providing situational awareness [4]. In particular, AI with deep learning (DL) methods is reaching a wider range of applications, including scene recognition, voice recognition, image classification, and object detection. The aim of DL is to extract higher-level features from raw data across many layers. These features are later employed to represent the real-time input. In the area of transportation, integrating DL methods and UAVs shows promise for traffic analysis, an opportunity that is currently being explored. The goal of traffic monitoring is to gather information for evaluating the traffic network.
Present technology for monitoring traffic includes surveillance cameras, inductive loop detectors [5], GPS, and remote sensing satellites deployed in specific locations. An inductive loop detector uses the percentage of time that a point is occupied by vehicles to compute traffic density. However, this method is inconvenient and expensive to operate. Inductive loops have the following disadvantages: poor detection of small vehicles, susceptibility to road deterioration and heavy vehicles, major traffic disruption during installation, sensitivity to temperature fluctuations, interference from metallic road construction materials, and a high risk of the loop and feeder cable being stolen.

Additionally, it is not practical to install loop detectors throughout an entire road network. In recent times, GPS has become a common means of collecting dynamic traffic information. However, the large amount of information that GPS gathers is overwhelming to handle. Surveillance cameras are an alternative method for monitoring traffic. Traffic jams are common in most big cities. In several locations, the issue is worsening as the growing number of private cars leads to more congestion on urban roads [6], which costs time, wastes resources, causes inconvenience, adds to air pollution, and contributes to traffic accidents. Data collection and the development of a prediction model are the two main steps in traffic congestion forecasting. Each step of the methodology is critical and, if not completed correctly, can affect the results. After data collection, data processing is important for preparing the training and testing datasets. The case area varies between studies. Traffic congestion is, therefore, a threat to property and life, and it is essential for traffic officials and governments to reduce it. Different approaches for traffic congestion detection have been introduced. For example, [7] presented a GPS trace analysis to identify traffic congestion. However, this technique has two constraints. First, because the amount of traffic information gathered using GPS is hard to manage in a timely manner, errors can result, leading to imprecise analyses. Second, the privacy of the driver cannot be well protected when GPS devices are used. To tackle the first limitation, AI-based detection techniques provide an efficient way of delivering accurate and timely guidance so that drivers can avoid congested roads. Furthermore, real-time traffic congestion detection helps traffic managers begin efficient dispersal measures.
An approach related to surveillance cameras is the wireless sensor network (WSN), which performs admirably in traffic control systems, a real-time application. The reason is that the WSN design is capable of meeting all real-time traffic and road condition monitoring needs.

This study presents a real-time traffic flow monitoring system using deep learning (DL) and UAVs, called RTTFM-DL, with the intention of detecting vehicles, counting vehicles, estimating speed, and determining traffic flow. An efficient vehicle detection model is proposed using the Faster Regional Convolutional Neural Network (Faster RCNN) with a Residual Network (ResNet). Next, a detection-line based vehicle counting approach is designed, which is based on the overlap ratio. Lastly, the traffic flow is monitored based on the estimated vehicle count and vehicle speed. To validate the RTTFM-DL technique, a sequence of experiments is carried out and the results are inspected from distinct aspects. In short, the contributions of the paper are summarized as follows.

• Develop a novel RTTFM-DL technique for traffic flow monitoring using UAVs.

• Detect vehicles, count vehicles, estimate speed, and determine traffic flow.

• Design an efficient vehicle detection model using Faster RCNN with the ResNet model.

• Present a detection-line based vehicle counting approach, which is based on the overlap ratio.

• Monitor the traffic flow based on the estimated vehicle count and vehicle speed.

2 Literature Survey

Ham et al. [8] investigate the effect of several significant factors, namely the size of the objects, the number of samples, and the combination of datasets, on identifying multiclass vehicles with DL methods in UAV images. Three recognition methods, Fast RCNN, RFCN, and SSD, were compared in order to suggest guidelines for model selection. Varia et al. [9] proposed automated road extraction from UAV-based remote sensing data. Road extraction from UAV data is highly helpful in city planning, GPS-based applications, traffic management, and so on. DL methods, i.e., FCN and GAN models, are employed for extracting roads from the UAV datasets presented in the study. The FCN performs semantic segmentation on an image, whereas the GAN generates output images according to the model it has learned.

Vlahogianni et al. [10] proposed two complementary approaches: (i) recognizing traffic conditions in real time with minimum computational cost, and (ii) classifying and localizing vehicles and estimating traffic variables (speed, density, volume) on road segments from videos taken using a UAV. The problems are formulated as classification problems and addressed with CNN models. The use of pretrained CNNs is also explored. The process is then analyzed in terms of its feasibility and accuracy. Zhang et al. [11] aim to perform traffic analysis with UAV-based videos and DL methods. The road traffic videos are gathered via a UAV hovering at a fixed location. Advanced DL techniques are used to identify the moving objects in the videos. The related mobility metrics are estimated to conduct traffic analysis and to assess the consequences of traffic congestion. Micheal et al. [12] proposed a new method for detecting and tracking objects from UAV data. A DSOD model is fully trained on UAV images. Deep supervision and dense layer-wise connections enrich the learning of DSOD, which performs object detection well compared with pretrained detectors. An LSTM model is employed to track the detected objects. Gupta and Verma [13] introduce a new aerial image traffic surveillance and monitoring system based on popular and advanced DL object detection models (SSD, Fast RCNN, YOLOv4, and YOLOv3) with the AUAIR dataset. The dataset is highly imbalanced; to address this, a further five hundred images were collected using web mining. The contributions of that study are twofold. First, it systematically demonstrates the irrelevance of ground-view images for detecting objects in aerial images. Second, a rigorous comparison of these algorithms is performed to investigate their efficacy.

Zhang et al. [14] take the statistics of road traffic flow as the starting point. After investigating the characteristics of videos shot by UAVs, they employ a DL architecture based on Fast RCNN to train vehicle recognition models for detecting vehicle targets in video. The motion tracks of vehicles in the scene are derived from the object recognition results. Ammour et al. [15] proposed an automated solution to the problem of detecting and counting cars in UAV images. The presented model starts by segmenting the input image into small homogeneous regions that serve as candidate locations for cars. Then, a window is extracted around each region, and a DL model is employed to mine descriptive features from the window. They use a deep CNN (DCNN) pretrained on large auxiliary data as a feature extraction tool, combined with a linear SVM classifier to classify regions into “car” and “no-car” classes. Ye et al. [16] proposed a method for detecting and tracking UAVs from a single camera mounted on a different UAV. First, background motion is estimated through a perspective transformation model, and moving object candidates are then detected in the background-subtracted image via a DL classifier trained on an automatically labelled dataset. For every moving object candidate, spatio-temporal traits are found through optical flow matching, and candidates are then pruned according to their motion pattern relative to the background. A Kalman filter is applied to the pruned moving objects to improve temporal consistency among the candidate detections.

In summary, Ham et al. [8] used a large number of UAV images to detect multiclass vehicles with DL techniques, namely Fast RCNN, RFCN, and SSD. According to Varia et al. [9], remote sensing data can be used to extract roads. Transportation, city planning, and traffic management all benefit from road extraction with UAVs. The work uses DL methods such as FCN and GAN models to extract roads from UAV data: the FCN performs semantic segmentation on an image, while the GAN generates the output image.

According to Micheal et al. [12], a DSOD model can be fully trained using UAV images. Compared with pretrained detectors, deep supervision and dense layer-wise connections enhance DSOD learning. For tracking, an LSTM model is used. With the AUAIR dataset, Gupta and Verma [13] provide a new aerial image traffic surveillance and monitoring system. A further 500 images were acquired using web mining to balance the dataset. Their study makes two contributions. First, it shows that ground-view images are of little relevance for detecting objects in aerial images. Second, the efficacy of the algorithms is assessed through a rigorous comparison.

Zhang et al. [14] first investigate the properties of UAV video in order to train vehicle recognition models. The object recognition results are used to construct vehicle motion tracks in the captured scenes. Ammour et al. [15] offered automated car detection and counting in UAV images. Their method starts by splitting the input image into smaller homogeneous regions. A window is taken around each region, from which a DL model extracts descriptive features that are used in the next step. With the help of a linear SVM classifier, the regions are classified into “car” and “no-car”. According to Ye et al. [16], a single camera on one UAV can detect and track other UAVs. DL classifiers trained on an automatically labelled dataset are used to detect moving objects in the background-subtracted image. Spatio-temporal features of the moving objects are then found, and the candidates are pruned based on their motion relative to the background. A Kalman filter is applied to the pruned moving objects to refine the candidate detections.

3 The Proposed RTTFM-DL Technique

In this study, a new RTTFM-DL technique is developed to detect vehicles, count vehicles, estimate speed, and determine traffic flow. The RTTFM-DL technique involves a vehicle detection model using Faster RCNN with the ResNet model. Moreover, a detection-line based vehicle counting approach is designed, which is based on the overlap ratio. Furthermore, the traffic flow is monitored based on the estimated vehicle count and vehicle speed.

3.1 Vehicle Detection Using Faster RCNN Technique

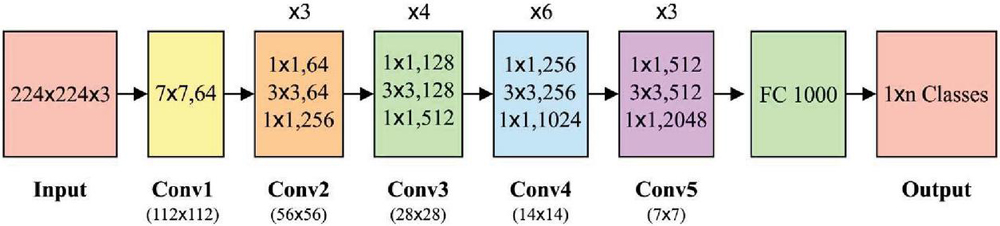

First, the UAVs collect traffic videos, which are then split into a collection of frames. Then, the vehicles appearing in the frames are detected using the Faster RCNN technique. Feature extraction (FE) aims to obtain the features of the original image. Initially, images of a uniform size are obtained through a normalization procedure [17]; afterward, image features are extracted by the convolution and pooling layers of the CNN. ResNet is a skip-connection architecture in which a shortcut directly bypasses one or more layers. It resolves the vanishing gradient problem caused by stacking many layers. The VGG16 network could not extract the image features adequately, so it has been replaced with ResNet. Input images are first resized to a uniform size and then fed to the FE network to produce a uniform output. The FE phase reduces the image by a factor of 16, as with VGG16 and with the MobileNet used in the experimental part. Figure 1 illustrates the structure of the ResNet-50 model.

Figure 1 Structure of ResNet-50.
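The skip connection and the 16-fold reduction described above can be illustrated with a minimal Python sketch. It is not the authors' implementation: the names `residual_block`, `feature_map_size`, and `zero_transform` are hypothetical, plain lists stand in for feature tensors, and rounding the feature map size up is an assumption, since the exact size depends on the padding used by the network.

```python
import math
from typing import Callable, List, Tuple

Vector = List[float]


def residual_block(x: Vector, transform: Callable[[Vector], Vector]) -> Vector:
    """Return transform(x) + x: the learned residual plus the identity shortcut."""
    fx = transform(x)
    if len(fx) != len(x):
        raise ValueError("the identity shortcut requires matching shapes")
    return [f + xi for f, xi in zip(fx, x)]


def feature_map_size(height: int, width: int, stride: int = 16) -> Tuple[int, int]:
    """Spatial size of the feature map after an overall reduction by `stride`.

    Rounding up is an assumption; real networks may round differently.
    """
    return math.ceil(height / stride), math.ceil(width / stride)


def zero_transform(v: Vector) -> Vector:
    """Hypothetical stand-in for stacked layers that have learned nothing."""
    return [0.0 for _ in v]


# With a zero residual the block reduces to the identity mapping, so the input
# (and its gradient) passes through the shortcut unchanged.
print(residual_block([1.0, -2.0, 3.0], zero_transform))  # [1.0, -2.0, 3.0]
print(feature_map_size(600, 800))                        # (38, 50)
```

The shortcut adds no parameters of its own, which is why stacking such blocks deepens the network without the gradient having to pass through every transform.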

The deep residual network is implemented as a feed-forward neural network (FFNN) with skip connections. The skip connection performs an identity mapping, so that the output of one layer is passed directly across several layers as input to later layers. The benefit of this computation is that no additional parameters are introduced and the computational cost does not increase considerably. With cross-layer operations and the reuse of intermediate features, the vanishing gradient caused by increasing the number of network layers is avoided. Within the overall structure of Faster RCNN, the region proposal network (RPN) is an essential network connected to the FE network, and it is a fundamental component of Faster RCNN. The vehicle localization task is carried out by the RPN. The RPN creates region proposals with a sliding window positioned at every point of the convolutional feature map output by the final shared convolution layer. The sliding window moves over all points of the feature map to generate anchors. With K anchors per position, there are 4K outputs from the bounding-box regression layer, giving the coordinates of the vehicle, and 2K outputs from the classification layer, indicating whether each anchor contains a vehicle. As the classification layer has two fixed outcomes, it acts as a binary classifier. Faster RCNN uses nine anchors derived from 3 scales (128, 256, and 512) and 3 aspect ratios.
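As a rough illustration of how nine anchors arise from 3 scales and 3 ratios, consider the sketch below. The scales come from the text; the three aspect ratios (1:2, 1:1, 2:1) are an assumption taken from the commonly used Faster RCNN configuration, since the text does not list them. The function names are hypothetical.

```python
import math
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)

SCALES = (128, 256, 512)   # from the text
RATIOS = (0.5, 1.0, 2.0)   # assumed height/width ratios; not given in the text


def anchors_at(cx: float, cy: float) -> List[Box]:
    """Generate one anchor per (scale, ratio) pair, centred on (cx, cy)."""
    boxes = []
    for scale in SCALES:
        area = float(scale * scale)
        for ratio in RATIOS:
            width = math.sqrt(area / ratio)  # keeps the area equal to scale**2
            height = width * ratio
            boxes.append((cx - width / 2, cy - height / 2,
                          cx + width / 2, cy + height / 2))
    return boxes


def rpn_output_sizes(k: int) -> Tuple[int, int]:
    """Per sliding-window position: 4k box-regression values, 2k class scores."""
    return 4 * k, 2 * k


anchors = anchors_at(0.0, 0.0)
k = len(anchors)
print(k, rpn_output_sizes(k))  # 9 (36, 18)
```

With K = 9, each position of the feature map therefore yields 36 regression outputs and 18 classification outputs.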

3.2 Vehicle Counting and Speed Estimation Process

The proposed counting model is based on a detection line and is employed for counting pedestrians and vehicles. The fundamental approach is based on the estimation of the overlap ratio R as:

| (1) |

where the two p values and the two q values represent the X-coordinates of the end points of the segments where the vehicle intersects the detection line. The same vehicle is identified when R exceeds 0.75; otherwise, the vehicle count is incremented. However, the research in [18] recognizes the importance of lane lines for the ability to identify neighboring vehicles. In cases where no lane line exists on the road, difficult traffic situations result. Vehicles parked on the pavement are omitted. To tackle this traffic circumstance, the following step is introduced to enhance the above process using the UAV-based information. To measure vehicle speed, the distance moved by the center of an object from one frame to the next is evaluated based on the obtained bounding boxes. Four successive frames are used to calculate the average moving distance, i.e., the estimated vehicle speed, which is then converted from pixel-based measurements to metre-based measurements.
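The counting rule and the speed estimate can be sketched in Python as follows. This is an illustrative sketch under stated assumptions, not the authors' code. In particular, the exact form of R is assumed here to be the length of the overlap divided by the length of the union of the two segments on the detection line; the 0.75 threshold is from the text, while `metres_per_pixel`, `fps`, and all function names are hypothetical.

```python
import math
from typing import Sequence, Tuple

Segment = Tuple[float, float]  # X-coordinates where a box crosses the detection line
Point = Tuple[float, float]    # bounding-box centre in pixels

SAME_VEHICLE_THRESHOLD = 0.75  # from the text


def overlap_ratio(a: Segment, b: Segment) -> float:
    """Assumed form of R: overlap length divided by union length."""
    inter = min(a[1], b[1]) - max(a[0], b[0])
    if inter <= 0:
        return 0.0
    union = max(a[1], b[1]) - min(a[0], b[0])
    return inter / union


def update_count(count: int, previous: Sequence[Segment],
                 current: Sequence[Segment]) -> int:
    """Increment the count for each current segment matching no previous one."""
    for seg in current:
        best = max((overlap_ratio(seg, old) for old in previous), default=0.0)
        if best <= SAME_VEHICLE_THRESHOLD:
            count += 1  # no sufficiently overlapping segment: a new vehicle
    return count


def estimate_speed(centres: Sequence[Point], metres_per_pixel: float,
                   fps: float) -> float:
    """Average per-frame displacement of the centre, converted to metres/second."""
    steps = [math.dist(p, q) for p, q in zip(centres, centres[1:])]
    if not steps:
        raise ValueError("need at least two centres")
    return sum(steps) / len(steps) * metres_per_pixel * fps


# Frame 1: one vehicle on the line. Frame 2: the same vehicle plus a new one.
count = update_count(0, [], [(100.0, 180.0)])
count = update_count(count, [(100.0, 180.0)], [(102.0, 181.0), (300.0, 370.0)])
print(count)  # 2

# Four successive centres, each 5 pixels apart (hypothetical scale and frame rate).
speed = estimate_speed([(0, 0), (3, 4), (6, 8), (9, 12)],
                       metres_per_pixel=0.1, fps=30.0)
print(round(speed, 2))  # 15.0
```

Matching each segment against the previous frame only is a simplification; a production tracker would also need to handle missed detections and vehicles that stop on the line.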

3.3 Traffic Flow Monitoring Process

Given the movement tracks of vehicles obtained with the above methods, the traffic flow over a certain period can be measured through further analysis of the tracks. The approach is as follows. Vehicles move slowly or stop because of traffic jams. Hence, the number of vehicles in each frame is calculated first. When this number is greater than a given threshold, a traffic jam is assumed. In this case, the number of vehicles in the picture is calculated and the occurrence of a traffic jam is reported. When there is no traffic jam, the tracks of all vehicles are fetched. Vehicles that are stopped at the roadside and vehicles that are just entering the scene may be captured by the UAV; however, they should not be included in the traffic flow. The distance between the start and end points of every vehicle track is evaluated. When the distance is less than a given threshold, the track is considered not to belong to a vehicle on the main road and is excluded from the traffic flow. Finally, every track that meets the above condition is included. The resulting track statistics give the traffic flow of the road observed by the UAV over a certain period.
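The decision logic described above can be summarized in a short Python sketch. It is a hedged illustration only: the function names, the two threshold parameters, and the choice to flag a jam when any single frame exceeds the threshold are assumptions, since the text does not fix these details.

```python
import math
from typing import Dict, List, Sequence, Tuple

Point = Tuple[float, float]
Track = List[Point]  # centre positions of one vehicle over time


def is_traffic_jam(vehicles_per_frame: Sequence[int], jam_threshold: int) -> bool:
    """Assumed rule: a jam is reported if any frame exceeds the threshold."""
    return any(n > jam_threshold for n in vehicles_per_frame)


def main_road_tracks(tracks: Sequence[Track], min_distance: float) -> List[Track]:
    """Drop tracks whose start-to-end distance is below the threshold
    (e.g. parked vehicles or vehicles only just entering the scene)."""
    return [t for t in tracks
            if len(t) >= 2 and math.dist(t[0], t[-1]) >= min_distance]


def monitor(vehicles_per_frame: Sequence[int], tracks: Sequence[Track],
            jam_threshold: int, min_distance: float) -> Dict[str, object]:
    """Report a jam with the peak vehicle count, or else the traffic flow."""
    if is_traffic_jam(vehicles_per_frame, jam_threshold):
        return {"jam": True, "vehicles": max(vehicles_per_frame)}
    flow = len(main_road_tracks(tracks, min_distance))
    return {"jam": False, "flow": flow}


tracks = [
    [(0.0, 0.0), (100.0, 0.0), (200.0, 0.0)],  # vehicle driving along the road
    [(50.0, 50.0), (52.0, 51.0)],              # vehicle parked at the roadside
]
print(monitor([3, 4, 3], tracks, jam_threshold=20, min_distance=100.0))
# {'jam': False, 'flow': 1}
print(monitor([25, 30], tracks, jam_threshold=20, min_distance=100.0))
# {'jam': True, 'vehicles': 30}
```

Both thresholds would have to be calibrated to the flight altitude and the road segment being observed.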

4 Results and Discussion

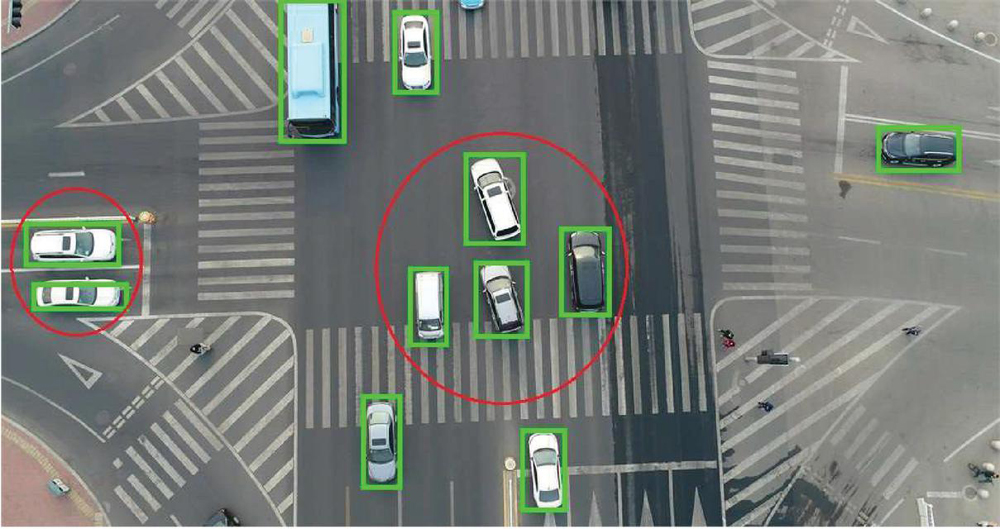

This section validates the performance of the RTTFM-DL technique on the benchmark dataset [19], and the results are investigated from several perspectives. Figure 2 shows a sample visualization of the output of the RTTFM-DL model.

Figure 2 Visualization image of RTTFM-DL model.

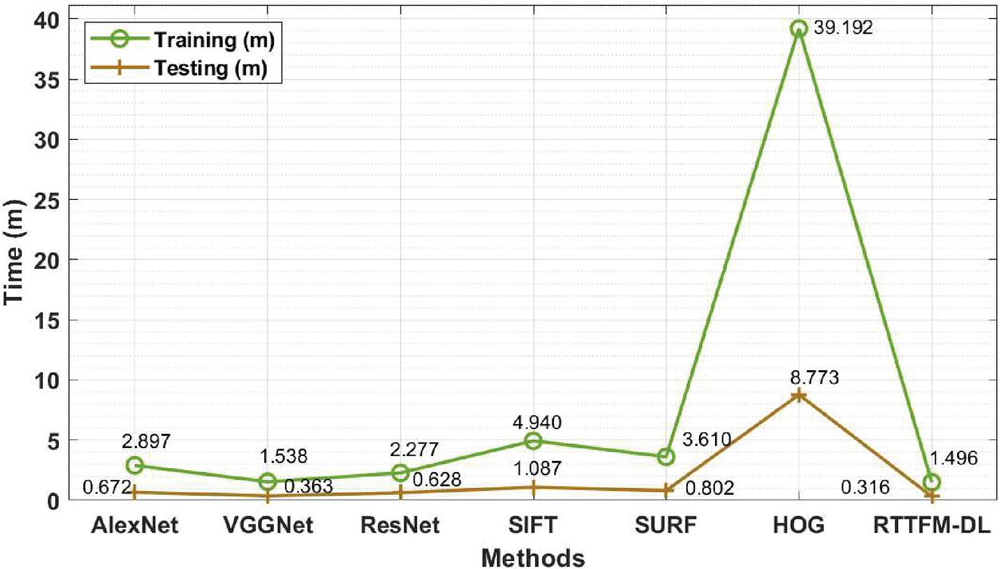

Table 1 and Figure 3 present the training and testing times of the RTTFM-DL technique. The experimental results show that the RTTFM-DL technique achieved the lowest training time and testing time.

Table 1 Training time and testing time analysis of RTTFM-DL model

| Methods | Training (min) | Testing (min) |
|---|---|---|
| AlexNet | 2.897 | 0.672 |
| VGGNet | 1.538 | 0.363 |
| ResNet | 2.277 | 0.628 |
| SIFT | 4.940 | 1.087 |
| SURF | 3.610 | 0.802 |
| HOG | 39.192 | 8.773 |
| RTTFM-DL | 1.496 | 0.316 |

Figure 3 Training and testing time analysis of RTTFM-DL model.

In terms of training time, the RTTFM-DL technique required the lowest training time of 1.496 minutes, whereas the AlexNet, VGGNet, ResNet, SIFT, SURF, and HOG techniques needed higher training times of 2.897, 1.538, 2.277, 4.940, 3.610, and 39.192 minutes, respectively. Likewise, in terms of testing time, the RTTFM-DL technique required the lowest testing time of 0.316 minutes, whereas the AlexNet, VGGNet, ResNet, SIFT, SURF, and HOG techniques needed higher testing times of 0.672, 0.363, 0.628, 1.087, 0.802, and 8.773 minutes, respectively.

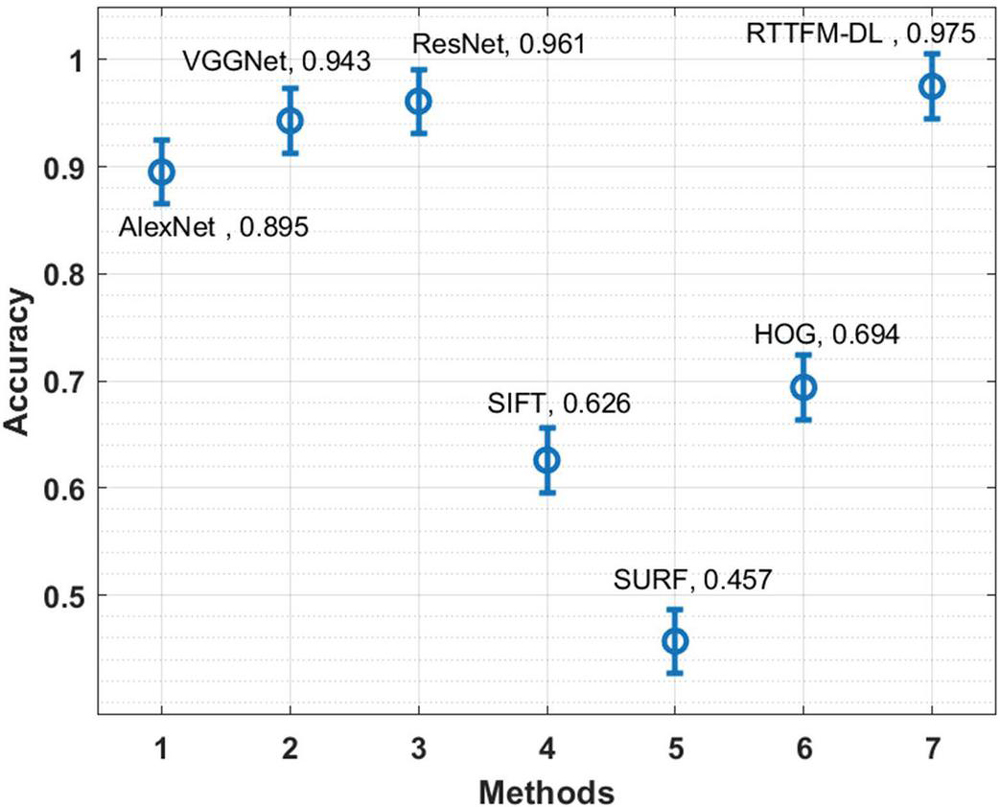

Table 2 Classification accuracy analysis of RTTFM-DL with existing techniques

| Methods | Accuracy |
|---|---|
| AlexNet | 0.895 |
| VGGNet | 0.943 |
| ResNet | 0.961 |
| SIFT | 0.626 |
| SURF | 0.457 |
| HOG | 0.694 |
| RTTFM-DL | 0.975 |

The classification accuracy of the RTTFM-DL technique and the existing techniques is provided in Table 2 and Figure 4. The results show that the SIFT, SURF, and HOG techniques achieved poor results with lower accuracy. The AlexNet model showed somewhat better outcomes, whereas the VGGNet and ResNet models achieved near-optimal performance. However, the RTTFM-DL technique outperformed all of them with the highest accuracy of 0.975.

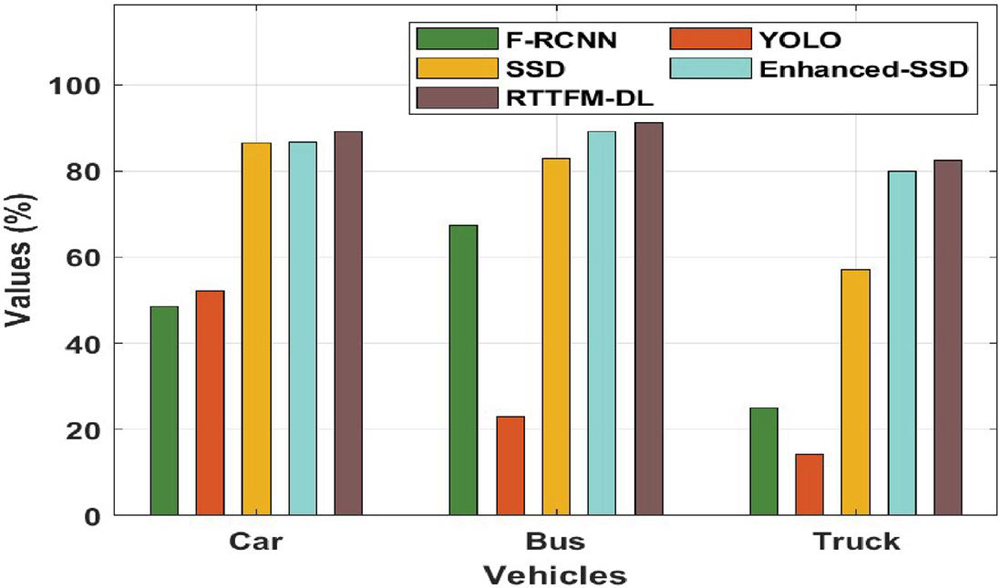

Table 3 and Figure 5 present the counting results of the RTTFM-DL technique for different vehicle types. The results demonstrate that the RTTFM-DL technique counts all vehicle types effectively. For instance, the RTTFM-DL technique counted the ‘Car’ class at the highest rate of 89.12%, whereas the F-RCNN, YOLO, SSD, and Enhanced-SSD techniques attained lower rates of 48.60%, 52.10%, 86.40%, and 86.60%, respectively. Likewise, the RTTFM-DL technique counted the ‘Bus’ class at a rate of 91.25%, whereas the F-RCNN, YOLO, SSD, and Enhanced-SSD techniques obtained lower rates of 67.40%, 22.90%, 82.90%, and 89.20%, respectively. In addition, the RTTFM-DL technique counted the ‘Truck’ class at a rate of 82.37%, whereas the F-RCNN, YOLO, SSD, and Enhanced-SSD techniques achieved lower rates of 25.00%, 14.30%, 57.10%, and 80.00%, respectively.

Figure 4 Accuracy analysis of RTTFM-DL model.

Table 3 Counting results (%) of particular vehicle types on testing set 1

| Type/Methods | F-RCNN | YOLO | SSD | Enhanced-SSD | RTTFM-DL |
|---|---|---|---|---|---|
| Car | 48.60 | 52.10 | 86.40 | 86.60 | 89.12 |
| Bus | 67.40 | 22.90 | 82.90 | 89.20 | 91.25 |
| Truck | 25.00 | 14.30 | 57.10 | 80.00 | 82.37 |

Figure 5 Counting result analysis of RTTFM-DL model.

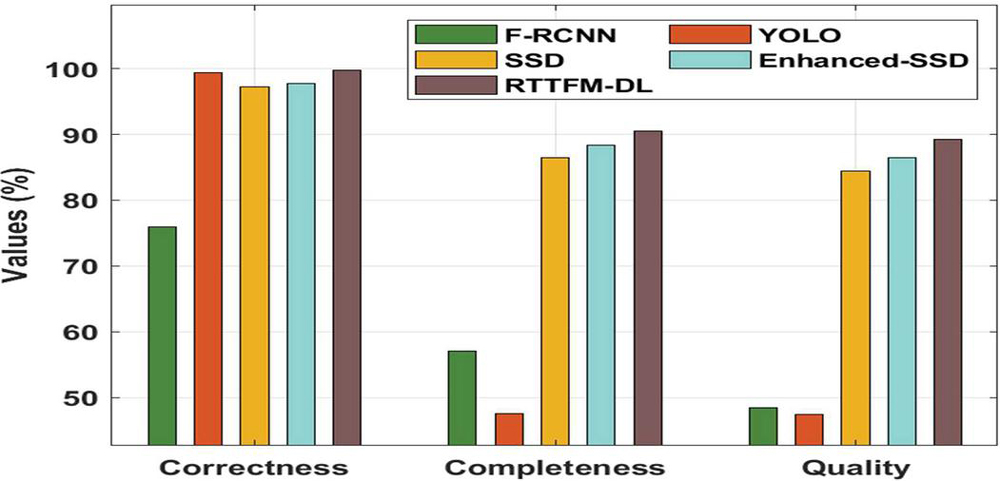

Figure 6 Comparative analysis of RTTFM-DL model.

Table 4 Counting results (%) on testing set 1 in terms of correctness, completeness, and quality

| Methods | Correctness | Completeness | Quality |
|---|---|---|---|
| F-RCNN | 76.00 | 57.10 | 48.40 |
| YOLO | 99.40 | 47.60 | 47.40 |
| SSD | 97.30 | 86.40 | 84.40 |
| Enhanced-SSD | 97.70 | 88.30 | 86.40 |
| RTTFM-DL | 99.75 | 90.56 | 89.23 |

Finally, a brief comparison of the counting results of the RTTFM-DL technique with existing techniques is given in Figure 6 and Table 4 [20]. The obtained values highlight the enhanced performance of the RTTFM-DL technique under various aspects. In terms of correctness, the RTTFM-DL technique achieved the highest correctness of 99.75%, whereas the F-RCNN, YOLO, SSD, and Enhanced-SSD techniques obtained lower correctness of 76.00%, 99.40%, 97.30%, and 97.70%, respectively. Likewise, in terms of completeness, the RTTFM-DL technique achieved the highest completeness of 90.56%, whereas the F-RCNN, YOLO, SSD, and Enhanced-SSD techniques attained lower completeness of 57.10%, 47.60%, 86.40%, and 88.30%, respectively. Moreover, in terms of quality, the RTTFM-DL technique achieved the highest quality of 89.23%, whereas the F-RCNN, YOLO, SSD, and Enhanced-SSD techniques reached lower quality of 48.40%, 47.40%, 84.40%, and 86.40%, respectively.
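The three measures in Table 4 are not defined in the text. Assuming they follow the definitions commonly used for detection and counting tasks, with TP, FP, and FN denoting true positives, false positives, and false negatives, they can be computed as in the sketch below. The function names and the example counts are hypothetical.

```python
def correctness(tp: int, fp: int) -> float:
    """Share of reported vehicles that are real: TP / (TP + FP)."""
    return tp / (tp + fp)


def completeness(tp: int, fn: int) -> float:
    """Share of real vehicles that are reported: TP / (TP + FN)."""
    return tp / (tp + fn)


def quality(tp: int, fp: int, fn: int) -> float:
    """Combined measure: TP / (TP + FP + FN)."""
    return tp / (tp + fp + fn)


def quality_from_rates(corr: float, comp: float) -> float:
    """Quality expressed through the other two measures.

    Follows algebraically from the definitions above:
    1/quality = 1/correctness + 1/completeness - 1.
    """
    return 1.0 / (1.0 / corr + 1.0 / comp - 1.0)


# Hypothetical counts: 90 correct detections, 10 false alarms, 30 misses.
print(round(correctness(90, 10), 4))   # 0.9
print(round(completeness(90, 30), 4))  # 0.75
print(round(quality(90, 10, 30), 4))   # 0.6923

# Under these assumed definitions, the F-RCNN row of Table 4 is reproduced
# from its correctness (76.00%) and completeness (57.10%).
print(round(100 * quality_from_rates(0.76, 0.571), 1))  # 48.4
```

Because quality penalizes both false alarms and misses, a method with very high correctness but low completeness, such as YOLO in Table 4, still receives a low quality score.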

By looking into the above-mentioned tables and figures, it is apparent that the RTTFM-DL technique has resulted in superior traffic flow monitoring performance over the other techniques.

5 Conclusion

In this study, a new RTTFM-DL technique is developed to detect vehicles, count vehicles, estimate speed, and determine traffic flow. The RTTFM-DL technique involves a vehicle detection model using Faster RCNN with the ResNet model. Moreover, a detection-line based vehicle counting approach is designed, which is based on the overlap ratio. Furthermore, the traffic flow is monitored based on the estimated vehicle count and vehicle speed. To validate the RTTFM-DL technique, a sequence of experiments is carried out and the results are inspected from distinct aspects. The experimental outcomes show that the RTTFM-DL technique outperforms recent techniques, reaching an accuracy of 0.975. In future, the presented RTTFM-DL technique can be employed in real-time scenarios such as logistics, healthcare, etc.

References

[1] Vlahogianni, E.I., Del Ser, J., Kepaptsoglou, K. and Laña, I., 2021. Model Free Identification of Traffic Conditions Using Unmanned Aerial Vehicles and Deep Learning. Journal of Big Data Analytics in Transportation, 3(1), pp. 1–13.

[2] Barmpounakis EN, Vlahogianni EI, Golias JC (2016) Unmanned Aerial Aircraft Systems for transportation engineering: Current practice and future challenges. Int J Transp Sci Technol 5(3):111–122.

[3] Jian, L., Li, Z., Yang, X., Wu, W., Ahmad, A. and Jeon, G., 2019. Combining unmanned aerial vehicles with artificial-intelligence technology for traffic-congestion recognition: electronic eyes in the skies to spot clogged roads. IEEE Consumer Electronics Magazine, 8(3), pp. 81–86.

[4] G. Salvo, L. Caruso, and A. Scordo, “Urban traffic analysis through an UAV,” Procedia-Social Behavioral Sci., vol. 111, pp. 1083–1091, 2014.

[5] J. Wan, Y. Yuan, and Q. Wang, “Traffic congestion analysis: A new perspective,” in Proc. Acoustics, Speech and Signal Processing (ICASSP), 2017, pp. 1398–1402.

[6] E. D’Andrea and F. Marcelloni, “Detection of traffic congestion and incidents from GPS trace analysis,” Expert Syst. Applicat., vol. 73, pp. 43–56, 2017.

[7] Ham, S.W., Park, H.C., Kim, E.J., Kho, S.Y. and Kim, D.K., 2020. Investigating the influential factors for practical application of multi-class vehicle detection for images from unmanned aerial vehicle using deep learning models. Transportation Research Record, 2674(12), pp. 553–567.

[8] Varia, N., Dokania, A. and Senthilnath, J., 2018, November. DeepExt: A convolution neural network for road extraction using RGB images captured by UAV. In 2018 IEEE Symposium Series on Computational Intelligence (SSCI) (pp. 1890–1895). IEEE.

[9] C Pretty Diana Cyril, J Rene Beulah, Mohan, A Harshavardhan, D Sivabalaselvamani, An automated learning model for sentiment analysis and data classification of Twitter data using balanced CA-SVM, https://doi.org/10.1177/1063293X211031485.

[10] Vlahogianni, E.I., Del Ser, J., Kepaptsoglou, K. and Laña, I., 2021. Model Free Identification of Traffic Conditions Using Unmanned Aerial Vehicles and Deep Learning. Journal of Big Data Analytics in Transportation, 3(1), pp. 1–13.

[11] Zhang, H., Liptrott, M., Bessis, N. and Cheng, J., 2019, September. Real-time traffic analysis using deep learning techniques and uav based video. In 2019 16th IEEE International Conference on Advanced Video and Signal Based Surveillance (AVSS) (pp. 1–5). IEEE.

[12] Micheal, A.A., Vani, K., Sanjeevi, S. and Lin, C.H., 2021. Object Detection and Tracking with UAV Data Using Deep Learning. Journal of the Indian Society of Remote Sensing, 49(3), pp. 463–469.

[13] S., Berlin, M.A., Tripathi, S. et al. IoT-based traffic prediction and traffic signal control system for smart city. Soft Computing (2021). https://doi.org/10.1007/s00500-021-05896-x

[14] Paulraj, D 2020, ‘An Automated Exploring And Learning Model For Data Prediction Using Balanced CA-SVM’, Journal of Ambient Intelligence and Humanized Computing, Vol. 12, no. 5, April 2020, DOI: https://doi.org/10.1007/s12652-020-01937-9

[15] Gupta, H. and Verma, O.P., 2021. Monitoring and surveillance of urban road traffic using low altitude drone images: a deep learning approach. Multimedia Tools and Applications, pp. 1–21.

[16] Zhang, J.S., Cao, J. and Mao, B., 2017, July. Application of deep learning and unmanned aerial vehicle technology in traffic flow monitoring. In 2017 International Conference on Machine Learning and Cybernetics (ICMLC) (Vol. 1, pp. 189–194). IEEE.

[17] Ammour, N., Alhichri, H., Bazi, Y., Benjdira, B., Alajlan, N. and Zuair, M., 2017. Deep learning approach for car detection in UAV imagery. Remote Sensing, 9(4), p. 312.

[18] Ye, D.H., Li, J., Chen, Q., Wachs, J. and Bouman, C., 2018. Deep learning for moving object detection and tracking from a single camera in unmanned aerial vehicles (UAVs). Electronic Imaging, 2018(10), pp. 466–1.

[19] Zhang, Y., Song, C. and Zhang, D., 2020. Deep learning-based object detection improvement for tomato disease. IEEE Access, 8, pp. 56607–56614.

[20] F. Liu, Z. Zeng, and R. Jiang, “A Video-based Real-time Adaptive Vehicle-counting System for Urban Roads,” PLOS ONE 12(11): e0186098, 2017.

[21] https://github.com/civftor/detection-and-tracking-from-uav

[22] Zhu, J., Sun, K., Jia, S., Li, Q., Hou, X., Lin, W., Liu, B. and Qiu, G., 2018. Urban traffic density estimation based on ultrahigh-resolution UAV video and deep neural network. IEEE Journal of Selected Topics in Applied Earth Observations and Remote Sensing, 11(12), pp. 4968–4981.

Biographies

Sachin Upadhye is an Assistant Professor in the Computer Application Department of Shri Ramdeobaba College of Engineering and Management, Nagpur. He holds MCA, M.Tech. and Ph.D. degrees in Computer Science & Engineering. He is an Oracle Certified Associate and IBM Rational Certified Developer, and has published more than 20 research papers in national and international journals and conferences. He has 12 years of teaching experience. He has completed many certifications from NPTEL and IIT, and has also completed a research proposal and project.

S. Neelakandan (Senior Member, IEEE) is working as an Assistant Professor in the Department of CSE at R.M.K Engineering College, Chennai. He has 14 years of teaching experience. He obtained his B.E. in Computer Science & Engineering and his M.E. in Computer Science and Engineering from Anna University, Chennai. He received his Ph.D. in Information and Communication Engineering from Anna University. His research interests include Data Science, Machine Learning, Big Data and Cloud Computing. He has published more than 30 research papers. He is a recipient of several awards and a reviewer for several international journals. He is also a life member of ISTE and IAENG.

K. Thangaraj is currently working as a Sr. Grade Assistant Professor cum Researcher in the Information Technology Department of Sona College of Technology, with more than 14 years of experience in teaching and research. His current research focus is the design and development of energy-efficient secure wireless protocols; his other research interests include Cloud Computing, Machine Learning and the Internet of Things.

D. Vijendra Babu obtained his B.E. from the University of Madras, India, his M.Tech. from SASTRA, Tanjore, India, and his Ph.D. from Jawaharlal Nehru Technological University, Hyderabad, India. He is currently Vice Principal & Professor in the Department of Electronics and Communication Engineering at Aarupadai Veedu Institute of Technology, Vinayaka Mission’s Research Foundation (VMRF), Tamil Nadu, India. He has 22 years of experience in education, research and administration at various levels. He has been granted 3 Australian patents and 1 Indian design patent, and has published 5 Indian patents. He has published 100 articles in refereed international and national journals and conferences indexed in Scopus/SCIE/Publons, and is a reviewer for various leading journals and conferences. He has published 2 book chapters and authored 5 books: Hands on Data Science, Python Crash Course, Computer Vision Programming, Machine Learning and its Applications, and Essentials of Wireless Sensor Networks. He is a member of the Academic Council and the Board of Studies of VMRF. He has acted as chair at 21 international and national conferences and delivered 40 invited lectures. Apart from academics, he is actively involved in professional societies as a life member of IEEE, CSI, IETE, BES (I), ISTE, ACEEE and IACSIT. He is currently the Secretary of the Robotics & Automation Society (RAS), IEEE Madras Section, and an Executive Committee Member of the IETE Chennai Centre.

N. Arulkumar is currently working as an Assistant Professor at Christ (Deemed to be University), Bangalore, India. He received a Ph.D. degree in Computer Science from Bharathidasan University, India, in 2019. His research areas are Computer Networks, Cyber Security, and the Internet of Things (IoT). He has published more than 30 research papers in journals and conferences, and 2 patents in the fields of communication and computer science. He has chaired many technical sessions and delivered more than 10 invited talks at the national and international levels. He has completed more than 33 certifications from IBM, Google, Amazon, and others. He passed the CCNA: Routing and Switching exam in 2017, and also passed the Microsoft Networking Fundamentals exam in 2017.

Kashif Qureshi. After 21 years of applied research and academic experience across various countries (US, UK, Australia, Saudi Arabia and Libya) and cultures, I now wish to apply my scientific and soft skills over the long term at an internationally reputed university. I am keenly interested in teaching Big Data, Cloud Computing, IoT, Data Security and Data Science (Artificial Intelligence, Machine Learning and Deep Learning), as the coming era will be one of intelligent machines in which non-explicit programming is preferred. I have published books on Artificial Intelligence, Machine Learning, Operating Systems and Computer Fundamentals, and I am sure my core experience is a strong learning resource for students. My Ph.D., Master’s and Bachelor’s degrees were dedicated to these interests, and numerous students have already benefitted. I have held various posts and served universities and students across 6 countries.

Journal of Mobile Multimedia, Vol. 19, No. 2, 477–496.

doi: 10.13052/jmm1550-4646.1926

© 2022 River Publishers