Novel Deep Learning Approach to Support Optimal Resource Allocation in 5G Environment

Raja Varma Pamba1, Rahul Bhandari2,*, A. Asha3, Rahul Neware4 and Ankur Singh Bist5

1LBS Institute of Technology for Women, Poojapura, Trivandrum, India

2Department of Computer Science and Engineering, Chandigarh University, Mohali, Punjab, India

3Rajalakshmi Engineering College, Rajalakshmi Nagar, Thandalam, Chennai, India

4Department of Computing, Mathematics and Physics, Høgskulen på Vestlandet, Bergen, Norway

5Graphic Era Hill University Bhimtal Campus, Uttarakhand, India

E-mail: er.rahulbhandari1987@gmail.com

*Corresponding Author

Received 06 April 2022; Accepted 19 September 2022; Publication 15 February 2023

Abstract

In recent times, advances in network devices have focused on the miniaturisation of services, which should ensure better connectivity between devices through fifth-generation (5G) technology. 5G network communication aims to improve Quality of Service (QoS). However, resource allocation is a core problem that increases the complexity of packet scheduling. In this paper, we develop a resource allocation model based on a novel deep learning algorithm for optimal resource allocation. The deep learning model is formulated using the constraints associated with optimal radio resource allocation, and its objective function is designed to reduce system delay. The model predicts traffic in a complex environment and allocates resources accordingly. Simulations were conducted to test scheduling efficacy, and the results showed a higher allocation rate than other methods.

Keywords: Resource allocation, 5G networks, QoS, web traffic, deep learning.

1 Introduction

5th generation (5G) networks are expected to offer up to 1000 times the capacity of 4th generation (4G) networks, together with lower latency and longer device battery life [1, 2]. The growth of demanding mobile applications and the rise in daily traffic are driving up the use of mobile devices [3]. This trend was especially evident when lockdowns were imposed because of COVID-19 [4–6]. The increasing volume of traffic poses new technical hurdles in handling mobile devices and satisfying a variety of user needs [7].

By reducing communication latency and data traffic in the backhaul network, multi-access edge computing (MEC) improves the quality of service (QoS) of fifth-generation networks [8–10]. In 5G networks, MEC gathers idle mobile user resources such as storage and processing capacity. As a result, MEC makes cloud services such as environmental monitoring and statistical analysis more accessible to mobile customers [11–13].

Consequently, some mobile users can benefit from MEC resources, while others can contribute resources to MEC. Mobile applications and services can be assigned to MEC [14–16] to match user requirements. Because its resource capacity is limited, MEC can provide only a limited number of services to mobile customers. It is therefore critical to develop effective MEC resource management approaches that provide more services to mobile users while reducing the number of resources that must be reassigned.

A resource management strategy first aggregates resources and then allocates them according to demand. Resource aggregation creates a pool of MEC resources that can be used to meet the demands of users on different mobile devices. To make the most of the system's resource pool, idle computing resources are exposed and shared with other devices. The allocation step then assigns MEC resources so that they better meet the needs of the customers who requested the services. When and where a resource allocation mechanism allocates a service has a significant impact on how well that service performs.

The number of resources available in an MEC pool is unpredictable, and, depending on their movement patterns, users may regularly disconnect from the MEC. This directly affects how many services can be used or allocated. Because users move, MEC must reallocate resources to ensure that service levels are not compromised, so user movement has a significant influence on service allocation and reallocation performance [31].

If a service cannot be supplied because of a lack of resources, it may stall while an allocated or reallocated device is sought, or its quality may suffer during the transfer process. Various techniques for allocating resources in MEC have been proposed in recent years to provide better service to customers [32–35]. However, these techniques do not account for service duration or user mobility. In addition, their decision-making processes are highly complex, which increases both computing costs and the time needed to reach a decision.

Resource allocation is a multi-objective optimization problem because it requires balancing spectral efficiency (SE), energy efficiency (EE), and fairness. We therefore examine how deep learning models can support resource allocation. To improve cell-edge performance and eliminate or reduce adjacent-cell interference, we propose a constrained optimization framework based on a dense neural network (DNN) for optimal radio resource allocation. We then present a novel deep learning approach for training this DNN-based optimization framework.

In this research, we use a new deep learning technique to build a resource allocation model that optimises resource allocation. The model is formulated using the constraints associated with optimal radio resource allocation, and its objective function is designed to reduce system delay. It predicts traffic in a complex environment and allocates resources accordingly. Simulations were conducted to test scheduling efficacy, and the results show a higher allocation rate than other methods.

2 Literature Review

Resource management and orchestration become more challenging as the number of users, services, and resources grows. Using resources efficiently reduces costs and avoids over- and under-provisioning. Because the network environment is highly dynamic and complex, recent advances in machine learning for interacting with the environment can help address these challenges [17].

Traditional methods for offloading computation tasks and allocating network resources have also been studied in detail. For instance, to achieve efficient task execution, Reference [16] uses a differential evolution algorithm to solve the task offloading problem, although this method consumes more network bandwidth. Reference [17] developed a stochastic mixed-integer nonlinear programming approach to offload heavy tasks and allocate resources in MEC. Reference [18] developed a resource allocation plan using orthogonal and non-orthogonal multiple access techniques while accounting for energy consumption and efficiency in MEC; however, the overall delay still needs to be reduced. Reference [19] proposed a multi-objective resource allocation method for MEC based on the Pareto archived evolution approach to balance load and reduce time cost. It also integrates multi-criteria decision-making and prioritisation strategies to select the best resource allocation among the candidate solutions, but it ignores how 5G is integrated.

Three studies [17–19] use deep learning-based techniques to solve the network slicing resource allocation problem. Network slicing is vital to the success of 5G because it allows network operators to offer a variety of specialised and independent services on the same shared physical infrastructure. Reference [18] proposed a deep learning-based 5G network slice allocation technique: to evaluate the number of physical resource blocks (PRBs) available to active bearers in each network slice, the authors define a measure called REVA and propose a deep learning model to forecast it. Yan et al. [17] developed a system that combines deep learning and reinforcement learning to schedule and allocate resources; by using network slicing, it achieves the required level of performance isolation while using as few resources as possible. In [18], deep learning models are also used to forecast network conditions from historical data, and resource allocation is examined. Reference [19] provided a strategy for forecasting intermediate network slice consumption in 5G scenarios while maintaining service level agreements.

Four studies [20–23] offer deep learning-based solutions for reducing 5G network energy usage. In 2018, Martin et al. [20] described a new network resource allocator system that enables independent network management while considering the quality of experience (QoE). The system forecasts the amount of fixed network resources needed to meet traffic demands as well as the topology requirements, and it proactively and dynamically configures the network architecture while maintaining QoS. It does so by analysing signals from various network nodes together with end-to-end QoS and QoE parameters. The system is evaluated on real-time and on-demand dynamic adaptive streaming over the Hypertext Transfer Protocol (HTTP) with efficient video coding services, and the experimental results show that it can scale the network architecture and meet the resource efficiency requirements of media streaming services. Deep learning models are also used to map input parameters (such as user requests, channel factors, submission deadlines, and user power) to the ideal scheduling strategy. Similarly, in [21], a deep learning model is used to find an approximately optimal joint resource allocation method that reduces energy usage. Reference [22] employed a deep learning model that takes into account the behaviour of offloaded access points to determine user association. To improve energy efficiency, the authors of [23] developed a deep learning approach for dynamic carrier allocation to Multi-carrier Power Amplifiers (MCPAs), with the main goal of assigning carriers to MCPAs as efficiently as possible while minimising overall power usage. The problem is solved using a combination of convex relaxation and deep learning, in which a deep learning model approximates the non-convex and discontinuous power consumption function of the optimization problem.

Reference [24] proposes a deep learning-based 5G downlink CoMP (Coordinated Multi-Point) approach. A modified CoMP trigger function, driven by physical-layer measurements from the user equipment, improves downlink capacity. The model's output is whether or not to employ the CoMP mechanism.

For beamforming-based intelligent communication systems in dense D2D millimetre-wave (mmWave) environments, the authors of [25] propose a deep learning model. To optimise overall system throughput, the model chooses the most reliable relay node based on a variety of reliability indicators. A deep learning technique has also been proposed for allocating resources in a multi-cell network while maximising network performance. In this paper, we provide a deep learning model that accurately predicts resource allocations without complex computations.

3 System Modelling

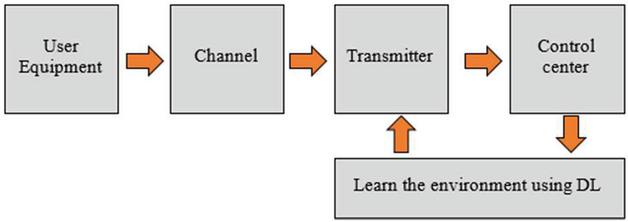

A 5G network contains many small cells [3], and such a dense deployment can provide greater bandwidth and less interference. In 5G ultra-dense wireless networks, macro base stations operate at low frequencies, while small cells operate at higher frequencies, often in the 2 GHz to 15 GHz range. Downlink cooperation is also used to improve cell-edge performance and cancel or reduce adjacent-cell interference. Figure 1 depicts a resource allocation scenario for 5G networks: information is transmitted from the user equipment to the transmitter and then, over a communication channel, to the control centre, where a DL framework learns the 5G environment in order to allocate resources optimally.

Figure 1 Deep learning based radio resource allocation.

Because all base stations (BSs) and small cells are linked to the control centre, global optimization is possible. Resource allocation assigns resources according to the channel environment. The pilot-based channel estimation approach consumes bandwidth resources in every frame, whereas an AI-based channel prediction approach can use prior channel states to forecast the next channel gain from historical data.

The approach is therefore to use AI-based learning to model and solve the transmission optimization problem, and then to apply the learned solution to dynamic transmission.
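As a minimal sketch of history-based channel prediction, the snippet below fits a linear autoregressive (AR) predictor by least squares on past channel values and uses it to forecast the next one. The AR model is a simple stand-in chosen for illustration, not the learned predictor proposed in this paper, and the function names and the synthetic trace are hypothetical.

```python
import numpy as np

def fit_ar_predictor(history, order):
    """Least-squares fit of a[0..order-1] so that g[t] ~ sum_k a[k] * g[t-1-k]."""
    g = np.asarray(history, dtype=float)
    # Each row holds the `order` most recent past values, newest first.
    X = np.array([g[t - order:t][::-1] for t in range(order, len(g))])
    y = g[order:]
    coeffs, *_ = np.linalg.lstsq(X, y, rcond=None)
    return coeffs

def predict_next(history, coeffs):
    """One-step-ahead prediction from the most recent len(coeffs) values."""
    recent = np.asarray(history, dtype=float)[-len(coeffs):][::-1]
    return float(recent @ coeffs)

# Usage on a synthetic, noisy real-valued channel coefficient (hypothetical data).
rng = np.random.default_rng(0)
trace = [1.0]
for _ in range(199):
    trace.append(0.95 * trace[-1] + 0.05 * rng.normal())
a = fit_ar_predictor(trace, order=2)
print("next value estimate:", predict_next(trace, a))
```

A learned model would replace the linear map, but the data flow (past channel states in, next channel state out) is the same one the text describes.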

3.1 System Model

Suppose the target area contains M small cells and N users (M, N ∈ ℕ), and that each user i is served by its primary small cell j. In the received-signal model, H is the channel matrix between the user and the small cell, and n is random noise. When the transmitter and receiver use the specified frequency with multiple antennas, they operate as a MIMO system. This allows two-way communication between users and yields the signal models given in Equations (1) and (2). Power constraints, antenna count, and bandwidth all affect D2D performance.

| (1) |

Because cooperating small cells share information, the signal received by a cooperative small cell can be described as:

| (2) |

where k is the index of the cooperative small cell; the number of cooperative cells depends on the cooperation algorithm. The signal recovered jointly by the target cell and the cooperative cells is expressed as:

| (3) |

The goal is to maximise the sum capacity under an appropriate resource allocation method. The mutual information, given by the Shannon formula, is:

| (4) |
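For reference, the snippet below evaluates the textbook MIMO mutual information log2 det(I + H Q H^H / σ²) for a signal model y = Hx + n, assuming Gaussian signalling and an equal power split Q = (P / n_tx) I across transmit antennas. It is a sketch of the standard formula, not a reproduction of Equation (4), and the function name and parameters are illustrative.

```python
import numpy as np

def mimo_mutual_information(H, total_power, noise_var):
    """log2 det(I + H Q H^H / noise_var) in bit/s/Hz, with Q = (P / n_tx) I."""
    H = np.asarray(H, dtype=complex)
    n_rx, n_tx = H.shape
    Q = (total_power / n_tx) * np.eye(n_tx)   # equal power per transmit antenna
    M = np.eye(n_rx) + H @ Q @ H.conj().T / noise_var
    _, logdet = np.linalg.slogdet(M)          # M is Hermitian positive definite
    return float(logdet / np.log(2))

# Usage: a random 4 x 4 Rayleigh-fading channel (hypothetical realisation).
rng = np.random.default_rng(1)
H = (rng.normal(size=(4, 4)) + 1j * rng.normal(size=(4, 4))) / np.sqrt(2)
print(f"{mimo_mutual_information(H, total_power=1.0, noise_var=0.1):.2f} bit/s/Hz")
```

`slogdet` is used instead of `det` to avoid overflow for large antenna arrays.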

Equation (5) expresses the mutual information from the different small cells:

| (5) |

The optimization seeks to maximise the total capacity by adjusting the resource management policy. The problem is hard to solve because, although the objective is concave, the constraint is non-convex, and good approximation methods are scarce. In Equation (6), the goal is to maximise the fixed transmission sum rate subject to channel capacity and power constraints.

| (6) |
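The sum-rate-under-power-constraint structure of this problem can be illustrated with classical water-filling over parallel, interference-free Gaussian channels, where the optimum is p_k = max(0, μ − σ²/g_k) with the water level μ chosen so that the powers sum to the budget. This is a swapped-in textbook case: it ignores the inter-cell interference and non-convex constraints that make Equation (6) hard, and the function names below are hypothetical.

```python
import numpy as np

def water_filling(gains, total_power, noise_var=1.0, iters=200):
    """p_k = max(0, mu - noise_var / g_k) with sum(p) = total_power, mu found by bisection."""
    floor = noise_var / np.asarray(gains, dtype=float)   # inverse channel quality
    lo, hi = floor.min(), floor.min() + total_power     # mu lies in this interval
    for _ in range(iters):
        mu = 0.5 * (lo + hi)
        if np.maximum(mu - floor, 0.0).sum() > total_power:
            hi = mu
        else:
            lo = mu
    return np.maximum(lo - floor, 0.0)

def sum_rate(powers, gains, noise_var=1.0):
    """Sum of Shannon rates log2(1 + p_k g_k / noise_var) in bit/s/Hz."""
    snr = np.asarray(powers, dtype=float) * np.asarray(gains, dtype=float) / noise_var
    return float(np.sum(np.log2(1.0 + snr)))

g = [2.0, 1.0, 0.1]
p = water_filling(g, total_power=3.0)
print(p, sum_rate(p, g))
```

The weakest channel receives no power because its noise floor lies above the water level, which is the behaviour a good allocation policy should reproduce.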

The channel environment must be recognised in order to analyse the channel state and allocate resources appropriately. Here, we offer a deep learning technique that analyses past channel conditions to drastically reduce the pilot frequency while increasing prediction accuracy.

The idea is to build neural networks based on the conditions set out above. The multilayer perceptron used for deep learning consists of an input layer, hidden layers, and an output layer [21]. Nodes in the hidden and output layers use a sigmoid threshold instead of a step function. The input layer does not process the inputs; it only distributes them among the neurons of the hidden layer.
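A minimal forward pass for such a multilayer perceptron is sketched below in Python with NumPy. The layer sizes, the use of biases, and the interpretation of the outputs as per-resource-block scores are assumptions for illustration only.

```python
import numpy as np

def sigmoid(net):
    """Smooth threshold used in place of a hard step."""
    return 1.0 / (1.0 + np.exp(-net))

def mlp_forward(x, weights, biases):
    """Return the outputs of every layer; layer 0 is the input, passed on unchanged."""
    outputs = [np.asarray(x, dtype=float)]
    for W, b in zip(weights, biases):
        net = outputs[-1] @ W + b        # weighted sum of the inputs to each node
        outputs.append(sigmoid(net))     # sigmoid activation in hidden and output layers
    return outputs

# Hypothetical sizes: 8 channel features -> 16 hidden nodes -> 4 resource blocks.
rng = np.random.default_rng(0)
sizes = [8, 16, 4]
weights = [rng.normal(scale=0.3, size=(m, n)) for m, n in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(n) for n in sizes[1:]]
scores = mlp_forward(rng.random(8), weights, biases)[-1]
print("per-block scores:", np.round(scores, 3))
```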

Equation (7) gives the error term $\delta_{pj}$ of node $j$ in the output layer for input pattern $p$, where $t_{pj}$ is the required (target) output and $o_{pj}$ is the actual output of the node.

The activation of a single perceptron at node $j$ is driven by $\mathrm{net}_{pj}$, the weighted sum of all inputs to that node, and $f'_j(\mathrm{net}_{pj})$ denotes the derivative of the threshold function $f_j$. Equation (7) gives the error of an output node; it cannot be used for hidden nodes, because their correct target outputs are unknown.

$$ \delta_{pj} = (t_{pj} - o_{pj})\, f'_j(\mathrm{net}_{pj}) \qquad (7) $$

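Similarly, Equation (8) is used to calculate hidden-node errors, where the sum runs over the nodes $k$ in the next layer that node $j$ feeds:

$$ \delta_{pj} = f'_j(\mathrm{net}_{pj}) \sum_{k} \delta_{pk}\, w_{jk} \qquad (8) $$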

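For the sigmoid function, the derivative with respect to net is:

$$ f'_j(\mathrm{net}_{pj}) = f_j(\mathrm{net}_{pj})\bigl(1 - f_j(\mathrm{net}_{pj})\bigr) = o_{pj}(1 - o_{pj}) \qquad (9) $$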

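Substituting this derivative, the output-node and hidden-node errors are given by the following two equations:

$$ \delta_{pj} = o_{pj}(1 - o_{pj})(t_{pj} - o_{pj}) \qquad (10) $$

$$ \delta_{pj} = o_{pj}(1 - o_{pj}) \sum_{k} \delta_{pk}\, w_{jk} \qquad (11) $$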

These expressions are used to minimise the error function. The error of a hidden node depends on the errors of the nodes it feeds in the next layer, so errors are computed for the output layer first and then propagated backwards, layer by layer, to the first hidden layer. Once the error of each node is known, the weight of every connection leading into that node is updated using Equation (12):

$$ w_{ij}(t+1) = w_{ij}(t) + \eta\, \delta_{pj}\, o_{pi} \qquad (12) $$

where $\eta$ is the gain term, $o_{pi}$ is the output of node $i$, and $w_{ij}(t)$ is the weight between UE $i$ and BS $j$ at time $t$.
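The error-propagation and weight-update steps described above correspond to the classical generalized delta rule. The sketch below applies one such update to a network with a single hidden layer and no biases; the network size, the gain term eta = 0.5, and the training pattern are hypothetical.

```python
import numpy as np

def sigmoid(net):
    return 1.0 / (1.0 + np.exp(-net))

def backprop_step(x, target, W1, W2, eta=0.5):
    """One generalized-delta-rule update of a 1-hidden-layer sigmoid MLP (no biases).

    W1 (n_in x n_hidden) and W2 (n_hidden x n_out) are updated in place.
    Returns the squared error 0.5 * sum((target - output)**2) before the update.
    """
    o_hidden = sigmoid(x @ W1)
    o_out = sigmoid(o_hidden @ W2)
    # Output-node error: (target - output) times the sigmoid derivative o * (1 - o).
    delta_out = (target - o_out) * o_out * (1.0 - o_out)
    # Hidden-node error: output errors propagated back through W2, times o * (1 - o).
    delta_hidden = o_hidden * (1.0 - o_hidden) * (W2 @ delta_out)
    # Weight change = gain term * error at the receiving node * output of the sending node.
    W2 += eta * np.outer(o_hidden, delta_out)
    W1 += eta * np.outer(x, delta_hidden)
    return 0.5 * float(np.sum((target - o_out) ** 2))

rng = np.random.default_rng(0)
W1 = rng.normal(scale=0.5, size=(2, 3))
W2 = rng.normal(scale=0.5, size=(3, 1))
x, target = np.array([1.0, 0.5]), np.array([0.9])
errors = [backprop_step(x, target, W1, W2) for _ in range(500)]
print(f"error: {errors[0]:.4f} -> {errors[-1]:.6f}")
```

The hidden-layer errors are computed before W2 is modified, matching the output-first, backwards order described in the text.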

4 Proposed Method

To solve the optimization problem in Equation (6), this study uses a dense neural network approach.

Based on the target function, the problem can be simplified as in Equation (13):

| (13) |

As a result, the optimization problem can be summarised as follows:

| (14) |

Where

By simplifying the correlation matrix on the transmitter side, the optimization problem can be further reformulated as follows:

| (15) |

Using fundamental matrix theory, the objective function can be simplified as:

| (16) |

where

In terms of M and N, the optimization problem is then:

| (17) |

To tackle this challenge with deep learning, we model a deep learning architecture that tracks the channel state and supports a flexible and precise allocation strategy. The dense neural network is a collection of dense layers that can compute the best course of action given a perfect representation of the surrounding environment. In practice, however, deep learning algorithms typically represent the solution as a less computationally intensive approximation based on the channel condition. The dynamics are determined by the transition probabilities.

The immediate rewards are thus estimated as below:

where S′ denotes the terminal state for S.

The best policy is determined using the optimal value function, denoted V:

| (18) |
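As an illustration of how an optimal value function identifies the best policy, the sketch below runs standard value iteration on the Bellman optimality equation. The discount factor gamma, the two-state toy MDP, and its rewards are assumptions; Equation (18) itself is not reproduced here.

```python
import numpy as np

def value_iteration(P, R, gamma=0.9, tol=1e-10, max_iter=10_000):
    """P[a, s, s'] transition probabilities, R[s, a] immediate rewards.

    Returns the optimal value function V and the greedy policy (action per state).
    """
    n_states = P.shape[1]
    V = np.zeros(n_states)
    for _ in range(max_iter):
        # Q[s, a] = R[s, a] + gamma * sum_{s'} P[a, s, s'] * V[s']
        Q = R + gamma * np.einsum("ast,t->sa", P, V)
        V_new = Q.max(axis=1)
        if np.max(np.abs(V_new - V)) < tol:
            V = V_new
            break
        V = V_new
    return V, Q.argmax(axis=1)

# Toy example (hypothetical): state 0 = poor channel, state 1 = good channel;
# action 0 = keep the current allocation, action 1 = switch.
P = np.array([[[1.0, 0.0], [0.0, 1.0]],    # action 0: stay in the same state
              [[0.0, 1.0], [1.0, 0.0]]])   # action 1: move to the other state
R = np.array([[0.0, 0.0],                  # R[s, a]
              [1.0, 0.0]])
V, policy = value_iteration(P, R, gamma=0.9)
print(V, policy)
```

In the toy problem the optimal policy switches out of the poor state and then stays in the good one, which is what the greedy policy over V recovers.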

The deep learning method solves the problem in Equation (17) using the procedure described below.

| Pseudocode |
|---|
| Step 1: Initiate X_ma, X_fe and X_nd |
| Step 2: Evaluate f(X_ma), f(X_fe) and f(X_nd) |
| Step 3: Assign f_fit = f(X_ma) and C_gen = 0 |
| Step 4: Store X_ma and f(X_ma) |
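A runnable rendering of Steps 1-4 is sketched below. The listing does not define X_ma, X_fe, X_nd or f, so this sketch assumes they are candidate allocation vectors scored by an objective f; the random initialisation, the objective, and the function name are hypothetical, and nothing beyond Step 4 is implied.

```python
import numpy as np

def initialise_search(objective, dim, rng):
    """Steps 1-4 of the listing, treating the candidates as allocation vectors (assumption)."""
    # Step 1: initiate X_ma, X_fe and X_nd (here: uniform random vectors in [0, 1)).
    X_ma, X_fe, X_nd = (rng.random(dim) for _ in range(3))
    # Step 2: evaluate the objective for each candidate.
    f_ma, f_fe, f_nd = objective(X_ma), objective(X_fe), objective(X_nd)
    # Step 3: the current fitness is f(X_ma); the generation counter starts at zero.
    f_fit, C_gen = f_ma, 0
    # Step 4: store X_ma together with its objective value.
    archive = [(X_ma.copy(), f_ma)]
    return {"candidates": (X_ma, X_fe, X_nd), "fitness": (f_ma, f_fe, f_nd),
            "f_fit": f_fit, "C_gen": C_gen, "archive": archive}

# Hypothetical objective: a concave utility of the allocation vector.
state = initialise_search(lambda x: float(np.sum(np.log1p(x))), dim=5,
                          rng=np.random.default_rng(0))
print(state["f_fit"], state["C_gen"])
```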

Figure 2 shows the flowchart of the proposed methodology. Recognising the channel environment is required to analyse the channel state and perform effective resource allocation, and this paper introduces a deep learning approach for doing so. By analysing past data about channel conditions, the pilot frequency can be drastically reduced and the allocation rate greatly improved. The key is to build dense neural networks based on these prior conditions. The proposed deep learning model consists of an input layer, a hidden layer, and an output layer. The sigmoid threshold function is used instead of the step function in the hidden and output layers, while the input layer simply distributes the inputs across the neurons of the hidden layer.

5 Results and Discussions

In this section, the performance of the proposed method is compared with existing deep learning methods, DL [23] and DL [24], using an urban micro-channel model. The simulation parameters are listed in Table 1. The simulation was run in the MATLAB environment on a high-end computing platform.

Table 1 System and network parameters

| Parameter | Value |
|---|---|
| Antenna configuration | 16 × 16 |
| Channel model | Urban micro model |
| Transmit power | 6 W |
| Cell layout | 7 cells with three sectors |
| Bandwidth | 25 MHz |
| Total users | 300 |
| User equipment speed | 40 km/h |
| Path loss model [32] | 93.4 + 22.8 log R (dB) |
| Modulation | QPSK, 16-QAM |
| Simulation time interval | 1000 s |
| Detection | MMSE |
| Shadowing range | 10 dB |
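To make the Table 1 parameters concrete, the sketch below converts the 6 W transmit power to dBm and evaluates the path loss model at a few distances. It assumes that log denotes log10 and that R is in kilometres (neither is stated in the table), and it ignores antenna gains and shadowing; the function names are illustrative.

```python
import math

def watts_to_dbm(p_watts):
    """Convert power from watts to dBm."""
    return 10.0 * math.log10(p_watts * 1000.0)

def path_loss_db(distance_km):
    """Table 1 model, assuming base-10 log and R in kilometres."""
    return 93.4 + 22.8 * math.log10(distance_km)

def received_power_dbm(p_tx_watts, distance_km):
    """Received power ignoring antenna gains and shadowing (simplification)."""
    return watts_to_dbm(p_tx_watts) - path_loss_db(distance_km)

for d in (0.05, 0.1, 0.2):
    print(f"R = {d} km: PL = {path_loss_db(d):.1f} dB, Rx = {received_power_dbm(6.0, d):.1f} dBm")
```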

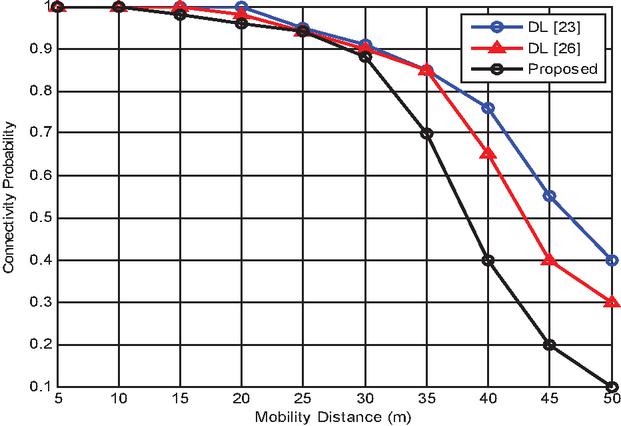

Figure 3 Probability of successful connection with the UEs based on their mobility distance with the nearby BS.

Figure 3 shows the probability of successful connection for UEs as a function of their mobility distance from the nearby BS. The simulation results show that the probability of successful connection decreases as the distance between the users and the BS increases. This holds for all three deep learning methods; however, the proposed method achieves a higher probability of successful connection than the other methods.

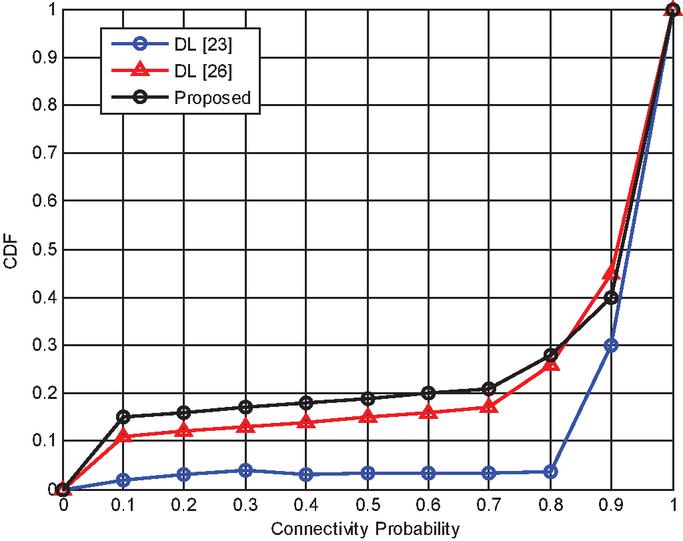

Figure 4 CDF vs. connectivity probability.

Figure 4 shows the cumulative distribution function (CDF) of the successful connectivity probability in 5G networks. The simulation results show that the CDF increases with the connectivity probability, indicating that the randomness of the data connections is reduced and that users are allocated specific resources.
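A CDF curve like the one in Figure 4 can be produced from per-user samples with an empirical CDF, sketched below. An empirical CDF is non-decreasing by construction, so methods are usually compared by where their curves rise. The sample data are synthetic and hypothetical (300 users, matching Table 1).

```python
import numpy as np

def empirical_cdf(samples):
    """Sorted sample values x and F(x) = fraction of samples <= x (for step plots)."""
    x = np.sort(np.asarray(samples, dtype=float))
    F = np.arange(1, x.size + 1) / x.size
    return x, F

# Hypothetical per-user connectivity probabilities for one method.
rng = np.random.default_rng(0)
x, F = empirical_cdf(rng.beta(5, 2, size=300))
print(x[:3], F[:3])
```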

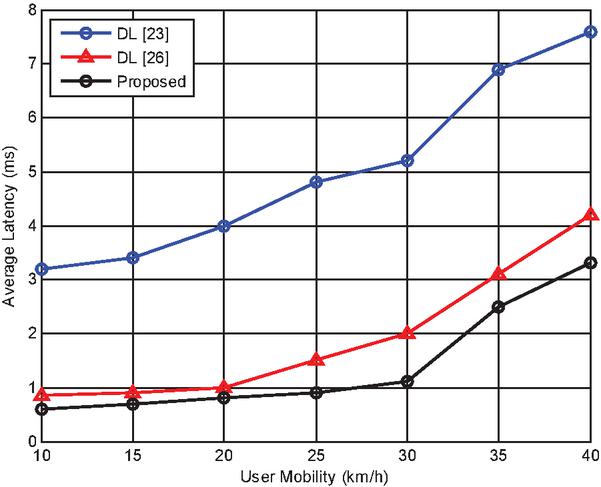

Figure 5 Latency over various user mobility.

Figure 5 shows the latency between the UEs' transmission and reception of data in 5G networks. The simulation measures the end-to-end latency of the communication and shows that latency increases with user mobility. However, the proposed deep learning model achieves lower latency than the other methods.

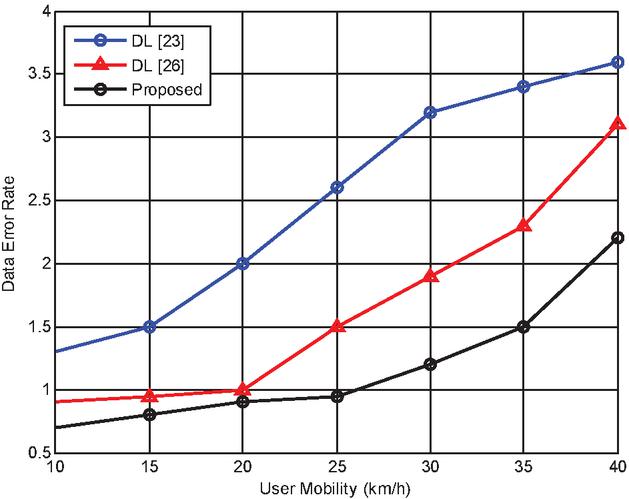

Figure 6 Data error rate w.r.t the mobility of the user.

Figure 6 shows the data error rate with respect to user mobility. The simulation shows that the error rate increases with user mobility, which degrades system performance; the proposed method achieves a lower data error rate than the other methods.

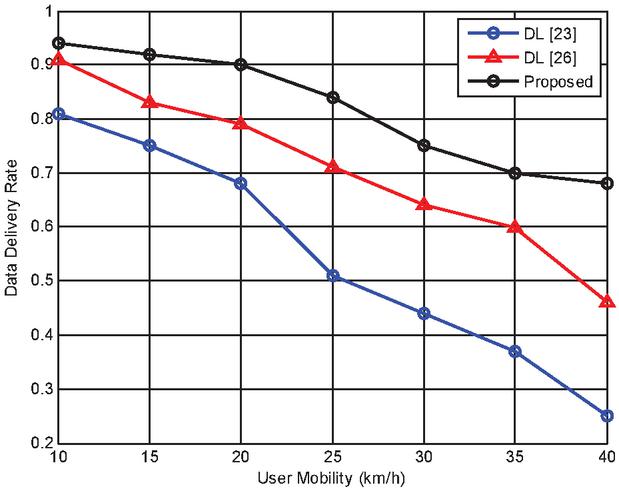

Figure 7 Data delivery rate w.r.t the mobility of the user.

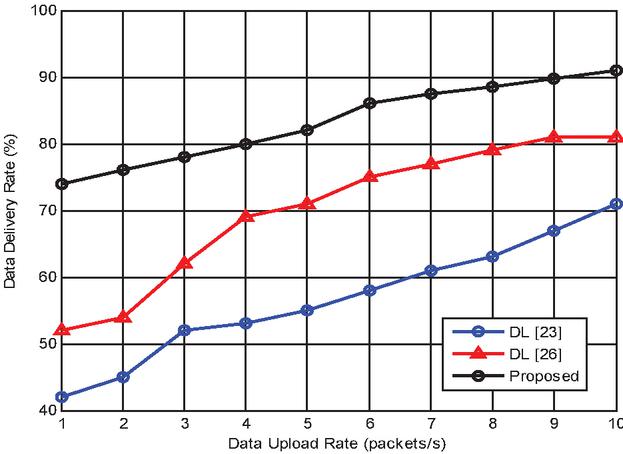

Figure 8 Data delivery rate w.r.t the data upload rate.

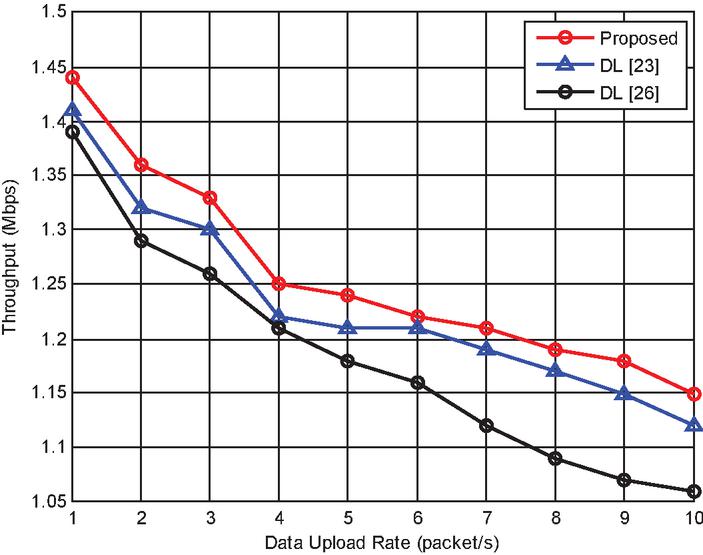

Figure 9 Throughput w.r.t the data upload rate.

Figure 7 shows the data delivery rate with respect to user mobility, and Figure 8 shows the data delivery rate with respect to the data upload rate; in both cases, the proposed method achieves a higher data delivery rate. Figure 9 shows the throughput with respect to the data upload rate, where the proposed model achieves higher throughput than the other methods. As the upload rate increases, the throughput does not stabilise; it varies with the size and delivery rate of the packets.
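The text does not spell out how the delivery rate, error rate, and throughput are computed. One common set of definitions is sketched below as an assumption: delivery rate = delivered/sent packets, error rate = corrupted/delivered packets, and throughput = correctly delivered bits per second. The `PacketRecord` format is hypothetical.

```python
from dataclasses import dataclass

@dataclass
class PacketRecord:
    """One packet in a simulation trace (hypothetical record format)."""
    size_bits: int
    delivered: bool
    corrupted: bool = False

def delivery_metrics(packets, duration_s):
    """Delivery rate, error rate among delivered packets, and throughput in bit/s."""
    delivered = [p for p in packets if p.delivered]
    correct = [p for p in delivered if not p.corrupted]
    delivery_rate = len(delivered) / len(packets) if packets else 0.0
    error_rate = (len(delivered) - len(correct)) / len(delivered) if delivered else 0.0
    throughput_bps = sum(p.size_bits for p in correct) / duration_s
    return delivery_rate, error_rate, throughput_bps

trace = [PacketRecord(1000, True), PacketRecord(1000, True),
         PacketRecord(1000, True, corrupted=True), PacketRecord(1000, False)]
print(delivery_metrics(trace, duration_s=2.0))
```

Because throughput here counts only correctly delivered bits, it depends on both packet size and delivery rate, consistent with the variation described for Figure 9.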

All the methods can satisfy the demand for 5G wireless transmission. Even when the number of mobile devices is small, the performance can reach a bound of 10⁻⁶. At around 450, however, there is a steep descent and all methods reach a bound of 10⁻¹¹. This is because mobility slows down computation while more channel detail is obtained; however, such small gains are not worth pursuing.

Finally, the numerical results allow us to compare the SE and allocation rate of the proposed DL algorithm with those of existing algorithms, including a random allocation algorithm and a benchmark algorithm. The random allocation algorithm uses normally distributed random variables to represent the probability of resource allocation. The benchmark algorithm transforms the NP-hard non-convex optimization problem into five cases of the fairness index, which are used when simulating the weighted sum-rate maximisation (SRM) problem, the weighted total power minimisation (TPM) problem, and the fairness maximisation problem.
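A sketch of the two baseline ingredients follows. The text states only that the random allocator uses normally distributed random variables, so the rule here (each resource block goes to the user with the largest N(0, 1) draw) is an assumption. The text also does not name its fairness index; Jain's index is used below as a common choice, not necessarily the one used by the benchmark.

```python
import numpy as np

def random_allocation(n_users, n_blocks, rng):
    """Give each resource block to the user with the largest N(0, 1) score."""
    scores = rng.normal(size=(n_users, n_blocks))
    return scores.argmax(axis=0)            # user index assigned to each block

def jain_fairness(values):
    """Jain's index (sum x)^2 / (n * sum x^2); 1.0 means perfectly equal shares."""
    x = np.asarray(values, dtype=float)
    return float(x.sum() ** 2 / (x.size * np.sum(x ** 2)))

rng = np.random.default_rng(0)
alloc = random_allocation(n_users=10, n_blocks=100, rng=rng)
blocks_per_user = np.bincount(alloc, minlength=10)
print(blocks_per_user, jain_fairness(blocks_per_user))
```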

6 Conclusions

In this study, we developed a resource allocation model that optimises resource allocation using a novel deep learning technique. The deep learning model is formulated using the constraints on the appropriate use of radio resources, and its main objective is to reduce the time the system takes to respond. The model anticipates traffic in complex environments and allocates resources as needed. Simulations were conducted to evaluate scheduling effectiveness, and the results show that the allocation rate is higher than that of other methods. Finally, we showed how fixed resource allocation and convex optimization methodologies can simplify the modelling of the optimization problem, and we applied deep learning to optimise allocation limits based on channel conditions.

References

[1] Pereira, R. S., Lieira, D. D., da Silva, M. A., Pimenta, A. H., da Costa, J. B., Rosário, D.,… and Meneguette, R. I. (2020). RELIABLE: Resource allocation mechanism for 5G network using mobile edge computing. Sensors, 20(19), 5449.

[2] Huang, J., Xing, C. C., Qian, Y., and Haas, Z. J. (2017). Resource allocation for multicell device-to-device communications underlaying 5G networks: A game-theoretic mechanism with incomplete information. IEEE Transactions on Vehicular Technology, 67(3), 2557–2570.

[3] Wang, D., Song, B., Chen, D., and Du, X. (2019). Intelligent cognitive radio in 5G: AI-based hierarchical cognitive cellular networks. IEEE Wireless Communications, 26(3), 54–61.

[4] Haryadi, S., and Aryanti, D. R. (2017, October). The fairness of resource allocation and its impact on the 5G ultra-dense cellular network performance. In 2017 11th International Conference on Telecommunication Systems Services and Applications (TSSA) (pp. 1–4). IEEE.

[5] Bashir, A. K., Arul, R., Basheer, S., Raja, G., Jayaraman, R., and Qureshi, N. M. F. (2019). An optimal multitier resource allocation of cloud RAN in 5G using machine learning. Transactions on Emerging Telecommunications Technologies, 30(8), e3627.

[6] Chen, M., Miao, Y., Gharavi, H., Hu, L., and Humar, I. (2019). Intelligent traffic adaptive resource allocation for edge computing-based 5G networks. IEEE Transactions on Cognitive Communications and Networking, 6(2), 499–508.

Richart, M., Baliosian, J., Serrati, J., Gorricho, J. L., Agüero, R., and Agoulmine, N. (2017, November). Resource allocation for network slicing in WiFi access points. In 2017 13th International conference on network and service management (CNSM) (pp. 1–4). IEEE.

[7] Wu, D., Zhang, Z., Wu, S., Yang, J., and Wang, R. (2018). Biologically inspired resource allocation for network slices in 5G-enabled Internet of Things. IEEE Internet of Things Journal, 6(6), 9266–9279.

[8] Yu, P., Zhou, F., Zhang, X., Qiu, X., Kadoch, M., and Cheriet, M. (2020). Deep learning-based resource allocation for 5G broadband TV service. IEEE Transactions on Broadcasting, 66(4), 800–813.

[9] Shahzadi, R., Niaz, A., Ali, M., Naeem, M., Rodrigues, J. J., Qamar, F., and Anwar, S. M. (2019). Three tier fog networks: Enabling IoT/5G for latency sensitive applications. China Communications, 16(3), 1–11.

[10] Ari, A. A. A., Gueroui, A., Titouna, C., Thiare, O., and Aliouat, Z. (2019). Resource allocation scheme for 5G C-RAN: a Swarm Intelligence based approach. Computer Networks, 165, 106957.

[11] Tadayon, N., and Aissa, S. (2018). Radio resource allocation and pricing: Auction-based design and applications. IEEE Transactions on Signal Processing, 66(20), 5240–5254.

[12] Othman, A., and Nayan, N. A. (2019). Efficient admission control and resource allocation mechanisms for public safety communications over 5G network slice. Telecommunication Systems, 72(4), 595–607.

[13] Korrai, P. K., Lagunas, E., Sharma, S. K., Chatzinotas, S., and Ottersten, B. (2019, September). Slicing based resource allocation for multiplexing of eMBB and URLLC services in 5G wireless networks. In 2019 IEEE 24th International Workshop on Computer Aided Modeling and Design of Communication Links and Networks (CAMAD) (pp. 1–5). IEEE.

[14] Korrai, P., Lagunas, E., Sharma, S. K., Chatzinotas, S., Bandi, A., and Ottersten, B. (2020). A RAN resource slicing mechanism for multiplexing of eMBB and URLLC services in OFDMA based 5G wireless networks. IEEE Access, 8, 45674–45688.

[15] Zhang, H., Liu, N., Chu, X., Long, K., Aghvami, A. H., and Leung, V. C. (2017). Network slicing based 5G and future mobile networks: mobility, resource management, and challenges. IEEE Communications Magazine, 55(8), 138–145.

[16] Ma, Z., Li, B., Yan, Z., and Yang, M. (2020). QoS-Oriented joint optimization of resource allocation and concurrent scheduling in 5G millimeter-wave network. Computer Networks, 166, 106979.

[17] Yan, M., Feng, G., Zhou, J., Sun, Y., and Liang, Y. C. (2019). Intelligent resource scheduling for 5G radio access network slicing. IEEE Transactions on Vehicular Technology, 68(8), 7691–7703.

[18] Gutterman, C., Grinshpun, E., Sharma, S., and Zussman, G. (2019, July). RAN resource usage prediction for a 5G slice broker. In Proceedings of the Twentieth ACM International Symposium on Mobile Ad Hoc Networking and Computing (pp. 231–240).

[19] Toscano, M., Grunwald, F., Richart, M., Baliosian, J., Grampín, E., and Castro, A. (2019, July). Machine learning aided network slicing. In 2019 21st International Conference on Transparent Optical Networks (ICTON) (pp. 1–4). IEEE.

[20] Lei, L., You, L., He, Q., Vu, T. X., Chatzinotas, S., Yuan, D., and Ottersten, B. (2019). Learning-assisted optimization for energy-efficient scheduling in deadline-aware NOMA systems. IEEE Transactions on Green Communications and Networking, 3(3), 615–627.

[21] Luo, J., Tang, J., So, D. K., Chen, G., Cumanan, K., and Chambers, J. A. (2019). A deep learning-based approach to power minimization in multi-carrier NOMA with SWIPT. IEEE Access, 7, 17450–17460.

[22] Dong, R., She, C., Hardjawana, W., Li, Y., and Vucetic, B. (2019). Deep learning for hybrid 5G services in mobile edge computing systems: Learn from a digital twin. IEEE Transactions on Wireless Communications, 18(10), 4692–4707.

[23] Zhang, S., Xiang, C., Cao, S., Xu, S., and Zhu, J. (2019). Dynamic carrier to MCPA allocation for energy efficient communication: Convex relaxation versus deep learning. IEEE Transactions on Green Communications and Networking, 3(3), 628–640.

[24] Mismar, F. B., and Evans, B. L. (2019). Deep learning in downlink coordinated multipoint in new radio heterogeneous networks. IEEE Wireless Communications Letters, 8(4), 1040–1043.

[25] Abdelreheem, A., Omer, O. A., Esmaiel, H., and Mohamed, U. S. (2019, April). Deep learning-based relay selection in D2D millimeter wave communications. In 2019 International Conference on Computer and Information Sciences (ICCIS) (pp. 1–5). IEEE.

[26] Ahmed, K. I., Tabassum, H., and Hossain, E. (2019). Deep learning for radio resource allocation in multi-cell networks. IEEE Network, 33(6), 188–195.

[27] Ma, Z., Li, B., Yan, Z., and Yang, M. (2020). QoS-Oriented joint optimization of resource allocation and concurrent scheduling in 5G millimeter-wave network. Computer Networks, 166, 106979.

[28] Tayyaba, S. K., and Shah, M. A. (2019). Resource allocation in SDN based 5G cellular networks. Peer-to-Peer Networking and Applications, 12(2), 514–538.

[29] Chien, H. T., Lin, Y. D., Lai, C. L., and Wang, C. T. (2019). End-to-end slicing with optimized communication and computing resource allocation in multi-tenant 5G systems. IEEE Transactions on Vehicular Technology, 69(2), 2079–2091.

[30] Tun, Y. K., Tran, N. H., Ngo, D. T., Pandey, S. R., Han, Z., and Hong, C. S. (2019). Wireless network slicing: Generalized Kelly mechanism-based resource allocation. IEEE Journal on Selected Areas in Communications, 37(8), 1794–1807.

[31] Liu, Y., Tang, A., and Wang, X. (2019). Joint incentive and resource allocation design for user provided network under 5G integrated access and backhaul networks. IEEE Transactions on Network Science and Engineering, 7(2), 673–685.

[32] Roostaei, R., Dabiri, Z., and Movahedi, Z. (2021). A game-theoretic joint optimal pricing and resource allocation for Mobile Edge Computing in NOMA-based 5G networks and beyond. Computer Networks, 198, 108352.

[33] Lien, S. Y., Deng, D. J., Lin, C. C., Tsai, H. L., Chen, T., Guo, C., and Cheng, S. M. (2020). 3GPP NR sidelink transmissions toward 5G V2X. IEEE Access, 8, 35368–35382.

[34] Tang, F., Zhou, Y., and Kato, N. (2020). Deep reinforcement learning for dynamic uplink/downlink resource allocation in high mobility 5G HetNet. IEEE Journal on Selected Areas in Communications, 38(12), 2773–2782.

[35] Ali, R., Zikria, Y. B., Garg, S., Bashir, A. K., Obaidat, M. S., and Kim, H. S. (2021). A Federated Reinforcement Learning Framework for Incumbent Technologies in Beyond 5G Networks. IEEE Network, 35(4), 152–159.

Biographies

Raja Varma Pamba is an Assistant Professor at LBS Institute of Technology for Women, Poojapura, Trivandrum, Kerala, India. She has over 13 years of teaching experience and 3 years of industrial experience. She completed her B.Tech. at Govt Rajiv Gandhi Institute of Technology, Kottayam, and her M.Tech. at Visvesvaraya National Institute of Technology, Nagpur, Maharashtra. She received her PhD in CSE from Mahatma Gandhi University, Kottayam, Kerala, with a specialisation in Machine Learning. She has more than 10 publications to her credit and has participated in many national and international conferences.

Rahul Bhandari is an Associate Professor at Chandigarh University, Punjab, India. He has over 12 years of teaching experience. He completed his B.E. and M.Tech. at Jodhpur Institute of Engineering Technology, Jodhpur, and received his Ph.D. from Career Point University, Kota. He has published 9 papers in international journals and actively participates in Faculty Development Programmes (FDPs) and Short-Term Training Programmes (STTPs).

A. Asha is currently an Associate Professor in the Department of Electronics and Communication Engineering, Rajalakshmi Engineering College, Chennai. She received her B.E. degree from M.S. University in 2000, her master's degree in Communication Systems from SASTRA University in 2002, and her PhD in Information and Communication Engineering from Anna University, Chennai, in 2019. She has 18 years of teaching experience, and her research interests are Wireless Sensor Networks, Cloud Computing and the Internet of Things.

Rahul Neware received the B.E. degree in Information Technology from RTM Nagpur University, India, in 2015 and the M.Tech. in Computer Science and Engineering from G. H. Raisoni College of Engineering, Nagpur, India, in 2018. He is currently pursuing a Ph.D. in Computing at Western Norway University of Applied Sciences, Bergen campus, Norway. His areas of interest include Cybersecurity, Cloud and Fog Computing, Advanced Image Processing and Remote Sensing. He is a member of various professional bodies, including IEEE, ASR, CSI, IAENG and the Internet Society.

Ankur Singh Bist is currently an Associate Professor at Graphic Era Hill University, Bhimtal, India. His research areas are computer virology and Machine Learning. He has written more than 100 research papers and has served as a reviewer or editor for more than 350 conferences and journals. He is an active member of various research societies and educational groups spanning more than 50 countries. He has received 5 international awards and medals of honour from renowned societies.

Journal of Mobile Multimedia, Vol. 19, No. 3, 739–764.

doi: 10.13052/jmm1550-4646.1935

© 2023 River Publishers