A Computer Vision-based Architecture for Remote Physical Rehabilitation

Daniel Muller Rezende1, Paulo Victor de Magalhães Rozatto1, Dauane Joice Nascimento de Almeida1, Filipe de Lima Namorato1, Tatiane Daniele dos Santos2 and Rodrigo Luis de Souza da Silva1,*

1Computer Science Department, Federal University of Juiz de Fora, Brazil

2Faculty of Physical Education and Sport, Federal University of Juiz de Fora, Brazil

E-mail: daniel.muller@ice.ufjf.br; paulo.rozatto@ice.ufjf.br; dauanejoice@gmail.com; filipe.namorato@estudante.ufjf.br; tatianesantosedf@gmail.com; rodrigoluis@gmail.com

*Corresponding Author

Received 29 November 2023; Accepted 20 July 2024

Abstract

The use of computer vision in healthcare is constantly growing, and applying these techniques to physical rehabilitation can bring great benefits. In this work, we propose a software architecture that uses computer vision techniques to assist in the treatment and remote diagnosis of patients undergoing physical rehabilitation. The architecture was developed to allow the system to be used on computers and mobile devices. In the proposed system, users with a professional profile can register and prescribe exercises for their patients according to the treatment. Users with a patient profile can view and perform the exercises prescribed for them, relying on the application’s visual assistance for proper execution. A field study and a qualitative assessment were carried out to verify the usability and effectiveness of the application from the users’ point of view, and the application was positively received.

Keywords: Physical rehabilitation, computer vision.

1 Introduction

Practicing physical exercise is extremely important for a good quality of life. Studies show that sedentary people are more likely to develop cardiovascular diseases such as stroke, in addition to musculoskeletal diseases associated with bone, joint and/or muscular disorders, such as arthritis, osteoarthritis and osteoporosis [1]. Physiotherapy and rehabilitation are possible treatments for patients who develop such diseases. Technology has driven improvements in many areas, and great advances can already be observed in healthcare. In rehabilitation, technologies such as computer vision, which assist in the treatment and diagnosis of patients, are highly relevant.

Rehabilitation systems that use computer vision rely on gesture recognition techniques to detect the patient’s movements and from there collect information to assist in treatment and diagnosis. The system proposed in this article has as its main objective to provide tools that use computer vision resources to promote rehabilitation and individual assessment of patients remotely. The focus of this article is to bring forth a tool that provides an interface that adapts to the devices most commonly found in patients’ homes, namely computers, smartphones and tablets.

1.1 Motivation

To carry out physiotherapy and physical rehabilitation without the help of any application, the patient and the professional need to be physically present in the same place. These patients are often elderly or people with limited mobility. Interruptions and postponements of treatment may result from patients’ need to commute to clinics and offices.

Although there are tools and applications that enable remote rehabilitation, many of them impose restrictions on the type of sensor used to capture the patient’s movements. Some tools do not have data analysis capabilities or do not provide feedback to physiotherapists. Those that eventually have all the necessary resources are expensive, making their use unfeasible.

Currently, digital devices such as notebooks, tablets and smartphones are very accessible and a considerable part of the population uses them on a daily basis, making it unusual to find someone who does not have access to at least one of these devices. Applications that can run on multiple devices have a great advantage, as their functionalities can be used anytime, anywhere.

1.2 Objectives

The main objective of this work was to develop a system to enable the remote assessment of patients undergoing physical rehabilitation, with feedback to the physiotherapist responsible for the treatment. The proposed system detects the user’s body gestures, being able to capture relevant data for the rehabilitation process, such as the angle of the movement performed and the number of repetitions.

A system was developed to allow healthcare professionals to monitor patients remotely. For the patient, a graphical system was developed that provides visual cues indicating whether the proposed exercises were performed correctly. In this way, the system proposed in this article provides safety and convenience for the professional and for the patient, who can perform the tasks at home or in another safe place. The professional evaluates the patient, providing the necessary guidance so that the treatment is carried out correctly and efficiently.

The contribution of this article is twofold. Firstly, a software architecture was proposed that integrates a graphical system to assist patients in carrying out prescribed exercises. The second contribution is related to the accessibility of this proposal, as it focuses on low-cost devices, especially mobile devices.

1.3 Methodology

Initially, a bibliographic survey was carried out to determine which methods and libraries would be most viable for the development of this project. This survey also aimed to identify and understand the functioning of other applications that use computer vision and related technologies to assist in the rehabilitation process.

Experimental studies were conducted after completing a preliminary version of the system. Qualitative analyses were performed with the aim of validating the usability and functionality of the system. Experimental tests were conducted on the application and forms were made available to collect information about the volunteers’ experience. Through testing, we obtained data that made it possible to analyze the effectiveness and future usability of the application. Part of the suggestions made throughout this experimental study have already been incorporated into the system presented in this article.

2 Related Work

There are several works in the literature with proposals similar to the one presented in this article, the main ones being discussed below.

The work presented in [2] proposes an immersive virtual environment that can be applied to remote collaboration and physical activity training. The framework uses 48 strategically positioned cameras that capture images of human users in 360° and are connected to a computer that performs a 3D reconstruction of the users’ entire bodies and renders their images in virtual space, allowing remote users to interact. In a study carried out by the authors, the movements of a Tai Chi teacher were recorded and made available in advance to a student in training. While the student practiced the movements in real time, the system rendered the student’s images on the screen, allowing him to see himself and the teacher. The study demonstrated that the use of this technology improved the learning of physical movements. Two difficulties with this technology are the speed of the 3D reconstruction and the need for powerful and efficient hardware.

In [3] the authors propose a system to assist patients and professionals in remote physical rehabilitation. In the proposed system, the patient performs the exercises prescribed by the physiotherapist while an RGBD camera (Microsoft Kinect) captures the movements. The system detects and evaluates the exercises through the DTW (Dynamic Time Warping) algorithm, which compares the patient's execution with a reference of the correct exercise stored in the application database. The algorithm ignores the execution time, so the speed at which the patient performs the exercise does not interfere with the comparison or with the evaluation of its correctness. Through a system configuration, the patient can choose to simply perform one of the exercises, without pre-selecting which one. In this configuration, the algorithm detects which exercise in the database is closest to the one performed and uses it for the comparison. The system uses gamification techniques, such as star scores and levels, which the patient achieves by filling an experience bar through correct executions, in order to keep the patient motivated to perform the prescribed exercises and to ensure adherence to physiotherapy in this remote mode. The system’s feedback is displayed immediately on the screen, and the results are then sent to the physiotherapist to assess the patient. Tests were carried out regarding the performance of the algorithm, as well as the usefulness and ease of use of the system. As for performance, the algorithm proved to be fast, regardless of the number of joints detected in the exercise. The need for RGBD equipment, such as Kinect, is one of the limitations of this proposal.
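To make the time-invariance of DTW concrete, the following is a minimal sketch of the textbook dynamic-programming formulation, not the implementation used in [3]. The function name `dtw_distance` and the three angle sequences are hypothetical and chosen only for illustration; each sequence stands for one joint angle sampled over a repetition.

```python
from math import inf


def dtw_distance(a, b):
    """Textbook DTW between two 1-D sequences, using |x - y| as local cost."""
    n, m = len(a), len(b)
    # cost[i][j] = cheapest alignment of the first i items of a with the first j of b
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(a[i - 1] - b[j - 1])
            cost[i][j] = local + min(
                cost[i - 1][j],      # a advances, b repeats a sample
                cost[i][j - 1],      # b advances, a repeats a sample
                cost[i - 1][j - 1],  # both advance
            )
    return cost[n][m]


# Hypothetical shoulder-angle traces in degrees (illustrative values only).
reference = [0, 30, 60, 90, 60, 30, 0]
slow = [0, 0, 30, 30, 60, 60, 90, 90, 60, 60, 30, 30, 0, 0]  # same shape, half speed
shallow = [0, 20, 40, 50, 40, 20, 0]                          # never reaches 90

print(dtw_distance(reference, slow))
print(dtw_distance(reference, shallow))
```

The slower execution aligns perfectly with the reference because DTW may repeat samples, whereas the shallower execution accumulates a positive cost, which is the behavior the paragraph above describes.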

The work proposed in [4] makes two main contributions. The first is a modular neural network divided into two modules. The first module detects the execution of physiotherapeutic activities. The second module measures and evaluates the correctness of the execution of these activities. The second contribution is the expansion of the database used by this neural network. In the proposed system, the patient performs a series of physical therapy exercises while their movements are captured by a standard camera. The OpenPose library is then used to detect the joints of the human body. From the detected joints, kinematic data is collected and the angles reached during the exercise are calculated. This data is stored in the database for testing and training. This way, the database is constantly updated with new information, with the aim of training the measurement module. The detection module, in turn, identifies the execution of the exercise, receives the angles and associates them with the corresponding exercise in the database. The measurement module evaluates the accuracy of the execution against the angle data considered correct in the database, and the responses and corrections are then displayed to the user. In an experimental study, a database was built and the detection and measurement modules were trained, obtaining an accuracy of approximately 90%. No qualitative analysis was carried out in this study, nor was a system made available to test the application.

In [5], the authors present a telemedicine tool to assist in the remote rehabilitation of patients who have suffered a stroke. The tool uses an open variant of the DTW (Dynamic Time Warping) algorithm to identify and classify the correctness of exercises performed by the patient. The objective is a tool that can automatically identify the exercise performed, and classify and evaluate its execution, without the patient having to pre-select the exercise; the system identifies the moment at which the patient starts and ends the execution. This functionality aims to promote greater accessibility for patients with more severely impaired cognitive and functional abilities, as can occur with people who have had a stroke. An RGBD camera was used to detect patients’ movements. To classify and evaluate the correctness of the exercises, the system compares the exercise performed with a reference execution of the same exercise correctly performed by the physiotherapist. To test the tool, a user was monitored while performing a routine of 3 physical therapy exercises. The experimental results showed that the algorithm is efficient, identifying and evaluating the exercises correctly.

More and more people have become sedentary and exercise less, and this can often lead to greater problems such as obesity and other diseases. Motivated by this observation, [6] presents a cyber-physical system to measure and analyze the physical activities performed by the user. The application uses a holographic virtual trainer to guide the user in performing exercises and can be controlled by voice commands and gestures. Its features include detecting the beginning and end of a series of exercises, measuring the duration and the number of repetitions, measuring the deviation of the exercise performed based on configuration rules, and displaying information about the user's environment so that it can be better adapted to the task, if necessary. The system uses 3 RGBD cameras to detect the user’s movements and Microsoft HoloLens, a mixed reality device, so that the user can visualize the system. For the CO2 and temperature environmental sensors, the smart and private open source sensor station was used. The system also requires a high-performance computer to process the data. When the entire system is connected and online, an RGBD camera detects and collects information from the user’s face and movements, and the environmental sensors collect information about the user’s environment. The collected information is sent to the workstation, which processes it and displays it to the user on the Microsoft HoloLens. Thus, the user can view their avatar and the holographic virtual trainer, taking advantage of the system’s functionalities.

With the aim of assisting doctors and physiotherapists in recognizing their patients’ gait movements in the remote rehabilitation process, an application is proposed in [7]. The processing performed by the application is divided into preprocessing and classification. In the first stage, the patient’s movements are captured on video using common RGB cameras. With the StarRGB technique, captured videos are transformed into RGB images and, to reduce computational cost, the images are resized. In the second stage, movement classification is performed by the proposed convolutional neural network and by baseline models that use transfer learning. The algorithm receives as input the images resulting from the first stage, extracts information from them and classifies which exercise was performed. The system proved to be efficient, reaching an average accuracy of 99.64% over the six classes of movements analyzed.

Among the related works mentioned above, we can highlight [3] as the most similar to the one proposed in this article. As the main differences, we can mention the fact that in our system the user must perform the exercise prescribed by the healthcare professional, and not a random exercise. The system will check whether the execution of this specific exercise is correct, within the parameters specified by the professional. Our system also does not require an RGBD camera (like Kinect), but rather a simple monocular camera like a webcam or smartphone camera. The article presented in [4], despite using libraries similar to those used in our architecture, does not present an application for general use and does not focus on mobile devices. We believe that the possibility of using smartphones and tablets makes the usability and reach of the system proposed in this article much broader, facilitating the adoption of our proposal in real case scenarios.

3 Fundamentals

3.1 Computer Vision

Computer vision is an area of computing that aims to enable machines and computers to understand the world around them through images, normally obtained through a camera. The idea is to simulate human vision by allowing computers to analyze, interpret and extract information from images and videos. This information can be used to make decisions or to generate relevant data for a future application.

The concept of computer vision is broad and its application is possible in various sectors. Studies and applications that use computer vision in the areas of medicine and rehabilitation have allowed healthcare professionals to obtain better assessments and more accurate diagnoses of patients.

3.2 PoseNet

To perform pose estimation, computer vision techniques that detect bodies in images and videos are used. In this project we use PoseNet, which is a monocular relocalization library [8]. The library employs a convolutional neural network, trained end to end, that regresses from a single monocular RGB image the six-degree-of-freedom pose of the camera relative to a scene, that is, it can perform image recognition.

To train the neural network, two techniques were used: an automated method of labeling data using structure from motion to generate large sets of pose regression data from a camera; and transfer learning that trains a pose regressor. Training starts from a network pre-trained on large image recognition datasets, like a classifier.

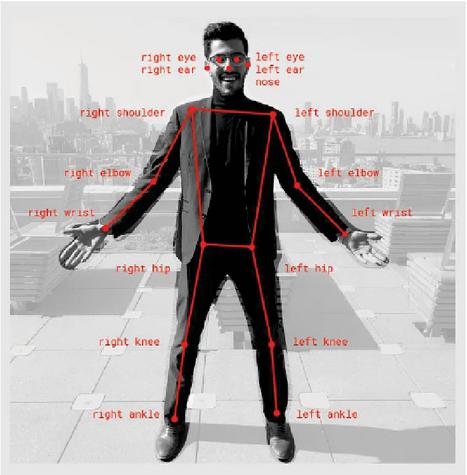

The library can detect the main human joints in a video or image, which are called key points, such as shoulders, knees, elbows and wrists, as illustrated in Figure 1.

Figure 1 Main joints of the human body (key points) that are detected by PoseNet. Available at [9].

For the most part, pose detection systems require hardware with great computational power and/or specific equipment to be processed in real time. An advantage of using PoseNet is that any individual with a basic notebook or smartphone can run the application and obtain a pose estimation.

3.3 Exercise Characterization

This section will present the exercises that compose the database of the proposed system, details on their execution and precautions during the movement. The entire description of the exercises was carried out by health professionals who collaborate in our project and has been added in this section for completeness.

Our system provides some exercises for the upper limbs, such as lateral raises and shoulder presses with dumbbells, which are performed in the frontal plane. The dumbbell lateral raise consists of shoulder abduction and scapulothoracic upward rotation. According to [10], the main muscles recruited in shoulder abduction are the deltoid with emphasis on the acromial portion and the supraspinatus.

According to [11], to perform the lateral raise with dumbbells, the individual must stand in an orthostatic position, feet shoulder- or hip-width apart, with knees slightly bent, torso erect and eyes looking straight ahead. With the arms at the sides and a dumbbell of the same weight in each hand, the individual raises the arms to shoulder height, performing an abduction, and then returns to the starting position. During execution, it is important to observe the cadence of the movement, adapt the load and not fully extend the elbows. To avoid common mistakes such as leaning the trunk and performing additional movements in the spine, it is necessary to maintain good posture, activating the core muscles. Furthermore, it is important not to go beyond the shoulder line, to avoid subacromial impingement.

The seated dumbbell press is a multi-joint exercise that consists of shoulder abduction, scapulothoracic upward rotation and elbow extension. These actions, according to [10], have as their prime movers the deltoid and supraspinatus muscles; the serratus anterior and trapezius (descending and ascending parts); and the triceps brachii and anconeus, respectively. According to [11], for its correct execution, the individual must adjust the seat so that the thighs are approximately parallel to the ground and the feet are firmly on the ground. The individual then holds the dumbbells with a pronated grip and, with the elbows pointing toward the ground, performs shoulder abduction and elbow extension, pushing the dumbbells upwards, until the desired number of repetitions is reached.

The proposed system also provides exercises for the lower limbs: hip abduction, free squats, sumo squats and side squats.

Hip abduction is an exercise performed in the frontal plane and its movement consists of moving the lower limb away from the midline of the body. The main abductor muscles, according to [10], are the gluteus medius, gluteus minimus and tensor fasciae latae. Hip abduction can be done with ankle weights or on machines, both in lateral decubitus and in an upright position, as presented in the application. In this case, its execution consists of abducting the hip to the greatest possible amplitude, without tilting the trunk, and then returning to the starting position, repeating continuously according to the professional’s prescription. It is necessary to keep the core muscles activated to avoid compensations and tilts of the trunk, as well as accentuation of the lumbar curvature.

The squat, in turn, is a multi-joint exercise and occurs in the sagittal plane. Osteokinematic movement consists of hip and knee extension, as well as plantar flexion. Therefore, according to [10], the main muscle groups involved are the gluteus maximus, hamstrings, posterior fibers of the adductor magnus, quadriceps and triceps surae, in addition to the core stabilizing muscles. According to [11], its execution consists of placing the feet hip- and shoulder-width apart, pointed slightly to the sides, so that the knees are aligned with the feet. In this position, the hips and knees are flexed to the greatest possible amplitude, without losing the natural curvatures of the spine. The individual then returns to the starting position and performs repetitions continuously. Some necessary precautions are maintaining the positions of the feet and knees, not allowing them to move towards the midline of the body, keeping the trunk erect and the natural curvatures of the spine, and not lifting the heels off the ground.

The squat has some variations and ways of implementing loads. One of them is the sumo squat, a variation in which the feet are more abducted and pointed laterally, forming a 45° angle. In this variation, the hip adductor muscles are in a more favorable position for their action, which increases their recruitment.

Another variation of the squat present in our system is the side squat, a unilateral movement in which the feet are placed further apart than the hip line. The body moves to one side, through hip abduction, promoting weight transfer and knee flexion up to approximately 90°, while the other leg remains extended. The individual then returns to the starting position and repeats the movement, alternating sides without interruption until the proposed series is completed.

4 Proposed System

In this paper we present a web-based system that aims, through computer vision techniques, to allow the remote assessment of a patient undergoing physical rehabilitation by a professional, in an efficient and safe way for both the patient and the person responsible for their treatment. The main flow of the system consists of allowing the prescription of exercises by healthcare professionals, followed by the execution of these exercises by patients, capturing these executions through a camera. In this way, the system is capable of processing images of the exercise, validating correct execution and notifying the responsible professional.

4.1 System overview

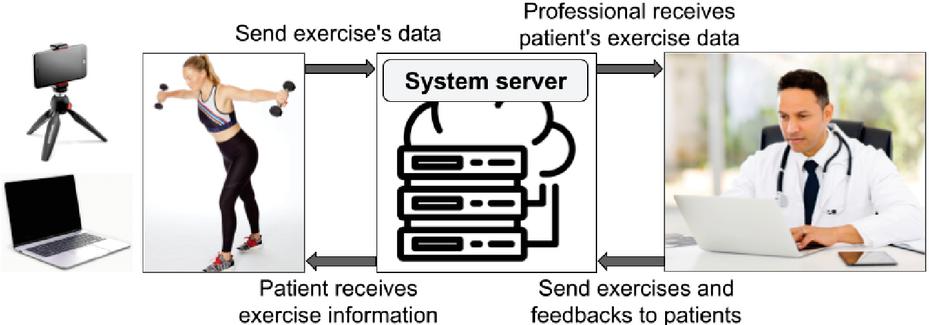

The software architecture built for the development of our solution is composed of a front-end developed in JavaScript and a back-end developed with TensorFlow pose-detection 2.0, TensorFlow tfjs-backend-webgl 3.19, MySQL 2.18 and other auxiliary libraries. An overview of our system can be seen in Figure 2.

Figure 2 Data flow of our system.

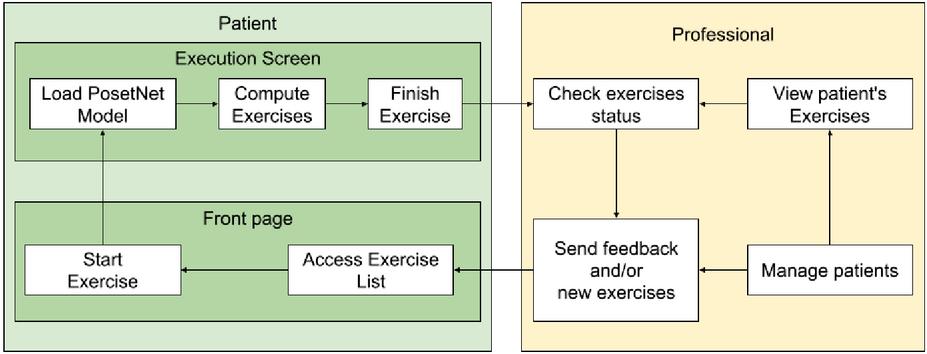

The proposed system has login and password authentication for access and has 3 types of users: administrator, professional and patient. When registering in the system, every patient is linked to a professional, also registered, who will monitor them. The system allows the patient and the professional responsible for them to exchange messages and feedback regarding the exercises. The main activities for the two major user profiles can be seen in Figure 3.

Figure 3 Main activities for the two major user profiles (patient and professional).

When prescribing an exercise for the patient, the professional must fill in data such as number of executions, correct angles for each exercise and execution time. This way, each exercise can be prescribed and targeted to each patient in a unique way, considering their problem and needs.
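As an illustration of the data a prescription carries, the sketch below models one prescribed exercise as a small validated record. It is only a sketch under stated assumptions: the class name `Prescription`, every field name, the 0 to 180 degree range and the interpretation of the execution time as a per-execution limit in seconds are hypothetical and are not taken from the system's actual database schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Prescription:
    """One exercise prescribed to one patient (all field names are hypothetical)."""

    exercise: str               # e.g. "lateral raise"
    executions_per_set: int     # number of executions in each set
    sets: int                   # number of sets
    target_angle_deg: float     # joint angle that counts as a correct execution
    execution_time_s: float     # assumed here to be a time limit per execution

    def __post_init__(self):
        # Reject values that cannot describe a real prescription.
        if self.executions_per_set <= 0 or self.sets <= 0:
            raise ValueError("executions and sets must be positive")
        if not 0.0 <= self.target_angle_deg <= 180.0:
            raise ValueError("target angle must lie between 0 and 180 degrees")
        if self.execution_time_s <= 0:
            raise ValueError("execution time must be positive")

    def total_executions(self) -> int:
        """Total number of executions the patient is expected to complete."""
        return self.executions_per_set * self.sets


plan = Prescription("lateral raise", executions_per_set=10, sets=3,
                    target_angle_deg=90.0, execution_time_s=4.0)
print(plan.total_executions())
```

Keeping the record immutable and validating it on creation means that a patient-side view never has to handle a half-filled prescription; whether the real system enforces this in the same way is not stated in the article.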

The administrator is the user responsible for managing the system and can perform functions such as viewing exercises and users registered in the system, registering new users and linking patients to a specific professional. In the system, users with a professional profile can view the patients linked to them, view patients’ exercise lists, check exercise performance status and information, and prescribe new exercises.

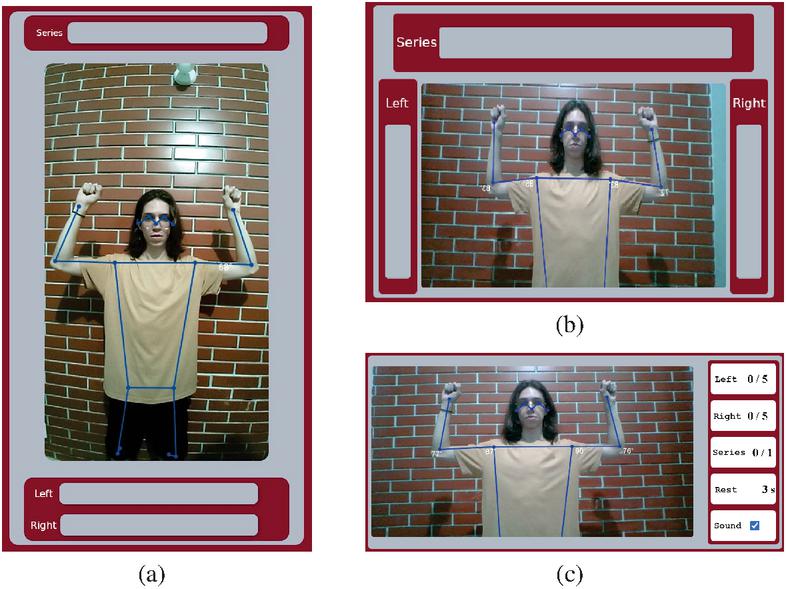

Users with a patient profile are the end users of the system. These users are able to perform activities such as viewing and editing their personal data, viewing their respective exercise lists and executing them. When choosing to perform an exercise, the patient is redirected to the execution screen, as illustrated in Figure 4.c. On this screen, it is possible to view the data of the exercises indicated by the professional for execution. In the image captured by the camera, the application identifies, in real time, the joints of interest for a given exercise. When the patient performs the exercise, the system identifies and displays on the screen the angles of the movement and whether a complete and correct execution was carried out, in accordance with the parameters prescribed by the professional. The application thus validates the execution of the exercise and shows on the screen whether it was completed correctly.

Figure 4 Execution screen on different devices. (a) and (b) present the interface on a mobile device in portrait and landscape modes. (c) presents the complete interface running on a computer.
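The angle of a movement can be derived from three detected key points with elementary vector geometry. The sketch below shows one common way to do this; it is an assumption about how such a value might be computed, not the code of the proposed system. The function name `joint_angle` and the pixel coordinates are hypothetical, and key points are assumed to be (x, y) positions in image space.

```python
import math


def joint_angle(a, b, c):
    """Return the angle ABC in degrees, measured at the middle key point b.

    a, b and c are (x, y) positions, for example hip, shoulder and elbow
    when measuring shoulder abduction in the frontal plane.
    """
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(*v1)
    n2 = math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("key points must not coincide with the joint")
    cos_angle = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    # Clamp to [-1, 1] to guard against floating-point drift before acos.
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))


# Hypothetical pixel coordinates (y grows downwards, as in image space).
hip, shoulder = (100, 300), (100, 100)
arm_at_side = (100, 200)       # elbow straight below the shoulder
arm_horizontal = (200, 100)    # elbow level with the shoulder

print(joint_angle(hip, shoulder, arm_at_side))
print(joint_angle(hip, shoulder, arm_horizontal))
```

With the arm at the side the measured angle is close to 0 degrees, and with the arm raised to shoulder height it is close to 90 degrees, which matches the amplitude described for the lateral raise.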

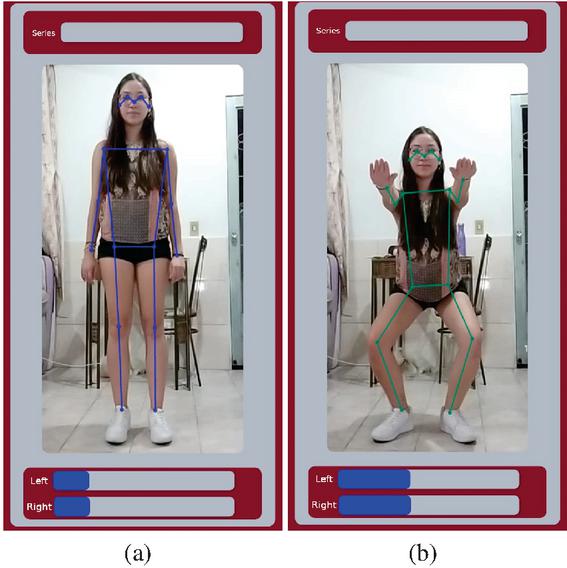

Figure 5 Exercise in resting position (a) and in the desired amplitude (b).

If the system is used on a mobile device, the interface is automatically changed so that the number of executions and the number of completed sets are more easily seen from a distance. The system displays different interfaces depending on whether the view is in landscape or portrait mode, as can be seen in Figures 4.a and 4.b.

The system has visual cues that indicate whether the exercise was performed correctly. These visual cues are presented by a representation of the main joints that make up the human body, connected by lines. When in a resting position, these visual cues are represented in blue while the green color indicates that the exercise has reached the desired amplitude, as illustrated in Figure 5.
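The blue and green cues, together with repetition counting, can be pictured as a two-state machine driven by the measured joint angle. The following sketch is one possible formulation under stated assumptions, not the system's actual logic: the class name `RepetitionCounter`, the separate rest threshold and the rule that a repetition is counted when the limb returns to rest after reaching the target are all hypothetical. It also assumes an exercise in which the angle increases towards the target, such as the lateral raise.

```python
REST_COLOR = "blue"      # skeleton color while the target has not been reached
TARGET_COLOR = "green"   # skeleton color once the desired amplitude is reached


class RepetitionCounter:
    """Counts repetitions from a stream of joint angles, using two thresholds."""

    def __init__(self, target_deg, rest_deg):
        if rest_deg >= target_deg:
            raise ValueError("rest threshold must be below the target angle")
        self.target_deg = target_deg
        self.rest_deg = rest_deg
        self.count = 0
        self._reached_target = False

    def update(self, angle_deg):
        """Process one frame and return the color to draw the skeleton in."""
        if not self._reached_target and angle_deg >= self.target_deg:
            self._reached_target = True
        elif self._reached_target and angle_deg <= self.rest_deg:
            # Back at rest after reaching the target: one full repetition.
            self._reached_target = False
            self.count += 1
        return TARGET_COLOR if angle_deg >= self.target_deg else REST_COLOR


# Hypothetical per-frame shoulder angles for two lateral raises.
frames = [10, 45, 85, 92, 60, 12, 50, 95, 91, 20, 8]
counter = RepetitionCounter(target_deg=90, rest_deg=20)
colors = [counter.update(angle) for angle in frames]
print(colors)
print(counter.count)
```

Using two thresholds instead of one avoids counting several repetitions when a noisy angle estimate hovers around the target value.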

5 Experiments and Evaluation

To test and evaluate the usability and functionality of the system from the users’ perspective, and to verify the application’s potential to help patients and professionals, a qualitative evaluation was performed together with a field study.

To conduct this assessment, participants used the system and then answered a questionnaire with questions about their profiles and the experience they had with the system. A total of 23 individuals aged 18 to 40 participated in the evaluation. The participants were students of a Physical Education course at our institution and graduated professionals: 14 had complete higher education and 9 had incomplete higher education. The professional and patient profiles were assigned to the participants at random, resulting in 3 professionals and 20 patients. For both profiles, participants were registered in the system and received the following instructions.

• Professional: The participant’s login and password were made available along with a manual for using the system, taking into account the professional’s profile view. The participant was instructed to access the application with their pre-registered login and password. Upon entering, the user should access the registration page and register some patients, prescribing 2 exercises for each of them and then, after the patients have completed the exercises, check whether the exercises had been completed correctly.

• Patient: For this profile, in addition to the pre-registration of the evaluation participant, 2 exercises were also prescribed in advance. The participant’s login and password were also provided along with a manual for using the system. The participant was instructed to access the system and perform the 2 exercises already prescribed, following the number of executions, angles and number of sets.

After carrying out the previous practical steps, a form with 9 questions was made available. There were characterization questions such as age, gender, level of education and, if they had higher education (complete or incomplete). The other questions were related to the user experience with the application. A Likert scale, commonly adopted in opinion and satisfaction surveys, was used, with response options ranging from “strongly agree” to “strongly disagree”.

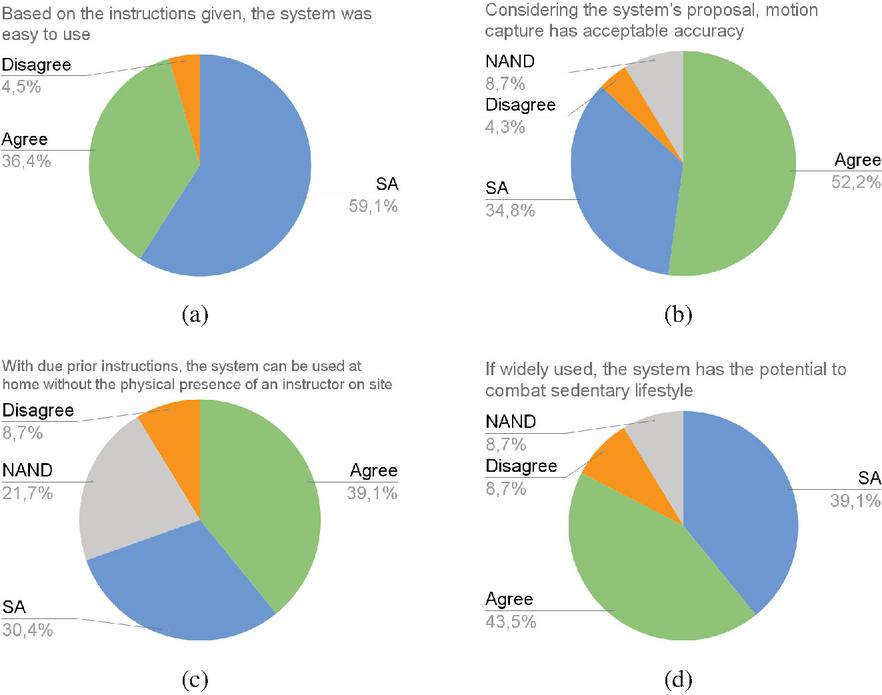

The remaining questions in the questionnaire were as follows:

• Based on the instructions given, the system was easy to use;

• Considering the system’s proposal, motion capture has acceptable accuracy;

• With due prior instructions, the system can be used at home without the physical presence of an instructor on site;

• If widely used, the system has the potential to combat sedentary lifestyle.

At the end, an open-ended question was included, where each participant could describe their opinion on which part of the system could be improved.

The results of the questions regarding the participants’ experience with the system are illustrated in the graphs in Figure 6. It is observed that 95.7% rated the system as easy to use based on the instructions given, while only 4.3% disagreed. With answers of “agree” or “strongly agree”, 87% of participants considered that the system has acceptable accuracy for capturing movements, 4.3% disagreed, and the remainder responded that they “neither agree nor disagree”. For the statement that, with due prior instructions, the system can be used at home without the physical presence of an instructor on site, most responses were also favorable, with 69.5% answering “agree” or “strongly agree”. Participants were also in favor of the application as a means to combat sedentary lifestyle, if it is widely used: only 8.7% disagreed with this statement, while 43.5% agreed and 39.1% strongly agreed.

Figure 6 Qualitative evaluation results: answers given by participants regarding their experience using our system. Legend: SA = “Strongly agree”; NAND = “Neither agree nor disagree”.
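Since the evaluation had 23 participants, each reported percentage should correspond to a whole number of respondents. The short check below recovers the nearest head count for each figure quoted in the text. The counts are inferred from the percentages and are not reported in the article; the helper name `nearest_count` is hypothetical.

```python
PARTICIPANTS = 23  # sample size stated in the evaluation

# Percentages quoted in the text for the four Likert statements.
reported = [95.7, 4.3, 87.0, 69.5, 8.7, 43.5, 39.1]


def nearest_count(percentage, total=PARTICIPANTS):
    """Whole number of respondents closest to the given percentage."""
    return round(percentage * total / 100)


for pct in reported:
    count = nearest_count(pct)
    exact = 100 * count / PARTICIPANTS
    print(f"{pct:5.1f}% ~ {count:2d} of {PARTICIPANTS} (exact: {exact:.2f}%)")
```

Under this reading every figure is within a tenth of a percentage point of a whole-number count. The 69.5% value corresponds to 16 of 23, whose exact value of about 69.57% would round to 69.6%, so it appears to have been truncated rather than rounded.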

In the open-ended question, suggestions were given by the participants and some of them have already been implemented in the version of the system presented in this article as described below:

• Inclusion of some type of sound or beep when reaching the ideal angle during execution, especially when using mobile devices.

• In the application, when performing the exercise, the patient can view on the screen the angles and key points that the system identifies on their body.

• When reaching the correct angle, the visualization of these key points turns green, signaling that the correct angles have been reached.

• We understand that, in addition to visual signaling, audio signaling with a beep offers better assistance to the patient, especially if the screen used by the patient is small.

The only suggestion not yet implemented is related to the inclusion of voice support to guide the execution of the exercise.
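The visual and audio signaling described above can be illustrated with a minimal sketch. It assumes that each key point is a 2D image coordinate and that the target angle is considered reached within a tolerance; the names `jointAngle` and `angleFeedback`, the tolerance parameter and the `"default"` color are hypothetical and do not come from the system's source code.

```typescript
// All names here are hypothetical; the paper does not list its implementation.
interface Point {
  x: number;
  y: number;
}

// Angle in degrees at joint `b`, formed by the segments b->a and b->c
// (for example shoulder-elbow-wrist for the elbow angle).
function jointAngle(a: Point, b: Point, c: Point): number {
  const ux = a.x - b.x;
  const uy = a.y - b.y;
  const vx = c.x - b.x;
  const vy = c.y - b.y;
  const dot = ux * vx + uy * vy;
  const cross = ux * vy - uy * vx;
  return (Math.abs(Math.atan2(cross, dot)) * 180) / Math.PI;
}

interface Feedback {
  color: "green" | "default"; // green while the target angle is reached
  beep: boolean;              // true only on the frame the target is first reached
}

// Assumption: the target counts as reached when the measured angle is
// within `tolerance` degrees of it.
function angleFeedback(
  angle: number,
  target: number,
  tolerance: number,
  wasOnTarget: boolean
): Feedback {
  const onTarget = Math.abs(angle - target) <= tolerance;
  return {
    color: onTarget ? "green" : "default",
    beep: onTarget && !wasOnTarget,
  };
}

// Usage: a right angle at the elbow, with a hypothetical 90-degree target.
const shoulder: Point = { x: 0, y: 1 };
const elbow: Point = { x: 0, y: 0 };
const wrist: Point = { x: 1, y: 0 };
const elbowAngle = jointAngle(shoulder, elbow, wrist);
console.log(elbowAngle, angleFeedback(elbowAngle, 90, 5, false));
```

Triggering the beep only on the frame in which the target is first reached avoids a continuous sound while the patient holds the position.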

Some participants reported difficulty in getting the system to record the execution of the exercise. In some cases, observing the participants showed that their execution had limitations, such as moving too quickly or performing the movement without the arm being correctly extended. In these cases the execution was not incorrect, but small details made it difficult for the application to identify it. Furthermore, some participants reported that in some exercise series the repetitions of one side were captured before those of the other side, so that the participant sometimes had to perform additional repetitions for the series to count as complete.
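One possible way to picture these difficulties is the sketch below. It is not the system's actual counting logic: it assumes a per-frame angle sample, a repetition that is counted when the angle enters a target window after the limb has returned to a rest position, and a series that is complete only when both sides reach the prescribed number of repetitions. All names and thresholds are hypothetical.

```typescript
// Hypothetical sketch; none of these names or thresholds come from the paper.
interface RepState {
  count: number;  // repetitions counted so far
  armed: boolean; // true once the limb has returned to the rest position
}

// Feed one per-frame angle sample (degrees) and return the updated state.
function updateReps(
  state: RepState,
  angle: number,
  target: number,
  tolerance: number,
  rest: number
): RepState {
  if (state.armed && Math.abs(angle - target) <= tolerance) {
    return { count: state.count + 1, armed: false };
  }
  if (!state.armed && angle <= rest) {
    return { count: state.count, armed: true };
  }
  return state;
}

// Count the repetitions in a sequence of angle samples.
function countReps(samples: number[], target: number, tolerance: number, rest: number): number {
  let state: RepState = { count: 0, armed: true };
  for (const angle of samples) {
    state = updateReps(state, angle, target, tolerance, rest);
  }
  return state.count;
}

// Assumption: a series is complete only when both sides reach the prescribed count.
function seriesComplete(left: number, right: number, repsPerSeries: number): boolean {
  return Math.min(left, right) >= repsPerSeries;
}

// A slow execution produces samples inside the 80-100 degree window.
const slow = countReps([20, 60, 90, 60, 20, 60, 90, 20], 90, 10, 30);
// A fast or incomplete execution may never produce a sample inside the window.
const fast = countReps([20, 70, 20, 70, 20], 90, 10, 30);
console.log(slow, fast, seriesComplete(10, 8, 10));
```

Under these assumptions, a movement that is too fast for the frame rate, or that stops short of full extension, yields no sample inside the target window and is not counted, and a side that lags behind keeps the series open until it catches up.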

5.1 Other Experiments

By invitation of Professor André Calil, our system was used as support in the course FEF056 (Biomechanics), where a demonstration of the system was carried out. The professor highlighted the system’s potential for various applications, including use in the FAEFID laboratories. One student volunteered to test the system in class (Figure 7), and the other students’ impressions about performance and possible improvements were collected for analysis.

Figure 7 Photograph taken during a software experiment carried out in collaboration with Professor André Calil in the Biomechanics course at the Faculty of Physical Education.

6 Conclusion

This work presented a web-based software architecture that, through computer vision techniques, allows individual remote assessment of patients undergoing physical rehabilitation. By accessing the application, a professional can prescribe exercises for a specific patient. This patient will be able to perform, in the application, the prescribed exercises with the movements being captured by a camera. The application is capable of assisting and guiding the patient regarding the correct execution of the exercises, displaying and indicating the correct angulation and also counting the series performed. Finally, through the system, the professional can evaluate and provide feedback to the patient.

The development of the system and the capture of movements through the camera were supported by the PoseNet library, a convolutional neural network capable of detecting the main joints and human movements through a camera.

To assess the usability of the system, a qualitative evaluation was carried out with professionals in the field of Physical Education. In this experimental study, 23 individuals used the application with patient and professional profiles. The system was well accepted by participants, with the majority considering it to be an easy-to-use system with good potential for combating a sedentary lifestyle.

In future work, we intend to expand the testing of the system to real patients and to evaluate other motion capture models.

Acknowledgment

The authors of this work would like to thank the Minas Gerais Research Funding Foundation (Fapemig) for the financial support provided to carry out this research. Fapemig’s support was fundamental to the development of this study, allowing the investigation of new perspectives and contributing to the advancement of knowledge in our research area.

References

[1] Jung Ha Park, Ji Hyun Moon, Hyeon Ju Kim, Mi Hee Kong, and Yun Hwan Oh. Sedentary lifestyle: overview of updated evidence of potential health risks. Korean Journal of Family Medicine, 41(6):365, 2020.

[2] Gregorij Kurillo, Ruzena Bajcsy, Klara Nahrstedt, and Oliver Kreylos. Immersive 3d environment for remote collaboration and training of physical activities. In 2008 IEEE Virtual Reality Conference, pages 269–270. IEEE, 2008.

[3] Santiago Schez-Sobrino, D Vallejo, Dorothy Ndedi Monekosso, C Glez-Morcillo, and Paolo Remagnino. A distributed gamified system based on automatic assessment of physical exercises to promote remote physical rehabilitation. IEEE Access, 8:91424–91434, 2020.

[4] João A Francisco and Paulo Sérgio Rodrigues. Computer vision based on a modular neural network for automatic assessment of physical therapy rehabilitation activities. IEEE Transactions on Neural Systems and Rehabilitation Engineering, 2022.

[5] Santiago Schez-Sobrino, Dorothy N Monekosso, Paolo Remagnino, David Vallejo, and Carlos Glez-Morcillo. Automatic recognition of physical exercises performed by stroke survivors to improve remote rehabilitation. In 2019 International Conference on Multimedia Analysis and Pattern Recognition (MAPR), pages 1–6. IEEE, 2019.

[6] Zdravko Naydenov, Agata Manolova, Krasimir Tonchev, Nikolay Neshov, and Vladimir Poulkov. Holographic virtual coach to enable measurement and analysis of physical activities. In 2021 44th International Conference on Telecommunications and Signal Processing (TSP), pages 288–291. IEEE, 2021.

[7] Wyctor Fogos Da Rocha, Pablo Pereira E Silva, Luiza Emília VN Mazzoni, Clebeson Canuto, Rafhael Milanezi De Andrade, Antônio Bent, Mariana Rampinelli, and Douglas Aumonfrey. The use of convolutional neural network and starrgb technique for gait movements recognition in remote physiotherapy. In 2021 International Conference on Electrical, Computer, Communications and Mechatronics Engineering (ICECCME), pages 01–06. IEEE, 2021.

[8] Alex Kendall, Matthew Grimes, and Roberto Cipolla. Posenet: A convolutional network for real-time 6-dof camera relocalization. In Proceedings of the IEEE International Conference on Computer Vision, pages 2938–2946, 2015.

[9] Dan Oved. Real-time human pose estimation in the browser with tensorflow.js, 2018. Accessed: 30 March 2023.

[10] Donald A Neumann. Cinesiologia do aparelho musculoesquelético: fundamentos para reabilitação. Elsevier Health Sciences, 2010.

[11] National Strength and Conditioning Association (NSCA). Manual de Técnicas de Exercício para Treinamento de Força. Artmed, 2010.

Biographies

Daniel Muller Rezende is an undergraduate student in Computer Science at the Federal University of Juiz de Fora. His research interests are Computer Vision and Computer Graphics.

Paulo Victor de Magalhães Rozatto is an undergraduate student in Computer Science at the Federal University of Juiz de Fora. He has an IT Technician certificate from the Federal Institute of Rio de Janeiro (2018). His research interests are Computer Graphics, Augmented Reality, and Virtual Reality.

Dauane Joice Nascimento de Almeida holds a B.S. in Computer Science from the Federal University of Juiz de Fora. Her research interests are software engineering and software development.

Filipe de Lima Namorato is an undergraduate student in Computer Science at the Federal University of Juiz de Fora. His research interests are Computer Vision and Computer Graphics.

Tatiane Daniele dos Santos is an undergraduate student in Physical Education at the Federal University of Juiz de Fora. Her research interests are entrepreneurship, marketing and innovation in sport.

Rodrigo Luis de Souza da Silva is an Associate Professor in the Department of Computer Science at Federal University of Juiz de Fora. He has a B.S. in Computer Science from the Catholic University of Petropolis (1999), M.S. in Computer Science from Federal University of Rio de Janeiro (2002), Ph.D. in Civil Engineering from Federal University of Rio de Janeiro (2006) and a postdoc in Computer Science from the National Laboratory for Scientific Computing (2008). His main research interests are Augmented Reality, Virtual Reality, Scientific Visualization and Computer Graphics.

Journal of Mobile Multimedia, Vol. 20_4, 879–900.

doi: 10.13052/jmm1550-4646.2045

© 2024 River Publishers