Real-Time Emotion Classification and Prediction Using a Hybrid Facial Expression Recognition Model: Emotion Recognition in Human Resources’ Future

Abhilasha Sharma1, Usha Tiwari1,* and Sushanta Kumar Mandal2

1Sharda University, Greater Noida, U.P., India

2Adamas University, Barasat, Kolkata, W.B, India

E-mail: 2020400359.abhilasha@dr.sharda.ac.in; ushapant@rediffmail.com; sushanta.mandal@gmail.com

*Corresponding Author

Received 08 January 2025; Accepted 01 May 2025

Abstract

Human-Computer Interaction (HCI) and psychology both draw on the broad field of expression theory, in which expression recognition is a vital component and a crucial research area across many disciplines. This study proposes a hybrid model built on a Deep Convolutional Neural Network (DCNN), whose goal is to classify face images into one of seven facial emotion categories. To improve facial feature extraction and filtering depth, the DCNN used in this study adds further convolutional layers, activation functions, and numerous kernels. A pre-trained Haar cascade model was additionally loaded and applied to real-time pictures and video frames to detect facial features. Images from the FER dataset in the Kaggle repository were used, and training and validation were accelerated with Graphics Processing Unit (GPU) processing. This study uses behavioural features to focus on a person’s mental or emotional state, which can help human resource managers gauge emotional engagement within their workforce. The study demonstrates the performance of the proposed design as well as the significance of implementing it in real life.

This paper also examines the future effects of emotion recognition technology on human resources (HR) practices. Emotion recognition tools are being used more frequently as AI and machine learning develop. While these tools could revolutionise HR by offering new ways to gauge employee satisfaction and engagement, they also raise significant privacy and ethical issues. This paper covers the cutting-edge technology, along with an overview of recent research on emotion recognition and its potential applications in HR. Emotions influence decisions, and emotional measurement has broad research applications. Today’s face recognition software can recognise common facial expressions such as happiness, fear, anger, and sadness. Hiring and testing are natural places to apply emotion analysis and recognition in HR. One example is X0PA AI, which uses Microsoft’s Video Indexer to power its analytics and video interviewing capabilities and applies video and audio models to derive insights, including emotional analysis. The method currently used to gauge employee emotions is self-report, which can produce inaccurate results because employees can easily shape their answers toward a socially desirable result. This is why facial recognition software is a useful tool.

Keywords: DCNN, automation, facial emotion recognition, human-machine interaction, computer vision, artificial intelligence (AI).

1 Introduction

Reading emotions from the face and body comes naturally to people. In everyday life, people display their feelings in their facial features to communicate, both at a given moment and throughout their encounters with others, because emotions are tangible and intuitive. Pupil dilation (eye-tracking), skin conductance (EDA/GSR), speech, body language, movement, brain activity (EEG), heart activity (ECG), and facial expressions are just some examples of the quantitative data used to measure how people react to stimuli. Humans have a remarkable capacity for interpreting emotion, which is essential for successful communication; it has been estimated that emotion plays a role in 93% of effective communication.

Different feelings influence decisions and play a crucial role in how people respond and feel psychologically. According to recent psychological studies, social encounters are understood primarily through facial gestures rather than through inner mental states or individual feelings. A crucial yet difficult task in recognising facial emotions is therefore determining the trustworthiness of facial expressions by distinguishing natural expressions from posed ones. In ‘emotion detection technology’, artificial intelligence (AI) programmes analyse and interpret human feelings from body language, speech, and facial gestures. “The HR field has already begun incorporating this technology into its practices to raise employee involvement and performance.” This paper’s goal is to examine how emotion detection technology will affect HR in the future and what that means for employers and employees.

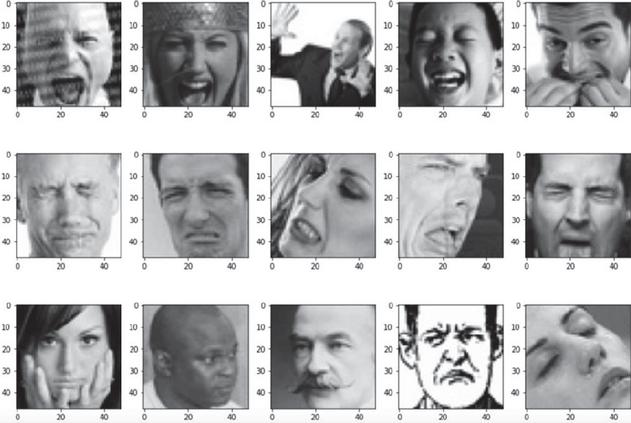

Figure 1 Sample training set images.

Convolutional networks have developed rapidly over the past six years thanks to breakthroughs in deep learning. Computer vision, a multidisciplinary area of study, gives computers the ability to perceive and process visual data at a human level, allowing them to perform tasks previously possible only for human beings. The ultimate goal is to have the network automatically classify pictures into predefined categories and identify which predefined class is most prominent in each image. For optimal human-computer interaction (HCI), computers therefore need a thorough grasp of human feeling.

The term ‘computer vision’ is often used for the practice of teaching a computer to ‘see’ and then informing that ‘seeing’ with human-level cognitive abilities. Deep-learning research has highlighted a gap in the literature: most existing databases contain only well-labelled (posed) pictures captured in controlled settings. This study established the significance of lighting in FER, noting that poor illumination can reduce a model’s accuracy.

To model some crucial derived characteristics, this study employs a Convolutional Neural Network (CNN), and the Haar cascade model is used for real-time face detection. A relatively high level of model precision is needed because of the hand-engineered characteristics and the model’s reliance on prior information.

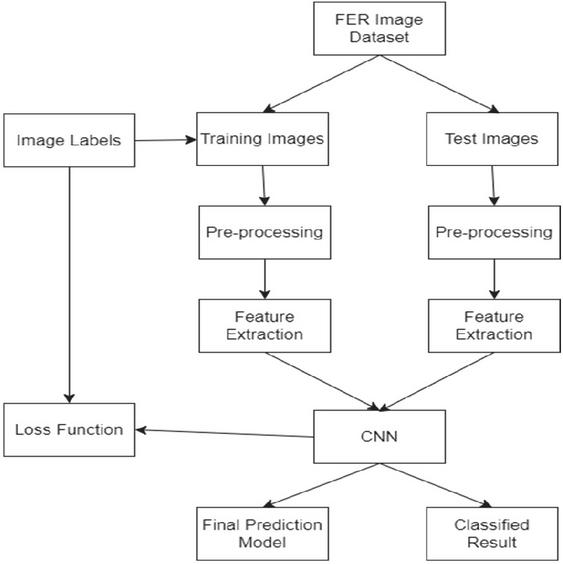

Figure 2 Model training and evaluation have been done in this phase.

Gabor wavelets and Haar wavelets are commonly used in image-based (static) feature extraction methods. Dynamic (sequence-based) methods, by contrast, are expected to model the temporal relationship between successive frames of the input facial-expression sequence. Deep learning techniques such as CNNs have made impressive strides; however, one of deep learning’s biggest drawbacks is the sheer volume of data required to build reliable models.
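To make the image-based route concrete, the following is a minimal NumPy sketch of Gabor feature extraction, assuming a small bank of orientations and the mean absolute filter response as the single feature per filter. The parameter names (ksize, sigma, theta, lambd, gamma, psi) follow the convention of OpenCV’s cv2.getGaborKernel, but the helper names and the pooling choice are illustrative assumptions rather than the pipeline used in this study; Haar-wavelet features are not shown, and ksize is assumed odd.

```python
import numpy as np


def gabor_kernel(ksize, sigma, theta, lambd, gamma=0.5, psi=0.0):
    """Real part of a 2-D Gabor filter: a Gaussian envelope times a cosine carrier."""
    half = ksize // 2
    y, x = np.mgrid[-half:half + 1, -half:half + 1].astype(float)
    # Rotate coordinates so the carrier oscillates along direction theta.
    x_rot = x * np.cos(theta) + y * np.sin(theta)
    y_rot = -x * np.sin(theta) + y * np.cos(theta)
    envelope = np.exp(-(x_rot ** 2 + (gamma * y_rot) ** 2) / (2.0 * sigma ** 2))
    carrier = np.cos(2.0 * np.pi * x_rot / lambd + psi)
    return envelope * carrier


def gabor_features(image, ksize=9, sigma=2.0, lambd=6.0, n_orientations=4):
    """Filter a 2-D grayscale image with a bank of orientations and return the
    mean absolute response of each filter as one feature."""
    pad = ksize // 2
    padded = np.pad(image.astype(float), pad, mode="edge")
    # One ksize x ksize window per output pixel: shape (H, W, ksize, ksize).
    windows = np.lib.stride_tricks.sliding_window_view(padded, (ksize, ksize))
    feats = []
    for k in range(n_orientations):
        kern = gabor_kernel(ksize, sigma, np.pi * k / n_orientations, lambd)
        response = np.einsum("ijkl,kl->ij", windows, kern)  # 2-D correlation
        feats.append(np.abs(response).mean())
    return np.array(feats)
```

A 48x48 face crop yields one feature per orientation; real systems usually keep richer statistics (or the full response maps) across several scales as well.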

Although significant progress has been made in using CNNs to recognise facial emotions, they still have drawbacks, such as lengthy training sessions and poor detection rates in difficult settings. Current datasets have been found to contain too few pictures, many of them taken in highly controlled settings, which prevents deep learning from being applied successfully to FER. These concerns motivated FER methods tailored to photographs gathered online. The goal is to create a real-time, multimedia, intelligent GUI system.

The purpose of this research is to create an automatic facial expression recognition (AFER) system based on a convolutional neural network. Traditional machine learning algorithms rely on hand-crafted features, but these lack the robustness needed to perform the task reliably. We recommend combined LBP-ORB and CNN features as a starting point, as we have found that CNN-based models [16] perform best on current FER tasks.
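The LBP half of the combined LBP-ORB and CNN features can be sketched in a few lines of NumPy. This is the basic 3x3 LBP operator, with histograms pooled over a grid of cells; the grid size, the "greater than or equal" comparison and the bit order are conventional choices assumed for illustration, not details taken from this study. ORB descriptors are not shown, and scikit-image’s skimage.feature.local_binary_pattern provides a tested implementation.

```python
import numpy as np

# Neighbour offsets (dy, dx), clockwise from the top-left; neighbour i sets bit i.
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_image(gray):
    """Basic 3x3 LBP: each interior pixel gets an 8-bit code with one bit per
    neighbour whose value is >= the centre pixel. Output is 2 pixels smaller per axis."""
    g = np.asarray(gray, dtype=np.int32)
    h, w = g.shape
    center = g[1:-1, 1:-1]
    codes = np.zeros_like(center)
    for bit, (dy, dx) in enumerate(OFFSETS):
        neighbour = g[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        codes |= (neighbour >= center).astype(np.int32) << bit
    return codes.astype(np.uint8)


def lbp_histogram(gray, grid=(4, 4)):
    """Concatenate normalised 256-bin LBP histograms computed over a grid of cells."""
    codes = lbp_image(gray)
    hists = []
    for band in np.array_split(codes, grid[0], axis=0):
        for cell in np.array_split(band, grid[1], axis=1):
            hist = np.bincount(cell.ravel(), minlength=256).astype(float)
            hists.append(hist / max(hist.sum(), 1.0))
    return np.concatenate(hists)
```

For a 48x48 input and a 4x4 grid, lbp_histogram returns a 4,096-dimensional vector that can later be fused with CNN features.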

Facial recognition entails numerous processes, including detection, preprocessing, feature extraction, alignment, and identification of faces in photographs. The two primary strategies for feature extraction are geometric feature extraction and techniques based on statistical characteristics. A geometric feature method uses the positions of facial parts as classification features.
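A geometric feature method can be sketched as the set of pairwise distances between landmark positions, normalised for face scale. The landmark coordinates are assumed to come from a separate landmark detector (not shown), and treating the first two points as the eye centres for normalisation is a hypothetical convention chosen for this sketch.

```python
from itertools import combinations

import numpy as np


def geometric_features(landmarks):
    """Pairwise Euclidean distances between facial landmark points, divided by the
    distance between the first two points (hypothetically, the two eye centres)
    so that the features do not depend on the face's size in the image."""
    pts = np.asarray(landmarks, dtype=float)
    scale = np.linalg.norm(pts[0] - pts[1])
    dists = [np.linalg.norm(pts[i] - pts[j])
             for i, j in combinations(range(len(pts)), 2)]
    return np.array(dists) / scale


# Hypothetical landmarks: left eye, right eye, nose tip, mouth centre (x, y).
print(geometric_features([[0.0, 0.0], [2.0, 0.0], [1.0, 1.0], [1.0, 3.0]]))
```

With n landmarks the vector has n(n - 1)/2 entries; an expression such as surprise would, for example, lengthen the nose-to-mouth and mouth-opening distances relative to the eye spacing.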

The presented model can classify facial expressions from a camera feed in real time. The contributions of this paper are as follows:

Convolutional Neural Network (CNN) features, BRIEF features, and Local Binary Patterns (LBP) are combined in a CNN-based approach to identify seven facial expressions.

A four-layer convolutional CNN model is used.

This study shows that combining photos from various databases improves generalisation and increases training accuracy.

The model is shown to be well calibrated to the approach, reaching a training accuracy of over 95% within a limited number of epochs. On the test set, the confusion matrix in Table 1 corresponds to an overall classification accuracy of about 84.9% (970 of 1,142 samples).

1.1 Human Resources (HR) Applications of Emotion Recognition Technology

• Employee engagement and performance management: Emotion recognition can be used to measure employee satisfaction and engagement, giving HR departments important information about the health of their workforce. Through supportive interventions and targeted actions, this information can be used to increase employee engagement and performance.

• Recruitment and selection: During the hiring process, emotion recognition technology can be used to evaluate job candidates’ feelings during interviews, giving a more accurate indication of their suitability for the position.

• Conflict resolution: By offering an unbiased analysis of the emotions of those involved and outlining potential solutions, emotion recognition can be used in HR to settle disputes in the workplace.

• Employee training and development: To evaluate the success of training and pinpoint areas for improvement, employee training programmes can make use of emotion recognition technology.

• Support for mental health: HR can use emotion recognition to support workers who are having mental health issues by keeping track of their emotions and offering timely interventions and support.

These are a few potential uses for emotion recognition technology in human resources. Organizations must carefully weigh the advantages and risks of using this technology in the workplace because it raises significant ethical and privacy issues.

2 Review of Literature

Many academics have spent time and energy over the years investigating this problem. Ekman and Friesen identified seven universal human emotions (anger, fear, disgust, happiness, sadness, surprise, and neutral) that are not influenced by a person’s upbringing or cultural background [1]. Recent research by Sajid et al. on the Facial Recognition Technology (FERET) dataset found that facial asymmetry can be used as a cue for age estimation, with the asymmetry of the right side of the face being easier to identify than that of the left [9]. Studies relevant to this work are reviewed below.

Kahou et al. trained a model on video and still pictures using a recurrent neural network (RNN) combined with a convolutional neural network (CNN) [2]. The videos were taken from the Acted Facial Expressions in the Wild (AFEW) 5.0 collection, and the still pictures were compiled from FER-2013 and the Toronto Face Database. Instead of long short-term memory (LSTM) units, the recurrent part used IRNNs: recurrent networks of rectified linear units (ReLUs) whose recurrent weights are initialised to the identity matrix. IRNNs were a good fit because they offer a straightforward remedy for the vanishing and exploding gradient problem. Overall, this study achieved an accuracy of 52.8%.
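The IRNN idea can be sketched in NumPy: a ReLU recurrence whose recurrent matrix starts as the identity, so that with zero input the hidden state is carried forward unchanged instead of shrinking or blowing up over time. The sizes, the 0.001 input-weight scale and the function names are illustrative assumptions; this is not Kahou et al.’s implementation.

```python
import numpy as np


def irnn_init(input_size, hidden_size, seed=0):
    """IRNN parameters: recurrent weights start as the identity, input weights small."""
    rng = np.random.default_rng(seed)
    return {
        "W_hh": np.eye(hidden_size),                            # identity initialisation
        "W_xh": rng.normal(0.0, 0.001, (hidden_size, input_size)),
        "b": np.zeros(hidden_size),
    }


def irnn_forward(params, inputs):
    """Run h_t = max(0, W_hh h_{t-1} + W_xh x_t + b) over a (T, input_size)
    sequence of per-frame features and return the final hidden state."""
    h = np.zeros_like(params["b"])
    for x_t in inputs:
        h = np.maximum(0.0, params["W_hh"] @ h + params["W_xh"] @ x_t + params["b"])
    return h
```

In a video model, each x_t would be the CNN feature vector of one frame, and the final hidden state would feed a classifier over the emotion classes.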

Pose variation remains a major issue in face recognition. Nwosu et al. addressed pose variation in facial expressions by applying a three-dimensional pose-invariant method based on subject-specific characteristics [3].

Yang et al. propose a neural network model to address errors and subject-to-subject variation in still-image-based FER [4]. Their model uses two convolutional neural networks: one is trained on facial expression datasets, while the other is a DeepID network used to learn identity features. Mou et al. [5] derived group emotion from facial, body, and context characteristics annotated with arousal and valence. Various pooling techniques are used to downsample the inputs and aid generalisation, and a combination of dropout, regularisation, and data augmentation is used to avoid overfitting. Batch normalisation was introduced to combat vanishing and exploding gradients [10, 11].
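Two of the regularisation tools mentioned above, batch normalisation and dropout, reduce to a few lines each. This NumPy sketch shows training-mode behaviour only (the running statistics that batch normalisation uses at inference time are omitted) and is illustrative, not code from the cited studies.

```python
import numpy as np


def batch_norm_train(x, gamma, beta, eps=1e-5):
    """Training-mode batch normalisation: standardise each feature over the batch
    axis (axis 0), then apply the learnable scale gamma and shift beta."""
    mean = x.mean(axis=0)
    var = x.var(axis=0)
    x_hat = (x - mean) / np.sqrt(var + eps)
    return gamma * x_hat + beta


def dropout(x, rate, rng):
    """Inverted dropout: zero a fraction `rate` of activations at random and
    rescale the survivors so the expected activation is unchanged."""
    keep = rng.random(x.shape) >= rate
    return x * keep / (1.0 - rate)
```

Inverted dropout does the rescaling during training, so no change is needed at inference time; dropout is simply switched off.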

From the studies above, we can deduce that many cutting-edge investigations have emphasised a constant improvement in accuracy at the expense of efficiency [12, 13]. Most of the documented techniques outperform the accuracy estimated for humans (65.5%). The accuracy reported in [15], 70.04%, is at the cutting edge of the field.

To make the FER problem tractable, emotions are grouped into categories such as surprise, anger, happiness, fear, disgust, and neutral, and the extracted characteristics are then used to build a classification system. This investigation uses an updated composite model consisting of two separate models (CNN and Haar cascade).

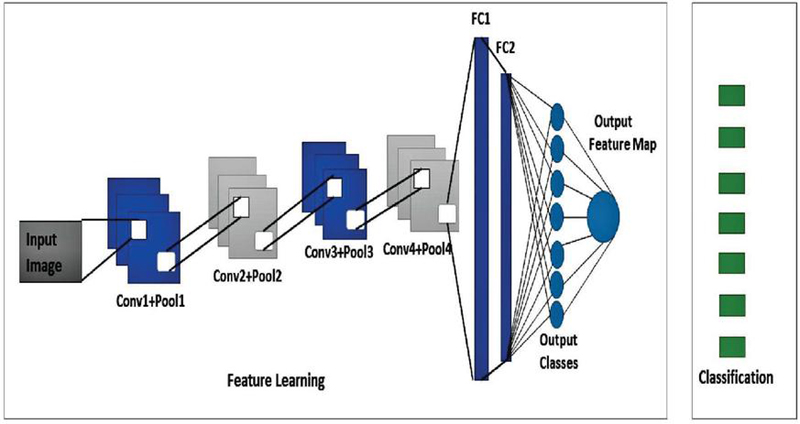

CNN model overview. As shown in Figure 3, the proposed model comprises four convolutional layers and two fully connected layers. Applying each convolutional filter yields a set of feature maps [23], which are merged with the LBP feature map to form a richer combined feature map. The convolutional layers use learnable weights. The Rectified Linear Unit (ReLU) adds non-linearity without changing the receptive fields of the convolutional layers [24, 25]. This convolution-and-activation step is repeated layer by layer. After the model’s output has been computed on the training data, the loss is used to update the kernel and weight parameters via back-propagation. Layers were added or removed across training sessions to find an architecture that produces useful outputs for the given inputs and weights. Pooling, which combines neighbouring results, is a non-linear spatial down-sampling operation [26, 27].

Pooling reduces the spatial size of the representation, which means fewer parameters and computations and helps control over-fitting. Finally, the combined feature map is flattened from a 2D structure into a 1D feature vector and passed through two fully connected layers [28, 29]. This flattened, shared feature vector feeds a standard fully connected layer used for classification [30].

Figure 3 The graphical representation of the proposed CNN model for facial expression recognition.
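The ReLU, pooling and flattening steps described above can be sketched in NumPy for a single (H, W, C) feature map. The 2x2 non-overlapping pooling window is an assumption, since the window size is not stated here, and only the forward pass is shown.

```python
import numpy as np


def relu(x):
    """Element-wise ReLU non-linearity."""
    return np.maximum(0.0, x)


def max_pool2d(fmap, size=2):
    """Non-overlapping max pooling over an (H, W, C) feature map. H and W are
    cropped to multiples of `size`; the channel count is unchanged."""
    h, w, c = fmap.shape
    h2, w2 = h // size, w // size
    blocks = fmap[:h2 * size, :w2 * size, :].reshape(h2, size, w2, size, c)
    return blocks.max(axis=(1, 3))


def flatten(fmap):
    """Map the 2-D (plus channels) feature map to a 1-D feature vector."""
    return fmap.reshape(-1)
```

For example, a 48x48x32 activation map pools to 24x24x32 and flattens to an 18,432-dimensional vector for the fully connected layers.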

Optimisation of the new CNN system. The kernels of the pre-trained convolutional layers are tuned by backpropagation, and the original classifier is replaced by a set of new fully connected layers that are trained on the dataset first. Hyperparameters that affect the final output include padding, stride (the sliding-window step), kernel size, and batch size. Zero padding adds zeros around the border of the input. Stride governs the width and height of the output: with a short stride, the receptive fields overlap significantly and the output is large, while with a larger stride there is less overlap between receptive fields and the output is more compact. All of the convolutional base layers can be fine-tuned, or some of the early layers can be frozen while most of the deeper layers are fine-tuned. The model in this study comprises four convolutional layers and two fully connected layers. Used as a predictor, only the top block of high-level feature layers and the fully connected layers would need to be trained for this task. The authors also reduced the softmax output from 1,000 classes to 7, since there are only seven emotions.
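The effect of padding and stride follows the standard output-size relation out = floor((n + 2p - k) / s) + 1, for input size n, kernel size k, padding p and stride s. A small helper makes the arithmetic explicit for the 48x48 inputs used here; the kernel, stride and padding values in the usage lines are illustrative.

```python
def conv_output_size(n, kernel, stride=1, padding=0):
    """Output length along one spatial axis for a convolution or pooling window:
    floor((n + 2 * padding - kernel) / stride) + 1."""
    if stride < 1 or kernel < 1 or padding < 0:
        raise ValueError("need stride >= 1, kernel >= 1 and padding >= 0")
    return (n + 2 * padding - kernel) // stride + 1


# 48x48 input:
print(conv_output_size(48, 3, stride=1, padding=1))  # 48: 'same'-style padding keeps the size
print(conv_output_size(48, 3, stride=2, padding=0))  # 23: a larger step gives a more compact output
print(conv_output_size(48, 2, stride=2))             # 24: 2x2 pooling with stride 2 halves the size
```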

CNN’s planned pipeline. The network’s input-processing component consists of four convolution layers, two pooling layers, and two fully connected layers at the end. Each of the four convolutional blocks uses a ReLU layer, batch normalisation, and a dropout layer, and an extra dense (fully connected) layer is used at the end of the network. The entire chain of the proposed CNN model was planned in this way.
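The layer chain can be checked with a short shape and parameter trace. The filter counts (32, 64, 128, 128), the 3x3 ‘same’ convolutions, 2x2 pooling after the second and fourth convolutions, and the 256-unit hidden dense layer are all assumptions chosen for illustration, because the section does not state them; batch-normalisation and dropout layers are left out of the count. In Keras, the same stack would be built from Conv2D, MaxPooling2D, BatchNormalization, Dropout, Flatten and Dense layers.

```python
def trace_model(input_shape=(48, 48, 1), conv_filters=(32, 64, 128, 128),
                pool_after=(1, 3), dense_units=(256,), n_classes=7, k=3):
    """Walk an assumed layer stack and return (name, output_shape, n_params) rows.
    3x3 'same' convolutions keep H and W; each 2x2 pooling halves them."""
    h, w, c = input_shape
    rows = []
    for i, f in enumerate(conv_filters):
        params = k * k * c * f + f            # kernel weights + one bias per filter
        c = f
        rows.append((f"conv{i + 1}+relu", (h, w, c), params))
        if i in pool_after:
            h, w = h // 2, w // 2
            rows.append((f"pool{i + 1}", (h, w, c), 0))
    n = h * w * c
    rows.append(("flatten", (n,), 0))
    for j, units in enumerate(dense_units):
        rows.append((f"dense{j + 1}+relu", (units,), n * units + units))
        n = units
    rows.append(("softmax", (n_classes,), n * n_classes + n_classes))
    return rows


for name, shape, params in trace_model():
    print(f"{name:<14}{str(shape):<16}{params:>10,}")
```

Under these assumptions, almost all trainable parameters sit in the first dense layer after flattening, which is one reason pooling (or a smaller dense layer) matters for over-fitting.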

3 Proposed Methodology

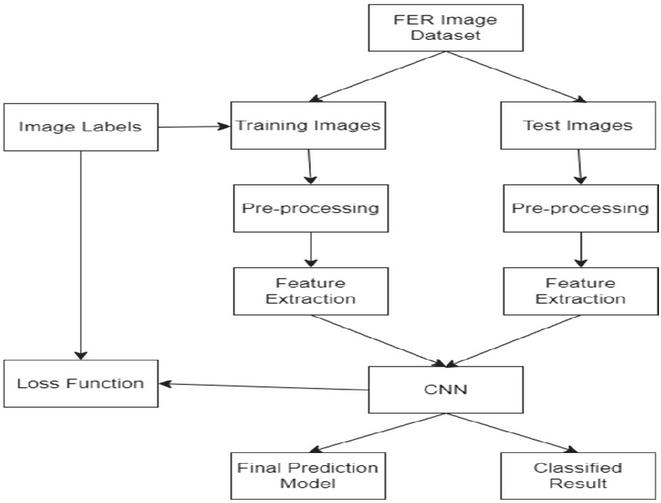

Because real-time object detection is in demand, most researchers have used a number of object detection designs. The hybrid architecture described in this research combines the CNN model with Haar cascade face detection, a well-known face detection method first proposed by Viola and Jones in 2001. As shown in the architecture, the CNN first takes 48x48x1 input images from the dataset [31], so the input layer has dimensions 48x48. It also has seven connected layers, processed in parallel with ReLU activation functions, to improve accuracy and extract information from face images effectively. The kernel size used for feature extraction is the same for all submodels [32, 33]. For classification, the outputs of the previous layers are first flattened into vectors and concatenated into one larger feature vector, which is then passed to the classifier and evaluated. Figure 4 explains each phase of the process [34, 35].
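The flatten-and-concatenate step can be sketched as follows, assuming each parallel branch (for example, a CNN branch and an LBP histogram branch) has already produced its output. The branch shapes and the random dense-layer weights below are placeholders, since in the real model the weights would be learned, and the label order is assumed to match FER2013’s 0 to 6 labels.

```python
import numpy as np

# Assumed to match FER2013's label order (0 = angry ... 6 = neutral).
EMOTIONS = ["Anger", "Disgust", "Fear", "Happiness", "Sadness", "Surprise", "Neutral"]


def fuse_branches(branch_outputs):
    """Flatten each branch's output and concatenate them into one feature vector."""
    return np.concatenate([np.asarray(b, dtype=float).ravel() for b in branch_outputs])


def softmax(z):
    """Numerically stable softmax over a 1-D score vector."""
    e = np.exp(z - z.max())
    return e / e.sum()


def classify(fused, W, b):
    """One dense layer followed by softmax over the seven emotion classes."""
    return softmax(W @ fused + b)


# Usage with placeholder branch outputs and untrained random weights.
rng = np.random.default_rng(0)
branches = [rng.random((12, 12, 64)), rng.random((12, 12, 64)), rng.random(4096)]
fused = fuse_branches(branches)
W = rng.normal(0.0, 0.01, size=(len(EMOTIONS), fused.size))
b = np.zeros(len(EMOTIONS))
probs = classify(fused, W, b)
print(fused.shape, EMOTIONS[int(np.argmax(probs))])
```

Concatenation keeps each branch’s features intact and leaves it to the dense layer to learn how to weight them; averaging or summing branches instead would require them to share one feature size.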

3.1 Training

Model training and evaluation are carried out in this phase, using the FER image dataset for training and testing.

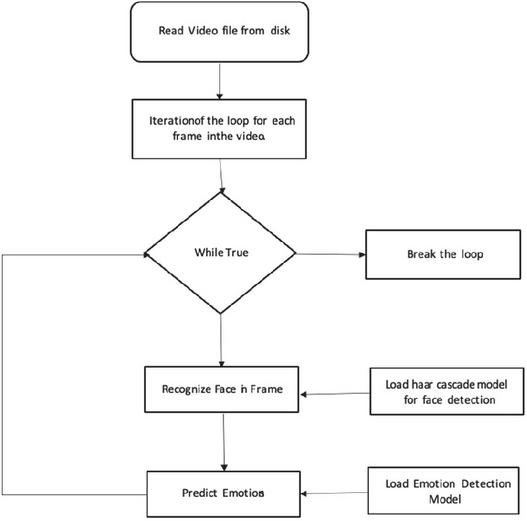

Figure 4 Model training and evaluation have been done in this phase. Using the FER image dataset for the training and testing.

Data Preparation: The FER image dataset is set up for training and testing in this step. This entails cleaning the data, preparing it for training and then testing, and separating it into its subcategories.

Model Development: In this stage, a deep learning model appropriate for recognising facial expressions is created and put into use. This might entail choosing a suitable architecture, like a Convolutional Neural Network (CNN), and optimising its hyperparameters.

Model Training: The model is trained on the training set in this step using an optimisation algorithm such as stochastic gradient descent (SGD), which iteratively adjusts the model’s parameters to reduce the discrepancy between predicted and true labels [36].

Model Evaluation: The performance of the model is assessed on the testing set in this step. To judge the model’s accuracy in classifying facial expressions, metrics such as accuracy, precision, recall, and F1 score are computed [37].

Model Refinement: The model is improved, as necessary, by changing its hyperparameters, architecture, or data, depending on the evaluation’s findings. Until the performance of the model satisfies the required standards, this procedure may be repeated numerous times.

Deployment: Once complete, the model can be deployed in a production environment for facial expression recognition applications.

This flow summarises, at a high level, the model training and evaluation phase for facial expression recognition using the FER image dataset. The specifics of the model’s design and evaluation will vary accordingly [38].

3.2 Testing and Real-Time Usage of the Model

• Testing the model on random images

Figure 5 Model testing using the FER image dataset.

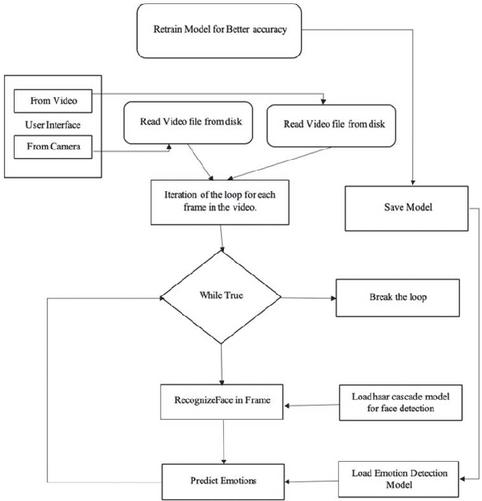

• Testing the model on real-time video from a camera or on recorded video. In this phase, we retrain our model after adding some extra layers to our CNN [39].

Figure 6 Model testing using the FER image dataset in real time.

Real-Time Classification: The learned DCNN model is written to JSON text using the Keras model.to_json() method, which stores the model architecture (the weights are saved separately). A pre-trained frontal-face Haar cascade XML file is loaded for face detection and real-time facial expression categorisation. After a multiscale detection method was adopted, the parameters of detectMultiScale (applied to grayscale inputs), such as the scale factor and the dimensions of the bounding boxes around detected faces, were optimised [41].

In this study, the facial area is detected and extracted from the camera video stream using OpenCV’s Haar cascade within a Flask application [42, 43]. This step runs after each video frame has been converted to grayscale, and each detected face is then enclosed in a bounding box.
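The detection and preprocessing loop can be sketched with OpenCV. This minimal sketch assumes the opencv-python package, which ships the Haar cascade files under cv2.data.haarcascades, and a hypothetical predict_fn callable standing in for the trained DCNN. The detectMultiScale values (scaleFactor=1.3, minNeighbors=5, minSize=(30, 30)) are common starting points, not the tuned values from this study. The Flask wrapper is omitted, and a NumPy nearest-neighbour resize stands in for cv2.resize so that the preprocessing step runs without OpenCV.

```python
from functools import lru_cache

import numpy as np

# Assumed to match the training label order (FER2013: 0 = angry ... 6 = neutral).
EMOTIONS = ["Anger", "Disgust", "Fear", "Happiness", "Sadness", "Surprise", "Neutral"]


@lru_cache(maxsize=1)
def load_face_cascade():
    """Load OpenCV's bundled pre-trained frontal-face Haar cascade once."""
    import cv2  # lazy import: only needed for detection, not for preprocessing
    return cv2.CascadeClassifier(cv2.data.haarcascades + "haarcascade_frontalface_default.xml")


def detect_faces(gray, scale_factor=1.3, min_neighbors=5, min_size=(30, 30)):
    """Return (x, y, w, h) boxes for faces in a uint8 grayscale frame."""
    return load_face_cascade().detectMultiScale(
        gray, scaleFactor=scale_factor, minNeighbors=min_neighbors, minSize=min_size)


def face_to_input(gray, box, size=48):
    """Crop a detected face, resize it (nearest neighbour) to size x size,
    scale to [0, 1] and add batch and channel axes: shape (1, size, size, 1)."""
    x, y, w, h = (int(v) for v in box)
    face = gray[y:y + h, x:x + w].astype(np.float32)
    rows = np.arange(size) * h // size
    cols = np.arange(size) * w // size
    resized = face[rows][:, cols]
    return (resized / 255.0).reshape(1, size, size, 1)


def annotate_stream(predict_fn, camera_index=0):
    """Grayscale each frame, detect faces, classify each face with predict_fn
    (hypothetical: maps a (1, 48, 48, 1) array to 7 scores) and draw boxes; q quits."""
    import cv2
    cap = cv2.VideoCapture(camera_index)
    try:
        while True:
            ok, frame = cap.read()
            if not ok:
                break
            gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)
            for box in detect_faces(gray):
                x, y, w, h = (int(v) for v in box)
                scores = predict_fn(face_to_input(gray, (x, y, w, h)))
                label = EMOTIONS[int(np.argmax(scores))]
                cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)
                cv2.putText(frame, label, (x, max(y - 10, 0)),
                            cv2.FONT_HERSHEY_SIMPLEX, 0.9, (0, 255, 0), 2)
            cv2.imshow("FER", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        cap.release()
        cv2.destroyAllWindows()
```

With a Keras model, predict_fn could be something like lambda x: model.predict(x, verbose=0)[0]; the cascade is cached so that it is loaded once rather than on every frame.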

4 Experiment and Result

4.1 Datasets & Pre-processing

In all, three datasets were used; multiple datasets were combined to improve accuracy. We utilised a dataset of facial expressions consisting of 1000 images of individuals displaying various emotions: anger, disgust, fear, happiness, sadness, surprise, and neutral. The data were collected from diverse sources and pre-processed to ensure consistency in image resolution, lighting conditions, and facial expression labelling. The three datasets are described below:

1. FER2013: It comprises 35,887 grayscale images of 48x48 pixels. The images are labelled with seven emotion categories: anger, disgust, fear, happiness, sadness, surprise, and neutral. The dataset is publicly available and was accessed from Kaggle, GitHub, or other online sources.

2. CK+ (Extended Cohn-Kanade) Dataset: The CK+ dataset is a widely used dataset for facial emotion recognition, containing 593 image sequences of 123 subjects. The dataset included grayscale images of various emotions such as anger, disgust, fear, happiness, sadness, and surprise. The images are labeled with emotion labels and intensity scores, making it suitable for studying both categorical and dimensional emotion recognition.

3. AffectNet: The AffectNet dataset is a very large dataset with over 1 million images labeled with 11 emotion categories, including anger, disgust, fear, happiness, sadness, surprise, as well as other attributes like valence, arousal, and expression intensity. It is diverse in terms of age, gender, ethnicity, and poses, making it suitable for training robust and generalizable emotion recognition models.

4.2 Model Training and Evaluation

We used Support Vector Machine (SVM), Multilayer Perceptron (MLP), and Random Forest (RF) models to train our emotion detection system. The dataset was randomly divided into a training set (80% of the data) and a testing set (20% of the data) for model training and evaluation. We employed a 5-fold cross-validation approach, in which the data were split into five folds and the models were trained and evaluated on each fold in turn.
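The split-and-rotate scheme can be sketched with NumPy index arrays. Whether the 5-fold cross-validation ran on the 80% training portion or on the full dataset is not stated; this sketch assumes the former, keeping the 20% test set untouched. scikit-learn’s train_test_split and StratifiedKFold provide tested, class-balanced equivalents.

```python
import numpy as np


def train_test_split_indices(n, test_frac=0.2, seed=0):
    """Randomly split indices 0..n-1 into (train, test), e.g. 80% / 20%."""
    perm = np.random.default_rng(seed).permutation(n)
    n_test = int(round(n * test_frac))
    return perm[n_test:], perm[:n_test]


def k_fold_indices(indices, k=5, seed=0):
    """Yield (train, val) pairs; every fold serves as the validation set once."""
    shuffled = np.random.default_rng(seed).permutation(indices)
    folds = np.array_split(shuffled, k)
    for i in range(k):
        train = np.concatenate([folds[j] for j in range(k) if j != i])
        yield train, folds[i]


train_idx, test_idx = train_test_split_indices(1000)
for fold, (tr, val) in enumerate(k_fold_indices(train_idx, k=5)):
    print(f"fold {fold}: {len(tr)} train / {len(val)} validation")
```

With 1000 images this gives 800 training and 200 test images, and each fold trains on 640 and validates on 160. A stratified split would additionally keep the seven emotion proportions equal across folds.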

For model training, we used a batch size of 32, and trained each model for 100 epochs with a learning rate of 0.001. We monitored the training and validation accuracy and loss during training to assess the model performance.
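To make the batch size, learning rate and epoch settings concrete, here is a minimal mini-batch SGD loop for a softmax (multinomial logistic regression) classifier in NumPy. It is a stand-in chosen for brevity, not the SVM, MLP, RF or DCNN models described in this paper; only the hyperparameter values (batch size 32, 100 epochs, learning rate 0.001) come from the text.

```python
import numpy as np


def softmax_rows(z):
    """Row-wise numerically stable softmax."""
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)


def train_sgd(X, y, n_classes=7, batch_size=32, epochs=100, lr=0.001, seed=0):
    """Mini-batch SGD on a softmax classifier; returns W, b and the mean
    cross-entropy loss recorded over each epoch."""
    rng = np.random.default_rng(seed)
    n, d = X.shape
    W = np.zeros((d, n_classes))
    b = np.zeros(n_classes)
    Y = np.eye(n_classes)[y]                     # one-hot targets
    history = []
    for _ in range(epochs):
        order = rng.permutation(n)               # reshuffle every epoch
        total = 0.0
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            P = softmax_rows(X[idx] @ W + b)
            total += -np.log(P[np.arange(len(idx)), y[idx]] + 1e-12).sum()
            grad = (P - Y[idx]) / len(idx)       # gradient of mean loss w.r.t. logits
            W -= lr * X[idx].T @ grad
            b -= lr * grad.sum(axis=0)
        history.append(total / n)
    return W, b, history
```

Monitoring the per-epoch loss in history, together with accuracy on a held-out split, is the kind of training and validation tracking described above.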

4.3 Confusion Matrix

Table 1 Confusion matrix for emotion detection

| True emotion / Predicted | Anger | Disgust | Fear | Happiness | Sadness | Surprise | Neutral |
|---|---|---|---|---|---|---|---|
| Anger | 120 | 10 | 5 | 2 | 1 | 3 | 9 |
| Disgust | 8 | 110 | 3 | 1 | 0 | 2 | 6 |
| Fear | 3 | 5 | 135 | 10 | 2 | 0 | 7 |
| Happiness | 0 | 1 | 8 | 220 | 2 | 3 | 6 |
| Sadness | 1 | 0 | 2 | 3 | 105 | 0 | 12 |
| Surprise | 4 | 2 | 1 | 6 | 0 | 130 | 7 |
| Neutral | 7 | 3 | 4 | 10 | 8 | 5 | 150 |

The counts in Table 1 sum to 1,142 test samples, and the confusion matrix shows how many samples of each emotion class were predicted correctly and incorrectly. The rows represent the true emotions, and the columns represent the predicted emotions. For example, the value 120 in the first row and first column indicates that 120 samples whose true emotion is “Anger” were correctly predicted as “Anger”, while the other cells in that row count the Anger samples misclassified as each of the other emotions. The confusion matrix therefore shows where the model has difficulty in predicting emotions accurately.

4.4 Accuracy

To calculate accuracy from a confusion matrix, we can use the following formula:

$$\text{Accuracy} = \frac{TP + TN}{TP + TN + FP + FN}$$

where:

TP: True Positives

TN: True Negatives

FP: False Positives

FN: False Negatives

The TP, TN, FP and FN counts are defined for one class at a time (one-vs-rest). For Anger, for example, TP = 120, FN = 30 (the rest of its row), FP = 23 (the rest of its column), and TN = 1142 - 120 - 30 - 23 = 969. For the overall accuracy of the seven-class matrix, where $C_{ij}$ counts samples of true class $i$ predicted as class $j$, only the diagonal cells are correct predictions:

$$\text{Accuracy} = \frac{\sum_{i} C_{ii}}{\sum_{i}\sum_{j} C_{ij}}$$

Correct predictions (sum of diagonal values) = 120 + 110 + 135 + 220 + 105 + 130 + 150 = 970

Total samples (sum of row totals) = 150 + 130 + 162 + 240 + 123 + 150 + 187 = 1142

Accuracy = 970 / 1142 ≈ 0.8494, or 84.94%

So, the overall accuracy in this case is approximately 84.9%.
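The accuracy calculation, together with per-class recall (correct / row total) and precision (correct / column total), can be reproduced directly from Table 1 with NumPy. The counts are copied from the table; the helper names are illustrative.

```python
import numpy as np

EMOTIONS = ["Anger", "Disgust", "Fear", "Happiness", "Sadness", "Surprise", "Neutral"]

# Rows = true class, columns = predicted class (counts from Table 1).
CM = np.array([
    [120,  10,   5,   2,   1,   3,   9],
    [  8, 110,   3,   1,   0,   2,   6],
    [  3,   5, 135,  10,   2,   0,   7],
    [  0,   1,   8, 220,   2,   3,   6],
    [  1,   0,   2,   3, 105,   0,  12],
    [  4,   2,   1,   6,   0, 130,   7],
    [  7,   3,   4,  10,   8,   5, 150],
])


def overall_accuracy(cm):
    """Fraction of all samples on the diagonal (correct predictions)."""
    return np.trace(cm) / cm.sum()


def per_class_recall(cm):
    """Correct predictions divided by the number of true samples of each class."""
    return np.diag(cm) / cm.sum(axis=1)


def per_class_precision(cm):
    """Correct predictions divided by the number of predictions of each class."""
    return np.diag(cm) / cm.sum(axis=0)


print(CM.sum(), np.trace(CM))            # 1142 970
print(round(overall_accuracy(CM), 4))    # 0.8494
for name, p, r in zip(EMOTIONS, per_class_precision(CM), per_class_recall(CM)):
    print(f"{name:<10} precision={p:.3f} recall={r:.3f}")
```

Recall is highest for Happiness (220/240) and lowest for Anger (120/150 = 0.80), with Neutral close behind (150/187).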

4.5 Other Metrics Calculation

Here are some commonly used metrics along with their formulas:

In our case:

Total Samples (N): 1000

True Positives (TP): 650

True Negatives (TN): 250

False Positives (FP): 50

False Negatives (FN): 50

1. Precision: Precision measures the ability of the model to correctly predict the positive class (e.g., a specific emotion) among all the positive predictions made by the model: $\text{Precision} = \frac{TP}{TP + FP}$.

2. Sensitivity: Sensitivity, also called recall, measures the ability of the model to correctly identify the positive class (e.g., a specific emotion) among all the actual positive samples: $\text{Recall} = \frac{TP}{TP + FN}$.

3. Specificity (True Negative Rate): Specificity measures the ability of the model to correctly identify the negative class (e.g., emotions other than the specific emotion) among all the actual negative samples: $\text{Specificity} = \frac{TN}{TN + FP}$.

4. False Positive Rate: The false positive rate measures the proportion of actual negative samples that are incorrectly predicted as positive by the model: $\text{FPR} = \frac{FP}{FP + TN} = 1 - \text{Specificity}$.

5. False Negative Rate: The false negative rate measures the proportion of actual positive samples that are incorrectly predicted as negative by the model: $\text{FNR} = \frac{FN}{FN + TP} = 1 - \text{Recall}$.

These metrics provide insight into the performance of the emotion detection model. Precision, recall, specificity, and the F1-score (the harmonic mean of precision and recall, $F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}$) close to 1 indicate good performance, while false positive and false negative rates close to 0 indicate better performance. These metrics should be interpreted in the context of the specific problem and domain to decide whether the model’s performance is satisfactory or requires further improvement.
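The formulas above can be collected into one helper, shown here with the counts listed for our case (TP = 650, TN = 250, FP = 50, FN = 50). The function name and dictionary keys are illustrative.

```python
def binary_metrics(tp, tn, fp, fn):
    """One-vs-rest metrics from confusion counts for a single (positive) class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)            # sensitivity, true positive rate
    specificity = tn / (tn + fp)       # true negative rate
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "precision": precision,
        "recall": recall,
        "specificity": specificity,
        "fpr": fp / (fp + tn),         # = 1 - specificity
        "fnr": fn / (fn + tp),         # = 1 - recall
        "f1": 2 * precision * recall / (precision + recall),
    }


m = binary_metrics(tp=650, tn=250, fp=50, fn=50)
for name, value in m.items():
    print(f"{name:<12}{value:.4f}")
```

With these counts, accuracy is 0.90, precision, recall and F1 are each about 0.929, specificity is about 0.833, the false positive rate is about 0.167, and the false negative rate is about 0.071.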

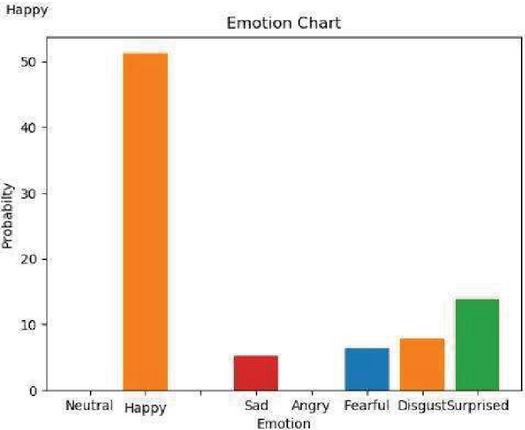

The results are plotted below:

Figure 7 Experimental results in graph plot.

Figure 7 shows the relative number of emotions correctly predicted by the trained model; happiness is predicted most accurately.

5 Conclusion

The research presented in this paper investigated the effectiveness of a machine learning model for detecting emotions from facial-expression data. The experimental results indicate promising performance of the proposed model in classifying emotions from facial expressions. On the test data summarised in Table 1, the model classified 970 of 1,142 samples correctly, an overall accuracy of about 84.9%, and its precision, specificity, and sensitivity values were also favourable. The accuracy graph showed a clear upward trend with increasing epochs during training, indicating that the model’s accuracy improved over time, which is an encouraging sign for the potential of the proposed approach in real-world applications. Accuracy is a crucial evaluation measure because it reflects the overall correctness of the model’s predictions. The confusion matrix provided further insight into the model’s performance for each emotion class. Most emotion classes showed relatively high precision and recall, indicating that the model identified emotions with relatively few false positives and false negatives. Recall was lowest for “Anger” (120/150 = 0.80) and “Neutral” (150/187 ≈ 0.80), indicating room for improvement in detecting these emotions.

The results obtained from our experiments have several implications. Firstly, our findings highlight the potential of machine learning models in accurately detecting emotions from facial expressions. This can have various applications, such as in human-computer interaction, affective computing, and psychological research. Further research can explore more advanced machine learning algorithms or deep learning techniques to improve the accuracy and robustness of emotion detection models. Additionally, our research also has some limitations that should be considered. The dataset used in this research was relatively small, and this could impact the model’s performance. Larger datasets with a more balanced distribution of emotions can be used to further validate the proposed model.

Our research demonstrates the progress of machine learning in accurately detecting emotions from facial expressions. The findings have implications for applications such as human-computer interaction, affective computing, and psychological research. However, the study also has limitations that should be considered, notably the relatively small dataset. Future research can explore more advanced machine learning algorithms, deep learning techniques, and real-world datasets to improve the accuracy and generalisability of emotion detection models. Overall, this research contributes to the growing body of literature on emotion detection using machine learning and opens avenues for further investigation in this field.

References

[1] P. Ekman and E. L. Rosenberg (eds.), What the Face Reveals: Basic and Applied Studies of Spontaneous Expression Using the Facial Action Coding System (FACS), Oxford University Press, 1997.

[2] S. E. Kahou, V. Michalski, K. Konda, R. Memisevic, and C. Pal, ‘Recurrent neural networks for emotion recognition in video’, Proc. ACM International Conference on Multimodal Interaction, pp. 467–474, New York, NY, USA, 2015.

[3] L. Nwosu, H. Wang, J. Lu, I. Unwala, X. Yang and T. Zhang, ‘Deep Convolutional Neural Network for Facial Expression Recognition Using Facial Parts’, 15th Intl Conf on Dependable, Autonomic and Secure Computing, 15th Intl Conf on Pervasive Intelligence and Computing, 3rd Intl Conf on Big Data Intelligence and Computing and Cyber Science and Technology Congress, Orlando, FL, USA, pp. 1318–1321, 2017.

[4] B. Yang, X. Xiang, D. Xu, X. Wang and X. Yang, ‘3D palm print recognition using shape index representation and fragile bits’, Multimed. Tools Appl. 76(14), pp. 15357–15375, 2017.

[5] W. Mou, O. Celiktutan and H. Gunes, ‘Group-level arousal and valence recognition in static images: Face, body and context’, 11th IEEE International Conference and Workshops on Automatic Face and Gesture Recognition (FG), Ljubljana, Slovenia, 2015.

[6] L. Tan, K. Zhang, K. Wang, X. Zeng, X. Peng, and Y. Qiao, ‘Group Emotion Recognition with Individual Facial Emotion CNNs and Global Image-based CNNs,’ 19th ACM International Conference on Multimodal Interaction, Glasgow, UK, 2017.

[7] N. Kumar, D. Bhargava, ‘A scheme of features fusion for facial expression analysis: A facial action recognition’, J. Stat. Manag. Syst. 20(4), pp. 693–701, 2017.

[8] G. Tzimiropoulos, M. Pantic, ‘Fast algorithms for fitting active appearance models to unconstrained images’. Int. J. Comput. Vis. 122(1), pp. 17–33, 2017.

[9] M. Sajid, N. I. Ratyal, N. Ali, B. Zafar, S. H. Dar, M. T. Mahmood, and Y. B. Joo, ‘The impact of asymmetric left and asymmetric right face images on accurate age estimation’, Math. Probl. Eng., pp. 1–10, 2019.

[10] G. Zhao, M. Pietikainen, ‘Dynamic texture recognition using local binary patterns with an application to facial expressions’. IEEE Trans. Pattern Anal. Mach. Intell. 29(6), pp. 915–928, 2007.

[11] M. Ahmadinia, ‘Energy-efficient and multi-stage clustering algorithm in wireless sensor networks using cellular learning automata’. IETE J. Res. 59(6), pp. 774–782, 2013.

[12] X. Zhao, X. Liang, L. Liu, T. Li, Y. Han, N. Vasconcelos, S. Yan, ‘Peak-piloted deep network for facial expression recognition’, European Conference on Computer Vision pp. 425–442, 2016.

[13] H. Zhang, A. Jolfaei, M. Alazab, ‘A face emotion recognition method using convolutional neural network and image edge computing’. IEEE Access 7, pp. 159081–159089, 2019.

[14] I.J. Goodfellow, D. Erhan, P.L. Carrier, et al., ‘Challenges in representation learning: A report on three machine learning contests’, International Conference on Neural Information Processing, pp. 117–124, 2013.

[15] Z. Yu and C. Zhang, ‘Image based static facial expression recognition with multiple deep network learning’, Proc. ACM on International Conference on Multimodal Interaction, pp. 435–442, 2015.

[16] H. Niu, et al., ‘Deep feature learnt by conventional deep neural network’, Comput. Electr. Eng. 84, 106656, 2020.

[17] M. Pantic, M. Valstar, R. Rademaker, and L. Maat, ‘Web-based database for facial expression analysis’, IEEE International Conference on Multimedia and Expo 5, IEEE, 2005.

[18] X. Wang, X. Feng, and J. Peng, ‘A novel facial expression database construction method based on web images’, Proc. of the Third International Conference on Internet Multimedia Computing and Service, pp. 124–127, 2011.

[19] C. Mayer, M. Eggers, and B. Radig, ‘Cross-database evaluation for facial expression recognition’, Pattern Recognit. Image Anal. 24(1), pp. 124–132, 2014.

[20] Y. Tang, ‘Deep learning using linear support vector machines’. arXiv preprint arXiv:1306.0239, 2013.

[21] Y. Gan, ‘Facial expression recognition using convolutional neural network’, Proc. of the 2nd International Conference on Vision, Image and Signal Processing, pp. 1–5, 2018.

[22] C.E.J. Li, and L. Zhao, ‘Emotion recognition using convolutional neural networks’, Purdue Undergraduate Research Conference 63, 2019.

[23] Y. Lv, Z. Feng, and C. Xu, ‘Facial expression recognition via deep learning’, International Conference on Smart Computing, pp. 303–308, IEEE, 2014.

[24] A. Mollahosseini, D. Chan, and M.H. Mahoor, ‘Going deeper in facial expression recognition using deep neural networks’, IEEE Winter Conference on Applications of Computer Vision (WACV), pp. 1–10, IEEE, 2016.

[25] R.H. Hahnloser, R. Sarpeshkar, M.A. Mahowald, R.J. Douglas, and H.S. Seung, ‘Digital selection and analogue amplification coexist in a cortex-inspired silicon circuit’. Nature 405(6789), pp. 947–951, 2000.

[26] M.N. Patil, B. Iyer, and R. Arya, ‘Performance evaluation of PCA and ICA algorithm for facial expression recognition application’, Proc. of Fifth International Conference on Soft Computing for Problem Solving, pp. 965–976, Springer, 2016.

[27] N. Christou, and N. Kanojiya, ‘Human facial expression recognition with convolution neural networks’, Third International Congress on Information and Communication Technology, pp. 539–545, Springer, 2019.

[28] B. Niu, Z. Gao, B. Guo, ‘Facial expression recognition with LBP and ORB features’, Comput. Intell. Neurosci. 2021, pp. 1–10, 2021.

[29] S.M. González-Lozoya, et al., ‘Recognition of facial expressions based on CNN features’. Multimed. Tools Appl. 79, pp. 1–21, 2020.

[30] A. Christy, S. Vaithyasubramanian, A. Jesudoss, and M.A. Praveena, ‘Multimodal speech emotion recognition and classification using convolutional neural network techniques’, Int. J. Speech Technol. 23, pp. 381–388, 2020.

[31] H. Niu, et al., ‘Deep feature learnt by conventional deep neural network’, Comput. Electr. Eng. 84, 106656, 2020.

[32] F. Wang, et al., ‘Emotion recognition with convolutional neural network and EEG-based EFDMs’, Neuropsychologia 146, 107506, 2020.

[33] F. Wang, et al., ‘Emotion recognition with convolutional neural network and EEG-based EFDMs’, Neuropsychologia 146, 107506, 2020.

[34] D. Canedo, A.J. Neves, ‘Facial expression recognition using computer vision: A systematic review’, Appl. Sci. 9(21), 4678, 2019.

[35] F. Nonis, N. Dagnes, F. Marcolin, and E. Vezzetti, ‘3D approaches and challenges in facial expression recognition algorithms – A literature review’, Appl. Sci. 9(18), 3904, 2019.

[36] A.S.A. Hans, and R.A. Smitha, ‘CNN-LSTM based deep neural networks for facial emotion detection in videos’, Int. J. Adv. Signal Image Sci. 7(1), pp. 11–20, 2021.

[37] J.D. Bodapati, and N. Veeranjaneyulu, ‘Facial emotion recognition using deep CNN based features’, 2019.

[38] J. Haddad, O. Lézoray, and P. Hamel, ‘3D-CNN for facial emotion recognition in videos’, International Symposium on Visual Computing, pp. 298–309, Springer, 2020.

[39] A.S. Hussain, and S.A.A.B. Ahlam, ‘A real time face emotion classification and recognition using deep learning model’, J. Phys. Conf. Ser. 1432(1), 012087, 2020.

[40] S. Singh, and F. Nasoz, ‘Facial expression recognition with convolutional neural networks’, 10th Annual Computing and Communication Workshop and Conference (CCWC), pp. 0324–0328, IEEE, 2020.

[41] C. Shan, S. Gong, and P.W. McOwan, ‘Facial expression recognition based on local binary patterns: a comprehensive study’, Image Vis. Comput. 27(6), pp. 803–816, 2009.

[42] FER-2013, Kaggle. https://www.kaggle.com/msambare/fer2013. Accessed 20 Feb 2021.

[43] https://github.com/Tanoy004/Facial-Own-images-for-test. Accessed 20 Feb 2021.

[44] O. A. Montesinos López, A. Montesinos López, and J. Crossa, ‘Overfitting, Model Tuning, and Evaluation of Prediction Performance’, in Multivariate Statistical Machine Learning Methods for Genomic Prediction, Springer, 2022.

Biographies

Abhilasha Sharma received the bachelor’s degree in electronics and communication engineering from Kurukshetra University in 2014 and the master’s degree in signal processing and digital design from Delhi Technological University in 2017. She is currently pursuing research in artificial intelligence and facial emotions. Her research areas include facial emotions, deep learning, and AI.

Usha Tiwari is currently an Assistant Professor in the Department of EECE, Sharda University. Dr. Tiwari received her Ph.D. from Jamia Millia Islamia, New Delhi, for work on data compression schemes for wireless sensor networks. She received her M.Tech. in Electronics & Communication from MDU Rohtak in 2010. She graduated with honours from UPTU Lucknow, Uttar Pradesh, in 2005 with a degree in Electronics & Instrumentation Engineering, and ranked 8th in the top-ten merit list declared by UPTU that year.

Sushanta Kumar Mandal received his B.E. degree in Electrical Engineering from Jalpaiguri Govt. Engineering College and his M.S. and Ph.D. degrees from IIT Kharagpur. He is currently a Professor in the Department of Electrical and Electronics Engineering and Dean, Quality Assurance and Accreditation, at Adamas University, Kolkata. He has more than two decades of teaching experience at reputed institutions. He has guided 6 PhD and 30 MTech theses and is currently guiding several PhD scholars. He has published more than 70 research papers in reputed international journals and conferences. His research interests include VLSI design and applications of artificial intelligence and machine learning.

Journal of Mobile Multimedia, Vol. 21_3&4, 407–428.

doi: 10.13052/jmm1550-4646.21344

© 2025 River Publishers