Hybrid Image Compression Scheme for Wireless Media Sensor Network and Contrast With DWT and DCT Schemes

Usha Tiwari1, 2,*, Shabana Mehfuz1 and Sibaram Khara2

1Department of Electrical Engineering, Jamia Millia Islamia, New Delhi, India

2Department of EECE, Sharda University, U.P, India

E-mail: ushapant@rediffmail.com; smehfuz@jmi.ac.in; sibaram.khara@sharda.ac.in

*Corresponding Author

Received 08 January 2025; Accepted 01 May 2025

Abstract

In recent years, deployments of Wireless Media Sensor Networks (WMSNs) for real-time systems have increased rapidly in various areas. Power consumption is always a critical issue because it affects the overall lifetime of a wireless sensor network (WSN). A WSN mainly consists of various types of sensor nodes, which are capable of sensing, computing and communicating wirelessly with sink nodes. Communication is the main source of power consumption in a node, so a data compression technique is required to reduce the amount of data transmitted over the wireless channels. For reducing the size of multimedia data received from media sensors, set partitioning in hierarchical trees (SPIHT) is a favourable choice. However, due to its large memory requirement and complex coding, this method poses problems for resource-constrained systems such as a wireless media sensor network. In this paper, a novel method is introduced which is a hybrid of the embedded zerotree wavelet (EZW) and SPIHT. The advantages of this method are reduced memory consumption and reduced processing time of the compression algorithm. The method is compared with DCT and DWT based image compression techniques for WMSN.

The superiority of the algorithm over competing algorithms is demonstrated using parameters such as peak signal-to-noise ratio (PSNR), mean square error (MSE), packet delivery rate, throughput, compression ratio, and energy consumption.

Keywords: WMSN (Wireless Media Sensor Network), PSNR, MSE, packet delivery rate, throughput, compression ratio, data compression.

1 Introduction

Sensor nodes are generally scattered randomly over a particular terrain and used to monitor a target. Events or information are reported to the sink node or base station using a radio communication unit; the sink node processes the information and forwards it whenever required via the internet or any other transmission medium. Generally, a node consists of three main parts: a sensing unit, a processing unit and a radio communication device for transmitting the information.

The energy consumed in transmitting or receiving a single bit is approximately equivalent to the energy required to process thousands of instructions. WSNs operate with limited resources: limited bandwidth, memory, computational capability and packet size. A data compression technique can therefore be used to manage high-fidelity data transmission to the sink node and make effective use of the limited capacity of sensor nodes without any loss of data. Compressing data before transmission is thus one of the key requirements of any WSN application for increasing the lifetime of the sensor network. The sensing unit may be a pressure, temperature or humidity sensor, or any other type of sensor available on the market. As sensor nodes have very little memory, of the order of a few kilobytes, and a 4–8 MHz processor, a lossless data compression algorithm for WSN should use little memory and have low computational requirements. To achieve these objectives, a novel method is introduced in this paper, which is a hybridisation of EZW and SPIHT. The proposed algorithm has been tested on a variety of available test images and applied to the wireless media sensor network (WMSN). Various image compression parameters have been evaluated. A comparative analysis of the results demonstrates the effectiveness of the designed algorithm compared with other image compression algorithms proposed in the literature for wireless multimedia sensor networks. The proposed methodology achieves a higher PSNR than the others, which shows the effectiveness of the image compression algorithm.

2 Related Work

Energy is more limited in sensor networks than in other types of wireless networks because of the nature of the sensing devices and the difficulty of changing or recharging batteries once the network is deployed in the field. An algorithm designed for compressing data on such sensor nodes should be lightweight (requiring a small amount of memory) and have low computational requirements for proper operation of the WSN. Because of the constraints of WSNs, such as hardware, energy, processing capability and low memory, researchers have designed and developed algorithms specifically for WSNs. Many available data compression algorithms cannot be applied directly to WSNs due to these constraints in memory, processing power, storage and bandwidth. An energy-efficient data compression algorithm is therefore a necessity for WSNs, and it also presents a challenging research problem.

In [1] the author presents a detailed analysis of the data compression techniques available for sensor networks, along with a comparison in terms of computational complexity, compression rate, size of algorithm and data types supported. The data compression algorithms available for sensor networks are classified into two broad categories: the distributed approach and the local approach. The comparison shows that each approach has its own advantages and disadvantages. Distributed approaches depend on predefined models and are suited to dense networks, while local approaches do not depend on the topology (predefined model) of the network, and compression can be performed at each local node. Local approaches are therefore suitable for sparse networks and are not suited to dense sensor networks. An appropriate compression technique can thus be chosen according to the type of application and the density of the sensor network.

WSN has attracted the interest of many researchers with the emergence of various wireless technologies. Nowadays there are several multimedia applications of WSN, such as medical diagnostics, traffic surveillance, defence and various other security services. However, the data to be sent or received are affected by limited bandwidth and are delay sensitive. A data center, generally a sink node, is therefore required to collect the data from all the sensors. After considering all the constraints of WSN, we propose a novel compression algorithm for WMSN which requires less power, energy and memory than other algorithms. For performance analysis, parameters such as PSNR, MSE, complexity and throughput have been evaluated, and the results are compared with the DCT and DWT algorithms present in the literature for WMSN.

WMSN nodes have low memory and are generally deployed in remote locations. Because of these constraints, data compression is a key area of research in media sensor networks. To handle resource-constrained applications, many techniques have been proposed in the past. In [2] the authors analysed various Discrete Cosine Transform (DCT) and Discrete Wavelet Transform (DWT) based methodologies for WMSN. The analysis was obtained by implementing these techniques on TinyOS on the TelosB hardware platform. The authors evaluated PSNR, end-to-end (ETE) delay, compression ratio and battery lifetime for multi-hop and single-hop networks. The results for the two scenarios show that DWT is better in terms of PSNR, throughput, ETE delay and battery lifetime, whereas DCT performs better than DWT in terms of compression ratio. The average MAC delay for both techniques was also calculated.

Several Discrete Cosine Transform (DCT) based data compression schemes have been proposed by researchers in the past. In most of these algorithms, the image is represented mathematically as a sum of cosine functions of different frequencies. For example, JPEG [3] is a well-known compression algorithm based on DCT. DCT based techniques are widely used in various applications to reduce the complexity of an algorithm, and their use also reduces power consumption. On the other hand, when compared with DWT, DCT based techniques have higher power consumption [4].

In [5], the authors introduced the concept of parallel pipelining of the DCT, in which the arithmetic and processing elements work in parallel, reducing the computational complexity of the algorithm. The complexity achieved in [5] is further reduced in [6] using the same logic along with the cosine transform. In [7] the authors used an integer DCT in place of the floating-point DCT, and the results show that image compression has an impact on image delay when ARQ (Automatic Repeat Request) is used. In the work presented in [8], an image sensor network is developed to transmit images over a multihop sensor network. Compression is performed using JPEG and JPEG2000 (another variant of JPEG). The results show that JPEG2000 is a more suitable choice than JPEG on the basis of bit error and packet loss parameters. As power consumption has not been considered, however, it is not a suitable choice for wireless multimedia applications. In [9] the author proposed an energy-efficient algorithm for image compression in which JPEG compression is used to trade off energy consumption against image quality. The results are verified using simulation only; no real-time experiments have been performed.

The disadvantages of DCT discussed earlier are addressed by the wavelet transform technique, i.e. DWT. In general, most DWT based techniques rely on the 2-D DWT, in which a 1-D transform is first applied to obtain the low (L) and high (H) sub-bands. The sub-bands LL, LH, HL and HH are then obtained by applying the 1-D DWT column-wise. These four bands can be further decomposed into four sub-bands each.
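The row-then-column decomposition described above can be illustrated with a minimal sketch. It assumes the Haar wavelet (the simplest wavelet filter, chosen here only for illustration and not stated by the source), an image with even dimensions, and the hypothetical helper names `haar_1d` and `haar_dwt2`.

```python
import numpy as np

def haar_1d(x, axis):
    """One level of the 1-D Haar transform along `axis`; returns (low, high)."""
    x = np.moveaxis(np.asarray(x, dtype=float), axis, 0)
    even, odd = x[0::2], x[1::2]
    low = (even + odd) / np.sqrt(2)    # scaled pairwise sums (approximation)
    high = (even - odd) / np.sqrt(2)   # scaled pairwise differences (detail)
    return np.moveaxis(low, 0, axis), np.moveaxis(high, 0, axis)

def haar_dwt2(image):
    """One level of the 2-D transform: 1-D along rows, then 1-D column-wise."""
    L, H = haar_1d(image, axis=1)      # first 1-D pass gives L and H
    LL, LH = haar_1d(L, axis=0)        # column-wise pass on L
    HL, HH = haar_1d(H, axis=0)        # column-wise pass on H
    # Note: the LH/HL naming convention varies between references.
    return LL, LH, HL, HH

LL, LH, HL, HH = haar_dwt2(np.ones((4, 4)))
```

For a constant image all the energy ends up in LL and the three detail bands are zero, which is why smooth regions compress well after the transform.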

In [10] the authors introduced a technique called EZW (embedded zerotree wavelet). The algorithm was first designed for 2-D data, but it can be used for other dimensions as well. The input image is converted into its wavelet coefficients, and these are converted into a bit stream using progressive encoding. Another variant of EZW has also been discussed [10]. A higher resolution is used where interference is present and a lower resolution is used where interference is not present. This process helps to reduce both power consumption and bandwidth. Because of multi-resolution and progressive encoding, the algorithm is energy efficient, but its disadvantage is that the quality of the transmitted image is degraded by the number of passes. Due to the memory constraint, it is very difficult to store the large number of wavelet coefficients. EZW is also prone to packet loss, so packet loss is one of the crucial factors to be considered.

An improved version of EZW, known as SPIHT (set partitioning in hierarchical trees), has been proposed in [11]. The analysis shows that a high compression ratio is obtained by applying a partitioning algorithm to the wavelet transform of an image. The image is converted to coefficients, and groups of coefficients are then represented by sets known as spatial orientation trees and encoded separately. SPIHT is generally a two-step process: the first step is a sorting pass (finding zero trees) and the second step is a refinement pass (sending the precision bits). Many attempts were made afterwards to reduce the limitations of this algorithm and to add more features to it. In [12], the author attempted to enhance the speed of the SPIHT algorithm by reducing the internal memory usage. For this purpose, a new coding process and an enhanced zero-tree structure are implemented, which results in better image quality after compression. Another technique based on SPIHT is proposed in [13]. The algorithm divides the input image into small strips, and each strip is encoded separately. A DWT module is used to decompose the first few lines, a buffer is used to store the wavelet coefficients, and the stream is then transmitted. One of the disadvantages of SPIHT is that its memory requirement is high, but in terms of power consumption it is a better choice than EZW.
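As a rough illustration of the spatial orientation trees and the sorting-pass test mentioned above, the sketch below assumes the common dyadic parent-to-offspring rule, in which the coefficient at (i, j) has offspring at (2i, 2j), (2i, 2j+1), (2i+1, 2j) and (2i+1, 2j+1). The function names are hypothetical, and the special handling of the coarsest band in full SPIHT is deliberately simplified.

```python
import numpy as np

def offspring(i, j, shape):
    """Direct offspring of coefficient (i, j) in a dyadic wavelet layout."""
    rows, cols = shape
    kids = [(2 * i, 2 * j), (2 * i, 2 * j + 1),
            (2 * i + 1, 2 * j), (2 * i + 1, 2 * j + 1)]
    # Simplification: (0, 0) would list itself as offspring, so skip it.
    return [(r, c) for r, c in kids
            if r < rows and c < cols and (r, c) != (i, j)]

def descendants(i, j, shape):
    """All descendants of (i, j), collected level by level."""
    found, frontier = [], offspring(i, j, shape)
    while frontier:
        found.extend(frontier)
        frontier = [k for p in frontier for k in offspring(p[0], p[1], shape)]
    return found

def is_significant(coeffs, positions, n):
    """True if any coefficient in the set has magnitude >= 2**n."""
    return any(abs(coeffs[r, c]) >= 2 ** n for r, c in positions)

coeffs = np.zeros((4, 4))
coeffs[1, 3] = -20.0
tree = descendants(0, 1, coeffs.shape)
```

A set that fails the significance test at bit plane `n` can be signalled with a single bit, which is where the compression gain of tree-based coders comes from.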

A block based algorithm called EBCOT (embedded block coding with optimal truncation) is proposed in [14]. In EBCOT the input is divided into non-overlapping blocks of DWT coefficients, termed code blocks. An individual bit stream is generated for every block, and every block is coded independently. This algorithm exhibits some similarity to JPEG2000. The comparative results show that it is an efficient algorithm, but its disadvantage is that it also requires a large amount of memory and has higher power consumption. To reduce the memory requirement, the authors of [15] converted the three-step process into a single step. This improves performance, but the problem of packet loss still exists. In [16] compression is done by JPEG 2000, and the sensors in the network are grouped into clusters, except for the camera sensor, which acts as a separate cluster and sends the data (images) to the cluster directly instead of becoming part of a well-managed cluster. This again results in high power consumption. The advantage of the algorithm is that it increases the lifetime of the sensor network. In the JSCCPC (joint source channel coding and power control) scheme [17], the image is encoded into various layers; this process depends on three main parameters:

(1) Type of Image Content

(2) End to End Image constraints

(3) The Channel condition

This algorithm helps in exploiting the multi-resolution property of the input data (bit stream). However, the relation between the structure of the information and the magnitude of the information is not clear. Releasing a camera sensor may reduce the lifetime of the network and can increase power consumption, so it is not always the best practice to release the camera. The main source of power consumption in the above algorithm is the DWT.

Another popular image coder is SPECK (set partition embedded block) [18], in which sets of pixels are used in place of trees. These pixels are used to form blocks. DWT is used for the sub-band transformation of the input images, followed by a sorting pass and a refinement pass applied repeatedly. These phases are similar to the phases discussed for SPIHT. SPECK basically uses two types of linked lists:

(1) List of significant pixels (LSPs)

(2) List of insignificant sets (LIS)

SPECK has many advantages over other coders, such as a better compression ratio, a low memory requirement and low computational complexity. It also has some shortcomings, which the researchers in [18] have tried to overcome. In [19], another variant of SPECK is introduced: listless SPECK, named LSK. In LSK there is no need for predefined lists because of the search technique used, i.e. breadth-first search. It uses the policies of block partitioning. S-SPECK (scalable SPECK), another variant of SPECK, is proposed in [20]. It has low complexity because it makes SPECK scalable. For real-time hardware implementation, the LIS and LSP are inappropriate because they require a large amount of memory, as these lists keep growing as encoding progresses. As SPECK is vulnerable to packet losses, it also requires an error correction scheme. It can nevertheless be preferred over EZW because of advantages such as lower power consumption, low complexity and a high compression ratio. In [21] the authors proposed a technique called the 2-D discrete Walsh wavelet orthogonal transform. To remove redundant bits from the images, it uses arithmetic coding together with the existing scheme. In terms of visual quality, the scheme is highly efficient. Another orthogonal scheme, named Digital Image Watermarking, is presented in [22]. In this scheme the watermark is embedded in a DWT sub-band of the cover image instead of being embedded directly in the wavelet coefficients. It fully utilizes the main features of two transform techniques: singular value decomposition (SVD) and the spatio-frequency localization of DWT. These algorithms can also be implemented on real-time WSN environment data.

Vector quantisation (VQ) is a lossy data compression scheme based on block coding. It differs from the other block coding techniques mentioned in [20]. VQ based methods are characterised by their codebook and map each input vector onto a small subset of codes. Many schemes based on VQ are available. In [23] VQ is used to reduce power consumption by compressing the LSB of the code word and the image. A low-power pyramid VQ technique is proposed in [24]; its advantage over other compression methods is that it uses a simple decoder block. No transformation block is added in this method, so its computational complexity is reduced. However, the vector dimension increases, which raises the overall complexity of VQ and in turn increases the power consumption of the system.

Another technique is fractal compression, in which the input image is represented by a set of fractals. It is a lossy scheme similar to VQ, but it is quite different from conventional schemes like JPEG2000 and JPEG. The fractal technique does not involve any transformation block: the encoder converts the input into fractal codes and the decoder performs the reverse process. A fractal based image coder was introduced by Jacquin in 1990, after which several researchers proposed variations of fractal coders. In [25] a wavelet transformation is combined with the fractal encoding method. Fractal based image compression has a high compression ratio and a simple decoding process, but the encoding process is time consuming and affects the computational complexity of the system.
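The codebook mapping that characterises VQ based methods can be sketched as a nearest-codeword search. This is a generic illustration rather than the scheme of any cited work; the two-entry codebook and the function names `vq_encode` and `vq_decode` are hypothetical.

```python
import numpy as np

def vq_encode(blocks, codebook):
    """Map each block (row vector) to the index of its nearest codeword."""
    # Squared Euclidean distance between every block and every codeword.
    dist = ((blocks[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
    return dist.argmin(axis=1)

def vq_decode(indices, codebook):
    """Reconstruct blocks by looking up the transmitted indices."""
    return codebook[indices]

# Hypothetical codebook for flattened 2x2 pixel blocks: "dark" and "bright".
codebook = np.array([[0, 0, 0, 0], [255, 255, 255, 255]], dtype=float)
blocks = np.array([[10, 5, 0, 3], [250, 240, 255, 251]], dtype=float)
indices = vq_encode(blocks, codebook)
reconstructed = vq_decode(indices, codebook)
```

Only the indices need to be transmitted, and the decoder is a table lookup, which is consistent with the simple decoder noted above. The loss comes from replacing each block with its nearest codeword.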

From the literature survey, it is clear that fractal based compression and VQ based techniques are better in terms of the decoding process and computational complexity, but these techniques lag in terms of memory requirement and speed of the encoding process. For these reasons, fractal based techniques cannot be implemented on real-time systems, whereas DCT and DWT can easily be implemented in real-time systems.

In a recent work [26], the author introduced a modified strip based listless SPIHT for media sensor networks. It uses the lifting based DWT in place of the convolution based DWT. A simplified coding algorithm is used to merge the refinement pass and the sorting pass into a single pass. It also avoids arithmetic coding, which requires a large amount of memory for storing coding tables. The comparative results show that the algorithm is superior to others as PSNR reaches to 1 dB for all bit rates. The memory requirement is reduced to 71% with an energy saving of 27%.

Energy consumption is always considered an important factor in the design of WSNs. Several studies have aimed to reduce the amount of information transmitted by adopting lossy compression strategies so as to enhance energy efficiency. A data compression scheme termed Neighborhood Indexing Sequence (NIS) for compressing data in WSN is proposed in [28]. This algorithm is adaptive, as it dynamically assigns a shorter code word to each input character by utilizing the occurrence of neighboring bits. NIS is implemented on a real-world data set, and the results show that the compression performance of the NIS algorithm is better than that of existing compression algorithms. It obtains a compression ratio of 89.13% at a bit rate of 1.74 bits per sample and is able to save up to 87.57% of the power for the WSN data set.

For delay-tolerant WSNs with mobile base stations, data collection is the main issue. This problem is addressed in [27], where the authors propose a hybrid strategy that combines compressive sensing and clustering. The raw information is shared within the cluster, and the data obtained from compressive sensing are sent from the cluster to the base station. An analytical model is proposed which finds the energy consumption of the nodes, and the optimal cluster radius is determined from that information. Extensive simulations are performed to assess performance, and a comparison with existing methods is also carried out. The proposed method increases the lifetime of the network by factors of 1.2 and 2 compared with the existing techniques. An in-depth energy analysis of lossy compression algorithms for multi-hop wireless sensor networks is presented in [29]. The algorithms chosen for the analysis use either piecewise linear approximation methods or the minimum transmission energy routing method. The configuration is analysed in terms of the energy consumed by the routing approach, and the energy consumption is calculated using real-world sensor datasets.

In a recent work [30], the author proposed the LEACH-Fuzzy Clustering (LEACH-FC) protocol, in which a fuzzy logic based methodology is used for cluster head selection in order to increase the lifetime of the sensor network. The approach is centralized, in contrast to other approaches which are distributed and depend upon predefined models. A comparative analysis with other proposed algorithms is carried out to demonstrate the effectiveness of the algorithm. The results show that the algorithm is effective in balancing the load at every node and helps to enhance the reliability of the sensor network.

Connectivity is always an important issue for the reliability of a sensor network, especially in dense sensor networks. The author in [31] therefore proposed a one-step approach which utilizes the concept of disjoint spanning trees. The proposed algorithm is implemented for sensor networks of various sizes, which demonstrates its applicability at various scales. It is more reliable than other methods for the same scenario. A few more WSN clustering algorithms and data compression algorithms are presented in [32] and [33].

3 Block Diagram

Many efficient procedures have been proposed to address the energy issue, such as power-efficient routing protocols and other methods to reduce energy consumption. Among these techniques, data compression is a major task in sensor networks, as it reduces the data to be transmitted over wireless channels.

Given the various constraints in WMSN, SPIHT is an excellent choice because of its low complexity, high compression ratio and low power consumption, and because its implementation is less complex than that of DCT (discrete cosine transform) and EZW. One of the drawbacks of SPIHT, however, is that it requires more memory due to its three passes already discussed in the literature. Nowadays many variations of SPIHT have been introduced for WMSN which reduce the number of passes, for example by using the lifting based DWT in place of convolution. In this work, the proposed algorithm is developed using a hybrid of the zero tree wavelet and the set partitioning in hierarchical trees structure. The hybrid approach is used to improve the value of the peak signal-to-noise ratio (PSNR). This parameter measures the quality of an image by comparing the compressed image with the original image; the higher the ratio, the better the compression technique. Other parameters such as mean square error (MSE), packet delivery rate, throughput, compression ratio and energy consumption are also evaluated for the proposed algorithm. The main aim of data compression in sensor networks is to transmit the low-frequency information, which must be highly correlated for the sharing of the information. The tree is one of the most efficient data structures for organizing the simulation and transferring data with a low error probability. The zero tree wavelet deals with four components:

1. Zero tree root

2. Isolated coefficients which are having significant descendants

3. A significant positive coefficient

4. A significant negative coefficient.

The EZW (embedded zerotree wavelet) coder is a quantization and coding technique which also exploits some characteristics of the wavelet decomposition. EZW and its descendants outperform some of the generic approaches. The main trait exploited by EZW is that wavelet coefficients in different sub-bands represent the same spatial location in the image. If the sub-bands are of different sizes, then a single coefficient in a smaller sub-band represents the same spatial location as multiple coefficients in the other sub-bands.

It is basically a multi-pass algorithm in which each pass consists of two steps: a dominant pass (significance map) and a subordinate (refinement) pass. The initial value of the threshold $T_0$ is given by Equation (1):

| $T_0 = 2^{\lfloor \log_2 C \rfloor}$ | (1) |

where $C$ is the magnitude of the largest coefficient.

With this choice, the magnitude of the largest coefficient lies in the range $[T_0, 2T_0)$, and in each iteration the current value of the threshold is reduced to half of its previous value, $T_i = T_{i-1}/2$. Four labels are assigned to the coefficients:

• Significant positive (S)

• Significant Negative (S)

• Zero tree root (Z)

• Isolated Zero (i)
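The threshold schedule and the four labels listed above can be sketched as follows. This is a minimal illustration assuming the standard EZW initial threshold (the largest power of two not exceeding the largest coefficient magnitude); the function names and the sample coefficients are hypothetical, and a full coder would also skip coefficients already covered by a zero tree root.

```python
import math

def initial_threshold(coeffs):
    """Largest power of two not exceeding the largest coefficient magnitude."""
    c_max = max(abs(c) for c in coeffs)
    return 2 ** math.floor(math.log2(c_max))

def threshold_schedule(coeffs, passes):
    """Threshold used in each pass: it is halved after every pass."""
    t = float(initial_threshold(coeffs))
    schedule = []
    for _ in range(passes):
        schedule.append(t)
        t /= 2
    return schedule

def label(coeff, descendant_values, t):
    """Dominant-pass label of one coefficient at threshold t."""
    if abs(coeff) >= t:
        return "significant positive" if coeff > 0 else "significant negative"
    if all(abs(d) < t for d in descendant_values):
        return "zero tree root"      # insignificant, and so is its whole subtree
    return "isolated zero"           # insignificant, but a descendant is not

coeffs = [63, -34, 49, 10, 7, 13, -12, 7]
schedule = threshold_schedule(coeffs, 3)
```

With these sample values the largest magnitude is 63, so the first threshold is 32 and the next two passes use 16 and 8.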

SPIHT is a powerful wavelet based image compression algorithm that gives a more compact output bit stream than EZW (embedded zerotree wavelet) without the addition of any entropy encoder. As wireless media sensor networks are resource constrained, SPIHT is an excellent choice because it offers a higher compression ratio, lower power consumption and lower computational complexity than other methods such as EZW and DCT. When the data transmitted are 512*512 grey scale images, SPIHT achieves a very compact output bit stream without any entropy encoder, which reduces both the computational complexity and the amount of data transmitted. However, as it uses three lists to store the individual coefficients, some modification is required to reduce the memory requirement.

After the data are compressed, performance is evaluated through protocol evaluation and deployment of the sensor nodes over the coverage area, and the compressed data are transferred through neighbouring nodes, which must be energy efficient and have low overhead. First, de-noising is applied to remove redundancy from the data, which reduces complexity and computation time. Normalization is then performed to improve performance and increase the lifespan of the network.

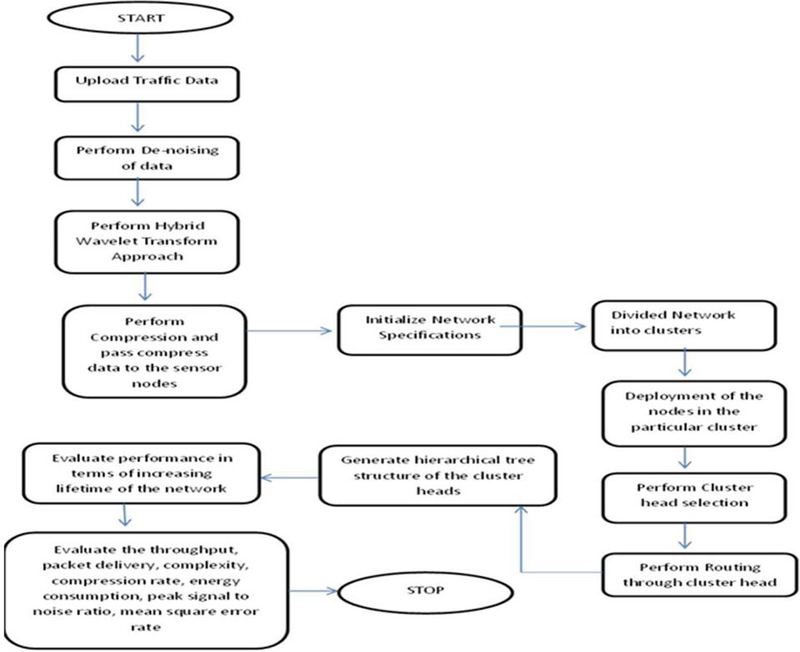

The performance is evaluated using energy consumption, packet delivery rate, throughput, compression rate, complexity percentage, PSNR and MSE. These parameters are used to evaluate the performance of compressed transmission over wireless links at larger distances. Our proposed approach is able to achieve high packet delivery with low packet loss, high compression rates and low complexity. EZW and SPIHT both have their own benefits and shortcomings. We have therefore proposed a novel hybridized algorithm which includes the best features of both image compression algorithms and gives better results than other image compression algorithms used for wireless sensor networks. Figure 1 presents the flow chart of the proposed algorithm and gives a step-by-step implementation of the proposed work.

Figure 1 Block diagram of proposed methodology.

4 Results and Comparison

4.1 Parameters Used for Analysis

The following performance parameters are chosen to prove the effectiveness of our algorithm:

(a) Peak signal to Noise Ratio (PSNR)

(b) Compression Ratio

(c) Throughput

(d) End-to-End delay

(e) Battery life time

(a) Peak signal to noise ratio: This parameter measures the quality of an image by comparing the compressed image with the original image; the higher the ratio, the better the compression technique. The PSNR is given by Equation (2):

| $\mathrm{PSNR} = 10 \log_{10}\left(\dfrac{\mathrm{MAX}^2}{\mathrm{MSE}}\right)$ | (2) |

where MAX is the maximum possible pixel value (255 for 8-bit grey scale images) and MSE is the mean square error,

| $\mathrm{MSE} = \dfrac{1}{mn}\sum_{i=1}^{m}\sum_{j=1}^{n}\left[A_0(i,j) - A(i,j)\right]^2$ | (3) |

where $m \times n$ is the image size, $A_0$ is the original image value and $A$ is the compressed image value.

A low MSE (Equation (3)) means that the error between the compressed image and the original image is low. As PSNR is inversely related to MSE, a low MSE gives a high PSNR, so a compression algorithm with a high PSNR is preferred.
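The relation between MSE and PSNR described above can be checked numerically with a short sketch. It assumes 8-bit grey scale images, so the peak value defaults to 255; the function names are illustrative and are not taken from the implementation used in this work.

```python
import numpy as np

def mse(original, compressed):
    """Mean square error between two images of the same size."""
    a0 = np.asarray(original, dtype=float)
    a = np.asarray(compressed, dtype=float)
    return float(np.mean((a0 - a) ** 2))

def psnr(original, compressed, max_value=255.0):
    """Peak signal-to-noise ratio in dB; max_value=255 assumes 8-bit pixels."""
    err = mse(original, compressed)
    if err == 0:
        return float("inf")          # identical images: no error at all
    return float(10.0 * np.log10(max_value ** 2 / err))

original = np.zeros((2, 2))
compressed = np.full((2, 2), 5.0)    # every pixel is off by 5 grey levels
error = mse(original, compressed)    # 25.0
quality = psnr(original, compressed)
```

Halving the pixel error divides the MSE by four and raises the PSNR by about 6 dB, which is the inverse relation noted in the text.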

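(b) Compression ratio: It is the ratio of the number of bytes in the original image to the number of bytes in the compressed image, as given in Equation (4):

| $\mathrm{CR} = \dfrac{\text{number of bytes in original image}}{\text{number of bytes in compressed image}}$ | (4) |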

(c) Throughput: It is the amount of data transmitted; the higher the throughput, the better the performance. It is given by Equation (5),

| (5) |

(d) End-to-end (ETE) delay: The time taken by a data packet to travel from the source node to the destination node in a network is called the ETE delay. It is the sum of smaller delays: compression delay, propagation delay, processing delay, decompression delay and MAC delay. It is given by Equation (6),

| $\mathrm{ETE} = d_{comp} + d_{prop} + d_{proc} + d_{decomp} + d_{MAC}$ | (6) |

where

$d_{comp}$ is the delay for the compression process,

$d_{prop}$ is the delay for propagation,

$d_{proc}$ is the delay in processing,

$d_{decomp}$ is the delay in decompression, and

$d_{MAC}$ is the MAC delay.

(e) Battery Lifetime: This parameter indicates how many images can be sent without recharging the battery.
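The compression ratio, ETE delay and battery lifetime defined above reduce to simple arithmetic, sketched below. The battery lifetime formula (battery energy divided by the energy spent per image) is an assumption made for illustration, and all function names and numeric values are hypothetical.

```python
def compression_ratio(original_bytes, compressed_bytes):
    """Bytes in the original image divided by bytes in the compressed image."""
    return original_bytes / compressed_bytes

def end_to_end_delay(d_comp, d_prop, d_proc, d_decomp, d_mac):
    """Sum of the individual delay components (all in the same time unit)."""
    return d_comp + d_prop + d_proc + d_decomp + d_mac

def images_per_battery(battery_energy_j, energy_per_image_j):
    """Assumed lifetime measure: whole images sent before the battery is empty."""
    return int(battery_energy_j // energy_per_image_j)

# A 512*512 8-bit image is 262144 bytes; assume it compresses to 32768 bytes.
cr = compression_ratio(512 * 512, 32768)
delay = end_to_end_delay(0.5, 0.25, 0.125, 0.5, 0.125)
lifetime = images_per_battery(100.0, 0.75)
```

A higher compression ratio lowers the energy spent per image, which in turn raises the number of images that can be sent on one battery charge.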

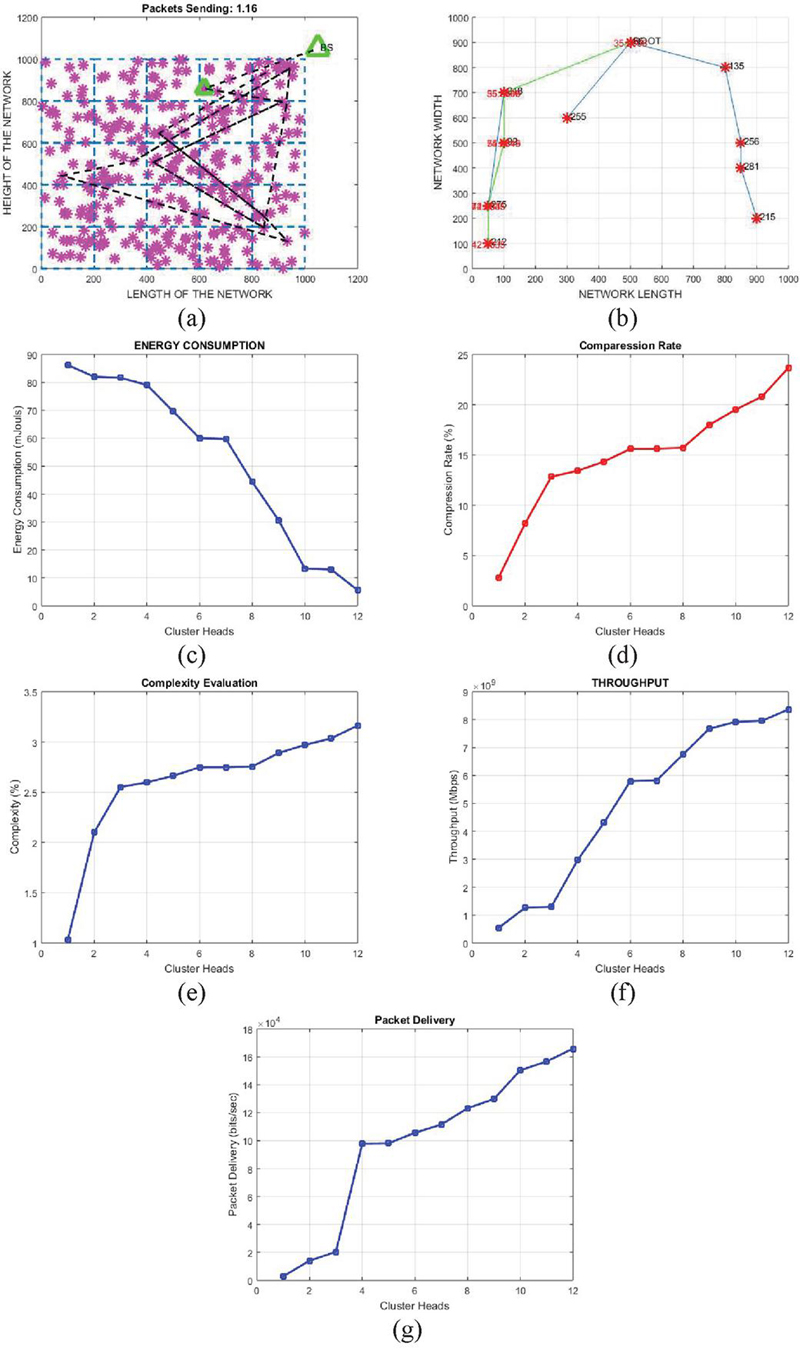

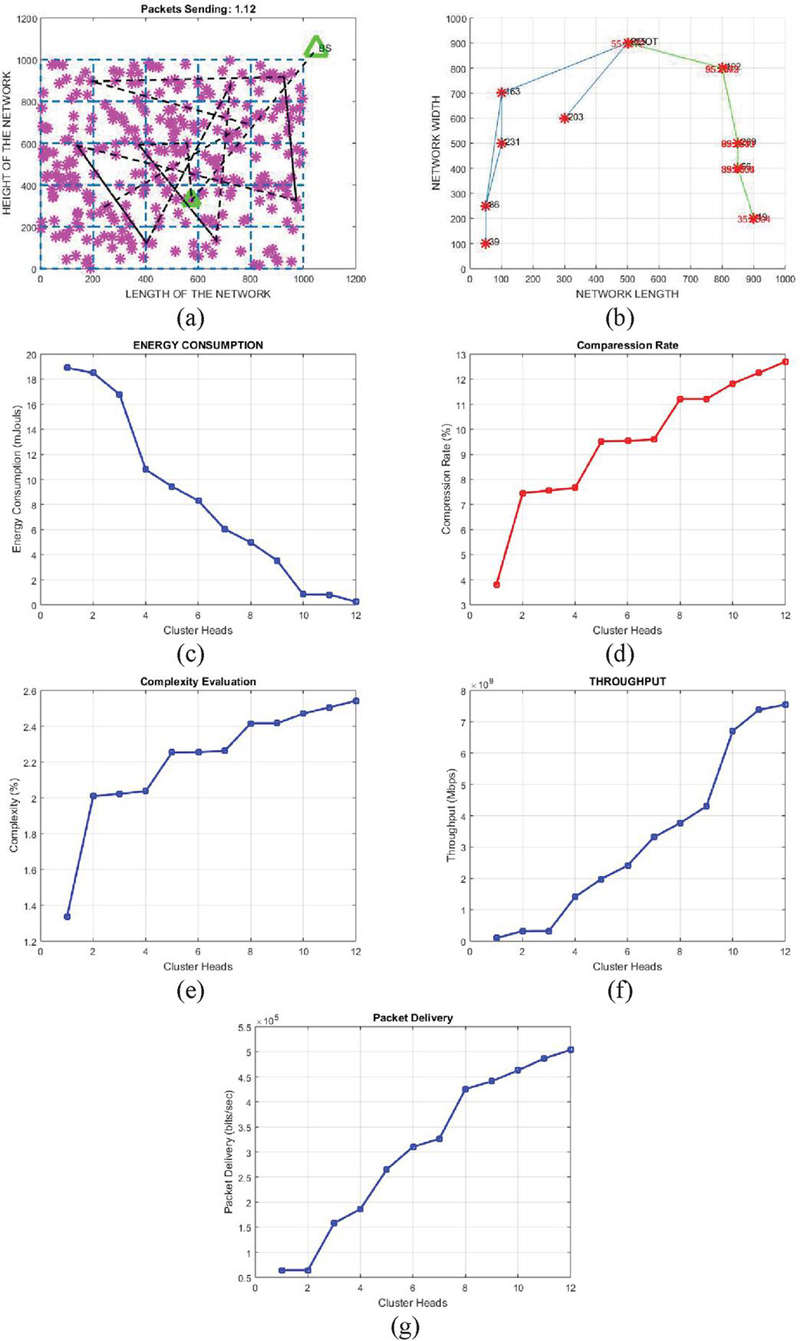

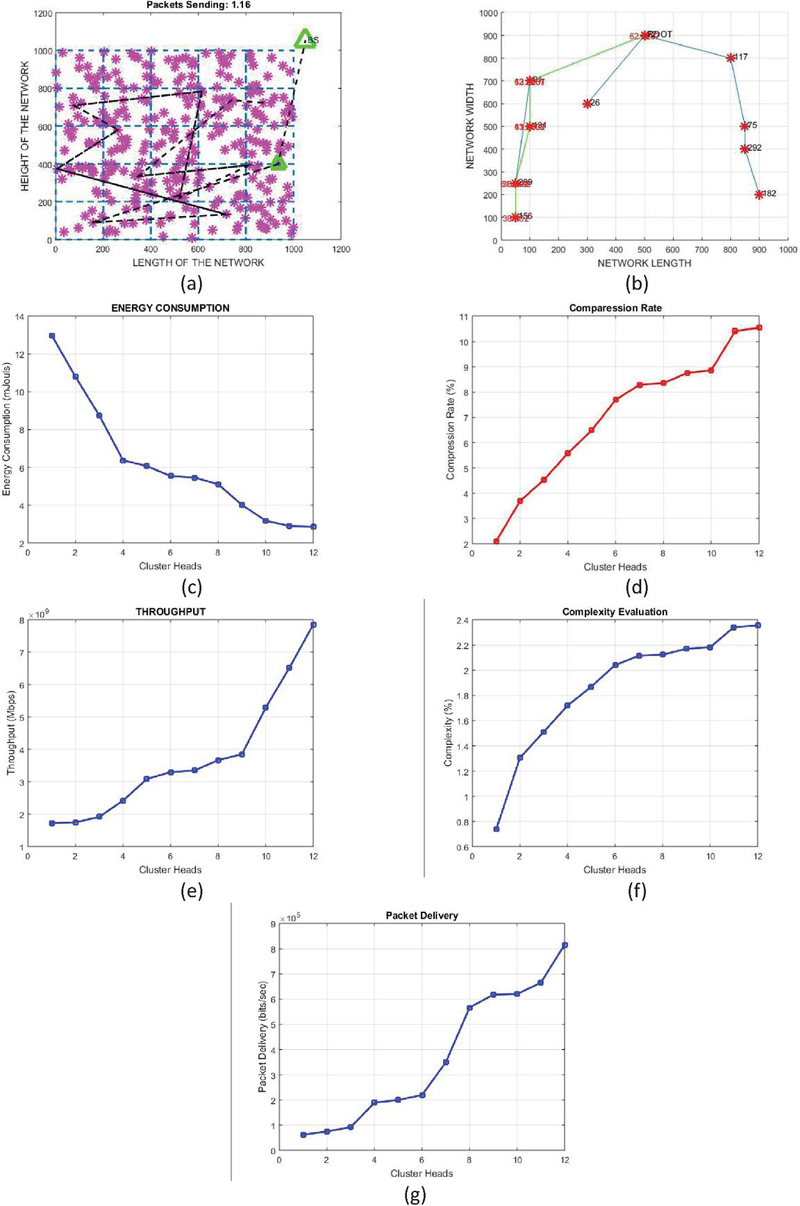

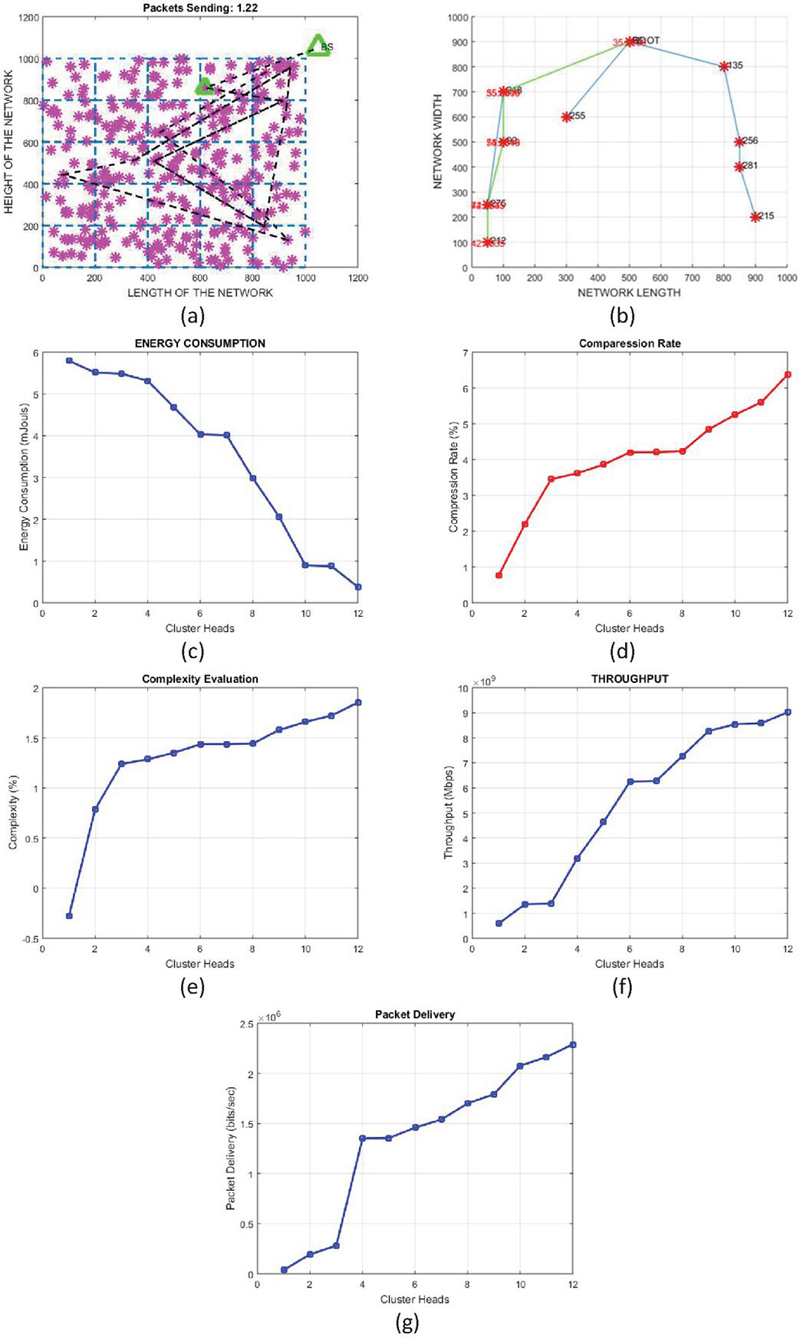

The implementation has been carried out in MATLAB. The images captured by a sensor node are compressed using the proposed algorithm. The proposed algorithm is tested on a dense network as shown in Figure 3(a), which also shows the topology of the network and how the data are transferred from one node to another and finally to the marked base station. Figure 3(b) shows the selection of cluster heads, with the node IDs indicated in the graph, and the flow of data from the cluster heads to other nodes. To demonstrate the effectiveness of the proposed algorithm, it has been implemented on a wireless sensor network, and parameters such as PSNR and throughput are determined for the designed network. To verify the accuracy of the proposed algorithm, it is applied to encode standard grey scale images of size 512*512, and the simulation is done using MATLAB. The results obtained are compared with the DCT and DWT techniques for WSN present in the literature. Our simulated network uses 100 nodes which are uniformly distributed over a 1000 m × 1000 m rectangular area. The simulation parameters are summarized in Table 1; a few values are assumed unless otherwise mentioned in the discussion.

Table 1 Simulation environment parameters

| Parameters for Network | Values Used |
|---|---|
| Network Size | 1000 m × 1000 m |
| Base station Location | x = 1050, y = 1050 |
| Number of sensors per cluster | 12 |
| Efs* | 10 J/bit |
| Emp* | 0.0013 J/bit |
| EDa* | 5 nJ/bit |
| Elect* | 50 nJ |

Efs*: energy if the distance to the base station is less than the cluster distance, in J/bit. Emp*: amplification energy if the distance to the base station is greater than the cluster distance, in J/bit. Elect*: in nJ. EDa*: data aggregation energy, in nJ/bit.
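The parameters in Table 1 match the symbols of the widely used first-order radio energy model, so a hedged sketch of that model may help show how they enter an energy calculation. Several points are assumptions rather than statements from the paper: the amplifier values are interpreted with the scaling commonly used with this model (10 pJ/bit/m² and 0.0013 pJ/bit/m⁴), Elect is taken per bit, and the crossover distance is computed as sqrt(Efs/Emp) rather than taken from a cluster distance.

```python
import math

E_ELEC = 50e-9       # J/bit, electronics energy (Elect in Table 1, ASSUMED per bit)
E_DA = 5e-9          # J/bit, data aggregation energy (EDa in Table 1)
EPS_FS = 10e-12      # J/bit/m^2, free-space amplifier (ASSUMED pJ scaling of Efs)
EPS_MP = 0.0013e-12  # J/bit/m^4, multipath amplifier (ASSUMED pJ scaling of Emp)
D0 = math.sqrt(EPS_FS / EPS_MP)  # assumed crossover distance in metres


def tx_energy(bits, dist_m):
    """Energy in joules to transmit `bits` over `dist_m` metres."""
    if dist_m < D0:
        amp = EPS_FS * dist_m ** 2   # short range: free-space loss
    else:
        amp = EPS_MP * dist_m ** 4   # long range: multipath loss
    return bits * (E_ELEC + amp)


def rx_energy(bits):
    """Energy in joules to receive `bits`."""
    return bits * E_ELEC


def aggregation_energy(bits):
    """Energy in joules for a cluster head to aggregate `bits`."""
    return bits * E_DA


raw_bits = 512 * 512 * 8  # one uncompressed 8-bit 512*512 image
print(f"Crossover distance: {D0:.1f} m")
print(f"Raw image over 100 m: {tx_energy(raw_bits, 100.0):.4f} J")
```

Since transmission energy scales linearly with the number of bits, any reduction in image size achieved by compression translates directly into a proportional saving at the radio.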

4.2 Test Images Used

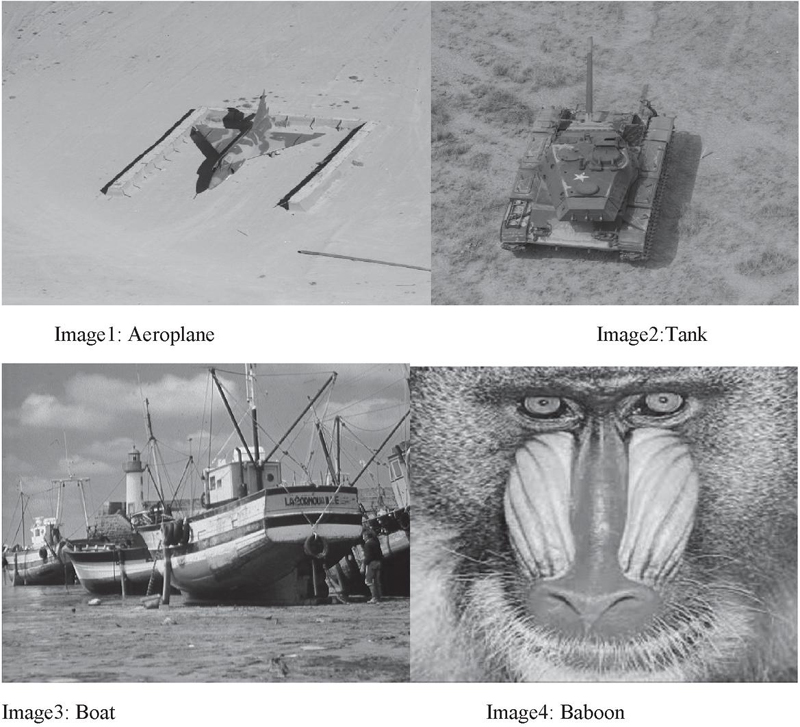

Our algorithm is tested on the test images shown in Figure 2.

Figure 2 Test images used for image compression (gray scale 512*512).

Figure 3 Results for Image1: Aeroplane (a) development of WSN network with base station (b) cluster head selection (c) energy consumption (d) compression rate (e) complexity evaluation (f) throughput (g) packet delivery.

Figure 4 Results for Image2: Tank (a) development of WSN network with base station (b) cluster head selection (c) energy consumption (d) compression rate (e) complexity evaluation (f) throughput (g) packet delivery.

Figure 5 Results for Image3: Boat (a) development of WSN network with base station (b) cluster head selection (c) energy consumption (d) compression rate (e) complexity evaluation (f) throughput (g) packet delivery.

Figure 6 Results for Image4: Baboon (a) development of WSN network with base station (b) cluster head selection (c) energy consumption (d) compression rate (e) complexity evaluation (f) throughput (g) packet delivery.

Figures 3, 4, 5 and 6 show the results for the image database given in Figure 2. In every figure, the network is first initialized as shown in panel (a), then the cluster head is selected as in panel (b), and panel (c) shows the energy consumption at the cluster heads. Panel (d) presents the compression rate at various cluster heads, panel (e) gives the complexity of the coding, panel (f) presents the throughput of the algorithm and panel (g) gives the packet delivery rate. All the images are 512*512 gray scale. Parameters such as PSNR, MSE, throughput, energy consumption in mJ and complexity are also calculated for the proposed algorithm, as shown in Figures 3, 4, 5 and 6. Table 2 shows the PSNR (dB) and MSE of the proposed methodology. The proposed methodology has a high PSNR compared to the others, which shows the effectiveness of the image compression algorithm.

Table 2 PSNR (peak signal-to-noise ratio) and MSE (mean square error) for test images

| S.No. | Image Name | PSNR (dB) | MSE | Root Node Id |
|---|---|---|---|---|
| 1. | Image1: Aeroplane | 57.335 | 0.12105 | 36 |
| 2. | Image2: Tank | 61.44 | 0.47031 | 255 |
| 3. | Image3: Boat Image | 56.573 | 0.1442 | 72 |
| 4. | Image4: Baboon | 51.633 | 0.4499 | 36 |
| 5. | Image5: Lena | 52.33 | 0.412 | 255 |
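For readers who want to reproduce figures of this kind, the following Python sketch computes MSE and PSNR using the standard definitions for 8-bit images (peak value 255). That these match the exact equations used by the authors is an assumption, and the function names are illustrative.

```python
import math


def mse(original, reconstructed):
    """Mean square error between two equally sized images (lists of pixel rows)."""
    if len(original) != len(reconstructed):
        raise ValueError("images must have the same number of rows")
    total, count = 0.0, 0
    for row_a, row_b in zip(original, reconstructed):
        if len(row_a) != len(row_b):
            raise ValueError("rows must have the same length")
        for a, b in zip(row_a, row_b):
            total += (a - b) ** 2
            count += 1
    if count == 0:
        raise ValueError("images must not be empty")
    return total / count


def psnr(mse_value, peak=255.0):
    """PSNR in dB for a given MSE; infinite when the images are identical."""
    if mse_value == 0:
        return math.inf
    return 10.0 * math.log10(peak ** 2 / mse_value)


# Tiny illustrative images: every pixel differs by 1, so the MSE is 1.0.
a = [[10, 20], [30, 40]]
b = [[11, 21], [31, 41]]
print(f"MSE = {mse(a, b):.3f}, PSNR = {psnr(mse(a, b)):.2f} dB")
```

Under these definitions, an MSE of 0.12105 corresponds to about 57.3 dB, which is in line with the Aeroplane row of Table 2.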

The proposed technique has been compared with the DWT and DCT techniques for wireless sensor networks reported in the literature [2]. The algorithm in [2] was implemented for two scenarios: a single-hop network and a multi-hop network. In a single-hop network, communication is one to one with no intermediate node, while in a multi-hop network intermediate nodes exist. In both scenarios, the authors calculated the PSNR, compression ratio, throughput, delay and battery lifetime using DCT and DWT.

For single hop, the image quality is calculated in terms of the quality factor (QF). As the quality factor increases, the compression ratio increases. Compression ratio and image quality vary inversely: a higher compression ratio gives a lower image quality. The PSNR values at different quality factors and image sizes for the single-hop network are tabulated in Table 3.

Table 3 Comparison of PSNR (dB) values for different quality factors (QF) and image sizes (32*32, 64*64 and 128*128) [2]

| QF | 32*32 DWT | 32*32 DCT | 64*64 DWT | 64*64 DCT | 128*128 DWT | 128*128 DCT |
|---|---|---|---|---|---|---|
| 10 | 33.2 | 25.14 | 29.81 | 23.19 | 31.4 | 25.87 |
| 20 | 27.01 | 20.42 | 27.76 | 21.75 | 28.54 | 23.61 |
| 30 | 24.68 | 18.81 | 25.36 | 21.16 | 26.73 | 22.35 |
| 40 | 23.09 | 18.75 | 23.8 | 19.95 | 25.28 | 21.6 |

For the multi-hop network, two scenarios are considered, with six nodes in total: in the first case two intermediate nodes, and in the second case four intermediate nodes, lie between the transmitter node and the receiver node. Table 4 gives the PSNR values for different quality factors and image resolutions.

Table 4 PSNR (dB) results of the multi-hop network for different QF and image resolutions; (2) and (4) denote the number of intermediate nodes [2]

| QF | Image size | DCT(2) | DWT(2) | DCT(4) | DWT(4) |
|---|---|---|---|---|---|
| 10 | 32*32 | 24.19 | 31.25 | 23.65 | 30.78 |
| 10 | 64*64 | 22.23 | 28.85 | 21.81 | 28.11 |
| 10 | 128*128 | 24.17 | 31.09 | 23.15 | 29.38 |
| 20 | 32*32 | 20.01 | 26.53 | 19.16 | 26.15 |
| 20 | 64*64 | 21.13 | 26.89 | 20.51 | 26.21 |
| 20 | 128*128 | 22.98 | 28.14 | 22.08 | 27.56 |
| 30 | 32*32 | 18.13 | 23.87 | 17.79 | 23.07 |
| 30 | 64*64 | 20.47 | 24.91 | 19.67 | 23.51 |
| 30 | 128*128 | 21.38 | 25.98 | 20.82 | 25.02 |
| 40 | 32*32 | 18.13 | 22.41 | 17.76 | 21.35 |
| 40 | 64*64 | 19.15 | 22.96 | 18.41 | 22.13 |
| 40 | 128*128 | 20.84 | 24.84 | 19.62 | 23.52 |

The comparison of the results of the proposed methodology with the DCT and DWT techniques is presented in Tables 2, 3 and 4. In terms of PSNR, the proposed methodology (Table 2) outperforms the DCT and DWT techniques (Tables 3 and 4). The higher PSNR values result from the hybridization of the two techniques, and the results show that the proposed compression algorithm is better than the others.
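The size of the PSNR gap can be read off with a small Python calculation over the values in Tables 2, 3 and 4. This is only a sketch of the arithmetic: the variable names are illustrative, and the baseline figures from [2] are for 32*32 to 128*128 images while Table 2 uses 512*512 images, so it is not a like-for-like benchmark.

```python
# Table 2: PSNR (dB) of the proposed method per test image.
PROPOSED_PSNR = {
    "Aeroplane": 57.335, "Tank": 61.44, "Boat": 56.573,
    "Baboon": 51.633, "Lena": 52.33,
}

# Table 3 (single hop): QF -> image size -> (DWT, DCT) PSNR in dB.
SINGLE_HOP = {
    10: {32: (33.2, 25.14), 64: (29.81, 23.19), 128: (31.4, 25.87)},
    20: {32: (27.01, 20.42), 64: (27.76, 21.75), 128: (28.54, 23.61)},
    30: {32: (24.68, 18.81), 64: (25.36, 21.16), 128: (26.73, 22.35)},
    40: {32: (23.09, 18.75), 64: (23.8, 19.95), 128: (25.28, 21.6)},
}

# Table 4 (multi hop): QF -> image size -> (DCT(2), DWT(2), DCT(4), DWT(4)).
MULTI_HOP = {
    10: {32: (24.19, 31.25, 23.65, 30.78), 64: (22.23, 28.85, 21.81, 28.11),
         128: (24.17, 31.09, 23.15, 29.38)},
    20: {32: (20.01, 26.53, 19.16, 26.15), 64: (21.13, 26.89, 20.51, 26.21),
         128: (22.98, 28.14, 22.08, 27.56)},
    30: {32: (18.13, 23.87, 17.79, 23.07), 64: (20.47, 24.91, 19.67, 23.51),
         128: (21.38, 25.98, 20.82, 25.02)},
    40: {32: (18.13, 22.41, 17.76, 21.35), 64: (19.15, 22.96, 18.41, 22.13),
         128: (20.84, 24.84, 19.62, 23.52)},
}


def best_psnr(table):
    """Highest PSNR found anywhere in a QF -> size -> values table."""
    return max(v for sizes in table.values() for vals in sizes.values() for v in vals)


best_single = best_psnr(SINGLE_HOP)
best_multi = best_psnr(MULTI_HOP)
worst_proposed = min(PROPOSED_PSNR.values())
margin = worst_proposed - max(best_single, best_multi)
print(f"Lowest proposed PSNR exceeds best baseline by {margin:.3f} dB")
```

Comparing the lowest proposed value against the highest baseline value gives the most conservative reading of the tables.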

5 Conclusion and Future Work

In recent years, WSN deployments have rapidly increased for real-time systems in various areas. Power consumption is always a critical issue, as it affects the overall lifetime of a wireless sensor network. A WSN mainly consists of various types of sensor nodes, which are capable of sensing, computing and communicating wirelessly with the sink. The communication process is the main source of power consumption in a node, so a data compression technique is required to reduce the data transmitted over the wireless channels. For reducing the size of multimedia data received from media sensors, set partitioning in a hierarchical tree (SPIHT) is a favourable choice. However, due to its high memory requirement and complex coding, it poses a problem for resource-constrained systems such as a wireless media sensor network. The main objective of this work is to reduce the memory consumption and the processing time of the compression algorithm. The superiority of the algorithm over other algorithms in the literature is shown with the help of parameters such as PSNR (peak signal-to-noise ratio), packet delivery rate, throughput, compression ratio and energy consumption. In this paper, the proposed methodology is compared with DCT- and DWT-based techniques in single-hop and multi-hop scenarios. As a practical application, this method can be used for compression in a WMSN deployed to detect disease in leaves (in agriculture), by sending images from the camera sensor module to the server.

Declarations

(1) Availability of data and material: Freely available images are used for testing the algorithm.

(2) Competing Interest: No financial or non-financial competing interests.

(3) Funding: This research is not funded by any funding agency.

References

[1] T. Srisooksai, K. Keamarungsi, P. Lamsrichan and K. Araki, ‘Practical data compression in wireless sensor networks: A survey’, in: Journal of Network and Computer Applications, pp. 37–59, 2012.

[2] T. Sheltami, M. Musaddiq, E. Shakshuki, ‘Data compression techniques in wireless sensor networks’, in: Future Generation Computer Systems, pp. 151–162, 2016.

[3] G.K. Wallace, ‘The JPEG still picture compression standard’, in: IEEE Transactions on Consumer Electronics 38(1), pp. 18–34, 1992.

[4] D.M Pham, S.M Aziz, ‘An energy efficient image compression scheme for wireless sensor networks’, in: IEEE 8th International conference on Intelligent sensors, sensor networks and information processing, pp. 260–264, 2013.

[5] G.A. Ruiz, J.A. Michell, A. Buron, ‘High throughput parallel-pipeline 2-D DCT/IDCT processor chip’, in: Journal of VLSI Signal Processing Systems for Signal, Image and Video Technology 45(3), pp. 161–175, 2006.

[6] G.A. Ruiz, J.A. Michell, A. Buron, ‘Parallel-pipeline 8X8 forward 2-D DCT/IDCT processor chip for image coding’, in: IEEE Transactions on Signal Processing 53(2), pp. 714–723, 2005.

[7] C.F. Chiasserini, E. Magli, ‘Energy consumption and image quality in wireless video-surveillance networks’, in: The 13th IEEE International Symposium on Personal, Indoor and Mobile Radio Communications, Vol. 5, pp. 2357–2361, 2002.

[8] G. Pekhteryev, Z. Sahinoglu, P. Orlik, G. Bhatti, ‘Image transmission over IEEE 802.15.4 and ZigBee networks’, in: IEEE International Symposium on Circuits and Systems, Vol. 4, pp. 3539–3542, 2005.

[9] L. Ferrigno, S. Marano, V. Paciello, A. Pietrosanto, ‘Balancing computational and transmission power consumption in wireless image sensor networks’, in: IEEE International Conference on Virtual Environments, Human–Computer Interfaces and Measurement Systems, July, pp. 18–20, 2005.

[10] Y. Mu, B. Murali, A.L. Ali, ‘Embedded image coding using zero trees of wavelet coefficients for visible human dataset’, in: Conference Record of the Thirty-Ninth Asilomar Conference on Signals, Systems and Computers, pp. 276–280, 2005.

[11] P. Chithra, P. Thangavel, ‘A fast and efficient memory image codec (encoding/decoding) based on all level curvelet transform co-efficients with SPIHT and run length encoding’, in: Recent Advances in Space Technology Services and Climate Change, pp. 174–178, 2005.

[12] Y. Sun, H. Zhang, G. Hu, ‘Real-time implementation of a new low-memory SPIHT image coding algorithm using DSP chip’, in: IEEE Trans. Image Process. 11(9), 1112–1116, 2006.

[13] L. Chew, W. Chia, L. Ang, K. Seng, ‘Very low-memory wavelet compression architecture using strip-based processing for implementation in wireless sensor networks’, in: EURASIP J. Embed. Syst. (1) 1–16, 2009.

[14] D. Taubman, ‘High performance scalable image compression with EBCOT’, in: IEEE Trans. Image Process. 9 (7) 1158–1170, 2000.

[15] C. Lian, K. Chen, H. Chen, L. Chen, ‘Analysis and architecture design of block coding engine for EBCOT in JPEG 2000’, IEEE Trans. Circuits Syst. Video Technol. 13(3) 219–230, 2003.

[16] Q. Lu, X. Ye, L. Du, ‘An architecture for energy efficient image transmission in WSNs’, in: IEEE International Conference on Networks Security, Wireless Communications and Trusted Computing, Vol. 1, pp. 296–299, 2009.

[17] W. Yu, Z. Sahinoglu, A. Vetro, M. Electric, ‘Energy efficient JPEG 2000 image transmission over wireless sensor networks’, in: IEEE Global Telecommunications Conference, Vol. 5, pp. 2738–2743, 2004.

[18] W.A. Pearlman, A. Islam, N. Nagaraj, A. Said, ‘Efficient, low-complexity image coding with a set-partitioning embedded block coder’, in: IEEE Transactions on Circuits and Systems for Video Technology, Vol. 14, no. 11, Nov, pp. 1219–1235, 2004.

[19] M.V. Latte, N.H. Ayachit, D.K. Deshpande, ‘Reduced memory listless speck image compression’, in: Digit. Signal Process. 16(6) 817–824, 2006.

[20] H. Matsumoto, S. Member, K. Sasazaki, Y. Suzuki, ‘Color image compression with vector quantization’, in: IEEE Conference on Soft Computing in Industrial Applications, pp. 84–88, 2008.

[21] S. Malviya, N. Gupta, V. Shirvastava, ‘2D-discrete walsh wavelet transform for image compression with arithmetic coding’, in: Fourth International Conference on Computing, Communications and Networking Technologies, ICCCNT, pp. 1–4, 2013.

[22] C.-C. Lai, C.-C. Tsai, ‘Digital image watermarking using discrete wavelet transform and singular value decomposition’, in: IEEE Trans. Instrum. Meas. 59(11) 3060–3063, 2010.

[23] K. Masselos, P. Merakos, C.E. Goutis, ‘Power efficient vector quantization design using pixel truncation’, in: Integr. Circuit Des. Power Timing Model. Optim. Simul, 409–418, 2002.

[24] S. Kasaei, M. Deriche, B. Boashash, ‘A novel fingerprint image compression technique using wavelets packets and pyramid lattice vector quantization’, in: IEEE Trans. Image Process. 11(12) 1365–1378, 2002.

[25] U. Nandi, J.K. Mandal, ‘Fractal image compression by using loss-less encoding on the parameters of affine transforms’, in: 1st International Conference on Automation, Control, Energy and Systems, pp. 1–6, 2014.

[26] H. ZainEldin, Mostafa A. Elhosseini, Hesam A. Ali, ‘A modified listless strip based SPIHT for wireless multimedia sensor network’, in: Computer and Electrical engineering journal, Elsevier, pp. 519–532, 2016.

[27] H. Huang, C. Huang, D. Ma, ‘The cluster based compressive data collection for wireless sensor networks with a mobile sink’, in: Int. J. Electron. Communication (AEÜ) 108, 206–214, 2019.

[28] J. Uthayakumar, T. Vengattaraman, P. Dhavachelvan, ‘A New Lossless Neighborhood Indexing Sequence (NIS) Algorithm for Data Compression in Wireless Sensor Networks’, in: Ad Hoc Networks, doi: https://doi.org/10.1016/j.adhoc.09.009, 2018.

[29] S. Al Fallah, M. Arioua, A. El Oualkadi, J. EL Asrib, ‘PLA Compression Schemes Assessment in Multi-hop Wireless Sensor Networks’, in: Procedia Computer Science 130, 279–286, 2018.

[30] S. Lata, S. Mehfuz, S. Urooj and F. Alrowais, ‘Fuzzy Clustering Algorithm for Enhancing Reliability and Network Lifetime of Wireless Sensor Networks’, in: IEEE Access, vol. 8, pp. 66013–66024, doi: 10.1109/ACCESS.2020.2985495, 2020.

[31] S. Lata, S. Mehfuz, S. Urooj, A. Ali, N. Nasser, ‘Disjoint Spanning Tree Based Reliability Evaluation of Wireless Sensor Network’, in: Sensors, MDPI, 2020, https://doi.org/10.3390/s20113071.

[32] A. Mishra, H.M. Hassan, U. Tiwari, et al., ‘Subjective Survey on Probabilistic and Non-probabilistic Clusterization in Wireless Sensor Network’, Wireless Pers Commun 132, pp. 1703–1729, 2023. https://doi.org/10.1007/s11277-023-10629-4.

[33] U. Tiwari and S. Mehfuz, ‘An efficient tag generation based data compression algorithm for wireless sensor network’, International Journal of Engineering Research and Technology 11. pp. 117–143.

Biographies

Usha Tiwari (Senior Member, IEEE) is currently an Assistant Professor in the Department of EECE, Sharda University. Dr. Tiwari received her Ph.D. from Jamia Millia Islamia, New Delhi, in Data Compression Schemes for Wireless Sensor Networks. She received her M.Tech in Electronics & Communication from MDU Rohtak in 2010. Dr. Tiwari graduated with honours from UPTU Lucknow, Uttar Pradesh, in 2005, with a degree in Electronics & Instrumentation Engineering. She held the 8th rank in the top-ten merit list declared by UPTU in 2005.

Shabana Mehfuz received the B.Tech. degree in Electrical Engineering from Jamia Millia Islamia, India, in 1996, the M.Tech. degree in Computer Technology from IIT Delhi, in 2003, and the Ph.D. degree in Computer Engineering from Jamia Millia Islamia, in 2008. She secured first position in the order of merit in B.Tech. (Electrical Engineering). She has been working at the Department of Electrical Engineering, Jamia Millia Islamia, since 1998 and as a Professor since 2014. She has published more than 150 articles in international and national journals and conferences. She has guided eleven Ph.D.s and is currently supervising twelve more candidates. She is a Life Member of ISTE, a member of the Institution of Engineers, and a Life Member of the Computer Society of India. She has received grants for research projects from agencies such as AICTE and UGC. She has acted as the Track Chair for three flagship international conferences held in 2015, 2019 and 2023, which were technically cosponsored by IEEE, and as Publicity Chair for ETMN 2024. She has been awarded the International Inspirational Women Award 2020 for Best Performer in Government by GISR Foundation, and a national award for excellence in education 2021 in the category of university/college teachers by AMP organization. Her research interests include Cloud Computing, Internet of Things (IoT), Wireless Sensor Networks, Mobile ad-hoc Network, Network Security, Artificial Intelligence and Machine Learning.

Sibaram Khara received his Ph.D. in engineering from Jadavpur University, Kolkata, in next-generation wireless heterogeneous networks, essentially in the area of interworking network and protocol convergence techniques for cellular and WiFi integrated networks. He did his PG in Digital Systems at the National Institute of Technology, Allahabad. He received Best Paper awards for his analytical model of Cellular/WiFi system (IEEE ADCOM 2008 MIT Chennai and IEEE EWT 2004 (1st), I2IT Pune (3rd)). He was honored as best Research Faculty in the School of Electronics Engineering, VIT University, Vellore, India, for the year 2010. His research articles have been presented at seminars and conferences in many countries, namely, WEAS02 Athens, IEEE VTC06 Melbourne, IEEE PWC07 Prague, IEEE/ACM SAC10 Switzerland, etc. His major research interests cover the areas of cluster based wireless sensor networks, spectrum mobility in cognitive radio system, call admission control in heterogeneous network and carrier aggregation in LTE-A technology.

Journal of Mobile Multimedia, Vol. 21_3&4, 783–810.

doi: 10.13052/jmm1550-4646.213425

© 2025 River Publishers