Analysis of Normalized Orthogonal Gradient Adaptive Algorithm Based on Spline Adaptive Filtering for Smart Communication Technology

Theerayod Wiangtong1 and Suchada Sitjongsataporn2,*

1Department of Electrical Engineering, Faculty of Engineering, King Mongkut’s Institute of Technology Ladkrabang, 1 Chalongkrung Rd., Ladkrabang, Bangkok Thailand

2Department of Electronic Engineering, Mahanakorn Institute of Innovation (MII), Faculty of Engineering and Technology, Mahanakorn University of Technology, 140 Cheumsamphan Rd., Nongchok, Bangkok Thailand

E-mail: theerayod.wi@kmitl.ac.th; ssuchada@mut.ac.th

*Corresponding Author

Received 05 May 2020; Accepted 10 February 2021; Publication 18 June 2021

Abstract

This paper presents a general theoretical framework of spline adaptive filtering based on a normalized version of orthogonal gradient adaptive algorithm. A nonlinear spline adaptive filter normally consists of a linear combination with a memory-less function and a spline function for adaptive approach. We explain how the adaptive linear filter and spline control points are derived in a straightforward iterative gradient-based method. In order to improve the convergence characteristics, the normalized version of orthogonal gradient adaptive algorithm is introduced by the orthogonal projection along with the gradient adaptive algorithm. In addition, a simple form of adaptation algorithm is introduced how to obtain a lower bound on the excess mean square error (MSE) in a theoretical basis. Convergence and stability analysis based on the MSE criterion are proven in terms of the excess MSE. Simulation results reveal that the proposed algorithm achieves more robustness compared with the conventional spline adaptive filtering algorithm.

Keywords: Spline adaptive filtering (SAF), normalized orthogonal gradient adaptive (NOGA) algorithm, nonlinear systems.

1 Introduction

In recent years, the modeling and identification of nonlinear systems have become attractive research topics. The requirements of nonlinear filters for system modeling [1, 2] are increasing since many real-time dynamic systems are implemented in nonlinear operating models.

Spline adaptive filter (SAF) is a class of nonlinear adaptive filers described in [2] which has effective performance in the low computational complexity and nonlinear system identification. Nonlinear SAF has been efficiently deployed to the nonlinear systems [3, 4]. SAF consists of the linear merged with a finite impulse response (FIR) filtering and nonlinear network combined with a spline function. Spline control points are adaptively implemented using gradient-based scheme [5]. The structure of SAF is normally described by a linear time-invariant model including with a spline function demonstrated by an adaptive look-up table (LUT). B-spline and Catmull-Rom spline is the spline basis matrix which is determined for the constraint on control parameter. The tap-weight estimated vectors of linear filter and the interpolation points of LUT in the nonlinear network can be implemented adaptively. Both are used to calculate an adaptive tap-weight vector for which the mean square error (MSE) is minimum.

In order to model the nonlinear system identification, the normalized SAF algorithm has been conducted and proved in the experimental simulation [6]. In [7], the architecture of SAF is applied to infinite impulse response for the nonlinear system identification. Based on Wiener SAF, a set-membership normalized least M-estimate algorithm [8] has been proposed. Experimental results achieve fast convergence rate and is effective to suppress the impulsive noise. This indicates more system robustness in the impulsive noise environment when compared with the classical SAF algorithm.

In order to regain the characteristics of convergence rate, the orthogonal gradient adaptive algorithm has been presented by orthogonal projection together with the filtered gradient adaptive algorithm [9]. The convergence analysis of the greedy normalized orthogonal gradient adaptive (NOGA) algorithm with the leaky criterion has been conducted in terms of convergence rate improvement and a low disadjustment [10].

The remainder of this paper is arranged as follows. A SAF is explained in Section 2. Section 3 proposes the SAF based on NOGA algorithm. Section 4 describes the convergence and stability analysis by using excess MSE. Section 5 shows the simulation results and Section 6 concludes the work.

Notations used through this paper include the operator that indicates the transpose operation and is floor operator. Matrices and vectors are in bold uppercase and lowercase, respectively.

2 Spline Adaptive Filtering

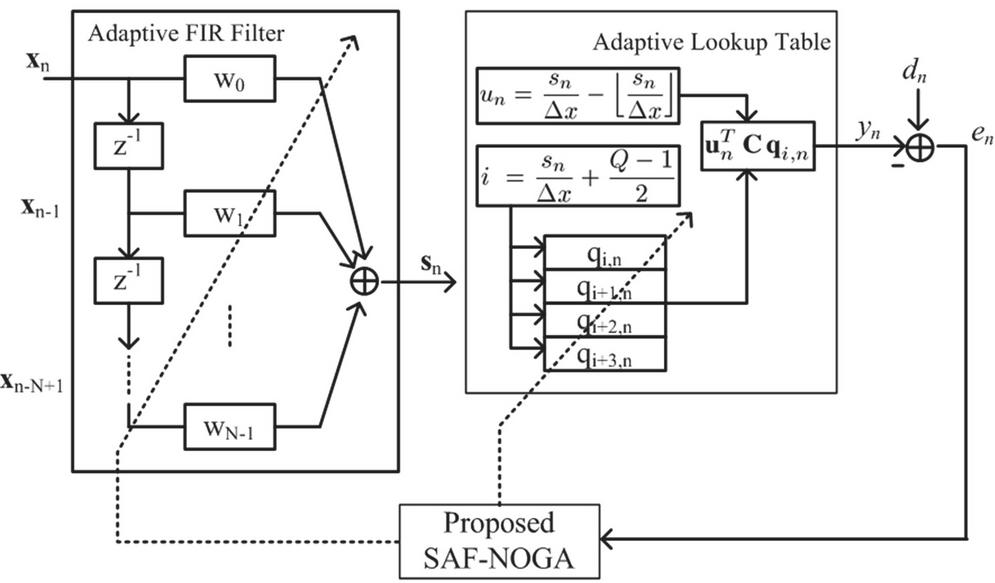

SAF structure as depicted in Figure 1 is a combination of adaptive FIR linear filter and nonlinear network with an adaptive LUT and spline interpolation network, namely linear-nonlinear network.

An adaptive FIR filter output is defined as

| (1) |

where is the adaptive tap-weight vector and is the input vector with the length of tap delay N. It is noticed that is connected with a nonlinear activation function using the span index i and the local parameter u, where . Local parameter and index i are given as [11]

| (2) |

Figure 1 Adaptive tracking of linear-nonlinear network of SAF-NOGA structure.

where is the uniform space between two adjacent control points, and [3]. Q is the number of control point. The local parameter vector is defined as

In particular, we obtain the SAF output as [12]

| (3) |

where C is the spline basis matrix and is the control point vector at index i and symbol n.

3 Proposed Spline Adaptive Filtering Normalized Orthogonal Gradient Adaptive Algorithm

Similar to [8], we suppose that the objective function is minimized as

| (4) |

where is a priori error given by

| (5) |

where is the desired signal and is the SAF output.

Hence, the minimum value can be obtained by differentiating Equation (4) with respect to and using the method of chain rule [13, 14] as follows:

| (6) | ||

| (7) |

where is the derivative of as .

Consequently, a recursion of the tap-weight estimated vector toward the minimum based on NOGA algorithm can be written as

| (8) |

where is the step-size of linear network part of SAF structure and is the directional vector of tap-weight vector denoted by

| (9) |

where is the forgetting-factor of tap-weight vector . The negative gradient of can be evaluated by taking the derivative the cost function in Equation (7) with respect to as

| (10) |

According to [10], the forgetting-factor of tap-weight vector that places on the orthogonal projection of present gradient vector and previous directional vector is defined as

| (11) |

Similarly, the adaptive control point tap-weight vector is given by

| (12) |

where is the directional vector of control point vector in the recursion form as

| (13) |

where is the forgetting-factor of .

Since the negative gradient of can be expressed using the derivation of Equation (7) with respect to as

| (14) |

where the forgetting-factor of tap-weight vector is computed by

| (15) |

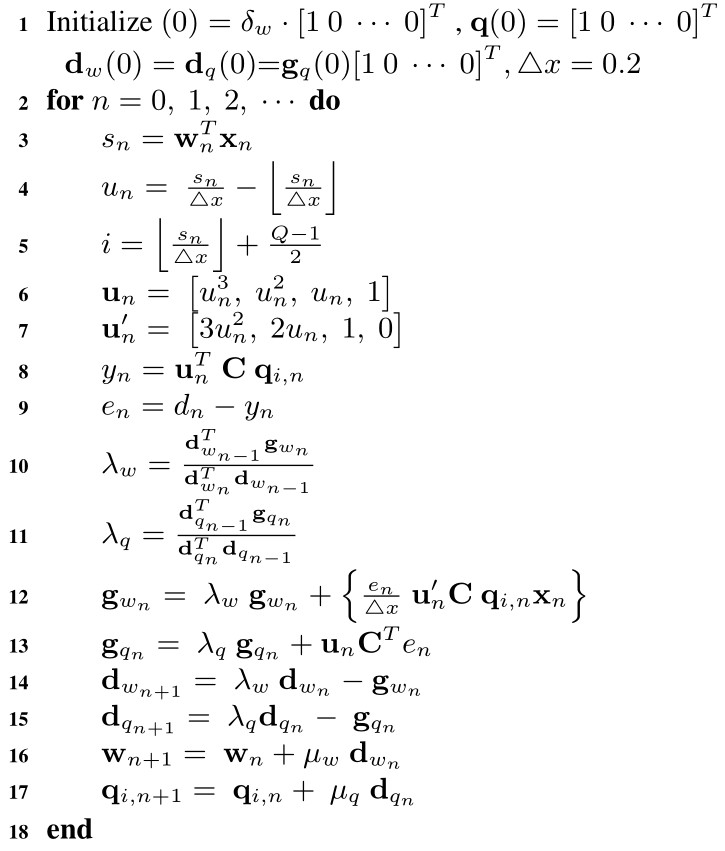

Hence, a proposed SAF based on NOGA algorithm (SAF-NOGA) is described in Algorithm 1.

4 Convergence and Stability Analysis of the Proposed SAF-NOGA Algorithm

Convergence and stability analysis of the proposed SAF-NOGA algorithm are investigated at steady-state as a function of MSE.

4.1 Convergence Analysis

Let us introduce in the approximate form as

| (16) | ||

| (17) |

By using the Taylor series expansion, the estimated error is given by

| (18) | ||

| (19) |

By differentiating in Equation (19) with respect to using chain rule, we get

| (20) |

Algorithm 1 Proposed SAF-NOGA algorithm

From Equation (16), we rewrite as

| (21) |

Substituting Equations (20) and (21) in Equation (18), we then obtain

| (22) |

The norm of both sides of Equation (22) is found as

| (23) |

Therefore, the learning rate of in Equation (23) becomes

| (24) |

Let us consider in the approximate form as

| (25) |

In a similar fashion, the error can be evaluated as

| (26) | ||

| (27) |

By differentiating in Equation (27) with respect to using chain rule, we obtain

| (28) |

Hence, we rewrite Equation (25) in forms of as

| (29) |

Substituting Equations (28) and (29) into Equation (26), we get

| (30) |

The norm on both sides of Equation (30) is now

| (31) |

and the learning rate of is

| (32) |

4.2 Stability Analysis

Considering the case of linear FIR filter, we define the weight error vector as

| (33) |

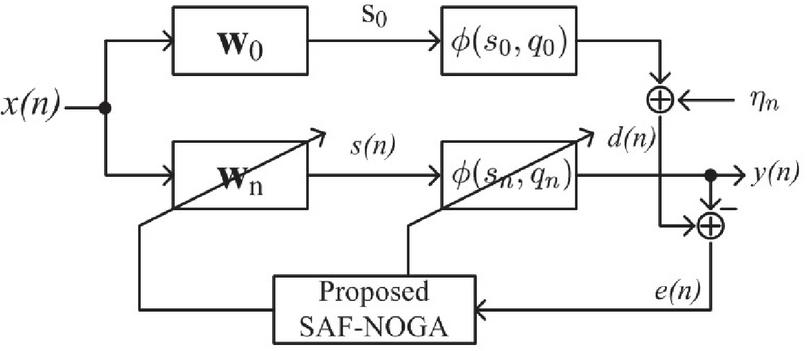

where is the optimal solution shown in Figure 2.

Figure 2 Statistical performance evaluation.

From Equations (16) and (33), we get

| (34) |

In this mathematical analysis, we suppose that proposed algorithm has converged under a few assumptions as follows.

Assumption 1: We deliberate the necessary condition for the convergence, in such a way that

or, equivalently,

From Equation (19), we organize the error in forms of as

| (35) |

where is an error in the optimal solution in the case of adaptive FIR filter as

| (36) |

Let us denote as the expectation of MSE by taking expectation in Equation (35). It can be stated in the form of

| (37) |

By using Assumption 1, we assume that

| (38) |

where is the minimum mean square error (MMSE) produced by the optimal solution as

| (39) |

and is the excess mean square error (EMSE) as

| (40) |

Similarly, the weight error vector following the case of control points as

| (41) |

where is the optimal solution of control points.

From Equations (25) and (41), we get

| (42) |

Assumption 2: We deliberate the necessary condition for the convergence, that is,

or, equivalently,

From Equation (19), we organize the error in forms of as

| (43) |

where , the error produced in the optimal solution in the case of control points, is denoted as

| (44) |

Let us define as the expectation of MSE. It can be given by taking expectation operator on Equation (44) as

| (45) |

By using Assumption 2, we assume that

| (46) |

where is the MMSE produced by the optimal solution as

| (47) |

and is the EMSE as

| (48) |

5 Simulation Results

For the simulation, the experiments with the random process and an unknown Wiener system constituted by a linear component as shown in [2] are used. A 23-point length LUT is appended to a uniform spline with an interval sampling [8] as

The spline basis matrix, called B-spline and named Catmull-Rom spline are used as follows [2]:

| (49) |

and

| (50) |

The comparison of performance of proposed SAF-NOGA (Spline adaptive filter based on normalized orthogonal gradient adaptive algorithm) in accordance with the SAF-LMS (Spline adaptive filter based on least mean square algorithm) [2] in the Wiener system identification over 300 Monte Carlo trials and 10,000 samples is experimented. The generated input signal is the colored signal by [4]

| (51) |

where is a zero mean white Gaussian noise with unitary variance and .

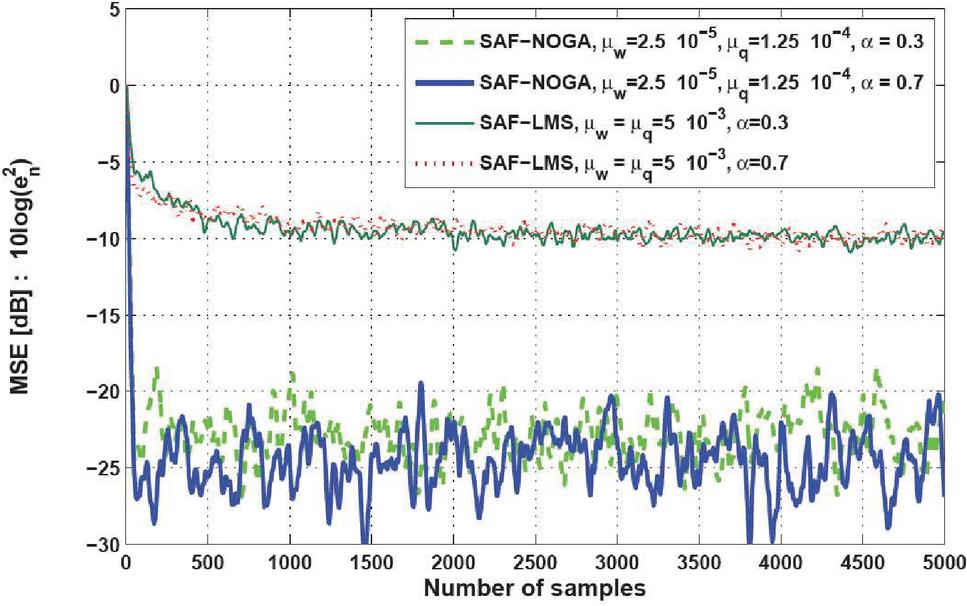

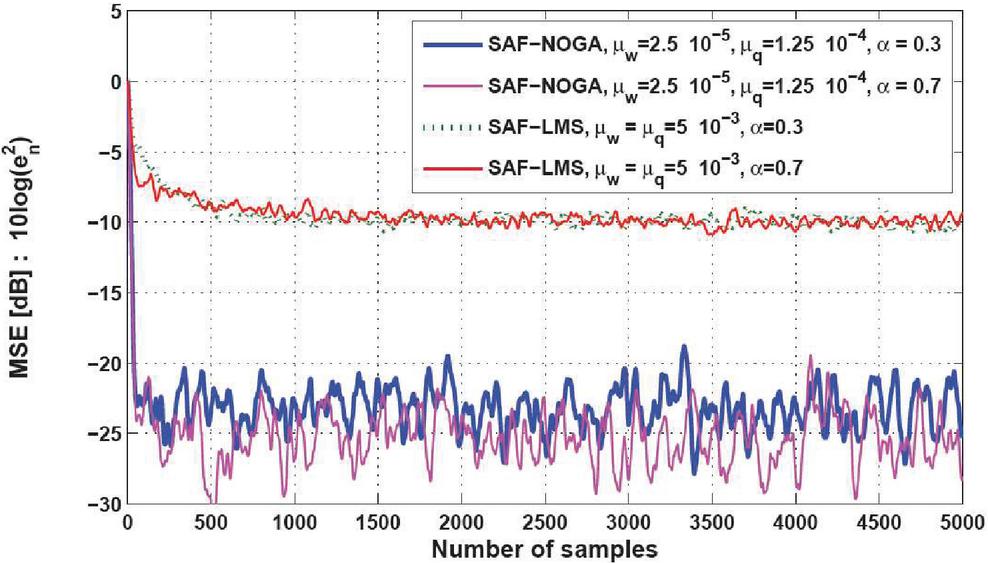

Figure 3 MSE curves of the proposed SAF-NOGA and SAF-LMS [2] using , in Equation (50) and SNR 10 dB.

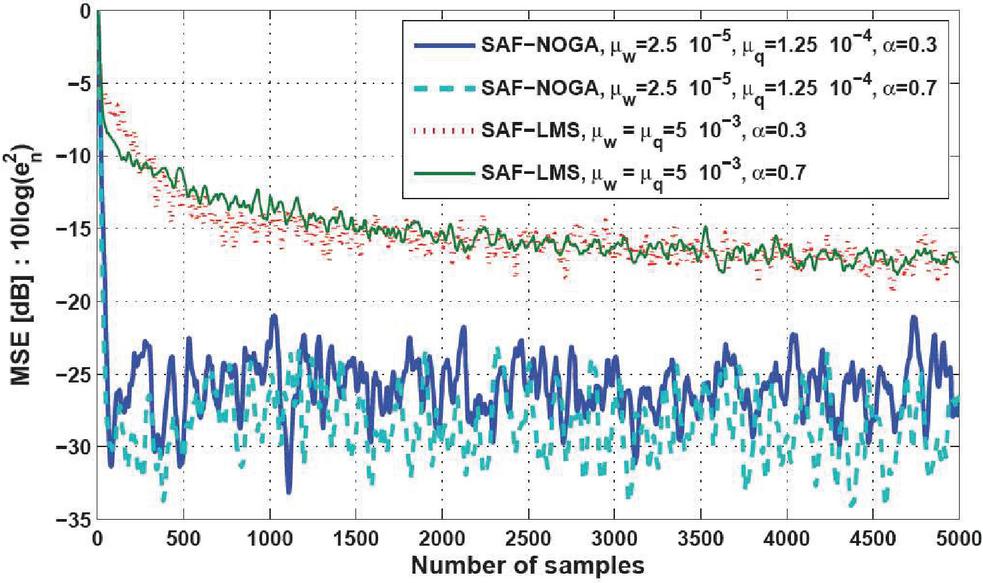

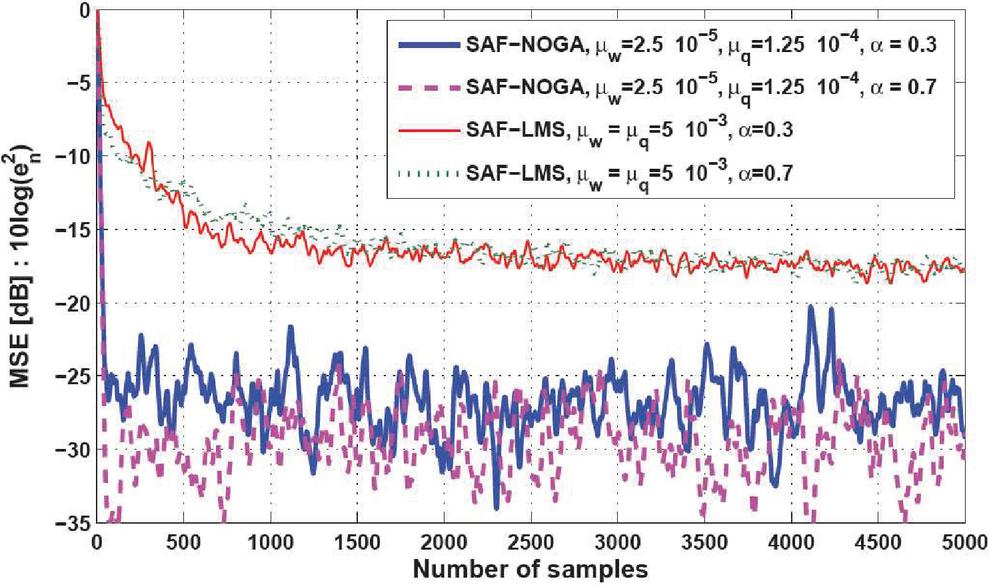

Figure 4 MSE curves of the proposed SAF-NOGA and SAF-LMS [2] using , in Equation (50) and SNR 20 dB.

Figure 5 MSE curves of the proposed SAF-NOGA and SAF-LMS [2] using , in Equation (49) and SNR 10 dB.

Figure 6 MSE curves of the proposed SAF-NOGA and SAF-LMS [2] using , in Equation (49) and SNR 20 dB.

For SAF model, the initial parameters of adaptive linear FIR filter are as , a signal-to-noise ratio SNR 10, 20 dB and the length of coefficients M is tantamount to 5 taps. Initial parameters of proposed SAF-NOGA algorithm are determined as follows: , and of SAF-LMS are as .

Comparison of MSE learning curves is used in the simulation with the different values of . Figures 3 and 4 depict the MSE learning curves of proposed SAF-NOGA and SAF-LMS with these parameters in Equation (50) and SNR 10, 20 dB, respectively, where the MSE in dB is . It is noted that the MSE learning curves of the proposed SAF-NOGA algorithm rapidly reach the steady-state compared with conventional SAF-LMS algorithm.

In addition, Figures 5 and 6 illustrate the MSE learning curves of proposed SAF-NOGA and SAF-LMS with the parameter in Equation (49) and SNR 10, 20 dB, respectively. It is mentioned that the MSE learning rate of proposed SAF-NOGA algorithms exhibit a much faster convergence rate.

6 Conclusion

In this paper, the proposed SAF-NOGA algorithm is explained briefly as to how the NOGA algorithm based on the SAF is investigated using the MMSE criterion. The tap-weight estimated vectors of the adaptive FIR filter and control points have been explicated and analyzed for the NOGA algorithm. Convergence and stability analysis of the proposed algorithms have been considered with the excess MSE approach. Performance of the proposed algorithm in terms of MSE and convergence rate to steady-state is revealed. Simulation results show that the MSE learning curves of proposed SAF-NOGA algorithm can enhance the system performance when compared with the SAF-LMS algorithm or the conventional adaptive filters in the real-time dynamic system. This study is expectedly used for applications such as echo cancelation and adaptive filtering in smart communication technology.

References

[1] L. Ljung. System Identification- Theory for the user. Upper Saddle River, NJ. 1999.

[2] M. Scarpiniti, D. Comminiello, R. Parisi and A. Uncini. Nonlinear Spline Adaptive Filtering. Signal Processing, 93(4): 772–783, 2013.

[3] M. Scarpiniti, D. Comminiello, R. Parisi and A. Uncini. Novel Cascade Spline Architectures for the Identification of Nonlinear Systems. IEEE Transactions on Circuits and Systems I: Regular Papers, 62(7): 1825–1835, 2015.

[4] M. Scarpiniti, D. Comminiello, R. Parisi and A. Uncini. Hammerstein Uniform Cubic Spline Adaptive Filtering: Learning and Convergence Properties. Signal Processing, 100: 112–123, 2014.

[5] M. Scarpiniti, D. Comminiello, R. Parisi and A. Uncini. Spline Adaptive Filters: Theory and Applications, Adaptive Learning Methods for Nonlinear System Modeling, ELSEVIER, 47–69, 2018.

[6] S. Guan and Z. Li. Normalised Spline Adaptive Filtering Algorithm for Nonlinear System Identification. Neural Processing Letter, 46(2), 595–607, 2017.

[7] M. Scarpiniti, D. Comminiello, R. Parisi and A. Uncini. Nonlinear System Identification using IIR Spline Adaptive Filters. Signal Processing, 108, 30–35, 2015.

[8] C. Liu and Z. Zhang. Set-membership Normalised Least M-estimate Spline Adaptive Filtering Algorithm in Impulsive Noise. Electronics Letters, 54(6), 393–395, 2018.

[9] P.S.R. Diniz. Adaptive Filtering: Algorithms and Practical Implementation. Springer, 2008.

[10] S. Sitjongsataporn. Convergence Analysis of Greedy Normalised Orthogonal Gradient Adaptive Algorithm. In Proceedings of IEEE International Symposium on Communications and Information Technologies, Bangkok, Thailand, pp. 345–348, 2018.

[11] S. Guarnieri, F. Piazza and A. Uncini. Multilayer Feedforward Networks with Adaptive Spline Activation Function. IEEE Transactions on Neural Network, 10(3): 672–683, 1999.

[12] S. Kalluri, G.R. Arce. General Class of Nonlinear Normalized Adaptive Filtering Algorithms. IEEE Transactions on Signal Processing, 48(8): 2262–2272, 1999.

[13] S. Sitjongsataporn and T. Wiangtong. Spline Adaptive Filtering based on Normalised Orthogonal Gradient Adaptive Algorithm. In Proceedings of IEEE International Conference on Engineering, Applied Sciences and Technology, pp. 575–578, 2019.

[14] S. Prongnuch and S. Sitjongsataporn. Performance Analysis and Enhancement of Spline Adaptive Filtering based on Adaptive Step-size Variable Leaky Least Mean Square Algorithm. Advances in Science, Technology and Engineering Systems Journal, 5(6): 642–651, 2020.

Biographies

Theerayod Wiangtong received the B.Eng. degree (First-class honors) in electronic engineering from King Mongkut’s Institute of Technology Ladkrabang, the M.Sc. degree in satellite communication from University of Surrey, and the PhD. degree in digital system design, co-design from Imperial College, London, UK. Currently, he is an Assistant Professor with the Department of Electrical Engineering, King Mongkut’s Institute of Technology Ladkrabang. His research interests include digital IC design, hardware/software co-design, embedded systems, algorithm and optimization, and data processing.

Suchada Sitjongsataporn received the B.Eng. (First-class honors) and D.Eng. degrees in electronic engineering from Mahanakorn University of Technology, Bangkok, Thailand, in 2002 and 2009, respectively. She has been working as a Lecturer with the Department of Electronic Engineering, Mahanakorn University of Technology since 2002. Currently, she is an Associate Professor with the Department of Electronic Engineering and the Associate Dean for Research at Faculty of Engineering and Technology in Mahanakorn University of Technology. Her research interests are mathematical and statistical models in the area of adaptive signal processing for communications, networking, embedded system, image and video processing, and embedded systems.

Journal of Mobile Multimedia, Vol. 17_4, 657–672.

doi: 10.13052/jmm1550-4646.1748

© 2021 River Publishers