State-of-the-Art of Artificial Intelligence

Ramjee Prasad and Purva Choudhary*

CTIF Global Capsule, Department of Business Development and Technology, Aarhus University, Herning, Denmark

E-mail: ramjee@btech.au.dk; purvachoudhary@gmail.com

*Corresponding Author

Received 23 September 2020; Accepted 30 November 2020; Publication 29 January 2021

Abstract

Artificial Intelligence (AI) as a technology has existed for less than a century. In spite of this, it has made great strides. The rapid progress made in this field has aroused the curiosity of technologists around the globe, and companies across various domains are keen to explore its potential. For a field that has achieved so much in such a short time, it is imperative that people who aim to work in Artificial Intelligence study its origins, recent developments, and future possibilities of expansion to gain better insight into the field. This paper summarizes the notable progress made in Artificial Intelligence, from its conceptualization to its current state and future possibilities, in various fields. It covers concepts such as the Turing machine, the Turing test, historical developments in Artificial Intelligence, expert systems, big data, robotics, current developments in Artificial Intelligence across various fields, and future possibilities of exploration.

Keywords: Artificial Intelligence, Turing test, expert systems, big data, machine learning, deep learning, Gartner Hype Cycle.

1 Introduction

Humans are considered to be the most intelligent species on earth. Intelligence is the facet that sets us apart from the rest of the living world. But human intelligence has its limitations too. Thus, there arises a need for Artificial Intelligence, a technology which, when introduced into a system, enables it to attain intellectual capabilities that may assist humans in the pursuit of greater progress for mankind.

The conceptualization of such a system was done long before it was realized. In 1937, the English mathematician Alan Turing, who was later considered to be the Father of Modern Computer Science [1], published a paper called ‘On Computable Numbers, with an Application to the Entscheidungsproblem’ [2], in which he described a machine capable of computing any computable function. This machine was later termed the Turing machine [3].

One may wonder whether a machine that imitates the human quality of sentience is itself sentient. In his paper ‘Computing Machinery and Intelligence’ [5], Alan Turing asserted that a machine that could flawlessly imitate the human quality of unrestricted conversation could indeed be claimed to have intelligence [4]. In this paper, he describes a test, which he calls ‘The Imitation Game’. This test requires a human, a machine, and an interrogator. The interrogator puts questions to both the machine and the human and, based on their answers, determines which of the two is the machine. As the interrogator can neither see the participants nor hear their voices (the answers are typed out), the interrogator can reach a conclusion based only on the answers given by the two participants. This test came to be known as the Turing test [6].

For a machine to pass the Turing Test, it should be able to [7]:

– process natural language

– store information which is provided before or during the interrogation

– use stored information to answer questions asked by the interrogator

– detect and estimate new patterns to adapt to new circumstances

– display its emotional intelligence [8]

Following Turing, many people came forward with their own versions of the test. The Feigenbaum test [9, 10] expects the machine to pass a test in a particular field, demonstrating expertise in it. The test by Nicholas Negroponte [11] judges a machine’s intelligence by its ability to work together seamlessly with humans. Although many such tests have been developed to date, the Turing test remains a benchmark for artificially intelligent systems. This is evident from the coveted Loebner Prize, which is awarded every year to the most human-like machine, based on the Turing test [12, 13].

The paper is organized as follows:

Section 2 presents the initial efforts of pioneers in Artificial Intelligence, from the development of chess-playing programs to the organization of the first workshop on Artificial Intelligence.

Section 3 describes developments in Artificial Intelligence with the advent of Expert Systems and their implementation for commercial purposes. The section also talks about an increase in funding for Artificial Intelligence due to initiatives taken up by various national governments.

The introduction and importance of Big Data and developments in the field of robotics, especially the evolution of application-specific robots, are discussed in Section 4.

Section 5 elaborates on the advancements related to Artificial Intelligence in various fields like health, transportation, finance, defense, aviation, agriculture, etc.

Section 6 discusses possible future breakthroughs, with a focus on the Gartner Hype Cycle for Artificial Intelligence (2019), which sheds light on the time various areas are expected to take to reach stability, after which they can be put to commercial use.

The paper is concluded in Section 7.

2 Initial Efforts

The first work in Artificial Intelligence was done by Warren McCulloch and Walter Pitts in 1943 [14]. Their system was based on a model similar to the actual working of neurons in the human brain. Each neuron could be in either an ‘ON’ state or an ‘OFF’ state. A neuron is stimulated to the ‘ON’ state when a sufficient number of neighboring neurons are switched to the ‘ON’ state. This model demonstrated that logical operators can be implemented using neuron-like net structures. Further, McCulloch and Pitts suggested that suitably defined networks could also assimilate knowledge. This idea was later realized by Donald Hebb [14].
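The threshold behaviour described above can be illustrated with a minimal sketch. The function names, the unit weights, the chosen thresholds, and the way inhibition is encoded for NOT are illustrative assumptions, not the notation of the 1943 paper.

```python
def mp_neuron(inputs, threshold):
    """Return 1 (ON) if the sum of the inputs reaches `threshold`, else 0 (OFF)."""
    return 1 if sum(inputs) >= threshold else 0


def logical_and(a, b):
    # Both neighbours must be ON to switch this neuron ON.
    return mp_neuron([a, b], threshold=2)


def logical_or(a, b):
    # A single ON neighbour is sufficient.
    return mp_neuron([a, b], threshold=1)


def logical_not(a):
    # Assumed encoding of inhibition: the input is negated, so an ON input
    # pushes the sum below the threshold of 0 and keeps the neuron OFF.
    return mp_neuron([-a], threshold=0)


for a in (0, 1):
    for b in (0, 1):
        print(a, b, logical_and(a, b), logical_or(a, b))
```

The only thing that changes between AND and OR is the threshold, which is the sense in which logical operators fall out of a single neuron-like unit.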

Work on Artificial Intelligence progressed in various directions, the most prominent of which was the writing of chess programs. Chess was chosen because its moves and its endpoint are clearly defined. A solution to this problem would give a clear indication of whether machines of the future would be capable of mechanized thinking, since chess itself is considered a game of adroit thinking [15].

The first research paper on developing a chess-playing program was written by Claude Shannon [16, 17]. In his paper titled ‘Programming a Computer for Playing Chess’ [15], he described two distinct strategies which could be implemented:

Strategy A: Inspecting multiple moves and implementing a minimax search algorithm. He stated that this strategy relies too heavily on “brute force” calculations. He thus suggested a second strategy.

Strategy B: A program based on “type position” which would use specialized heuristics and tactical Artificial Intelligence, exploring only a few key candidate moves.
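The minimax (min-max) search behind the first strategy can be sketched on a toy game tree. This is a minimal illustration, not Shannon's program: the tree encoding (nested lists with numeric leaves), the function names, and the leaf scores are all hypothetical.

```python
def minimax(node, maximizing):
    """Return the minimax value of a game tree.

    A leaf is a number (the static evaluation of a position); an internal
    node is a list of child nodes, one per legal move.
    """
    if isinstance(node, (int, float)):
        return node
    values = [minimax(child, not maximizing) for child in node]
    return max(values) if maximizing else min(values)


def best_move(tree):
    """Index of the root move whose worst-case outcome is best."""
    scores = [minimax(child, maximizing=False) for child in tree]
    return max(range(len(scores)), key=scores.__getitem__)


# Hypothetical two-ply tree: three candidate moves, each with three replies.
tree = [[3, 12, 8], [2, 4, 6], [14, 5, 2]]
print(minimax(tree, maximizing=True))  # 3
print(best_move(tree))                 # 0
```

Every reply to every move is examined, which is the “brute force” cost noted above. A program in the spirit of the second strategy would prune the list of children to a few promising candidates before recursing.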

The first workshop that established Artificial Intelligence as a research discipline is widely believed to have been organized by John McCarthy at Dartmouth College in 1956 [18]. This was also when the term Artificial Intelligence was officially coined [19]. Although most other participants had come up with ideas and programs on various applications, Allen Newell and Herbert Simon had already developed a reasoning program called ‘Logic Theorist’ [14]. The program was able to solve multiple problems from Principia Mathematica, even coming up with a shorter proof for one of them.

The success of Logic Theorist was followed by many more such programs, including the General Problem Solver [20], which imitated human problem-solving protocols, and the Geometry Theorem Prover [20], which proved theorems using explicitly represented axioms. Later, in 1958, John McCarthy defined a high-level language called ‘Lisp’ [20], which went on to be used prominently for Artificial Intelligence programming.

3 Rise of Expert Systems and Increase in Funding for Artificial Intelligence

After the initial efforts came an age in which systems were developed that found answers based on the knowledge they had about the problem rather than relying on complex search techniques [21, 22]. DENDRAL [23], the first knowledge-intensive system, was developed [24] in 1965 at Stanford University by Edward Feigenbaum and Joshua Lederberg to predict the molecular structure of compounds such as C6H13NO2 from data provided by a mass spectrometer.

DENDRAL emphasized the power of specialized knowledge over generalized problem-solving methods [25]. The uniqueness of this system lay in the separation of the rules and reasoning components of knowledge. The two components of the system, namely the knowledge base and the inference engine, work in conjunction, with the inference engine interpreting and evaluating the facts given in the knowledge base [26]. Such systems came to be known as ‘knowledge-based systems’ or ‘Expert systems’ [21].

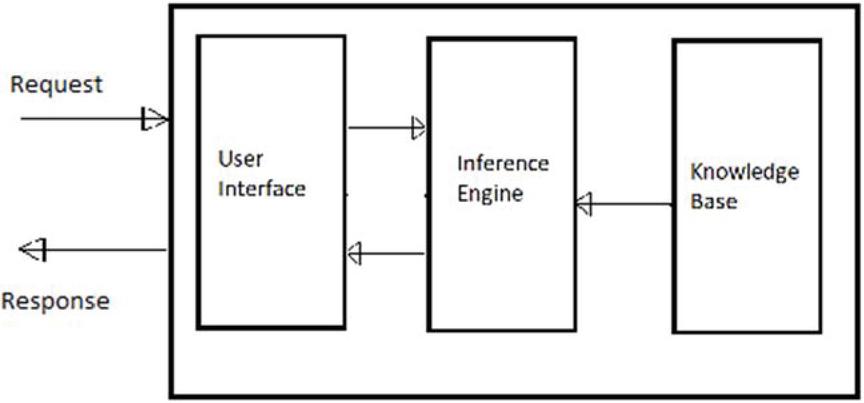

Figure 1 Block diagram of an Expert System.

Figure 1 shows a block diagram of the elements inside an Expert system. An Expert system comprises a user interface, an inference engine, and a knowledge base. The knowledge base contains knowledge related to a particular field along with rules for solving problems in that domain. The user interface lets users pose the questions to which they need answers. Based on the request (question), the inference engine extracts knowledge and rules from the pre-existing knowledge base, reasons over them to infer new knowledge, and provides the relevant answers to the user (response) [27]. Expert systems’ tasks include [26] classification, diagnosis, monitoring, designing, scheduling, and planning for the job at hand. These systems are built for one specific application and thus have a narrow area of expertise.
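The interplay of knowledge base and inference engine can be sketched in a few lines. The example below is a minimal forward-chaining engine under stated assumptions: the rule format (a tuple of premises plus a conclusion), the toy diagnostic rules, and the names `forward_chain` and `ask` are hypothetical and are not taken from any of the systems cited here.

```python
def forward_chain(facts, rules):
    """Inference engine: apply if-then rules until nothing new can be inferred.

    `rules` is a list of (premises, conclusion) pairs; a rule fires when
    all of its premises are already known.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and set(premises) <= known:
                known.add(conclusion)
                changed = True
    return known


# Knowledge base: hypothetical rules for a toy diagnostic domain.
RULES = [
    (("fever", "cough"), "respiratory_infection"),
    (("respiratory_infection", "chest_pain"), "suspect_pneumonia"),
    (("suspect_pneumonia",), "recommend_chest_xray"),
]


def ask(observations):
    """Stand-in for the user interface: return only newly inferred knowledge."""
    return forward_chain(observations, RULES) - set(observations)


print(sorted(ask({"fever", "cough", "chest_pain"})))
```

Keeping `RULES` separate from `forward_chain` mirrors the separation of knowledge and reasoning: the same engine can serve a different domain if the rule list is swapped.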

After DENDRAL, other expert systems were developed in the medical field, among them MYCIN and INTERNIST-I. MYCIN [28] was developed to identify the bacteria causing an infection and to select an antimicrobial therapy, along with its dosage, based on the patient’s body weight. MYCIN primarily used backward chaining, a goal-directed control strategy, in its inference engine. INTERNIST-I [29] was a program capable of making diagnoses in internal medicine. In other domains, expert systems like Macsyma [22, 30], R1 (XCON) [31], PROSPECTOR [32] and LUNAR [33] were developed for computer hardware configuration, geology, etc. Macsyma [22, 30] is an expert system that can solve 600 kinds of mathematical problems, including calculus, matrix operations, and solving equations, using heuristics. Macsyma was later developed into a commercial product [34] in 1999 and is distributed by Symbolics for Microsoft Windows versions 95, 98, 2000, and XP. LUNAR stood out [23] for being the first natural language program that could be used by people other than the system's author to get work done. Since then, many programs have been developed which use natural language as an interface.
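The backward chaining (goal-directed) control strategy mentioned above can be sketched as follows. This is a minimal illustration and not MYCIN's rule base or algorithm: it omits certainty factors and user questioning, and the rule format, rule contents, and the name `backward_chain` are hypothetical.

```python
def backward_chain(goal, facts, rules, _seen=frozenset()):
    """Return True if `goal` can be established from `facts` using `rules`.

    The search starts from the goal and works backwards: a goal holds if it
    is a known fact, or if some rule concludes it and all of that rule's
    premises can themselves be established.
    """
    if goal in facts:
        return True
    if goal in _seen:  # guard against circular rules
        return False
    seen = _seen | {goal}
    for premises, conclusion in rules:
        if conclusion == goal and all(
            backward_chain(p, facts, rules, seen) for p in premises
        ):
            return True
    return False


# Hypothetical rules: (premises, conclusion).
RULES = [
    (("stain_negative", "shape_rod"), "class_a"),
    (("class_a", "grows_without_oxygen"), "organism_x"),
]

observed = {"stain_negative", "shape_rod", "grows_without_oxygen"}
print(backward_chain("organism_x", observed, RULES))          # True
print(backward_chain("organism_x", {"shape_rod"}, RULES))     # False
```

Unlike a data-driven search, only rules that bear on the current goal are ever examined, which suits domains where the set of hypotheses is small compared with the set of possible observations.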

Broadly, Hayes-Roth has classified [35] expert systems into ten categories, namely, interpretation, prediction, diagnosis, design, planning, monitoring, debugging, repair, instruction, and control. An expert system may be classified into one or more of these categories.

Following the success of expert systems, Artificial Intelligence based systems started becoming ubiquitous in industry. R1 was the first successful commercial expert system to be implemented [36]. It was installed at Digital Equipment Corporation (DEC) in 1982. The program helped in configuring orders for new computer systems. Gradually, all major US corporations started incorporating Artificial Intelligence. By the end of the 1980s, it was estimated [37] that over half of the Fortune 500 companies were involved in either developing or maintaining expert systems.

In 1981, Japan announced the Fifth Generation Computer Systems (FGCS) project [36, 37]. This was a ten-year project to explore avenues for building intelligent computers that would run Prolog in much the same way that traditional computers execute machine code. The aim was a system able to make millions of inferences per second. The project, though ambitious, raised fears in other countries of Japanese domination in the area of computers. Spurred by this, a research consortium named the Microelectronics and Computer Technology Corporation (MCC) [36] was launched in the United States to counter the developments achieved by the FGCS project. In Britain too, the Alvey report [36] was released. This report restored the funding for Artificial Intelligence that had earlier been cut as a result of the Lighthill report. By the end of 1988, the Artificial Intelligence industry had grown from a few million dollars to 2 billion dollars in sales [36].

4 Big Data and Robotics

Big Data is the backend data generated by users' actions, which can be extracted and analyzed to gain insights that may benefit businesses [38]. From the year 2000, such large volumes of data started being collected from several platforms [37], chief among which were social media and genetic sequencing [39]. The data collected from social media platforms helps in understanding users’ latent interests, political proclivities, etc. Based on the analysis of these parameters, the platforms can present advertisements matching the user's interests, suggest social groups of people with similar interests, and offer an overall more personalized experience. With the current availability of large amounts of genetic data, the cost of predicting the illnesses a person is likely to face in their lifetime and suggesting the best possible personalized medicine based on gene analysis has fallen considerably [39].

Humanoid robots have long captivated science fiction enthusiasts. The film ‘Metropolis’, released in 1927, depicted one of the first robots in cinema. Thus, the progression of Artificial Intelligence into robotics was a natural one. By 1961, the first industrial robot, ‘Unimate’, had started working on the General Motors assembly line in New Jersey [40]. Japan has been a flag-bearer in research related to robotics. Waseda University in Japan started the WABOT project [41, 42] in 1967. By 1972, the prototype of WABOT-I had started walking. In 1994, a fully robotic radiosurgery device called Cyberknife [43] was developed at Stanford University. Today, humanoid robots are used in various sectors to complete repetitive tasks and to work in environments that may be hazardous for humans. Robonaut2 (R2) [44, 45] is a dexterous humanoid robot employed by NASA aboard the International Space Station, which can work alongside humans and help them in their quest for space exploration. Pepper [46] is the first social humanoid robot, developed by SoftBank (Aldebaran) Robotics with the ability to recognize faces and emotions. Humanoid robots like Sophia [47], developed by Hanson Robotics, have gained international recognition for their humanlike ability to display a variety of complex and emotional expressions.

Chatbots, programs created to communicate with humans, are another area of invention under the umbrella of Robotics. Starting with chatbots [48] like Eliza, Parry, Jabberwacky, and Dr. Sbaitso, which had preliminary abilities, and moving on to more advanced chatbots like A.L.I.C.E, Cleverbot, and Smarterchild, we have progressed to chatbots like IBM Watson. IBM Watson was primarily developed to compete in Jeopardy. Personal assistants like Siri, Google Now, Alexa, Cortana, etc. have now become a part of our daily life. Chatbots are also being developed for specialized applications, such as working as a therapist or providing tech support.

5 Current Developments in Artificial Intelligence

Today, Artificial Intelligence encompasses various technologies. Machine learning and deep learning are two major areas in Artificial Intelligence that have garnered the spotlight in recent years [49].

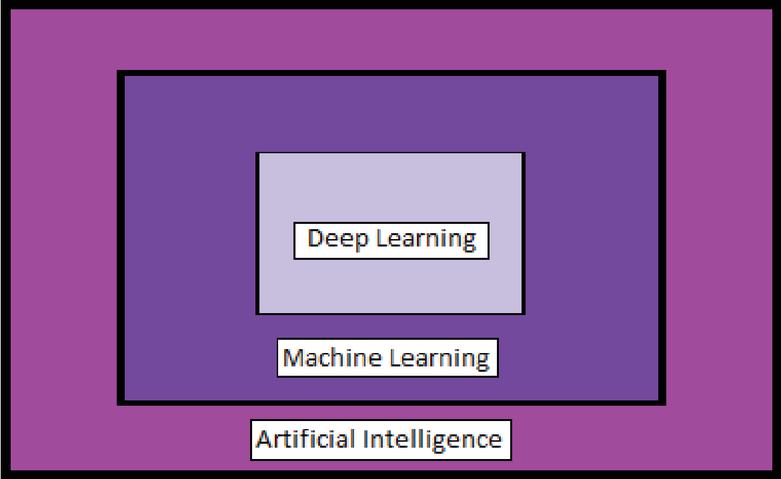

Figure 2 Relationship between Artificial Intelligence, machine learning, and deep learning.

The relationship between the three fields is shown in Figure 2. Artificial Intelligence encompasses the field of Machine Learning, and Deep Learning is in turn a subset of Machine Learning [50]. Machine Learning [49] is a way of achieving Artificial Intelligence. The phrase was coined by Arthur Samuel in 1959. He defined it as the ability to learn without being explicitly programmed. Machine Learning enables Artificial Intelligence without hardwiring multiple complex rules and decision trees in millions of lines of code. Instead, an algorithm is implemented that trains a machine to learn by itself so that it can adapt to changes without human intervention. This training is accomplished by feeding the algorithm large amounts of relevant data, allowing it to adjust itself and improve.

Deep Learning [49] is a technique for implementing Machine Learning. A Deep Learning algorithm works in a way loosely analogous to an actual brain: it uses Artificial Neural Networks (ANNs) made up of multiple layers, each containing multiple interconnected neurons. Each layer of an ANN picks out a feature to learn, and together the layers are able to learn the whole of the information.
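The idea of learning from data rather than from hand-written rules can be illustrated with a very small example. The sketch below uses the classic perceptron update rule, chosen here purely for illustration and not discussed in the source; the function names, the integer learning rate, and the training data (the logical AND function) are assumptions.

```python
def predict(weights, bias, x):
    """Output 1 if the weighted sum of the inputs exceeds zero, else 0."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1):
    """Adjust weights from labelled examples; no rule for AND is coded by hand."""
    weights = [0] * len(samples[0][0])
    bias = 0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            # Nudge the parameters only when the prediction was wrong.
            weights = [w + learning_rate * error * xi for w, xi in zip(weights, x)]
            bias += learning_rate * error
    return weights, bias


# Training data: input pairs labelled with the desired output (logical AND).
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
print([predict(weights, bias, x) for x, _ in AND_SAMPLES])  # [0, 0, 0, 1]
```

The program is never told what AND means; it only sees examples and adjusts itself after each mistake, which is the sense of "learning without being explicitly programmed" in this toy setting.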

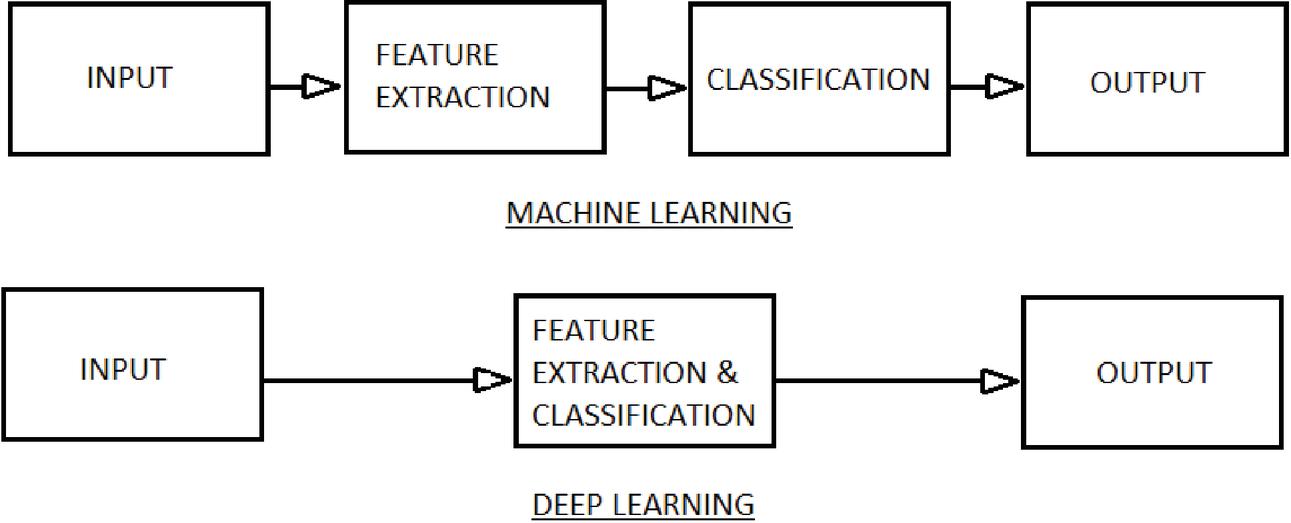

Figure 3 Difference between machine learning and deep learning.

As shown in Figure 3, the Machine Learning technique requires a human to work on identifying important features from the given data and classifying them [51]. This is represented by the ‘Feature Extraction’ and ‘Classification’ blocks in the Machine Learning block diagram. In contrast, Deep Learning employs a neural network to select the most critical features amongst the given data input. The neural network is represented by the ‘Feature Extraction and Classification’ block in the Deep Learning diagram. With the help of neural networks, Deep Learning may sometimes even surpass human capabilities of learning from the given data.

The various technologies related to Artificial Intelligence can be broadly classified into three main areas: Sense, Comprehend, and Act [49]. Sense refers to the perception of the surroundings. This includes the acquisition and processing of sounds, images, speech, etc. Comprehend describes the analysis of the collected information. Lastly, Act represents the physical manifestation of computer language instructions. Table 1 lists these three main areas along with examples of corresponding Artificial Intelligence technologies.

Table 1 Various Artificial Intelligence technologies mimicking human capabilities

| Human Capabilities | Examples of Corresponding AI Technologies |
| --- | --- |
| Sense | Computer Vision, Audio Processing |
| Comprehend | Natural Language Processing, Knowledge Representation |
| Act | Machine Learning, Expert Systems |

As shown in Table 1, the human capabilities of sensing, comprehension, and action can be imitated by various Artificial Intelligence technologies [52]. Computer Vision and Audio processing perceive the activities being carried out around them. This information can later be used for applications like Speech Recognition and Object Recognition. Similarly, Natural Language Processing can be used for enabling translations.

These solutions are implemented in various domains, the prominent ones of which are given below:

Clinical Decision Support Systems (CDSS) are Artificial Intelligence based tools used in the management of medical data for helping in medical prognosis in one specific area or providing patient-specific recommendations [53]. A study [54] was conducted in 2018 for a systematic classification of the clinical impact of CDSS in in-patient care. The study showed that CDSS offers better results for blood glucose management, blood transfusion management, physiologic deterioration prevention, pressure ulcer prevention, acute kidney injury prevention, and venous thromboembolism prophylaxis. The study also reported a 7% decrease in mortality, 23% reduction in life-threatening events and 40% reduction in non-life-threatening events due to CDSS. Overall, CDSSs were shown [55] to significantly improve clinical practice in 68% of the trials.

Computer Aided Detection (CAD) is an area where Artificial Intelligence and Computer Vision are deployed to aid radiologists in detecting potential abnormalities on diagnostic radiological examinations [56]. Though CAD was earlier considered to be a reliable tool mainly for breast tumor detection, studies [57] have now shown that CAD does not have a significant added advantage in this area.

In the field of genetics, Functional Genomic Analysis has seen the most advancements using techniques of Deep learning. Due to the availability of large amounts of data related to DNA, RNA, methylation, chromatin accessibility, histone modifications, chromosome interactions, etc., we are now able to build [58] large enough training sets to develop accurate prediction models for studying gene expression, genomic regulation or variant interpretation.

Infotainment Human Machine Interface and Advanced Driver Assistance Systems (ADAS) are the two areas in which Artificial Intelligence is being implemented in the automobile sector [59]. Nowadays, most cars are equipped with Speech Recognition. Distractions like phone calls, messages, changing playlists, etc. can divert the driver’s attention, which may lead to an accident. Companies like Apple, Google, and Nuance have therefore come up with voice activation systems which enable drivers to perform activities such as navigating to a destination, viewing the latest messages and emails, controlling the music system, and making phone calls, all by giving verbal commands [60]. On the other hand, the two main purposes [61] currently served by ADAS algorithms are to provide output to the driver, primarily in the form of alerts, and to offer precision in vehicle control. This includes systems that help the vehicle in navigation (braking, steering, or other safety-related commands). Another important use of Artificial Intelligence in automobiles is in diagnosis and repair [62]. An Artificial Intelligence based diagnostic system receives data from the electronic control system. The system processes this data logically under program control in accordance with a set of internally stored rules. The result of the computer-aided diagnosis is an assessment of the problem and recommended repair procedures. Such systems can significantly improve the efficiency of the diagnostic process and can thereby reduce maintenance time and costs.

The finance industry has been deeply impacted by developments in Artificial Intelligence. Fraud detection [63] is accomplished using Machine Learning algorithms. Fraudulent transactions that might otherwise go unnoticed under human vigilance can now be detected and stopped in real time. Potential security threats may also be detected and flagged for further investigation using Artificial Intelligence. MasterCard has employed one such technology, named Decision Intelligence [64], which determines a baseline for each user based on their transaction history and sends an alert whenever it notices a transaction that differs significantly from the baseline.
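The baseline idea described above can be sketched with a simple statistical check. This is a hypothetical illustration and does not represent how Decision Intelligence or any production fraud system works: the z-score rule, the threshold of 3, the function names, and the sample amounts are all assumptions.

```python
from statistics import mean, stdev


def build_baseline(history):
    """Summarize a user's past transaction amounts (needs at least two values)."""
    return mean(history), stdev(history)


def is_suspicious(amount, baseline, z_threshold=3.0):
    """Flag an amount lying more than `z_threshold` standard deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # All past amounts were identical, so any different amount stands out.
        return amount != mu
    return abs(amount - mu) / sigma > z_threshold


# Hypothetical transaction history for one user.
history = [42.0, 38.5, 51.0, 47.25, 40.0, 44.75]
baseline = build_baseline(history)

print(is_suspicious(45.0, baseline))   # False
print(is_suspicious(900.0, baseline))  # True
```

A real system would typically consider many more signals than the amount, such as merchant, location, and time of day, and would learn its decision rule from labelled data rather than use a fixed threshold.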

Assistance in personal finance management and financial advising is another area where developments in Artificial Intelligence are noteworthy. Applications like Digit [65], Walnut [66], Wallet.AI [67], etc. have become ubiquitous as money managers and spending trackers. Banks and other financial institutions too are investing in Artificial Intelligence based advisors which can help their customers make more informed decisions in financial matters. Amelia [68], a chatbot trained in 40 insurance-related topics, can assist call center representatives of Allstate Insurance in customer conversations. Similarly, Erica [69], a chatbot created for customers of Bank of America, is available on their mobile app and works as a financial assistant.

Algorithmic trading [63] has also flourished because of Artificial Intelligence. Algorithmic trading involves encoding the rules of trading in an Artificial Intelligence system that can apply these rules to make extremely fast trading decisions. High frequency trading [70] is a subset of algorithmic trading. It involves rapid computer-driven responses to changing market conditions, leading to a higher number of trades with a lower average gain per trade but a high turnover of capital. In 2008, a majority of the corporations implementing high frequency trading were shown to make profits, whereas 70% of the companies that practiced low frequency trading incurred losses [70].

Microtargeting [71, 72] refers to segmentation based on an algorithm for determining the demographic and attitudinal traits to distinguish individuals for each targeted segment. With the advent of Artificial Intelligence, a person’s social media activities can be closely monitored via data mining to gauge their interests. Such data can be used for effective advertising of products to a niche customer base. Microtargeting is also sometimes used to garner public support during election campaigns. The practice of microtargeting, though effective, has raised questions regarding violation of privacy of user information [73].

Artificial Intelligence is widely used in the development of battlefield and weapons control systems [74]. It helps in areas such as target acquisition, the targeting of moving targets, coordination amongst vehicles, and changing the flight path of distributed Joint Fires between networked combat vehicles, tanks, ships, etc. where there is a risk of accidental collision. The main applications of Artificial Intelligence and Machine Learning with respect to defense are to enhance Command and Control (C2), Communications, Sensors, Integration, and Interoperability.

Self-repairing Flight Control Systems [75] are Artificial Intelligence based systems implemented in aircraft. These systems can detect damage to an aircraft and let it continue flying using the components that are still intact until it reaches a safe landing zone. The Integrated Vehicle Health Management (IVHM) system [76] has become an essential part of aircraft systems. IVHMs use sensors along with sensor data processing technologies to improve vehicle safety, reduce maintenance costs, and predict potential faults and failures.

The Food and Agriculture Organization of the United Nations (FAO) predicts [77] a 50% increase in agricultural needs from 2012 to 2050 to meet the demands of the world's growing population. In such a situation, it is imperative to come up with initiatives that will help increase the per-hectare productivity of farmland. Artificial Intelligence primarily helps [78] in creating automated robots that handle agricultural tasks, developing computer vision which monitors soil health, developing systems which predict the impact of various environmental phenomena on crop yields, etc. Artificial Intelligence also helps in [79, 80] predicting the time by which a particular crop would be in its prime for harvest, identifying plant diseases, identifying the optimal mix of agronomical products, detecting pest infestations, automating farm equipment to increase the farm yield, using the least possible water and fertilizer without compromising on the quality of the crop, etc. Precision farming [81] is an upcoming area in the field of agricultural Artificial Intelligence. Precision farming aims for profitability, efficiency, and sustainability while employing state-of-the-art technologies like geo-mapping, sensors and remote sensing, high-precision positioning systems, etc. Precision farming thus replaces the repetitive and labor-intensive aspects of farming and also provides guidance related to crop rotation, optimum planting, nutrient management, water management, etc.

Although Artificial Intelligence has proven to be a boon in numerous instances, some of which have been cited above, it can also be used for harmful purposes. One such deplorable application of Artificial Intelligence is Deepfakes [82]. Deepfake refers to the manipulation of pictures, video, or audio using Deep Learning. Several Artificial Intelligence researchers are working to combat the growing threat of Deepfakes by researching programs that can detect media tampered with in this way [83, 84]. Recognizing the consequences of such a technology, a bill [85] has been introduced in the U.S. to place restrictions on Deepfake technology to combat the spread of misinformation.

6 Future Possibilities in Artificial Intelligence

There is a lot of scope for developments related to Artificial Intelligence in the field of medicine. Artificial Intelligence can expand in a number of healthcare related fields [86] like fitness tracking, drug discovery and analysis, diagnosis and tracking of mental health disorders, personalized nutrition planning, etc.

As stated above, Computer Aided Detection (CAD) systems have not shown a significant added advantage in detecting breast tumors. However, the current state of CAD systems can be improved by employing Faster Region-based Convolutional Neural Networks (Faster R-CNN), one of the most successful object detection frameworks, to develop systems that can detect and classify benign and malignant lesions without human intervention and with much higher levels of accuracy [87]. CAD can also be used in the future as a part of digital pathology [88].

In the field of genetics, we can expect the identification of novel gene functions and regulation regions [89] using Machine Learning technologies which can analyze large sets of genomic data.

In the field of automobiles, self-driving cars [90] can be expected to become ubiquitous in the future. For that to happen, developments need to be made in some of the key technologies, namely, car navigation system, path planning, environment perception, and car control.

In the field of aerospace, systems are being developed that can imitate human pilots in following Flight Emergency Procedures in situations such as engine failure or fire, rejected take-off, and emergency landing, using the Learning by Imitation approach [91].

Artificial Intelligence can be employed in the field of education [92] with the implementation of Artificial Intelligence graders, deployment of Artificial Intelligence tutors for students with social anxiety, usage of Virtual Reality technology as a part of immersive learning, etc.

Though Artificial Intelligence is being implemented in most fields related to agriculture, there is still a lot to be explored in its application to horticulture and greenhouses [93]. Efforts are being made to develop autonomous greenhouses in which the control setpoints for standard actuators are determined remotely using Artificial Intelligence algorithms. Initial experiments suggest that Artificial Intelligence may outperform humans in controlling a greenhouse.

Artificial Intelligence has the potential to fuel economic growth in the coming years.

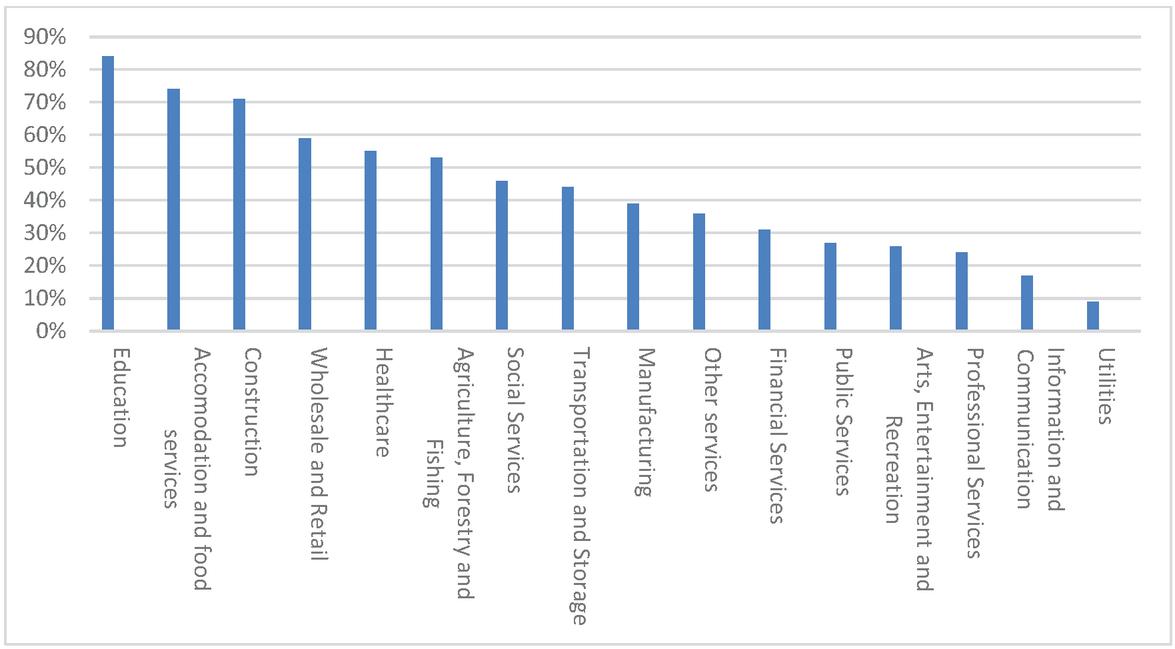

Figure 4 Projected increase in industry profits with inclusion of Artificial Intelligence by 2035.

As shown in Figure 4, a study [94] conducted by Accenture shows that stable inclusion of Artificial Intelligence across various domains will lead to substantially larger economic profits as compared to expected baseline profit levels, by the year 2035.

Artificial Intelligence has been successful in rivaling and sometimes surpassing human expertise in various domains [95], from playing games like Chess, Poker, and Jeopardy to predicting schizophrenia, detecting skin cancer, facial recognition, and voice recognition. Ray Kurzweil, an American inventor and futurist, has predicted that humans would be able to achieve Artificial Intelligence systems with human capabilities by 2029 [96].

The Gartner Hype Cycle [97] gives an insight into how a particular technology will evolve, thus helping businesses determine if and when the technology should be used to meet their business goals, based on their risk-taking capacities. The Gartner Hype Cycle for Artificial Intelligence helps us understand the current stage of different technologies under Artificial Intelligence and the time they will take to reach a stable state for widespread commercial usage. The Gartner Hype Cycle postulates that each technology goes through the following phases after its introduction:

Innovation Trigger: Technology experiences wide publicity and heightened expectations because of its initial breakthrough. Its commercial viability is yet to be explored.

Peak of Inflated Expectations: Expectations of the technology rise to their peak. Some applications of the technology may turn out successful while others may fail.

Trough of Disillusionment: Funding for the technology wanes as the technology fails to deliver on its hyped expectations.

Slope of Enlightenment: Advantages and limitations of the technology are realized and it becomes clearer how the technology will benefit certain businesses.

Plateau of Productivity: Technology reaches a point where it can be successfully adopted for mainstream usage.

The Gartner Hype Cycle for Artificial Intelligence, 2019 [98] predicts that areas like Speech Recognition, Robotic Process Automation Software, and Graphics Processing Unit (GPU) accelerators will reach the Plateau of Productivity in less than 2 years. Amongst the three, Robotic Process Automation Software currently lies in the Trough of Disillusionment, where early explorations have met with some negative results, leading to a reduction in funding. On the other hand, areas like Artificial General Intelligence, Quantum Computing, and Autonomous Vehicles are expected to take more than 10 years to reach the plateau.

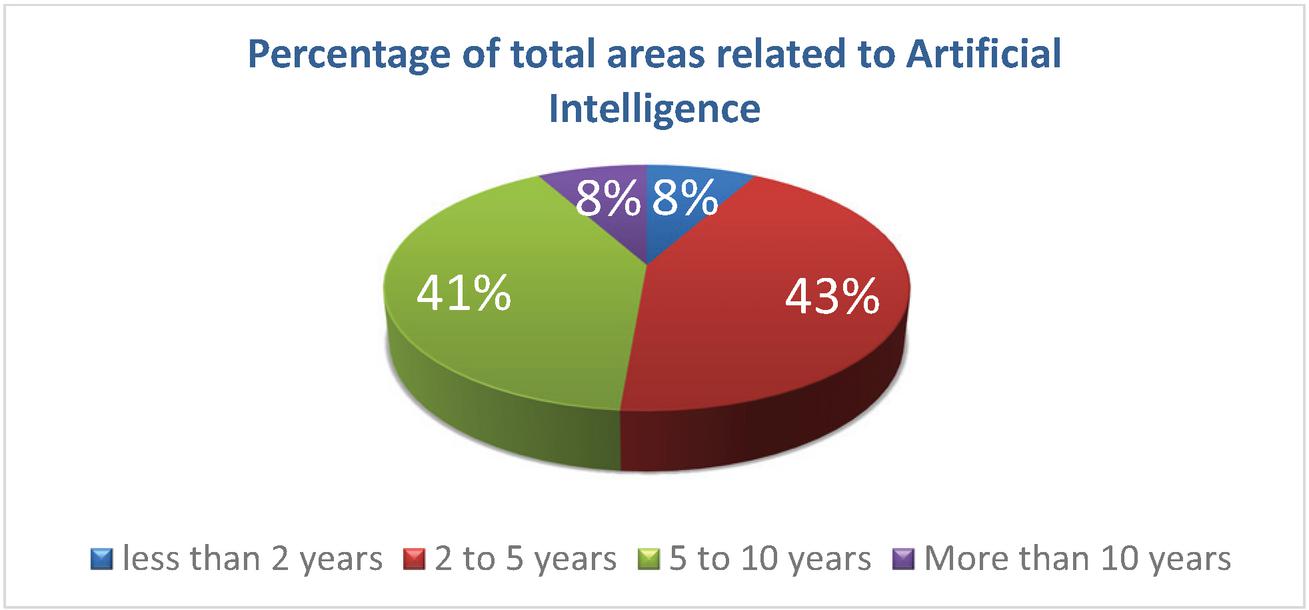

Figure 5 Predicted periods for reaching the plateau pertaining to the percentage of total areas related to Artificial Intelligence.

As shown in the pie chart in Figure 5, it is predicted that 43.24% of the fields related to Artificial Intelligence will require the next 2 to 5 years to reach the plateau, while another 40.54% will require the next 5 to 10 years. Also, none of the areas related to Artificial Intelligence have been predicted to become obsolete before reaching the plateau.

7 Challenges with Artificial Intelligence

One of the primary challenges in Artificial Intelligence has been to create robust models that give correct output for a variety of inputs [99]. While we have come a long way in being able to identify objects using deep learning, it has also been observed that it is just as easy to trick a deep learning model into identifying objects incorrectly. There have been multiple instances where researchers were able to trick an Artificial Intelligence model into giving incorrect results using deceptive inputs. Researchers have shown that a deep learning algorithm can be fooled into misidentifying an object just by changing one pixel in the image [100]. In another instance, researchers at McAfee were able to trick Tesla cars by sticking a strip of black tape on a speed limit sign, which tricked the vehicle’s autonomous driving model into speeding the cars up by 50 mph [101]. Such techniques for fooling a machine learning algorithm by supplying deceptive inputs are called adversarial machine learning, and such deceptive inputs are called adversarial examples.
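The idea of an adversarial example can be illustrated with a minimal sketch. It is not the one-pixel attack of [100] nor the experiment of [101]; it assumes a hypothetical four-feature linear classifier with made-up weights and applies a small sign-of-gradient perturbation to every feature, which is enough to flip the predicted class.

```python
def score(weights, bias, x):
    """Linear decision score: a positive value means class 1, otherwise class 0."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias


def predict(weights, bias, x):
    return 1 if score(weights, bias, x) > 0 else 0


def sign(v):
    return (v > 0) - (v < 0)


def adversarial_example(weights, x, true_label, epsilon):
    """Shift every feature by at most epsilon in the direction that hurts the true class.

    For a linear model the gradient of the score with respect to the input
    is simply the weight vector, so only the sign of each weight is needed.
    """
    direction = -1 if true_label == 1 else 1
    return [xi + direction * epsilon * sign(w) for w, xi in zip(weights, x)]


# Hypothetical model and input, chosen only for illustration.
weights = [0.9, -0.6, 0.4, 0.7]
bias = -0.2
x = [0.5, 0.4, 0.3, 0.2]

original_class = predict(weights, bias, x)
x_adv = adversarial_example(weights, x, true_label=original_class, epsilon=0.15)
adversarial_class = predict(weights, bias, x_adv)

print(original_class, adversarial_class)
```

In this toy setting no feature moves by more than 0.15, yet the predicted class changes. Deep networks are not linear, but the same principle of many small, coordinated input changes is what adversarial attacks exploit.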

Secondly, Artificial Intelligence models have problems in generalizing from previously learned examples [99]. For example, a baby can identify a giraffe even if it has seen a giraffe only once or twice, because of the human capability of correlating the salient features of a giraffe with those of other living organisms. Similarly, for Artificial Intelligence models, the ability to reuse the knowledge gained from previous rounds of training to train for a similar task is called transfer learning. But transfer learning has its own limitations: a system which has gained expertise in a certain task may end up losing its ability to work effectively on that task while learning a similar task through transfer learning.
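The loss of an earlier skill during further training can be sketched with a deliberately tiny model. The example below assumes a one-parameter linear model and two hypothetical tasks with conflicting targets; it is an illustration of the effect, not a reproduction of any experiment in [99].

```python
def mse(w, data):
    """Mean squared error of the model y = w * x on a list of (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def train(w, data, lr=0.05, steps=200):
    """Plain gradient descent on the mean squared error."""
    for _ in range(steps):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


# Two hypothetical tasks that share inputs but need different weights.
task_a = [(x, 2.0 * x) for x in (1.0, 2.0, 3.0)]
task_b = [(x, -1.0 * x) for x in (1.0, 2.0, 3.0)]

w_a = train(0.0, task_a)              # learn task A from scratch
error_a_before = mse(w_a, task_a)

w_b = train(w_a, task_b)              # reuse the weight and continue on task B
error_a_after = mse(w_b, task_a)

print(error_a_before, error_a_after)
```

Because the single weight is overwritten while fitting task B, the error on task A rises sharply after the second round of training. Larger models have more capacity, but the same tension between old and new tasks remains.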

Another problem that plagues Artificial Intelligence models is that of Algorithmic Bias [102]. Supervised Machine Learning algorithms build prediction models based on the labeled data that they receive. When algorithms receive biased data, the resulting Artificial Intelligence systems make biased predictions. Such biases can prove to be especially harmful in social scenarios, as they amplify the racial and gender biases that are already prevalent in society. Combating such algorithmic bias can be very challenging because the data fed to the algorithm may not always have been scrutinized by experts to ensure that it is true and unbiased.
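How biased labels turn into amplified biased predictions can be shown with a small sketch. The groups, counts, and the majority-vote "model" below are hypothetical and chosen only to make the effect visible; real supervised learners are more complex, but they inherit label bias in a comparable way.

```python
from collections import defaultdict


def fit_majority_by_group(records):
    """Learn, for each group, the label that occurs most often in the training data."""
    counts = defaultdict(lambda: [0, 0])  # group -> [number of 0 labels, number of 1 labels]
    for group, label in records:
        counts[group][label] += 1
    return {group: (1 if c[1] > c[0] else 0) for group, c in counts.items()}


def positive_rate(records, group):
    """Share of positive labels for one group in the data."""
    labels = [label for g, label in records if g == group]
    return sum(labels) / len(labels)


# Hypothetical historical decisions: group A was approved 70% of the time, group B 40%.
training = [("A", 1)] * 70 + [("A", 0)] * 30 + [("B", 1)] * 40 + [("B", 0)] * 60

model = fit_majority_by_group(training)

data_gap = positive_rate(training, "A") - positive_rate(training, "B")
prediction_gap = model["A"] - model["B"]

print(model, data_gap, prediction_gap)
```

Here the gap between the two groups in the training labels is 30 percentage points, while the learned rule approves every member of group A and rejects every member of group B. The model has not only reproduced the bias in its data but widened it.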

8 Conclusions

Rapid developments in the field of Artificial Intelligence are widely discussed today. Artificial Intelligence is seen as a game-changing technology that would transform every aspect of human life. With that, we are often left wondering if this ever-evolving field would indeed live up to the unusually high expectations set for it. Some even wonder if Artificial Intelligence would metamorphose from an aide to human development into a threat to human existence.

Artificial Intelligence has indeed come a long way, from first being envisaged as a machine which could be indistinguishable from a human, to now being realized in various fields in our everyday life. Some of the developments related to Artificial Intelligence covered in this paper like market research and medical imaging are implemented using Machine Learning concepts of Supervised learning, Reinforcement Learning, and Unsupervised Learning.

Artificial Neural Networks, which are inspired by the working of biological neural networks in the brain, have helped machines in understanding patterns from given input data and predicting future data sets. Nowadays, Multilayer Artificial Neural Networks, also called Deep Neural Networks, are being implemented to achieve better accuracy. Applications like Functional Genome Analysis and detection of cancers that are covered in this paper are implemented using such Deep Neural Networks.

Natural language processing has been made possible through Artificial Intelligence which has opened up newer arenas for a more cohesive interaction between humans and machines, like in the field of robotics.

With the onset of such great developments, Artificial Intelligence has also brought along with it a newer set of problems. Artificial Intelligence applications like Deepfakes and Artificial Intelligence bots operating fake accounts are responsible for spreading misinformation, resulting in chaos. Paradoxically enough, solutions for such problems lie in Artificial Intelligence itself.

But, despite such exceptional development, Artificial Intelligence is still a long way from achieving what it now promises. Models that analyze the present trends in technology and predict future technology trends prove to be useful to gain insight. This paper relies on the Gartner Hype Cycle, which displays the relative positions of different technologies in a domain, with respect to their current stages of development and the anticipated time for them to reach stability. As per the Gartner Hype Cycle for Artificial Intelligence, 2019, currently only 5.4% of the technologies related to Artificial Intelligence have reached the Plateau of Productivity, i.e., can be used commercially.

Thus, although Artificial Intelligence seems like a superpower which we may be able to conquer in the near future, in reality, newer technologies related to Artificial Intelligence will have to go through extreme phases of heightened expectations and severe despondency before the technologies become stable enough for commercial use.

References

[1] Beavers A. (2013). Alan Turing: Mathematical Mechanist. In Alan Turing: His Work and Impact. Cooper B. S., van Leeuwen J. (eds.). Waltham: Elsevier. pp. 481. ISBN 978-0-12-386980-7.

[2] Turing A.M. (1937). On Computable Numbers, with an Application to the Entscheidungsproblem. In Proceedings of the London Mathematical Society. s2-42(1): pp. 230. doi: 10.1112/plms/s2-42.1.230.

[3] Minsky 1967:107 “In his 1936 paper, A. M. Turing defined the class of abstract machines that now bear his name. A Turing machine is a finite-state machine associated with a special kind of environment – its tape – in which it can store (and later recover) sequences of symbols”, also Stone 1972:8 where the word “machine” is in quotation marks.

[4] French Robert (2000). The Turing Test: The first 50 years. In Trends in Cognitive Sciences. 4(3). pp. 115. doi: 10.1016/S1364-6613(00)01453-4

[5] Turing A.M. (1950). I.—Computing Machinery and Intelligence. In Mind. LIX(236). pp. 433.

[6] The Turing Test, 1950. turing.org.uk. The Alan Turing Internet Scrapbook.

[7] Russell S. J., Norvig P., et al. (1995). Artificial Intelligence: Introduction. In Artificial Intelligence: A Modern Approach. Prentice-Hall, Inc. pp. 5. ISBN 0-13-103805-2.

[8] Smith, G. W. (2015). Art and Artificial Intelligence. ArtEnt. Archived from the original on 25 June 2017.

[9] Feigenbaum E. A. (2003). Some challenges and grand challenges for computational intelligence. In Journal of the ACM. 50 (1). pp. 32. doi:10.1145/602382.602400.

[10] McCorduck P. (2004). The Following Quarter-Century of Artificial Intelligence. In Machines Who Think: A Personal Inquiry into the History and Prospects of Artificial Intelligence (2nd ed.), A. K. Peters, Ltd. pp. 503. ISBN 1-56881-205-1.

[11] Brand S. (1988). The Founding Image and the Connecting Idea. In The media lab: inventing the future at MIT. Penguin Books. pp. 152. ISBN 978-0-14-009701-6

[12] Available at https://aisb.org.uk/aisb-events/

[13] Available at https://web.archive.org/web/20170914152327/http://www.loebner.net/Prizef/loebner-prize.html

[14] Russell S. J., Norvig P., et al. (1995). Artificial Intelligence: The gestation of artificial intelligence (1943-1956). In Artificial Intelligence: A Modern Approach. Prentice-Hall, Inc. pp. 16. ISBN 0-13-103805-2.

[15] Shannon C. E. (1950). Programming a Computer for Playing Chess. In Philosophical Magazine. Series 7, 41(314). pp. 256

[16] Russell S. J., Norvig P., et al. (1995). Game Playing: Introduction: Games as search problems. In Artificial Intelligence: A Modern Approach. Prentice-Hall, Inc. pp. 144. ISBN 0-13-103805-2.

[17] Available at http://billwall.phpwebhosting.com/articles/computer_early_chess.htm

[18] Moor, J. (2006). The Dartmouth College Artificial Intelligence Conference: The Next Fifty Years. In AI Magazine. 27(4). pp. 87. doi:10.1609/aimag.v27i4.1911

[19] Solomonoff R.J. (1985). The time scale of artificial intelligence: Reflections on social effects. In Human Systems Management. 5(2). pp. 149. doi:10.3233/HSM-1985-5207

[20] Russell S. J., Norvig P., et al. (1995). Artificial Intelligence: Early enthusiasm, great expectations (1952–1969). In Artificial Intelligence: A Modern Approach. Prentice-Hall, Inc. pp. 17. ISBN 0-13-103805-2.

[21] Durkin J. (2002). History and Applications: Fall and rebirth of artificial intelligence (1970s). In Expert systems: the technology of knowledge management and decision making for the 21st century. Leondes C.T. (eds). Academic Press. pp. 10. ISBN 978-0-12-443880-4.

[22] Tan H. (2017). A brief history and technical review of the expert system research. In IOP Conference Series: Materials Science and Engineering. 242. doi:10.1088/1757-899X/242/1/012111

[23] Russell S. J., Norvig P., et al. (1995). Knowledge-based systems: The key to power? (1969-1979). In Artificial Intelligence: A Modern Approach. Prentice-Hall, Inc. pp. 22. ISBN 0-13-103805-2.

[24] Copeland B. J. (2019). DENDRAL. In Encyclopaedia Britannica, inc. Available at https://www.britannica.com/technology/DENDRAL

[25] Feigenbaum, E. A., Buchanan, B. G., Lederberg, J (1971). On generality and problem solving: A case study involving the DENDRAL program. In Machine Intelligence 6, B. Meltzer and D. Michie (eds.). New York: American Elsevier. pp. 165.

[26] Zwass V. (2016). Expert system. In Encyclopaedia Britannica, inc. Available at https://www.britannica.com/technology/expert-system

[27] Mirjankar N., Ghatnatti S. (2016). Prominence of Expert System and Case Study – DENDRAL. International Journal Of Advanced Networking and Applications. pp. 247. ISSN: 0975-0282

[28] Buchanan B. G., Shortliffe E. H. (eds.) (1984). Rule based Expert Systems: The MYCIN Experiments of the Stanford Heuristic Programming Project. Addison Wesley. pp. 4. ISBN 0-201-10172-6

[29] Miller R. A., Pople Jr H. E., Myers J. D. (1982). Internist-I, an experimental computer-based diagnostic consultant for general internal medicine. In New England Journal of Medicine. 307 (8). pp. 468. doi:10.1056/NEJM198208193070803.

[30] Petrick S. R. (1971). Proceedings of the Second Symposium on Symbolic and Algebraic Manipulation. pp. 23.

[31] McDermott J. (1980). R1: An Expert in the Computer Systems Domain. In Proceedings of the First AAAI Conference on Artificial Intelligence. pp. 269.

[32] Hart P.E., Duda R.O., Einaudi M.T. (1978). PROSPECTOR—A computer-based consultation system for mineral exploration. In Journal of the International Association for Mathematical Geology. 10. pp. 589. doi:10.1007/BF02461988

[33] Woods W. A. (1973). Progress in natural language understanding: an application to lunar geology. In Proceedings of the June 4–8, 1973, national computer conference and exposition (AFIPS ’73). Association for Computing Machinery. pp. 441. doi: 10.1145/1499586.1499695

[34] Available at http://www.symbolics-dks.com/Macsyma-1.htm

[35] Hayes-Roth B. F., Waterman D. A., Lenat D. (1983). In Building Expert Systems. Addison-Wesley. ISBN 978-0-201-10686-2.

[36] Russell S. J., Norvig P., et al. (1995). AI becomes an industry (1980-1988). In Artificial Intelligence: A Modern Approach. Prentice-Hall, Inc. pp. 24. ISBN 0-13-103805-2.

[37] Aggarwal, A. (2018). Resurgence of Artificial Intelligence During 1983–2010. Available at www.scryanalytics.com/articles

[38] Manyika, C. B. B. D. (2011) Big Data: The Next Frontier for Innovation, Competition, and Productivity. McKinsey Global Institute.

[39] Dietrich D., Hiller B. (2012). Big Data Overview. In Data Science & Big Data Analytics. EMC education services, Wiley publications. pp: 3.

[40] Press G. (2016). A Very Short History Of Artificial Intelligence (AI). Available at https://www.forbes.com/sites/gilpress/

[41] Cafolla D., Ceccarelli M. (2016). Experimental Inspiration and Rapid Prototyping of a Novel Humanoid Torso. In Robotics and Mechatronics: Proceedings of the 4th IFToMM International Symposium on Robotics and Mechatronic. Zeghloul S., Laribi M. E., Gazeau J. P. (eds.). pp. 66. ISBN 978-3-319-22368-1.

[42] Koganezawa K., Takanishi A., Sugano S. (1991). Development of Waseda Robot. Ichiro Kato Laboratory, Tokyo.

[43] Available at https://stanfordhealthcare.org/stanford-health-care-now/2014/cyberknife-technology-20th-anniversary.html

[44] Available at https://robonaut.jsc.nasa.gov/R2/pages/iss-mission.html

[45] Available at https://www.nasa.gov/mission_pages/station/main/robonaut.html

[46] Available at https://www.softbankrobotics.com/emea/en/pepper

[47] Available at https://robots.ieee.org/robots/sophia/

[48] Wizu (2018). A Visual History of Chatbots. Available at https://chatbotsmagazine.com

[49] Clelland C. (2017). The Difference between Artificial Intelligence, Machine Learning and Deep Learning. Available at https://medium.com/

[50] Copeland M. (2016). What’s the Difference Between Artificial Intelligence, Machine Learning and Deep Learning. Available at https://blogs.nvidia.com/

[51] Paradzhanyan (2020). Demystifying Deep Learning and Artificial Intelligence. Available at https://thenewstack.io/

[52] Purdy M., Daugherty P. (2016). Why Artificial Intelligence is the future of growth. Available at http://www.accenture.com/futureofAI

[53] Wasylewicz A. T. M., Scheepers-Hoeks A. M. J. W. (2018). Clinical Decision Support Systems. In Fundamentals of Clinical Data Science. Kubben P., Dumontier M., Dekker A. (eds.). Springer. ISBN 978-3-319-99712-4

[54] Varghese J., Kleine M., Gessner S., Sandmann S., Dugas M. (2018). Effects of computerized decision support system implementations on patient outcomes in inpatient care: a systematic review. In Journal of American Medical Informatics Association (JAMIA). 25(5). pp. 593. doi: 10.1093/jamia/ocx100.

[55] Kawamoto K., Houlihan C. A., Balas A., Lobach D. F. (2005). Improving clinical practice using clinical decision support systems: a systematic review of trials to identify features critical to success. In BMJ. 330. pp. 765. doi:10.1136/bmj.38398.500764.8F

[56] Castellino R. A. (2005). Computer Aided Detection (CAD): an overview. In Cancer Imaging. 5(1). pp: 17. doi:10.1102/1470-7330.2005.0018

[57] Taylor P., Potts H. W. (2008). Computer aids and human second reading as interventions in screening mammography: two systematic reviews to compare effects on cancer detection and recall rate. In European Journal of Cancer. 44 (6). pp. 798. doi:10.1016/j.ejca.2008.02.016.

[58] (2019) Deep learning for genomics. Nat Genet. 51, 1. doi:10.1038/s41588-018-0328-0

[59] Gadam S. (2018). Artificial Intelligence and Autonomous Vehicles. Available at https://medium.com

[60] Vries E. (2019). In-Car Speech Recognition: The Past, Present and Future. Available at https://www.globalme.net

[61] Available at https://www.autonomousvehicletech.com/articles/1950-artificial-intelligence-enables-smarter-adas

[62] Ribbens W. B. (1998). Diagnostics. In Understanding Automotive Electronics (4th Edition). Elsevier Science, Newnes Publication. pp. 354. ISBN 0-7506-7008-8.

[63] Buchanan B. G. (2019). Artificial Intelligence in Finance. The Alan Turing Institute. doi:10.5281/zenodo.2612537

[64] Decision Intelligence. Mastercard. Available at https://globalrisk.mastercard.com/online_resource

[65] https://digit.co/

[66] https://www.getwalnut.com/

[67] http://wallet.ai/

[68] Morgan B. (2018). Using AI for Customer Experience at Allstate. Available at https://www.forbes.com/sites/blakemorgan

[69] https://promo.bankofamerica.com/erica/

[70] Aldridge, I (2013). Introduction. In High-Frequency Trading: A Practical Guide to Algorithmic Strategies and Trading Systems, 2nd edition. Wiley. ISBN 978-1-118-34350-0.

[71] Barbu-Kleitsch O. (2014). Advertising, Microtargeting and Social Media. In Procedia - Social and Behavioral Sciences. 163. pp. 44. doi:10.1016/j.sbspro.2014.12.284

[72] Papakyriakopoulos O., Hegelich S., Shahrezaye M., Serrano J. C. M. (2018). Social media and microtargeting: Political data processing and the consequences for Germany. In Big Data & Society. doi:10.1177/2053951718811844

[73] Korolova A. (2010). Privacy Violations Using Microtargeted Ads: A Case Study. In Conference: ICDMW 2010, The 10th IEEE International Conference on Data Mining Workshops, Sydney, Australia. doi:10.1109/ICDMW.2010.137

[74] Slyusar V. (2019). Artificial intelligence as the basis of future control networks. doi:10.13140/RG.2.2.30247.50087

[75] Tomayko J. E. (2003). The Story of Self-Repairing Flight Control Systems. Gelzer C. (eds.). NASA Dryden.

[76] Schweikhard K. A., Richards W. L., et al. Flight Demonstration Of X-33 Vehicle Health Management System Components On The F/A-18 Systems Research Aircraft. Available at https://ntrs.nasa.gov/

[77] The future of food and agriculture – Alternative pathways to 2050 (Summary version) (2018). Rome. FAO. pp. 31. Licence: CC BY-NC-SA 3.0 IGO.

[78] Sennaar K. (2019). AI in Agriculture – Present Applications and Impact. Emerj. Available at https://emerj.com/ai-sector-overviews/ai-agriculture-present-applications-impact/

[79] The Future of AI in Agriculture. Intel. Available at https://www.intel.com/content/www/us/en/big-data/article/agriculture-harvests-big-data.html

[80] Dharmaraj V., Vijayanand C. (2018). Artificial Intelligence (AI) in Agriculture. In International Journal of Current Microbiology and Applied Sciences. 7(12). pp. 2122. doi: 10.20546/ijcmas.2018.712.241

[81] Artificial Intelligence in Agriculture. Mindtree. Available at https://www.mindtree.com/

[82] Kietzmann, J., Lee, L. W., McCarthy, I. P., Kietzmann, T. C. (2020). Deepfakes: Trick or treat?. Business Horizons. 63 (2). pp. 135. doi:10.1016/j.bushor.2019.11.006.

[83] Afchar D., Nozick V., Yamagishi J. and Echizen, I. (2018). MesoNet: a Compact Facial Video Forgery Detection Network. pp. 1. doi: 10.1109/WIFS.2018.8630761

[84] Koopman M., Rodriguez A. M. and Geradts Z. (2018). Detection of Deepfake Video Manipulation.

[85] Clarke Y. D. (2019). H.R.3230 - 116th Congress (2019-2020): Defending Each and Every Person from False Appearances by Keeping Exploitation Subject to Accountability Act of 2019. Available at www.congress.gov.

[86] From Drug R&D To Diagnostics: 90+ Artificial Intelligence Startups In Healthcare (2019). Available at https://www.cbinsights.com/research/

[87] Ribli D., Horváth A., Unger Z., Pollner P., Csabai I. (2018). Detecting and classifying lesions in mammograms with Deep Learning. In Sci Rep. 8, 4165. doi:10.1038/s41598-018-22437-z

[88] Van der Laak J. (2017). Computer-aided Diagnosis: The Tipping Point for Digital Pathology. In Digital Pathology Association.

[89] Agaro E. D. (2018). Artificial Intelligence used in genome analysis studies. The EuroBiotech Journal. 2(2). doi:10.2478/ebtj-2018-0012

[90] Zhao J., Liang B., Chen Q. (2018). The key technology toward the self-driving car. In International Journal of Intelligent Unmanned Systems. 6 (1). pp. 2. doi:10.1108/IJIUS-08-2017-0008.

[91] Baomar H., Bentley P. J. (2016). An Intelligent Autopilot System that learns flight emergency procedures by imitating human pilots. In 2016 IEEE Symposium Series on Computational Intelligence (SSCI). pp. 1. doi:10.1109/SSCI.2016.7849881. ISBN 978-1-5090-4240-1.

[92] Sears A. (2018). The Role Of Artificial Intelligence In The Classroom. eLearning Industry. Available at https://elearningindustry.com/

[93] Hemming S., Elings A., Righini I., et al. (2019). Remote Control of Greenhouse Vegetable Production with Artificial Intelligence—Greenhouse Climate, Irrigation, and Crop Production. In Sensors. 19(8). pp. 1807. doi:10.3390/s19081807

[94] Purdy M., Daugherty P. (2017). How AI boosts industry profits and innovation. Available at http://www.accenture.com/futureofAI

[95] Aggarwal A. (2018). Domains in Which Artificial Intelligence is Rivaling Humans. Available at www.scryanalytics.com/articles

[96] Kurzweil R. (2014). Don’t Fear Artificial Intelligence. Available at https://time.com

[97] Gartner Hype Cycle: Interpreting Technology Hype. Available at https://www.gartner.com/

[98] Goasduff L (2019). Top Trends on the Gartner Hype Cycle for Artificial Intelligence, 2019. Available at https://www.gartner.com/

[99] Heaven D. (2019). Why Deep Learning AIs are so easy to fool. In Nature. 574. pp. 163. doi: 10.1038/d41586-019-03013-5

[100] Jiawei S., Vargas D. V., Sakurai K. (2017). One Pixel Attack for Fooling Deep Neural Networks. In IEEE Transactions on Evolutionary Computation. pp. 99. doi: 10.1109/TEVC.2019.2890858

[101] Povolny S., Trivedi S. (2020). Model Hacking ADAS to Pave Safer Roads for Autonomous Vehicles. Available at https://www.mcafee.com//blogs/

[102] Sun W., Olfa N., Shafto P. (2018). Iterating Algorithmic Bias in the interactive Machine Learning Process of Information Filtering. In Scipress. pp. 110. doi:10.5220/0006938301100118

Biographies

Ramjee Prasad, Fellow IEEE, IET, IETE, and WWRF, is a Professor of Future Technologies for Business Ecosystem Innovation (FT4BI) in the Department of Business Development and Technology, Aarhus University, Herning, Denmark. He is the Founder President of the CTIF Global Capsule (CGC). He is also the Founder Chairman of the Global ICT Standardization Forum for India, established in 2009. He was honored by the University of Rome “Tor Vergata”, Italy as a Distinguished Professor of the Department of Clinical Sciences and Translational Medicine on March 15, 2016. He is an Honorary Professor of the University of Cape Town, South Africa, and the University of KwaZulu-Natal, South Africa. He received the Ridderkorset of Dannebrogordenen (Knight of the Dannebrog) in 2010 from the Danish Queen for the internationalization of top-class telecommunication research and education. He has received several international awards, such as the IEEE Communications Society Wireless Communications Technical Committee Recognition Award in 2003 for making contribution in the field of “Personal, Wireless and Mobile Systems and Networks”, Telenor’s Research Award in 2005 for impressive merits, both academic and organizational, within the field of wireless and personal communication, and the 2014 IEEE AESS Outstanding Organizational Leadership Award for “Organizational Leadership in developing and globalizing the CTIF (Center for TeleInFrastruktur) Research Network”. He has been the Project Coordinator of several EC projects, namely MAGNET, MAGNET Beyond, and eWALL. He has published more than 50 books and over 1000 journal and conference publications, holds more than 15 patents, and has supervised over 140 Ph.D. graduates and more than 250 Master's graduates. Several of his students are today worldwide telecommunication leaders themselves.

Purva Choudhary recently worked under Prof. Dr. Ramjee Prasad as a Guest Researcher at Aarhus University, Denmark. She is a consistently meritorious Electronics and Telecommunication Engineering graduate of Cummins College of Engineering for Women, University of Pune, India. She obtained an Indian patent during her bachelor’s degree. She worked at Accenture Solutions Private Limited for one and a half years and is currently pursuing an M.S. in Computer Science at California State University, Northridge, California, US.

Besides academics, Ms. Purva Choudhary was also very active in extra-curricular activities. During her bachelor’s degree, she worked as Vice Chairman (Editor) for her college magazine, along with working extensively for the National Service Scheme (NSS). Currently, she is an active member of Toastmasters International.

Journal of Mobile Multimedia, Vol. 17, No. 1-3, pp. 427–454.

doi: 10.13052/jmm1550-4646.171322

© 2020 River Publishers