Computer Vision Mobile System for Education Using Augmented Reality Technology

Misiuk Tetiana, Yuriy Kondratenko, Ievgen Sidenko* and Galyna Kondratenko

Intelligent Information Systems Department, Petro Mohyla Black Sea National University, Mykolaiv, Ukraine

E-mail: tetiana.misiuk@gmail.com; yuriy.kondratenko@chmnu.edu.ua; ievgen.sidenko@chmnu.edu.ua; halyna.kondratenko@chmnu.edu.ua

*Corresponding Author

Received 03 April 2021; Accepted 05 April 2021; Publication 18 June 2021

Abstract

This article analyzes computer vision algorithms, the features of applying augmented reality technology, and existing software modules, frameworks, and libraries. The result is a computer vision mobile system (application) based on augmented reality technology that allows users (for example, students) to obtain additional virtual information about the object under study and to interact with it. The functional model of the system is developed, the process of developing the application with the Vuforia library is described, and the results are presented. The resulting Android application uses augmented reality tools to place virtual objects in the user's real-world environment. This computer vision mobile system is intended for educational purposes, in particular for use in schools and universities, to make interaction between users and educational material more effective.

Keywords: Augmented reality, computer vision, mobile application, image processing, target, 3D model, education.

1 Introduction

The modern period of social development is characterized by informatization, driven by the rapid development of information technology, which affects all areas of society. It is especially important to apply the latest developments in education and science, since this is a key condition for training leading professionals in various fields and will help increase the intellectual potential of society as a whole. Augmented reality (AR) is considered a promising technology with potential applications in education [1–4]. The obstacles to implementing augmented reality in education are low awareness of the effectiveness of such technologies, the lack of a methodology for applying them, and the lack of software that can be used in schools and other educational institutions [4–6].

The relevance of this work stems from the rapid development and continuous improvement of augmented reality technology, which offers a new way to obtain visual information. Augmented reality expands the possibilities of visualization and can help solve a number of problems. It is believed that within a few years AR will become one of the main technologies, especially given the diversity of industries in which it can be used [2, 5, 7–10].

The aim of this work is to provide additional information about a recognized real-world object by creating a computer vision mobile system based on AR technology. To achieve this aim, the following tasks must be solved: (a) study the clustering (computer vision) algorithms used for image analysis; (b) explore the principles of AR technology; (c) analyze existing AR applications and identify their main advantages and disadvantages; (d) analyze editors for developing AR applications and choose the development environment; (e) create a functional model of the system; (f) create a computer vision mobile system for education using AR technology.

The Vuforia AR library and the Unity game engine, programmed in the object-oriented language C#, were chosen to create the mobile application [11–14]. Computer vision is a scientific discipline that studies the theory and basic algorithms of image and scene analysis. Its main methods include determining the affine and projective structure of an object, tracking moving objects, building 3D models from image sequences, and visually analyzing and estimating the number and parameters of objects in a scene [1, 3, 15]. Image processing methods and techniques such as object segmentation, noise filtering, contrast enhancement, and artifact removal require artificial intelligence approaches [3, 9, 16–22].

2 Clustering Algorithms and AR Technology for Image Processing. Related Works and Problem Statement

Image (object) segmentation divides an image into regions that together cover the entire image; the regions whose parameters or characteristics are relevant to the problem being solved are then singled out. Regions can have different shapes and sizes. In segmentation problems, clustering is the process of splitting a set of feature vectors into subsets called clusters [17–20].

Consider the classical clustering algorithms [18, 19, 23–26]. In these algorithms, the vectors are in most cases pixels or pixel regions, and they may contain intensity values, color codes, texture characteristics, etc.; clustering can be performed on any such characteristic. After clustering, connected regions can be found using connected-component labeling algorithms. The clustering error can be found by (1):

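$$E = \sum_{i=1}^{k} \sum_{x_j \in C_i} \lVert x_j - m_i \rVert^2 \tag{1}$$

where $E$ is the clustering error; $k$ is the number of clusters; $C_i$ ($i = 1, \dots, k$) are the clusters; $x_j$ ($j = 1, \dots, n$) are the vectors; and $m_i$ ($i = 1, \dots, k$) are the mathematical expectations (means) of the clusters.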
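Criterion (1) can be computed directly. The sketch below assumes that the clustering error is the sum of squared Euclidean distances from each vector to the mean of its cluster (the usual k-means criterion); the function names are illustrative and not taken from the paper.

```python
from typing import Sequence

Vector = Sequence[float]


def squared_distance(x: Vector, m: Vector) -> float:
    """Squared Euclidean distance ||x - m||^2 between a vector and a mean."""
    return sum((xi - mi) ** 2 for xi, mi in zip(x, m))


def cluster_mean(cluster: list[Vector]) -> list[float]:
    """Component-wise mean ("mathematical expectation") of a non-empty cluster."""
    n = len(cluster)
    return [sum(v[d] for v in cluster) / n for d in range(len(cluster[0]))]


def clustering_error(clusters: list[list[Vector]]) -> float:
    """Criterion (1): sum over clusters of squared distances to the cluster mean."""
    total = 0.0
    for cluster in clusters:
        if not cluster:
            continue  # an empty cluster contributes nothing
        m = cluster_mean(cluster)
        total += sum(squared_distance(x, m) for x in cluster)
    return total


# Two clusters of 1-D intensity values with means 11 and 202
clusters = [[(10.0,), (12.0,)], [(200.0,), (204.0,)]]
print(clustering_error(clusters))  # 1 + 1 + 4 + 4 = 10.0
```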

Iterative clustering by mathematical expectation (k-means) [27, 28]. The problem is solved by searching for an extremum (minimum) of the clustering error. In the first iteration ($t = 1$), the values $m_i^{(1)}$ ($i = 1, \dots, k$) of the cluster means are assigned randomly. Then, for each vector $x_j$, the distance (clustering error) to every cluster mean is calculated by (2):

$$d_{ij}^{(t)} = \lVert x_j - m_i^{(t)} \rVert \tag{2}$$

The distance is calculated for every cluster, and each vector is moved to the cluster whose mean is closest to it. The algorithm then proceeds to the next iteration, re-estimating the cluster means and recalculating the distances. Iterations continue until condition (3) holds for all clusters:

$$C_i^{(t+1)} = C_i^{(t)}, \quad i = 1, \dots, k \tag{3}$$

That is, the algorithm stops when the cluster memberships no longer change from one iteration to the next. In general, this algorithm yields only a locally optimal result [27, 28].
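The iteration described above can be sketched as follows. This is a minimal illustration, assuming squared Euclidean distance, initial means drawn from the data with a fixed random seed, and the stopping rule "no vector changed its cluster"; `k_means` and its parameters are hypothetical names, not the implementation used in the paper.

```python
import random

Vector = tuple[float, ...]


def sq_dist(x: Vector, m: Vector) -> float:
    """Squared Euclidean distance between a vector and a cluster mean."""
    return sum((a - b) ** 2 for a, b in zip(x, m))


def k_means(data: list[Vector], k: int, seed: int = 0, max_iter: int = 100):
    """Iterative clustering by cluster means; returns (labels, means)."""
    rng = random.Random(seed)
    # Iteration 1: initial means are k randomly chosen data vectors
    means = [tuple(v) for v in rng.sample(data, k)]
    labels = None
    for _ in range(max_iter):
        # Move every vector to the cluster whose mean is nearest
        new_labels = [min(range(k), key=lambda i: sq_dist(x, means[i])) for x in data]
        # Stopping rule: no vector changed its cluster
        if new_labels == labels:
            break
        labels = new_labels
        # Re-estimate each mean from its members (an empty cluster keeps its old mean)
        for i in range(k):
            members = [x for x, lab in zip(data, labels) if lab == i]
            if members:
                means[i] = tuple(sum(col) / len(members) for col in zip(*members))
    return labels, means


data = [(10.0,), (12.0,), (11.0,), (200.0,), (204.0,), (202.0,)]
labels, means = k_means(data, k=2)
print(sorted(means))  # [(11.0,), (202.0,)]
```

Because the result depends on the random initialization, practical implementations usually run the algorithm several times and keep the partition with the smallest clustering error.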

ISODATA (Iterative Self-Organizing Data Analysis Technique) clustering algorithm. This iterative algorithm is based on splitting and merging clusters and uses the covariance matrix of each cluster. Cluster formation operates on a set of clusters $C_i$ ($i = 1, \dots, k$) with means $m_i$ ($i = 1, \dots, k$) and a covariance matrix $\Sigma_i$ for the $i$-th cluster [29].
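The split/merge idea can be illustrated with one simplified ISODATA pass. This sketch is an assumption-laden simplification: it uses only the diagonal of the covariance matrix (per-dimension standard deviation), splits a cluster when its largest standard deviation exceeds a hypothetical `max_std`, and merges two means closer than a hypothetical `min_dist` into their unweighted midpoint. Full ISODATA also uses parameters such as a minimum cluster size and a maximum number of clusters.

```python
import math

Vector = tuple[float, ...]


def dist(a: Vector, b: Vector) -> float:
    """Euclidean distance between two vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def isodata_step(data: list[Vector], means: list[Vector],
                 max_std: float, min_dist: float) -> list[Vector]:
    """One simplified split/merge pass; returns the new list of cluster means."""
    # Assign every vector to its nearest mean
    clusters: list[list[Vector]] = [[] for _ in means]
    for x in data:
        i = min(range(len(means)), key=lambda j: dist(x, means[j]))
        clusters[i].append(x)

    new_means: list[Vector] = []
    for members in clusters:
        if not members:
            continue  # drop empty clusters
        n, dims = len(members), len(members[0])
        m = tuple(sum(v[d] for v in members) / n for d in range(dims))
        # Diagonal of the covariance matrix -> per-dimension standard deviation
        std = [math.sqrt(sum((v[d] - m[d]) ** 2 for v in members) / n) for d in range(dims)]
        d_max = max(range(dims), key=lambda d: std[d])
        if std[d_max] > max_std and n >= 2:
            # Split: place two new means one std apart along the most spread-out axis
            offset = [0.0] * dims
            offset[d_max] = std[d_max]
            new_means.append(tuple(a - o for a, o in zip(m, offset)))
            new_means.append(tuple(a + o for a, o in zip(m, offset)))
        else:
            new_means.append(m)

    # Merge: replace any pair of means closer than min_dist by their midpoint
    merged: list[Vector] = []
    for m in new_means:
        for idx, other in enumerate(merged):
            if dist(m, other) < min_dist:
                merged[idx] = tuple((a + b) / 2 for a, b in zip(m, other))
                break
        else:
            merged.append(m)
    return merged


data = [(0.0,), (1.0,), (10.0,), (11.0,)]
means = isodata_step(data, [(5.5,)], max_std=2.0, min_dist=1.0)  # one cluster is split
means = isodata_step(data, means, max_std=2.0, min_dist=1.0)     # means are refined
print(means)  # [(0.5,), (10.5,)]
```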

Histogram-based algorithms are computationally more efficient because the procedure is non-iterative. They work well on images in which homogeneous objects form clusters in the measurement space, which in this case is the histogram. Segmentation maps the clusters found in the measurement space back onto the image. The maximal connected components (peaks) of the histogram are used to select image segments: the intervals of values between local minima are taken as clusters, each cluster is labeled with an index, and all pixels belonging to the same cluster receive that label [30, 31].
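A minimal sketch of this procedure for 8-bit grayscale images follows. The moving-average smoothing, the peak rule, and the choice of the lowest histogram point between two adjacent peaks as the cluster boundary are assumptions made for illustration; they are not the specific methods of [30, 31].

```python
from bisect import bisect_left


def histogram_segment(image: list[list[int]], levels: int = 256, radius: int = 2):
    """Label pixels by the histogram interval (between valleys) they fall into."""
    # 1. Histogram of intensity values
    hist = [0] * levels
    for row in image:
        for p in row:
            hist[p] += 1
    # 2. Moving-average smoothing suppresses spurious local minima
    smooth = []
    for v in range(levels):
        lo, hi = max(0, v - radius), min(levels, v + radius + 1)
        smooth.append(sum(hist[lo:hi]) / (hi - lo))
    # 3. Peaks: non-zero bins that rise above the left neighbour and are not below the right one
    peaks = [v for v in range(levels)
             if smooth[v] > 0
             and (v == 0 or smooth[v] > smooth[v - 1])
             and (v == levels - 1 or smooth[v] >= smooth[v + 1])]
    # 4. Boundary between two adjacent peaks = lowest smoothed bin between them
    boundaries = [min(range(a + 1, b), key=lambda v: smooth[v])
                  for a, b in zip(peaks, peaks[1:])]
    # 5. Map clusters back to the image: label = number of boundaries below the value
    labels = [[bisect_left(boundaries, p) for p in row] for row in image]
    return labels, boundaries


image = [[20, 20, 200, 200],
         [20, 20, 200, 200]]
labels, boundaries = histogram_segment(image)
print(labels)  # [[0, 0, 1, 1], [0, 0, 1, 1]]
```

The single pass over the pixels and over the 256 histogram bins is what makes this family of methods cheap compared with iterative clustering.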

Image segmentation algorithm based on a graph model. Real scenes and images usually contain many small details, complex textures, and many segmented regions. For images with such characteristics, a graph-based segmentation algorithm is used. The model is a weighted graph $G = (V, E_G)$ whose vertices $v_i \in V$ are points in the measurement space; each edge $(v_i, v_j) \in E_G$ carries a weight $w(v_i, v_j)$ that determines the degree of similarity of vertices $v_i$ and $v_j$. The task is to partition the graph into disjoint sets $S_1, \dots, S_p$ such that elements within a set are as similar as possible and elements of different sets are as dissimilar as possible. This algorithm gives good results when segmenting color images of real scenes, but its large computational cost limits its use, especially in real-time systems [32, 33].
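The graph formulation can be illustrated with a deliberately simplified variant: pixels are vertices of a 4-connected grid graph, the edge weight is the absolute intensity difference (so a small weight means high similarity), and edges are processed in increasing weight order with a union-find structure, merging regions whenever the weight does not exceed a fixed `threshold`. The fixed threshold is an assumption for illustration; published graph-based methods such as Felzenszwalb and Huttenlocher's use an adaptive, region-dependent merging criterion instead.

```python
def graph_segment(image: list[list[int]], threshold: int) -> list[list[int]]:
    """Simplified graph-based segmentation of a grayscale image."""
    h, w = len(image), len(image[0])
    parent = list(range(h * w))  # union-find forest, one node per pixel

    def find(a: int) -> int:
        while parent[a] != a:
            parent[a] = parent[parent[a]]  # path halving
            a = parent[a]
        return a

    # Build the weighted edge list of the 4-connected pixel graph
    edges = []
    for r in range(h):
        for c in range(w):
            v = r * w + c
            if c + 1 < w:
                edges.append((abs(image[r][c] - image[r][c + 1]), v, v + 1))
            if r + 1 < h:
                edges.append((abs(image[r][c] - image[r + 1][c]), v, v + w))

    # Kruskal-style pass: merge across the most similar edges first
    for weight, a, b in sorted(edges):
        if weight > threshold:
            break
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    # Relabel the components 0..p-1 in scan order
    ids: dict[int, int] = {}
    return [[ids.setdefault(find(r * w + c), len(ids)) for c in range(w)] for r in range(h)]


image = [[10, 12, 200],
         [11, 13, 205],
         [90, 95, 210]]
print(graph_segment(image, threshold=10))  # [[0, 0, 1], [0, 0, 1], [2, 2, 1]]
```

Even this simplified version touches every edge of the pixel graph, which hints at why full graph-based segmentation becomes expensive for real-time use.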

Augmented reality can then be used to work with the segmented image objects, expanding the ways in which information about them is perceived. Unlike virtual reality, AR does not create entirely artificial environments; instead, it adds sounds, videos, and graphics to a live view of the real environment [3–5, 9]. AR applications usually attach digital animation to a special marker or use the phone's GPS to determine the location. A good marker can be detected easily and reliably under a wide range of conditions. With machine vision techniques, features are easier to detect in good lighting than in a cluttered color environment, and the greater the contrast, the easier it is to detect objects. For this reason, black-and-white markers are optimal [4, 9].

There are various methods and algorithms for solving augmented reality problems. The RANSAC (Random Sample Consensus) algorithm is used to cluster line segments into families of parallel lines and to find their corresponding vanishing points [34]. Stable image features can be determined with the SURF (Speeded-Up Robust Features) method, which detects keypoints in both the scene image and the reference image and computes a distinctive descriptor for each keypoint. By matching these two sets of descriptors, the reference object can be located in the scene. SURF finds keypoints using the Hessian matrix [35, 36].
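The consensus principle behind RANSAC can be shown on the simplest case, fitting one 2D line among outliers. This is a generic sketch, not the vanishing-point procedure of [34]: the iteration count `n_iter`, the inlier tolerance `tol`, and the seed are hypothetical parameters.

```python
import math
import random

Point = tuple[float, float]


def ransac_line(points: list[Point], n_iter: int = 200,
                tol: float = 1.0, seed: int = 0) -> list[Point]:
    """Return the largest consensus set of points lying within tol of one line."""
    rng = random.Random(seed)
    best: list[Point] = []
    for _ in range(n_iter):
        # Minimal sample: two distinct points define a candidate line a*x + b*y + c = 0
        (x1, y1), (x2, y2) = rng.sample(points, 2)
        a, b = y2 - y1, x1 - x2
        norm = math.hypot(a, b)
        if norm == 0:
            continue  # coincident points do not define a line
        c = -(a * x1 + b * y1)
        # Consensus set: points whose perpendicular distance is within tol
        inliers = [p for p in points if abs(a * p[0] + b * p[1] + c) / norm <= tol]
        if len(inliers) > len(best):
            best = inliers
    return best


line_pts = [(float(x), 2.0 * x + 1.0) for x in range(10)]  # points on y = 2x + 1
outliers = [(3.0, 50.0), (7.0, -20.0), (1.0, 30.0)]
inliers = ransac_line(line_pts + outliers)
print(len(inliers))  # 10
```

In practice, the final model is usually re-estimated (for example, by least squares) from the winning consensus set.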

AR adds virtual information in the right context of the real world. To do this, the system must know where the user is and what they are looking at. Typically, the user explores the environment through a display that shows camera images together with additional information. In practice, therefore, the system must determine the position and orientation of the camera; with a calibrated camera, it can then draw virtual objects in the right place. Tracking means calculating the relative pose (position and orientation) of the camera in real time, and it is one of the fundamental components of AR.
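Once tracking has estimated the camera pose and calibration has provided the intrinsic parameters, a virtual point is placed on screen with the pinhole camera model. The sketch below assumes an ideal pinhole camera without lens distortion; the rotation `R`, translation `t`, focal lengths `fx`, `fy`, and principal point `cx`, `cy` are hypothetical example values.

```python
Vec3 = tuple[float, float, float]


def project(point_world: Vec3, R: list[list[float]], t: Vec3,
            fx: float, fy: float, cx: float, cy: float):
    """Project a 3D point (world/marker frame) to pixel coordinates (u, v)."""
    # Extrinsics from tracking: X_cam = R * X_world + t
    xc = [sum(R[i][j] * point_world[j] for j in range(3)) + t[i] for i in range(3)]
    if xc[2] <= 0:
        return None  # the point is behind the camera and cannot be drawn
    # Intrinsics from calibration: perspective division, then scaling and offset
    u = fx * xc[0] / xc[2] + cx
    v = fy * xc[1] / xc[2] + cy
    return u, v


R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]  # camera facing the marker
t = (0.0, 0.0, 2.0)                                      # marker 2 units in front
print(project((0.5, 0.0, 0.0), R, t, fx=800, fy=800, cx=320, cy=240))  # (520.0, 240.0)
```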

Marker detection systems that use thresholding typically apply an adaptive threshold to cope with local lighting changes. After thresholding, the system has a binary image consisting of background and objects, and at this stage all objects are potential marker candidates. The next step is usually to label them all or otherwise track the objects; during labeling, the system may reject objects that are too small or clearly different from markers. Edge detection in grayscale images is time-consuming, so marker detection systems that take this approach typically use subsampling and detect edges only on a predefined grid [4, 5, 10, 12].

AR applications usually target real-time processing, so high performance is essential, and systems cannot afford to spend time processing non-markers. Many implementations therefore use quickly computed acceptance/rejection criteria to distinguish real markers from objects that are clearly something else. The histogram of a black-and-white marker is bimodal, so the detection system can check bimodality as a fast acceptance/rejection criterion. Another quickly computed criterion for estimating the area is the largest diagonal or span. A system may also reject regions that are too large if it has prior knowledge justifying the assumption that they are something other than markers, such as dark edges. The optimal size limit for markers depends on the application and the marker type; for example, ARToolKit assumes that the markers are within a reasonable distance from the camera [37, 38].
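Two of the steps above, adaptive thresholding and a fast bimodality check, can be sketched on small grayscale arrays. The window `radius`, the `offset`, and the dark/light limits in `is_bimodal` are hypothetical values chosen for illustration; real detectors (including ARToolKit and Vuforia) use their own, more elaborate criteria.

```python
def adaptive_threshold(image: list[list[int]], radius: int = 1, offset: int = 5) -> list[list[int]]:
    """Mark a pixel as dark (1) if it is clearly below the mean of its local window."""
    h, w = len(image), len(image[0])
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            window = [image[i][j]
                      for i in range(max(0, r - radius), min(h, r + radius + 1))
                      for j in range(max(0, c - radius), min(w, c + radius + 1))]
            local_mean = sum(window) / len(window)
            # Comparing with the local mean compensates for uneven lighting
            out[r][c] = 1 if image[r][c] < local_mean - offset else 0
    return out


def is_bimodal(pixels: list[int], dark_max: int = 80, light_min: int = 175,
               min_fraction: float = 0.9) -> bool:
    """Fast accept/reject test: almost all pixels are very dark or very light, and both occur."""
    dark = sum(1 for p in pixels if p <= dark_max)
    light = sum(1 for p in pixels if p >= light_min)
    return (dark + light) / len(pixels) >= min_fraction and dark > 0 and light > 0


image = [[200, 200, 200],
         [200, 20, 200],
         [200, 200, 200]]
print(adaptive_threshold(image))            # [[0, 0, 0], [0, 1, 0], [0, 0, 0]]
print(is_bimodal([0] * 10 + [255] * 10))    # True: black-and-white marker candidate
print(is_bimodal([128] * 20))               # False: uniform gray region is rejected
```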

Mobile AR applications let users see reality on the screen of a mobile device, complemented by integrated three-dimensional virtual objects. In this study, several existing augmented reality applications for educational tasks were analyzed. Their main disadvantage is the limited interactivity and informativeness of their AR objects.

The idea of this study is to develop a mobile application through which children, by interacting with a smartphone, can receive useful learning information. The application must have a database of markers that determines the information provided to the user. As an example, the application uses images from various children’s books; the images can be either color or black and white. The only requirements for normal operation of the application are sufficient light in the room where it is used and images that computer vision technology can recognize well. Not all images can be scanned, owing to the current state of augmented reality libraries and the capabilities of the device on which the application runs.

3 Modeling and Technical Design of Computer Vision Mobile System for Education

During the analysis and design of the system, complete and consistent models of it must be created. A model is a set of interconnected abstract elements, possibly with an indication of their properties, behavior, and relationships. Edraw Max was used to build the diagrams [39].

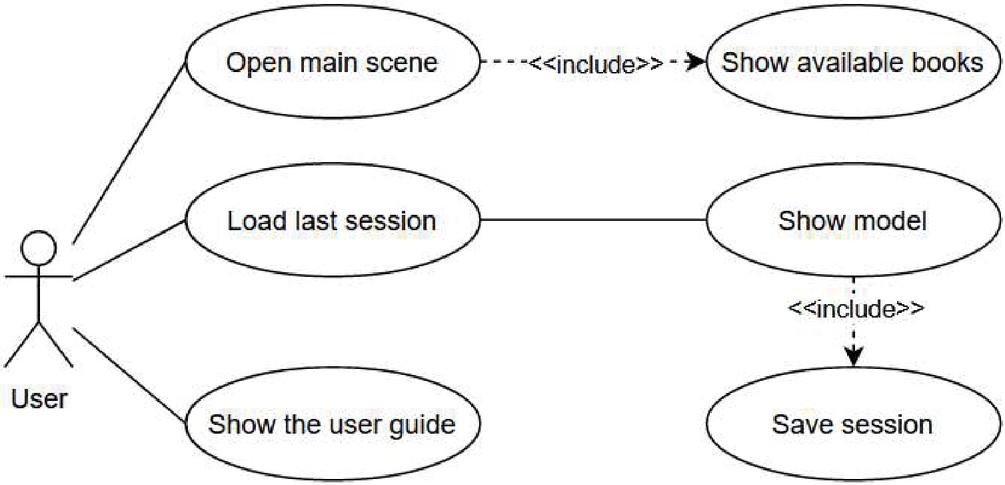

The use case diagram. The purpose of a use case diagram is to determine the general boundaries of the system and the subject area, to formulate general requirements for the functional behavior of the system, and to develop an initial model of the system that is further detailed as logical and physical models [17]. The use case diagram is presented in Figure 1.

Figure 1 The use case diagram of the computer vision mobile system for education using AR technology.

The user can obtain information about a model; load the last session, i.e., information about the most recently viewed model; save the current session; and view the user guide. Obtaining information about a model involves selecting a book from the current database of books for which models are available.

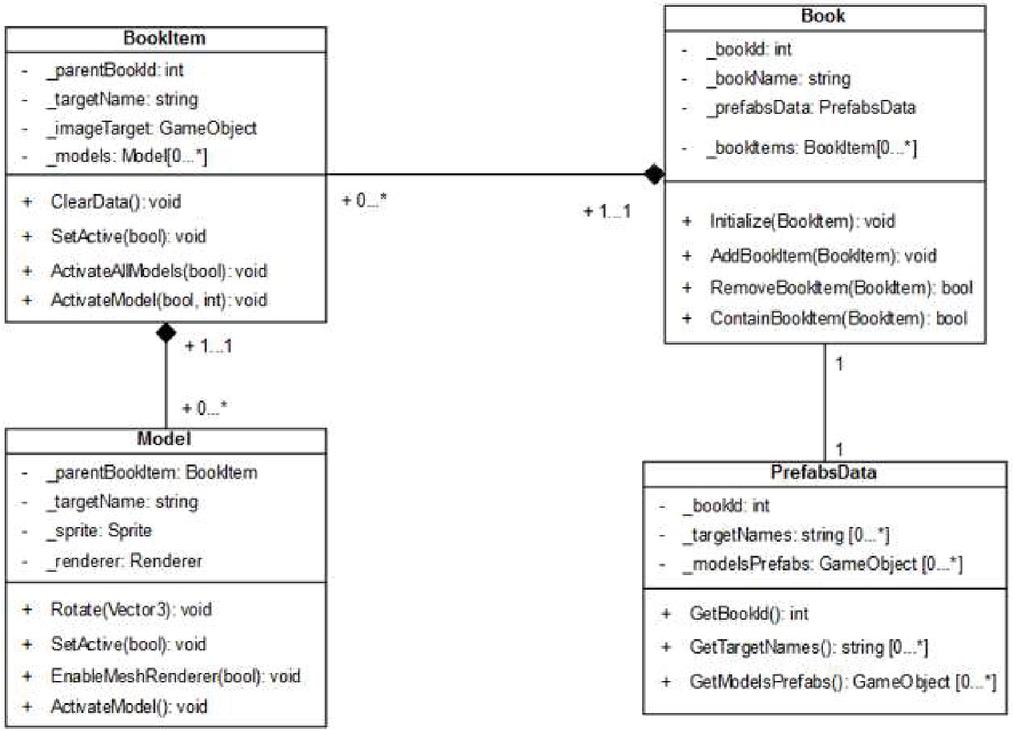

The class diagram. A class diagram represents the static structure of the system model: it shows the entities of the system, their internal structure, and the types of relationships between them. The class diagram presented in Figure 2 reflects the structure of the system classes.

Figure 2 The class diagram of the computer vision mobile system for education using AR technology.

The class diagram contains a “Book” class that holds references to other objects. Its “Initialize()” method generates an array of objects of type “BookItem”. The “BookItem” class stores references to the objects that are the models actually displayed to users; its “ClearData()” method resets all fields of the class, and the class can also activate and deactivate all models of its object. The diagram shows the associations between the main classes of the system and their multiplicities.
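The relationship between the two classes can be mirrored in a short sketch. It is written in Python rather than the application's C#, and everything beyond the class names and the roles of `Initialize()` and `ClearData()` is hypothetical: the field names, the `catalogue` argument, and strings standing in for Unity model objects are illustrative assumptions, not the authors' code.

```python
from dataclasses import dataclass, field


@dataclass
class BookItem:
    """Holds references to the models shown to the user (hypothetical fields)."""
    name: str = ""
    models: list[str] = field(default_factory=list)  # stand-ins for Unity model objects
    active: bool = False

    def clear_data(self) -> None:
        """Counterpart of ClearData(): reset every field of the item."""
        self.name = ""
        self.models = []
        self.active = False

    def set_models_active(self, value: bool) -> None:
        """Activate or deactivate all models belonging to this item."""
        self.active = value


@dataclass
class Book:
    title: str
    items: list[BookItem] = field(default_factory=list)

    def initialize(self, catalogue: dict[str, list[str]]) -> None:
        """Counterpart of Initialize(): build the array of BookItem objects."""
        self.items = [BookItem(name, list(models)) for name, models in catalogue.items()]


book = Book("Animals")
book.initialize({"deer": ["deer_mesh"], "cube": ["cube_mesh"]})
book.items[0].set_models_active(True)
print([item.name for item in book.items], book.items[0].active)  # ['deer', 'cube'] True
```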

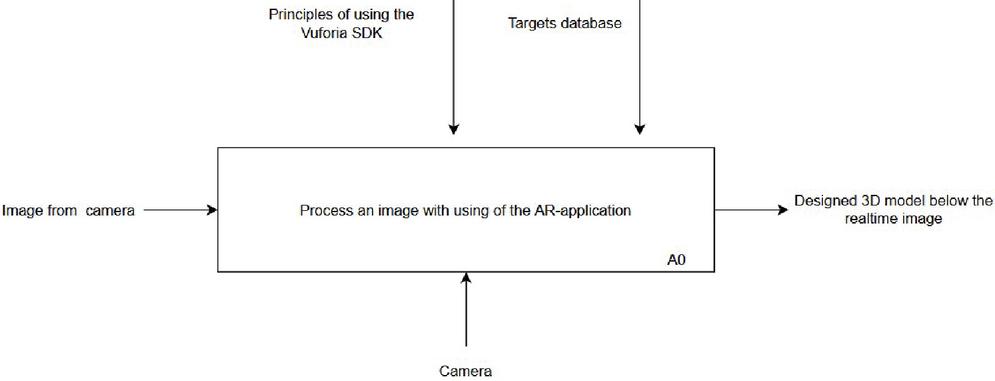

The functional model describes the functions of the system and its possible uses; it may contain information about how information circulates in the system and about the objects and entities that interact with it, and it can be either dynamic or static. Building the model begins with the development of a context IDEF0 diagram (Figure 3).

Figure 3 The top-level context diagram A-0 of the computer vision mobile system for education using AR technology.

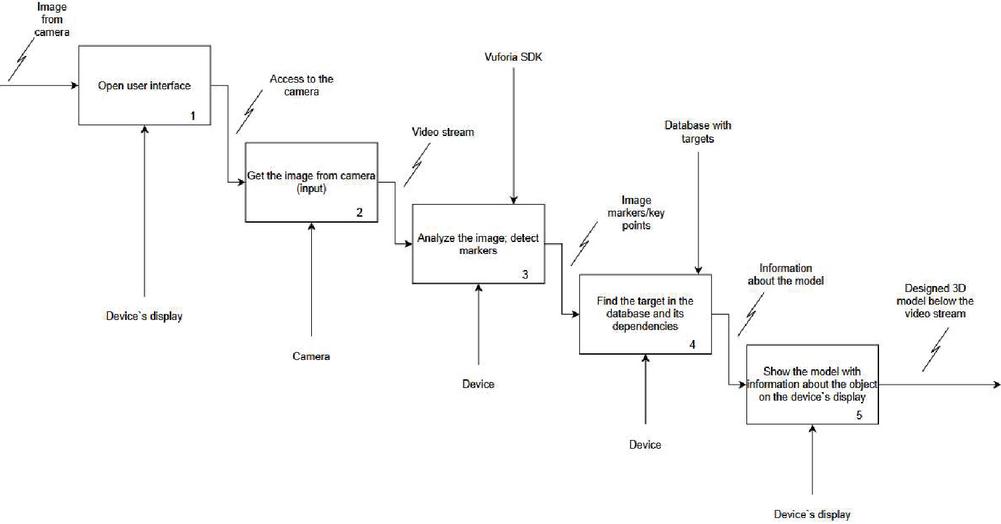

Next, the top-level context diagram A-0 is decomposed (Figure 4).

Figure 4 The decomposition diagram A-0 of the computer vision mobile system for education using AR technology.

When opening the application, the user selects the menu item corresponding to the book they are going to read. The application then scans the video stream and identifies targets. If a target is found in the database, the information associated with it is retrieved and displayed on the device screen.

4 Software Implementation of the Computer Vision Mobile System for Education using AR Technology

Currently, there are several frameworks for working with AR, such as Wikitude, EasyAR, ARToolkit, Vuforia [4–6, 11, 37, 38, 40–43]. We will analyze them in order to determine the optimal framework for mobile application development for education using AR technology.

Since the application is based on target recognition and 3D models, the framework must support different target settings. Wikitude [40] allows overlaying only static augmented reality objects. EasyAR [41] offers little support for quick and easy adoption: the developer has only the SDK and brief documentation that covers the basic principles of object recognition and the class specification. ARToolkit [38, 42] is an open-source library, but it has not been updated for a long time, and none of the examples provided with the library could be run. Vuforia [11, 37, 43] has its own online service that generates a database of target images and shows the feature points used for recognition. Unlike Wikitude, Vuforia supports images, cuboids, and cylinders as targets. Google also has its own augmented reality library, ARCore [44], but at the time of development it was in early access and could be unstable. After analyzing this information and taking into account the equipment available for testing the application, the Vuforia library was chosen.

To use the Vuforia library, you need to register on the official Vuforia website, after which you can obtain a license for the project [11].

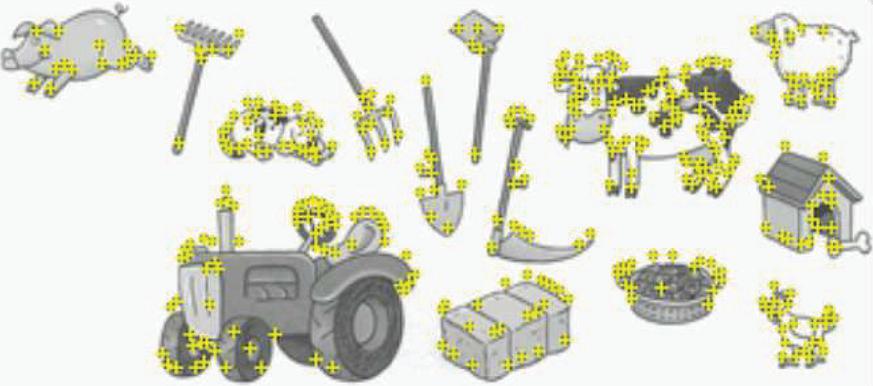

Since the project uses predefined images, a database containing them must be created on the Vuforia side [37, 43]. Different types of markers can be added; in our case, only one type is used: an image. When uploading an image to the database, you must specify the width of the image and the name of the marker. After an image is uploaded, its features are extracted, and based on them the marker is assigned a rating. The higher the rating, the more likely the marker is to be recognized. An example of features highlighted in images is shown in Figure 5.

Figure 5 Examples of objects with highlighted features for application with AR.

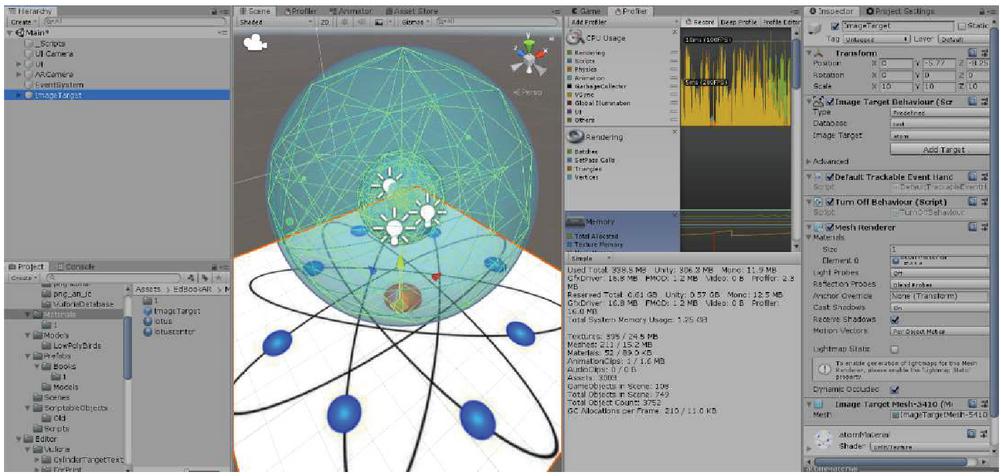

The created database is downloaded to a computer and added to the project, and the interaction between virtual objects and target images is configured in the scene. The easiest way is to attach a 3D model to the ImageTarget object: when the AR camera detects the target, the ImageTarget object appears together with the 3D object attached to it. An example is presented in Figure 6.

Figure 6 Unity editor window with ImageTarget and 3D-object on the scene.

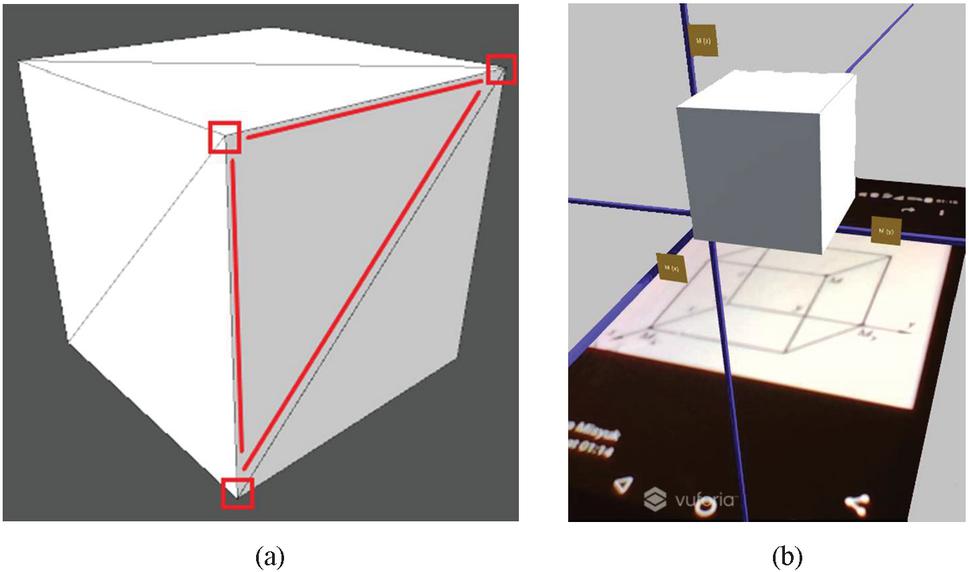

Simple objects can be created procedurally, using algorithms that construct the mesh of the object. Each mesh consists of vertices and the triangles they form (Figure 7(a)). Figure 7(b) shows a cube as an AR object.
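How a cube mesh is assembled from vertices and triangles can be sketched as plain data. This is an engine-independent illustration under stated assumptions: 8 shared vertices, each face split into two triangles wound counter-clockwise when viewed from outside (right-handed convention). Engines differ in axis handedness and front-face winding (Unity, for instance, uses a left-handed coordinate system), so the index order may need reversing when porting. The signed-volume check is an added sanity test that equals +1 for a unit cube only when all triangles are consistently oriented outward.

```python
Vec3 = tuple[float, float, float]


def cube_mesh(size: float = 1.0) -> tuple[list[Vec3], list[tuple[int, int, int]]]:
    """Return (vertices, triangles) of an axis-aligned cube centred at the origin."""
    h = size / 2
    # Vertex i uses bit 0 for x, bit 1 for y, bit 2 for z
    vertices = [(-h + size * (i & 1), -h + size * ((i >> 1) & 1), -h + size * ((i >> 2) & 1))
                for i in range(8)]
    # Faces as quads ordered counter-clockwise when seen from outside
    quads = [(0, 2, 3, 1),  # -z
             (4, 5, 7, 6),  # +z
             (0, 4, 6, 2),  # -x
             (1, 3, 7, 5),  # +x
             (0, 1, 5, 4),  # -y
             (2, 6, 7, 3)]  # +y
    triangles = []
    for a, b, c, d in quads:
        triangles += [(a, b, c), (a, c, d)]  # split each quad, keeping its winding
    return vertices, triangles


def signed_volume(vertices: list[Vec3], triangles: list[tuple[int, int, int]]) -> float:
    """Sum of signed tetrahedron volumes; positive for outward-facing triangles."""
    total = 0.0
    for i, j, k in triangles:
        (x1, y1, z1), (x2, y2, z2), (x3, y3, z3) = vertices[i], vertices[j], vertices[k]
        total += x1 * (y2 * z3 - z2 * y3) - y1 * (x2 * z3 - z2 * x3) + z1 * (x2 * y3 - y2 * x3)
    return total / 6


vertices, triangles = cube_mesh()
print(len(vertices), len(triangles), signed_volume(vertices, triangles))  # 8 12 1.0
```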

Figure 7 Construction of 3D models: cube (wireframe mode) with selected vertices (a) and cube as an AR object (b).

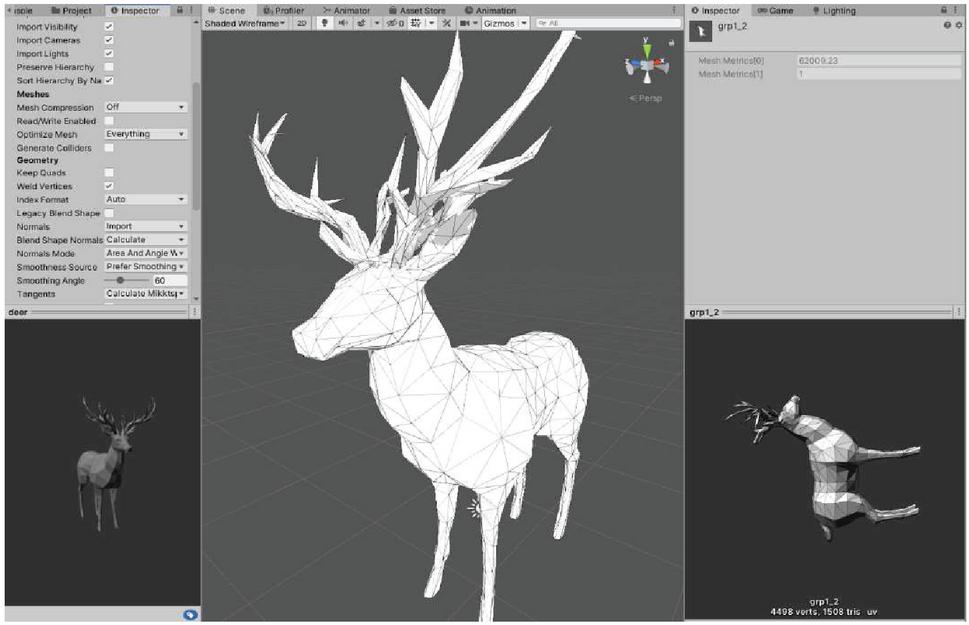

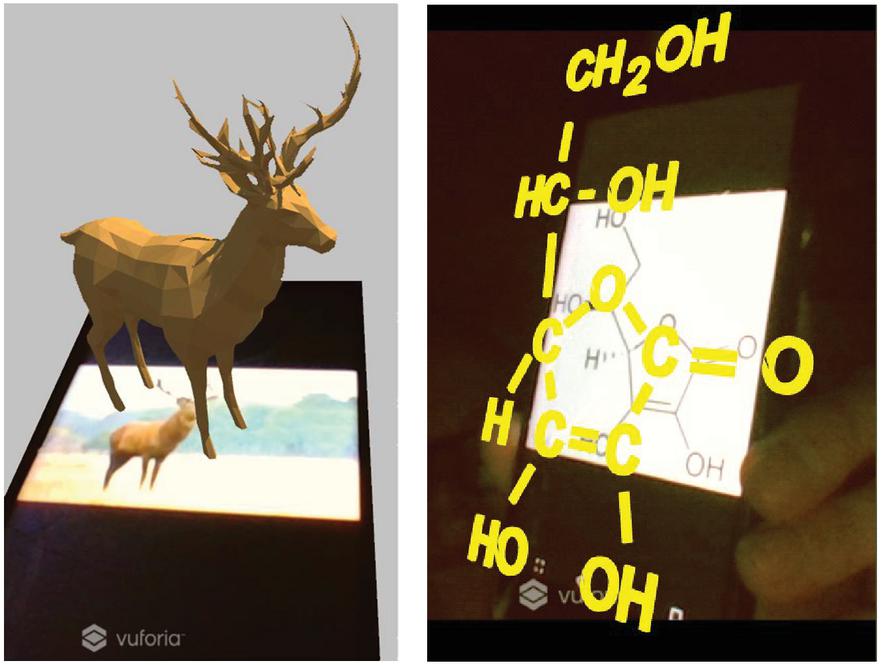

Complex objects were imported into the project in advance; for example, the deer mesh is shown in Figure 8.

Figure 8 Complex objects.

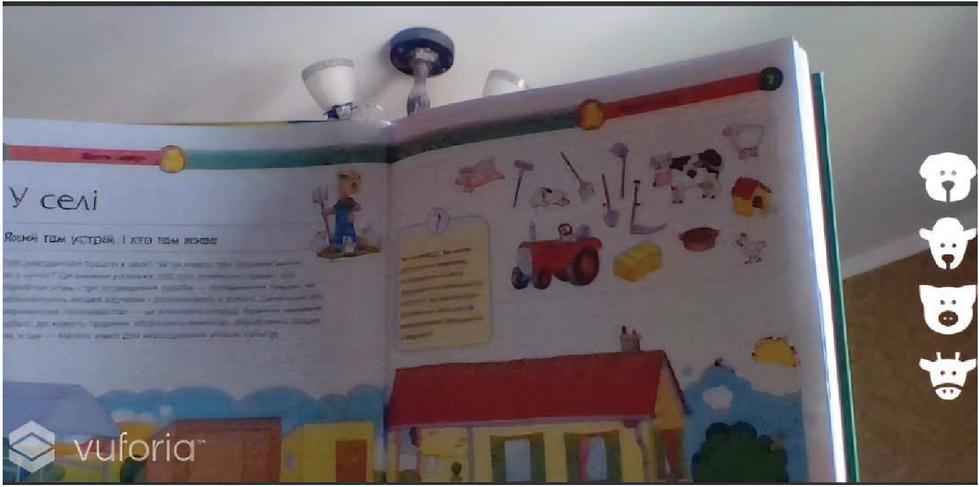

The user interface of the mobile application for education using AR technology contains the main menu, the book selection menu, and, when several prefabricated models exist for a marker, the model selection menu. The model selection menu shows a list of buttons on the right side of the screen (Figure 9).

Figure 9 Model selection menu for viewing.

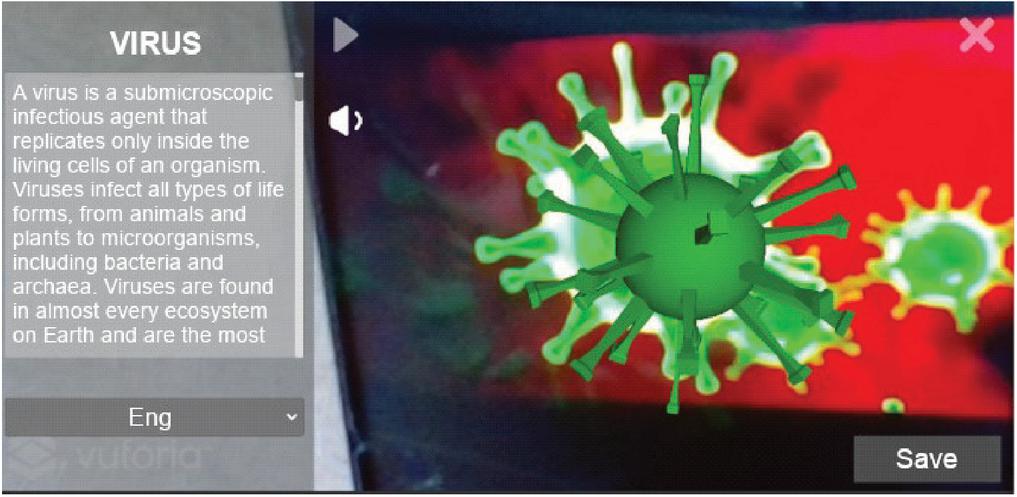

The user can view information about a model in different languages and can also listen to an audio track recorded for that model. An example (in this case, in Ukrainian and about viruses) is presented in Figure 10.

Figure 10 The Vuforia module has identified a target.

Examples of application of the computer vision mobile system for education using AR technology are shown in Figure 11.

Figure 11 Examples of application of the developed computer vision mobile system.

The project has great potential for further development and use in education. The application can be used to teach preschool children: books can contain many images of animals, plants, and everyday objects, and the application can teach children to interact with such objects. In schools, the system can be used in biology, chemistry, physics, geometry, and other lessons. By scanning a drawing on the board, a student can obtain a 3D model of the object being studied. If the model is animated, the student may learn the concept or object faster, since they can see how the object works on the screen of the mobile device. The developed AR application can also be used in other fields, such as the film industry, robotics, medicine, and transport [45–51].

With a different database preloaded into the application, it can be used to teach medical students. For example, when an image of the human body is scanned, organs and their names may appear in the appropriate places in the user’s language and in Latin; the application could also help identify specific diseases that manifest visually on a patient’s body.

5 Conclusions

This article describes the process of creating a mobile application that uses augmented reality to display additional information about the objects under study. The application can be used in various fields of human activity, especially in education. Given the rapid development of technology today, there is a need for new forms of presenting information about the world around us and for new learning tools.

Using the achievements of science and technology, adults and children can be taught to study the environment through augmented reality applications. Existing technologies should be used in the learning process starting from school. A generation that gains experience with the latest technologies at an early age will know more about how these technologies work and, over time, will be better able to identify new ways to develop and improve them.

List of Abbreviations

| AR | – | Augmented Reality; |

| ISODATA | – | Iterative Self-Organizing Data Analysis Technique; |

| RANSAC | – | Random Sample Consensus; |

| SURF | – | Speeded-Up Robust Features. |

References

[1] L. Englestone, ‘.NET Developer’s Guide to Augmented Reality in iOS’, Apress, Berkeley, CA, 2021, doi: 10.1007/978-1-4842-6770-7.

[2] G. R. Shinde, P. S. Dhotre, P. N. Mahalle, N. Dey, ‘Internet of Things Integrated Augmented Reality’, Springer, Singapore, 2021, doi: 10.1007/978-981-15-6374-4.

[3] T. Jung, M. C. tom Dieck, P. A. Rauschnabel, ‘Augmented Reality and Virtual Reality’, Springer, Cham, 2020, doi: 10.1007/978-3-030-37869-1.

[4] V. Geroimenko, ‘Augmented Reality in Education. A New Technology for Teaching and Learning’, Springer, Cham, 2020, doi: 10.1007/978-3-030-42156-4.

[5] R. Dörner, W. Broll, P. Grimm, B. Jung, ‘Virtual und Augmented Reality (VR/AR)’, Springer Vieweg, Berlin, Heidelberg, 2019, doi: 10.1007/978-3-662-58861-1.

[6] J. Peddie, ‘Augmented Reality’, Springer, Cham, 2017, doi: 10.1007/978-3-319-54502-8.

[7] M. Spanke, ‘Augmented Reality’, in: Retail Isn’t Dead, Palgrave Macmillan, Cham, 2020, doi: 10.1007/978-3-030-36650-6_5.

[8] T. Gek-Siang, K. Ab. Aziz, Z. Ahmad, ‘Correction to: Augmented Reality: The Game Changer of Travel and Tourism Industry in 2025’, in: Park S. H., Gonzalez-Perez M. A., Floriani D. E. (eds) The Palgrave Handbook of Corporate Sustainability in the Digital Era, Palgrave Macmillan, Cham, 2021, doi: 10.1007/978-3-030-42412-1_42.

[9] S. H. Kidd, H. Crompton, ‘Augmented Learning with Augmented Reality’, in: Churchill D., Lu J., Chiu T., Fox B. (eds) Mobile Learning Design. Lecture Notes in Educational Technology, Springer, Singapore, 2016, doi: 10.1007/978-981-10-0027-0_6.

[10] W. Wang, ‘Understanding Augmented Reality and ARKit’, in: Beginning ARKit for iPhone and iPad, Apress, Berkeley, CA, 2018, doi: 10.1007/978-1-4842-4102-8_1.

[11] L. Soussi, Z. Spijkerman, S. Jansen, ‘A Case Study of the Health of an Augmented Reality Software Ecosystem: Vuforia’, in: Maglyas A., Lamprecht AL. (eds) Software Business. ICSOB 2016. Lecture Notes in Business Information Processing, volume 240, Springer, Cham, 2016, doi: 10.1007/978-3-319-40515-5_11.

[12] M. Sharma, R. Vaidya, A. K. Saxena, I. Aggarwal, J. Khurana, ‘Design and Development of Mobile Augmented Reality’, in: Abraham A., Castillo O., Virmani D. (eds) Proceedings of 3rd International Conference on Computing Informatics and Networks. Lecture Notes in Networks and Systems, volume 167, Springer, Singapore, 2021, doi: 10.1007/978-981-15-9712-1_46.

[13] M. Smith, A. Maiti, A. D. Maxwell, A. A. Kist, ‘Using Unity 3D as the Augmented Reality Framework for Remote Access Laboratories’, in: Auer M., Langmann R. (eds) Smart Industry & Smart Education. REV 2018. Lecture Notes in Networks and Systems, volume 47, Springer, Cham, 2019, doi: 10.1007/978-3-319-95678-7_64.

[14] A. Fowler, ‘The Unity ARKit’, in: Beginning iOS AR Game Development, Apress, Berkeley, CA, 2019, doi: 10.1007/978-1-4842-3618-5_3.

[15] S. T. Dougherty, ‘Affine and Projective Planes’, in: Combinatorics and Finite Geometry. Springer Undergraduate Mathematics Series, Springer, Cham, 2020, doi: 10.1007/978-3-030-56395-0_4.

[16] O. Striuk, Y. Kondratenko, I. Sidenko, A. Vorobyova, ‘Generative Adversarial Neural Network for Creating Photorealistic Images’, IEEE 2nd International Conference on Advanced Trends in Information Theory (ATIT), Kyiv, Ukraine, 2020, doi: 10.1109/ATIT50783.2020.9349326.

[17] F. Shi et al., ‘Review of Artificial Intelligence Techniques in Imaging Data Acquisition, Segmentation, and Diagnosis for COVID-19’, in: IEEE Reviews in Biomedical Engineering, 14, 2021, doi: 10.1109/RBME.2020.2987975.

[18] V. Zinchenko, G. Kondratenko, I. Sidenko, Y. Kondratenko, ‘Computer Vision in Control and Optimization of Road Traffic’, IEEE Third International Conference on Data Stream Mining & Processing (DSMP), Lviv, Ukraine, 2020, doi: 10.1109/DSMP47368.2020.9204329.

[19] I. Sova, I. Sidenko, Y. Kondratenko, ‘Machine Learning Technology for Neoplasm Segmentation on Brain MRI Scans’, in: PhD Symposium at ICT in Education, Research, and Industrial Applications (ICTERI-PhD 2020), CEUR Workshop Proceedings, volume 2791, Kharkiv, Ukraine, 2020.

[20] A. Potlapally, P. S. R. Chowdary, S. S. Raja Shekhar, N. Mishra, C. S. V. D. Madhuri, A. V. V. Prasad, ‘Instance Segmentation in Remote Sensing Imagery using Deep Convolutional Neural Networks’, International Conference on Contemporary Computing and Informatics (IC3I), Singapore, 2019, doi: 10.1109/IC3I46837.2019.9055569.

[21] D. Mikhov, Y. Kondratenko, G. Kondratenko, I. Sidenko, ‘Fuzzy Logic Approach to Improving the Digital Images Contrast’, IEEE 2nd Ukraine Conference on Electrical and Computer Engineering (UKRCON), Lviv, Ukraine, 2019, doi: 10.1109/UKRCON.2019.8879961.

[22] Y. Pomanysochka, Y. Kondratenko, I. Sidenko, ‘Noise Filtration in the Digital Images Using Fuzzy Sets and Fuzzy Logic’, 15th International Conference on ICT in Education, Research, and Industrial Applications: PhD Symposium (ICTERI 2019: PhD Symposium), CEUR Workshop Proceedings, volume 2403, Kherson, Ukraine, 2019.

[23] D. Oliva, M. Abd Elaziz, S. Hinojosa, ‘Clustering Algorithms for Image Segmentation’, in: Metaheuristic Algorithms for Image Segmentation: Theory and Applications. Studies in Computational Intelligence, volume 825, Springer, Cham, 2019, doi: 10.1007/978-3-030-12931-6_14.

[24] I. Khortiuk, G. Kondratenko, I. Sidenko, Y. Kondratenko, ‘Scoring System Based on Neural Networks for Identification of Factors in Image Perception’, 4th International Conference on Computational Linguistics and Intelligent Systems (COLINS), CEUR Workshop Proceedings, volume 2604, Lviv, Ukraine, 2020.

[25] H. R. Turkar, N. V. Thakur, ‘Performance Comparison of Clustering Algorithms Based Image Segmentation on Mobile Devices’, in: Mallick P., Balas V., Bhoi A., Zobaa A. (eds) Cognitive Informatics and Soft Computing. Advances in Intelligent Systems and Computing, volume 768, Springer, Singapore, 2019, doi: 10.1007/978-981-13-0617-4_56.

[26] K. Ivanova, G. Kondratenko, I. Sidenko, Y. Kondratenko, ‘Artificial Intelligence in Automated System for Web-Interfaces Visual Testing’, 4th International Conference on Computational Linguistics and Intelligent Systems (COLINS), CEUR Workshop Proceedings, volume 2604, Lviv, Ukraine, 2020.

[27] H. Ismkhan, ‘I-k-means-+: An iterative clustering algorithm based on an enhanced version of the k-means’, Pattern Recognition, 79, 2018, doi: 10.1016/j.patcog.2018.02.015.

[28] H. Zhang, Y. Zhu, ‘KSLIC: K-mediods Clustering Based Simple Linear Iterative Clustering’, in: Lin Z. et al. (eds) Pattern Recognition and Computer Vision. PRCV 2019. Lecture Notes in Computer Science, volume 11858, Springer, Cham, 2019, doi: 10.1007/978-3-030-31723-2_44.

[29] M. A. Mahboob, B. Genc, ‘Evaluation of ISODATA Clustering Algorithm for Surface Gold Mining Using Satellite Data’, International Conference on Electrical, Communication, and Computer Engineering (ICECCE), Swat, Pakistan, 2019, doi: 10.1109/ICECCE47252.2019.8940673.

[30] E. Küçükkülahlı, P. Erdoğmuş, K. Polat, ‘Histogram-based automatic segmentation of images’, Neural Comput & Applic, 27, 2016, doi: 10.1007/s00521-016-2287-7.

[31] S. Kumar, M. Pant, M. Kumar et al., ‘Colour image segmentation with histogram and homogeneity histogram difference using evolutionary algorithms’, Int. J. Mach. Learn. & Cyber., 9, 2018, doi: 10.1007/s13042-015-0360-7.

[32] B. Hou, X. Zhang, D. Gong, S. Wang, X. Zhang, L. Jiao, ‘Fast graph-based SAR image segmentation via simple superpixels’, IEEE International Geoscience and Remote Sensing Symposium (IGARSS), Fort Worth, TX, USA, 2017, doi: 10.1109/IGARSS.2017.8127073.

[33] G. Toscana, S. Rosa, B. Bona, ‘Fast Graph-Based Object Segmentation for RGB-D Images’, in: Bi Y., Kapoor S., Bhatia R. (eds) Proceedings of SAI Intelligent Systems Conference (IntelliSys). Lecture Notes in Networks and Systems, volume 16, Springer, Cham, 2018, doi: 10.1007/978-3-319-56991-8_5.

[34] C. Chen, Z. Mu, ‘An Improved Image Registration Method Based on SIFT and SC-RANSAC Algorithm’, Chinese Automation Congress (CAC), Xi’an, China, 2018, doi: 10.1109/CAC.2018.8623265.

[35] H. Duan, X. Zhang, W. He, ‘Optimization of SURF Algorithm for Image Matching of Parts’, in: Chen S., Zhang Y., Feng Z. (eds) Transactions on Intelligent Welding Manufacturing. Transactions on Intelligent Welding Manufacturing, Springer, Singapore, 2018, doi: 10.1007/978-981-13-3651-5_11.

[36] S. Routray, A. K. Ray, C. Mishra, ‘Analysis of various image feature extraction methods against noisy image: SIFT, SURF and HOG’, Second International Conference on Electrical, Computer and Communication Technologies (ICECCT), Coimbatore, India, 2017, doi: 10.1109/ICECCT.2017.8117846.

[37] A. B. Dos Santos, J. B. Dourado, A. Bezerra, ‘ARToolkit and Qualcomm Vuforia: An Analytical Collation’, XVIII Symposium on Virtual and Augmented Reality (SVR), Gramado, Brazil, 2016, doi: 10.1109/SVR.2016.46.

[38] D. Khan et al., ‘Robust Tracking Through the Design of High Quality Fiducial Markers: An Optimization Tool for ARToolKit’, in: IEEE Access, 6, 2018, doi: 10.1109/ACCESS.2018.2801028.

[39] X. Luo, ‘Design of full-link digital marketing in business intelligence era with computer software Edraw Max’, Management Science Informatization and Economic Innovation Development Conference (MSIEID), Guangzhou, China, 2020, doi: 10.1109/MSIEID52046.2020.00077.

[40] P. Chaudhari, R. Kamte, K. Kunder, A. Jose, S. Machado, ‘“Street Smart”: Safe Street App for Women Using Augmented Reality’, Fourth International Conference on Computing Communication Control and Automation (ICCUBEA), Pune, India, 2018, doi: 10.1109/ICCUBEA.2018.8697863.

[41] M. A. Bintang, R. Harwahyu, R. F. Sari, ‘SMARIoT: Augmented Reality for Monitoring System of Internet of Things using EasyAR’, 4th International Conference on Informatics and Computational Sciences (ICICoS), Semarang, Indonesia, 2020, doi: 10.1109/ICICoS51170.2020.9299088.

[42] E. Gandolfi, R. E. Ferdig, Z. Immel, ‘Educational Opportunities for Augmented Reality’, in: Voogt J., Knezek G., Christensen R., Lai KW. (eds) Second Handbook of Information Technology in Primary and Secondary Education. Springer International Handbooks of Education, Springer, Cham, 2018, doi: 10.1007/978-3-319-71054-9_112.

[43] L. Soussi, Z. Spijkerman, S. Jansen, ‘A Case Study of the Health of an Augmented Reality Software Ecosystem: Vuforia’, in: Maglyas A., Lamprecht AL. (eds) Software Business. ICSOB 2016. Lecture Notes in Business Information Processing, volume 240, Springer, Cham, 2016, doi: 10.1007/978-3-319-40515-5_11.

[44] Z. Oufqir, A. El Abderrahmani, K. Satori, ‘ARKit and ARCore in serve to augmented reality’, International Conference on Intelligent Systems and Computer Vision (ISCV), Fez, Morocco, 2020, doi: 10.1109/ISCV49265.2020.9204243.

[45] V. Lytvyn, A. Gozhyj, I. Kalinina, V. Vysotska, V. Shatskykh, L. Chyrun, Y. Borzov, ‘An intelligent system of the content relevance at the example of films according to user needs’, CEUR Workshop Proceedings, 1st International Workshop on Information-Communication Technologies and Embedded Systems, ICT and ES, volume 2516, 2019.

[46] S. Kryvyi, O. Grinenko, V. Opanasenko, ‘Logical Approach to the Research of Properties of Software Engineering Ecosystem’, IEEE 11th International Conference on Dependable Systems, Services and Technologies (DESSERT), Kyiv, Ukraine, 2020, doi: 10.1109/DESSERT50317.2020.9125033.

[47] P. Parekh, S. Patel, N. Patel, et al., ‘Systematic review and meta-analysis of augmented reality in medicine, retail, and games’, Vis. Comput. Ind. Biomed. Art 3 (21), 2020, doi: 10.1186/s42492-020-00057-7.

[48] O. Drozd, K. Zashcholkin, O. Martynyuk, J. Drozd, Y. Sulima, ‘Development of ICT Models in Area of Safety Education’, IEEE East-West Design & Test Symposium, Varna, Bulgaria, 2020, doi: 10.1109/EWDTS50664.2020.9224861.

[49] Y. Boltov, I. Skarga-Bandurova, I. Kotsiuba, M. Hrushka, G. Krivoulya, R. Siriak, ‘Performance Evaluation of Real-Time System for Vision-Based Navigation of Small Autonomous Mobile Robots’, 10th International Conference on Dependable Systems, Services and Technologies (DESSERT), Leeds, UK, 2019, doi: 10.1109/DESSERT.2019.8770045.

[50] D. Skorokhodov, et al., ‘Using Augmented Reality Technology to Improve the Quality of Transport Services’, in: Sukhomlin V., Zubareva E. (eds) Convergent Cognitive Information Technologies. Convergent 2018. Communications in Computer and Information Science, volume 1140, Springer, Cham, 2020, doi: 10.1007/978-3-030-37436-5_30 9.

[51] J. M. T. Ribeiro, J. Martins, R. Garcia, ‘Augmented Reality Technology as a Tool for Better Usability of Medical Equipment’, in: Lhotska L., Sukupova L., Lacković I., Ibbott G. (eds) World Congress on Medical Physics and Biomedical Engineering 2018. IFMBE Proceedings, volume 68, Springer, Singapore, 2019, doi: 10.1007/978-981-10-9023-3_61.

Biographies

Misiuk Tetiana is a master’s student of the Intelligent Information Systems Department of Petro Mohyla Black Sea National University. She received her bachelor’s and master’s degrees in specialty 122 “Computer Science” at Petro Mohyla Black Sea National University. From January 2019 to June 2019, Tetiana took part in implementing various projects related to creating mobile applications with AR technology. Tetiana is interested in augmented reality technologies, and her work is related to this area. Both of her graduate projects concern the study of augmented reality and the use of this technology in education.

Yuriy Kondratenko is a Doctor of Science, Professor, Honour Inventor of Ukraine (2008), Corresponding Academician of the Royal Academy of Doctors (Barcelona, Spain), and Head of the Intelligent Information Systems Department at Petro Mohyla Black Sea National University (PMBSNU), Ukraine. He received (a) the Ph.D. (1983) and Dr.Sc. (1994) in Elements and Devices of Computer and Control Systems from Odessa National Polytechnic University, (b) several international grants and scholarships for conducting research at the Institute of Automation of Chongqing University, P.R. China (1988–1989), Ruhr-University Bochum, Germany (2000, 2010), and Nazareth College and Cleveland State University, USA (2003), and (c) a Fulbright Scholarship for research in the USA (2015/2016) at the Department of Electrical Engineering and Computer Science of Cleveland State University. His research interests include robotics, automation, sensors and control systems, intelligent decision support systems, and fuzzy logic.

Ievgen Sidenko holds a Ph.D. and the academic title of Associate Professor, and is an Associate Professor of the Intelligent Information Systems Department at Petro Mohyla Black Sea National University (PMBSNU), Ukraine. He received a master’s degree in the speciality “Intelligent decision making systems” (2010) at Petro Mohyla Black Sea State University and a Ph.D. degree in “Information technologies” (2015) at PMBSNU. Since 2013, he has taken part in a number of international and state projects (Tempus Cabriolet, Erasmus+ Aliot) related to intelligent systems, university-industry cooperation, neural networks, the Internet of Things, etc. His research interests include fuzzy sets and fuzzy logic, decision-making, optimization methods, neural networks, data mining, clustering and classification.

Galyna Kondratenko holds a Ph.D. and the academic title of Associate Professor, and is an Associate Professor of the Intelligent Information Systems Department at Petro Mohyla Black Sea National University (PMBSNU), Ukraine. She is a specialist in control systems, decision-making, and fuzzy logic. She has worked in international scientific university cooperation through projects with the European Union: TEMPUS (Cabriolet), Erasmus+ (Aliot), and DAAD-Ostpartnerschaftsprogramm (a project with the University of Saarland, Germany). Her research interests include computer control systems, fuzzy logic, decision-making, and intelligent robotic devices.

Journal of Mobile Multimedia, Vol. 17_4, 555–576.

doi: 10.13052/jmm1550-4646.1744

© 2021 River Publishers