Detection of Cotton Plant Diseases Using Deep Transfer Learning

Vani Rajasekar¹, K. Venu¹, Soumya Ranjan Jena², R. Janani Varthini¹ and S. Ishwarya¹

¹Department of CSE, Kongu Engineering College, Perundurai, Erode, India

²Department of CSE, School of Computing, Vel Tech Rangarajan Dr. Sagunthala R&D Institute of Science & Technology, India

E-mail: vanikecit@gmail.com; soumyajena1989@gmail.com

*Corresponding Author

Received 13 June 2021; Accepted 14 August 2021; Publication 29 October 2021

Abstract

Agriculture is a vital part of every country's economy, and India is regarded as an agro-based nation. One of the main purposes of agriculture is to yield healthy, disease-free crops. Cotton is a significant source of income in India, and India is the world's largest producer of cotton. Cotton crops are damaged when leaves fall off early or become afflicted with diseases. Farmers and plant experts have faced numerous agricultural obstacles for millennia, including many cotton diseases. Because severe cotton disease can wipe out the harvest entirely, a rapid, efficient, inexpensive, and reliable approach for detecting cotton diseases is highly desirable in agricultural informatics. We use deep learning to address this problem because it performs exceptionally well in image processing and classification tasks. The network combines a ResNet pre-trained on ImageNet with an Xception module, and this technique outperforms other state-of-the-art techniques. Every convolution layer within a dense block is small, so each convolution kernel remains responsible for learning the finest details. For plant leaf disease detection, the deep convolutional neural network starts from a model pre-trained on a large generic dataset and then adapts it to the specific task using its own data. The experimental results show that ResNet-50 achieves a training accuracy of 0.95 and a validation accuracy of 0.98, with a training loss of 0.33 and a validation loss of 0.5.

Keywords: Xception, ResNet, ImageNet, convolutional neural network (CNN), deep CNN.

1 Introduction

More than 50% of the global population relies on cotton-related materials. Cotton diseases have a significant detrimental impact on agricultural development in terms of both quality and quantity, and they pose a serious threat to farmers' incomes. In intensive farming and hydroponics, collecting real-time information about the healthy growth and development of cotton is emphasized. Using information gathered from a variety of sources, cotton infections can be predicted before they emerge during the cotton production process. The traditional method of identifying cotton diseases relies heavily on visual observations made by experienced growers or cotton professionals in the field. This requires constant monitoring by skilled farmers, which can be prohibitively expensive on large farms. In some emerging economies, farmers may have to travel long distances to consult agricultural experts, which is time-consuming, expensive, and unpredictable. With the rapid advancement of image processing and information processing technology, new methods for identifying and diagnosing plant diseases have emerged. Image recognition and machine learning are important research subjects because they can help monitor large crop fields and automatically identify signs and symptoms of disease as soon as they appear.

Deep learning is a subfield of machine learning that structures algorithms in layers to create an artificial neural network that can learn and make intelligent decisions on its own. Transfer learning as a field existed before deep learning; it addresses the problem of taking a learned model that performs well on one task and modifying it so that it solves another task. Many earlier works have focused on image recognition, developing classifiers that distinguish image categories using various methods. Fully automated image recognition technology has shown strong performance in recent decades, owing to advances in digital cameras and increased computing power. It has been used in a variety of fields, including medical image analysis, biometrics, and manufacturing, with impressive results. Most plant diseases first appear on the leaves and stems, where they can be recognized automatically by effective image processing systems.

2 Related Research Works

Since the first CNN-based architecture won the ImageNet contest in 2012, each successive winning design has employed an increasing number of layers in a deep neural network to reduce errors and improve performance. This works for a limited number of layers; however, as the number of layers grows, a typical deep learning problem known as the vanishing/exploding gradient problem emerges, in which the gradient becomes close to zero or excessively large. As a result, simply adding layers to increase non-linearity raises both the training and test error rates. Only a few research projects have so far addressed these problems in this area. In [1], the authors extensively studied ways to improve crop productivity by predicting leaf diseases early and taking steps to eradicate them or at least prevent them from spreading beyond the affected area. Phadikar et al. [2] classified plant diseases using two main approaches, Bayes and SVM classifiers. They employed 10 distinct combinations of training and test data, resulting in an accuracy of 79.5 percent; with tenfold cross-validation, the SVM obtained 68.1 percent accuracy. More recently, deep transfer learning techniques, particularly CNNs, have increasingly become the preferred strategy for overcoming the issues that earlier classifier techniques faced.
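The depth problem described above can be made concrete with a small numerical sketch. It is not taken from the original work: it models backpropagation through a plain network as a product of identical per-layer gradient factors, and the factors 0.9 and 1.1 are hypothetical values chosen only for illustration.

```python
def backprop_gradient_scale(per_layer_factor: float, depth: int) -> float:
    """Relative gradient magnitude after backpropagating through `depth` layers.

    Simplifying assumption: every layer multiplies the gradient by the same
    scalar factor (in a real network this is a product of Jacobians).
    """
    scale = 1.0
    for _ in range(depth):
        scale *= per_layer_factor
    return scale


for depth in (10, 50, 150):
    vanishing = backprop_gradient_scale(0.9, depth)  # factor < 1: shrinks toward 0
    exploding = backprop_gradient_scale(1.1, depth)  # factor > 1: grows without bound
    print(f"depth={depth:3d}  0.9^depth={vanishing:.2e}  1.1^depth={exploding:.2e}")
```

Even a mild 10% shrink or growth per layer compounds to many orders of magnitude at 150 layers. A residual block changes this picture because the derivative of its identity shortcut is exactly 1, so the gradient always has a path that is not multiplied by the stacked layers' factors.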

In [3], Shang-Tse Chen et al. proposed ShapeShifter, which tackles the more challenging problem of crafting physical adversarial perturbations that fool image-based object detectors such as Faster R-CNN. In [4], Jagan Mohan K. et al. reported accuracy rates of 91.10% using SVM and 93.33% using k-NN for detecting and recognizing diseases from paddy plant leaf images. In [5], N. Hatami et al. investigated the performance of Recurrence Plots (RP) within a deep CNN model for Time-Series Classification (TSC). The authors of [6] created a CNN-based deep learning system for image-based crop disease diagnosis. They used photographs of plant leaves to build a classifier that identifies the crop species as well as the presence and identity of disease. They used the PlantVillage dataset of 54,306 images covering 38 classes of 14 crop species and 26 diseases, and the trained model achieves an accuracy of 99.35% on a held-out test set. In [7], the authors described a facial expression recognition method validated on the FER-2013 and CK+ datasets. Their experiments reveal that CNN with Dense SIFT outperforms both CNN alone and CNN with SIFT, providing state-of-the-art accuracy of 73.4 percent on FER-2013 and 99.1 percent on CK+, respectively. In [8], Sourav Samanta et al. described a multilevel thresholding technique for image segmentation that uses the Cuckoo Search (CS) algorithm to determine the best threshold values; for random initial input parameters, the technique retains the optimal solution. The authors of [9] described a multi-scale CNN trained with a curriculum learning strategy that classifies mammograms with good accuracy. In their paper [11], Junghwan Cho et al. discuss how to achieve high detection accuracy with low computational complexity in medical imaging systems by optimizing the size of the training dataset. The authors of [12] presented a model that uses a deep CNN and an activation function to improve skin lesion classification, and explained how image data augmentation can be used to overcome data limitations. The work in [13] proposes a CNN methodology for skin lesion classification that uses information from several image resolutions together with pre-trained CNNs.

D. Venugopal et al. [14] explained a multi-modal data fusion-based feature extraction technique combined with a deep learning (DL) model. In [15, 16], the authors describe how leaf diseases can be predicted early, without affecting crop production, so that steps can be taken to eradicate them or at least prevent their spread to areas around the affected places. In [17], researchers reported a strategy for assessing plant health by measuring the water quality level for plant species, using data mining methods to analyse plant and soil images. Focusing on the identification of diseases and pests in a tomato disease dataset, Alvaro Fuentes et al. [18] found that each disease carries important information in the affected region of the leaf, which responds differently from the unaffected regions of the crop. Sharada P. Mohanty et al. [19] developed a deep-learning-based system for image-based crop disease diagnosis that achieves 99.35 percent accuracy on a held-out test set, demonstrating the feasibility of this approach. In [20], to distinguish wheat stripe disease from wheat leaf rust, the authors investigated four types of neural networks: backpropagation, radial basis function, generalized regression, and stochastic networks. In [21], Sindhuja Sankaran et al. discussed plant disease detection methods, which they categorize as direct and indirect, and compared the benefits and limitations of molecular, spectroscopic, and imaging techniques for plant disease detection. In [22], the authors rigorously investigated the effect of convolutional network depth on accuracy in the large-scale image recognition setting. Usama Mokhtar et al. [23] studied an approach that detects and identifies unhealthy tomato leaves using image processing techniques, achieving an accuracy of 99.83% with a linear kernel function. In [24], the authors described an artificial neural network classifier, based on historical classification, that can effectively detect and categorize the analysed diseases with an accuracy of roughly 93 percent across all investigated types of plant leaves. Finally, the authors of [25] used various visualization methods to understand symptoms and localize disease regions in leaves, achieving 99.18% accuracy.

3 Preliminaries

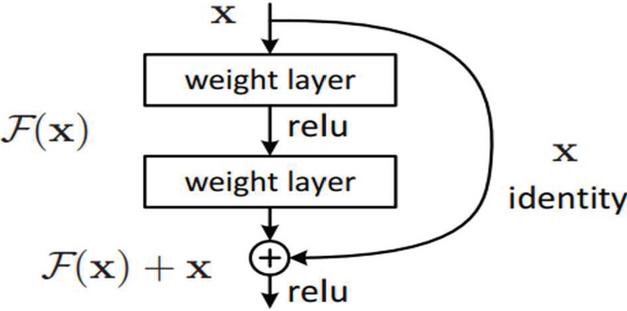

3.1 Residual Block

The Residual Network (ResNet) was introduced to deal with the vanishing/exploding gradient problem. ResNet is a novel architecture developed by Microsoft Research in 2015. It starts from a 34-layer plain network inspired by VGG-19, on top of which shortcut connections are placed; these shortcut links convert the plain architecture into a ResNet. Residual networks are easy to optimize and can gain accuracy from considerably increased depth, producing better results than other systems. As illustrated in Figure 1, each residual block in this network uses a skip connection. The skip connection bypasses a few layers and adds the block's input directly to its output. Instead of having the stacked layers learn the desired mapping directly, the network lets them fit the residual mapping.

Figure 1 Residual block skip connection.

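The residual function is denoted as

$$F(x) = H(x) - x \tag{1}$$

This gives

$$H(x) = F(x) + x \tag{2}$$

where $x$ is the identity (shortcut) input, $H(x)$ is the desired underlying mapping, and $F(x)$ is the residual function.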

The main benefit of the skip connection is that if a layer degrades the performance of the architecture, it can effectively be skipped: regularization can drive its residual toward zero so that the block behaves as an identity mapping. As a result, we were able to train very deep convolutional neural networks without the problem of exploding gradients, and we tested networks with 100–150 layers on the CIFAR-10 dataset. "Highway networks" use a similar principle based on skip connections, but they add parametric gates that control how much information flows through the skip connections. This structure, however, has not achieved accuracy comparable to the ResNet architecture.
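The residual mapping $H(x) = F(x) + x$ can be illustrated with a minimal NumPy sketch of one block's forward pass. The weight shapes, the use of dense matrices in place of convolutions, and the omission of batch normalization are simplifying assumptions, not details of the original architecture.

```python
import numpy as np


def relu(z: np.ndarray) -> np.ndarray:
    return np.maximum(z, 0.0)


def residual_block(x: np.ndarray, w1: np.ndarray, w2: np.ndarray) -> np.ndarray:
    """Simplified residual block: output = relu(F(x) + x).

    F(x) = w2 @ relu(w1 @ x) is the residual function learned by the two
    stacked layers; the identity shortcut adds x back, giving H(x) = F(x) + x.
    Dense matrices stand in for convolutions and batch normalization is
    omitted to keep the sketch short.
    """
    residual = w2 @ relu(w1 @ x)  # F(x)
    return relu(residual + x)     # identity shortcut, then activation


rng = np.random.default_rng(0)
x = rng.normal(size=4)
w1 = rng.normal(size=(4, 4))
w2 = rng.normal(size=(4, 4))
y = residual_block(x, w1, w2)

# If training drives the residual weights to zero, the block reduces to an
# identity mapping (followed by ReLU), so an unhelpful block is effectively skipped.
y_skipped = residual_block(x, w1, np.zeros((4, 4)))
print(np.allclose(y_skipped, relu(x)))  # True
```

The zero-weight case is the point of the design: a block that does not help can fall back to passing its input through, which a plain stack of layers cannot do easily.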

3.2 Transfer Learning

Transfer learning is a machine learning technique in which a model trained for one task is used as the starting point for a model on a different task. It is useful because deep learning models require a great deal of time and computing resources to train their many parameters on large datasets, and gathering a large labeled dataset for training is a difficult undertaking [10]. As a result, transfer learning has become the preferred approach in practice: a pre-trained network is reused, and only the final classification layers have to be learned from scratch using the training data. The key steps of transfer learning are as follows:

A. Determine the base network

The weights of the network (W1, W2, …, Wn) are taken from a CNN model pre-trained on a large dataset such as ImageNet.

B. Establish a new neural network

The base network can be modified by changing, adding, or removing layers to create a new network design suited to the specific objective.

C. Model training

In essence, fine-tuning means making small adjustments to a model in order to obtain the intended output or performance. In deep learning, the weights of a previously trained model are used as the starting point for another model on a comparable task. Although fine-tuning is useful for training new deep learning models, it works best when the dataset of the existing model and the dataset of the new model are similar. In short, fine-tuning adapts a model that has already been trained for one task so that it performs a second, related task.
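Steps A to C can be sketched without any deep learning framework. In this minimal NumPy illustration, a fixed random projection followed by ReLU stands in (hypothetically) for a pre-trained base network such as ResNet-50 on ImageNet; it is frozen, and only a new softmax classification head is trained on a synthetic two-class dataset. The data, layer sizes, learning rate, and iteration count are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(42)

# Step A: the base network. Hypothetical stand-in for pre-trained weights
# (e.g. ResNet-50 on ImageNet); these weights are frozen and never updated.
W_base = rng.normal(size=(8, 16))


def base_features(x: np.ndarray) -> np.ndarray:
    """Frozen feature extractor: reads W_base but never modifies it."""
    return np.maximum(x @ W_base, 0.0)


def softmax(z: np.ndarray) -> np.ndarray:
    z = z - z.max(axis=1, keepdims=True)  # subtract row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


# Synthetic target-task data (hypothetical): two well-separated classes.
n = 200
labels = rng.integers(0, 2, size=n)
X = rng.normal(size=(n, 8)) + np.where(labels[:, None] == 1, 1.5, -1.5)
Y = np.eye(2)[labels]  # one-hot targets

# Step B: new network = frozen base + new classification head (2 classes).
W_head = np.zeros((16, 2))
b_head = np.zeros(2)

feats = base_features(X)
feats = (feats - feats.mean(axis=0)) / (feats.std(axis=0) + 1e-8)  # standardize head inputs

# Step C: train only the head with gradient descent on softmax cross-entropy.
W_base_before = W_base.copy()
learning_rate = 0.1
for _ in range(1000):
    probs = softmax(feats @ W_head + b_head)
    grad_logits = (probs - Y) / n  # gradient of mean cross-entropy w.r.t. logits
    W_head -= learning_rate * feats.T @ grad_logits
    b_head -= learning_rate * grad_logits.sum(axis=0)

predictions = softmax(feats @ W_head + b_head).argmax(axis=1)
accuracy = (predictions == labels).mean()
print(f"head-only training accuracy: {accuracy:.2f}")
```

Full fine-tuning would additionally unfreeze some of the base weights and update them with a small learning rate; the sketch keeps them frozen to isolate the head-training step.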

4 Proposed Methodology

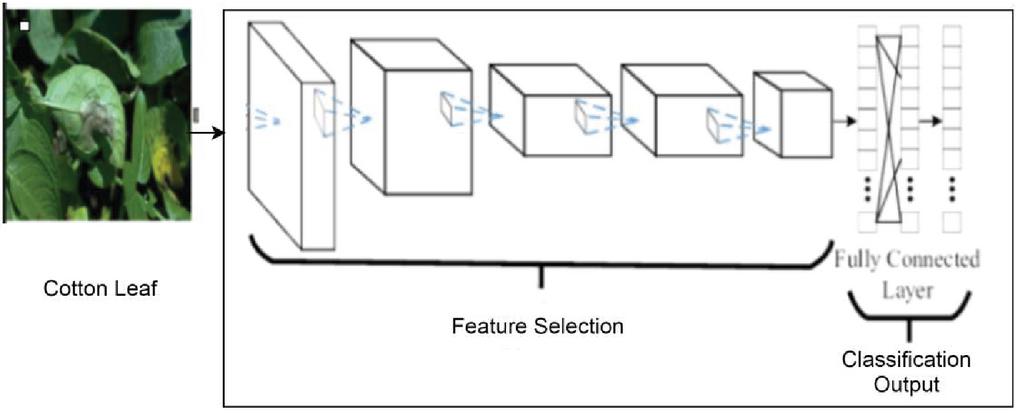

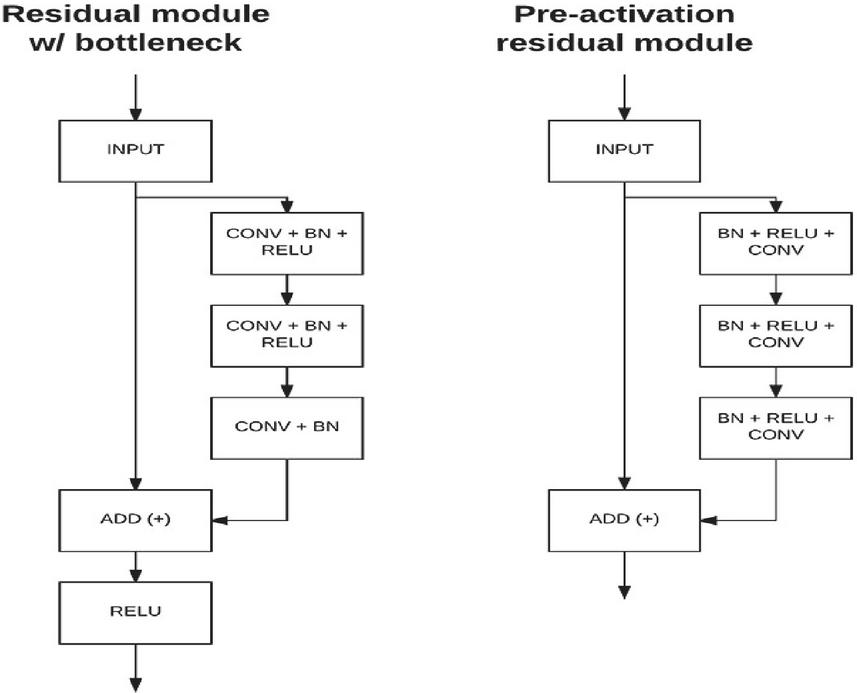

The residual module with bottleneck and the pre-activation residual module both have two branches. In the residual module with bottleneck, one branch connects the input directly to the output, while the other applies a series of CONV, BN, and ReLU layers; the outputs of both branches are then added and passed through a final ReLU activation. The pre-activation residual module does not apply a final ReLU; instead, it repeats the sequence BN, ReLU, CONV three times and adds the result to the shortcut output, which gives better training results. The flow of the proposed approach is shown in Figure 2.
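The difference in operation order between the two modules can be sketched in NumPy. To keep the example short, every convolution is modelled as a 1 × 1 convolution (a per-pixel matrix multiplication), whereas a real bottleneck uses 1 × 1, 3 × 3, and 1 × 1 convolutions; batch normalization is simplified to per-channel standardization without learned scale and shift. Channel counts and weights are hypothetical. Following the usual ResNet design, the last BN in the bottleneck branch is not followed by a ReLU before the addition.

```python
import numpy as np


def conv1x1(x: np.ndarray, w: np.ndarray) -> np.ndarray:
    """1x1 convolution: mixes channels at every pixel. x: (H, W, C_in), w: (C_in, C_out)."""
    return x @ w


def batch_norm(x: np.ndarray, eps: float = 1e-5) -> np.ndarray:
    """Simplified BN: standardize each channel over spatial positions (no gamma/beta)."""
    mean = x.mean(axis=(0, 1), keepdims=True)
    var = x.var(axis=(0, 1), keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)


def bottleneck_module(x: np.ndarray, weights: list) -> np.ndarray:
    """Post-activation bottleneck: CONV-BN-ReLU, CONV-BN-ReLU, CONV-BN, add shortcut, final ReLU."""
    h = relu(batch_norm(conv1x1(x, weights[0])))  # reduce channels
    h = relu(batch_norm(conv1x1(h, weights[1])))
    h = batch_norm(conv1x1(h, weights[2]))        # expand channels back
    return relu(h + x)                            # shortcut addition, then ReLU


def preactivation_module(x: np.ndarray, weights: list) -> np.ndarray:
    """Pre-activation module: (BN -> ReLU -> CONV) three times, add shortcut, no final ReLU."""
    h = x
    for w in weights:
        h = conv1x1(relu(batch_norm(h)), w)
    return h + x


rng = np.random.default_rng(0)
x = rng.normal(size=(8, 8, 16))                  # (height, width, channels)
weights = [rng.normal(size=(16, 4)),             # 16 -> 4 channels (bottleneck)
           rng.normal(size=(4, 4)),
           rng.normal(size=(4, 16))]             # 4 -> 16 channels, so the shortcut can be added
post = bottleneck_module(x, weights)
pre = preactivation_module(x, weights)
print(post.shape, pre.shape, post.min() >= 0, pre.min() < 0)
```

Because the bottleneck module ends with a ReLU, its output is never negative, while the pre-activation module passes the raw sum along so that the next module's BN and ReLU see it unclipped.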

Figure 2 Flow of the proposed CNN model.

4.1 Algorithm Steps for ResNet-50 Implementation

Step 1: Import the required libraries. The first and most important step is to import the libraries needed for classifying the images.

Step 2: Download and unzip the dataset files. Open Google Colab and download the dataset file. It is placed in the Colab file storage, from which a path to the images or dataset can be constructed. Then unzip the archive using the unzip command and the file's full name.

Step 3: Preprocess the images for ResNet-50. Load an image from the dataset and set the correct target size while loading it; ResNet-50 expects 224 × 224 pixel inputs.

Step 4: Make predictions in Keras using the ResNet-50 model. Once the image has been preprocessed, it can be classified simply by passing it to the ResNet-50 model.
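Steps 3 and 4 can be illustrated without downloading any model. The sketch below assumes the "caffe"-style preprocessing that Keras applies for ResNet-50 (`tf.keras.applications.resnet50.preprocess_input`: convert RGB to BGR and subtract the ImageNet channel means, without scaling) and uses a simple nearest-neighbour resize; the helper names and class names are hypothetical. In Keras itself, `load_img(..., target_size=(224, 224))`, `preprocess_input`, `model.predict`, and `decode_predictions` play these roles.

```python
import numpy as np

# ImageNet channel means in BGR order, as subtracted by Keras' "caffe"-mode
# preprocessing for ResNet-50.
IMAGENET_MEAN_BGR = np.array([103.939, 116.779, 123.68])


def resize_nearest(img: np.ndarray, size=(224, 224)) -> np.ndarray:
    """Nearest-neighbour resize of an (H, W, 3) image to size = (rows, cols)."""
    h, w = img.shape[:2]
    rows = np.arange(size[0]) * h // size[0]
    cols = np.arange(size[1]) * w // size[1]
    return img[rows[:, None], cols[None, :]]


def preprocess_for_resnet50(img_rgb: np.ndarray) -> np.ndarray:
    """Step 3: resize to 224 x 224, RGB -> BGR, subtract ImageNet means, add batch axis."""
    x = resize_nearest(img_rgb.astype(np.float64))
    x = x[..., ::-1] - IMAGENET_MEAN_BGR   # reverse channels, then zero-centre each one
    return x[np.newaxis, ...]              # shape (1, 224, 224, 3)


def top_k(probs: np.ndarray, class_names: list, k: int = 3) -> list:
    """Step 4 (after model.predict): the k most probable classes with their scores."""
    best = np.argsort(probs)[::-1][:k]
    return [(class_names[i], float(probs[i])) for i in best]


photo = np.zeros((300, 400, 3), dtype=np.uint8)
photo[..., 0] = 10    # red channel
photo[..., 2] = 200   # blue channel
batch = preprocess_for_resnet50(photo)
print(batch.shape)
print(top_k(np.array([0.1, 0.6, 0.3]), ["healthy", "diseased leaf", "diseased plant"], k=2))
```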

Figure 3 Residual module flow diagram.

4.2 Implementation of ResNet

We can build the ResNet architecture (containing residual blocks) from scratch using the TensorFlow and Keras APIs, and we can learn a lot in the process. The ResNet architecture is depicted in Figure 5 as a flow diagram. The CIFAR-10 dataset is used in this implementation. This dataset contains 60,000 32 × 32 colour images in 10 classes: aeroplanes, cars, birds, cats, deer, dogs, frogs, horses, ships, and trucks. The Keras datasets API can be used to load this dataset. The Keras modules and their application programming interfaces (APIs) are imported first; these APIs are used to build the structure of the ResNet model.
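The depth in a name such as ResNet-50 counts only the weighted layers. The following arithmetic sketch, based on the stage configurations published for the original ResNets (for example, [3, 4, 6, 3] blocks per stage for ResNet-50, with three convolutions per bottleneck block), shows how the count is obtained; it also includes the 6n + 2 rule used for the CIFAR-10 variants. The function names are hypothetical.

```python
# Blocks per stage (conv2_x .. conv5_x) of the ImageNet ResNets, with the
# number of convolutional layers in each block (2 = basic, 3 = bottleneck).
RESNET_CONFIGS = {
    18: ([2, 2, 2, 2], 2),
    34: ([3, 4, 6, 3], 2),
    50: ([3, 4, 6, 3], 3),
    101: ([3, 4, 23, 3], 3),
    152: ([3, 8, 36, 3], 3),
}


def imagenet_resnet_depth(blocks_per_stage: list, layers_per_block: int) -> int:
    """Weighted layers = 7x7 stem conv + all block convolutions + final FC layer."""
    stem, classifier = 1, 1
    return stem + layers_per_block * sum(blocks_per_stage) + classifier


def cifar_resnet_depth(n: int) -> int:
    """CIFAR-10 ResNets: first conv + 3 stages x n blocks x 2 convs + FC layer = 6n + 2."""
    return 6 * n + 2


for name, (stages, per_block) in RESNET_CONFIGS.items():
    print(f"ResNet-{name}: {imagenet_resnet_depth(stages, per_block)} weighted layers")
print("CIFAR-10 ResNet with n = 18:", cifar_resnet_depth(18), "layers")
```

For the CIFAR-10 experiments, `tf.keras.datasets.cifar10.load_data()` returns the 50,000 training and 10,000 test images (each 32 × 32 × 3) as NumPy arrays.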

A. Convolutional Layer

Convolution is the basic operation of applying a filter to an input to produce activations. When the same filter is applied repeatedly across an input, it produces a feature map that indicates the positions and strength of a detected feature in the input, such as an image. The novelty of convolutional neural networks is their ability to learn a large number of filters in parallel, specific to the training data, under the constraints of a given predictive modelling problem such as image classification. As a result, highly specific features can be detected anywhere in the input images.
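A minimal NumPy sketch of the convolution operation for a single-channel input, with stride 1 and no padding, makes the idea of a feature map concrete. As in most deep learning libraries, the kernel is not flipped (strictly, this is cross-correlation). The edge-detecting kernel and the toy image are illustrative choices, not part of the original work.

```python
import numpy as np


def conv2d_valid(image: np.ndarray, kernel: np.ndarray) -> np.ndarray:
    """Single-channel 2-D convolution with stride 1 and no padding ("valid").

    Like most deep learning libraries, this does not flip the kernel,
    so it is strictly a cross-correlation.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    feature_map = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # Weighted sum of the input patch under the filter at position (i, j)
            feature_map[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return feature_map


# Toy image: dark (0) on the left, bright (1) on the right
image = np.zeros((5, 6))
image[:, 3:] = 1.0

# Vertical-edge filter: responds where intensity rises from left to right
kernel = np.array([[-1.0, 0.0, 1.0],
                   [-1.0, 0.0, 1.0],
                   [-1.0, 0.0, 1.0]])

feature_map = conv2d_valid(image, kernel)
print(feature_map)  # every row is [0. 3. 3. 0.]
```

The response is largest exactly where the dark-to-bright edge lies and zero over flat regions, which is the position-and-strength information a feature map encodes.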

B. Pooling Layer

A pooling layer is added after the convolutional layer, specifically after a non-linearity such as ReLU has been applied to the feature maps produced by the convolutional layer. Pooling is similar to filtering in that it applies a pooling operation, such as taking the maximum, over patches of each feature map. The pooling window is generally much smaller than the feature map, and a stride of 2 pixels is often used.
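Max pooling with a 2 × 2 window and a stride of 2 can be sketched in a few lines of NumPy. The 4 × 4 input values are hypothetical, and ReLU is applied first to match the layer order described above.

```python
import numpy as np


def max_pool2d(fmap: np.ndarray, size: int = 2, stride: int = 2) -> np.ndarray:
    """Max pooling over size x size windows moved `stride` pixels at a time."""
    out_h = (fmap.shape[0] - size) // stride + 1
    out_w = (fmap.shape[1] - size) // stride + 1
    pooled = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            r, c = i * stride, j * stride
            pooled[i, j] = fmap[r:r + size, c:c + size].max()
    return pooled


# Hypothetical 4 x 4 convolution output; ReLU is applied before pooling
conv_out = np.array([[1.0, -2.0, 3.0, 0.0],
                     [4.0, 5.0, -6.0, 1.0],
                     [0.0, 2.0, 1.0, -1.0],
                     [-3.0, 1.0, 0.0, 7.0]])
activated = np.maximum(conv_out, 0.0)
pooled = max_pool2d(activated)
print(pooled)  # [[5. 3.]
               #  [2. 7.]]
```

With a 2 × 2 window and stride 2, each spatial dimension is halved, and only the strongest activation in each window survives.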

C. Xception Module

The Xception module is an extension of the Inception architecture that replaces the standard Inception modules with depthwise separable convolutions. Inception modules in convolutional neural networks can be interpreted as an intermediate step between regular convolution and the depthwise separable convolution operation, which is a depthwise convolution followed by a pointwise convolution. In this light, a depthwise separable convolution can be understood as an Inception module with a maximally large number of towers. This insight led to a new deep convolutional neural network architecture inspired by Inception, in which Inception modules are replaced by depthwise separable convolutions. This architecture, named Xception, has been shown to slightly outperform Inception V3 on the ImageNet dataset (for which Inception V3 was designed) and to outperform it substantially on a larger image classification dataset with 350 million images and 17,000 classes. Because Xception has the same number of parameters as Inception V3, these performance gains are due to more efficient use of the model parameters rather than increased capacity.
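The efficiency argument behind depthwise separable convolutions can be checked with simple arithmetic. The sketch below counts the weights of one layer (ignoring biases) for a regular convolution and for its depthwise separable counterpart; the kernel size and channel counts are illustrative assumptions.

```python
def standard_conv_params(k: int, c_in: int, c_out: int) -> int:
    """Weights of a regular k x k convolution: every output channel sees every input channel."""
    return k * k * c_in * c_out


def depthwise_separable_params(k: int, c_in: int, c_out: int) -> int:
    """Depthwise k x k convolution (one filter per input channel) + 1 x 1 pointwise convolution."""
    depthwise = k * k * c_in
    pointwise = c_in * c_out
    return depthwise + pointwise


k, c_in, c_out = 3, 128, 256  # illustrative layer size
regular = standard_conv_params(k, c_in, c_out)
separable = depthwise_separable_params(k, c_in, c_out)
print(regular, separable, f"{regular / separable:.1f}x fewer weights")
```

For this layer the separable version needs roughly 8.7 times fewer weights, which is why an architecture built from such layers can spend its parameter budget more efficiently at a given capacity.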

5 Results and Discussions

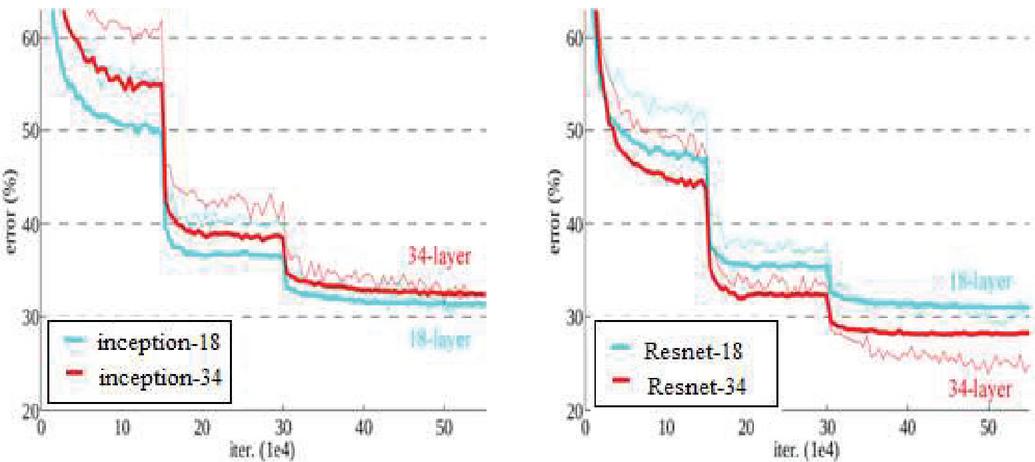

A 152-layer ResNet evaluated on the ImageNet database is 8 times deeper than VGG-19 but still has lower complexity. An ensemble of these ResNets achieved an error of only 3.57 percent on the ImageNet test set, which won the ILSVRC 2015 classification competition. Owing to its extremely deep representations, it also delivers a 28 percent relative improvement on the COCO object detection dataset. The results of plain networks and ResNets are shown in Table 1.

Table 1 Top-1 error (%) of plain networks and ResNets on the ImageNet validation set

| No. of layers | Plain | ResNet |
|---|---|---|
| 18 | 27.94 | 27.88 |
| 34 | 28.54 | 25.03 |

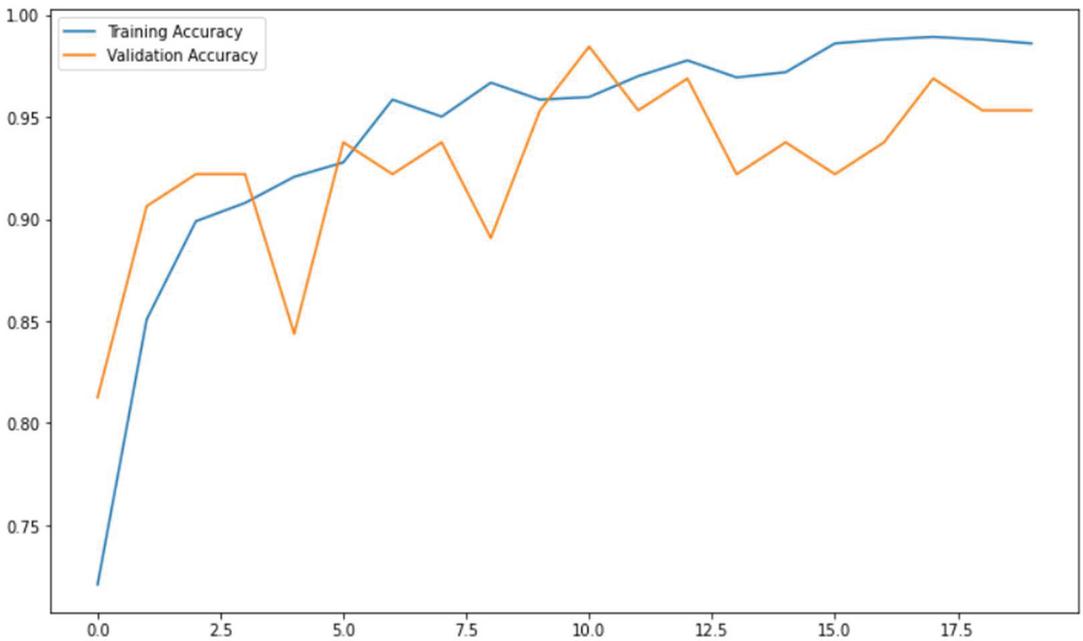

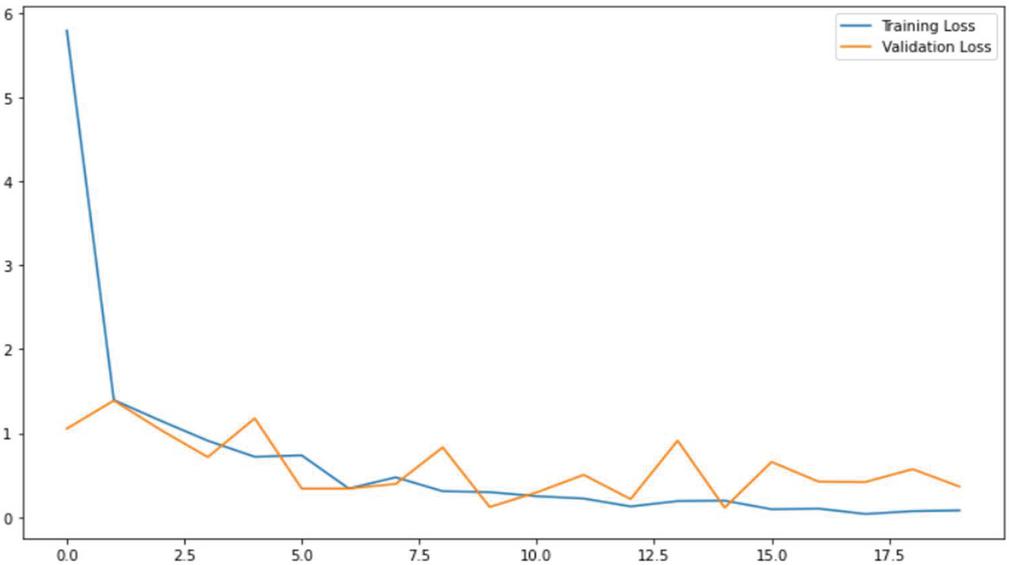

Accuracy and loss are the two measures most frequently used in the machine learning community to evaluate neural network models. Accuracy is the percentage of samples that are classified correctly; its complement, the percentage of misclassified samples, is the error rate. The experimental results show that ResNet-50 achieves a training accuracy of 0.95 and a validation accuracy of 0.98, with a training loss of 0.33 and a validation loss of 0.5. Unlike accuracy, loss is not a percentage but an aggregate of the errors committed on each sample in the training or validation set. During training, the loss is minimized to find the "best" parameters for the model. The training and validation accuracy are shown in Figure 4, and the training and validation loss in Figure 5. From Figure 4 it is inferred that validation accuracy is considerably lower than training accuracy.
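To make the distinction between the two measures concrete, the following sketch computes accuracy and the mean cross-entropy loss for hypothetical predicted probabilities on a two-class problem. Cross-entropy is assumed here as the loss; the text does not state which loss produced the reported curves.

```python
import math


def accuracy(probs: list, labels: list) -> float:
    """Fraction of samples whose highest-probability class equals the true label."""
    predicted = [max(range(len(p)), key=p.__getitem__) for p in probs]
    return sum(pred == y for pred, y in zip(predicted, labels)) / len(labels)


def cross_entropy_loss(probs: list, labels: list) -> float:
    """Mean negative log-probability assigned to the true class."""
    return -sum(math.log(p[y]) for p, y in zip(probs, labels)) / len(labels)


labels = [0, 0, 0]
cautious = [[0.9, 0.1], [0.6, 0.4], [0.4, 0.6]]             # one mild mistake
overconfident = [[0.99, 0.01], [0.99, 0.01], [0.01, 0.99]]  # one confident mistake

for name, probs in (("cautious", cautious), ("overconfident", overconfident)):
    print(f"{name}: accuracy={accuracy(probs, labels):.3f}  "
          f"loss={cross_entropy_loss(probs, labels):.3f}")
```

Both prediction sets have the same accuracy (two of three correct), but the overconfident one has a much higher loss, because loss reflects how much probability is assigned to the true class rather than only whether the top prediction is right.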

Figure 4 Accuracy of ResNet-50.

Figure 5 Training and Validation Loss of ResNet-50.

Figure 6 Inception model and ResNet comparison.

The loss is computed for both training and testing and indicates how well the model performs on each of these sets. Unlike accuracy, it is not a proportion but the sum of the errors made on each example in the set. The results show that shortcut connections overcome the difficulty caused by greatly increasing depth: unlike the plain network, the ResNet's error rate on the ImageNet validation set decreases as the number of layers rises from 18 to 34. Figure 5 shows that the training loss is substantially lower than the validation loss. Figure 6 compares the error percentages of the Inception model and ResNet.

6 Conclusion and Future Enhancement

This paper presents an effective deep learning mechanism that provides a fast, automated, less expensive, and reliable way to identify cotton diseases. CNN-based deep learning performed well in addressing most of the technical issues associated with cotton disease detection. The ResNet pre-trained on ImageNet is combined with the Xception module for image classification by defining a new fully connected softmax layer with the appropriate number of classes. Moreover, the focal loss function is used instead of the standard cross-entropy loss function to improve the learning of smaller features. In this approach, the combined ResNet improves feature extraction while lowering model computation time without sacrificing discriminative power. The result analysis shows that the proposed model performs well on both the large benchmark repository and the cotton disease image dataset. In future work, the proposed method will be deployed on mobile devices to continuously monitor and recognize a wide variety of plant diseases. A discussion platform for farmers will also be added to analyse current developments in various diseases.
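The text does not give the focal loss settings used, so the following sketch is an assumption: it implements the standard focal loss of Lin et al., $FL(p_t) = -\alpha (1 - p_t)^{\gamma} \log(p_t)$, where $p_t$ is the predicted probability of the true class, with the commonly used focusing parameter γ = 2 and α = 1 (no class weighting). It shows how the factor $(1 - p_t)^{\gamma}$ down-weights easy, well-classified examples relative to cross-entropy.

```python
import math


def cross_entropy(p_true: float) -> float:
    """Cross-entropy for one sample, given the predicted probability of its true class."""
    return -math.log(p_true)


def focal_loss(p_true: float, gamma: float = 2.0, alpha: float = 1.0) -> float:
    """Focal loss: cross-entropy scaled by alpha * (1 - p_true) ** gamma."""
    return -alpha * (1.0 - p_true) ** gamma * math.log(p_true)


for p in (0.9, 0.5, 0.1):  # easy, uncertain, and hard example
    ce, fl = cross_entropy(p), focal_loss(p)
    print(f"p_true={p:.1f}  CE={ce:.4f}  FL={fl:.4f}  FL/CE={fl / ce:.2f}")
```

An easy example with p_true = 0.9 keeps only 1% of its cross-entropy, while a hard one with p_true = 0.1 keeps 81%; with γ = 0 and α = 1 the focal loss reduces to ordinary cross-entropy.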

References

[1] Das, A., Mallick, C., Dutta, S.: Deep Learning-Based Automated Feature Engineering for Rice Leaf Disease Prediction: Computational Intelligence in Pattern Recognition, 133–141, Springer AISC Series, 2020.

[2] Phadikar, S., Sil, J., Das, A.K: Rice Diseases Classification Using Feature Selection and Rule Generation Techniques, Computers and Electronics in Agriculture, 90, 76–85, 2013.

[3] Shang-Tse Chen, Cory Cornelius, Jason Martin, and Duen Horng (Polo) Chau, Robust Physical Adversarial Attack on Faster R-CNN Object Detector – Joint European Conference, 2018.

[4] Mohan, K.J., Balasubramanian, M., Palanivel, S.: Detection and Recognition of Diseases from Paddy Plant Leaf Image: International Journal of Computer Applications (0975 – 8887) Volume 144 – No.12, June 2016.

[5] Hatami, N., Gavet, Y., Debayle, J.: Classification of Time-Series Images Using Deep Convolutional Neural Networks, Proceedings Volume 10696, Tenth International Conference on Machine Vision (ICMV 2017), Vienna, Austria, 2017.

[6] Mohanty, S.P., Hughes, D., Salathe, M: Using Deep Learning for Image-Based Plant Disease Detection. arXiv preprint, 2016.

[7] Tee Connie, Mundher Al-Shabi, Wooi Ping Cheah, Michael Goh: Facial Expression Recognition Using a Hybrid CNN–SIFT Aggregator, – Multi-disciplinary Trends in Artificial Intelligence, 2017, Springer.

[8] Sourav Samanta, Nilanjan Dey, Poulami Das, Suvojit Acharjee, Sheli Sinha Chaudhuri: Multilevel Threshold Based Gray Scale Image Segmentation Using Cuckoo Search, arXiv preprint, 2013.

[9] William Lotter, Greg Sorensen, and David Cox: A Multi-Scale CNN and Curriculum Learning Strategy for Mammogram Classification, arXiv:1707.06978, 2017.

[10] Deep Learning in Medical Image Analysis and Multimodal Learning for Clinical Decision Support Third International Workshop, DLMIA 2017, and 7th International Workshop, ML-CDS 2017, Springer (Book).

[11] J. Cho, K. Lee, E. Shin, G. Choy, and S. Do, “How Much Data is Needed To Train a Medical Image Deep Learning System To Achieve Necessary High Accuracy?” arXiv preprint arXiv:1511.06348, 2015.

[12] Tri-Cong Pham, Chi-Mai Luong, Muriel Visani, Van-Dung Hoang: Deep CNN and Data Augmentation for Skin Lesion Classification, Springer International Publishing, 2018.

[13] Jeremy Kawahara, and Ghassan Hamarneh: Multi-resolution-Tract CNN with Hybrid Pretrained and Skin-Lesion Trained Layers, Machine Learning in Medical Imaging (MLMI) Workshop (part of the MICCAI conference).

[14] D. Venugopal, T. Jayasankar, Mohamed Yacin Sikkandar, Mohamed Ibrahim Waly, Irina V. Pustokhina, Denis A. Pustokhin and K. Shankar: A Novel Deep Neural Network for Intracranial Haemorrhage Detection and Classification, Computers, Materials & Continua, Tech Science Press, DOI:10.32604/cmc.2021.015480.

[15] M. Karki, J. Cho, E. Lee, M. H. Hahm, S.Y. Yoon et al., “CT Window Trainable Neural Network For Improving Intracranial Hemorrhage Detection By Combining Multiple Settings,” Artificial Intelligence in Medicine, vol. 106, pp. 1–24, 2020.

[16] Das A., Mallick C., Dutta S.: Deep Learning-Based Automated Feature Engineering for Rice Leaf Disease Prediction, Computational Intelligence in Pattern Recognition. Advances in Intelligent Systems and Computing, Vol. 1120. Springer, Singapore. https://doi.org/10.1007/978-981-15-2449-3_11.

[17] Rajasekar, V., Premalatha, J., & Sathya, K. (2021). Cancelable Iris template for secure authentication based on random projection and double random phase encoding. Peer-to-Peer Networking and Applications, 14(2), 747–762.

[18] Han, L., Haleem, M.S., Taylor, M.: A novel computer vision-based approach to automatic detection and severity assessment of crop diseases. International Journal of Applied Engineering Research ISSN: 0973-4562, Volume 12, Number 18, pp. 7169–7175, 2017.

[19] Alvaro Fuentes, Dong Hyeok Im, Sook Yoon, and Dong Sun Park: Spectral Analysis of CNN for Tomato Disease Identification, International Conference on Artificial Intelligence and Soft Computing, ICAISC 2017: Artificial Intelligence and Soft Computing, pp. 40–51.

[20] Mohanty, S., Hughes, D., Salathe, M.: Using Deep Learning For Image-Based Plant Disease Detection. Frontiers in Plant Science, September 2016, Volume 7, Article 1419, https://doi.org/10.3389/fpls.2016.01419.

[21] Haiguang Wang, Guanlin Li, Zhanhong Ma, Xiaolong L: Application of Neural Networks to Image Recognition of Plant Diseases, IEEE International Conference on Systems and Informatics (ICSAI), 2012.

Biographies

Vani Rajasekar completed her B.Tech. (Information Technology) and M.Tech. (Information and Cyber Warfare) in the Department of Information Technology, Kongu Engineering College, Erode, Tamil Nadu, India. She is pursuing her Ph.D. (Information and Communication Engineering) in the area of biometrics and network security. She has been working as an Assistant Professor in the Department of Computer Science and Engineering, Kongu Engineering College, Erode, Tamil Nadu, India, for the past 5 years. Her areas of interest include cryptography, biometrics, network security, and wireless networks. She has authored around 20 research papers and book chapters published in various international journals and conferences indexed in Scopus, Web of Science, and SCI.

K. Venu is currently working as an Assistant Professor in the Department of Computer Science & Engineering at Kongu Engineering College, Tamil Nadu, India. She is pursuing a Ph.D. in Machine Learning at Anna University. She has 5 years of teaching experience. She has published 5 articles in international/national conferences and 3 articles in international journals, and has authored 1 book chapter with reputed publishers.

Soumya Ranjan Jena is currently working as an Assistant Professor in the Department of CSE, School of Computing at Vel Tech Rangarajan Dr. Sagunthala R&D Institute of Science & Technology, Avadi, Chennai, Tamil Nadu, India. He has teaching and research experience from various reputed institutions in India, including Galgotias University, Greater Noida, Uttar Pradesh; AKS University, Satna, Madhya Pradesh; K L Deemed to be University, Guntur, Andhra Pradesh; and GITA (Autonomous), Bhubaneswar, Odisha. He received his M.Tech in Information Technology from Utkal University, Odisha, his B.Tech in Computer Science & Engineering from BPUT, Odisha, and the Cisco Certified Network Associate (CCNA) certification from Central Tool Room and Training Centre (CTTC), Bhubaneswar, Odisha. He has extensive experience teaching graduate and postgraduate students and is the author of three books: “Mastering Disruptive Technologies- Applications of Cloud Computing, IoT, Blockchain, Artificial Intelligence and Machine Learning Techniques”, “Theory of Computation and Application” and “Design and Analysis of Algorithms”. He has also published more than 25 research papers on Cloud Computing and IoT in various international journals and conferences indexed by Scopus and Web of Science, and has published six patents, one of which has been granted in Australia.

R. Janani Varthini was a BE student in the Department of Computer Science and Engineering, Kongu Engineering College, and graduated in 2021. Her areas of interest include data mining, machine learning, and deep learning.

S. Ishwarya was a BE student in the Department of Computer Science and Engineering, Kongu Engineering College, and graduated in 2021. Her areas of interest include machine learning and deep learning.

Journal of Mobile Multimedia, Vol. 18, No. 2, 307–324.

doi: 10.13052/jmm1550-4646.1828

© 2021 River Publishers