Weed Detection Model Using the Generative Adversarial Network and Deep Convolutional Neural Network

S. Anthoniraj1,*, P. Karthikeyan2 and V. Vivek3

1MVJ College of Engineering, Bangalore, Karnataka, India

2Jain (Deemed to be University), Bangalore, Karnataka, India

3Faculty of Engineering & Technology, JAIN (Deemed-to-be University), Bangalore, Karnataka, India

E-mail: anthoniraj@mvjce.edu.in; nrmkarthi@gmail.com; v.vullikanti@jainuniversity.ac.in

*Corresponding Author

Received 03 July 2021; Accepted 10 August 2021; Publication 28 October 2021

Abstract

Demand for agricultural crops is increasing day by day because of population growth. Crop production can be increased by removing weeds from agricultural fields; however, weed detection is a complicated problem in the field. The main objective of this paper is to improve the accuracy of weed detection by combining generative adversarial networks and convolutional neural networks. We have implemented several deep learning models to perform weed detection, namely the Generative Adversarial Network and Deep Convolutional Neural Network (GAN-DCNN), AlexNet, VGG16, ResNet50 and GoogLeNet. The Generative Adversarial Network generates weed images, and the Deep Convolutional Neural Network detects weeds in the images. The GAN-DCNN method outperforms existing weed detection methods: simulation results confirm that it achieves a maximum weed detection rate of 87.12% and an accuracy of 96.34%.

Keywords: Deep learning, weed detection, generative adversarial network, deep convolutional neural network.

1 Introduction

In image classification, a deep learning model requires a massive dataset to train and to produce the expected output. Weed detection is a challenging issue in farming that needs deep neural networks to distinguish weeds from other plants. When farmers cultivate a crop, weeds also grow because of the nature of the soil and the seeds present in it, so weed detection is needed to locate where weeds grow. The world population is increasing day by day, while existing agricultural land is being used for construction because agriculture earns less revenue [1, 2].

Researchers use deep CNNs to detect weeds, along with a few strategies that separate weeds from crop plants based on various features of crops and weeds, such as color, spectral reflectance, shape, vein patterns and size. However, these strategies cannot reliably and precisely perform the discrimination task in the complex environment of an agricultural field [3, 4].

Recently, many deep learning techniques have been investigated for crop-weed classification. For example, Bah et al. [5] applied a deep neural network to crop row detection in Unmanned Aerial Vehicle (UAV) images, and Sa et al. [22] classified sugar beet crops and weeds in multispectral images, developing six models on various spectral channels that achieved excellent weed detection performance. Several other deep CNNs have been applied to weed detection using images taken from UAVs and ground-based vehicles. However, extensive pre-processing of the data is required to obtain the expected accuracy. To address this issue, this paper uses a semi-supervised variant of the GAN. In the GAN-based semi-supervised classification strategy, a generator creates large numbers of realistic images, forcing the discriminator to learn better features for more accurate pixel classification. To the best of our knowledge, GAN strategies can provide good performance for multispectral image classification [6].

This research work takes advantage of the generative adversarial network (GAN) and a deep CNN to detect weeds. First, weed features are extracted by filters of different sizes. Filters are essential in a deep learning model: they extract features from the input image, and these features are later used to classify the image. The filters can be designed in two ways: small filters detect fine-grained features in the input image, and large filters extract coarse-grained features. Finally, weed images are generated using the GAN. The GAN method performs feature propagation between convolution layers [7, 8].
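To make the small-versus-large filter idea concrete, here is a minimal pure-Python sketch. It assumes 3×3 and 5×5 averaging kernels as stand-ins for learned weights; the paper does not state its filter sizes or weights, and `conv2d_valid` and `box_kernel` are hypothetical helper names.

```python
from typing import List

Matrix = List[List[float]]


def conv2d_valid(image: Matrix, kernel: Matrix) -> Matrix:
    """Stride-1 'valid' cross-correlation, the operation a CNN convolution layer computes."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    out = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            for u in range(kh):
                for v in range(kw):
                    acc += image[i + u][j + v] * kernel[u][v]
            row.append(acc)
        out.append(row)
    return out


def box_kernel(size: int) -> Matrix:
    """Averaging kernel used here as a stand-in for learned filter weights."""
    weight = 1.0 / (size * size)
    return [[weight] * size for _ in range(size)]


# Toy 7x7 input with one bright pixel (a fine detail) at the centre.
image = [[0.0] * 7 for _ in range(7)]
image[3][3] = 9.0

fine = conv2d_valid(image, box_kernel(3))    # small filter -> 5x5 map, localized response
coarse = conv2d_valid(image, box_kernel(5))  # large filter -> 3x3 map, wider context
```

The 3×3 map responds only at the nine positions that overlap the bright pixel, whereas every position of the 5×5 map sees it: the small filter localizes detail, and the large filter summarizes context.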

The significant work of the paper is presented as follows.

1. A weed detection model combining a Generative Adversarial Network and a Deep Convolutional Neural Network (GAN-DCNN) is developed.

2. The parameters of the proposed model are optimized to improve the weed detection rate.

3. The performance of the proposed model is compared with AlexNet, VGG16, ResNet50 and GoogLeNet.

The paper is organized as follows: Section 1 outlines weed detection in agricultural fields using deep convolutional neural networks and the challenges involved. Section 2 discusses eight related works on weed detection in agricultural fields. Section 3 presents the proposed GAN-DCNN model for weed detection. The experimental setup and result analysis are discussed in Section 4. Finally, Section 5 concludes the paper with possible future directions.

2 Literature Survey

This section reviews recent weed detection models for agriculture based on deep CNNs.

Jiang et al. developed a graph convolutional network combined with a residual neural network (GCN-ResNet) to improve weed detection accuracy. The method combines the advantages of CNN feature extraction and GCN for weed detection. It was compared with three methods (AlexNet, Visual Geometry Group-16 and ResNet-101) on four datasets and provided superior accuracy. However, it may not maintain good accuracy across different crop locations, soils and image acquisition heights [9].

Abdalla et al. assessed three transfer learning models using a VGG19-based encoder, with and without data augmentation, and analyzed their performance. In the first model, the fine-tuned VGG19-based encoder was used for feature extraction, and segmentation was performed using shallow machine learning classifiers (MLCs). Overall, transfer learning proved efficient and showed strong performance in segmenting plants among high-density weeds [10].

Raja et al. discussed crop signalling for a fully automated weed-spraying control system. The framework used computer vision techniques to reliably determine the spatial location of every lettuce plant and weed. The proposed method outperformed many current techniques in weed-crop identification and classification accuracy under in-field conditions, including high weed densities. However, other factors, such as occlusion of the painted leaf, may influence the classification of crops and weeds in the natural environment [11].

Gao et al. developed a deep convolutional neural network for image-based Convolvulus sepium detection in sugar beet fields. The method achieved a better trade-off between speed and accuracy. Furthermore, adding synthetic images during training improved the performance of the network in detecting C. sepium. The trained model can be deployed on uncrewed aerial vehicles and autonomous field robots for weed detection and management [12].

You et al. discussed deep neural network-based semantic segmentation for detecting weeds and crops. The model combines convolutional layers with DropBlock to enlarge the receptive field and learn robust features. It performs better than traditional deep learning models, and each of its components contributes to segmentation accuracy. However, accuracy could not be improved further because of noisy or ambiguous annotated labels at the boundaries between weeds and crops [13].

Asad et al. designed a weed detection model for canola fields using maximum likelihood classification and a deep convolutional neural network. The method labels weed and crop pixels along with background pixels. After acquiring high-resolution RGB images of the canola field, the background is segmented as a first labelling step. Afterwards, the minority-class pixels are labelled manually. The technique achieves better results than conventional weed detection models [14].

Dos Santos Ferreira et al. reported deep unsupervised learning and semi-automatic data labelling for weed discrimination. Using semi-automatic labelling, the method achieved remarkable performance on the Grass-Broadleaf dataset and is presented as an alternative that addresses the significant challenge of data labelling for deep learning in agriculture. Unsupervised deep clustering yields semi-automatic labelling results similar to those on Grass-Broadleaf on more complex agricultural datasets such as DeepWeeds. Moreover, the procedure is simple and straightforward to reproduce on arbitrary datasets, even beyond the agricultural scope, with essentially no changes [15].

Hu et al. proposed Graph Weeds Net (GWN), a novel graph-based deep neural network that aims to identify weed species from RGB images collected in rangelands. The GWN design incorporates convolution and pooling layers, which build fine-grained representations and are expected to improve weed identification performance. Furthermore, GWN can locate the critical regions of the whole image, which indicate a high likelihood of containing the target weeds rather than the background or other plants. Importantly, prior detailed annotation of weed locations is not required. Accordingly, GWN can be regarded as a semi-supervised learning approach that eases the laborious annotation task [16].

3 Generative Adversarial Network and Deep Convolutional Neural Network model (GAN-DCNN)

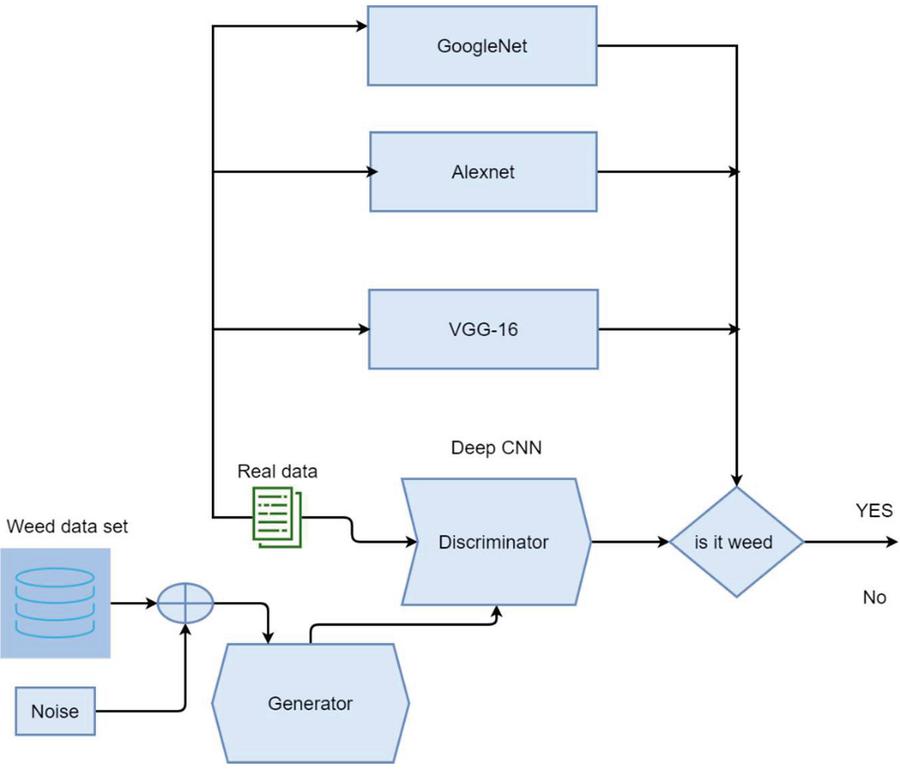

A generative adversarial network contains two core components: (i) a generator and (ii) a discriminator. The generator produces a large number of fake images from the weed dataset, and the discriminator classifies whether a given input image is real or not. To identify weeds in an agricultural field, we first need to identify the weed location and size, which vary from phase to phase. Traditional object-based classification approaches are prone to failure because of unclear crop-weed boundaries. The architecture of the proposed GAN-DCNN for weed detection is shown in Figure 1.

The operational flow of the proposed work is summarized as follows.

i. The weed dataset, together with random noise, is given to the generator, which produces fake weed images. The generated images are then passed to the discriminator, which uses a deep CNN model to classify them.

ii. The deep CNN uses convolutional layers and filters to extract fine-grained and coarse-grained features from the input images.

iii. The fine-grained and coarse-grained features are forwarded to pooling layers, which reduce the feature dimensions.

iv. The reduced features are forwarded to the fully connected layer, which decides whether the image contains weed. If it does, the first output neuron fires; otherwise, the second neuron fires, indicating that the image does not contain weed.

v. Compute the accuracy; if it is not acceptable, tune the network structure and hyperparameters. Repeat this process until the expected accuracy is reached.
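Steps iii and iv can be sketched in plain Python as below. The three pooled feature values and the weight matrix are hypothetical, and the softmax output layer is an assumption; the text only states that one of two output neurons fires. Step v (the tuning loop) is not shown.

```python
import math
from typing import List, Sequence, Tuple


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def dense(features: Sequence[float], weights: Sequence[Sequence[float]],
          biases: Sequence[float]) -> List[float]:
    """Fully connected layer: one output neuron per row of `weights`."""
    return [sum(w * f for w, f in zip(row, features)) + b
            for row, b in zip(weights, biases)]


def classify(pooled: Sequence[float], weights: Sequence[Sequence[float]],
             biases: Sequence[float]) -> Tuple[str, List[float]]:
    """Neuron 0 means 'weed', neuron 1 means 'no weed'; the stronger one 'fires'."""
    probs = softmax(dense(pooled, weights, biases))
    label = "weed" if probs[0] >= probs[1] else "no weed"
    return label, probs


# Hypothetical weights for three pooled features (illustrative, not trained values).
W = [[2.0, -1.0, 0.5],   # 'weed' neuron
     [-1.5, 1.0, 0.2]]   # 'no weed' neuron
B = [0.0, 0.0]

label, probs = classify([1.0, 0.2, 0.4], W, B)
```

Softmax turns the two neuron outputs into probabilities, so "firing" simply means having the larger probability.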

Figure 1 The architecture of the GAN-DCNN for weed detections in agriculture.

The main building block of a deep CNN is the convolution layer, which learns the main features in the image. It is therefore common to focus improvements on this part of the deep convolutional neural network architecture. Here we present the motivation behind some of the key developments.

A GAN uses two loss functions. The loss used on the generator side can be written as Equation (1).

| (1) |

where $p_r$ refers to the real data distribution and $p_g$ is the model distribution implicitly defined by $x = G(Z, Y)$.

Similarly, the discriminator loss can be computed using Equation (2).

| (2) |

where N refers to the number of classes in the weed data set. The generator is trained to minimize the generator loss, and the discriminator is trained to minimize the discriminator loss.
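The sketch below is an assumed stand-in for Equations (1) and (2), not the paper's exact losses. It follows the semi-supervised GAN formulation of Salimans et al. (2016), which fits the N-class discriminator described above: the discriminator has N + 1 outputs (the N weed classes plus one "generated" class), and the generator uses the common non-saturating loss. All logit values in the usage are hypothetical.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float]) -> List[float]:
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def discriminator_loss(real_logits: Sequence[float], real_label: int,
                       fake_logits: Sequence[float]) -> float:
    """Semi-supervised discriminator loss for one labelled real image and one generated image.

    Each logit vector has N + 1 entries: indices 0..N-1 are the N weed classes and
    the last index is the extra "generated" class.
    """
    p_real = softmax(real_logits)
    p_fake = softmax(fake_logits)
    gen = len(real_logits) - 1
    # Supervised term: correct class, conditioned on the image not being generated.
    supervised = -math.log(p_real[real_label] / (1.0 - p_real[gen]))
    # Unsupervised terms: real images should not look generated; generated ones should.
    unsup_real = -math.log(1.0 - p_real[gen])
    unsup_fake = -math.log(p_fake[gen])
    return supervised + unsup_real + unsup_fake


def generator_loss(fake_logits: Sequence[float]) -> float:
    """Non-saturating generator loss: low when the discriminator does not flag the image."""
    p_fake = softmax(fake_logits)
    return -math.log(1.0 - p_fake[-1])


# N = 3 weed classes, so each logit vector has 4 entries.
confident = discriminator_loss([4.0, 0.0, 0.0, -4.0], 0, [0.0, 0.0, 0.0, 4.0])
confused = discriminator_loss([0.0, 0.0, 0.0, 0.0], 0, [0.0, 0.0, 0.0, 0.0])
```

A discriminator that labels the real image correctly and flags the generated one has a much lower loss than one that outputs uniform probabilities, while the generator's loss grows when its image is flagged.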

Classification in weed detection is conventionally done using linear filters, which are suited to linearly separable data. We therefore introduce a nonlinear filter for weed image classification, expressed mathematically in Equation (3).

| (3) |

where (i, j) indexes the location of the feature, with the input patch taken at that location, k refers to the feature map channel, and n refers to the number of layers in the deep neural network.
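Equation (3) is described here only through its indices. One common nonlinear filter with exactly this indexing is the "mlpconv" layer of Network in Network (Lin et al., 2014), in which each layer computes max(w_k · f + b_k, 0) for every channel k. The sketch below assumes that form at a single location (i, j); it is illustrative, not the paper's code, and the weights are hypothetical.

```python
from typing import List, Sequence, Tuple

Layer = Tuple[List[List[float]], List[float]]  # (weights, biases); one weight row per channel k


def relu(v: float) -> float:
    return v if v > 0.0 else 0.0


def nonlinear_filter(patch: Sequence[float], layers: Sequence[Layer]) -> List[float]:
    """Stack of ReLU(W f + b) layers applied to the flattened input patch at one (i, j).

    The output is the channel vector f^n_{i,j,k} after the last of the n layers.
    """
    features = list(patch)
    for weights, biases in layers:
        features = [relu(sum(w * f for w, f in zip(row, features)) + b)
                    for row, b in zip(weights, biases)]
    return features


# Hypothetical weights: a 2x2 patch (4 values) -> 2 channels -> 1 channel.
LAYERS: List[Layer] = [
    ([[1.0, 0.0, 1.0, 0.0], [0.0, 1.0, 0.0, 1.0]], [0.0, -0.5]),
    ([[1.0, -4.0]], [0.0]),
]

response = nonlinear_filter([1.0, 2.0, 0.0, -1.0], LAYERS)
```

With the ReLU removed, the same weights would output -1.0 instead of 0.0; the ReLU between layers is what makes the filter nonlinear.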

The primary purpose of the pooling layers is to reduce the size of the input feature maps so that the model can respond quickly to the given input images. Although max pooling often gives better results than other pooling methods, one of its main disadvantages is that it can overfit the training data, giving good accuracy on the training set but not on the test set. To solve this problem, we introduce a mixture of average pooling and max pooling. Based on Equation (4), we compute the mixed average and max pooling over a given pooling region.

| (4) |

Here, the two pooling terms denote average pooling and max pooling, respectively.
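Assuming Equation (4) takes the common mixed-pooling form f = a·max + (1 − a)·average over each pooling region, with a fixed mixing weight a in [0, 1], the operation can be sketched as follows. The value a = 0.5, the 2×2 non-overlapping regions and the feature map are assumptions for illustration.

```python
from typing import List

Matrix = List[List[float]]


def mixed_pool(fmap: Matrix, a: float = 0.5, size: int = 2) -> Matrix:
    """Non-overlapping mixed pooling: a * max + (1 - a) * mean over each size x size region."""
    if not 0.0 <= a <= 1.0:
        raise ValueError("mixing weight a must lie in [0, 1]")
    out = []
    for i in range(0, len(fmap) - size + 1, size):
        row = []
        for j in range(0, len(fmap[0]) - size + 1, size):
            region = [fmap[i + u][j + v] for u in range(size) for v in range(size)]
            p_max = max(region)
            p_avg = sum(region) / len(region)
            row.append(a * p_max + (1.0 - a) * p_avg)
        out.append(row)
    return out


FMAP = [[1.0, 3.0, 0.0, 0.0],
        [2.0, 2.0, 0.0, 4.0],
        [0.0, 0.0, 1.0, 1.0],
        [0.0, 8.0, 1.0, 1.0]]

pooled = mixed_pool(FMAP)
```

Setting a = 1 recovers max pooling and a = 0 recovers average pooling; intermediate values blend the two, which is how the text proposes to temper the overfitting tendency of max pooling.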

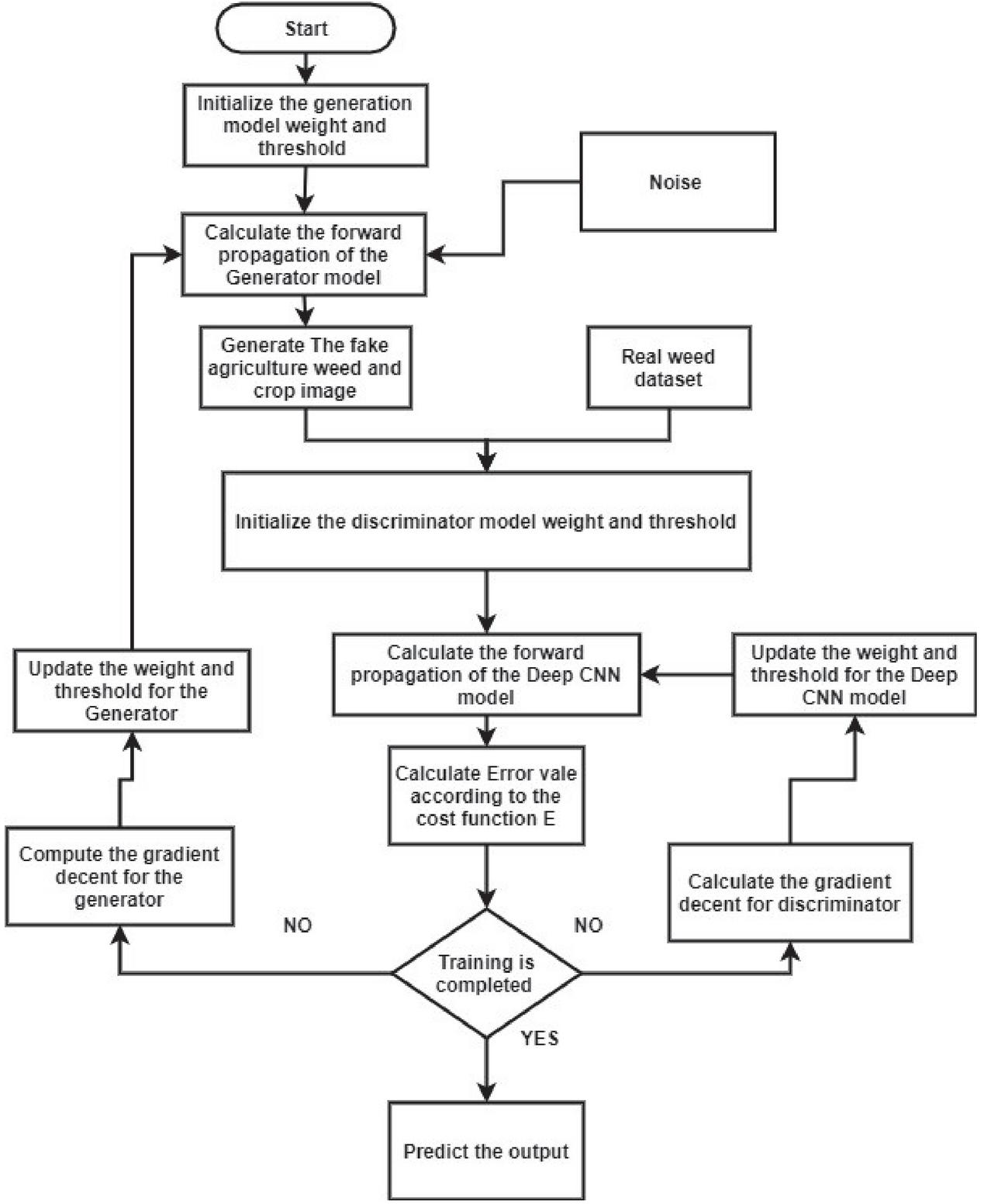

Figure 2 Proposed system workflow.

Figure 2 shows the proposed system workflow. A discriminative model attempts to classify input data: given the features of a data instance, it predicts a label or category to which that instance belongs. For instance, given weed and crop images, a discriminative model could predict whether an image shows a weed or not; "weed" is one of the labels. Expressed mathematically, the label is called y and the features are called x. The notation p(y|x) denotes "the probability of y given x", which here means "the probability that an image is a weed". Discriminative models therefore map features to labels and are concerned solely with that relationship. Generative models can be seen as doing the opposite: rather than predicting a label given certain features, they attempt to predict features given a certain label.
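The link between the two views is Bayes' rule: a generative model that stores p(y) and p(x|y) can recover the discriminative p(y|x) = p(x|y)p(y)/p(x). In the toy sketch below, the single feature (leaf shape), its values and all probabilities are hypothetical numbers chosen for illustration.

```python
from fractions import Fraction
from typing import Dict

# Hypothetical toy model with one binary feature x (leaf shape) and two labels y.
PRIOR: Dict[str, Fraction] = {"weed": Fraction(1, 2), "crop": Fraction(1, 2)}   # p(y)
LIKELIHOOD: Dict[str, Dict[str, Fraction]] = {                                  # p(x | y)
    "weed": {"narrow": Fraction(4, 5), "broad": Fraction(1, 5)},
    "crop": {"narrow": Fraction(1, 5), "broad": Fraction(4, 5)},
}


def posterior(x: str) -> Dict[str, Fraction]:
    """Discriminative p(y | x) recovered from the generative p(x | y) p(y) via Bayes' rule."""
    joint = {y: LIKELIHOOD[y][x] * PRIOR[y] for y in PRIOR}  # p(x, y)
    evidence = sum(joint.values())                           # p(x)
    return {y: joint[y] / evidence for y in joint}
```

The LIKELIHOOD table is what a generative model would sample from to produce features for a chosen label, while `posterior` answers the discriminative question of which label a given feature suggests.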

4 Results and Discussion

This section presents the experimental setup and discusses the results of GAN-DCNN weed detection in the agricultural field.

4.1 Comparative Techniques

The proposed weed detection method is compared with well-known image classification methods: AlexNet, VGG16, ResNet50 and GoogLeNet.

AlexNet: AlexNet is a classical convolutional neural network for image classification. It uses the non-saturating ReLU activation function [17].

VGG16: VGG16 improves on AlexNet by replacing large kernel-sized filters (11 and 5 in the first and second convolutional layers, respectively) with multiple 3×3 kernel-sized filters, one after another [18].

ResNet50: ResNet50 is a variant of the ResNet model with 48 convolution layers, one max-pooling layer and one average-pooling layer [19].

GoogLeNet: GoogLeNet is a CNN based on the Inception architecture. It uses convolutional layers and filters to classify images [20].
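The benefit of the stacked 3×3 filters in VGG16 can be checked with simple arithmetic: n stacked 3×3 convolutions (stride 1) cover the same (2n + 1) × (2n + 1) receptive field as one larger kernel, but with fewer weights. The sketch assumes C input and output channels per layer and ignores biases; C = 64 is an arbitrary example value.

```python
from typing import Tuple


def stacked_3x3(num_layers: int, channels: int) -> Tuple[int, int]:
    """(receptive field, weight count) of `num_layers` stacked 3x3 convs, stride 1, biases ignored."""
    receptive_field = 2 * num_layers + 1  # each extra 3x3 layer widens the view by 2 pixels
    weights = num_layers * 3 * 3 * channels * channels
    return receptive_field, weights


def single_kernel(size: int, channels: int) -> int:
    """Weight count of one size x size conv with the same channel counts, biases ignored."""
    return size * size * channels * channels


for n in (2, 3):
    field, stacked = stacked_3x3(n, channels=64)
    print(f"{n} x 3x3: field {field}x{field}, {stacked:,} weights "
          f"vs one {field}x{field}: {single_kernel(field, 64):,} weights")
```

For C = 64, two 3×3 layers need 73,728 weights versus 102,400 for one 5×5 layer, and three 3×3 layers need 110,592 versus 200,704 for one 7×7 layer, while also inserting extra nonlinearities between the layers.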

4.2 Data Set

The performance of the proposed GAN-DCNN method is evaluated using the DeepWeeds dataset.

DeepWeeds dataset: The DeepWeeds dataset contains 17,509 images for deep learning-based weed detection. Each image is 256 × 256 pixels, and each weed species has more than 1,000 images. Because the dataset provides a class label for each image, it is well suited to weed classification tasks. DeepWeeds was officially added to the TensorFlow Datasets catalogue in August 2019 [21].

4.3 Experimental Setup

The proposed GAN-DCNN weed detection model is implemented in Python. We used a single computer node with 16 GB of random access memory, the Ubuntu operating system and an Intel Core i7 processor.

4.4 Comparative Result Discussion

This subsection discusses the comparative results of the GAN-DCNN weed detection model. The conventional weed detection models and the proposed GAN-DCNN model are analyzed by varying the percentages of training and testing data.
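The training/testing percentages are not listed, so the ratios below (60 to 90 percent training) are hypothetical. The sketch shows one reproducible way to vary the split over the 17,509 DeepWeeds images from Section 4.2 using only the Python standard library; `split_indices` is a hypothetical helper, and a per-class (stratified) split would be a natural refinement.

```python
import random
from typing import List, Tuple

NUM_IMAGES = 17_509  # size of DeepWeeds reported in Section 4.2


def split_indices(n: int, train_fraction: float, seed: int = 0) -> Tuple[List[int], List[int]]:
    """Shuffle image indices reproducibly and cut them into train and test sets."""
    if not 0.0 < train_fraction < 1.0:
        raise ValueError("train_fraction must be strictly between 0 and 1")
    indices = list(range(n))
    random.Random(seed).shuffle(indices)  # fixed seed -> same split on every run
    cut = round(n * train_fraction)
    return indices[:cut], indices[cut:]


for fraction in (0.6, 0.7, 0.8, 0.9):
    train, test = split_indices(NUM_IMAGES, fraction)
    print(f"{fraction:.0%} training: {len(train)} train / {len(test)} test images")
```

Using a seeded `random.Random` instance keeps each split identical across runs, so every model is compared on the same test images.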

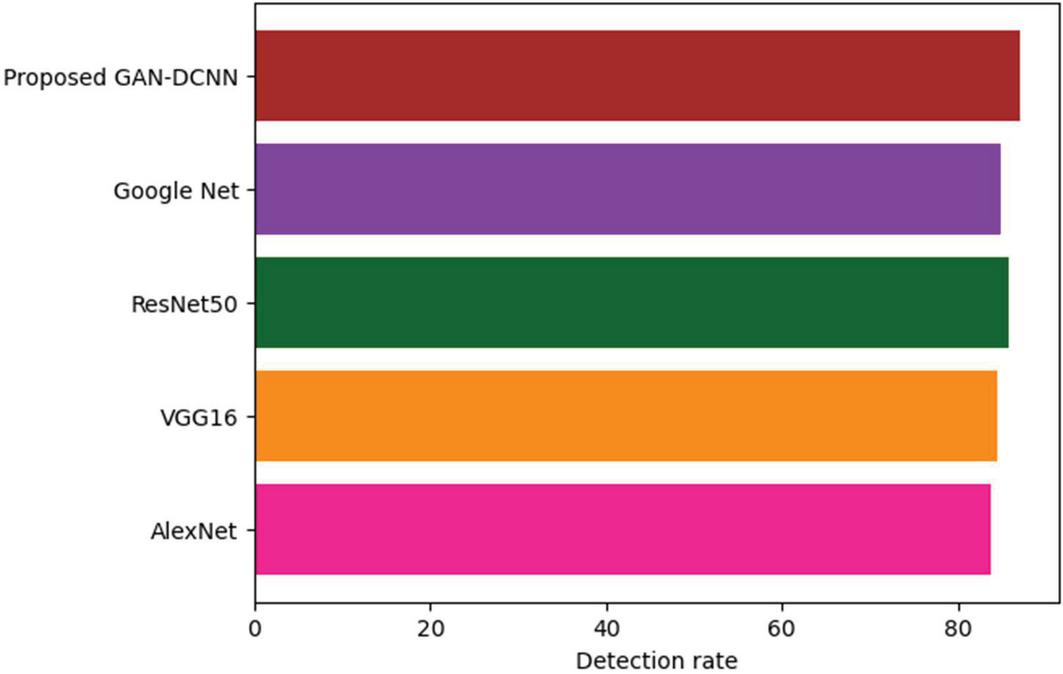

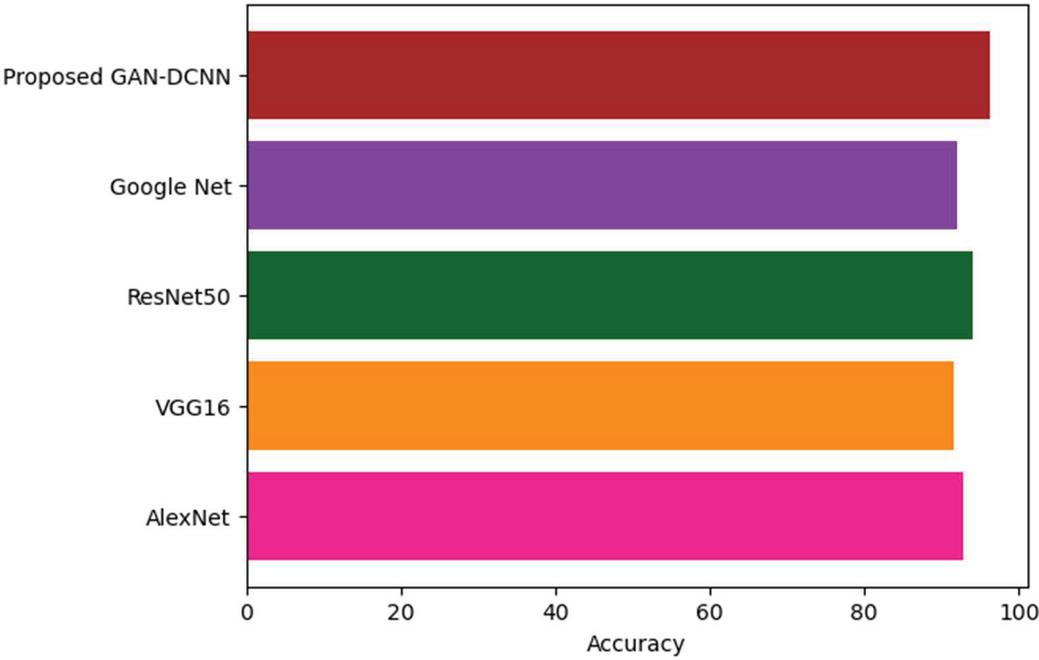

The comparative analysis of AlexNet, VGG16, ResNet50 and GoogLeNet against GAN-DCNN on the DeepWeeds dataset is presented in Table 1.

Table 1 Comparative analysis

| Method | Detection rate (%) | Accuracy (%) | Recall (%) | Precision (%) |
|---|---|---|---|---|
| AlexNet | 83.79 | 92.77 | 93.30 | 84.62 |
| VGG16 | 84.41 | 91.53 | 92.56 | 79.34 |
| ResNet50 | 85.82 | 94.12 | 91.22 | 80.90 |
| GoogLeNet | 84.89 | 91.90 | 89.23 | 81.22 |
| Proposed GAN-DCNN | 87.12 | 96.34 | 94.78 | 87.23 |
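Table 1 reports accuracy, recall and precision as percentages. For a binary weed / no-weed decision, these follow from a confusion matrix as sketched below. The counts are hypothetical, and "detection rate" is not defined in the text, so it is not computed here.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    tp: int  # weed images predicted as weed
    fp: int  # weed-free images predicted as weed
    fn: int  # weed images predicted as weed-free
    tn: int  # weed-free images predicted as weed-free

    def accuracy(self) -> float:
        return (self.tp + self.tn) / (self.tp + self.fp + self.fn + self.tn)

    def precision(self) -> float:
        return self.tp / (self.tp + self.fp)

    def recall(self) -> float:
        return self.tp / (self.tp + self.fn)


cm = Confusion(tp=90, fp=10, fn=5, tn=95)  # hypothetical counts
print(f"accuracy {100 * cm.accuracy():.2f}%, precision {100 * cm.precision():.2f}%, "
      f"recall {100 * cm.recall():.2f}%")
```

Multiplying by 100 gives values on the same percentage scale as Table 1.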

Figure 3 Comparative analysis of detection rate for the different models.

Figure 3 shows the detection rates of AlexNet, VGG16, GoogLeNet, ResNet50 and GAN-DCNN. The X-axis refers to the detection rate, and the Y-axis refers to the different image detection models. The existing AlexNet, VGG16, ResNet50 and GoogLeNet models have detection rates of 83.79, 84.41, 85.82 and 84.89, respectively. GAN-DCNN improves the detection rate to 87.12, which we attribute to the GAN being used to enrich the training dataset. AlexNet uses ReLU activations [17] and addresses overfitting with dropout layers, but its detection rate remains below that of the proposed model. VGG16 has far more trainable parameters than GAN-DCNN, GoogLeNet and ResNet50, and this large number of parameters contributes to its lower detection rate.

Figure 4 shows the accuracy of AlexNet, VGG16, GoogLeNet, ResNet50 and GAN-DCNN. The existing AlexNet, VGG16, ResNet50 and GoogLeNet models have accuracies of 92.77, 91.53, 94.12 and 91.90, respectively, and GAN-DCNN improves the accuracy to 96.34. GAN-DCNN uses two types of filters: small filters detect fine-grained features in the input image, and large filters extract coarse-grained features. Because GAN-DCNN captures both small and large features in the weed image, it achieves better accuracy than the existing models.

Figure 4 Comparative analysis of accuracy for the different models.

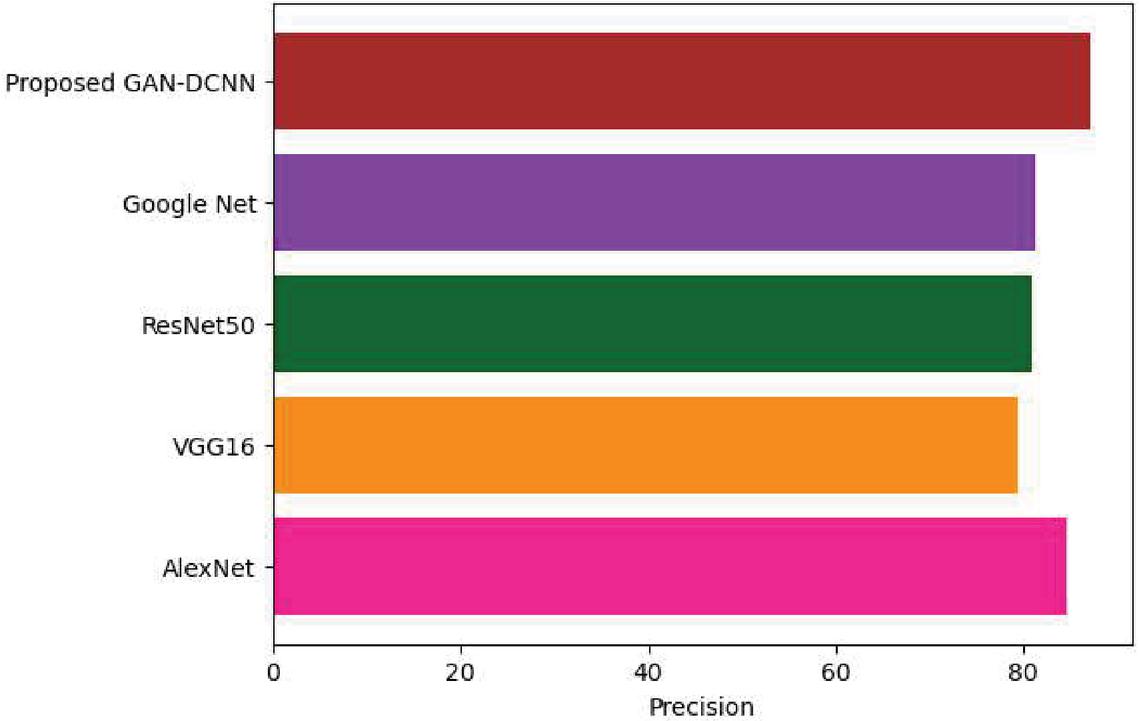

Figure 5 Comparative analysis of precision for the different models.

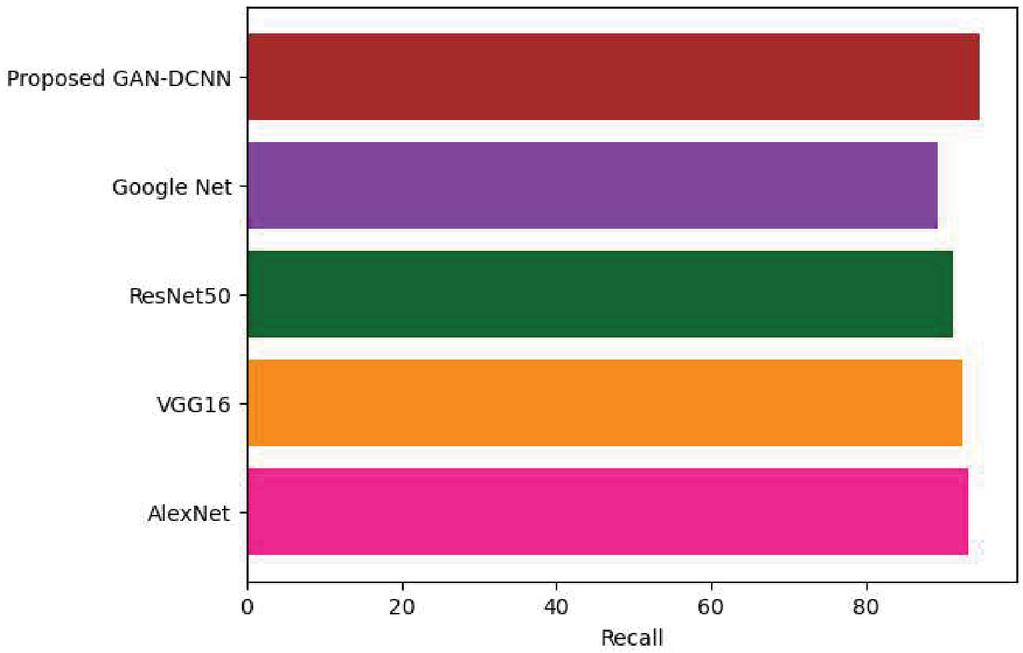

Figure 6 Comparative analysis of recall for the different models.

Figure 5 shows the precision of AlexNet, VGG16, GoogLeNet, ResNet50 and GAN-DCNN. The existing AlexNet, VGG16, ResNet50 and GoogLeNet models have precisions of 84.62, 79.34, 80.90 and 81.22, respectively, and GAN-DCNN improves the precision to 87.23. ResNet50 uses shortcut (skip) connections to mitigate the vanishing gradient problem, so it achieves better precision than VGG16, which has no such connections and is therefore harder to train as its depth grows. VGG16 also requires a long training time because of the many parameters in the model.
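The margins of GAN-DCNN over the strongest baseline can be recomputed directly from the values reported in Table 1; the snippet below performs only arithmetic on those published numbers.

```python
from typing import Dict, Tuple

# Values as reported in Table 1: (detection rate, accuracy, recall, precision).
BASELINES = {
    "AlexNet": (83.79, 92.77, 93.30, 84.62),
    "VGG16": (84.41, 91.53, 92.56, 79.34),
    "ResNet50": (85.82, 94.12, 91.22, 80.90),
    "GoogLeNet": (84.89, 91.90, 89.23, 81.22),
}
GAN_DCNN = (87.12, 96.34, 94.78, 87.23)
METRICS = ("detection rate", "accuracy", "recall", "precision")


def margins() -> Dict[str, Tuple[str, float]]:
    """For each metric: (best baseline, GAN-DCNN minus that baseline, in percentage points)."""
    result = {}
    for idx, metric in enumerate(METRICS):
        best = max(BASELINES, key=lambda name: BASELINES[name][idx])
        result[metric] = (best, GAN_DCNN[idx] - BASELINES[best][idx])
    return result


for metric, (best, gain) in margins().items():
    print(f"{metric}: best baseline {best}, GAN-DCNN ahead by {gain:.2f} points")
```

ResNet50 is the strongest baseline for detection rate and accuracy, and AlexNet for recall and precision; GAN-DCNN leads them by 1.30, 2.22, 1.48 and 2.61 percentage points, respectively.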

Figure 6 shows the comparative analysis of recall for the different weed detection models. Although ReLU helps with the vanishing gradient problem, the learned activations can become unnecessarily large because ReLU is unbounded. To prevent this, AlexNet introduced Local Response Normalization (LRN). The idea behind LRN is to normalize each activation over a neighbourhood (in AlexNet, adjacent channels at the same pixel), enhancing the excited neuron while damping the surrounding neurons. AlexNet (93.30) performs better than GoogLeNet, ResNet50 and VGG16 in terms of recall, while GAN-DCNN achieves the highest recall overall (94.78).
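A minimal sketch of cross-channel LRN at one pixel, using the constants published for AlexNet [17] (k = 2, n = 5, α = 10⁻⁴, β = 0.75) as defaults. Each activation is divided by a term that grows with the squared activations of its neighbouring channels, so a channel whose neighbours are strongly active is scaled down more. The activation values in the test are hypothetical.

```python
from typing import List, Sequence


def local_response_norm(acts: Sequence[float], n: int = 5, k: float = 2.0,
                        alpha: float = 1e-4, beta: float = 0.75) -> List[float]:
    """Cross-channel LRN at a single pixel, with AlexNet's published constants as defaults.

    acts[i] is the (ReLU) activation of channel i at that pixel. Each value is divided by
    (k + alpha * sum of squares over the n channels centred on i) ** beta.
    """
    last = len(acts) - 1
    out = []
    for i, a in enumerate(acts):
        lo, hi = max(0, i - n // 2), min(last, i + n // 2)
        energy = sum(acts[j] ** 2 for j in range(lo, hi + 1))
        out.append(a / (k + alpha * energy) ** beta)
    return out
```

Because the denominator depends on neighbouring channels, a strongly excited channel suppresses the normalized output of the channels around it, which is the lateral-inhibition effect described above.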

5 Conclusion and Future Work

This paper presents a weed detection model using a generative adversarial network and a deep convolutional neural network. The proposed system combines the advantages of GAN and DCNN to detect weeds in agricultural fields. The generator model generates new images from the DeepWeeds dataset, and the discriminator uses a deep CNN model to detect whether an image contains weed. The GAN-DCNN model is evaluated on the DeepWeeds dataset. The simulation results confirm that the proposed model performs better than the existing AlexNet, VGG16, ResNet50 and GoogLeNet models, achieving 87.12, 96.34, 94.78 and 87.23 for detection rate, accuracy, recall and precision, respectively. In the future, this work can be extended by applying the model to weed detection in different locations, soils and fields.

References

[1] J. Du, Y. Liu, and Z. Liu, “Study of precipitation forecast based on deep belief networks,” Algorithms, vol. 11, no. 9, pp. 1–11, 2018, DOI: 10.3390/a11090132.

[2] C. Wang et al., “Pulmonary image classification based on inception-v3 transfer learning model,” IEEE Access, vol. 7, pp. 146533–146541, 2019, DOI: 10.1109/ACCESS.2019.2946000.

[3] F. Liu, Y. Yang, Y. Zeng, and Z. Liu, “Bending diagnosis of rice seedling lines and guidance line extraction of automatic weeding equipment in paddy field,” Mech. Syst. Signal Process., vol. 142, no. 381, p. 106791, 2020, DOI: 10.1016/j.ymssp.2020.106791.

[4] A. Wang, Y. Xu, X. Wei, and B. Cui, “Semantic Segmentation of Crop and Weed using an Encoder-Decoder Network and Image Enhancement Method under Uncontrolled Outdoor Illumination,” IEEE Access, vol. 8, pp. 81724–81734, 2020, DOI: 10.1109/ACCESS.2020.2991354.

[5] M. D. Bah, A. Hafiane, and R. Canals, “CRowNet: Deep Network for Crop Row Detection in UAV Images,” IEEE Access, vol. 8, pp. 5189–5200, 2020, DOI: 10.1109/ACCESS.2019.2960873.

[6] S. L. Madsen, M. Dyrmann, R. N. Jørgensen, and H. Karstoft, “Generating artificial images of plant seedlings using generative adversarial networks,” Biosyst. Eng., vol. 187, pp. 147–159, 2019, DOI: 10.1016/j.biosystemseng.2019.09.005.

[7] S. P. Adhikari, G. Kim, and H. Kim, “Deep neural network-based system for autonomous navigation in paddy field,” IEEE Access, vol. 8, pp. 71272–71278, 2020, DOI: 10.1109/ACCESS.2020.2987642.

[8] V. Maeda-Gutiérrez et al., “Comparison of convolutional neural network architectures for classification of tomato plant diseases,” Appl. Sci., vol. 10, no. 4, 2020, DOI: 10.3390/app10041245.

[9] H. Jiang, C. Zhang, Y. Qiao, Z. Zhang, W. Zhang, and C. Song, “CNN feature-based graph convolutional network for weed and crop recognition in smart farming,” Comput. Electron. Agric., vol. 174, p. 105450, 2020, DOI: 10.1016/j.compag.2020.105450.

[10] A. Abdalla et al., “Fine-tuning convolutional neural network with transfer learning for semantic segmentation of ground-level oilseed rape images in a field with high weed pressure,” Comput. Electron. Agric., vol. 167, p. 105091, 2019, DOI: 10.1016/j.compag.2019.105091.

[11] R. Raja, T. T. Nguyen, D. C. Slaughter, and S. A. Fennimore, “Real-time weed-crop classification and localization technique for robotic weed control in lettuce,” Biosyst. Eng., vol. 192, pp. 257–274, 2020, DOI: 10.1016/j.biosystemseng.2020.02.002.

[12] J. Gao, A. P. French, M. P. Pound, Y. He, T. P. Pridmore, and J. G. Pieters, “Deep convolutional neural networks for image-based Convolvulus sepium detection in sugar beet fields,” Plant Methods, vol. 16, no. 1, pp. 1–12, 2020, DOI: 10.1186/s13007-020-00570-z.

[13] J. You, W. Liu, and J. Lee, “A DNN-based semantic segmentation for detecting weed and crop,” Comput. Electron. Agric., vol. 178, p. 105750, 2020, DOI: 10.1016/j.compag.2020.105750.

[14] M. H. Asad and A. Bais, “Weed detection in canola fields using maximum likelihood classification and deep convolutional neural network,” Inf. Process. Agric., vol. 7, no. 4, pp. 535–545, 2020, DOI: 10.1016/j.inpa.2019.12.002.

[15] A. dos Santos Ferreira, D. M. Freitas, G. G. da Silva, H. Pistorius, and M. T. Folhes, “Unsupervised deep learning and semi-automatic data labelling in weed discrimination,” Comput. Electron. Agric., vol. 165, p. 104963, 2019, DOI: 10.1016/j.compag.2019.104963.

[16] K. Hu, G. Coleman, S. Zeng, Z. Wang, and M. Walsh, “Graph weeds net: A graph-based deep learning method for weed recognition,” Comput. Electron. Agric., vol. 174, p. 105520, 2020, DOI: 10.1016/j.compag.2020.105520.

[17] A. Krizhevsky, I. Sutskever, and G. E. Hinton, “ImageNet classification with deep convolutional neural networks,” in Advances in Neural Information Processing Systems 25 (NIPS 2012), pp. 1097–1105, 2012.

[18] K. Simonyan and A. Zisserman, “Very deep convolutional networks for large-scale image recognition,” 3rd Int. Conf. Learn. Represent. ICLR 2015 – Conf. Track Proc., pp. 1–14, 2015.

[19] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” Proc. IEEE Comput. Soc. Conf. Comput. Vis. Pattern Recognit., pp. 770–778, 2016, DOI: 10.1109/CVPR.2016.90.

[20] C. Szegedy et al., “Going deeper with convolutions,” Proc. IEEE Comput. Soc. Conf. Comput. Vis. Pattern Recognit., pp. 1–9, 2015, DOI: 10.1109/CVPR.2015.7298594.

[21] A. Olsen et al., “DeepWeeds: A Multiclass Weed Species Image Dataset for Deep Learning,” Sci. Rep., vol. 9, no. 1, pp. 1–12, 2019, DOI: 10.1038/s41598-018-38343-3.

[22] I. Sa et al., “WeedNet: Dense Semantic Weed Classification Using Multispectral Images and MAV for Smart Farming,” IEEE Robot. Autom. Lett., vol. 3, no. 1, pp. 588–595, 2018, DOI: 10.1109/LRA.2017.2774979.

Biographies

S. Anthoniraj received his BE in Computer Science Engineering from Anna University, Chennai, his ME in Computer Science Engineering from Vinayaka Mission University, Salem, and his PhD in Server Virtualization from Manonmaniam Sundaranar University, Tirunelveli, Tamil Nadu. He is working as a Professor in Computer Science Engineering at MVJ College of Engineering, Whitefield, Bangalore. His areas of interest include Virtualization, Data Science, Machine Learning, Java Programming, Internet Programming, Computer Networks, and open-source tools and components.

P. Karthikeyan obtained his Bachelor of Engineering (B.E.) in Computer Science and Engineering from Anna University, Chennai, Tamil Nadu, India, in 2005 and his Master of Engineering (M.E.) in Computer Science and Engineering from Anna University, Coimbatore, India, in 2009. He completed his Ph.D. at Anna University, Chennai, in 2018. He is skilled in developing projects and carrying out research in cloud computing and data science, with programming skills in Java, Python, R and C. He has published more than 20 papers in international journals with good impact factors and presented at more than 10 international conferences. He has been a reviewer for Elsevier, Springer, Inderscience and reputed Scopus-indexed journals. He serves as an editorial board member of EAI Endorsed Transactions on Energy Web, The International Arab Journal of Information Technology and the Blue Eyes Intelligence Engineering and Sciences Publication journal.

V. Vivek is a dedicated educationist with 13 years of experience in teaching and research. His areas of expertise include Distributed Systems, Cloud Computing, Computer Networks and Agent-based Computing. As a continuous learner and researcher, he has published research articles in leading journals (SCI and Scopus) and has been a resource person for various guest lectures. Dr. V. Vivek is currently working as a Program Coordinator in the Dept. of CSE (AI&ML) and (Cybersecurity) at FET-JAIN (Deemed-to-be University), City campus.

Journal of Mobile Multimedia, Vol. 18_2, 275–292.

doi: 10.13052/jmm1550-4646.1826

© 2021 River Publishers