A Deep Learning Based Social Distance Analyzer with Person Detection and Tracking Using Region Based Convolutional Neural Networks for Novel Coronavirus

N. Prabakaran*, Suvarna Sree Sai Kumar, P. Kranthi Kiran and Pabbidi Supriya

School of Computer Science and Engineering (SCOPE), Vellore Institute of Technology, Vellore, Tamil Nadu, India-632014

E-mail: dhoni.praba@gmail.com; s.sreesaikumar@gmail.com; kranthi97.ssk@gmail.com; supriyareddypabbidi98@gmail.com

*Corresponding Author

Received 26 July 2021; Accepted 08 September 2021; Publication 11 January 2022

Abstract

With its rapid spread, the ongoing novel coronavirus (COVID-19) outbreak has caused a worldwide crisis. The population's vulnerability grows because of the absence of effective therapeutic drugs and the lack of immunity against the virus. Since no vaccines are available at present, social distancing is regarded as an adequate precaution (norm) against transmission of the disease. With the rise in cases, the government has mandated a minimum physical separation of 2 meters in all public spaces as a safety measure. Using computer vision on video surveillance, we built an AI tool to help prevent the spread of the novel coronavirus: a social distancing analyzer that uses video monitoring from CCTV cameras and drones to enforce the social distancing protocol. The tool was installed in public spaces and checked the distance between groups of people. If the gap was too small, a red line appeared, indicating a higher risk of infection; a green line indicated a safe distance, and a yellow line indicated a low risk of infection.

Keywords: Convolutional neural network (CNN), YOLO V3, region-based convolutional neural network (RCNN), CCTV cameras, drones, novel coronavirus.

1 Introduction

As the novel coronavirus spread across the world, the world responded. Although we do not know everything about the coronavirus, we do know a good deal about it. We have found that social distancing is an important part of keeping the disease from spreading. Most people refer to it as "social distancing," but it is better thought of as "physical distancing": people are kept physically apart. People who are infected are less likely to pass the virus on if they stay away from others. When someone breathes, talks, coughs, or sneezes, tiny droplets are released into the air, and these spread the virus. The droplets can land on other people's noses and mouths, or they can be inhaled. When there are at least 6 feet between people, these droplets are more likely to fall to the ground than onto others. Smaller droplets can, less frequently, remain in the air for minutes to hours; these are referred to as aerosols. Since sick people do not always show symptoms, it is best to keep a healthy distance when you are around people you do not know.

In several states, businesses have reopened and leisure activities have resumed. As a result, you may be wondering how to keep a safe distance from others when you leave the house. Here are some family-friendly suggestions: Keep at least 6 feet from others and wear a mask whether you are outside or inside. When taking public transit, sit or stand at least 6 feet from other passengers. For food orders and shopping, use the drive-through or curbside pickup whenever possible. Another way to stay away from others is to shop online from home. Where possible, use outdoor venues for dining or events, which encourages people to spread out.

Coronaviruses are a large family of viruses that can infect people and animals. Many social distancing measures are taken in public places, for example cross marks on seats that keep people from sitting next to one another; with a minimum social distance of at least 2 meters between people, there would be a decrease in COVID cases. The proposed paper focuses on activities to mitigate the spread of COVID-19. It is mainly used to track people's walking patterns, and it serves as a means of identifying crowds in public places as well as on highways. The person detection algorithm is mainly used to accurately determine people's proximity to one another as well as the distance between them.

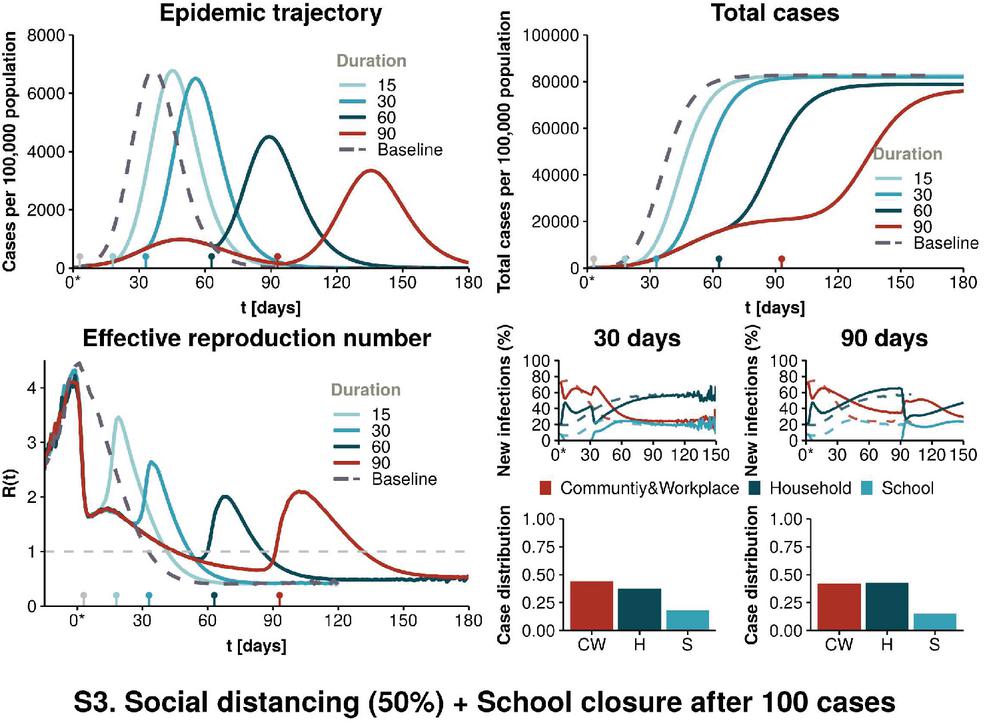

Figure 1 Overview of COVID cases.

Figure 1 shows a drastic decrease in cases after social distancing was implemented through the closure of 50% of schools. A minimum distance of 6 feet is necessary to reduce the spread of the virus. In view of this, governments have implemented social distancing in almost all parts of the world, especially in places where case numbers were very high, and this has reduced the spread of the virus.

In the proposed paper, we use computer vision on video surveillance to build an AI technique that helps prevent the spread of the coronavirus. For human detection, a deep learning model called YOLOv3 (You Only Look Once) is used. Transfer learning is also used to increase the detection model's efficiency. The detection model detects humans and provides bounding box information. After human detection, the Euclidean distance between each pair of detected centroids is calculated using the detected bounding boxes and their centroid information. When people are walking on public roads, the AI-based tool displays three colours: green, yellow, and red. If the distance between people is greater than 2 meters, it shows green, indicating safety; if they are very close to one another, it shows red, indicating a high risk; and if they are somewhat close to one another, it shows yellow, indicating a low risk. These connecting lines show people's proximity, and they are summarized using total counts as well as low-risk, high-risk, and safe counts.
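The pairwise distance check and three-colour labelling described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the box format `(x_min, y_min, x_max, y_max)`, the `metres_per_pixel` calibration factor, and the 1-meter cut-off between red and yellow are assumptions introduced for the example; only the 2-meter safe distance comes from the text.

```python
from itertools import combinations
from math import dist

SAFE_DISTANCE_M = 2.0       # minimum separation stated in the paper
HIGH_RISK_DISTANCE_M = 1.0  # hypothetical cut-off between "red" and "yellow"


def centroid(box):
    """Return the centre (x, y) of a box given as (x_min, y_min, x_max, y_max)."""
    x_min, y_min, x_max, y_max = box
    return ((x_min + x_max) / 2.0, (y_min + y_max) / 2.0)


def classify_pairs(boxes, metres_per_pixel):
    """Label every pair of detections as 'green', 'yellow', or 'red'.

    metres_per_pixel is an assumed camera calibration factor that converts
    pixel distances in the frame to approximate physical distances.
    """
    centres = [centroid(b) for b in boxes]
    labels = {}
    for i, j in combinations(range(len(centres)), 2):
        distance_m = dist(centres[i], centres[j]) * metres_per_pixel
        if distance_m >= SAFE_DISTANCE_M:
            labels[(i, j)] = "green"   # safe
        elif distance_m >= HIGH_RISK_DISTANCE_M:
            labels[(i, j)] = "yellow"  # low risk
        else:
            labels[(i, j)] = "red"     # high risk
    return labels


def risk_counts(labels):
    """Summarise the pair labels as safe, low-risk, and high-risk counts."""
    values = list(labels.values())
    return {
        "safe": values.count("green"),
        "low_risk": values.count("yellow"),
        "high_risk": values.count("red"),
    }
```

A single scale factor is only a rough approximation; it fits an overhead camera better than an oblique one, where perspective makes the pixel-to-meter ratio vary across the frame.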

2 Related Works

The number of cases increased significantly over the course of a month, with 2,000 to 4,000 new confirmed cases recorded each day in the first week of February 2020. From that point forward there was evidence of relief, and for the first time no new cases were found for five days, up until March 23, 2020. This is attributed to the practice of social distancing, which began in China and was subsequently adopted by the rest of the world to fight the novel coronavirus. Ainslie et al. (2020) investigated the link between a region's economic condition and the degree of social distancing. The study found that moderate levels of activity could be tolerated while still avoiding a large outbreak. Many countries have used technology-based solutions to fight pandemic losses so far (Punn et al., 2020). Several developed countries are using GPS technology to monitor the movements of infected and suspected individuals. Other work gives an overview of various emerging technologies, such as Bluetooth, Wi-Fi, mobile phone positioning (localization), computer vision, and deep learning, that can be useful in a variety of practical social distancing scenarios. Drones and other surveillance cameras are being used by some researchers to spot crowds. So far, researchers have put a great deal of effort into detecting pandemics (Yash Chaudhary and Mehta, 2020), and some have devised a smart healthcare system for pandemics based on the Internet of Medical Things (Chakraborty, 2021). The effect of social distancing on the spread of the novel coronavirus outbreak was studied by Prem (2020). According to the findings, early and immediate social distancing may help to reduce the peak of the virus attack.

While social distancing is essential for flattening the infection curve, it is, as we all know, an economically painful measure. Researchers use surveillance recordings together with computer vision, machine learning, and deep learning-based methods to provide useful solutions for social distance measurement. One such study used a frontal-view data set from the Open Images Dataset (OID) repository, and its authors compared the results with SSD and Faster RCNN. Ramadass et al. (2020) built a social distance monitoring model based on an autonomous drone. They used a custom data set to train the YOLOv3 model. The data set consists of frontal and side views of a small number of people. The work is also extended to include face mask monitoring: the YOLOv3 algorithm and the drone camera help detect social distance and spot people wearing masks in public from the side or front. For physical distancing and crowd management, Pouw et al. (2020) proposed an efficient graph-based monitoring system. Human detection has also been carried out in crowded situations, with a system intended for people who do not keep to a six-foot social distance limit between them. We concluded that researchers have put a great deal of effort into monitoring social distance in public spaces. However, most of the work focuses on frontal or side-view camera perspectives. Accordingly, in this paper we present an overhead-view social distance monitoring framework that gives a larger field of view and overcomes occlusion problems, thereby playing a key role in social distance measurement to compute the interaction among people.

YOLOv3 is a powerful object detection model because it is fast and has accuracy comparable to the best two-stage detectors (at 0.5 IoU). Object detection applications in domains such as media, retail, manufacturing, and robotics need models to be fast (a small sacrifice in accuracy is acceptable), yet YOLOv3 is also very accurate. This makes it the best model to use in applications where speed is critical, either because results must be delivered in real time or because the data volume is simply too large. Other applications, such as security or autonomous driving, require a high level of model accuracy because of the sensitive nature of the domain, where errors can cost lives.

Different researchers have done a considerable amount of work employing various machine learning algorithms in vision-based systems for monitoring crowded areas, but very little effort has been put into developing an efficient framework that uses deep learning-based algorithms for real-world unit distance mapping. Therefore, inspired by the works above, we have developed a system that offers a better view of the scene and overcomes occlusion concerns, so that it can play a pivotal role in social distance monitoring tasks and help in this deadly situation.

3 Overview of Object Detection

Objects can be found everywhere. Accordingly, object detection and image classification are two of the most popular computer vision tasks. They are used in the military, healthcare, sports, and the space industry, among other fields. The essential difference between the two tasks is that image classification recognizes an object in an image, while object detection identifies both the object and its position. In terms of functionality, object tracking and object detection are very similar: both involve locating and identifying the object. The only difference is the kind of data involved: object detection works on images, while object tracking works on videos.

4 R-CNN for Object Detection

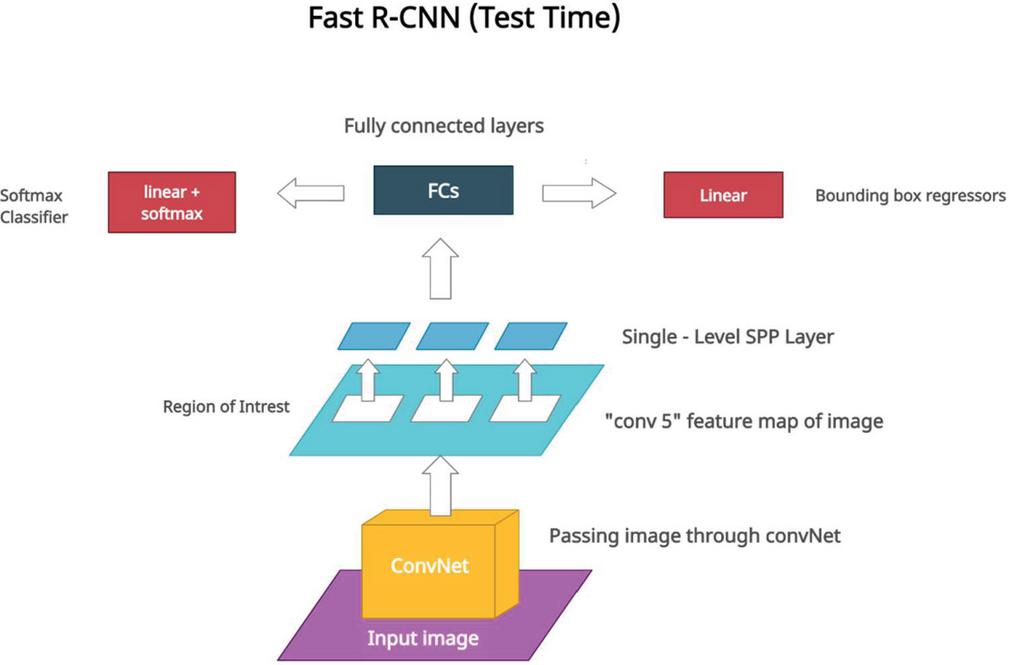

Many algorithms for selecting a Region of Interest (ROI) have been proposed. Objectness, selective search, and category-independent object proposals are among the best known. R-CNN was therefore proposed with the aim of using such an external region proposal method. R-CNN stands for Region-based Convolutional Neural Network. It selects regions using one of the external region proposal algorithms. A modest change to the R-CNN workflow, known as Fast R-CNN, was proposed to reduce inference time. The change was made to the feature extraction for region proposals: in R-CNN, feature extraction happens for every region proposal, whereas in Fast R-CNN it happens only once per original image.

Figure 2 Test time of Faster R-CNN.

Workflow of the Model for an Input Image

1. Select Regions of Interest (ROI) using an external region proposal algorithm

2. Pass the image to the CNN

3. Extract the features of the image

4. Pick the relevant ROI features using the location of each ROI

5. For every ROI feature, pass the features to a classifier and a regressor

During inference, Fast R-CNN takes almost 2 seconds per test image and is many times faster than R-CNN. The change in ROI feature extraction is the reason, as shown in Figure 2. For instance, if an image has a total of 2000 proposals, the number of forward passes through the CNN is just 1, rather than one per proposal.
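The saving in forward passes can be illustrated with a toy sketch. Everything here is a stand-in chosen for the example: `backbone` is an identity function that only counts how often it is called, and ROIs are given as `(row_start, col_start, row_end, col_end)` on a small grid. A real CNN backbone downsamples the image, so ROI coordinates would have to be scaled onto the feature map, and the two styles would not produce identical features.

```python
def backbone(image, counter):
    """Stand-in for a CNN forward pass: counts calls, returns the input unchanged."""
    counter["forward_passes"] += 1
    return image


def crop(grid, roi):
    """Cut the rectangle (row_start, col_start, row_end, col_end) out of a 2-D list."""
    r0, c0, r1, c1 = roi
    return [row[c0:c1] for row in grid[r0:r1]]


def rcnn_style(image, rois, counter):
    """R-CNN style: crop each proposal first, then run the backbone on every crop."""
    return [backbone(crop(image, roi), counter) for roi in rois]


def fast_rcnn_style(image, rois, counter):
    """Fast R-CNN style: run the backbone once, then crop ROI features from its output."""
    feature_map = backbone(image, counter)
    return [crop(feature_map, roi) for roi in rois]


image = [[r * 8 + c for c in range(8)] for r in range(8)]
rois = [(0, 0, 4, 4)] * 2000  # 2000 proposals, as in the example in the text

slow_counter = {"forward_passes": 0}
fast_counter = {"forward_passes": 0}
rcnn_style(image, rois, slow_counter)
fast_rcnn_style(image, rois, fast_counter)
print(slow_counter["forward_passes"], fast_counter["forward_passes"])
```

With 2000 proposals the first style calls the backbone 2000 times and the second calls it once, which is the source of the speed-up described in the text.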

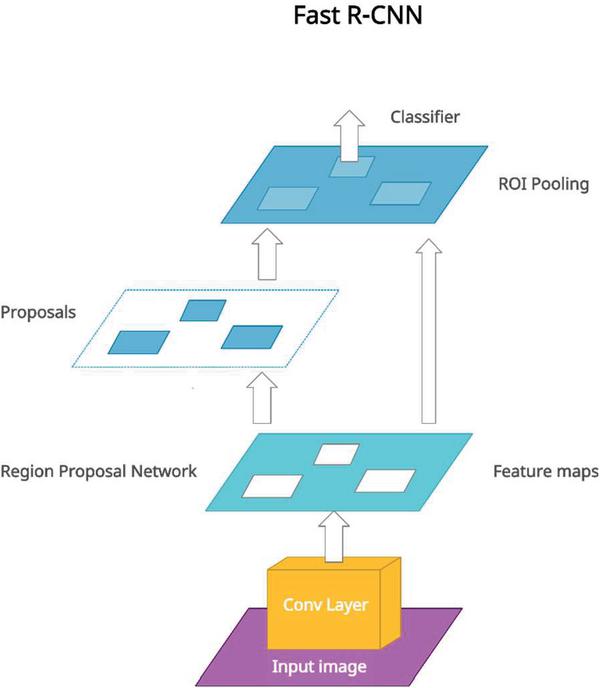

Faster R-CNN for Object Detection

Faster R-CNN replaces the external region proposal algorithm with a Region Proposal Network (RPN). The RPN learns to propose the regions of interest, which in turn saves a great deal of time and computation compared with Fast R-CNN.

5 Proposed Work

The Euclidean distance is used to estimate the distance between each pair of detected bounding box centroids in order to monitor the social distance between people. A pixel-to-distance approximation is used to define a social distance violation threshold. A centroid tracking algorithm is used to track people who cross the social distance threshold. The performance of pre-trained YOLOv3 is evaluated on an overhead data set to see how well it performs. The detection framework's performance is assessed both with and without transfer learning. The performance of the model is also compared with that of other deep learning models. YOLOv3 is used for human detection in this work because it improves predictive accuracy, particularly for small-scale objects. The key advantage is that the network structure has been adapted for multi-scale object detection. Moreover, instead of using softmax, it uses a set of independent logistic classifiers for object classification. Feature learning is performed by the convolutional layers, which are organized into residual blocks. The blocks are made up of several convolutional layers and skip connections. The model's distinctive property is that it performs detection at three different scales, as shown in Figure 3.
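The centroid-based tracking step mentioned above can be sketched as a greedy nearest-centroid matcher. The paper does not give the details of its tracker, so this is only one plausible reading: the class name `CentroidTracker`, the `max_match_distance` parameter, and the choice to drop a track as soon as it is not matched are all assumptions made for the example.

```python
from math import dist


class CentroidTracker:
    """Greedy nearest-centroid tracker (illustrative sketch, not the authors' code)."""

    def __init__(self, max_match_distance=50.0):
        # Largest pixel distance at which a detection may continue a track
        self.max_match_distance = max_match_distance
        self.next_id = 0
        self.tracks = {}  # track id -> last known centroid

    def update(self, centroids):
        """Assign each centroid of the current frame to a track id."""
        unmatched = dict(self.tracks)
        updated = {}
        for c in centroids:
            best_id = None
            best_distance = self.max_match_distance
            for track_id, previous in unmatched.items():
                d = dist(c, previous)
                if d <= best_distance:
                    best_id, best_distance = track_id, d
            if best_id is None:
                # No existing track is close enough: start a new one
                best_id = self.next_id
                self.next_id += 1
            else:
                # Each existing track can be continued by one detection only
                del unmatched[best_id]
            updated[best_id] = c
        # Tracks without a match in this frame are dropped (a simplification)
        self.tracks = updated
        return updated
```

Calling `update` once per frame with the centroids of the detected bounding boxes keeps a stable identifier for each person, so a violation can be attributed to the same individual across frames.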

Figure 3 Faster R-CNN for object detection.

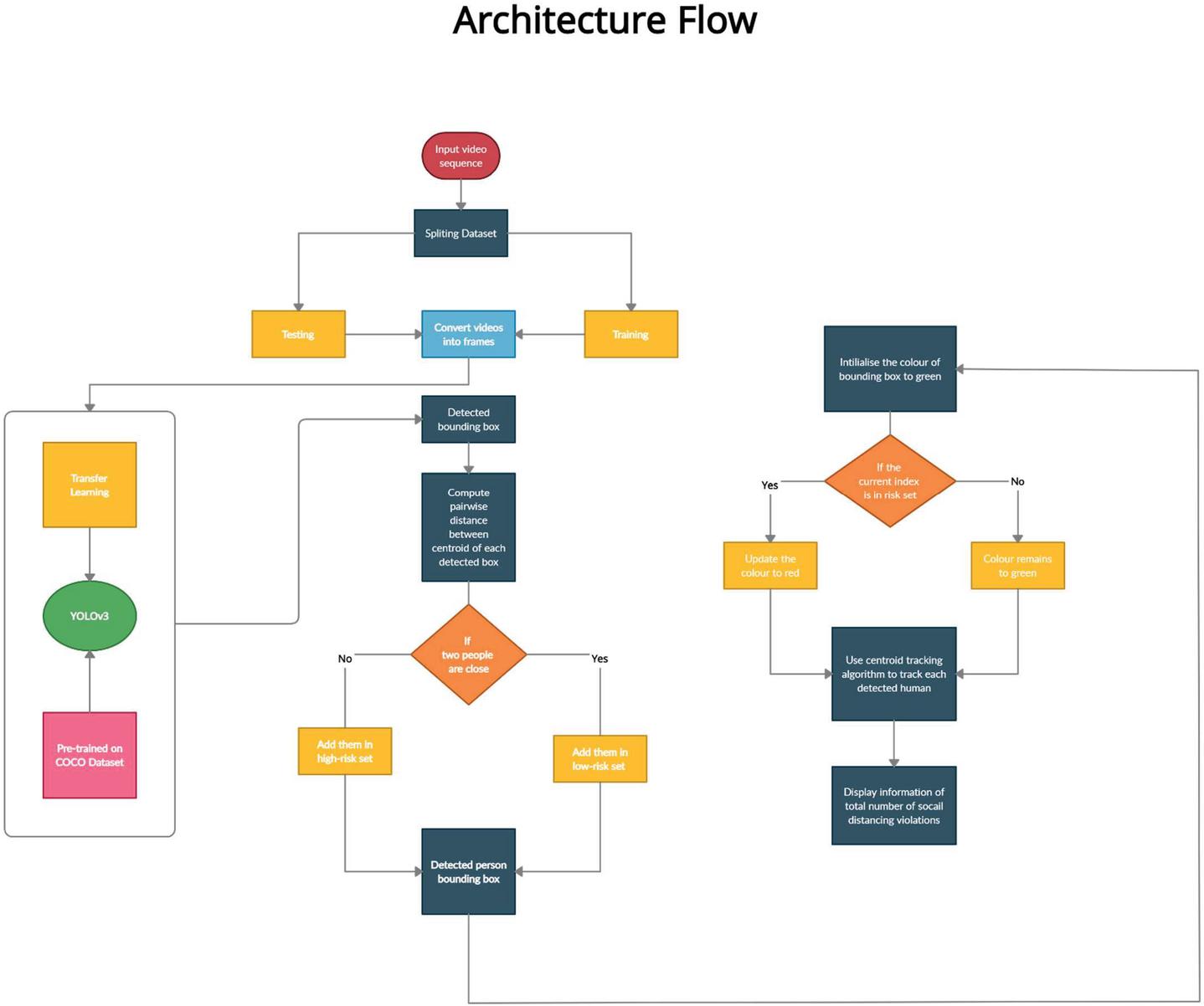

This research proposes a deep learning-based social distance monitoring framework using an overhead perspective. The overhead data set is divided into two sets: training and testing. People in the video sequences are detected using a deep learning-based detection model. The detailed architectural flow diagram of the framework is shown in Figure 4.

Figure 4 Proposed Architecture and its flow.

The input video sequences are captured by a CCTV camera and passed to our deep neural network model. The model's output is the people detected in the scene, together with their localization bounding boxes. The goal is to build a robust human detection model capable of coping with a variety of challenges, such as variations in clothing, postures, distances between people, occlusions, and lighting conditions. To monitor social distancing, the CCTV camera captures input video of people in public places, and the data are split into training and testing sets. In both cases the video is converted into frames, which are then passed to transfer learning, with the YOLOv3 algorithm pre-trained on a data set.

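The confidence score of a predicted bounding box is defined as

$$C = \Pr(\text{person}) \times \mathrm{IoU}(\text{pred}, \text{actual})$$

where $\Pr(\text{person})$ shows whether or not a person is present within the detected bounding box: the value is 1 if yes and 0 if no. The IoU between the predicted and actual bounding boxes is determined by

$$\mathrm{IoU}(\text{pred}, \text{actual}) = \frac{\operatorname{area}(\text{Box}_T \cap \text{Box}_P)}{\operatorname{area}(\text{Box}_T \cup \text{Box}_P)}$$

where $\text{Box}_T$ is the ground truth box manually labelled in the training data set, $\text{Box}_P$ is the predicted bounding box, and $\operatorname{area}(\text{Box}_T \cap \text{Box}_P)$ is the intersection area. For each detected person in the input frame, a suitable region is predicted. After prediction, the confidence value is used to choose the best bounding box. The values $x, y, w, h$ are calculated for each predicted bounding box, with $x, y$ defining the bounding box coordinates and $w, h$ its width and height.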
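The overlap measure between a ground-truth box and a predicted box (intersection area divided by union area) can be computed as in the sketch below. The corner format `(x_min, y_min, x_max, y_max)` is an assumption made for the example; the paper does not state which box encoding its implementation uses.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax0, ay0, ax1, ay1 = box_a
    bx0, by0, bx1, by1 = box_b
    # Width and height of the overlap rectangle (zero when the boxes are disjoint)
    inter_w = max(0.0, min(ax1, bx1) - max(ax0, bx0))
    inter_h = max(0.0, min(ay1, by1) - max(ay0, by0))
    intersection = inter_w * inter_h
    union = (ax1 - ax0) * (ay1 - ay0) + (bx1 - bx0) * (by1 - by0) - intersection
    return intersection / union if union > 0 else 0.0
```

For example, two 2 x 2 boxes offset by one unit in each direction overlap in a 1 x 1 square, giving an IoU of 1 / (4 + 4 - 1) = 1/7.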

The predicted bounding box is obtained from the network output as

$$b_x = \sigma(t_x) + c_x, \quad b_y = \sigma(t_y) + c_y, \quad b_w = p_w e^{t_w}, \quad b_h = p_h e^{t_h}$$

where $b_x, b_y, b_w, b_h$ are the predicted bounding box coordinates, with $b_x, b_y$ representing the center and $b_w, b_h$ representing the width and height. $t_x, t_y, t_w, t_h$ define the network output, $c_x$ and $c_y$ correspond to the grid cell's top-left coordinates, and $p_w, p_h$ are the anchor width and height. Using a threshold value, the high confidence values are processed while the low confidence values are discarded. The final location parameters for the detected bounding box are obtained via non-maximum suppression. The loss function comprises regression, classification, and confidence terms. If an object is detected in a grid cell, the classification loss is calculated as the squared error of the conditional class probabilities.
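The post-processing described above (decoding the network output against a grid cell and an anchor, discarding low-confidence boxes, and applying non-maximum suppression) can be sketched as follows. The decoding follows the standard YOLOv3 formulation; the threshold values `conf_threshold=0.5` and `iou_threshold=0.45` are illustrative assumptions, not values reported in the paper.

```python
from math import exp


def sigmoid(v):
    return 1.0 / (1.0 + exp(-v))


def decode_box(t, cell, anchor):
    """Map network outputs (tx, ty, tw, th) to a box (bx, by, bw, bh) in grid units."""
    tx, ty, tw, th = t
    cx, cy = cell    # top-left corner of the grid cell
    pw, ph = anchor  # anchor width and height
    return (sigmoid(tx) + cx, sigmoid(ty) + cy, pw * exp(tw), ph * exp(th))


def to_corners(box):
    """Convert (centre_x, centre_y, width, height) to (x_min, y_min, x_max, y_max)."""
    bx, by, bw, bh = box
    return (bx - bw / 2, by - bh / 2, bx + bw / 2, by + bh / 2)


def iou(a, b):
    """Intersection over union of two corner-format boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes, scores, conf_threshold=0.5, iou_threshold=0.45):
    """Return the indices of the boxes kept after thresholding and suppression."""
    # Discard low-confidence boxes, then visit the rest from highest score down
    order = sorted(
        (i for i, s in enumerate(scores) if s >= conf_threshold),
        key=lambda i: scores[i],
        reverse=True,
    )
    kept = []
    for i in order:
        # Keep a box only if it does not overlap strongly with a better one
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in kept):
            kept.append(i)
    return kept
```

With all four outputs at zero, a cell at (3, 4), and an anchor of (2.0, 1.0), `decode_box` returns the cell centre with the anchor's own size, (3.5, 4.5, 2.0, 1.0), which is a quick sanity check of the decoding.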

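$$L_{\text{class}} = \sum_{i=0}^{S^2} \mathbb{1}_{i}^{\text{obj}} \sum_{c \in \text{classes}} \left(p_i(c) - \hat{p}_i(c)\right)^2$$

If a person is detected in cell $i$ then $\mathbb{1}_{i}^{\text{obj}} = 1$, else it equals 0. $\hat{p}_i(c)$ represents the conditional class probability for class $c$ in grid cell $i$. The errors in the predicted bounding box sizes and positions are measured using the localization loss. Only the bounding box that contains the detected object, in this case a person, is counted.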

$$L_{\text{loc}} = \lambda_{\text{coord}} \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{obj}} \left[(x_i - \hat{x}_i)^2 + (y_i - \hat{y}_i)^2\right] + \lambda_{\text{coord}} \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{obj}} \left[\left(\sqrt{w_i} - \sqrt{\hat{w}_i}\right)^2 + \left(\sqrt{h_i} - \sqrt{\hat{h}_i}\right)^2\right]$$

In this equation, $\mathbb{1}_{ij}^{\text{obj}}$ equals 1 if the $j$th bounding box in grid cell $i$ is used for object detection, else it equals 0. The model predicts the square root of the bounding box width and height rather than the raw width and height. The scaling parameter $\lambda_{\text{coord}}$ weights the bounding box coordinate terms and equals 5. In the $i$th cell, the predicted positions of the bounding box are represented by $(\hat{x}_i, \hat{y}_i)$, whereas the actual positions are given by $(x_i, y_i)$. The first term of the equation measures the loss of a predicted bounding box with coordinates $(x, y)$. $\mathbb{1}_{ij}^{\text{obj}}$ thus denotes the presence of the detected person in the $j$th bounding box. With $j = 0$ to $B$ indexing the predictors of each grid cell $i = 0$ to $S^2$, the equation sums over every bounding box.

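The confidence loss is

$$L_{\text{conf}} = \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{obj}} \left(C_i - \hat{C}_i\right)^2 + \lambda_{\text{noobj}} \sum_{i=0}^{S^2} \sum_{j=0}^{B} \mathbb{1}_{ij}^{\text{noobj}} \left(C_i - \hat{C}_i\right)^2$$

where $\mathbb{1}_{ij}^{\text{noobj}}$ is the complement of $\mathbb{1}_{ij}^{\text{obj}}$, $\hat{C}_i$ is the bounding box's confidence score in cell $i$, and $\lambda_{\text{noobj}}$ is used to weight down the loss when identifying background. Because most bounding boxes do not contain any object, there is a class imbalance problem: the model would be trained to identify background more often than to detect objects. To remedy this, the background term of the loss is weighted down by a factor of $\lambda_{\text{noobj}}$.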
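The three loss components described in this section (localization with square-rooted width and height, confidence with a down-weighted background term, and squared-error classification) can be combined as in the sketch below. It is a simplified illustration under stated assumptions: each grid cell is represented by a single predictor stored as a dictionary with hypothetical keys, the coordinate weight of 5 is taken from the text, and the background weight of 0.5 is the value used in the original YOLO paper, since the value is missing from this text.

```python
from math import sqrt

LAMBDA_COORD = 5.0  # coordinate weight stated in the text
LAMBDA_NOOBJ = 0.5  # assumption: value from the original YOLO paper


def yolo_style_loss(predictors):
    """Sum-squared loss over predictors (one per grid cell in this sketch).

    Each predictor is a dict with the hypothetical keys:
      has_object            -> bool, True if a person is assigned to this box
      box_true, box_pred    -> (x, y, w, h) with positive w and h
      conf_true, conf_pred  -> confidence scores
      class_true, class_pred -> lists of class probabilities
    """
    localization = confidence_obj = confidence_noobj = classification = 0.0
    for p in predictors:
        conf_error = (p["conf_true"] - p["conf_pred"]) ** 2
        if p["has_object"]:
            x, y, w, h = p["box_true"]
            xh, yh, wh, hh = p["box_pred"]
            localization += (x - xh) ** 2 + (y - yh) ** 2
            # Square roots reduce the influence of errors on large boxes
            localization += (sqrt(w) - sqrt(wh)) ** 2 + (sqrt(h) - sqrt(hh)) ** 2
            confidence_obj += conf_error
            classification += sum(
                (t - q) ** 2 for t, q in zip(p["class_true"], p["class_pred"])
            )
        else:
            # Background boxes contribute only a down-weighted confidence term
            confidence_noobj += conf_error
    return (
        LAMBDA_COORD * localization
        + confidence_obj
        + LAMBDA_NOOBJ * confidence_noobj
        + classification
    )
```

Because background boxes far outnumber boxes that contain a person, the smaller weight on their confidence error keeps them from dominating the total.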

Figure 5 High risk count among people in public places.

Figure 6 High risk count among people in public places.

Figure 7 Low risk & safe count among people in public places.

6 Results and Discussion

Since there was a large rise in cases and no lockdown in many regions, people would be moving about in public spaces, so the tool we built is intended for public spaces. A CCTV camera was installed to detect people. When there is a gap of at least 2 meters between people, the tool shows a green boundary and reports the safe count, as shown in Figures 7 and 8; if the distance between them decreases slightly, it shows a yellow boundary and reports the low-risk count, as shown in Figures 7 and 8; and if the distance decreases further, it shows a red boundary and reports the high-risk count, as shown in Figures 5 and 6, indicating a greater chance of infection. The tool was installed in public spaces and checked the distance between groups of people. According to our findings, social distancing with 90% adoption and complete closure of non-essential activities are viable options for containing the outbreak. However, when the restrictions are lifted, there is a significant risk of a second outbreak. Aggressive strategies such as mass testing, remote symptom monitoring, isolation of new cases, and contact tracing must be introduced to avoid this situation.

Figure 8 Low risk & safe count among people in public places.

7 Future Scope and Challenges

This study offers an innovative real-time deep learning-based framework for automating the process of social distance monitoring using object detection and tracking techniques. With the aid of nine bounding boxes, each person is identified in real time. The generated bounding boxes help identify clusters or groups of people that satisfy the closeness property computed with the pairwise vectorized approach. The number of violations is confirmed by computing the number of groups formed and the violation index term, which is calculated as the ratio of the number of people to the number of groups. Extensive experiments were carried out with popular state-of-the-art object detection models: Faster RCNN, SSD, and YOLO v3, with YOLO v3 showing the best results with balanced FPS and mAP scores. Since this approach is highly sensitive to the spatial position of the camera, it can be fine-tuned to align better with the corresponding field of view. In the future, the research might be extended to diverse indoor and outdoor locations and low lighting conditions. Several detection and tracking techniques may be used to track the person or persons who breach the social distance threshold.
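The grouping step and the ratio of people to groups can be sketched as below. This is an interpretation rather than the authors' code: people are merged into a group when their centroids are closer than a threshold (using a small union-find structure), and `violation_index` counts only the people who belong to such groups, which is an assumption since the text does not say whether isolated people are included.

```python
from math import dist


def find(parent, i):
    """Return the root of element i, compressing the path as it goes."""
    while parent[i] != i:
        parent[i] = parent[parent[i]]
        i = parent[i]
    return i


def group_people(centroids, threshold):
    """Cluster centroids whose pairwise distance is below the threshold.

    Returns only the groups with at least two members, since a lone person
    does not violate the distance threshold.
    """
    n = len(centroids)
    parent = list(range(n))
    for i in range(n):
        for j in range(i + 1, n):
            if dist(centroids[i], centroids[j]) < threshold:
                root_i, root_j = find(parent, i), find(parent, j)
                if root_i != root_j:
                    parent[root_j] = root_i
    groups = {}
    for i in range(n):
        groups.setdefault(find(parent, i), []).append(i)
    return [members for members in groups.values() if len(members) > 1]


def violation_index(groups):
    """Ratio of the number of grouped people to the number of groups."""
    if not groups:
        return 0.0
    return sum(len(g) for g in groups) / len(groups)
```

For six people of whom three stand together, two stand together, and one stands alone, this yields two groups and an index of 5 / 2 = 2.5.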

8 Conclusion

This study developed and tested a real-time architecture for automating social distance monitoring using object detection and tracking techniques. Each person is represented in real time with the aid of nine bounding boxes. The generated bounding boxes help identify clusters or groups of people that satisfy the closeness property of the pairwise vectorized approach. The pairwise centroid distances between detected bounding boxes are calculated using the Euclidean distance. A pixel-to-physical-distance approximation and a threshold are used to check for social distance violations between two persons: the violation threshold determines whether or not the distance value breaches the predefined minimum social distance. In addition, a centroid tracking technique is used to monitor individuals in the scene. According to the test data, the framework effectively identifies persons who move too close together and breach the social distance.

References

[1] Jung, Heechul, Sihaeng Lee, Sunjeong Park, Byungju Kim, Junmo Kim, Injae Lee, and Chunghyun Ahn. “Development of deep learning-based facial expression recognition system.” In 2015 21st Korea-Japan Joint Workshop on Frontiers of Computer Vision (FCV), pp. 1–4. IEEE, 2015.

[2] Leng, Biao, Yu Liu, Kai Yu, Songting Xu, Ziqing Yuan, and Jingyan Qin. “Cascade shallow CNN structure for face verification and identification.” Neurocomputing 215: 232–240, 2016.

[3] Imitation of visual illusion via Open https://www.worldscientific.com/doi/abs/10.1142/S0218127408022573.

[4] Redmon, Joseph, et al. “You only look once: Unified, real-time object detection.” Proceedings of the IEEE conference on computer vision and pattern recognition. 2016.

[5] De Oliveira, Diulhio Candido, and Marco Aurelio Wehrmeister. “Towards real-time people recognition on aerial imagery using convolutional neural networks.” 2016 IEEE 19th international symposium on real-time distributed computing (ISORC). IEEE, 2016.

[6] Arsenovic, M., Sladojevic, S., Anderla, A., and Stefanovic, D. (2017, September). FaceTime—Deep learning based face recognition attendance system. In 2017 IEEE 15th International Symposium on Intelligent Systems and Informatics (SISY) (pp. 000053–000058). IEEE, 2017.

[7] Pitaloka, Diah Anggraeni, Ajeng Wulandari, T. Basaruddin, and Dewi Yanti Liliana. “Enhancing CNN with preprocessing stage in automatic emotion recognition.” Procedia computer science 116: 523–529, 2017.

[8] Redmon, Joseph, and Ali Farhadi. “Yolov3: An incremental improvement.” arXiv preprint arXiv:1804.02767, 2018.

[9] Jose, Reny. “A Convolutional Neural Network (CNN) Approach to Detect Face Using TensorFlow and Keras.” International Journal of Emerging Technologies and Innovative Research, ISSN: 2349-5162, 2019.

[10] Shanmugamani, Rajalingappaa. Deep Learning for Computer Vision: Expert techniques to train advanced neural networks using TensorFlow and Keras. Packt Publishing Ltd, 2018.

[11] Nikouei, Seyed Yahya, et al. “Real-time human detection as an edge service enabled by a lightweight cnn.” 2018 IEEE International Conference on Edge Computing (EDGE). IEEE, 2018.

[12] Chandan, G., Ayush Jain, and Harsh Jain. “Real time object detection and tracking using Deep Learning and OpenCV.” 2018 international conference on inventive research in computing applications (ICIRCA). IEEE, 2018.

[13] Ahmad, Misbah, et al. “Convolutional neural network–based person tracking using overhead views.” International Journal of Distributed Sensor Networks 16.6: 1550147720934738, 2020.

[14] Ainslie, Kylie E.C., Caroline E. Walters, Han Fu, Sangeeta Bhatia, Haowei Wang, Xiaoyue Xi, Marc Baguelin et al. “Evidence of initial success for China exiting COVID-19 social distancing policy after achieving containment.” Wellcome Open Research 5, 2020.

[15] Chakraborty, Chinmay, Amit Banerjee, Lalit Garg, and J. J. Rodrigues. “Internet of Medical Things for Smart Healthcare.” Studies in Big Data; Springer: Cham, Switzerland 80, 2020.

[16] Yash Chaudhary D.G., Mehta M. 22nd international conference on E-health networking, applications and services (IEEE Healthcom 2020); Shenzhen, China, December 12–15; 2020.

[17] Prem K., Liu Y., Russell T.W., Kucharski A.J., Eggo R.M., Davies N. The Lancet Public Health. 2020.

[18] Ramadass L., Arunachalam S., Sagayasree Z. International Journal of Pervasive Computing and Communications. 2020.

[19] Pouw, Caspar AS, Federico Toschi, Frank van Schadewijk, and Alessandro Corbetta. “Monitoring physical distancing for crowd management: Real-time trajectory and group analysis.” PloS one 15, no. 10: e0240963, 2020.

[20] Punn N.S., Sonbhadra S.K., Agarwal S. “COVID-19 Epidemic Analysis” medRxiv. 2020.

[21] Das, Arjya, Mohammad Wasif Ansari, and Rohini Basak. “Covid-19 Face Mask Detection Using TensorFlow, Keras and OpenCV.” In 2020 IEEE 17th India Council International Conference (INDICON), pp. 1–5. IEEE, 2020.

[22] Badave, Harshada, and Madhav Kuber. “Face Recognition Based Activity Detection for Security Application.” In 2021 International Conference on Artificial Intelligence and Smart Systems (ICAIS), pp. 487–491. IEEE, 2021.

[23] Ashraf, Md. Shakeeb. “Real Time Face Detection Using OpenCV.” 2021.

[24] Bouhlel, Fatma, Hazar Mliki, and Mohamed Hammami. “Crowd Behavior Analysis based on Convolutional Neural Network: Social Distancing Control COVID-19.” VISIGRAPP (5: VISAPP). 2021.

[25] Ayachi, Riadh, Yahia Said, and Mohamed Atri. “A Convolutional Neural Network to Perform Object Detection and Identification in Visual Large-Scale Data.” Big Data 9, no. 1: 41–52, 2021.

[26] Gómez-Silva, María J. “Deep multi-shot network for modelling appearance similarity in multi-person tracking applications.” Multimedia Tools and Applications: 1–21, 2021.

[27] Lu, Peng, Baoye Song, and Lin Xu. “Human face recognition based on convolutional neural network and augmented dataset.” Systems Science & Control Engineering 9, no. sup2: 29–37, 2021.

[28] Mohammed, Soleen Basim, and Adnan Mohsin Abdulazeez. “Deep Convolution Neural Network for Facial Expression Recognition.” PalArch’s Journal of Archaeology of Egypt/Egyptology 18, no. 4: 3578–3586, 2021.

Biographies

N. Prabakaran is currently working as an Assistant Professor (Senior) in the School of Computer Science and Engineering, Vellore Institute of Technology (VIT), Vellore, Tamil Nadu, India. He received his BE in Computer Science and Engineering from Anna University, Chennai, India in 2009 and his ME in Computer Science and Engineering from Anna University, Chennai, India in 2011. He completed his Ph.D. at Vellore Institute of Technology (VIT), Vellore, India in 2017. His research interests include massive data mining, machine learning algorithms for financial series, sensor networks, and pervasive computing.

Suvarna Sree Sai Kumar received the bachelor's degree in Computer Science and Engineering from Sree Vidyanikethan Engineering College in 2018 and the master's degree in Computer Science and Engineering from Vellore Institute of Technology in 2022. His research areas include the Internet of Things, deep learning, and machine learning.

P. Kranthi Kiran received the bachelor's degree in Mechanical Engineering from Saveetha Engineering College in 2019 and the master's degree in Computer Science and Engineering from Vellore Institute of Technology in 2022. His research areas include wireless communication, the Internet of Things, deep learning, and artificial intelligence.

Pabbidi Supriya received the bachelor's degree in Instrumentation from Bharath Institute of Higher Education and Research in 2022 and the master's degree in Computer Science and Engineering from Vellore Institute of Technology in 2020. Her research areas include mobile applications, the Internet of Things, and deep learning.

Journal of Mobile Multimedia, Vol. 18_3, 541–560.

doi: 10.13052/jmm1550-4646.1834

© 2022 River Publishers