Extended Reality Platform for Metaverse Exhibition

Choonsung Shin1, Seokhee Oh2,* and Hieyong Jeong3,*

1Graduate School of Culture, Chonnam National University, Gwangju, 61186, Republic of Korea

2Korea Creative Content Agency, Daejeon, 34863, Republic of Korea

3Department of Artificial Intelligence Convergence, Chonnam National University, Gwangju, 61186, Republic of Korea

E-mail: cshin@jnu.ac.kr; shoh@kocca.kr; h.jeong@jnu.ac.kr

*Corresponding Author

Received 08 September 2023; Accepted 27 December 2023; Publication 03 February 2024

Abstract

This paper proposes a web-based extended reality (XR) platform integrating exhibitions based on augmented reality (AR) and virtual reality (VR). The proposed platform includes an XR cloud for managing and sharing data in XR spaces, a VR client for users, and an AR client for real-world users. The XR cloud is a web-based VR service responsible for managing objects and spatial information related to real and virtual spaces while simultaneously facilitating real-time information sharing and synchronization between AR and VR users. The VR client offers a virtual exhibition environment based on the virtual space and information for VR users. The AR client overlays virtual information over objects in a real space, allowing for intuitive interaction with them. The proposed XR platform was implemented with Oculus Quest 2 for VR and HoloLens 2 for AR and was evaluated in a small exhibition environment. In this experimental environment, VR and AR users were able to participate in the same exhibition from different spaces and share their experiences in real time.

Keywords: webXR, augmented reality, virtual reality, metaverse exhibition, web application.

1 Introduction

Due to the unforeseen circumstances caused by the coronavirus disease 2019 (COVID-19) pandemic, the metaverse, which overcomes the limitations of physical spaces by merging real and virtual spaces, has attracted significant attention from researchers and end users [1, 2]. Exhibitions in the metaverse have garnered considerable attention from visitors and researchers who want to meet in a virtual space [3, 4]. Several platforms have been developed to offer metaverse exhibitions that overcome the limitations of physical exhibition spaces, including Spatial, Mozilla Hubs, and ArtSteps [5, 6, 7]. These metaverse platforms offer exhibitions that enable visitors to view visual arts and artists to create exhibition sites. One advantage of such exhibitions is that they are not constrained by location or time. Furthermore, these platforms are web-based, allowing users to participate in exhibitions via web services. However, these exhibitions remain confined to virtual spaces; thus, they lack a connection to the real world (containing the actual exhibition items) and the genuine experiences of visitors. For exhibitions to be sustainable and successful, it is also crucial for virtual reality (VR)-based exhibitions to support a connection to the real space.

Several studies have exploited augmented reality (AR), VR, and mixed reality (MR) technologies to support interactive and immersive exhibitions [8, 9]. The most common types of exhibitions offer AR and VR experiences in separate settings or different areas [10], which are relatively simple to configure and operate. More enhanced methods combine AR and VR in the same museum space, where objects and spaces are linked across real and virtual spaces with AR, VR, and MR technologies. The Lighthouse project presented the possibility of experiencing an exhibition from different perspectives by creating identical exhibits in web, mobile, and VR [11]. Recently, exhibitions combining real and virtual spaces, such as VR exhibitions with game elements based on actual photographs, have been shown to offer improved fun and enjoyment compared to traditional reality-based exhibitions [12].

However, existing exhibitions focus only on creating exhibitions by simply connecting the exhibited works within a space, making it difficult for users in different spaces to share a real-time experience, that is, to visit the exhibition together while being in different spaces. In addition, the existing exhibition spaces offer limited opportunities for user participation, making it difficult to expand the user experience and incorporate external information into the exhibition using additional information provided in each space.

To overcome these problems, we propose a web-based XR platform for linked AR and VR exhibitions. The proposed XR platform consists of an XR cloud, AR client, and VR client to enable exhibitions that link real and virtual exhibitions. The XR cloud is a web service that manages the XR space, information, and visitors of the real and VR spaces and relays their events and states to each other in real time. The VR client provides virtual space exhibitions based on XR cloud information, whereas the AR client provides an augmented exhibition environment in real space. With this XR platform, visitors in different spaces can participate in the same exhibition, through VR in virtual spaces and through AR in real spaces, allowing them to share different experiences.

The contributions of this paper are as follows. First, we propose a web-based XR platform design integrating real-world and virtual exhibitions. We configure AR devices, XR spaces, and VR devices to be interconnected for a web-based XR experience. Second, the proposed design provides an exhibition environment enabling the real-time sharing of space and information among users in various locations based on this platform. The status and information of users and objects are shared among users in real time. Third, the platform allows users to participate in the exhibition intuitively by placing and sharing AR and VR exhibits based on hand interactions.

This paper is organized as follows. Section 2 analyzes previous studies in terms of AR, VR, and XR exhibitions. Then, Section 3 introduces the web-based metaverse platform and its internal components. Next, Sections 4 and 5 illustrate and discuss the implementation results, respectively. Finally, Section 6 concludes with future work.

2 Related Work

Both VR and AR technologies have been applied to enhance the user experience at exhibition sites. Recently, mixed exhibitions combining AR and VR have been explored to provide a more immersive and interactive experience.

2.1 Virtual Reality for Exhibition

Research on constructing immersive exhibition environments using VR has been conducted. In the initial stages, immersive spaces were constructed as a way to use VR for exhibitions [13, 14]. These exhibitions have since expanded into actual exhibitions based on virtual world platforms, such as the Google Art Project and Second Life [10, 15]. Research has also been conducted on incorporating gestures and multimodal interactions to provide interactivity in VR. Other work has used 360 videos obtained from actual locations to create real exhibition effects and enhance immersion [16, 17, 18].

Further, research has been conducted on providing game-like interactions to promote more active participation in immersive exhibition environments [19]. In addition, a VR exhibition space that incorporates high-quality three-dimensional (3D) models, including 360 videos and photometric-based realistic models, is being constructed to enhance immersion in various exhibition settings [20, 21].

2.2 Augmented Reality for Exhibition

Notably, AR technology has been integrated into real-world environments to create enhanced exhibitions. Moreover, AR has been developed through mobile and smartphone devices. More recently, wearable glasses have improved mobility, increased immersion, and promoted interaction in exhibition experience research [22]. Initially, this research augmented information regarding the location and target objects in an environment as the user moved. In archaeological sites, AR has been used to provide users with knowledge and experience related to the site by augmenting digital information on cultural heritage [23]. AR has also been used to enhance the user experience by transforming the artwork content based on video processing of real-world objects [24]. Additionally, gaming elements in AR have made exhibition experiences more enjoyable for users [25]. In contrast, specialized mobile devices dedicated to AR provide information through video processing and interactions, increasing exhibition satisfaction and continuous viewing intentions [26].

Furthermore, the emergence of wearable glasses has improved spatial immersion and provided bidirectional interactions to enhance museum exhibitions [27]. Glasses-based AR offers a more immersive and natural experience in an environment where mobility is required and where the hands are free [28]. Moreover, a single photograph can be used to create a 3D object within a photograph in wearable environments, expanding the exhibition [29]. Wearable AR using smart glasses allows users to view historical sites with virtual information in outdoor areas [30, 31]. This AR exhibition also extends to the MR experience. A cloud-based collaborative and multimodal MR was proposed to support the sharing of virtual heritage [32]. With cloud-based MR, a user and curator share virtual heritage in the same real space.

2.3 Extended Reality for Exhibition

There is an increasing trend in research on the fusion of physical and virtual spaces to create XR experiences where multiple users can participate in exhibitions of different reality spaces. Early research has also been conducted on mixed exhibition spaces that include physical, virtual, and web-based environments [11]. Sharing experiences in different reality environments has provided new possibilities for exhibitions. Further research has focused on determining the appropriate methods for collaboration between virtual and physical realities [33]. Subsequently, real-time sharing and collaborating between these physical and virtual realities are possible from different perspectives [34]. Exhibitions in such fusion spaces can comprise combinations of real- and non-real-time local and remote interactions, and AR and VR have been suggested as additional classifications [35]. Additionally, a hybrid exhibition concept that integrates physical reality, VR, and smart displays has been proposed [12]. This method presents common elements of the exhibition and combines physical, smart device-based exhibition and VR to allow user participation. In addition, the XR space has been proposed to improve the museum experience. A conceptual XR framework has been proposed for sharing VR and AR heritage. This framework highlights the importance of user experiences and information sharing among users in VR and AR experiential spaces [36].

Research on VR and AR continues to progress in enhancing exhibition experiences. Recent studies indicate ongoing research into spaces that combine both technologies. Both VR and AR are evolving in the direction of improving immersion, interaction, and the experience of multiple users within their respective spaces. Exhibitions in MR spaces are advancing toward XR, where VR and AR are blended, although this is still in the initial stages.

One challenge is that real-time location and status sharing among users in different spaces has not yet been achieved well. Additionally, information and database construction for basic objects, such as exhibits and users, and the creation of user-centric experiences and mutual sharing have yet to be resolved. Therefore, systematic approaches to interconnect real-world, AR, and VR spaces are needed. A coherent system is required to facilitate user interaction, allowing users to generate and share information in this environment freely.

3 Extended Reality Framework for Metaverse Exhibition

3.1 Metaverse Exhibition

First, we introduce a metaverse exhibition combining AR and VR technologies, as depicted in Figure 1. The exhibition consists of two immersive spaces connected to a real space and a shared space, facilitating information sharing between these spaces.

Figure 1 Metaverse exhibition based on AR and VR.

One of the spaces is an AR exhibition, and the other is a VR exhibition. The shared space plays a critical role in managing information and enables AR and VR users to share exhibitions between the two spaces. In the real space, several artworks are physically arranged, and the same artworks are also placed in VR. In AR, additional information, such as related artworks and tags about them, is overlaid on the AR glass, whereas the same artworks and information are displayed in the VR device. Further, AR and VR users can be aware of each other’s location and status in this scenario. Additionally, by adding virtual artwork in AR or VR spaces, information about these objects is shared, expanding the exhibition and making it accessible to others.

3.2 Extended Reality Platform for Metaverse Exhibition

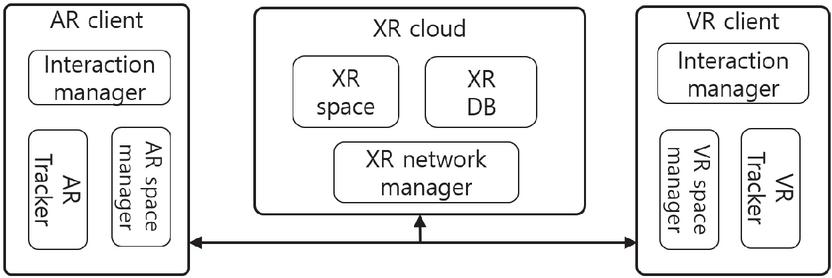

To support hybrid exhibitions with real and virtual spaces, we propose an XR platform that combines AR and VR via the XR cloud. The proposed metaverse exhibition is built on the platform illustrated in Figure 2.

Figure 2 XR platform for metaverse exhibition.

The proposed architecture consists of three parts: the XR cloud, AR viewer, and VR viewer. The XR cloud manages the information and the space that users share. The AR viewer augments actual artworks with virtual information stored in the XR cloud, whereas the VR viewer offers a virtual exhibition view using VR devices. In addition, the AR and VR viewers are connected via a network to support real-time data exchange between these devices. While users participate in VR and AR exhibitions in the metaverse, they share their position and orientation as well as new information generated by other users. We describe the details of the shared information, the VR and AR clients, and the sharing procedures in the following subsections.

3.2.1 Extended reality cloud

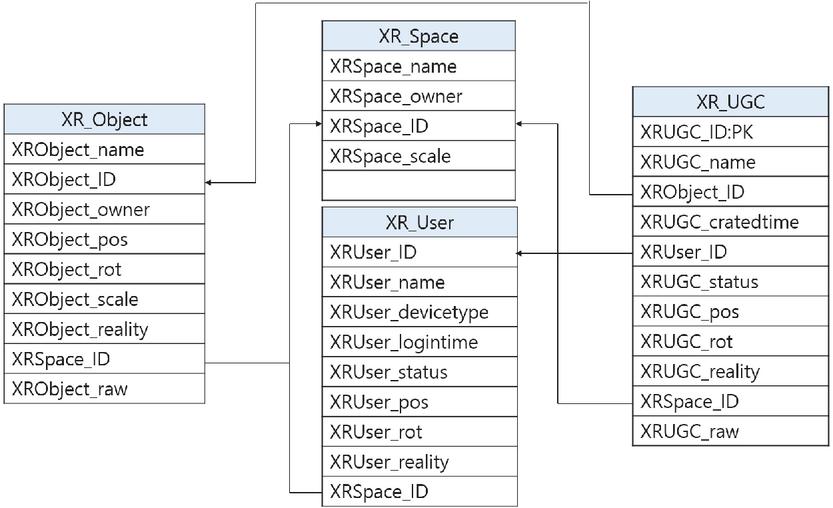

The XR cloud comprises an XR database, an XR space management module, and an XR network manager, which together link the VR and AR spaces and synchronize events. The database holds the information needed to link the real space to its virtual space, including the XR user, XR space, XR object, and XR user-generated content (UGC). Figure 3 displays the tables and their fields in the database. The XR user refers to information about a user in the real or virtual space, including the username, user ID, device type, login time, status, location, and reality type. The XR space refers to information about the XR space, including the creator, name, ID, and scale. An XR object is an object that exists in the real and virtual spaces and carries the basic information required for interactions. For the objects and spaces that make up VR, it consists of the name, identifier, location, rotation, scale, and original location, and it can hold the ID of a connected real-world object. For objects that exist in reality, it is linked to raw AR data on the location, rotation, xy size, and object image, and it can hold the ID of a connected VR object. Additional information that users create and share in the AR and VR spaces is referred to as XR information. The XR UGC refers to user-created content connected to an XR object, including the ID of the creator, information type, position, rotation, raw data, and scale.

Figure 3 The information about XR space.
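
As a rough illustration of the records described above, the following Python sketch models the four kinds of information as dataclasses. The field lists follow the text, but the Python names, the types, and the link fields `linked_real_object_id` and `xr_object_id` are assumptions made for illustration only; the platform itself was built with Unity 3D, and its actual schema is the one shown in Figure 3.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]  # (x, y, z); assumed representation


@dataclass
class XRUser:
    user_id: str
    username: str
    device_type: str            # e.g. "HoloLens 2" or "Oculus Quest 2"
    reality_type: str           # "AR" or "VR"
    status: str = "offline"
    location: Vec3 = (0.0, 0.0, 0.0)
    login_time: Optional[float] = None


@dataclass
class XRSpace:
    space_id: str
    name: str
    creator: str
    scale: float = 1.0


@dataclass
class XRObject:
    object_id: str
    name: str
    location: Vec3
    rotation: Vec3
    scale: Vec3
    original_location: Vec3
    # Hypothetical link to the counterpart object in the real space.
    linked_real_object_id: Optional[str] = None


@dataclass
class XRUGC:
    ugc_id: str
    creator_id: str
    info_type: str              # e.g. "image" or "tag" (assumed values)
    position: Vec3
    rotation: Vec3
    scale: Vec3
    raw_data: bytes
    xr_object_id: str           # hypothetical link to the XR object


# Example: user-generated content attached to an empty frame.
frame = XRObject("obj-5", "empty frame", (2.0, 1.5, 0.0), (0.0, 0.0, 0.0),
                 (1.0, 1.0, 1.0), (2.0, 1.5, 0.0))
visitor = XRUser("u-1", "vr-visitor", "Oculus Quest 2", "VR")
new_art = XRUGC("ugc-1", visitor.user_id, "image", frame.location,
                frame.rotation, frame.scale, b"", frame.object_id)
```

Keeping the link as an ID rather than an object reference mirrors the text, where AR and VR objects refer to each other by identifier.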

The XR space management module consists of an AR map and VR space, both centered on a real-world space. The AR map is generated to track the real-world space, whereas the VR space is created based on the map. When the XR cloud is executed, the database reflects the location and status of objects, initializing and managing these within the virtual space. The XR network manager creates a shared space for sharing and synchronizing the AR and VR spaces, immediately delivering and reflecting the state and events of users and objects.

3.2.2 Virtual reality client

The VR client is a device that provides a virtual exhibition for VR users. The VR device comprises a VR tracker, interaction manager, and VR space manager to recognize the user’s location, visualize the view, and interact with the virtual space to provide this exhibition. The VR tracker recognizes the user’s position in real time through the head-mounted displays and controllers, enabling the visualization of space and objects. The interaction manager provides essential user functions, such as object selection, movement, scaling, and dragging based on the controller or hand. The VR space manager is connected to the XR cloud and continuously shares and updates the status and location based on the objects and events visualized in the virtual space.

3.2.3 Augmented reality client

The AR client provides augmented exhibitions corresponding to the user’s view through an AR user device, consisting of an AR tracker, interaction manager, and AR space manager for location recognition, view visualization, and interactions. The AR tracker loads and initializes AR maps for the target space and estimates the position of the space or object. The interaction manager is designed for immersive exhibition environments and focuses on natural interaction and user movements based on two-handed input, in contrast to the smartphone environment, which is touch-oriented by default. The AR space manager is connected to the XR cloud through the network and continuously updates events and movements by detecting them around augmented and virtual 3D objects that are shared through the network.

3.2.4 Extended reality space sharing and synchronization

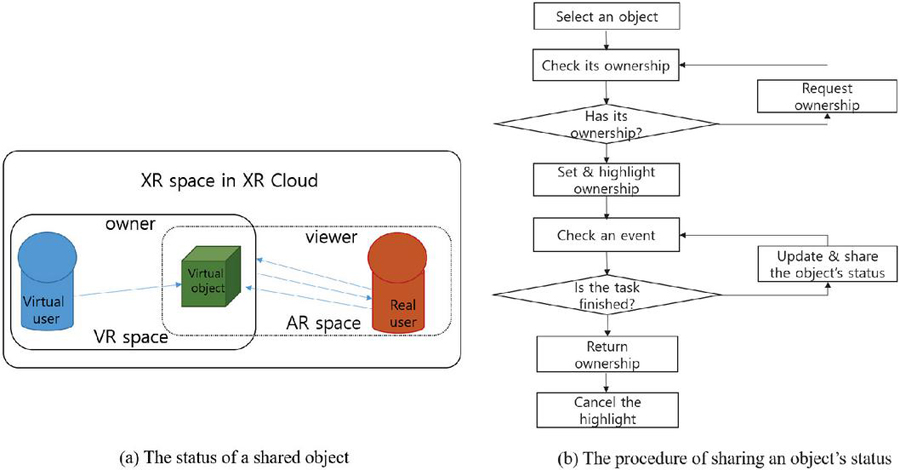

Sharing and synchronizing space is crucial when users are participating in an exhibition. The XR cloud shares information and status between AR users in the real world and VR users. It consists of an XR network module and an XR database, which set and update, in real time, the object status information necessary for spatial configuration. First, when executed, the XR database loads the spatial and object information of the space. The AR space manager in the AR device receives a list of AR maps, selects a specific map, and acquires its information. It then loads the real-world object information and XR information associated with the AR map to initialize the AR space. When the VR device is initially executed, the XR space in the XR cloud loads and initializes the VR space and its included objects. After initialization, the XR network manager shares and updates the status and events between AR and VR through the XR network module. The XR network module collects and transmits 3D pose information about object movements and status based on object IDs shared between the virtual and real worlds. As observed in Figure 4, an object is shared by AR and VR users together with its status information. While a user holds a virtual object, that user’s device has ownership of it for the purpose of moving and configuring the 3D object.

Figure 4 The status and procedure of XR space sharing and synchronization.
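
The sharing procedure above can be sketched as a small in-memory model: objects are keyed by a shared ID, a client must own an object before moving it, and each accepted pose update is relayed to the other clients. This is an illustrative stand-in written in Python, not the Photon API or the authors' implementation; the class `XRSyncHub`, its methods, and the client and object IDs are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class ObjectState:
    object_id: str
    position: Vec3
    rotation: Vec3
    owner: Optional[str] = None  # client currently allowed to move the object


class XRSyncHub:
    """Toy stand-in for the shared space kept by the XR network manager."""

    def __init__(self) -> None:
        self._states: Dict[str, ObjectState] = {}
        self._listeners: Dict[str, Callable[[ObjectState], None]] = {}

    def register(self, state: ObjectState) -> None:
        self._states[state.object_id] = state

    def join(self, client_id: str,
             on_update: Callable[[ObjectState], None]) -> List[ObjectState]:
        # A joining client gets a snapshot to initialize its own space.
        self._listeners[client_id] = on_update
        return list(self._states.values())

    def request_ownership(self, client_id: str, object_id: str) -> bool:
        state = self._states[object_id]
        if state.owner in (None, client_id):
            state.owner = client_id
            return True
        return False

    def release_ownership(self, client_id: str, object_id: str) -> None:
        state = self._states[object_id]
        if state.owner == client_id:
            state.owner = None

    def move(self, client_id: str, object_id: str,
             position: Vec3, rotation: Vec3) -> bool:
        state = self._states[object_id]
        if state.owner != client_id:
            return False  # only the owner may change the pose
        state.position, state.rotation = position, rotation
        for other_id, notify in self._listeners.items():
            if other_id != client_id:
                notify(state)  # relay the new pose to every other client
        return True


# Example: the VR user places an artwork, then the AR user adjusts it.
hub = XRSyncHub()
hub.register(ObjectState("artwork-new", (0.0, 1.5, 0.0), (0.0, 0.0, 0.0)))
ar_events: List[Vec3] = []
vr_events: List[Vec3] = []
hub.join("ar-user", lambda s: ar_events.append(s.position))
hub.join("vr-user", lambda s: vr_events.append(s.position))

hub.request_ownership("vr-user", "artwork-new")
hub.move("vr-user", "artwork-new", (1.0, 1.5, 0.0), (0.0, 0.0, 0.0))
blocked = hub.move("ar-user", "artwork-new", (9.0, 9.0, 9.0), (0.0, 0.0, 0.0))
hub.release_ownership("vr-user", "artwork-new")
hub.request_ownership("ar-user", "artwork-new")
hub.move("ar-user", "artwork-new", (1.0, 1.4, 0.0), (0.0, 0.0, 0.0))
```

In this sketch the AR user's first move is rejected because the VR user still owns the object; after the handover, the adjustment is accepted and relayed to the VR side.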

4 Implementation and Results

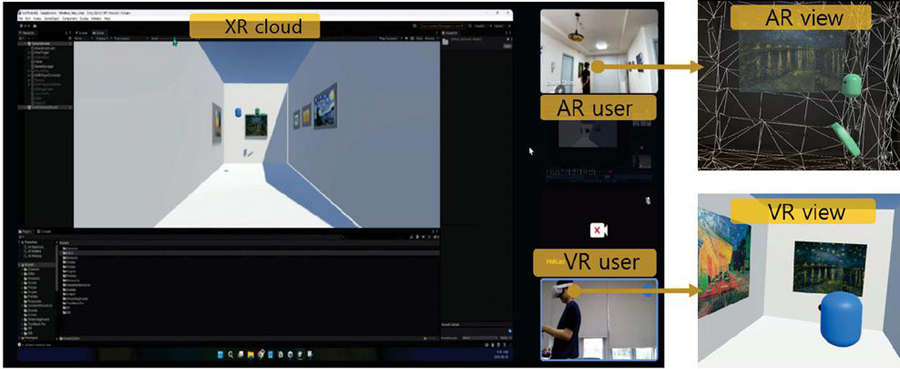

We implemented the proposed XR platform for a metaverse exhibition that combined real and virtual spaces. Initially, we created a small exhibition space comprising four physical art objects and one empty frame. The exhibition space was a small gallery section with dimensions of 4 m wide, 3 m high, and 2 m deep. We employed the Vuforia Area Target Creator to scan the small exhibition space and obtain its 3D tracking map [37]. Then, we designed a corresponding VR space containing a range of virtual objects using Unity 3D (v. 2021.3.1.16f1). The left and right images in Figure 5 depict the real-world exhibition space and its corresponding VR space.

Figure 5 Implemented metaverse exhibition: (a) real, (b) augmented reality (AR), and (c) virtual reality (VR) exhibitions.

To implement the proposed XR platform, we employed an AR glass, a VR device, and a server. We selected HoloLens 2 as the AR device because it is the most advanced wearable glass that supports two-handed interaction, a stereo AR display, and a network connection. We used the MR feature tool to develop the AR client on HoloLens 2 [38]. The center image in Figure 5 presents the AR exhibition compared to the real and VR exhibitions. Similarly, we used Oculus Quest 2 as the VR device because it supports controller-based hand interactions and mobile wireless connections. We included Oculus Unity Interaction to develop the VR client on Oculus Quest 2. The cloud server was implemented with Unity 3D and WebXR, which supports web-based VR and AR applications [39]. To support networking between the VR client, AR client, and server, we used the Photon network service [40]. Thus, a user wearing the HoloLens 2 could move around the exhibition space while another user in the VR space visited the VR exhibition, which was similar to an actual exhibition.

Furthermore, we conducted a test to determine how well sharing and generating XR information works between AR and VR users in the exhibition space. The goal was to add and arrange a new artwork in the empty frame. First, the VR user added the new artwork; when the virtual object was placed in the space in VR, it was immediately reflected in AR. After the VR user placed the object based on the characteristics of the virtual space, the AR user adjusted it appropriately in the real environment to complete the exhibition layout. Conversely, if the AR user placed an object in reality, the result was reflected in VR. When the AR user roughly placed their photograph or material in the space, the VR user accurately placed it to complete the exhibition.

Figure 6 Extended reality (XR) information sharing in a metaverse exhibition.

The left side of Figure 6 displays the XR cloud, and the right side presents the augmented exhibition along with the real and virtual exhibitions. In this example, a user in the real space could view the real exhibition items while gaining more information about the exhibited items with AR, while users in the virtual space could also view the virtual exhibition items. The movement of virtual exhibition items was managed by the server and shared by the users in real time. During sharing, we found that the latency to exchange the position and location of two users and the new object was an average of 100 ms. Therefore, while three works existed in both the physical and virtual spaces, a new artwork was created in the AR and VR spaces. The new artwork was registered in the XR UGC with details, such as the name of the piece, time, position, orientation, scale, creator, creation time, and raw data. This artwork was linked and registered in the XR object.
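
An average latency such as the one reported above could be computed from paired send and receive timestamps. The Python sketch below shows only the arithmetic; the function name, the assumption that both devices share a clock, and the sample values are hypothetical and are not the measurements from this experiment.

```python
from statistics import mean
from typing import List, Tuple


def mean_latency_ms(samples: List[Tuple[int, int]]) -> float:
    """Average of (received - sent) over timestamp pairs in milliseconds.

    Assumes the sender and receiver timestamps come from a shared clock.
    """
    if not samples:
        raise ValueError("at least one sample is required")
    return mean([received - sent for sent, received in samples])


# Made-up (sent_ms, received_ms) pairs, for illustration only.
example_samples = [(0, 90), (1000, 1100), (2000, 2110)]
example_mean = mean_latency_ms(example_samples)
```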

5 Discussion

The connection between real and virtual exhibitions through the enhanced metaverse is vital because the strengths of the physical and virtual spaces can be employed to create an exhibition that allows for different viewing experiences from various perspectives. This approach creates the effect of viewing the same exhibition for visitors from other physical spaces. While existing metaverse exhibitions remain in the virtual realm, the proposed exhibition form has significance as an expanded exhibition connected to a physical space.

Synchronized viewing of real-time users and spatial states is critical for shared viewing. By sharing and synchronizing events based on the presence and movement of users in different spaces, it is possible to achieve the effect of jointly viewing an exhibition. Interaction and simultaneous exhibition viewing enhance the sense of presence and satisfaction in an exhibition space for users who visit together.

Flexible exhibitions through user participation are also helpful for user-driven curating and visiting. Curators provide most exhibitions as fixed or preconstructed exhibitions. In contrast, users can arrange and share their artworks by directly constructing the exhibition via the proposed platform, creating an interactive environment where the exhibition space can be expanded through the placement of UGC in VR and physical spaces.

Nevertheless, limitations still exist. Metaverse exhibitions in the initial stages were only evaluated in small spaces. Suppose an exhibition on the scale of an actual exhibition environment is constructed, and many users create diverse works and routes. In that case, it is expected that a metaverse exhibition environment connected to physical and virtual spaces can be achieved. In addition, improvements are required to arrange a greater variety of works automatically based on the displayed works in the actual exhibition space, meaning the virtual space and works can be expanded. Finally, the exhibition experience of users who participate in physical and virtual spaces must be evaluated, and practical feedback must be received to verify the possibility of enhanced metaverse exhibition spaces for users.

6 Conclusions

This paper proposed a metaverse exhibition platform based on reality, AR, and VR to combine online and offline exhibitions. The proposed platform comprises AR and VR clients for users. An XR cloud was also included to allow the sharing and synchronizing of exhibition spaces and events between users. The proposed platform was implemented using recent VR and AR devices, allowing users to experience the exhibition from their own spaces while sharing generated information and movements in real time. While previous metaverse exhibitions have focused on virtual worlds, this study is significant in taking a first step toward a practical metaverse exhibition combining real and virtual environments.

In future research, we intend to add the annotation of target objects in the real space to distinguish exhibition and non-exhibition areas and automatically configure the exhibition. Additionally, an artificial intelligence-based curator could be combined with the platform to provide users with automatic arrangements and intelligent guidance regarding exhibition items.

Acknowledgements

This work was supported by the National Research Foundation of Korea (NRF) grant funded by the Korea Government (MSIT) (No. 2021R1F1A1047682) and by the Technology Commercialization Collaboration Platform program of the Korea Innovation Cluster (Project Number: 2022-DD-RD-0065).

References

[1] X. Han, J. Liu, B. Tan, and L. Duan (2021), Design and Implementation of Smart Ocean Visualization System Based on Extended Reality Technology, Journal of Web Engineering, 20(2), 557–574. https://doi.org/10.13052/jwe1540-9589.20215.

[2] W.-J. Jeong, G.-S. Oh and T.-K. Whangbo (2023), Establishment of Production Standards for Web-based Metaverse Content: Focusing on Accessibility and HCI, Journal of Web Engineering, 21(08), 2231–2256. https://doi.org/10.13052/jwe1540-9589.2181.

[3] L.-H. Lee, T. Braud, P. Zhou, L. Wang, D. Xu, Z. Lin, A. Kumar, C. Bermejo and P. Hui (2021), All one needs to know about metaverse: a complete survey on technological singularity, virtual ecosystem, and research agenda, Journal Of Latex Class Files, vol. 14.

[4] X. Zhang, D. Yang, C.H. Yow, L. Huang, X. Wu, X. Huang, J. Guo, S. Zhou, Y. Cai (2022), Metaverse for Cultural Heritages, Electronics, 11, 3730. https://doi.org/10.3390/electronics11223730.

[5] Spatial. Available online: https://www.spatial.io/ (accessed on 26 April 2023).

[6] Mozilla Hubs. Available online: https://hubs.mozilla.com/ (accessed on 26 April 2023).

[7] ArtSteps. Available online: https://www.artsteps.com/ (accessed on 26 April 2023).

[8] Y. Wu, Q. Jiang, S. Ni, H. Liang (2021), Critical Factors for Predicting Users’ Acceptance of Digital Museums for Experience-Influenced Environments, Information, 12, 426. https://doi.org/10.3390/info12100426.

[9] M.K. Bekele, R. Pierdicca, E. Frontoni, E.S. Malinverni, J. Gain (2018), A survey of augmented, virtual, and mixed reality for cultural heritage, Journal on Computing and Cultural Heritage (JOCCH), 11(2), pp. 1–36.

[10] S. Vosinakis, T. Yannis (2016), Visitor experience in Google Art Project and in Second Life-based virtual museum: A Comparative study.

[11] B. Brown, I. MacColl, M. Chalmers, A. Galani, C. Randell, A. Steed (2003), Lessons from the lighthouse: collaboration in a shared mixed reality system, In Proceedings of the SIGCHI conference on Human factors in computing systems, pp. 577–584.

[12] F. Rahimi, J. Boyd, R. Levy, J. Eiserman (2022), New Media and Space: An Empirical Study of Learning and Enjoyment Through Museum Hybrid Space, IEEE Transactions on Visualization & Computer Graphics, vol. 28, no. 08, pp. 3013–3021.

[13] K. Walczak, W. Cellary, M. White (2006), Virtual museum exhibitions, Computer, 39(3), pp. 93–95.

[14] M. Carrozzino, M. Bergamasco (2010), Beyond virtual museums: Experiencing immersive virtual reality in real museums, Journal of Cultural Heritage, Volume 11, Issue 4, pp. 452–458.

[15] M. Shehade, T. Stylianou-Lambert (2020), Virtual Reality in Museums: Exploring the Experiences of Museum Professionals, Appl. Sci., 10, 4031. https://doi.org/10.3390/app10114031.

[16] M.K. Bekele, E. Champion (2019), A comparison of immersive realities and interaction methods: Cultural learning in virtual heritage, Frontiers in Robotics and AI, 6, p. 91.

[17] V. Okanovic, I. Ivkovic-Kihic, D. Boskovic, B. Mijatovic, I. Prazina, E. Skaljo, S. Rizvic (2022), Interaction in eXtended Reality Applications for Cultural Heritage, Appl. Sci. 2022, 12, 1241. https://doi.org/10.3390/app12031241.

[18] D.-M. Popovici, D. Iordache, R. Comes, C.G.D Neamțu, E. Băutu (2022), Interactive Exploration of Virtual Heritage by Means of Natural Gestures, Appl. Sci., 12, 4452. https://doi.org/10.3390/app12094452.

[19] D. Ferdani, B. Fanini, M.C. Piccioli, F. Carboni, P. Vigliarolo (2020), 3D reconstruction and validation of historical background for immersive VR applications and games: The case study of the Forum of Augustus in Rome, Journal of Cultural Heritage, 43, pp. 129–143.

[20] F. Škola, S. Rizvić, M. Cozza, L. Barbieri, F. Bruno, D. Skarlatos, F. Liarokapis (2020), Virtual Reality with 360-Video Storytelling in Cultural Heritage: Study of Presence, Engagement, and Immersion. Sensors 2020, 20, 5851.

[21] H. Cecotti (2022), Cultural Heritage in Fully Immersive Virtual Reality. Virtual Worlds 2022, 1, 82–102. https://doi.org/10.3390/virtualworlds1010006.

[22] R.G. Boboc, E. Băutu, F. Gîrbacia, N. Popovici, D.-M. Popovici (2022), Augmented Reality in Cultural Heritage: An Overview of the Last Decade of Applications, Appl. Sci. 2022, 12, 9859. https://doi.org/10.3390/app12199859.

[23] R.G. Boboc, M. Duguleană, G.D. Voinea, C.C. Postelnicu, D.M. Popovici, and M. Carrozzino (2019), Mobile augmented reality for cultural heritage: Following the footsteps of Ovid among different locations in Europe, Sustainability, Vol. 11, No. 4, p. 1167, 2019.

[24] M. Ryffel, F. Zünd, Y. Aksoy, A. Marra, M. Nitti, T. Aydın, B. Sumner (2017), AR Museum: A mobile augmented reality application for interactive painting recoloring, ACM Transactions on Graphics (TOG), 36(2), 2017, 19.

[25] I. Paliokas, A.T. Patenidis, E.E. Mitsopoulou, C. Tsita, G. Pehlivanides, E. Karyati, S. Tsafaras, E.A. Stathopoulos, A. Kokkalas, S. Diplaris, G. Meditskos, S. Vrochidis, E. Tasiopoulou, C. Riggas, K. Votis, I. Kompatsiaris, D. Tzovaras (2020), Gamified Augmented Reality Application for Digital Heritage and Tourism, Appl. Sci. 2020, 10, 7868. https://doi.org/10.3390/app10217868.

[26] Q. Jiang, J. Chen, Y. Wu, C. Gu, J. Sun (2022), A study of factors influencing the continuance intention to the usage of augmented reality in museums, Systems, 2022, 10(3), p. 73.

[27] R. Hammady, M. Ma, Z. Al-Kalha, C. Strathearn (2021), A framework for constructing and evaluating the role of MR as a holographic virtual guide in museums, Virtual Reality, 25(4), 2021, pp. 895–918.

[28] I. Pedersen, N. Gale, P. Mirza-Babaei, S. Reid (2017), More than Meets the Eye: The Benefits of Augmented Reality and Holographic Displays for Digital Cultural Heritage, Journal on Computing and Cultural Heritage. 10. 1–15. doi: 10.1145/3051480.

[29] C.-Y. Weng, B. Curless, I. Kemelmacher (2018), Photo Wake-Up: 3D Character Animation from a Single Photo, https://arxiv.org/abs/1812.02246.

[30] J. Jacob, R. Nóbrega (2021), Collaborative Augmented Reality for Cultural Heritage, Tourist Sites and Museums: Sharing Visitors’ Experiences and Interactions, In: Geroimenko, V. (ed.) Augmented Reality in Tourism, Museums and Heritage. Springer Series on Cultural Computing. Springer, Cham. https://doi.org/10.1007/978-3-030-70198-7_2.

[31] A. Matviienko, S. Günther, S. Ritzenhofen, M. Mühlhäuser (2022), AR Sightseeing: Comparing Information Placements at Outdoor Historical Heritage Sites using Augmented Reality, In Proc. ACM Hum.-Comput. Interact., 6, MHCI, Article 194, September 2022, 17 pages. https://doi.org/10.1145/3546729.

[32] M. K. Bekele (2021), Clouds-Based Collaborative and Multi-Modal Mixed Reality for Virtual Heritage, Heritage 2021, 4, 1447–1459. https://doi.org/10.3390/heritage4030080.

[33] K. Kiyokawa, H. Takemura, N. Yokoya (1999), A collaboration support technique by integrating a shared virtual reality and a shared augmented reality, In IEEE SMC’99 Conference Proceedings. 1999 IEEE International Conference on Systems, Man, and Cybernetics (Cat. No. 99CH37028) (Vol. 6, pp. 48–53). IEEE.

[34] T. Piumsomboon, Y. Lee, G. Lee, M. Billinghurst (2017), CoVAR: a collaborative virtual and augmented reality system for remote collaboration, In SIGGRAPH Asia 2017 Emerging Technologies, pp. 1–2. 2017.

[35] C. Pidel, P. Ackermann (2020), Collaboration in virtual and augmented reality: a systematic overview, In Augmented Reality, Virtual Reality, and Computer Graphics: 7th International Conference, AVR 2020, Lecce, Italy, September 7–10, 2020, Proceedings, Part I 7 (pp. 141–156). Springer International Publishing.

[36] Y. Li, E. Ch’ng (2022), A Framework for Sharing Cultural Heritage Objects in Hybrid Virtual and Augmented Reality Environments, In: Ch’ng, E., Chapman, H., Gaffney, V., Wilson, A.S. (eds) Visual Heritage: Digital Approaches in Heritage Science. Springer Series on Cultural Computing; Springer International Publishing, pp. 471–492. https://doi.org/10.1007/978-3-030-77028-0_23.

[37] Vuforia Area Target. Available online: https://library.vuforia.com/environments/area-targets (accessed on 26 April 2023).

[38] Mixed Reality Feature Tool. Available online: https://learn.microsoft.com/en-us/windows/mixed-reality/mrtk-unity/mrtk2 (accessed on 26 April 2023).

[39] WebXR. Available online: https://www.w3.org/TR/webxr/ (accessed on 26 April 2023).

[40] Photon Engine. Available online: https://www.photonengine.com/ (accessed on 26 April 2023).

Biographies

Choonsung Shin received his B.Sc. degree in Computer Science from Soongsil University in 2004. He received his M.Sc. and Ph.D. degrees in Information and Communication (Computer Science and Engineering) from the Gwangju Institute of Science and Technology (GIST) in 2006 and 2010, respectively. He was a Postdoctoral Fellow at the HCI Institute of Carnegie Mellon University (CMU) from 2010 to 2012. He was a principal researcher at the Korea Electronics Technology Institute (KETI) from 2013 to 2019 and a CT R&D Program Director of the Ministry of Culture, Sports and Tourism (MCST) from 2018 to 2019. In September 2019, he joined the Graduate School of Culture, Chonnam National University, where he is currently an associate professor. His research interests include culture technology and contents, VR/AR, and human–computer interaction.

Seokhee Oh is a Program Director at the R&D Division, Korea Creative Content Agency (KOCCA), in Korea. He was an associate professor in the Department of Computer Engineering at Gachon University, Seongnam, from 2016 to 2021. Oh received his Ph.D. in 2016 from the Department of IT Convergence Engineering, Gachon University Graduate School. Oh’s current and previous research interests include Metaverse, XR, virtual reality, HCI, UX, and game design.

Hieyong Jeong received his Ph.D. degree in mechanical engineering from Osaka University, Japan, in 2009. From 2009 to 2013, he was a senior research engineer (full-time) with Samsung Heavy Industries Co., Ltd., Daejeon, Republic of Korea. From 2013 to 2014, he was a researcher (full-time) with Chonnam National University, Gwangju, Republic of Korea. From 2014 to 2019, he was an associate professor (full-time) with the Department of Robotics & Design for Innovative Healthcare, Graduate School of Medicine, Osaka University, Suita, Japan. Since 2019, he has been working as an associate professor (full-time) with the Department of Artificial Intelligence Convergence, Chonnam National University, Republic of Korea. His research interests include robotic intelligence and artificial intelligence of things (AIoT) systems.

Journal of Web Engineering, Vol. 22, No. 7, 1055–1074.

doi: 10.13052/jwe1540-9589.2275

© 2024 River Publishers