A Web Engineering-based Robust Watermark Restoration and Recognition Method for Protecting Online Video Content

Jieun Lee1, Byeongchan Park1, Uijin Jang2 and Yongtae Shin3,*

1Dept. of Computer Science and Engineering, Soongsil University, Republic of Korea

2Spartan SW Education Center, Soongsil University, Republic of Korea

3School of Computer Science and Engineering, Soongsil University, Republic of Korea

E-mail: lhsgsse10@soongsil.ac.kr; pbc866@ssu.ac.kr; neon7624@ssu.ac.kr; shin@ssu.ac.kr

*Corresponding Author

Received 05 March 2025; Accepted 23 April 2025

Abstract

With the rapid expansion of over-the-top (OTT) services and web-based video streaming platforms, copyright protection has become a critical concern. Unauthorized redistribution and modification of digital content via composite transformations and distortions threaten content security. While watermarking and digital rights management (DRM) offer protection, existing methods often fail under real-world web-based attack scenarios. In this paper, we present a web engineering-based robust watermark restoration and recognition method to enhance the security of online video content. Our approach employs AKAZE feature detection to extract robust feature points, while a discrete wavelet transform (DWT) is used for subband decomposition, embedding the watermark in the lowest-energy subband near the detected feature points. To ensure resilience against distortions common in web environments, we evaluate our method under four types of noise (Gaussian, salt-and-pepper, uniform, and Poisson) and four rotation angles (0°, 90°, 180°, and 270°). AKAZE-based feature matching compensates for rotation distortions, while noise removal is handled using Gaussian, median, or BM3D filtering. Performance evaluation using the peak signal-to-noise ratio (PSNR), structural similarity index measure (SSIM), normalized correlation (NC), and bit error rate (BER) confirms the effectiveness of our method. Results show that BM3D filtering achieves the highest average NC (0.8996) and the lowest BER (0.1137), demonstrating strong robustness against composite transformation attacks. This study contributes to web-based video security by integrating feature-based watermarking techniques with web engineering principles, ensuring effective protection for modern web applications.

Keywords: Web video content, copyright protection, robustness indicators, digital watermark, feature point extracting and matching.

1 Introduction

The video content platform industry has rapidly evolved into the over-the-top (OTT), online video service era, where movies, dramas, and TV programs are delivered over the Internet to various devices. This transformation has been accelerated by changes in consumer behavior during the COVID-19 pandemic [1, 2]. According to a Samjong KPMG report, the global video platform market, as analyzed by MarketsandMarkets, is projected to grow from USD13.2 billion in 2024 to USD25.6 billion in 2028 [2]. Furthermore, a report from the Korea Information Society Development Institute (KISDI) indicates that domestic OTT service usage continues to expand, with 77% of the population using free or paid OTT platforms as of 2023 [3]. The rapid expansion of web-based streaming services has raised critical copyright protection issues. In particular, the illegal duplication and redistribution of content has become a serious challenge for online content providers. A study by the Ministry of Culture, Sports, and Tourism estimates that losses due to illegal content distribution reached approximately KRW27 trillion by 2021 [4]. To counteract this, streaming services employ digital rights management (DRM) and watermarking technologies to enhance content protection. However, content pirates continuously exploit various techniques to bypass these security measures. One of the most common methods involves modifying content before re-uploading it to social media or other platforms. For instance, movies, TV programs, and major video platform content (e.g., YouTube and SOOP) are often rotated at specific angles or embedded with noise before being reuploaded. Figure 1 illustrates an example of illegally duplicated content modified through such transformations.

Figure 1 Examples of illegally duplicated content created through content transformations (some portions blurred to protect privacy). Source: Facebook “Reading YouTube” page (2 images) and “Drama/Film Archive” page (1 image).

These composite transformations exploit the limitations of conventional watermarking techniques, which struggle to restore watermarks once content has been altered. To evade existing content protection systems, pirates apply geometric transformations (e.g., rotation, scaling) and signal-processing-based transformations (e.g., noise addition, compression) in combination. While various detection methods have recently been developed, most existing solutions are only effective for single transformations and face significant challenges in detecting complex composite transformations and distortions (e.g., rotation combined with noise addition). Therefore, a new approach that integrates both geometric and signal-processing transformations is required. In this paper, we propose a robust watermark restoration and recognition method based on feature points extracted from manipulated web video content undergoing various composite transformations and distortions. Specifically, we focus on images (frames) subjected to rotational transformations and noise addition, which are common examples of composite transformations in illicit content distribution. By leveraging a feature point algorithm, our approach enables watermark recovery from illegally redistributed OTT content that has undergone both geometric transformation and signal distortion. This study contributes to web-based video security from a web engineering perspective by integrating feature-based watermarking techniques with web application principles. Furthermore, it provides an effective solution for enhancing the resilience of watermarking methods in modern web-based streaming environments.

The remainder of this paper is organized as follows. Section 2 introduces related research, including an overview of digital watermarking, watermark embedding techniques, and feature point extraction and matching algorithms. In Section 3, we describe our proposed method for recovering and recognizing an original watermark from manipulated web content using feature points. Section 4 presents the experimental setup and results, and Section 5 concludes the paper.

2 Related Work

2.1 Digital Watermarks and Watermark Embedding Techniques

A digital watermark is a type of marker covertly embedded into noise-tolerant signals (e.g., audio or image data) to identify the owner of an image and address ownership disputes [5]. Watermarking techniques can be broadly classified into two categories based on the working domain in which the watermark is inserted: spatial and frequency domain methods. In spatial domain embedding, the pixel values of images or videos are modified directly to embed a watermark. Representative examples include least significant bit (LSB) and spread spectrum modulation (SSM) methods. In frequency domain embedding, an image or video is transformed into the frequency domain, and the watermark is embedded into specific frequency bands. Representative transforms include the discrete cosine transform (DCT), discrete wavelet transform (DWT), and discrete Fourier transform (DFT). Among the frequency domain methods, DWT is often regarded as superior to other discrete-transform-based approaches, such as DCT and DFT, in terms of subjective visual quality once the watermark signal is transformed back into the spatial domain. This is because the watermark signal energy tends to concentrate around the boundary areas, which typically contain more high-frequency components [6]. Hence, this study employed DWT to embed the watermark.

2.1.1 DWT

DWT can simultaneously analyze signals in both the time and frequency domains and is advantageous for handling discontinuous data [7]. This involves decomposing a signal using a set of basis functions called wavelets. Unlike the Fourier transform or DCT, DWT possesses spatial localization properties, enabling the analysis of not only overall frequency characteristics but also local features [8]. In particular, DWT facilitates multi-resolution and multi-scale analysis, thereby allowing the detailed high-frequency aspects of an image and its overall structure to be separately examined. It has been widely applied in various fields including image processing, data compression, signal analysis, and digital watermarking. An example of applying DWT to an input image is shown in Figure 2 where the image is decomposed into four frequency subbands: LL (low-frequency), LH, HL, and HH (high-frequency).
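The four-subband decomposition described above can be illustrated with a minimal sketch. It assumes the Haar wavelet, which is only one possible basis (the wavelet family is not specified here), and the function name `haar_dwt2` is hypothetical. Note that the LH/HL naming convention varies between libraries.

```python
import numpy as np

def haar_dwt2(image):
    """Single-level 2D Haar DWT; returns the LL, LH, HL, and HH subbands."""
    a = np.asarray(image, dtype=np.float64)
    if a.shape[0] % 2 or a.shape[1] % 2:
        raise ValueError("image height and width must be even")
    s = np.sqrt(2.0)
    # Horizontal pass: combine horizontally adjacent pixels into
    # low-pass (sum) and high-pass (difference) halves.
    lo = (a[:, 0::2] + a[:, 1::2]) / s
    hi = (a[:, 0::2] - a[:, 1::2]) / s
    # Vertical pass: repeat the same split on vertically adjacent rows.
    ll = (lo[0::2, :] + lo[1::2, :]) / s
    lh = (lo[0::2, :] - lo[1::2, :]) / s
    hl = (hi[0::2, :] + hi[1::2, :]) / s
    hh = (hi[0::2, :] - hi[1::2, :]) / s
    return ll, lh, hl, hh

# Toy 8x8 "image" with a smooth gradient (illustrative data only).
image = np.arange(64, dtype=np.float64).reshape(8, 8)
ll, lh, hl, hh = haar_dwt2(image)
```

Each subband has half the height and width of the input, and because this Haar transform is orthonormal, the total energy of the four subbands equals that of the input image.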

Figure 2 Sketch map of the DWT decomposition of an input image.

2.2 Image Filter

A filtering process is used to enhance images and remove noise [9, 10]. The filter is composed of a matrix called a kernel that serves as a matrix of weights for each corresponding pixel. The following types of filters are used.

2.2.1 Gaussian filter

A Gaussian filter is used as a linear filtering method to achieve more effective image smoothing [11]. The Gaussian filter obtains a weighted average of the pixels centered on each pixel of the image using a filter kernel. This is based on a Gaussian distribution, and the two-dimensional Gaussian function is given by:

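$$G(x, y) = \frac{1}{2\pi\sigma^{2}} \exp\left(-\frac{x^{2} + y^{2}}{2\sigma^{2}}\right) \tag{1}$$

where $x$ and $y$ represent the relative coordinates of each pixel within the kernel, while $\sigma$ (the standard deviation of the Gaussian distribution) controls the smoothing intensity.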

Onyedinma et al. [12] found that the Gaussian filter is most effective for Gaussian noise, Poisson noise, and speckle noise, but less effective for salt-and-pepper noise.
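As an illustration of how the two-dimensional Gaussian function in (1) becomes a filter kernel, the following sketch samples it on a small grid. The kernel size of 5 and $\sigma = 1.0$ are assumptions chosen for the example, not the settings used in this study, and `gaussian_kernel` is a hypothetical helper name.

```python
import numpy as np

def gaussian_kernel(size, sigma):
    """Sample G(x, y) = exp(-(x^2 + y^2) / (2 sigma^2)) / (2 pi sigma^2)."""
    if size % 2 == 0:
        raise ValueError("size must be odd so the kernel has a centre pixel")
    half = size // 2
    # x and y are coordinates relative to the kernel centre.
    y, x = np.mgrid[-half:half + 1, -half:half + 1]
    kernel = np.exp(-(x ** 2 + y ** 2) / (2.0 * sigma ** 2))
    kernel /= 2.0 * np.pi * sigma ** 2
    # Normalise so the weights sum to 1 and mean brightness is preserved.
    return kernel / kernel.sum()

kernel = gaussian_kernel(5, 1.0)  # illustrative size and sigma
```

A larger $\sigma$ spreads the weights more evenly across the kernel, which increases the smoothing strength at the cost of edge detail.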

2.2.2 Median filter

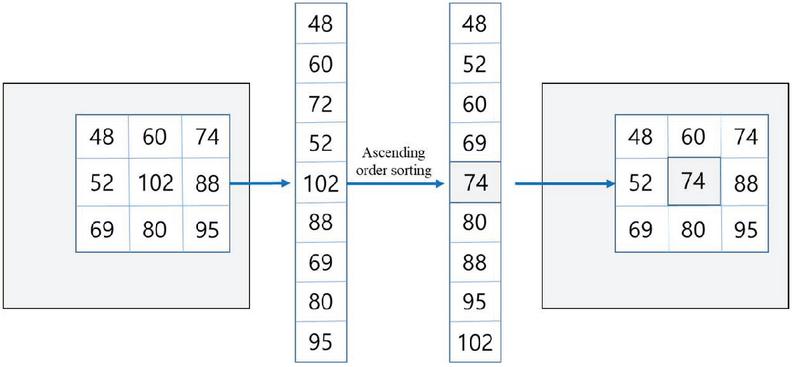

As a nonlinear filtering method, the median filter processes the image pixel-by-pixel, replacing each value with the median of its neighboring pixels. It is a type of smoothing technique similar to linear Gaussian filtering [13]. To compute the median filter, all values within the sliding window are sorted in ascending order. If the number of values is odd, the central value is selected; if it is even, the average of the two central values is used. Figure 3 illustrates an example of median filtering using a sliding window.

Figure 3 Example of sliding window median filtering.

Selvi et al. [14] reported that the median filter performs best in the presence of salt-and-pepper noise.
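The sliding-window procedure can be sketched as follows. This is a deliberately simple, unoptimized illustration: the 3×3 window and the edge-replication padding are assumptions made for the example, and `median_filter` is a hypothetical helper name.

```python
import numpy as np

def median_filter(image, size=3):
    """Replace each pixel with the median of its size x size neighbourhood."""
    a = np.asarray(image, dtype=np.float64)
    pad = size // 2
    # Replicate border pixels so every pixel has a full window (assumption).
    padded = np.pad(a, pad, mode="edge")
    out = np.empty_like(a)
    for r in range(a.shape[0]):
        for c in range(a.shape[1]):
            window = padded[r:r + size, c:c + size]
            out[r, c] = np.median(window)
    return out

# A flat grey patch corrupted by a single "salt" pixel.
flat = np.full((5, 5), 10.0)
flat[2, 2] = 255.0
cleaned = median_filter(flat)
```

Because an isolated outlier never reaches the middle of the sorted window, the impulse is removed entirely, which is consistent with the suitability of the median filter for salt-and-pepper noise noted above.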

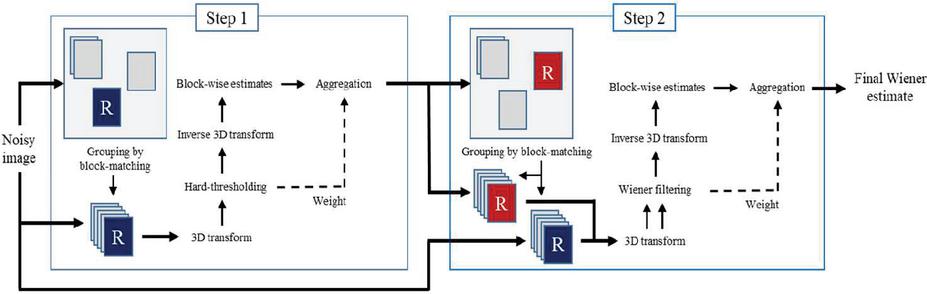

2.2.3 BM3D (block-matching and 3D filtering)

BM3D [15], which builds upon the concept of non-local means (NLM) [16], removes noise by searching for similar patches within a given window [17]. The BM3D algorithm comprises two main stages. In the first stage, a hard-thresholding technique is employed, and in the second stage, Wiener filtering is applied for additional noise reduction. In the first stage, BM3D produces a basic estimate by attempting to remove the noise. This basic estimate then serves as an oracle (i.e., degradation model) for the Wiener filtering process in the second stage. The hard-thresholding technique sets the coefficients below a certain threshold to zero, thereby significantly reducing both the computational complexity and noise components [18]. Wiener filtering is an adaptive filter that assigns different weights to each coefficient using noise statistics; it preserves boundary information more effectively than other linear filters [19]. Figure 4 shows a block diagram of the BM3D process.

Figure 4 Block diagram of the BM3D algorithm.

Danish [20] reported that when the performance of NL-means, K-SVD, and BM3D was compared, BM3D produced the best results.

2.3 AKAZE

AKAZE [21] is an algorithm for extracting feature points from local regions in a two-dimensional image, as proposed by Alcantarilla et al. in 2013. It is a high-speed version of KAZE [22] that shares the same four-step structure as SIFT [23] and employs a nonlinear filter to construct the image scale space. In addition, it utilizes a nonlinear diffusion filter to extract feature points across multiple scales and adopts modified SURF (M-SURF) and modified local difference binary (M-LDB) descriptors. These descriptors are generated relatively quickly, providing both scale and rotation invariance with high speed and accuracy.

Lee et al. [24] reported that AKAZE is effective in both image quality assessment and watermark recovery at various angles (0°, 10°, 30°, 60°, and 90°). Therefore, this study employs AKAZE for the angle restoration of images.

3 Feature Point-based Watermark Restoration and Recognition for Manipulated Web Video Content

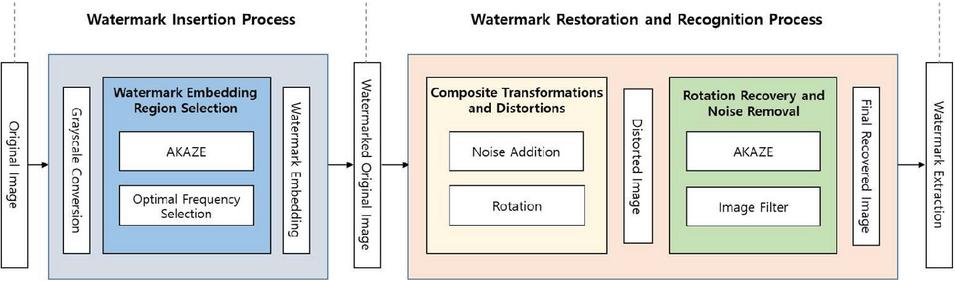

The watermark restoration and recognition method proposed in this paper, which uses feature points for manipulated web video content under composite transformations and distortions, consists of two processes. The first process involves inserting a watermark into the original image (frame). The second process involves generating a transformed image by applying composite transformations and distortions (including rotation and noise) to the watermarked image, followed by watermark restoration and recognition through a comparison with the original watermark. The configuration is shown in Figure 5.

Figure 5 Overall configuration of the proposed feature point-based watermark restoration and recognition method.

3.1 Watermark Insertion Process

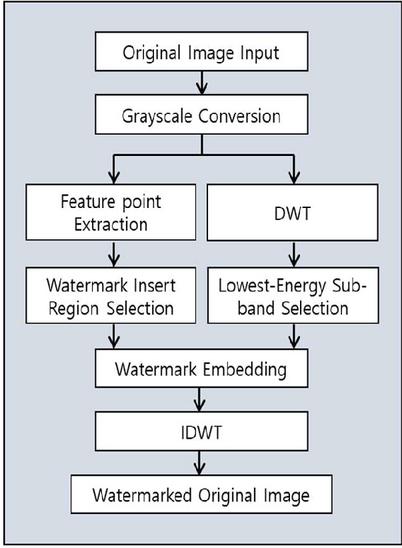

The process of inserting a watermark into the original image is illustrated in Figure 6.

Figure 6 Watermark insertion process.

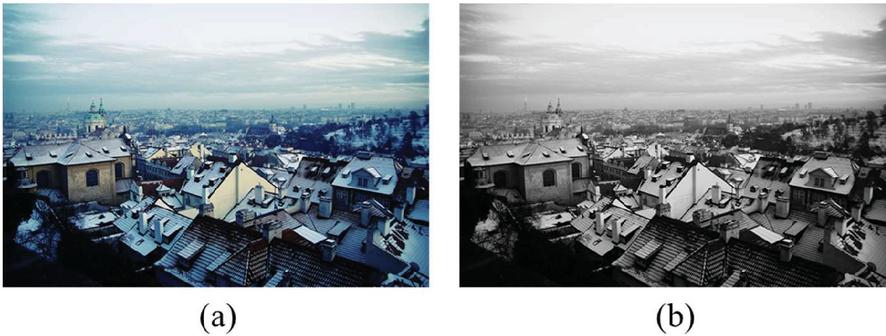

First, the original image was converted to grayscale. Although it is possible to independently embed watermarks into each R/G/B (or Y/Cb/Cr) channel of a color image, we converted it to grayscale in this study to reduce the computational complexity. The grayscale conversion was performed using the ITU-R BT.601 standard, as follows:

$$Y = 0.299R + 0.587G + 0.114B$$

where $Y$ is the grayscale intensity, and $R$, $G$, and $B$ are the red, green, and blue components of the original image. An example of converting an original image into grayscale is shown in Figure 7.
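The BT.601 conversion amounts to a weighted sum over the color channels, as the following sketch shows. It assumes the channels are stored in R, G, B order (some libraries load images as B, G, R), and `to_grayscale_bt601` is a hypothetical helper name.

```python
import numpy as np

def to_grayscale_bt601(rgb):
    """Weighted sum of R, G, B using the ITU-R BT.601 luma coefficients."""
    rgb = np.asarray(rgb, dtype=np.float64)
    weights = np.array([0.299, 0.587, 0.114])  # R, G, B order assumed
    return rgb @ weights

# Two illustrative pixels: pure white and pure red.
pixels = np.array([[[255, 255, 255], [255, 0, 0]]], dtype=np.uint8)
gray = to_grayscale_bt601(pixels)
```

Since the three coefficients sum to 1, white stays at full intensity, while pure red maps to roughly 30% of the maximum.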

Figure 7 Grayscale transformation of the original image: (a) original image, (b) gray scale-transformed image.

After the grayscale conversion, the watermark insertion process is divided into two main stages. The first stage involves selecting the region for watermark insertion by extracting feature points using the AKAZE algorithm and designating those regions as the insertion area. Feature-point-based watermarking enables distributed embedding, allowing the identification of the same or neighboring feature points even if the image undergoes attacks and is manipulated. Moreover, by embedding the watermark into the local regions around those feature points rather than the entire image, visual quality can be maintained.

The second stage involves the actual watermark embedding process. First, the grayscale image is decomposed using a single-level two-dimensional discrete wavelet transform (2D DWT), which yields four frequency subbands: LL, LH, HL, and HH.

To determine the optimal subband for embedding, we calculate the energy of each subband using the following equation:

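$$E_{S} = \sum_{i=1}^{M} \sum_{j=1}^{N} \left| S(i, j) \right|^{2} \tag{2}$$

where $S \in \{LL, LH, HL, HH\}$ represents a DWT subband, and $(i, j)$ are the pixel coordinates within the subband of size $M \times N$. The subband with the lowest energy is selected for embedding, as defined by:

$$S^{*} = \arg\min_{S \in \{LL, LH, HL, HH\}} E_{S} \tag{3}$$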

This approach minimizes perceptual distortion while preserving the visual quality of the image. The watermark is then embedded within a square region centered at a feature point detected by the AKAZE feature extractor in the selected subband.
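The subband selection rule in (2) and (3) can be sketched in a few lines. The sketch assumes that the energy is the plain sum of squared coefficients without further normalization; the subband values are synthetic, and the helper names are hypothetical.

```python
import numpy as np

def subband_energy(subband):
    """Energy as the sum of squared coefficients (normalisation assumed absent)."""
    s = np.asarray(subband, dtype=np.float64)
    return float(np.sum(s ** 2))

def select_lowest_energy(subbands):
    """Return the name of the minimum-energy subband and all energies."""
    energies = {name: subband_energy(c) for name, c in subbands.items()}
    return min(energies, key=energies.get), energies

# Synthetic coefficients standing in for a real decomposition.
subbands = {
    "LL": np.full((4, 4), 100.0),
    "LH": np.full((4, 4), 3.0),
    "HL": np.full((4, 4), 2.0),
    "HH": np.full((4, 4), 0.5),
}
chosen, energies = select_lowest_energy(subbands)
```

For natural images the LL subband usually carries most of the energy, so the rule tends to pick one of the detail subbands, as it does for these synthetic values.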

After selecting the optimal subband for embedding, the watermark is embedded into the corresponding frequency coefficients. Figure 8 shows the original watermark image used in this paper. The embedding process follows the equation:

$$C'(i, j) = C(i, j) + \alpha W(i, j) \tag{4}$$

where $C'(i, j)$ represents the modified coefficients after embedding the watermark, $C(i, j)$ denotes the original coefficients of the selected subband, $W(i, j)$ is the watermark signal, and $\alpha$ is a scaling factor that adjusts the embedding strength to balance imperceptibility and robustness. Here, $(i, j)$ denote the pixel coordinates within the selected subband.
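A minimal sketch of the additive embedding rule in (4), restricted to a square region around a feature point, is given below. The feature-point location, the watermark size, and the value $\alpha = 0.1$ are illustrative assumptions, and `embed_watermark` is a hypothetical helper name.

```python
import numpy as np

def embed_watermark(coeffs, watermark, center, alpha):
    """Apply C'(i, j) = C(i, j) + alpha * W(i, j) in a square around `center`."""
    out = np.array(coeffs, dtype=np.float64)  # work on a copy
    wm = np.asarray(watermark, dtype=np.float64)
    size = wm.shape[0]
    if wm.shape != (size, size):
        raise ValueError("watermark must be square")
    top = center[0] - size // 2
    left = center[1] - size // 2
    if top < 0 or left < 0 or top + size > out.shape[0] or left + size > out.shape[1]:
        raise ValueError("watermark region falls outside the subband")
    out[top:top + size, left:left + size] += alpha * wm
    return out

coeffs = np.zeros((8, 8))    # stand-in for the selected subband
watermark = np.ones((4, 4))  # stand-in for a binary watermark patch
marked = embed_watermark(coeffs, watermark, center=(4, 4), alpha=0.1)
```

A larger $\alpha$ makes the watermark easier to recover after distortion but also more visible, which is the trade-off between robustness and imperceptibility described above.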

Once the watermark is embedded, the inverse discrete wavelet transform (IDWT) is applied to reconstruct the final watermarked image:

$$I_{w} = \mathrm{IDWT}(LL', LH', HL', HH')$$

where $LL'$, $LH'$, $HL'$, and $HH'$ represent the four frequency subbands after the watermark embedding process.

This approach ensures that the watermark is imperceptible while maintaining robustness against common image processing attacks. The resulting watermarked image preserves the visual quality of the original image while embedding the necessary authentication information.
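The reconstruction step can be illustrated with a round trip through a forward and inverse transform. The sketch assumes the Haar wavelet (the wavelet family is not stated here), modifies the HH subband only as an example, and uses hypothetical helper names and a hypothetical perturbation of 2.0.

```python
import numpy as np

S = np.sqrt(2.0)

def haar_dwt2(image):
    """Single-level 2D Haar DWT returning LL, LH, HL, HH."""
    a = np.asarray(image, dtype=np.float64)
    lo = (a[:, 0::2] + a[:, 1::2]) / S
    hi = (a[:, 0::2] - a[:, 1::2]) / S
    return ((lo[0::2] + lo[1::2]) / S, (lo[0::2] - lo[1::2]) / S,
            (hi[0::2] + hi[1::2]) / S, (hi[0::2] - hi[1::2]) / S)

def haar_idwt2(ll, lh, hl, hh):
    """Inverse of haar_dwt2: rebuild the image from the four subbands."""
    rows, cols = ll.shape
    lo = np.empty((rows * 2, cols))
    hi = np.empty((rows * 2, cols))
    # Undo the vertical pass.
    lo[0::2], lo[1::2] = (ll + lh) / S, (ll - lh) / S
    hi[0::2], hi[1::2] = (hl + hh) / S, (hl - hh) / S
    # Undo the horizontal pass.
    out = np.empty((rows * 2, cols * 2))
    out[:, 0::2], out[:, 1::2] = (lo + hi) / S, (lo - hi) / S
    return out

rng = np.random.default_rng(0)
image = rng.uniform(0, 255, (8, 8))  # synthetic frame
ll, lh, hl, hh = haar_dwt2(image)

hh_marked = hh.copy()
hh_marked[1:3, 1:3] += 2.0           # hypothetical embedded perturbation
watermarked = haar_idwt2(ll, lh, hl, hh_marked)
```

Decomposing `watermarked` again returns the modified subband unchanged, which is what makes extraction from the frequency domain possible when no distortion has been applied.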

Figure 8 Watermark.

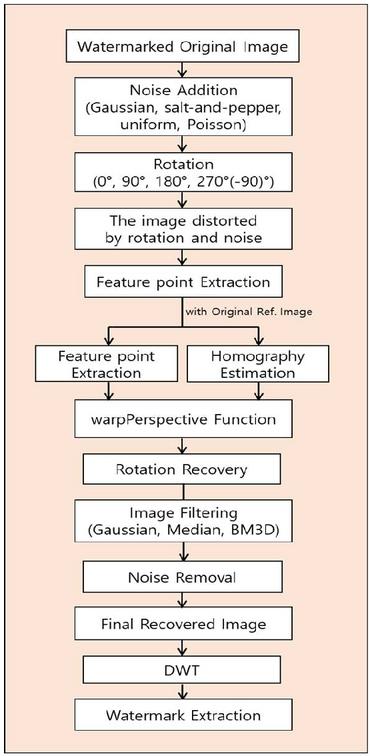

3.2 Watermark Restoration and Recognition Process from Rotation- and Noise-distorted Images

The processes of watermark restoration and original watermark recognition from an image distorted by rotation and noise are illustrated in Figure 9.

Figure 9 Watermark restoration and recognition process from rotation- and noise-distorted images.

This process can be divided into two stages. In the first stage, noise was added to the original watermarked image, followed by rotation to generate a distorted image. Four representative types of noise (Gaussian, salt-and-pepper, uniform, and Poisson) were used. Gaussian noise arises from random variations within the signal and is modeled by adding random values drawn from a normal (Gaussian) distribution, characterized by its specific probability density function (PDF). Salt-and-pepper noise is a type of impulse noise, also known as spike noise, appearing randomly as pixels set to minimum (black) or maximum (white) intensity in digital images. Uniform noise is a type of noise in which every value within a specified range is equally probable. Poisson noise follows a Poisson distribution, representing the number of events occurring in a fixed interval of time or space. The parameters for each noise type are summarized in Table 1.

Table 1 Parameters used for each noise type

| Noise Type | Parameter(s) | Value |
| --- | --- | --- |
| Gaussian | Mean ($\mu$), variance ($\sigma^{2}$) | , |
| Salt-and-pepper | Amount, salt vs pepper ratio | , |
| Uniform | Lower bound, upper bound | , |
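The four noise models can be sketched as follows for images scaled to the range [0, 1]. All parameter values in the example (variance 0.01, amount 0.1, bounds ±0.1, peak 255) are assumptions chosen for illustration and are not the settings of Table 1; the function names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

def add_gaussian(img, mean, var):
    """Add values drawn from a normal distribution."""
    return np.clip(img + rng.normal(mean, np.sqrt(var), img.shape), 0.0, 1.0)

def add_salt_pepper(img, amount, salt_ratio):
    """Set a fraction `amount` of pixels to white (salt) or black (pepper)."""
    out = img.copy()
    u = rng.random(img.shape)
    out[u < amount * salt_ratio] = 1.0
    out[(u >= amount * salt_ratio) & (u < amount)] = 0.0
    return out

def add_uniform(img, low, high):
    """Add values drawn uniformly from [low, high)."""
    return np.clip(img + rng.uniform(low, high, img.shape), 0.0, 1.0)

def add_poisson(img, peak):
    """Signal-dependent noise: sample photon counts, then rescale."""
    return np.clip(rng.poisson(img * peak) / peak, 0.0, 1.0)

base = np.full((100, 100), 0.5)  # flat mid-grey test image
noisy = {
    "gaussian": add_gaussian(base, mean=0.0, var=0.01),
    "salt_pepper": add_salt_pepper(base, amount=0.1, salt_ratio=0.5),
    "uniform": add_uniform(base, low=-0.1, high=0.1),
    "poisson": add_poisson(base, peak=255.0),
}
```

Unlike the other three models, Poisson noise is signal-dependent, so its strength varies with local brightness rather than being fixed by a single variance.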

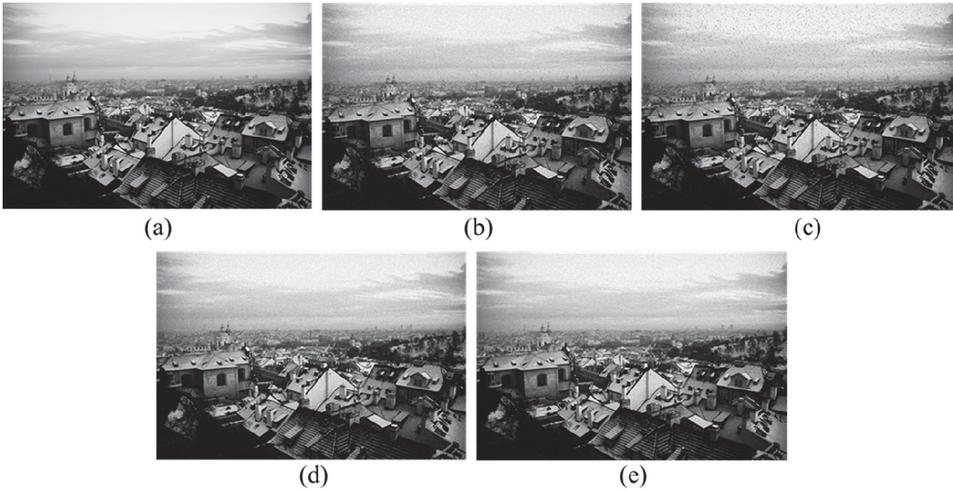

Figure 10 Examples of images distorted by noise addition: (a) watermarked original image, (b) Gaussian noise, (c) salt-and-pepper noise, (d) uniform noise, (e) Poisson noise.

Each noisy image was then rotated by 0°, 90°, 180°, or 270° (−90°) to produce an image distorted by both noise and geometric transformation. Examples of the noise types added to the image are shown in Figure 10 with zoomed views to better observe visual degradation. Although noise-removal methods are relatively robust against noise addition, once a geometric transformation such as rotation occurs, changes in pixel coordinates make it difficult to estimate the location of the watermark. Therefore, in this paper, we employed the AKAZE feature point extraction algorithm again in this stage.
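The effect of quarter-turn rotations on pixel coordinates can be illustrated with a small array. The sketch assumes counterclockwise rotation, which is the convention of `np.rot90`; the rotation direction used in the experiments is not stated. It also undoes each rotation from the known angle, whereas the proposed method estimates the transformation from matched feature points.

```python
import numpy as np

frame = np.arange(12).reshape(3, 4)  # toy 3x4 "frame"

# np.rot90 rotates counterclockwise by k * 90 degrees.
rotated = {angle: np.rot90(frame, k=angle // 90) for angle in (0, 90, 180, 270)}

# Undo each rotation by applying the opposite number of quarter turns.
restored = {angle: np.rot90(img, k=-(angle // 90)) for angle, img in rotated.items()}
```

A rotation by 270° is the same operation as a rotation by −90°, and a 90° or 270° turn swaps the height and width of the frame, which is why a watermark location expressed in the original coordinates no longer applies directly.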

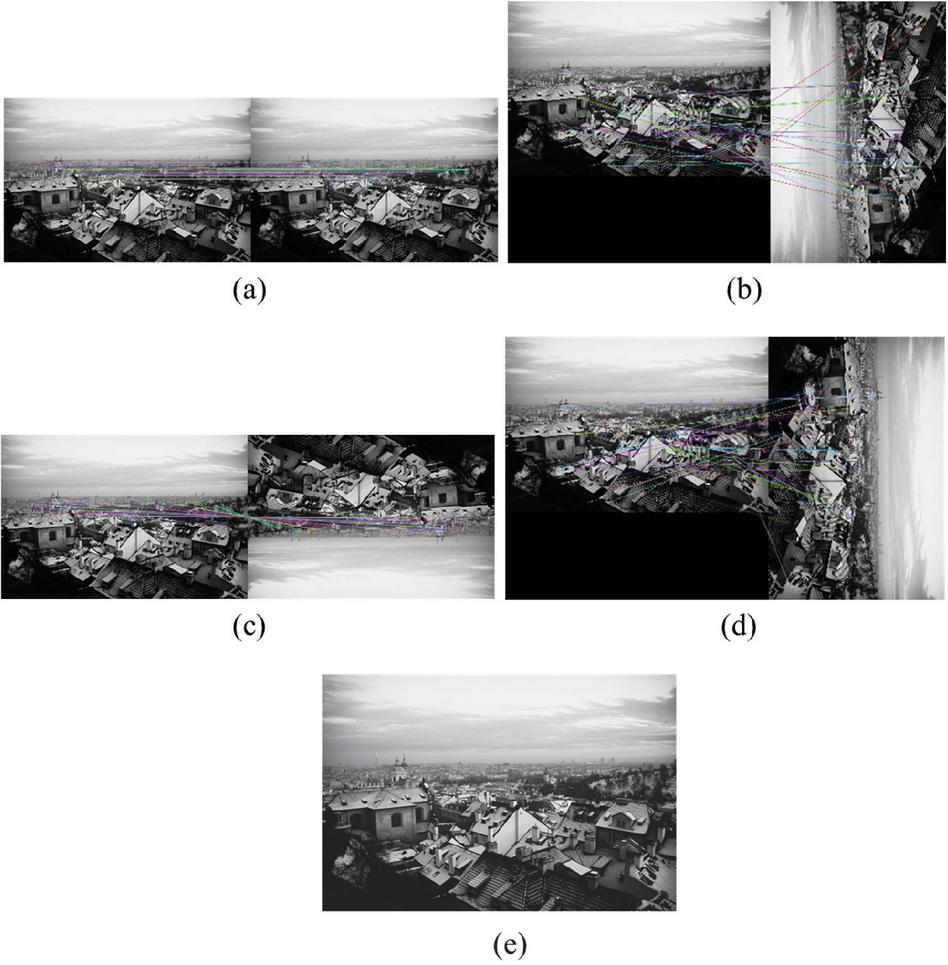

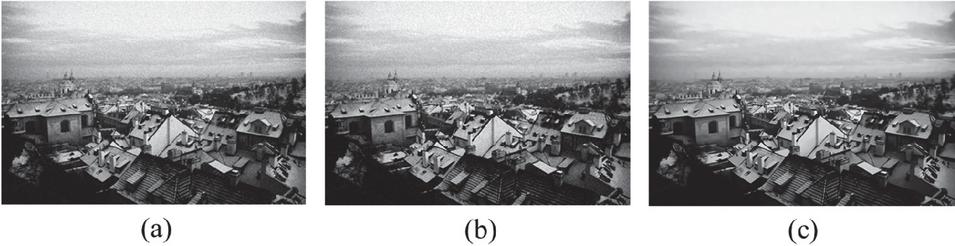

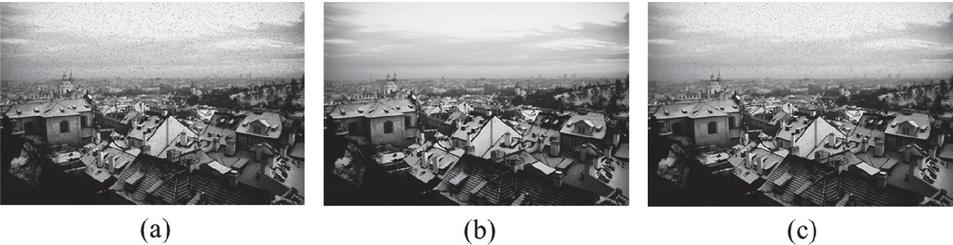

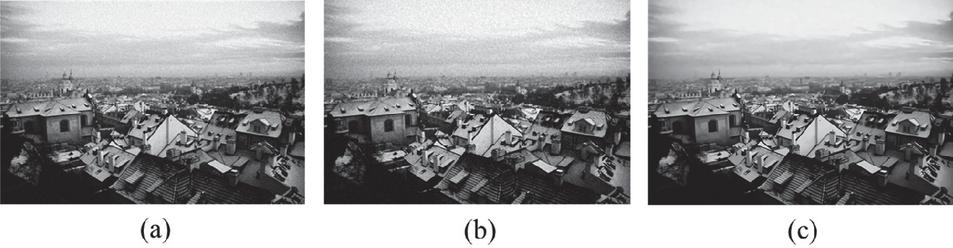

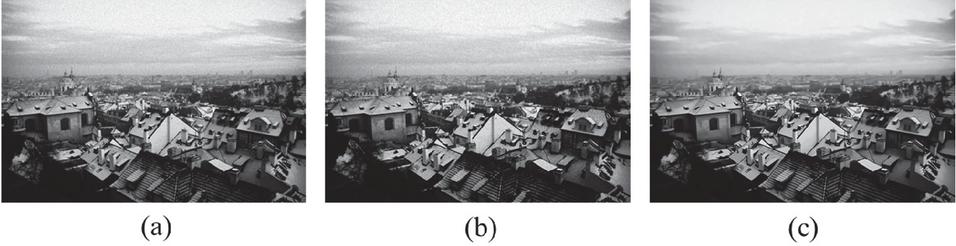

In the second stage, we restored the watermark from the distorted image and recognized the watermark. Specifically, we estimated a homography transformation matrix by matching the AKAZE feature points between the noise- and rotation-distorted image and the original image. We then applied the estimated matrix with the warpPerspective function to restore the image at 0°, 90°, 180°, and 270° (−90°) back to the original orientation. The results of the AKAZE matching and angle restoration for rotation are shown in Figure 11. Next, we removed noise from each angle-restored image using three filters with the following parameters: a Gaussian filter ( kernel, ), a median filter ( kernel), and BM3D (). The images restored using these filters are shown in Figures 12–15.
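To illustrate how a homography can be estimated from matched point pairs, the following sketch uses the direct linear transform (DLT) solved with a singular value decomposition. This is a simplified stand-in: it assumes the matches are already available and free of outliers, whereas practical pipelines typically add a robust estimator such as RANSAC. The point coordinates and helper names are hypothetical.

```python
import numpy as np

def estimate_homography(src, dst):
    """Estimate H such that dst ~ H @ src (homogeneous) using the DLT."""
    src = np.asarray(src, dtype=np.float64)
    dst = np.asarray(dst, dtype=np.float64)
    if src.shape != dst.shape or src.shape[0] < 4:
        raise ValueError("need at least four matched point pairs")
    rows = []
    for (x, y), (u, v) in zip(src, dst):
        # Two linear constraints on the nine entries of H per match.
        rows.append([-x, -y, -1, 0, 0, 0, u * x, u * y, u])
        rows.append([0, 0, 0, -x, -y, -1, v * x, v * y, v])
    # The solution is the right singular vector of the smallest singular value.
    _, _, vt = np.linalg.svd(np.asarray(rows))
    h = vt[-1].reshape(3, 3)
    return h / h[2, 2]

def apply_homography(h, points):
    """Map 2D points through H and convert back from homogeneous coordinates."""
    pts = np.asarray(points, dtype=np.float64)
    homog = np.hstack([pts, np.ones((len(pts), 1))]) @ h.T
    return homog[:, :2] / homog[:, 2:3]

# Hypothetical matched feature points in the original frame.
src = np.array([[10, 20], [80, 15], [70, 90], [20, 60], [50, 50]], dtype=np.float64)
# Hypothetical distortion: a quarter turn of a 100-pixel-wide frame.
dst = np.column_stack([99.0 - src[:, 1], src[:, 0]])

h = estimate_homography(src, dst)
restored = apply_homography(np.linalg.inv(h), dst)
```

Applying the inverse of the estimated matrix maps the distorted coordinates back to the original ones, which is the role played by the warp step in angle restoration.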

Finally, we compared the final recovered image – obtained after noise removal and angle restoration – with the watermarked original image to evaluate image quality loss and structural similarity using the peak signal-to-noise ratio (PSNR) and the structural similarity index measure (SSIM), respectively. We then applied DWT to the final recovered image to extract the watermark. By comparing this extracted watermark with the original watermark, we computed normalized correlation (NC) and bit error rate (BER) to measure the accuracy of watermark restoration.

Figure 11 AKAZE matching results: (a) 0°, (b) 90°, (c) 180°, (d) 270° (−90°), (e) angle-restored images.

Figure 12 Results of applying noise removal filters to Gaussian noise-added images: (a) Gaussian filter, (b) median filter, (c) BM3D.

Figure 13 Results of applying noise removal filters to salt-and-pepper noise-added images: (a) Gaussian filter, (b) median filter, (c) BM3D.

Figure 14 Results of applying noise removal filters to uniform noise-added images: (a) Gaussian filter, (b) median filter, (c) BM3D.

Figure 15 Results of applying noise removal filters to Poisson noise-added images: (a) Gaussian filter, (b) median filter, (c) BM3D.

4 Experimental Results

4.1 Experimental Environment

In this paper, we conducted experiments using 1000 original images to restore and recognize watermarks from web video content distorted by rotation and noise addition. The experimental environment is presented in Table 2.

Table 2 Experimental environment

| Item | Specification |
| --- | --- |
| OS | Windows 11 |
| CPU | AMD Ryzen 7 7800X3D |
| GPU | RTX 4070 Ti SUPER 16GB |
| Anaconda | Anaconda v.24.5.0 |
| PyTorch | PyTorch v.3.11.7 |

4.2 Verification and Results

To verify the performance of the watermark restoration and recognition method using the feature points of the modified web image content proposed in this paper, four performance evaluation indicators were used: peak signal-to-noise ratio (PSNR) [25], structural similarity index measure (SSIM) [26], normalized correlation (NC), and bit error rate (BER) [27].

PSNR is an objective metric based on the signal-to-noise ratio, quantifying the absolute pixel-level difference between the watermarked original image and the final recovered image (i.e., after angle restoration and noise removal). It is computed using the mean squared error (MSE) and is defined by (5) [25]:

$$\mathrm{PSNR} = 10 \log_{10}\left(\frac{\mathrm{MAX}^{2}}{\mathrm{MSE}}\right) \tag{5}$$

where $\mathrm{MAX}$ represents the maximum possible pixel value in the image and MSE is the average of the squared intensity differences between the watermarked original image ($x$) and the final recovered image ($y$). Mathematically, MSE is given by (6):

$$\mathrm{MSE} = \frac{1}{N} \sum_{i, j} \left( x(i, j) - y(i, j) \right)^{2} \tag{6}$$

where $x(i, j)$ and $y(i, j)$ denote the pixel values at coordinates $(i, j)$, and $N$ is the total number of pixels. A higher PSNR value indicates that the final recovered image is more similar to the watermarked original image.
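The PSNR and MSE definitions in (5) and (6) translate directly into code. The sketch below assumes 8-bit images, so the maximum pixel value defaults to 255; the function names and test arrays are illustrative.

```python
import numpy as np

def mse(x, y):
    """Mean of the squared pixel-wise differences between two images."""
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    return float(np.mean((x - y) ** 2))

def psnr(x, y, max_value=255.0):
    """PSNR in decibels; infinite when the two images are identical."""
    err = mse(x, y)
    if err == 0.0:
        return float("inf")
    return float(10.0 * np.log10(max_value ** 2 / err))

x = np.zeros((4, 4))
y = np.full((4, 4), 5.0)  # every pixel differs by 5 grey levels
value = psnr(x, y)
```

Because PSNR is logarithmic in the MSE, halving the pixel-wise error raises the score by about 6 dB.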

SSIM is a perceptual image-quality metric designed to assess the structural similarity between two images, here the watermarked original image ($x$) and the final recovered image ($y$), in a manner that aligns more closely with human visual perception. It evaluates the luminance ($l$), contrast ($c$), and structure ($s$) components of the images. The SSIM is defined in (7) [26]:

$$\mathrm{SSIM}(x, y) = [l(x, y)]^{\alpha} \cdot [c(x, y)]^{\beta} \cdot [s(x, y)]^{\gamma} \tag{7}$$

where $\alpha$, $\beta$, and $\gamma$ adjust the relative importance of the three components. To simplify the SSIM formulation, these parameters are set to 1, yielding the commonly used standard SSIM form shown in (8) [26]:

$$\mathrm{SSIM}(x, y) = \frac{(2\mu_{x}\mu_{y} + C_{1})(2\sigma_{xy} + C_{2})}{(\mu_{x}^{2} + \mu_{y}^{2} + C_{1})(\sigma_{x}^{2} + \sigma_{y}^{2} + C_{2})} \tag{8}$$

where $\mu_{x}$ and $\mu_{y}$ denote the mean luminance of $x$ and $y$, respectively; $\sigma_{x}$ and $\sigma_{y}$ represent their standard deviations (i.e., contrasts); $\sigma_{xy}$ is the covariance (i.e., structure); and $C_{1}$ and $C_{2}$ are small constants used to avoid instability [26]. An SSIM value closer to 1 indicates higher similarity between the final recovered image and the watermarked original image.
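The standard SSIM form in (8) can be sketched with global image statistics. This is a simplification: SSIM is usually computed over local windows and then averaged, whereas the sketch below uses a single window covering the whole image. The constants follow the conventional choice $C_1 = (0.01 \cdot \mathrm{MAX})^2$ and $C_2 = (0.03 \cdot \mathrm{MAX})^2$, which is an assumption here rather than a setting confirmed for this study.

```python
import numpy as np

def ssim_global(x, y, max_value=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM computed from whole-image means and variances."""
    x = np.asarray(x, dtype=np.float64)
    y = np.asarray(y, dtype=np.float64)
    c1 = (k1 * max_value) ** 2
    c2 = (k2 * max_value) ** 2
    mu_x, mu_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    cov_xy = np.mean((x - mu_x) * (y - mu_y))
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov_xy + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return float(numerator / denominator)

rng = np.random.default_rng(0)
reference = rng.uniform(0, 255, (32, 32))  # synthetic image
degraded = np.clip(reference + rng.normal(0, 20, reference.shape), 0, 255)

same = ssim_global(reference, reference)
worse = ssim_global(reference, degraded)
```

Identical images yield a score of exactly 1, and added noise lowers the covariance term relative to the variances, which pulls the score below 1.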

NC measures the correlation between the original watermark and the extracted watermark (from the final recovered image), reflecting the similarity in their pixel values. NC is defined by (9) [27]:

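$$\mathrm{NC} = \frac{\sum_{i=1}^{M} \sum_{j=1}^{N} W(i, j)\, W'(i, j)}{\sqrt{\sum_{i=1}^{M} \sum_{j=1}^{N} W(i, j)^{2}}\, \sqrt{\sum_{i=1}^{M} \sum_{j=1}^{N} W'(i, j)^{2}}} \tag{9}$$

where $W$ is the embedded watermark, $W'$ is the watermark extracted from the final recovered image, $M$ and $N$ are the dimensions of the watermark, and $i$ and $j$ are the row and column indices [27]. An NC value closer to 1 indicates higher similarity between the extracted watermark and the original watermark.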
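A short sketch of the NC computation follows. Several normalizations of NC are in use; the sketch assumes the form that divides the cross-correlation by the product of the two watermark norms, which may differ from the exact variant in (9). The helper name and the 2×2 watermarks are illustrative.

```python
import numpy as np

def normalized_correlation(w, w_ext):
    """Cross-correlation of two watermarks divided by the product of their norms."""
    w = np.asarray(w, dtype=np.float64)
    w_ext = np.asarray(w_ext, dtype=np.float64)
    denominator = np.sqrt(np.sum(w ** 2)) * np.sqrt(np.sum(w_ext ** 2))
    if denominator == 0.0:
        raise ValueError("NC is undefined for an all-zero watermark")
    return float(np.sum(w * w_ext) / denominator)

original = np.array([[1, 0], [1, 1]])
extracted = np.array([[1, 0], [0, 1]])  # one bit lost during extraction
nc = normalized_correlation(original, extracted)
```

With this normalization a perfectly recovered watermark scores 1, and each lost or flipped bit reduces the score.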

BER represents the bit error rate, comparing each bit of the original watermark to its corresponding bit in the extracted watermark from the final recovered image, thereby measuring the proportion of bit errors. BER is defined by (10) [27]:

$$\mathrm{BER} = \frac{1}{L} \sum_{i=1}^{L} W(i) \oplus W'(i) \tag{10}$$

where $\oplus$ denotes the XOR operation, $W$ is the embedded watermark, $W'$ is the extracted watermark, $L$ is the total number of bits in the watermark, and $i$ is the bit index. A BER value closer to 0 indicates that the extracted watermark resembles the original watermark more closely.
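The BER definition in (10) reduces to counting mismatched bits, as the following sketch shows. The eight-bit watermarks are illustrative and `bit_error_rate` is a hypothetical helper name.

```python
import numpy as np

def bit_error_rate(w, w_ext):
    """Fraction of bit positions where the two watermarks differ (XOR mean)."""
    a = np.asarray(w).astype(bool).ravel()
    b = np.asarray(w_ext).astype(bool).ravel()
    if a.size != b.size:
        raise ValueError("watermarks must contain the same number of bits")
    return float(np.mean(np.logical_xor(a, b)))

embedded = [1, 0, 1, 1, 0, 0, 1, 0]
extracted = [1, 0, 0, 1, 0, 1, 1, 0]  # bits 3 and 6 are flipped
ber = bit_error_rate(embedded, extracted)
```

Two of the eight bits differ in this example, so the BER is 0.25; a value of 0.5 would correspond to an extraction no better than random guessing.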

To objectively compare the watermark extracted from the final recovered image with the original watermark, no additional post-processing was performed.

Table 3 Restoration performance of denoising filters under various noise addition (pre-rotation)

| Noise type | Gaussian filter PSNR (dB) | Gaussian filter SSIM | Gaussian filter NC | Gaussian filter BER | Median filter PSNR (dB) | Median filter SSIM | Median filter NC | Median filter BER | BM3D PSNR (dB) | BM3D SSIM | BM3D NC | BM3D BER |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Gaussian noise | 27.1 | 0.7464 | 0.6414 | 0.1693 | 25.81 | 0.6937 | 0.7381 | 0.1692 | 29.63 | 0.8597 | 0.92 | 0.0625 |
| Salt-and-pepper noise | 27.31 | 0.8346 | 0.6403 | 0.1693 | 29.01 | 0.8831 | 0.7979 | 0.1674 | 27.22 | 0.8086 | 0.922 | 0.0571 |
| Uniform noise | 27.88 | 0.7896 | 0.6621 | 0.1693 | 25.87 | 0.6899 | 0.7645 | 0.169 | 29.88 | 0.8632 | 0.9223 | 0.0643 |
| Poisson noise | 29.26 | 0.8762 | 0.7028 | 0.1693 | 27.69 | 0.8220 | 0.8028 | 0.1685 | 30.25 | 0.8684 | 0.9278 | 0.0505 |

Table 4 Denoising performance of filters under various noise addition at 0°

| Noise type | AKAZE + Gaussian filter PSNR (dB) | AKAZE + Gaussian filter SSIM | AKAZE + Gaussian filter NC | AKAZE + Gaussian filter BER | AKAZE + median filter PSNR (dB) | AKAZE + median filter SSIM | AKAZE + median filter NC | AKAZE + median filter BER | AKAZE + BM3D PSNR (dB) | AKAZE + BM3D SSIM | AKAZE + BM3D NC | AKAZE + BM3D BER |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Gaussian noise | 26.86 | 0.751 | 0.6592 | 0.1689 | 26.21 | 0.7223 | 0.6365 | 0.1689 | 27.77 | 0.8471 | 0.8996 | 0.1177 |
| Salt-and-pepper noise | 26.51 | 0.8138 | 0.6013 | 0.1689 | 27.81 | 0.8664 | 0.7535 | 0.1689 | 27.34 | 0.8227 | 0.9063 | 0.1046 |
| Uniform noise | 27.53 | 0.7911 | 0.64 | 0.1689 | 26.35 | 0.7349 | 0.6374 | 0.1688 | 28.43 | 0.8455 | 0.9113 | 0.1208 |
| Poisson noise | 28.82 | 0.8662 | 0.7247 | 0.1689 | 27.41 | 0.8229 | 0.7481 | 0.1689 | 28.41 | 0.8462 | 0.9157 | 0.1204 |
| Average | 27.43 | 0.8055 | 0.6563 | 0.1689 | 26.945 | 0.7866 | 0.6939 | 0.1689 | 27.99 | 0.8404 | 0.9082 | 0.1159 |

Table 5 Denoising performance of filters under various noise addition at 90°

| Noise type | AKAZE + Gaussian filter PSNR (dB) | AKAZE + Gaussian filter SSIM | AKAZE + Gaussian filter NC | AKAZE + Gaussian filter BER | AKAZE + median filter PSNR (dB) | AKAZE + median filter SSIM | AKAZE + median filter NC | AKAZE + median filter BER | AKAZE + BM3D PSNR (dB) | AKAZE + BM3D SSIM | AKAZE + BM3D NC | AKAZE + BM3D BER |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Gaussian noise | 26.88 | 0.7551 | 0.6403 | 0.1689 | 26.78 | 0.7271 | 0.7173 | 0.1688 | 27.94 | 0.8348 | 0.9011 | 0.1163 |
| Salt-and-pepper noise | 27.03 | 0.8226 | 0.6141 | 0.1689 | 27.27 | 0.8724 | 0.755 | 0.1689 | 26.93 | 0.815 | 0.9106 | 0.0914 |
| Uniform noise | 27.55 | 0.7897 | 0.6357 | 0.1689 | 26.26 | 0.7295 | 0.6805 | 0.1689 | 28.03 | 0.8387 | 0.8268 | 0.1335 |
| Poisson noise | 28.74 | 0.8636 | 0.7288 | 0.1689 | 27.4 | 0.8226 | 0.8013 | 0.1689 | 28.57 | 0.85 | 0.8896 | 0.1232 |
| Average | 27.55 | 0.8078 | 0.6547 | 0.1689 | 26.93 | 0.7879 | 0.7385 | 0.1689 | 27.87 | 0.8346 | 0.882 | 0.1161 |

Under Gaussian, uniform, and Poisson noise addition, BM3D achieved the highest average PSNR and SSIM among all the filters, indicating the smallest degradation in image quality (Table 5). Furthermore, the NC and BER values indicated that the extracted watermark most closely matched the original watermark under these three noise additions when BM3D was used. However, with salt-and-pepper noise addition, the median filter yielded a higher PSNR and SSIM, suggesting that it may be more effective for this specific type of noise.

Table 6 Denoising performance of filters under various noise addition at 180°

| Noise type | AKAZE + Gaussian filter PSNR (dB) | AKAZE + Gaussian filter SSIM | AKAZE + Gaussian filter NC | AKAZE + Gaussian filter BER | AKAZE + median filter PSNR (dB) | AKAZE + median filter SSIM | AKAZE + median filter NC | AKAZE + median filter BER | AKAZE + BM3D PSNR (dB) | AKAZE + BM3D SSIM | AKAZE + BM3D NC | AKAZE + BM3D BER |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Gaussian noise | 26.85 | 0.7482 | 0.6113 | 0.1689 | 25.88 | 0.7221 | 0.6354 | 0.1688 | 28.13 | 0.8396 | 0.8996 | 0.112 |
| Salt-and-pepper noise | 26.89 | 0.8201 | 0.6313 | 0.1689 | 28.02 | 0.8685 | 0.7859 | 0.1689 | 27.3 | 0.8206 | 0.9119 | 0.0931 |
| Uniform noise | 27.52 | 0.7886 | 0.6286 | 0.1689 | 26.24 | 0.7278 | 0.6948 | 0.1688 | 28.31 | 0.845 | 0.917 | 0.0994 |
| Poisson noise | 28.83 | 0.8639 | 0.7293 | 0.1689 | 27.47 | 0.8244 | 0.7985 | 0.1688 | 28.21 | 0.8462 | 0.9057 | 0.1065 |
| Average | 27.52 | 0.8052 | 0.6501 | 0.1689 | 26.9 | 0.7857 | 0.7287 | 0.1688 | 27.99 | 0.8379 | 0.9086 | 0.1028 |

Table 7 Denoising performance of filters under various noise addition at 270° (−90°)

| Noise type | AKAZE + Gaussian filter PSNR (dB) | AKAZE + Gaussian filter SSIM | AKAZE + Gaussian filter NC | AKAZE + Gaussian filter BER | AKAZE + median filter PSNR (dB) | AKAZE + median filter SSIM | AKAZE + median filter NC | AKAZE + median filter BER | AKAZE + BM3D PSNR (dB) | AKAZE + BM3D SSIM | AKAZE + BM3D NC | AKAZE + BM3D BER |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Gaussian noise | 26.87 | 0.7559 | 0.6395 | 0.1689 | 26.08 | 0.7228 | 0.6634 | 0.1689 | 28.17 | 0.8413 | 0.9114 | 0.1233 |
| Salt-and-pepper noise | 27 | 0.8221 | 0.6082 | 0.1689 | 27.74 | 0.8669 | 0.7288 | 0.1689 | 27.11 | 0.8154 | 0.9095 | 0.0926 |
| Uniform noise | 27.48 | 0.7901 | 0.6145 | 0.1689 | 26.16 | 0.7388 | 0.6834 | 0.1688 | 28.09 | 0.8408 | 0.89 | 0.1405 |
| Poisson noise | 28.27 | 0.8638 | 0.6479 | 0.1689 | 27.56 | 0.8271 | 0.7938 | 0.1689 | 28.5 | 0.8461 | 0.8874 | 0.1238 |
| Average | 27.41 | 0.808 | 0.6275 | 0.1689 | 26.89 | 0.7877 | 0.7174 | 0.1689 | 27.97 | 0.8359 | 0.8996 | 0.1201 |

As shown in Tables 5–7, there was a slight decrease in the PSNR and SSIM for all filters as the rotation angle increased. Although BM3D generally demonstrated the highest average performance, the median filter still exhibited marginally better PSNR and SSIM under salt-and-pepper noise, which is consistent with the findings presented in Table 5.

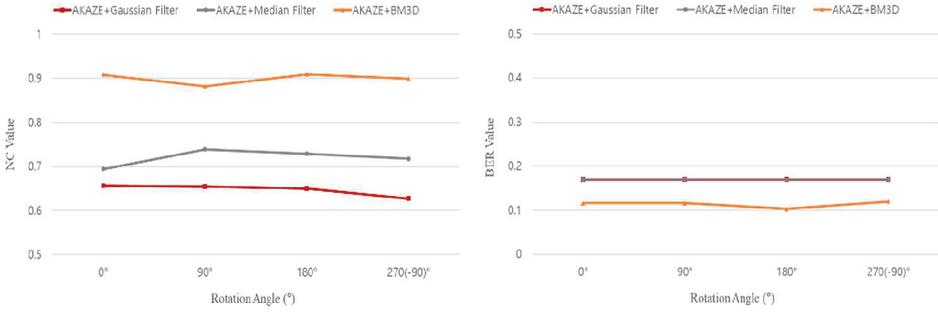

Figure 16 shows the average NC and BER values of the extracted watermark for all noise attacks and rotation angles for each filter.

Figure 16 Comparison of extracted watermark NC and BER values for various filters under all noise additions and rotation angles.

From the NC results, BM3D achieved the highest average value (0.8996) across all the angles. Moreover, the BER results show that BM3D consistently yields the lowest average BER (0.1137), indicating that the watermark extracted using BM3D is most similar to the original watermark.
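The reported overall averages can be reproduced from the per-angle BM3D averages listed in Tables 4–7, as the following arithmetic check shows. The dictionary names are illustrative; the values are copied from the "Average" rows of those tables.

```python
# Per-angle BM3D averages (degrees -> value), taken from Tables 4-7.
bm3d_nc = {0: 0.9082, 90: 0.882, 180: 0.9086, 270: 0.8996}
bm3d_ber = {0: 0.1159, 90: 0.1161, 180: 0.1028, 270: 0.1201}

# Mean over the four rotation angles.
avg_nc = sum(bm3d_nc.values()) / len(bm3d_nc)
avg_ber = sum(bm3d_ber.values()) / len(bm3d_ber)

print(round(avg_nc, 4), round(avg_ber, 4))
```

The means over the four angles round to 0.8996 for NC and 0.1137 for BER, matching the figures quoted above.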

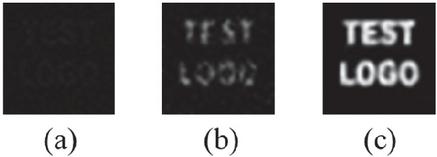

Figure 17 shows representative examples of the extracted watermark for each filter under uniform noise and 90° rotation. Consistent with the quantitative findings, the BM3D-extracted watermark retains clearer lettering.

Figure 17 Representative examples of extracted watermarks under uniform noise and 90° rotation: (a) AKAZE + Gaussian filter, (b) AKAZE + median filter, (c) AKAZE + BM3D.

5 Conclusion

Driven by changes in consumer behavior during the COVID-19 pandemic, the video content platform industry has rapidly evolved into an era of over-the-top (OTT) services and online video streaming platforms, where movies, dramas, and TV programs are delivered over the Internet to various devices. While the growth of streaming services has broadened content accessibility, it has also introduced critical copyright protection issues, with the unauthorized duplication and redistribution of content posing major challenges for online service providers. To address these challenges, this study examined a robust watermark restoration approach designed to withstand composite transformations, specifically noise addition and rotation, which are commonly exploited in illicit content distribution scenarios. Our method integrates the AKAZE algorithm to compensate for rotational distortions and employs Gaussian, median, or BM3D filtering to remove noise. The final recovered image is then compared with the watermarked original image using the peak signal-to-noise ratio (PSNR) and structural similarity index measure (SSIM) to evaluate the visual quality and structural similarity. Additionally, the extracted watermark is assessed via normalized correlation (NC) and bit error rate (BER), providing quantitative measures of the restoration accuracy. The experimental results revealed that BM3D consistently exhibited superior performance under various rotation angles and noise conditions, achieving higher NC values and lower BER than the other tested filters. This outcome underscores the strong resilience of the method against real-world web-based attacks that combine geometric and signal-processing transformations. In future research, we plan to explore AI-based adaptive learning methods that can accommodate new or evolving transformation techniques, thereby further strengthening the security and robustness of watermarking in modern web streaming environments.

Acknowledgment

This research project was supported by the Ministry of Culture, Sports and Tourism R&D Program through the Korea Creative Content Agency grant funded by the Ministry of Culture, Sports and Tourism in 2025 (Project Name: Development of Copyright Technology for OTT Contents Copyright Protection Technology Development and Application, Project Number: RS-1375027563, Contribution Rate: 100%).

References

[1] C. S. Kim. Copyright Infringement Issue Report: Status of YouTube Fast Movie Channels and Copyright Infringement. Korea Copyright Protection Agency, 2023. [Online]. Available: https://www.kcopa.or.kr/download.do?uuid=ade036e0-7fd4-40c1-a73b-9d6b90f34a6a.pdf. [Accessed: Jan. 31, 2025]

[2] KPMG. A New Change in OTT-Driven Video Platform Industry. 2024. [Online]. Available: https://assets.kpmg.com/content/dam/kpmg/kr/pdf/2024/business-focus/kpmg-korea-video-content-platform-20240927.pdf. [Accessed: Jan. 31, 2025]

[3] KISDI. Recent Trends in Domestic Pay-TV Service Market. 2024. [Online]. Available: https://library.kisdi.re.kr/$/10110/contents/4334696?checkinId=2418989&articleId=1494256. [Accessed: Jan. 31, 2025]

[4] Up to 3 billion compensation will be given to internal reporters of ’illegal videos and webtoons. (2023, October 17). Retrieved from https://www.korea.kr/news/policyNewsView.do?newsId=148921433. [Accessed: Jan. 31, 2025]

[5] V. N. Kirti. A review on digital watermarking and its techniques. IJCSMC, vol. 3, no. 6, pp. 686–690, 2014.

[6] K. J. Lim and T. Y. Choi. Performance comparison of frequency-based watermarking methods. J. Inst. Electron. Eng. Korea-CI, vol. 38, no. 5, pp. 65–76, 2001.

[7] H.-Y. Ryu, G.-W. Lee, and B.-D. Gwon. Noise removal from satellite images using wavelet filter. in Proc. Korean Earth Sci. Soc. Conf., pp. 400–407, 2005.

[8] S. Y. Choi, Y. H. Seo, J. S. Yoo, D. K. Kim, and D. W. Kim. Real-time watermarking algorithm using statistical properties of multi-resolution in DWT-based image compression. J. Korean Inst. Inf. Secur. Cryptol., vol. 13, no. 6, pp. 33–43, 2003. doi:10.13089/JKIISC.2003.13.6.33.

[9] A. Ravishankar, S. Anusha, H. K. Akshatha, A. Raj, S. Jahnavi, and J. Madhura. A survey on noise reduction techniques in medical images. in Proc. IEEE Int. Conf. Electron. Commun. Aerosp. Technol. (ICECA), pp. 385–389, 2017.

[10] R. C. Gonzalez and R. E. Woods. Digital Image Processing. Pearson Education, 2008.

[11] A. Buades, B. Coll, and J. M. Morel. A review of image denoising algorithms, with a new one. Multiscale Model. Simul. vol. 4, no. 2, pp. 490–530, 2005.

[12] E. G. Onyedinma and I. E. Onyenwe. Image Restoration: A Comparative Analysis of Image De noising Using Different Spatial Filtering Techniques. arXiv preprint arXiv:2401.09460, 2024.

[13] A. Buades, B. Coll, and J. M. Morel. A review of image denoising algorithms, with a new one. Multiscale Model. Simul., vol. 4, no. 2, pp. 490–530, 2005. doi:10.1137/040616024.

[14] R. S. Selvi, B. A. Varshini, and S. Deekshiga. A comparative analysis of image denoising filters for salt and pepper noise. in Proc. 2023 Int. Conf. Smart Struct. Syst. (ICSSS), Chennai, India, pp. 1–10, 2023. doi:10.1109/ICSSS58085.2023.10407368.

[15] K. Dabov, A. Foi, V. Katkovnik, and K. Egiazarian. Image denoising by sparse 3-D transform-domain collaborative filtering. IEEE Trans. Image Process., vol. 16, no. 8, pp. 2080–2095, Aug. 2007. doi:10.1109/TIP.2007.901238.

[16] A. Buades, B. Coll, and J.-M. Morel. A non-local algorithm for image denoising. in Proc. 2005 IEEE Comput. Soc. Conf. Comput. Vis. Pattern Recognit. (CVPR’05), vol. 2, San Diego, CA, USA, pp. 60–65, 2005. doi:10.1109/CVPR.2005.38.

[17] M. Hasan and M. R. El-Sakka. Improved BM3D image denoising using SSIM-optimized Wiener filter. EURASIP J. Image Video Process., vol. 2018, no. 25, pp. 1–12, 2018. doi:10.1186/s13640-018-0264-z.

[18] R. C. Gonzalez, R. E. Woods. Digital Image Processing, 4th ed., Pearson, 2018.

[19] A. Dixit and P. Sharma. A comparative study of wavelet thresholding for image denoising. Int. J. Image Graph. Signal Process., vol. 12, pp. 39–46, 2014. doi:10.5815/ijigsp.2014.12.06.

[20] M. U. Danish. A comparative study of image denoising algorithms. arXiv preprint, arXiv:2412.05490, 2024.

[21] P. F. Alcantarilla and T. Solutions. Fast explicit diffusion for accelerated features in nonlinear scale spaces. IEEE Trans. Pattern Anal. Mach. Intell., vol. 34, no. 7, pp. 1281–1298, 2011. doi:10.5244/C.27.13.

[22] P. F. Alcantarilla, A. Bartoli, and A. J. Davison. KAZE features. in Proc. Eur. Conf. Comput. Vis. (ECCV), pp. 214–227, 2012. doi:10.1007/978-3-642-33783-3_16.

[23] D. G. Lowe. Distinctive image features from scale-invariant keypoints. Int. J. Comput. Vis., vol. 60, no. 2, pp. 91–110, 2004. doi:10.1023/B:VISI.0000029664.99615.94.

[24] J. E. Lee and Y. T. Shin. Performance evaluation of feature point extraction algorithms for watermark restoration at various angles. J. Korea Softw. Asset Valuation, vol. 20, no. 4, pp. 91–101, 2024, doi:10.29056/jsav.2024.12.10.

[25] O. O. Khalifa, Y. binti Yusof, and R. F. Olanrewaju. Performance evaluations of digital watermarking systems. in Proc. 2012 8th Int. Conf. Inf. Sci. Digit. Content Technol. (ICIDT2012), vol. 3, pp. 533–536, 2012.

[26] Z. Wang, A. C. Bovik, H. R. Sheikh, and E. P. Simoncelli. Image quality assessment: From error visibility to structural similarity. IEEE Trans. Image Process., vol. 13, no. 4, pp. 600–612, 2004. doi:10.1109/TIP.2003.819861.

[27] O. Evsutin and K. Dzhanashia. Watermarking schemes for digital images: Robustness overview. Signal Process. Image Commun., vol. 100, p. 116523, 2022. doi:10.1016/j.image.2021.116523.

Biographies

Jieun Lee received her B.Sc. in Aviation Information and Communication Engineering from Kyungwoon University, Republic of Korea, in 2023. Since 2023, she has been enrolled in the integrated M.Sc.-Ph.D. program in the Department of Computer Science and Engineering at Soongsil University, Republic of Korea. Her research interests include computer networks, artificial intelligence, cloud computing, and information security.

Byeongchan Park received his Bachelor’s degree in 2015, and his Master’s degree and doctorate in computer engineering from Soongsil University in 2018 and 2023, respectively. His research interests include copyright protection and the promotion of copyrighted content utilization.

Uijin Jang received her Ph.D. in Computer Science and Engineering from Soongsil University, Republic of Korea, in 2010. Since 2018, she has been working at the Spartan SW Education Center at Soongsil University, Republic of Korea. Her research interests include networks, digital forensics, and DRM.

Yongtae Shin received his Ph.D. in Computer Science from the University of Iowa in 1994. Since 1995, he has been serving as a Professor in the School of Computer Science and Engineering at Soongsil University, Republic of Korea. His research interests include computer networks, distributed computing, Internet protocols, and e-commerce technology.

Journal of Web Engineering, Vol. 24, No. 4, pp. 473–498.

doi: 10.13052/jwe1540-9589.2441

© 2025 River Publishers