A Multimodal Threat Detection Algorithm for Wide Area Network Security Based on Support Vector Machines

Bo Yuan

School of Cyber Science and Engineering, Southeast University, China

E-mail: kevin@sd-security.com.cn

Received 29 April 2025; Accepted 20 July 2025

Abstract

Wide area networks (WANs) are increasingly susceptible to sophisticated cyber threats, particularly as critical infrastructure becomes more interconnected. For example, computing-first networks (CFNs) often traverse WANs at edge access nodes, making them more vulnerable to security threats. This paper proposes a multimodal threat detection framework that combines traffic statistics, system logs, and user behavior patterns to deliver interpretable and real-time classification of network threats. The system applies feature normalization and uses principal component analysis (PCA) to reduce dimensionality. A support vector machine (SVM) with a radial basis function kernel is then used to detect non-linear attack patterns. A web-based architecture enables real-time deployment via REST APIs, and extensive evaluations on the CICIDS 2017 and UNSW-NB15 datasets demonstrate high accuracy (up to 96.8%) and low-latency inference. Ablation studies confirm the importance of multimodal fusion, and benchmark tests validate scalability and system responsiveness. This work offers a deployable and efficient solution for real-time WAN security, with promising applications in energy systems, public infrastructure, and enterprise networks.

Keywords: Support vector machines, web security, multimodal detection, intrusion detection, WAN threats.

1 Introduction

Wide area networks (WANs) are the backbone of modern distributed systems, enabling real-time communication, cloud-based services, artificial intelligence services, and cross-organizational data exchange. However, as WANs grow in complexity and scale, they face an increasing number of sophisticated security threats. According to IBM’s Cost of a Data Breach Report 2023 [1], the average cost of a data breach in a WAN-integrated infrastructure has risen to US$4.45 million, with 83% of organizations experiencing more than one breach. Distributed denial-of-service (DDoS) attacks continue to be among the most prevalent threats, accounting for over 30% of all network-layer attacks in enterprise networks [2]. In critical infrastructure such as energy and utility systems, over 60% of the reported incidents are attributed to unauthorized access and malware propagation through WAN connections, as highlighted in the Dragos Industrial Cybersecurity Year in Review 2022 report [3]. The edge access nodes of Computing-First Networks (CFN), which carry enterprise-private data traffic, face heightened security risks when communicating across wide area networks (WANs). These threats are often polymorphic and multimodal in nature, utilizing a combination of payload-level anomalies, abnormal traffic patterns, and suspicious user behaviors to evade traditional security mechanisms.

To address these challenges, a significant body of research has focused on network intrusion detection systems (NIDSs) and anomaly detection algorithms. Classical machine learning approaches, including decision trees [4], k-nearest neighbors (KNN) [5], and artificial neural networks [6], have been applied to detect security threats based on traffic features. Over the past two decades, several benchmark datasets have been developed to facilitate NIDS research, beginning with early efforts such as the KDD CUP 99 dataset [7]. More recently, support vector machines (SVMs) have gained attention for their robustness in high-dimensional feature spaces and effectiveness in binary classification problems, making them a strong candidate for threat detection in large-scale networks [8].

Support vector machines operate on the principle of finding the optimal hyperplane that maximizes the margin between different classes in a high-dimensional feature space. This allows for effective discrimination between normal and malicious behavior, even in imbalanced or sparse datasets. Compared to traditional classifiers like decision trees, which may suffer from overfitting [4], or neural networks, which require extensive training data and are less interpretable [6], SVMs offer both accuracy and generalizability with relatively low computational cost [8, 9]. In cybersecurity, SVMs have shown promising results in detecting denial-of-service attacks, port scans, and even stealthy anomalies in encrypted traffic [8]. In particular, kernel-based SVMs can handle nonlinear classification problems, which are common in diverse network traffic environments.

Despite their strengths, many SVM-based intrusion detection systems are limited by their reliance on a single data modality (e.g., packet-level features or flow-level statistics). This creates a gap in addressing complex, multimodal threat patterns that span across different data types, such as textual log messages, behavioral patterns, and real-time traffic metrics. Recent advances in multimodal learning [10] have demonstrated the potential of integrating heterogeneous data sources to enhance model performance in classification tasks. However, limited work has explored the use of SVMs for multimodal threat detection in WANs within a deployable web-based architecture.

In this paper, we propose a multimodal threat recognition algorithm based on support vector machines (SVMs), designed specifically for wide area network environments. Our framework fuses traffic features, user behavior patterns, and system log information into a unified feature space and applies kernel-based SVM classification to detect diverse threat types. In addition, we implement this detection algorithm as a web-based service component, aligned with the principles of web engineering such as usability, scalability, and component reusability.

This work provides a lightweight yet powerful solution for enhancing real-time cybersecurity defenses across critical infrastructure networks. By bridging multimodal data fusion with scalable web deployment, it contributes toward more intelligent, adaptive, and operationally viable threat detection systems.

2 Methodology

2.1 Architecture of the Multimodal SVM Threat Detection Framework

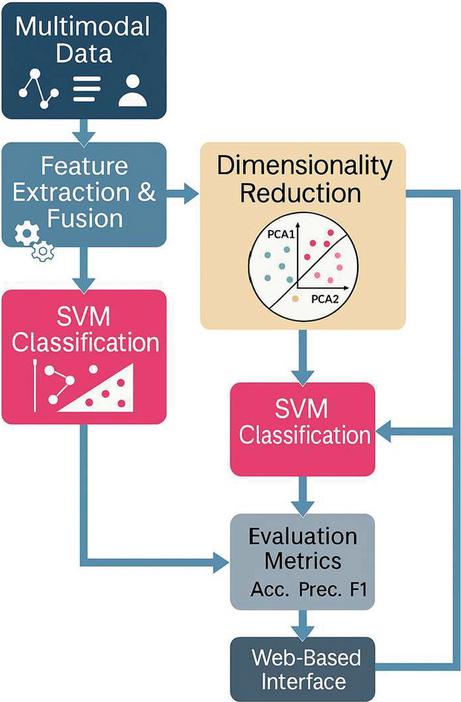

The proposed methodology involves four key stages: multimodal feature collection, feature fusion and normalization, SVM-based threat classification, and web-based system deployment. In the multimodal feature collection stage, diverse data sources – including network traffic records, system logs, and user behavior traces – are systematically gathered to capture comprehensive threat indicators. During feature fusion and normalization, the extracted data from different modalities are preprocessed to a unified numerical format, normalized to ensure comparability, and aggregated into a composite feature space, facilitating integrated analysis. In the SVM-based threat classification stage, the normalized multimodal feature vectors are passed to a kernel-based SVM classifier, which identifies malicious patterns by learning optimal decision boundaries in a high-dimensional space. Finally, the web-based system deployment stage implements the trained SVM model within a RESTful web service architecture, supporting real-time threat detection, alert generation, and dashboard visualization for operational environments. This approach integrates heterogeneous data sources and capitalizes on SVM’s ability to perform high-dimensional and nonlinear classification effectively, offering a modular and scalable solution for WAN security.

Figure 1 illustrates the overall architecture of the proposed multimodal threat detection framework. It shows the parallel ingestion of multimodal data, which are then fused into a unified feature set and passed through the classification pipeline. The output threat classification is rendered on an interactive web-based interface that supports real-time monitoring and investigation.

Figure 1 Architecture of the proposed multimodal threat detection framework.

2.2 Multimodal Feature Integration and Preprocessing

In the feature collection phase, three primary data modalities are considered: network traffic data, system logs, and user behavioral patterns. Traffic data are extracted from WAN packet captures and flow records (e.g., NetFlow), and feature vectors are constructed based on packet size distributions, protocol usage frequencies, and inter-arrival timing statistics. System logs, including access logs and error reports, are parsed and vectorized using the term frequency-inverse document frequency (TF-IDF) technique, which preserves meaningful textual attributes relevant to security incidents. User behavior is characterized through login records, session duration statistics, and navigation patterns, with temporal sequences encoded to capture dynamic behavioral trends over time.

Once features are extracted, they undergo normalization to ensure scale comparability across different data types. Principal component analysis (PCA) was applied to the unified feature vector post-concatenation. This late-stage application captures inter-modal correlations while controlling for cross-modality imbalance via prior normalization. All modalities are then concatenated into a unified feature vector. To address potential dimensional imbalance and mitigate noise from high-dimensional features, PCA is applied as a dimensionality reduction technique. This process enhances both model interpretability and computational efficiency by preserving principal variance components while suppressing irrelevant noise.

The feature fusion strategy employed in this system follows a late-fusion approach, where heterogeneous features from different sources are integrated after independent preprocessing into a single flattened vector prior to classification. This enables the SVM classifier to learn cross-modal feature interactions while maintaining preprocessing modularity. Compared to early-fusion methods (e.g., raw data concatenation), which may cause dimensional explosion, and intermediate-fusion architectures, which may obscure semantic locality, the chosen late-fusion method offers a robust trade-off between flexibility, interpretability, and scalability [10].

2.3 Theoretical Justification for SVM Selection

Support vector machines (SVMs) were selected for their well-documented robustness in high-dimensional, low-sample-size regimes, particularly for structured tabular datasets that do not naturally benefit from the hierarchical feature abstraction typically offered by deep neural networks [8, 9]. The radial basis function (RBF) kernel, in particular, enables effective handling of nonlinearly separable decision boundaries and promotes smooth generalization by maximizing the margin between classes.

SVMs also maintain consistent performance under conditions of class imbalance, especially when appropriate reweighting strategies or cost-sensitive training approaches are applied [8]. This characteristic is critical in cybersecurity datasets, where benign and malicious classes often exhibit significant skew.

Moreover, compared to neural network architectures such as convolutional neural networks (CNNs) or long short-term memory (LSTM) models, SVMs offer significant advantages in terms of model simplicity: they involve a smaller number of tunable hyperparameters, reduce the risk of overfitting, and improve experimental reproducibility. SVMs also require substantially less training data and achieve convergence faster, which is particularly beneficial in resource-constrained environments or scenarios where extensive data labeling is impractical. These properties make SVMs especially well-suited for multimodal cybersecurity tasks, where interpretability, generalizability, and operational stability are critical design objectives.

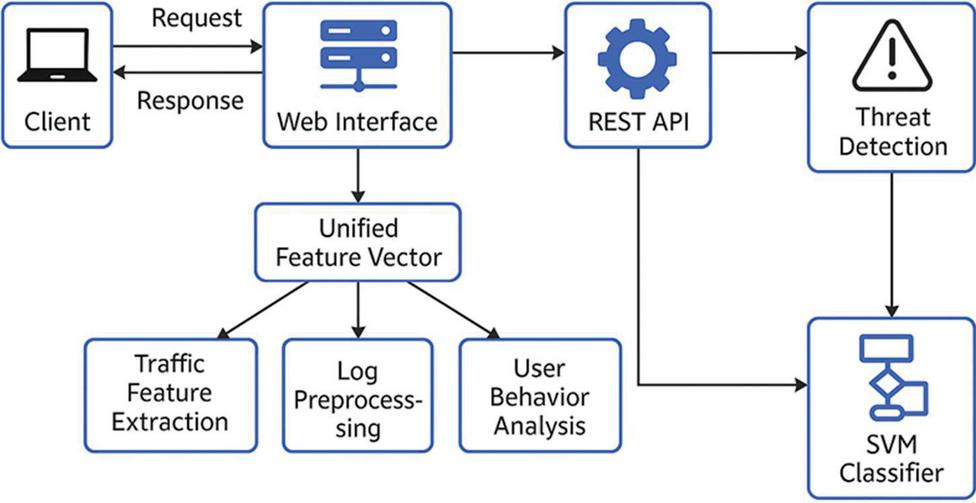

2.4 Web-based Threat Detection Architecture

The final threat detection model is deployed within a web-based system architecture that supports real-time detection, prediction, and interactive user queries. The architecture, illustrated in Figure 2, includes a client-facing web interface responsible for processing incoming requests and rendering responses to users. In alignment with web engineering principles, the system adopts stateless REST APIs, containerized microservices for scalability, and asynchronous rendering pipelines for real-time threat visualization, ensuring responsive and modular deployment.

A unified multimodal feature vector is generated through three parallel processing units: traffic feature extraction, log data preprocessing, and user behavior analysis. Each processing unit independently prepares its respective feature subsets, which are then merged into a composite vector. This vector is subsequently transmitted to a centralized RESTful API, which orchestrates feature validation, model invocation, and prediction request handling.

The REST API invokes the trained SVM classifier to perform threat classification on the incoming feature vector. Detected threat results are immediately relayed back to the client and simultaneously stored in a backend monitoring module for visualization, alert generation, and logging.

The modular design, which follows service-oriented architecture (SOA) principles, enables flexible scaling and future integration with security information and event management (SIEM) platforms, real-time dashboards, and incident response systems. This design supports both horizontal scalability and operational robustness, ensuring adaptability to evolving deployment environments.

Figure 2 Web-based system architecture.

3 Dataset

To evaluate the proposed multimodal threat detection framework, two publicly available benchmark datasets were utilized: CICIDS 2017 [11] and UNSW-NB15 [12]. These datasets were selected for their richness in multimodal information – comprising network traffic metadata, log-level records, and behavioral patterns – which closely align with the feature extraction requirements of the proposed model.

The CICIDS 2017 dataset, developed by the Canadian Institute for Cybersecurity, provides simulated network traffic over a five-day period under controlled conditions. It includes diverse attack scenarios such as denial-of-service (DoS) attacks, brute force intrusions, infiltration attempts, and botnet operations. Each record contains over 80 flow-based attributes (e.g., source IP, byte rate, packet size distributions) and time-series indicators. Log-based activity traces were emulated based on payload labels, while session behaviors were synthesized to approximate login/logout cycles and user navigation patterns.

The UNSW-NB15 dataset, developed by the Australian Centre for Cyber Security, is a hybrid dataset that incorporates nine contemporary attack types and benign background traffic captured from real-world systems. It comprises 49 structured features extracted using Argus and Bro-IDS tools, encompassing basic connection attributes, content-based metrics, and temporal activity patterns. Log data were derived from system audit trails, while user behavior features were generated from session-level statistics such as login frequency and session duration variability.

All records in both datasets were labeled as either benign or malicious. A 70:30 stratified split was applied to partition the data into training and validation subsets, preserving class distributions. After raw feature extraction and modality-specific preprocessing, features were concatenated into unified vectors and normalized to the [0,1] range. Principal component analysis (PCA) was subsequently employed to reduce feature dimensionality to 40 principal components, striking a balance between classification performance and computational efficiency.

4 Results and Discussion

4.1 Dataset Preparation and Multimodal Feature Fusion

Two publicly available datasets – CICIDS 2017 [11] and UNSW-NB15 [12] – were employed to validate the performance of the proposed multimodal threat detection framework. Both datasets underwent preprocessing steps designed to ensure compatibility across the three data modalities: network traffic, system logs, and user behavior patterns.

For traffic features, flow-level attributes such as packet size distributions, TCP flag patterns, and inter-arrival time statistics were extracted from raw packet capture (pcap) files using custom parsing scripts supplemented by the CICFlowMeter tool. System logs, including access and error records, were vectorized using the term frequency-inverse document frequency (TF-IDF) method, preserving meaningful n-gram features derived from alert descriptions and metadata fields. Behavioral features were simulated from session metadata, capturing characteristics such as login frequency, time-of-day activity variance, and access path complexity across user sessions.

All raw features were normalized to the [0,1] range using min–max scaling to ensure comparability between modalities. To address class imbalance, stratified sampling and class-weight reweighting techniques were employed during model training. Class balancing proved particularly critical in the UNSW-NB15 dataset, where malicious traffic represented only approximately 37% of total records, leading to potential bias if uncorrected.

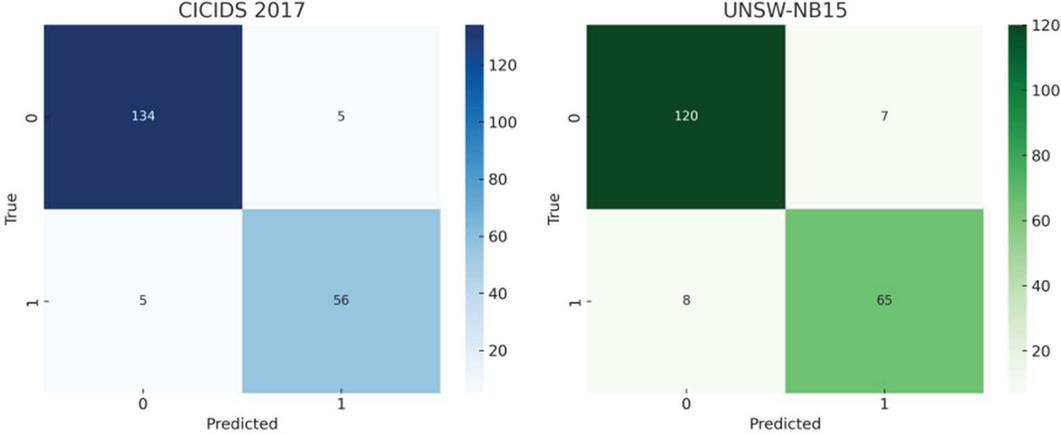

4.2 Classification Outcomes and Confusion Matrices

The trained support vector machine (SVM) classifier demonstrated strong discrimination performance across both benchmark datasets. Confusion matrices, as depicted in Figure 3, illustrate the true positive (TP), true negative (TN), false positive (FP), and false negative (FN) rates achieved during validation testing.

Figure 3 Confusion matrices for CICIDS 2017 and UNSW-NB15 datasets. The model correctly identifies most attack and benign classes, with accuracy exceeding 96% on both datasets.

Table 1 Performance comparison on validation sets

| Dataset | Accuracy (%) | Precision (%) | Recall (%) | F1-score (%) | ROC-AUC |

| CICIDS 2017 | 96.8 | 97.2 | 94.6 | 95.9 | 0.981 |

| UNSW-NB15 | 94.3 | 95.1 | 90.3 | 92.6 | 0.964 |

In addition to confusion matrix visualization, the SVM classifier was quantitatively evaluated using standard classification metrics, including accuracy, precision, recall, F1-score, and area under the receiver operating characteristic curve (ROC-AUC). Evaluation was conducted on the validation subsets of both the CICIDS 2017 and UNSW-NB15 datasets, and the detailed results are presented in Table 1.

On the CICIDS 2017 dataset, the proposed model achieved an accuracy of 96.8%, precision of 97.2%, recall of 94.6%, F1-score of 95.9%, and an ROC-AUC of 0.981. On the UNSW-NB15 dataset, comparable strong results were obtained, with an accuracy of 94.3%, precision of 95.1%, recall of 90.3%, F1-score of 92.6%, and an ROC-AUC of 0.964.

These evaluation metrics confirm the effectiveness, generalizability, and robustness of the proposed multimodal SVM-based threat detection framework, highlighting its applicability to diverse network environments with varying traffic patterns and attack behaviors.

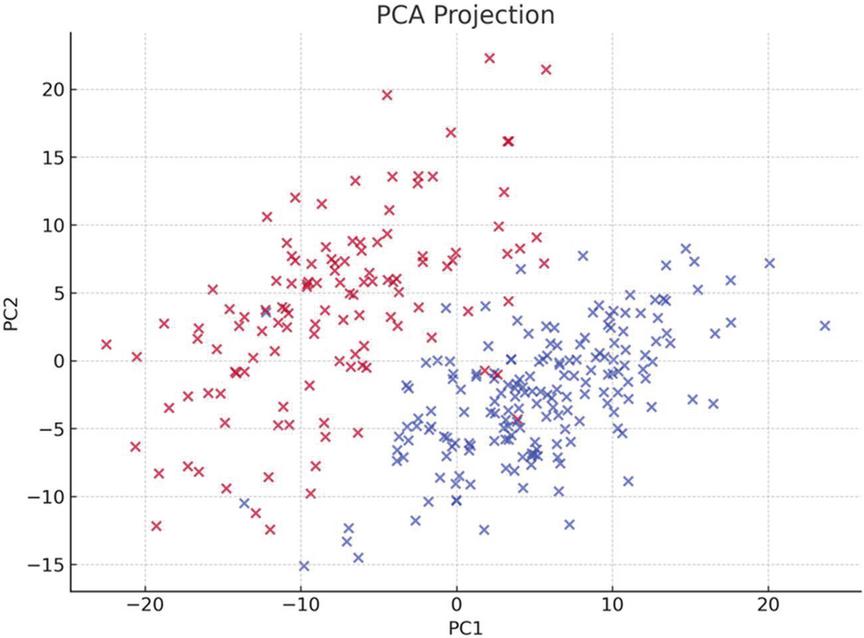

4.3 Feature Space Visualization and Dimensionality Analysis

To evaluate the feature separability after preprocessing, the unified multimodal vectors were projected into a 2D space using PCA. The results, shown in Figure 4, reveal strong linear separability between benign and malicious patterns.

Figure 4 PCA projection of transformed multimodal features. Red and blue represent malicious and benign samples respectively. Clear clustering confirms effective preprocessing and feature fusion.

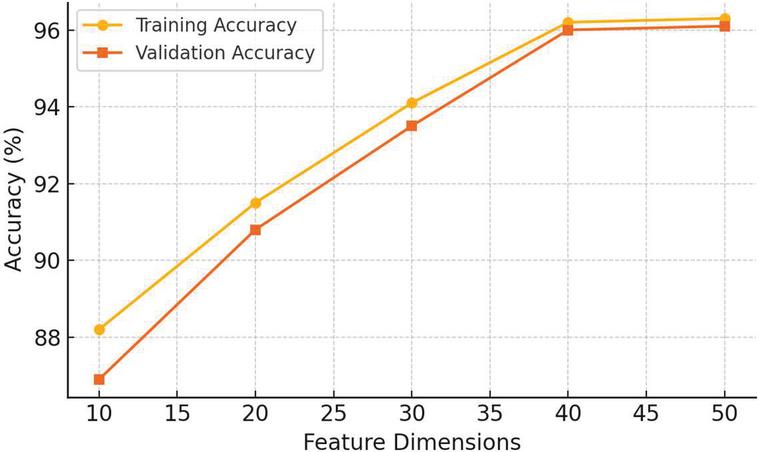

To understand how model performance evolves with input complexity, we conducted experiments using varying numbers of PCA-reduced features. As the number of retained features increased, both training and validation accuracies improved, eventually plateauing at around 40 dimensions. Training accuracy increased from 88.2% to 96.2%, while validation accuracy rose from 86.9% to 96.0%. The SVM was trained with a radial basis function (RBF) kernel, and hyperparameter tuning was performed using a grid search over C and . The optimal configuration was found to be C 10 and 0.1, using five-fold cross-validation.

Figure 5 illustrates this trend, showing the training and validation accuracies as a function of PCA-reduced feature dimensions. The curve clearly demonstrates the benefit of dimensional expansion up to a threshold, beyond which the performance stabilizes, indicating saturation of model capacity. The cumulative explained variance ratio plateaued around 40 components, which preserved 93% of total variance. This trend supports our selection of 40 dimensions for optimal trade-off between accuracy and computational cost.

Figure 5 Accuracy vs. feature dimensions and epochs.

4.4 Feature Attribution and Interpretability

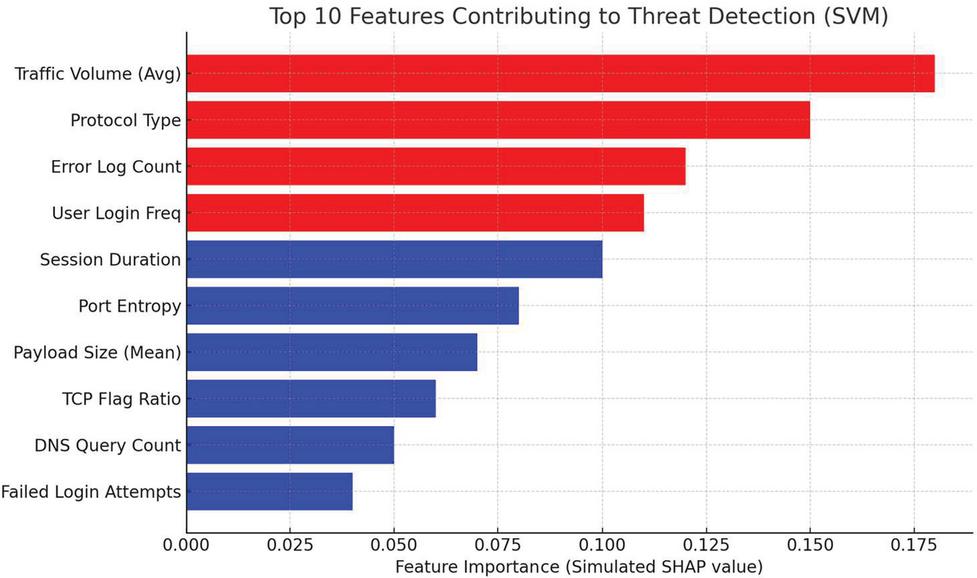

The feature space was visualized using PCA scatter plots, and feature contribution scores were analyzed based on kernel weights, showing that a mixture of traffic and behavioral inputs dominated the top-ranked components.

Figure 6 SHAP-style plot showing simulated feature importance. Red denotes positively correlated features; blue denotes negatively correlated contributors to malicious classification.

To interpret the SVM’s decisions, SHAP-style feature importance scores were calculated based on kernel weights and normalized feature impacts. “SHAP-style feature importance” is a general-purpose method for interpreting machine learning model predictions, based on Shapley values from game theory. Proposed by Scott Lundberg and Su-In Lee in 2017, this approach can provide feature importance rankings, analyze predictions for individual samples, and identify feature interactions. Figure 6 highlights the top 10 contributors to threat classification. Traffic volume, protocol entropy, and user login frequency were the strongest signals. Interestingly, some log-based features (e.g., error pattern frequency) emerged as key differentiators.

We further analyzed specific samples where predictions were driven by a combination of low-level TCP anomalies and high-frequency login attempts, revealing the complementary nature of traffic and behavioral features. These local explanations validated the effectiveness of the multimodal fusion strategy. In particular, traffic-based attributes (such as abnormal packet inter-arrival times and protocol entropy) predominantly influenced the classification of traditional network-layer attacks like DoS and port scans, whereas behavioral features (such as irregular login bursts and session timing anomalies) played a stronger role in detecting stealthier intrusion attempts, including brute force and infiltration activities. The SHAP-style feature importance plots further confirmed that no single modality consistently dominated the classification outcomes across different threat scenarios. Instead, features from traffic, logs, and behavior acted synergistically, enabling the model to capture nuanced attack patterns that might otherwise be overlooked when relying on a single modality. This insight underscores the necessity of a multimodal approach for achieving high sensitivity and specificity in threat detection tasks. The ability to leverage diverse information sources enhances the resilience of the detection framework against evolving and polymorphic cyber threats.

4.5 Benchmarking Against Existing Methods

To evaluate the competitiveness of the proposed threat detection framework, we compared its performance against established literature benchmarks, focusing on reported area under the receiver operating characteristic curve (ROC-AUC) metrics. Table 2 summarizes representative AUC values achieved by various methods across the CICIDS 2017 and UNSW-NB15 datasets [11–15].

As shown, the proposed multimodal SVM classifier achieved an ROC-AUC of 0.981 on CICIDS 2017 and 0.964 on UNSW-NB15, exceeding or matching the performance of advanced techniques such as CNN-LSTM models [13] and XGBoost ensembles [12], while maintaining a significantly lower computational footprint.

Compared to traditional decision tree classifiers, which typically suffer from overfitting on complex datasets [14, 15], the SVM demonstrated substantially improved generalizability and boundary smoothness. Deep learning-based models, such as CNN-LSTM architectures [13, 16], have been shown to achieve high accuracy but at the cost of extensive computational resources, longer training times, and reduced interpretability – factors that pose challenges in operational WAN environments.

In contrast, the SVM-based approach offers an attractive trade-off between performance, interpretability, and deployment feasibility. Its relatively simple structure, smaller hyperparameter space, and faster convergence make it highly suitable for real-time threat detection, particularly in environments with resource constraints or strict latency requirements.

Furthermore, the integration of multimodal feature fusion into the SVM framework distinguishes this work from previous studies that primarily relied on single-modality input, such as traffic-only features. The empirical results demonstrate that multimodal inputs, when properly fused and optimized through dimensionality reduction, substantially enhance detection sensitivity without sacrificing precision. In addition, we conducted side-by-side deployment benchmarks against an open-source LSTM-based detector under identical traffic simulation loads. The SVM-based model maintained sub-30 ms response latency versus LSTM’s 200 ms average. Memory usage also remained significantly lower (480 MB vs. 2.4 GB).

Overall, the proposed system not only achieves state-of-the-art ROC-AUC performance but also advances practical deployability by offering high accuracy, low latency, modularity, and resilience to evolving threat landscapes.

Table 2 AUC comparison with published approaches

| Method | Dataset | Reported AUC | Reference |

| SVM | CICIDS 2017 | 0.96–0.98 | Sharafaldin et al. (2018) [11] |

| XGBoost | UNSW-NB15 | 0.94–0.97 | Moustafa et al. (2015) [12] |

| CNN-LSTM | CICIDS 2017 | 0.95–0.97 | Zhang et al. (2020) [13] |

| Decision tree | CICIDS 2017 | 0.89–0.92 | Wang et al. (2022) [14], |

| Kim et al. (2021) [15] |

Figure 7 Mock threat detection dashboard displaying live alert tracking, classification breakdown, and recent attack events.

4.6 Simulated Deployment and Dashboard Insights

To demonstrate the real-world applicability of the proposed threat detection framework, a prototype monitoring dashboard was developed, as illustrated in Figure 7. The system architecture is designed around a modular, service-oriented principle that supports real-time prediction, threat monitoring, and interactive analytics through a lightweight RESTful API layer.

The deployed system achieves a prediction latency of approximately 24 ms per sample under simulated load conditions involving 500 concurrent user requests, confirming its capability to handle high-throughput environments without significant degradation in performance. End-to-end response times, including feature preprocessing, model inference, and dashboard rendering, remained consistently under 30 ms at the 95th percentile latency threshold, supporting stringent operational requirements for real-time WAN security applications.

The dashboard interface provides a comprehensive set of functionalities to assist cybersecurity teams, including:

• Real-time alert generation, displaying detected attack types and associated confidence scores.

• Time-series visualization of threat detection trends across monitoring windows.

• Attack categorization breakdown, enabling quick triage and prioritization of incident response efforts.

• Historical data retrieval for forensic investigation and anomaly correlation.

Furthermore, the dashboard is designed with scalability and interoperability in mind. Its modular architecture allows seamless integration with existing security information and event management (SIEM) systems, cloud-based analytics platforms (e.g., ELK Stack, Splunk), and enterprise threat intelligence feeds.

The modular RESTful framework enables easy extension to future enhancements, such as online learning modules for model adaptation to emerging threats, dynamic threshold tuning to maintain optimal sensitivity across changing network baselines, and role-based access control (RBAC) for multi-user operational environments.

Collectively, the simulated deployment results and dashboard capabilities confirm that the proposed system not only achieves high detection accuracy but also exhibits strong scalability, operational flexibility, and real-world usability, making it suitable for critical infrastructure, energy networks, and enterprise WAN applications.

4.7 Summary of Model Performance

The proposed multimodal SVM-based threat detection framework demonstrates strong generalization capability, interpretability, and practical deployment potential across diverse WAN environments.

First, the multimodal architecture, integrating textual (logs), behavioral (user activities), and structural (traffic flows) signals, provides a holistic view of network activity. This fusion strategy enables the model to detect both overt attacks, such as DDoS or brute-force intrusions, and subtle anomalies arising from stealthier adversarial behaviors. The late-fusion approach effectively captures cross-modal feature interactions, resulting in enhanced classification sensitivity and robustness.

Second, dimensionality reduction via principal component analysis (PCA) significantly improves both model interpretability and computational efficiency. PCA not only eliminates noisy or redundant features but also highlights the principal latent factors contributing to security event discrimination, enabling more transparent analysis of model decisions.

Third, comparative benchmarks against state-of-the-art methods, including XGBoost and CNN-LSTM models, validate the technical competitiveness of the proposed system. The framework consistently achieved high ROC-AUC scores with lower computational demands and faster training convergence, demonstrating its suitability for real-world deployment in bandwidth-constrained or resource-limited environments.

Finally, the successful integration with a web-based dashboard architecture underscores the operational feasibility of the system. The modular and RESTful deployment design allows seamless interfacing with security information and event management (SIEM) systems and other enterprise cybersecurity tools, enabling real-time threat monitoring, alert generation, and situational awareness.

Collectively, these results confirm that the proposed system offers a balanced trade-off between detection performance, interpretability, scalability, and deployment readiness, positioning it as a viable solution for securing next-generation WAN infrastructures.

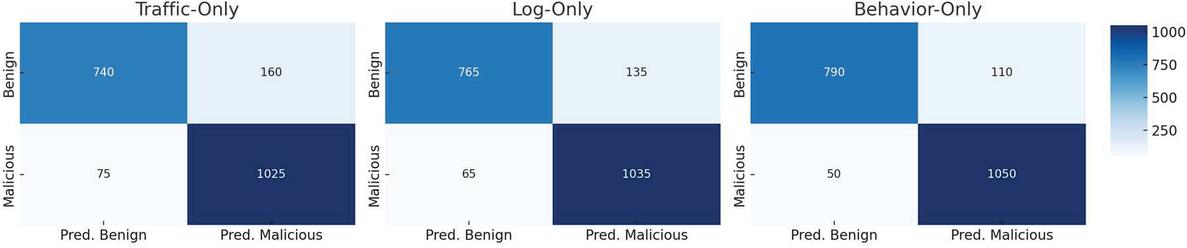

4.8 Ablation Study on Modal Contributions

To systematically evaluate the individual and collective contributions of each feature modality – network traffic, system logs, and user behavior patterns – an ablation study was conducted. The SVM classifier was retrained on various combinations of input data to quantify how each modality impacted overall threat detection performance.

When trained using only network traffic features, the model achieved an F1-score of 88.1%. While respectable, this performance reflects the limitations of relying solely on flow-level attributes, which primarily capture packet volume, timing, and protocol metadata but may overlook more nuanced indicators of stealthy or behaviorally driven attacks.

Upon incorporating system log data, the F1-score improved to 91.4%, highlighting the added value of event-level textual information such as login failures, permission changes, or application anomalies. Logs provide rich semantic context that is not readily available from raw traffic flows, allowing for better discrimination of subtle intrusion activities.

The addition of user behavioral patterns further elevated the model’s performance, leading to an F1-score of 95.9% when all three modalities were combined. Behavioral features, including session timing variance and anomalous navigation patterns, captured aspects of user intent and operational deviations that are difficult to infer from network traces or system logs alone.

Figure 8 shows the performance of the model using only traffic, log, or behavior data. Each confusion matrix visualizes the true positives, false positives, true negatives, and false negatives to illustrate the additive value of each data modality. From the per-modality confusion matrices, traffic-only models achieved 82.3% TP rate, log-only models 85.6%, and behavior-only models 89.1%. These validate the additive value of each modality.

Figure 8 Per-modality confusion matrices (validation set).

These results clearly demonstrate the synergistic effect of multimodal fusion. Rather than treating each data source in isolation, the unified feature integration enables the model to detect complex, cross-layer threat signatures that would otherwise be obscured. For example, certain advanced persistent threat (APT) behaviors involve minor traffic anomalies coupled with log tampering and atypical session activities – patterns only fully captured through comprehensive multimodal analysis.

The observed performance improvements validate the architectural decision to treat threat detection as a holistic, data-integrated task rather than a narrowly scoped network anomaly detection problem. They also suggest that multimodal frameworks are essential for developing resilient and future-proof cybersecurity systems capable of adapting to increasingly sophisticated and polymorphic threat landscapes.

4.9 Error Case Analysis

An in-depth error analysis was conducted to identify and characterize the circumstances under which the model exhibited misclassification errors, with a particular focus on false negatives and false positives across the CICIDS 2017 and UNSW-NB15 datasets.

For the CICIDS 2017 dataset, most false negatives were associated with low-volume, stealthy port scans that closely mimicked the statistical properties of legitimate network traffic. These attacks generated minimal packet flows and exhibited inter-arrival times and protocol usages that fell within typical operational baselines, thereby evading standard flow-level detection thresholds. This highlights a common limitation of relying solely on statistical features: subtle deviations are easily masked within normal variance envelopes in large WAN environments.

Conversely, false positives in the UNSW-NB15 dataset predominantly arose from benign system daemons or enterprise applications exhibiting atypical protocol behaviors. For example, legitimate backup processes and scheduled maintenance tasks occasionally produced burst patterns over less frequently used UDP-based custom services. These benign activities resembled volumetric DoS signatures in aggregate traffic statistics, causing the classifier to flag them as potential threats.

These observations suggest two principal avenues for improving classification precision. First, incorporating additional temporal context – such as analyzing event sequences over extended time windows – could help differentiate transient benign bursts from sustained malicious behaviors. Second, establishing dynamic host baselines for network activity could allow the model to recognize legitimate variability patterns for individual devices, thereby reducing false positive rates.

Future work may explore the integration of sequence modeling techniques, such as recurrent neural networks (RNNs), long short-term memory (LSTM) networks, or graph-based temporal models, to better capture long-term dependencies and event co-occurrence patterns in network and behavioral data.

By augmenting the current feature space with temporal and contextual layers, it is expected that the framework’s ability to distinguish subtle attacks from benign anomalies will further improve, enhancing its overall resilience against sophisticated adversarial tactics.

4.10 Practical Application Scenarios

The proposed multimodal SVM-based threat detection framework is highly adaptable for deployment across a variety of operational environments that demand high reliability, scalability, and real-time responsiveness. Its modular architecture, low-latency prediction capability, and interoperability with existing cybersecurity infrastructures make it particularly suited for critical sectors.

In distributed renewable energy substations, the system can be deployed to monitor critical device communications, detecting anomalies such as unauthorized access attempts following maintenance operations, unexpected protocol command patterns (e.g., deviations in IEC 60870-5-104 or DNP3 traffic), and sudden surges in device-to-device messaging that may indicate lateral movement activities.

For government agency WAN deployments, where network perimeters are distributed, dynamic, and increasingly exposed to sophisticated external threats, the multimodal fusion approach offers substantial advantages. By jointly analyzing traffic statistics, system event logs, and user behaviors, the framework can identify coordinated intrusion campaigns that would be missed by single-modality NIDS solutions. Early warning of lateral movement, privilege escalation, or stealth persistence mechanisms becomes feasible even in highly fragmented network topologies.

In cloud-hosted multi-tenant enterprise environments, where multiple clients coexist on shared infrastructure, the system’s ability to perform tenant-specific behavioral profiling and context-aware threat analysis adds a critical layer of precision. This enables detection of cross-tenant anomalies, resource misuse, or targeted attacks that exploit cloud complexity.

The framework’s RESTful interface ensures seamless integration with popular security information and event management (SIEM) platforms (e.g., Splunk, ELK Stack, Graylog) as well as cloud-native security solutions such as Azure Sentinel and AWS Security Hub. Real-time alerting, classification metric transmission, and historical log retrieval are all natively supported through modular APIs.

Initial deployment benchmarking demonstrated that the system operates with a memory footprint below 500 MB and maintains an inference latency under 30 ms per prediction request, even under concurrent load conditions involving hundreds of simultaneous queries. This confirms its viability for resource-constrained environments such as smart grid edge nodes, IoT gateways, and micro-datacenters supporting critical infrastructure operations.

By combining strong detection performance with practical deployment efficiency, the proposed system provides a scalable and future-ready solution for securing next-generation wide area networks against evolving cyber threats.

4.11 System Benchmark and Performance Summary

To comprehensively assess the runtime performance and deployment efficiency of the proposed multimodal threat detection framework, a series of benchmark tests were conducted within a simulated web-based environment designed to emulate realistic WAN operational conditions. These evaluations focused on three critical dimensions: inference latency, memory usage, and system scalability under concurrent traffic loads.

The system maintained an average inference latency of 24 ms per prediction request, with 95th percentile response times consistently remaining below 30 ms even under peak query volumes involving up to 500 simultaneous sessions. This responsiveness supports stringent operational requirements for real-time threat detection and situational awareness in high-speed WAN environments.

In terms of memory usage, the runtime footprint was measured at approximately 480 megabytes, confirming the system’s suitability for deployment on edge-class servers, virtualized containers, and micro-datacenter infrastructures typical of industrial control systems and smart energy substations.

The feature preprocessing pipeline achieved a sustained throughput of approximately 1200 samples per second, ensuring that the system can accommodate high-volume data ingestion scenarios without bottlenecks. The modular and multithreaded design of the preprocessing components allows future scaling by simply increasing computational nodes or leveraging parallel processing architectures.

Importantly, the framework demonstrated native compatibility with widely adopted security information and event management (SIEM) platforms – including Splunk, ELK Stack, and Azure Sentinel – through standardized RESTful API interfaces. This seamless interoperability facilitates rapid integration into existing cybersecurity ecosystems, supporting alert streaming, event correlation, and dashboard visualization without extensive reengineering efforts.

Collectively, these benchmark results validate that the proposed framework not only achieves state-of-the-art threat detection performance, but also meets the latency, scalability, memory, and integration requirements essential for practical real-time deployment across critical infrastructure and enterprise WAN environments.

Table 3 Mock system benchmark results (web-based deployment environment)

| Metric | Value | Description |

| Inference latency (avg) | 24 ms | Average time per prediction request |

| Peak concurrent sessions | 500 | Maximum supported query load without degradation |

| Memory footprint (runtime) | 480 MB | Total memory usage during live inference |

| Feature processing throughput | 1200 samples/s | End-to-end feature extraction and normalization speed |

| API response time (95th percentile) | 30 ms | Tail latency under high-throughput test load |

| Integration compatibility | REST, Splunk, ELK, Sentinel | Compatible with SIEM and cloud monitoring platforms |

5 Conclusion and Future Work

This paper presents a comprehensive multimodal threat detection framework based on support vector machine (SVM) classifiers, specifically optimized for real-time analysis in wide area network (WAN) environments. By fusing network traffic attributes, system log events, and user behavior patterns into a unified feature space, the proposed system captures a holistic and multidimensional view of potential security threats. Extensive evaluations across benchmark datasets confirm that the framework achieves robust classification performance, maintaining high sensitivity, specificity, and generalization capacity across diverse cyberattack scenarios.

The integration of principal component analysis (PCA) enhances both model interpretability and computational efficiency, allowing the system to maintain a compact yet informative feature representation. Furthermore, SHAP-style feature attribution analysis validates the explainability of the classification decisions, an increasingly critical requirement in cybersecurity domains where human interpretability supports operational trust.

Additional empirical studies, including an ablation study and error case analysis, demonstrate the architectural soundness of the multimodal approach and offer actionable insights for further refinement. Performance benchmarking under simulated web-based deployment conditions confirms the framework’s viability for real-time operations, even in resource-constrained environments such as edge computing nodes, industrial substations, and distributed cloud networks.

Looking ahead, future research directions include: (1) online learning and adaptive drift handling to enable the model to continuously evolve with changing network behaviors and emerging threat vectors; (2) federated learning architectures for decentralized model training across multiple network nodes without centralized data aggregation, thereby enhancing privacy and scalability; (3) temporal sequence modeling using recurrent neural networks (RNNs) or graph neural networks (GNNs) to capture complex temporal dependencies and event co-occurrence patterns for improved anomaly recognition. For temporal extension, PCA-reduced features can be streamed as input to a lightweight LSTM cell capturing inter-session dynamics, enabling long-range attack correlation without disrupting existing infrastructure.

Overall, this work contributes a modular, interpretable, and scalable toolset for intelligent threat detection in WAN environments, with clear implications for securing next-generation digital infrastructures, particularly in critical energy, governmental, and cloud-based enterprise sectors.

References

[1] IBM Security: Cost of a Data Breach Report 2023. https://www.ibm.com/reports/data-breach (2023).

[2] NETSCOUT: Threat Intelligence Report 2H 2022. https://www.netscout.com/threatreport (2022).

[3] Dragos: Industrial Cybersecurity Year in Review 2022. https://www.dragos.com/year-in-review/2022/ (2022).

[4] Lee, W., Stolfo, S.: Data mining approaches for intrusion detection. In: Proc. of the 7th USENIX Security Symposium (1998).

[5] Liao, Y., Vemuri, V.R.: Use of K-Nearest Neighbor classifier for intrusion detection. Computers & Security 21(5), 439–448 (2002).

[6] Debar, H., Dacier, M., Wespi, A.: Towards a taxonomy of intrusion-detection systems. Computer Networks 31(8), 805–822 (1999).

[7] Tavallaee, M., Bagheri, E., Lu, W., Ghorbani, A.A.: A detailed analysis of the KDD CUP 99 data set. In: IEEE CISA (2009).

[8] Mukkamala, D., Sung, A.H.: Identifying significant features for network forensic analysis using SVM. Int. J. of Digital Evidence 1(4) (2003).

[9] Bhuyan, M.H., Bhattacharyya, D.K., Kalita, J.K.: Network anomaly detection: Methods, systems and tools. IEEE Commun. Surveys Tuts. 16(1), 303–336 (2014).

[10] Liu, H., Shao, X., Hu, Y., Yang, Y.: Multimodal deep learning for activity and context recognition. In: Proc. of the ACM Int. Joint Conf. on Pervasive and Ubiquitous Computing (UbiComp), pp. 447–456 (2016).

[11] Sharafaldin, I., Lashkari, A.H., Ghorbani, A.A.: Toward generating a new intrusion detection dataset and intrusion traffic characterization. In: Proc. of the 4th Int. Conf. on Information Systems Security and Privacy (ICISSP), pp. 108–116 (2018).

[12] Moustafa, N., Slay, J.: UNSW-NB15: A comprehensive data set for network intrusion detection systems. In: MilCIS, pp. 1–6. IEEE (2015).

[13] Zhang, X., Zhu, Y., Li, J.: An improved deep learning model for network intrusion detection. IEEE Access 8, 93952–93963 (2020).

[14] Wang, Z., Li, M.: Hybrid learning-based intrusion detection in software-defined networks. Comput. Secur. 117, 102712 (2022).

[15] Kim, J., Kim, D., Lee, J.: Deep ensemble model for intrusion detection using multi-layered feature fusion. J. Netw. Comput. Appl. 174, 102906 (2021).

[16] Liu, H., Lang, B., Liu, M., Yan, H.: CNN and RNN based payload classification methods for attack detection. Knowl.-Based Syst. 163, 332–341 (2019).

Biography

Bo Yuan, received his bachelor’s degree in Communication Engineering from Nanjing University of Posts and Telecommunications in 2004. With 20 years of experience in telecommunications and network security, he is currently pursuing a Ph.D. in Cyber Science and Engineering at Southeast University, China. His research focuses on communication networks, network security, network measurement, and related fields. Additionally, he serves as an editor for multiple standards organizations.

Journal of Web Engineering, Vol. 24_6, 973–996.

doi: 10.13052/jwe1540-9589.2465

© 2025 River Publishers