A Review of Question Answering Systems

Bolanle Ojokoh1,* and Emmanuel Adebisi2

1 Department of Information Systems, Federal University of Technology, Akure, Nigeria

2 Department of Computer Science, Federal University of Technology, Akure, Nigeria

* Corresponding Author

Received 15 November 2018;

Accepted 02 May 2019

Abstract

Question Answering (QA) targets answering questions posed in natural language. Question Answering systems offer an automated approach to obtaining answers to queries expressed in natural language. Many QA surveys have classified Question Answering systems based on different criteria such as the questions asked by users, features of the databases used, the nature of generated answers, and question answering approaches and techniques. To fully understand QA systems, how they have grown to meet current needs, and how they must scale up to meet future expectations, a broader survey of QA systems becomes essential. Hence, in this paper, we briefly study the generic QA framework (Question Analysis, Passage Retrieval and Answer Extraction) and some important issues associated with QA systems. These issues include Question Processing, Question Classes, Data Sources for QA, Context and QA, Answer Extraction, Real time Question Answering, Answer Formulation, Multilingual (or cross-lingual) question answering, Advanced reasoning for QA, Interactive QA, User profiling for QA and Information clustering for QA. Finally, we classify QA systems based on criteria identified in the literature. These include Application domain, Question type, Data source, Form of answer generated, Language paradigm and Approaches. We then make an informed judgment of the basis for each classification criterion through the literature on QA systems.

Journal of Web Engineering, Vol. 17 8, 717–758.

doi: 10.13052/jwe1540-9589.1785

© 2019 River Publishers

Keywords: Question Analysis, Answer Extraction, Information Retrieval, Classification, Review.

1 Introduction

Question Answering (QA) aims to automatically provide solutions to queries expressed in natural language (Hovy, Gerber, Hermjakob, Junk and Lin 2000). Question Answering systems aim to retrieve expected answers to questions rather than a ranked list of documents as most Information Retrieval systems do (Nalawade, Kumar and Tiwari 2012). The idea of question answering systems represents a noteworthy advancement in information retrieval technologies, especially in the ability to access knowledge resources in a natural way (Ahmed and Babu 2016) by querying and retrieving the right replies in succinct words.

Many QA Systems (QAS) have emerged since the 1960s. Some of these include those referenced by Androutsopoulos, Ritchie and Thanisch (1993), Kolomiyets (2011), Mishra and Jain (2015), and Hoffner, Walter, Marx, Usbeck, Lehmann and Ngomo (2016). These QA systems have tried to answer user questions using different methods. QA implementations have addressed diverse domains, databases, question types, and answer structures (Mishra et al., 2015). Recent methods retrieve and process data from multiple sources to answer questions presented in natural language (Lopeza and Uren 2011; Dwivedi and Singh 2013; Kumar and Zayaraz 2014).

Over the years, question answering reviews have been tailored more towards issues, tasks and program structure in question answering (Burger, Cardie, Chaudhri and Weischedel 2001; Mishra et al., 2015). Many QA surveys have classified Question Answering systems based on different criteria such as the questions asked, features of the databases consulted and the forms of the right answers (Biplab 2014), and question answering approaches and techniques (Dwivedi et al., 2013; Biplab 2014; Mishra et al., 2015; Jurafsky and Martin 2015; Pundge, Khillare and Mahender 2016; Saini and Yadav 2017).

To fully understand QA systems, how they have grown to meet current needs, and how they must scale up to meet future expectations, a broader survey of QA systems becomes essential.

In this paper, we briefly study the generic QA framework and the issues of QA systems, and finally we classify QA systems based on criteria identified in the literature. We make an informed judgment of the basis for each classification criterion through the literature on Question Answering systems.

The rest of this review is ordered as follows: Section 2 highlights related works on Question Answering systems. Section 3 presents the generic QA framework. Section 4 addresses certain important issues relating to QA systems. Section 5 presents classification of QA systems based on diverse criteria, while Section 6 makes a contrast of the proposed classification with others. Conclusions are drawn in the last section.

2 Background Information

The creation of systems that can process natural language queries dates back to 1950, when Turing proposed the “Imitation Game”, widely known as the “Turing Test”, in which a human and a machine converse through an interface. Among the first QA systems were Natural Language Interfaces to Databases (NLIDB), which allowed users to present queries in natural language and retrieve responses from databases (Androutsopoulos et al., 1993). This lessened the need to interact with systems using formal languages for representing queries. Green, Wolf, Chomsky and Laughery (1961) proposed BASEBALL, a QA system that provides information about the baseball league played in America. The system’s replies are in the form of dates and locations. Another prominent QA system, LUNAR, was proposed by Woods (1973). LUNAR provided information on soil samples. Even though these systems, which relied on simple pattern matching techniques, performed well, they were limited by inadequate domain information in their repositories.

As research in the field grew, with the aim of detecting the intended question requirements in a natural way, QA systems started performing linguistic analysis of asked questions. One such system is MASQUE. MASQUE represented queries in a logic form and then converted them into database queries for information retrieval (Androutsopoulos et al., 1993; Lopeza et al., 2011). This system separated mapping procedures from linguistic ones. Semantic and statistical means were also used for the mapping process by Burke, Hammond and Kulyukin (1997). Riloff and Thelen (2000) used semantic and lexical means for query classification to get answer types. With time, the focus of QA systems shifted toward open domain QA.

Open domain QA in the TREC evaluation campaign started in 1999 and has been a yearly program since then (Voorhees 2004). Over the years, this program has hosted challenges on open domain questions of all forms, with an increase in the volume, complexity and evaluation of its problem set. The program also provides datasets for answer generation. The enormous amount of information on the Web led to its consideration as an information source (Soricut and Brill 2006). Hence, QA systems have been created with the Web as their data source (Li and Roth 2002; Vanitha, Sanampudi and Lakshmi 2010). They are further grouped as Open or Closed domain QA systems (Lopeza et al., 2011). Most of the questions addressed by these QA systems are factoid questions. Various QA systems use different methods to answer factoid questions; such methods include snippet tolerance and word match (Carbonell, Harman and Hovy 2000). The output of factoid QA systems is in the form of text, XML or Wiki documents (Lopeza et al., 2011). QA systems developed by Katz et al. (2002), Chung, Song, Han, Yoon, Lee and Rim (2004) and Mishra (2010) extend their knowledge base by leveraging the massive information available on the Web to retrieve answers to queries using linguistic and rule based approaches.

Aside from the sources considered above, QA research has also shifted towards using the semantic web (Mishra et al., 2015). Unger, Bühmann, Lehmann, Ngomo, Gerber and Cimiano (2012) used a template based method on Resource Description Framework (RDF) data using SPARQL. Fader, Zettlemoyer and Etzioni (2013) used phrase to concept mappings in a lexicon trained from a corpus of paraphrases constructed from WikiAnswers. Hakimov, Tunc, Akimaliev and Dogdu (2013) proposed a Semantic Question Answering system using syntactic dependency trees of input questions on an RDF knowledge base. Peyet, Pradel, Haemmerle and Hernandez (2013) developed SWIP (Semantic Web Interface using Patterns) to generate a pivot query, which is a hybrid between the natural language question and the formal SPARQL target query. Xu, Feng and Zhao (2014) used a phrase level dependency graph to determine question structure and then used a database to instantiate the created template. Sun, Ma, Yih, Tsai, Liu and Chang (2015) also developed a multi staged open domain QA framework by leveraging available datasets and the web.

3 Framework of QA Systems

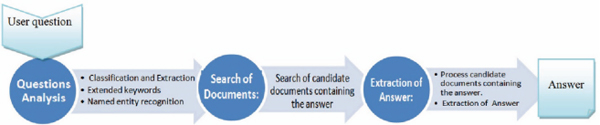

Question Answering systems generally follow a pipeline structure with three major modules (Prager, Brown, Coden and Radev 2000; Hovy et al., 2000; Clarke, Cormack and Lynam 2001; Iftene 2009) namely: Question Analysis, Passage Retrieval, and Answer Extraction. Figure 1 shows the flow of QA system’s framework.

Figure 1 Framework of QA system (Bouziane, Bouchiha, Doumi and Malki, 2015).

3.1 Question Analysis Module

Typically, questions posed to QA systems need to be parsed and understood before answers can be found. Hence, all necessary question processing is carried out in the Question Analysis module. The input for this stage is the user query and the output are representations of the query; this is useful for analysis in other modules. At this level, the semantic information contained in the query, constraints and needed keywords are extracted (Iftene 2009).

The activities in this module include parsing, question classification and query reformulation (Dwivedi et al., 2013; Saini et al., 2017). First, the query is analyzed to represent the main information required to answer the user’s query. Second, the question is classified according to the keyword or taxonomy used in the query, which leads to the expected answer type. Third, the query is reformulated to enhance question phrasing and transformed into semantically equivalent queries, which helps the information retrieval process.

Different techniques exist for processes carried out in the Question Analysis stage. These include tokenization, disambiguation, internationalization, logical forms, semantic role labels, reformulation of questions, co-reference resolution, relation extraction and named entity recognition among others.
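A few of these steps can be illustrated with a minimal sketch. It is not taken from any of the surveyed systems: the stop-word list, the mapping from question cues to answer types, and the function name `analyze_question` are assumptions made only for illustration. The sketch tokenizes a question, derives an expected answer type from its leading cue words, and keeps the remaining content words as retrieval keywords.

```python
import re

# Hypothetical stop-word list and cue-to-answer-type map (illustrative only).
STOP_WORDS = {"the", "a", "an", "of", "is", "was", "in", "did", "does", "do"}
ANSWER_TYPES = {
    "who": "PERSON",
    "when": "DATE",
    "where": "LOCATION",
    "how many": "NUMBER",
}

def analyze_question(question):
    """Return tokens, retrieval keywords and an expected answer type."""
    # Tokenization: lowercase alphanumeric runs.
    tokens = re.findall(r"[a-z0-9]+", question.lower())
    # Question classification: match the leading tokens against known cues.
    answer_type, cue_len = "OTHER", 0
    for cue, label in ANSWER_TYPES.items():
        cue_tokens = cue.split()
        if tokens[:len(cue_tokens)] == cue_tokens:
            answer_type, cue_len = label, len(cue_tokens)
            break
    # Keyword extraction: drop the cue words and the stop words.
    keywords = [t for t in tokens[cue_len:] if t not in STOP_WORDS]
    return {"tokens": tokens, "keywords": keywords, "answer_type": answer_type}

result = analyze_question("Who is the president of Nigeria?")
print(result["answer_type"], result["keywords"])
```

Real systems replace each of these heuristics with stronger components such as dependency parsers, named entity recognizers and learned classifiers.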

Different QA systems have used and combined different methods for the question analysis phase to better fit the kind of data source they are working on or to solve a particular research problem (Hoffner et al., 2016). Some implementations include the ones highlighted in Table 1.

The analysis in Table 1 indicates that the Question Analysis phase is implemented by different QA systems in diverse ways. This diversity can be very complex, depending on the reuse of known techniques and on how several of them are combined to function in that phase (Hoffner et al., 2016).

Table 1 Methods used in the question analysis phase of QA systems

| Authors | Method used in the Question Analysis phase |
| --- | --- |
| Hakimov et al. (2013) | A Semantic Question Answering system was designed using syntactic dependency trees of input questions. Patterns in the form of triples were extracted using the dependency tree and Part of Speech tags of queries. |
| Xu et al. (2014) | A phrase level dependency graph was adopted to determine question structure, and the domain dataset was used to instantiate the created patterns. |
| Usbeck, Ngomo, Bühmann and Unger (2015) | An eight-fold pipeline Question Analysis phase was implemented. The phase includes entity annotation, POS tagging, linguistic trimming heuristics for better analysis of queries, dependency parsing, semantic tagging of attributes, and the creation of patterns for each query with ranking. |
| Lally, Prager, McCord, Boguraev, Patwardhan, Fan, Fodor and Chu-Carroll (2012) | IBM Watson uses rule and classification based methods to analyze different parts of the input query. |
| Bhaskar, Banerjee, Pakray, Banerjee, Bandyopadhyay and Gelbukh (2013) | A Question Analysis module consisting of a Stop word Remover, an Interrogative Remover and an Answer Pattern Builder was implemented. |
| Pakray, Bhaskar, Banerjee, Chandra Pal, Bandyopadhyay and Gelbukh (2011) | A Question Analysis module was implemented using the Stanford Dependency parser to recognize the query and the target result type. |
| Chu-Carroll, Ferrucci, Prager and Welty (2003) | A Question Analysis phase that performs stop-word removal, conversion of inflected terms to their canonical form, query term expansion, and syntactic query generation was implemented. |

3.2 Passage Retrieval Module

Passage retrieval is typically based on a conventional search engine that retrieves a set of significant candidate passages or sentences from a knowledge base. This stage makes use of the queries formulated by the question analysis module, and looks up information sources for suitable answers to the posed questions. Candidate answers from dynamic sources such as the Web or online databases can also be incorporated here.

Text retrieval structures split the retrieval process into three stages (Hoffner et al., 2016): processing, retrieval and ranking.

The processing step involves the use of query analyzers to identify texts in a database. Then, retrieval is done by matching documents that resemble the query patterns. Finally, the returned texts are ranked using ranking functions such as tf-idf (Jones 1972).
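The ranking step can be sketched as follows. This is a minimal illustration rather than the implementation of any surveyed system: whitespace tokenization, the smoothed form of the inverse document frequency, and the function name `rank_passages` are assumptions. Each passage is scored by summing, over the query terms, the term frequency in the passage multiplied by the inverse document frequency of the term in the collection.

```python
import math
from collections import Counter

def tokenize(text):
    # Simplifying assumption: lowercase whitespace tokenization.
    return text.lower().split()

def rank_passages(query, passages):
    """Rank passages by the summed tf-idf weight of the query terms."""
    docs = [Counter(tokenize(p)) for p in passages]
    n = len(docs)
    scored = []
    for index, doc in enumerate(docs):
        score = 0.0
        for term in set(tokenize(query)):
            df = sum(1 for d in docs if term in d)  # document frequency
            if df == 0:
                continue
            # Smoothed idf; the exact smoothing is an assumption of this sketch.
            idf = math.log((n + 1) / (df + 1)) + 1.0
            score += doc[term] * idf
        scored.append((score, index))
    # Highest score first; ties keep the original passage order.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [(passages[i], s) for s, i in scored]

passages = [
    "lunar rock samples from apollo",
    "baseball league games in america",
    "rock music history",
]
for passage, score in rank_passages("lunar rock", passages):
    print(round(score, 3), passage)
```

The rarer query term ("lunar") contributes more to the score than the more common one ("rock"), which is the intuition behind inverse document frequency.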

Developing the information retrieval (IR) phase of a QA system is complex because systems deploy different mixes of natural language techniques with conventional information retrieval systems. Some implementations use the syntax information extracted from the query (Unger et al., 2012; Hakimov et al., 2013) to deduce the query intention, whereas others use knowledge graphs (Shekarpour, Marx, Ngomo and Auer 2015; Usbeck et al., 2015). The IR phase retrieves answer bearing texts from structured or unstructured data sources. There are hybrid systems that work on both structured and unstructured data (Gliozzo and Kalyanpur 2012; Usbeck et al., 2015). HAWK is the first hybrid Semantic Question Answering system that answers queries using textual information and Linked Data (Usbeck et al., 2015).

Source expansion algorithms are also used to automatically extend a text corpus with related information from unstructured sources. Some works leverage this to extract the information needed for valuing assumptions (Schlaefer 2011), even though in practice there is a high probability of noise inclusion and high variance due to the techniques used (Thompson and Callan 2007). For these reasons, query expansion is most of the time considered a backup plan for when the user question yields low recall (Harabagiu, Moldovan, Pasca, Mihalcea, Surdeanu, Bunescu, Girju, Rus and Morarescu 2001; Attardi, Cisternino, Formica, Simi and Tommasi 2001).

Some other works on the paragraph retrieval phase include that of Sun et al. (2015) that used search engines to mine important texts, and a ranked list of answers is returned after the text has been linked to Freebase. Watson used existing, off-the-shelf IR components to perform the retrieval step, but used a variety of novel mechanisms to formulate queries posed to the retrieval phase (Ferrucci, Brown, Chu-Carroll, Fan, Gondek, Kalyanpur and Lally, 2010).

Finally, ranking techniques are applied to the retrieved texts. Wade and Allan (2005) stated that most of the generally used ranking techniques are statistical in nature. These include query-likelihood language modeling, tf-idf, support vector based models, a significance modeling method for ranking text, a method for retrieving and scoring variable-length passages, and a method based on a simple mixture of language models.
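As an illustration of the query-likelihood idea, the sketch below scores a passage by the log probability that a unigram language model of the passage generates the query, with the passage model linearly mixed with a collection model (Jelinek-Mercer smoothing). The mixing weight `lam`, the whitespace tokenization and the function name are assumptions of this sketch and are not taken from Wade and Allan (2005).

```python
import math
from collections import Counter

def query_log_likelihood(query, passage, collection, lam=0.5):
    """log P(query | passage) under a passage model mixed with a collection model."""
    passage_counts = Counter(passage.lower().split())
    collection_counts = Counter(
        word for doc in collection for word in doc.lower().split()
    )
    passage_len = sum(passage_counts.values())
    collection_len = sum(collection_counts.values())
    score = 0.0
    for term in query.lower().split():
        p_passage = passage_counts[term] / passage_len if passage_len else 0.0
        p_collection = collection_counts[term] / collection_len if collection_len else 0.0
        # Mixture of the two models; lam = 0.5 is an arbitrary illustrative value.
        prob = lam * p_passage + (1.0 - lam) * p_collection
        if prob == 0.0:
            return float("-inf")  # term unseen in the whole collection
        score += math.log(prob)
    return score

collection = ["lunar rock samples", "baseball league games", "rock music"]
for doc in collection:
    print(round(query_log_likelihood("lunar rock", doc, collection), 3), doc)
```

Mixing in the collection model keeps a passage from receiving zero probability merely because it lacks one query term, so partially matching passages can still be ranked.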

3.3 Answer Extraction Module

Answer extraction is a major part of a Question Answering system. It produces the exact answer from the passages that are generated. It does this by firstly producing a set of candidate answers from the generated passages and then ranking the answers using some scoring functions.

Previous studies on answer extraction have discussed utilizing different techniques for answer extraction, including n-grams, patterns, named entities and syntactic structures. Some of those works include:

Soubbotin (2001) used manually created patterns to identify candidate answers from text for specific query types. Each pattern influences the scores of the answers it matches. The work suffered from low recall and small question class coverage. Ravichandran and Hovy (2002) automatically derived scored patterns to extract possible answers from passages for specific question types. Query terms together with the answers are sent to a search engine, and patterns are extracted from the retrieved passages. Ravichandran, Ittycheriah and Roukos (2003) added semantic forms to the query terms, and used learned patterns as features to model the correctness of extracted answers. Although the method has a high precision in extracting answers, it is still restricted to pre-known query types.
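Pattern based extraction can be sketched with regular expressions. The patterns, their weights and the BIRTHYEAR question type below are hypothetical examples chosen for illustration, not the patterns used in the cited works. Every match adds the weight of its pattern to the score of the extracted candidate, so that a candidate supported by several patterns ranks higher.

```python
import re

# Hypothetical surface patterns for a BIRTHYEAR question type. The weights are
# hand-assigned for this illustration only.
BIRTHYEAR_PATTERNS = [
    (r"{name} \((\d{{4}})\s*-\s*\d{{4}}\)", 1.0),
    (r"{name} was born in (\d{{4}})", 0.9),
    (r"{name},? born (?:in )?(\d{{4}})", 0.6),
]

def extract_birth_year(name, passages):
    """Return (year, score) candidates, best first."""
    scores = {}
    for template, weight in BIRTHYEAR_PATTERNS:
        pattern = re.compile(template.format(name=re.escape(name)))
        for passage in passages:
            for match in pattern.finditer(passage):
                year = match.group(1)
                scores[year] = scores.get(year, 0.0) + weight
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))

passages = [
    "Mozart (1756-1791) was a composer.",
    "Mozart was born in 1756 in Salzburg.",
    "One source lists Mozart, born 1755, among its entries.",
]
print(extract_birth_year("Mozart", passages))
```

The sketch also shows the limitation noted above: a question type without a pattern set, or a passage phrased differently from every pattern, yields no candidate at all.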

Apart from patterns, diverse linguistic units are also mined and ordered according to frequency. Prager et al. (2000), Pasca and Harabagiu (2001), Yang, Cui, Maslennikov, Qiu, Kan and Chua (2003) and Xu, Licuanan, May, Miller and Weischedel (2003) used Named Entity Recognition (NER) for answer extraction. Their approach includes the extraction and filtering of entities to retain candidate entities within the scope of the expected answer type. Implementing an NE tool specifically for the QA typology helps achieve better performance because many answer types are not covered by existing Named Entity Recognition tools (Sun, Duan, Duan and Zhou, 2013).

N-grams are also employed in answer extraction (AE). Brill, Lin, Banko, Dumais and Ng (2001) retrieved recurrent n-grams from documents on the web. Their technique then used features (surface string and handcrafted) to decide the type information. Finally, filtering is performed. Additionally, some of the text units are identified through external information such as VerbOcean (Na, Kang, Lee and Lee 2002; Echihabi, Hermjakob, Hovy, Marcu, Melz and Ravichandran 2008), anchor text, title, and meta-information in Wiki (Chu-Carroll and Fan 2011). Also, many approaches depend on syntactic compositions and extract other useful information from passages such as dependency tree nodes or noun phrases. These approaches rely mainly on the similarity between the query and the retrieved passages for ranking candidate answers.
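The idea of mining recurrent n-grams can be sketched as below. This is a simplified illustration in the spirit of redundancy based extraction, not a reproduction of the method of Brill et al. (2001): the stop-word list, the filtering rules and the tie-breaking preference for longer n-grams are assumptions. Each snippet votes once for every n-gram it contains, n-grams overlapping the question or bounded by stop words are filtered out, and the most frequently supported n-gram is ranked first.

```python
from collections import Counter

# Hypothetical stop-word list used only to filter candidate boundaries.
STOP_WORDS = {"the", "a", "an", "by", "in", "is", "was", "of"}

def candidate_ngrams(snippets, question, max_n=3):
    """Rank n-grams by the number of snippets that contain them."""
    question_terms = set(question.lower().replace("?", "").split())
    votes = Counter()
    for snippet in snippets:
        tokens = [t.strip(".,;:!?()\"'").lower() for t in snippet.split()]
        tokens = [t for t in tokens if t]
        seen = set()
        for n in range(1, max_n + 1):
            for start in range(len(tokens) - n + 1):
                gram = tokens[start:start + n]
                if any(t in question_terms for t in gram):
                    continue  # the answer should not repeat the question
                if gram[0] in STOP_WORDS or gram[-1] in STOP_WORDS:
                    continue  # drop fragments bounded by function words
                seen.add(" ".join(gram))
        votes.update(seen)  # one vote per snippet and n-gram
    # More votes first; among equals prefer longer n-grams, then alphabetical.
    return sorted(votes.items(), key=lambda kv: (-kv[1], -len(kv[0].split()), kv[0]))

snippets = [
    "Things Fall Apart was written by Chinua Achebe in 1958.",
    "Chinua Achebe wrote Things Fall Apart.",
    "The novel Things Fall Apart by Chinua Achebe is a classic.",
]
print(candidate_ngrams(snippets, "Who wrote Things Fall Apart?")[:3])
```

A type check against the expected answer type (for example, keeping only person names for a "who" question) would normally follow this counting step.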

Sun et al. (2013) employed a factor graph as the model to execute AE. They argued that, in contrast to other AE approaches, which extract answers independently in each passage, their work performs AE on a graph built from linked passages with related information on the asked questions. Their conclusion shows that AE can be performed without isolating the linked information contained in all retrieved passages. The work considered only connections among words with the same stem.

Juan, Chunxia and Zhendong (2016) introduced an AE technique by Merging score strategy (MSS) based on hot terms. They defined the hot terms according to lexical and syntactic features to show the role of query terms in QA systems. Their approach aims to cope with the syntactic diversities of retrieved passages for AE, so they proposed four improved candidate answer score algorithms. Their implementation failed to consider semantic variations contained in asked questions and retrieved paragraphs.

4 Issues of QA Systems

Burger et al. (2001) identified some issues that are related to QA systems. The issues include Question Processing, Question Classes, Data Sources for QA, Context and QA, Answer Extraction, Real time Question Answering, Answer Formulation, Multilingual (or cross-lingual) question answering, Advanced reasoning for QA, Interactive QA, User profiling for QA and Information clustering for QA. These issues are discussed in the following subsections.

4.1 Question Classes

Different strategies are considered in answering questions. Based on the category a question falls into, a particular strategy can be used in answering it, and such a strategy may not work for a question of a different category. Therefore, a complete understanding of the class a question falls into is required to answer questions correctly (Basic, Lombarovic and Snajder 2011; Banerjee and Bandyopadhyay 2012; Stupina, Shigina, Shigin, Karaseva and Korpacheva 2016).

4.2 Question Processing

In natural language, the same question may be posed in different ways. The question may be asked assertively or interrogatively. It is therefore necessary to understand the semantics, that is, to know what the query focus is before trying to find a solution to the query. The whole act of finding what class a question belongs to is known as Question Processing (Biplab 2014; Stupina et al., 2016).

4.3 Context and QA

Queries are expressed in contexts. It is almost impossible to find questions expressed in a universal context. Therefore, it is important that the context of an asked question is known before any step is taken towards answering it (Lin, Quan, Sinha, Bakshi, Huynh, Katz and Karger 2003). Context questions are never expressed in isolation, but in an interconnected way that requires some extra steps to fully understand the query (Chai and Jin 2004). For example: “When did it happen?”

The interpretation of this question depends on the resolution of “it” from the context of the question.

4.4 Data Sources and QA

The answers to questions asked or posed to a question answering system are sourced from a knowledge base. The base or source must be relevant and exhaustive. It may be a collection of documents, the web, or a database from where we can get the answers (Hans, Feiyu, Uszkoreit, Brigitte and Schäfer 2006; Jurafsky et al., 2015; Park, Kwon, Kim, Han, Shim and Lee 2015).

4.5 Answer Extraction

The class of query asked informs the type of answer that will be extracted from any source a QA is using. So the need to understand and produce the expectation of the user from the question provided is the goal in Answer Extraction (Prager et al., 2000; Pasca et al., 2001; Soubbotin 2001; Ravichandran et al., 2002; Yang et al., 2003; Xu et al., 2003; Shen, Dan, Kruijff and Klakow 2005; Sun et al., 2013; Juan et al., 2016; Xianfeng and Pengfei 2016).

4.6 Answer Formulation

Basic extraction can be sufficient for certain queries. For some questions, the solutions are extracted in parts from different bases and then combined to answer the asked questions (Kosseim, Plamondon and Guillemette 2003). At the same time, the output of the Question Answering system must be natural. Creating such answers is termed answer formulation. In QA, answer formulation serves two purposes: improving AE and improving human-computer interaction (HCI).

4.7 Real Time Question Answering

Real time question answering requires instantaneous answers (replies) to questions posed in natural language. In such cases, questions must be answered within seconds regardless of their complexity; hence the need for architectures that can produce valid answers within a given time constraint (Biplab 2014; Savenkov and Agichtein 2016; Datla, Hasan, Liu, Lee, Qadir, Prakash and Farri 2016). The Watson system was able to provide answers to asked questions in an average of 3 seconds.

4.8 Cross Lingual or Multilingual QA

Cross lingual or Multilingual QA involves retrieving answers from sources written in a language different from the one in which the query was expressed. Various data sources exist for English Question Answering systems, but some other languages, e.g. Hindi, still lack such resources. So, to answer questions posed in such resource deficient languages, the query is first translated into a resource rich language to obtain an answer, and the answer is then translated back into the language in which the question was asked (Magnini, Vallin, Romagnoli, Peñas, Peinado, Herrera, Verdejo and Rijke 2003; Herrera, Peñas and Verdejo 2005; Jijkoun and Rijke 2006; Giampiccolo, Forner, Herrera, Peñas, Ayache, Forascu, Jijkoun, Osenova, Rocha, Sacaleanu and Sutcliffe 2007; Lamel, Rosset, Ayache, Mostefa, Turmo and Comas 2007; Forner, Giampiccolo, Magnini, Peñas, Rodrigo and Sutcliffe 2008). Foster and Plamondon (2003) and Kaur and Gupta (2013) are examples of cross lingual systems. More information about multilingual systems can be found in Magnini et al. (2003).
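The translate, answer and translate back procedure can be sketched as a composition of three components. The sketch below is purely illustrative: the lookup tables stand in for real machine translation and QA components, French is used only because the example sentences are easy to verify, and all names are hypothetical.

```python
def cross_lingual_answer(question, to_pivot, answer_in_pivot, from_pivot):
    """Translate the question, answer it in the pivot language, translate back."""
    pivot_question = to_pivot(question)
    pivot_answer = answer_in_pivot(pivot_question)
    if pivot_answer is None:
        return None  # nothing found in the pivot-language resources
    return from_pivot(pivot_answer)

# Toy stand-ins (hypothetical lookup tables, not real MT or QA components).
FR_TO_EN = {"Quelle est la capitale du Nigeria ?": "What is the capital of Nigeria?"}
EN_QA = {"What is the capital of Nigeria?": "Abuja is the capital of Nigeria."}
EN_TO_FR = {"Abuja is the capital of Nigeria.": "Abuja est la capitale du Nigeria."}

def to_english(text):
    return FR_TO_EN.get(text, text)

def english_qa(text):
    return EN_QA.get(text)

def to_french(text):
    return EN_TO_FR.get(text, text)

print(cross_lingual_answer(
    "Quelle est la capitale du Nigeria ?", to_english, english_qa, to_french
))
```

In a real system, translation errors in either direction propagate to the final answer, which is one reason cross lingual QA is treated as a separate issue.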

4.9 Interactive QA

Extra information from users about the questions they ask can help guide the question answering process. Hence the need for an interactive system that engages the user, in the sense that it responds and clears up doubts when it finds a question ambiguous (Webb and Strzalkowski 2006; Wenyin, Hao, Chen and Feng 2009). Chen, Zeng, Wenyin and Hao (2007) and Quarteroni and Manandhar (2009) contain more information on Interactive QA systems.

4.10 Advanced Reasoning for QA

In advanced reasoning, the QA system does more than return what it finds in the corpus. It learns facts and applies reasoning to create new facts, which can be used to better answer the posed questions (Benamara and Kaci 2006; Melo, Rodrigues and Nogueira 2012).

4.11 Information Clustering for QA

Retrieving precise information for a simple question has become a difficult and resource expensive task due to the excessive growth of information on the web. Clustering reduces the search space and thereby decreases the workload of the techniques. The ability to categorize information according to its type helps the system narrow down its search process; hence the need for means to categorize asked questions (Wu, Kashioka and Zhao 2007; Perera 2012).

4.12 User Profiling for QA

The need to tailor the replies of a question answering system to the user asking the question is another problem. The intention of the user can be inferred by examining the user’s earlier queries. To do this, there is a need to develop the user’s profile (Quarteroni and Manandhar 2007; Bergeron, Schmidt, Khoury and Lamontagne 2016; Dong, Furbach, Glockner and Pelzer 2016).

5 A Classification of Question Answering Systems

Various works have classified question answering systems, viewing the systems from different points of view and classifying them based on different criteria. Jurafsky et al. (2015) classified Question Answering systems based on data source; Yogish, Manjunath and Hegadi (2016) classified them based on knowledge source and the techniques used in the system; Pundge et al. (2016) classified them based on question domain and further discussed some techniques used in the system; Tirpude (2015) also classified QA systems based on domain. Mishra et al. (2015) listed eight criteria for categorizing question answering systems: domain, question types, query analysis, techniques for answer retrieval, features of databases, types of matching functions, databases and forms of answer generated. From the literature above, we identify six important criteria supporting QA classification. These criteria are Application domain, Question type, Data source, Form of answer generated, Language paradigm and Approaches.

The proposed classification details are given as follows:

5.1 Classification based on Domain

Two types of QA systems exist: Open domain and Closed (or Restricted) domain (Kaur et al., 2013; Tirpude 2015; Pundge et al., 2016; Yogish et al., 2016). Some other reviews refer to these as General domain and Restricted domain Question Answering systems respectively (Mishra et al., 2015).

An Open domain system provides answers to any question. For instance, the START system has no topic restrictions, and neither do many others such as Google Knowledge Graph, Answers.com and Yodaqa (Baudiš and Šedivy 2015). General domain questions have a large repository of queries that can be asked. Such QA systems leverage general structured texts and world knowledge in their approaches to produce answers (Kan and Lam 2006). In these systems, the answer quality is not high, and questions are typically posed by casual users (Indurkhya and Damereau 2010).

A closed domain Question Answering system offers answers on some fixed topics. For instance, BASEBALL answers questions about baseball games and LUNAR answers queries about rock samples. This domain requires linguistic support to comprehend the natural language text and answer queries precisely. Its development involves methods that improve the accuracy of the QA system by confining the field of the queries and the size of the database. The answer quality of this approach is therefore expected to be better. Several closed domain QA systems have been reported in the literature. Their domains include temporal QA systems, geospatial QA systems, medical QA systems, patent QA systems and community QA systems. Closed domain QA systems can be combined to create an Open domain QA system (Indurkhya et al., 2010; Lopeza et al., 2011). The difference between open and closed domain QA systems is the presence of domain-dependent information that can be used to improve the accuracy of the system (Molla and Vicedo 2007).

5.2 Classification based on Types of Questions

Generating answers to users’ queries is directly related to the type of question asked (Moldovan, Pasca and Harabagiu 2003). Therefore, the taxonomy of queries asked in QA systems directly affects the responses. QA system outputs reveal that 36.4% of errors occur due to misclassification of queries (Moldovan et al., 2003). Li et al. (2002) categorized queries using a content based classification, but they worked on a small class of real world queries. Benamara (2004) performed question classification based on response type. Fan, Xingwei and Hau (2010) performed question classification using pattern matching and machine learning methods. Mishra et al. (2015) classified QA systems based on the question types queried by users. This work classifies QA systems based on all the question types identified from the literature. The classes are: factoid questions, list questions, definition questions, hypothetical questions, causal questions and confirmation questions.

The categories are described briefly below.

i. Factoid questions [what, when, which, who, how]

These questions are factual in nature and they refer to a single answer (Indurkhya et al., 2010), for instance: “Who is the president of Nigeria?” Factoid queries commonly start with a wh-word (Mishra et al., 2015). Current QA systems have achieved satisfactory performance in answering factoid queries (Indurkhya et al., 2010; Lopeza et al., 2011; Kolomiyets 2011; Dwivedi et al., 2013; Kumar et al., 2014).

The answer types expected for common factoid questions are usually named entities (NE) (Kolomiyets 2011; Lopeza et al., 2011). Because these queries belong to the wh-category and their answers are named entities, improved accuracy can be realized. Factoid query identification and automatic classification is itself a research problem in QA systems (Mishra et al., 2015).

ii. List type questions

The response to a list query is an enumeration, for example: “Which states export cocoa?” The responses are usually enumerations of entities or facts. Setting a threshold value for list questions remains a problem in QA (Indurkhya et al., 2010).

Methods with successful application on factoid questions will handle list questions since the answers are factual in nature and deep NLP to extract answers is not required (Mishra et al., 2015).

iii. Definition type questions

Definition questions require intricate processing of retrieved documents; the final answer consists of a piece of text or is obtained by summarizing several documents (Cui, Kan and Chua 2007; Lopeza et al., 2011): “What is a noun?”. They usually start with ‘what is’. Answers to definition questions can be any event or entity.

iv. Hypothetical type questions

These questions require information associated with an assumed event. They usually start with “What would happen if” (Kolomiyets 2011). QA systems need knowledge retrieval methods to produce answers (Mishra et al., 2015). The answers to these queries are subjective, and no specific right answer exists. The reliability of QA system answers to this type of question is low, and it depends on context and users.

v. Causal questions [how or why]

Causal queries require clarifications on entities they contain (Mishra et al., 2015). While factoid questions extract named entities as answers, causal answers are not named entities. These questions seek explanations, reasons or elaborations for specific events or objects. Text analysis is required at both the pragmatic and discourse level by QA systems to generate answers using advanced NLP techniques (Moldovan, Harabagiu and Pasca 2000; Verberne, Boves, Oostdijk and Coppen 2007; Higashinaka and Isozaki 2008; Verberne, Boves, Oostdijk and Coppen, 2008; Verberne, Boves, Oostdijk and Coppen 2010).

vi. Confirmation questions

Answers to confirmation questions are expected to be in a YES or NO form. Inference mechanisms, common sense reasoning and world knowledge are required by QA systems to generate answers to confirmation questions.

5.3 Classification based on Data Source

Jurafsky et al. (2015) identified Information Retrieval based and Knowledge based QA as the two major modern paradigms for answering questions. Yogish et al. (2016) classified QA systems as systems based on Web sources and systems based on Information Retrieval. On the basis of data source, QA systems are reviewed here in three categories:

i. Information Retrieval based (IR-based) Question Answering System

ii. Knowledge Based System

iii. Hybridized or Multiple Knowledge Source Question Answering System

5.3.1 IR-based question answering

IR or text-based QA relies on the vast amount of information that can be accessed on the Web as text or in ontologies (Jurafsky et al., 2015).

This type of QA answers a user’s query by retrieving text snippets from the Web or from corpora (Jurafsky et al., 2015). For a sample factoid query such as “Who is the president of Nigeria?”, a retrieved snippet might be “President Buhari on vacation in London”. IR methods extract passages directly from these documents, guided by the question asked. The IR-based QA system is explained below as it performs its function through the stages of the QA framework.

First, the method pre-processes the query to detect the answer type (for example person, location or time). Question classification can be performed with a heuristically informed classifier (Li and Roth 2005) or with a statistical classifier (Harabagiu et al., 2001; Pasca 2003). Question classification accuracies are relatively high for factoid questions with named entities as expected output, but question types like REASON and DESCRIPTION can be much harder, since they require a deep understanding of the query (Jurafsky et al., 2015).
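As a rough illustration of answer type detection, the following minimal sketch uses a handful of hand-written rules keyed on the opening words of the question. The rule set, the label names and the function `classify_answer_type` are assumptions made for this example; they are not taken from any of the cited systems, which use far richer features.

```python
import re

# Hypothetical hand-written rules: (pattern over the lowercased question, answer type).
# Order matters: the first matching rule wins.
ANSWER_TYPE_RULES = [
    (re.compile(r"^(who|whom|whose)\b"), "PERSON"),
    (re.compile(r"^where\b"), "LOCATION"),
    (re.compile(r"^when\b|^(what|which) year\b"), "TIME"),
    (re.compile(r"^how (many|much)\b"), "NUMBER"),
    (re.compile(r"^why\b"), "REASON"),
    (re.compile(r"^what (is|are)\b"), "DESCRIPTION"),
]


def classify_answer_type(question: str) -> str:
    """Return the expected answer type of a question, or OTHER if no rule fires."""
    q = question.strip().lower()
    for pattern, answer_type in ANSWER_TYPE_RULES:
        if pattern.search(q):
            return answer_type
    return "OTHER"


if __name__ == "__main__":
    for q in ["Who is the president of Nigeria?", "What is a noun?", "Why is the sky blue?"]:
        print(q, "->", classify_answer_type(q))
```

Rules of this kind are easy to write for factoid questions but say nothing about what a REASON or DESCRIPTION answer should contain, which is one way to see why those types are harder.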

The system then formulates queries to pose to search engines. This involves isolating from the question the terms that form a query, which is then posed to a retrieval system. This usually involves the removal of stop-words and punctuation. The query formulation depends on the data source (Jurafsky et al., 2015). Structured systems can represent their data in triples, tuples or more complex forms, and the generated query is expected to take the form in which the system’s data is represented.
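The query formulation step for a text source can be sketched as follows. The stop-word list and the function name `formulate_query` are assumptions for illustration; a real system would use a fuller stop-word list and might also expand or reweight terms.

```python
import string
from typing import List

# A small illustrative stop-word list (an assumption, not a standard resource).
STOP_WORDS = {
    "a", "an", "the", "is", "are", "was", "were", "of", "in", "on", "to",
    "do", "does", "did", "who", "what", "when", "where", "which", "how", "why",
}


def formulate_query(question: str) -> List[str]:
    """Strip punctuation and stop-words, keeping the remaining content terms."""
    no_punct = question.lower().translate(str.maketrans("", "", string.punctuation))
    return [token for token in no_punct.split() if token not in STOP_WORDS]


if __name__ == "__main__":
    print(formulate_query("Who is the president of Nigeria?"))
```

For a structured source, the same content terms would instead be arranged into the representation the source uses, for example a triple pattern with an unknown slot.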

The search engine yields ranked results, which are further split into passages for re-ranking. The major ranking methods used are statistical in nature (Wade et al., 2005) and include tf-idf, relevance modeling for scoring passages or documents, query-likelihood language modeling and SVM-based models. Passage ranking can also be achieved with supervised machine learning, using a set of attributes extracted from answer passages (Brill et al., 2001; Pasca 2003; Monz 2004).
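A minimal sketch of tf-idf passage scoring is given below, assuming whitespace tokenization and a smoothed idf of the form $\log\frac{1+N}{1+df}+1$. Both choices, and the names `tokenize` and `rank_passages`, are assumptions for this example rather than the formulation used in any cited work.

```python
import math
from collections import Counter
from typing import List

_PUNCT = ".,?!\"'"


def tokenize(text: str) -> List[str]:
    """Lowercase whitespace tokens with surrounding punctuation removed."""
    return [w.strip(_PUNCT).lower() for w in text.split() if w.strip(_PUNCT)]


def rank_passages(query: str, passages: List[str]) -> List[int]:
    """Return passage indices ordered from highest to lowest tf-idf score."""
    docs = [Counter(tokenize(p)) for p in passages]
    n = len(docs)
    scores = []
    for doc in docs:
        score = 0.0
        for term in set(tokenize(query)):
            df = sum(1 for d in docs if term in d)  # document frequency
            if df == 0:
                continue
            idf = math.log((1 + n) / (1 + df)) + 1.0  # smoothed idf
            score += doc[term] * idf  # term frequency times idf
        scores.append(score)
    return sorted(range(n), key=lambda i: scores[i], reverse=True)


if __name__ == "__main__":
    passages = [
        "Buhari is the president of Nigeria.",
        "Cocoa is exported by several states.",
        "The president visited London.",
    ]
    print(rank_passages("president Nigeria", passages))
```

The rarer query term ("nigeria") contributes more than the commoner one ("president"), so the passage containing both ranks first.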

Lastly, potential answer strings are extracted from the passages and ranked. Methods used for extraction include answer-type pattern extraction (Soubbotin 2001) and N-gram tiling (Brill et al., 2001). The expected answer type detected earlier helps guide the selection of the right answer (Juan et al., 2016).

5.3.2 Knowledge Based (KB) QA

The Information Retrieval method depends on the amount of text on the web, whereas knowledge-based methods rely on structured data. Popular ontologies like DBpedia (Bizer, Lehmann, Kobilarov, Auer, Becker, Cyganiak and Hellmann 2009) or Freebase (Bollacker, Evans, Paritosh, Sturge and Taylor 2008) contain triples extracted from Wikipedia info-boxes and the structured data in some Wiki articles.

Knowledge-based question answering involves answering a natural language question by mapping it to a query over an ontology (Jurafsky et al., 2015). The logical form derived from the mapping is used to retrieve facts from databases. The data source can be any complex structure, for example scientific facts or geospatial readings, which requires complex logical or SQL queries. It can also be a database of triples expressing simple relations, such as Freebase or DBpedia. Systems that map a text to a logical or query-like form are known as semantic parsers (Hoffner et al., 2016). Semantic parsers for QA map either to some predicate calculus or to a query language. To perform the mapping from the natural language question to a logical form, KB QA uses some of the following methods (Jurafsky et al., 2015):

i. The Rule-Based method: This focuses on developing manually created rules to extract frequently occurring associations from the query (Ravichandran et al., 2002).

ii. Supervised methods: This involves using training data that contains pairs of questions and their logical forms, and then creating a model that maps questions to their logical forms (Tirpude 2015).

iii. Semi-supervised method: Supervised datasets that fully represent all the forms a question can take have not yet been achieved, and for this reason most methods leverage textual redundancy to map queries to their canonical relations or other structures in knowledge (Jurafsky et al., 2015). This method is used by IBM Watson and most hybridized QA systems. The major home of redundancy is the web, which houses massive numbers of textual variants conveying different relations. To leverage the resourcefulness of the web, most approaches use it as a knowledge base (Fader et al., 2013). The alignment of these strings with structured knowledge sources helps create new relations (Jurafsky et al., 2015).
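The rule-based method (item i) can be sketched on a toy triple store as follows. The relation names, the two rules and the functions `parse` and `answer` are hypothetical and chosen only for illustration; production systems map to query languages over ontologies such as DBpedia rather than to an in-memory list.

```python
import re
from typing import List, Optional, Tuple

# Toy knowledge base of (subject, relation, object) triples.
# The relation names are illustrative, not drawn from a real ontology.
TRIPLES = [
    ("Ada Lovelace", "birth_year", "1815"),
    ("Ada Lovelace", "birth_place", "London"),
    ("Abuja", "capital_of", "Nigeria"),
]

# Hand-written rules mapping a question pattern to a relation.
RULES = [
    (re.compile(r"^when was (?P<subject>.+) born\??$", re.IGNORECASE), "birth_year"),
    (re.compile(r"^where was (?P<subject>.+) born\??$", re.IGNORECASE), "birth_place"),
]


def parse(question: str) -> Optional[Tuple[str, str]]:
    """Map a question to a (subject, relation) query, or None if no rule applies."""
    for pattern, relation in RULES:
        match = pattern.match(question.strip())
        if match:
            return match.group("subject"), relation
    return None


def answer(question: str) -> List[str]:
    """Run the parsed query against the triple store and return matching objects."""
    parsed = parse(question)
    if parsed is None:
        return []
    subject, relation = parsed
    return [o for s, r, o in TRIPLES if s.lower() == subject.lower() and r == relation]


if __name__ == "__main__":
    print(answer("When was Ada Lovelace born?"))
```

The sketch also shows the coverage limitation of hand-written rules: any question phrased outside the two patterns returns no answer, which is what motivates the supervised and semi-supervised methods.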

5.3.3 Using multiple information sources

This involves the use of both structured knowledge bases and text datasets in providing answers to queries (Chu-Carroll et al., 2003; Park et al., 2015). The DeepQA system is a practical example of a QA system hybridized on the basis of information source. It extracts a wide variety of information from the query (named entities, parses, relations, ontological information) and then retrieves candidate answers from both textual sources and knowledge bases. Each candidate answer is then scored using a number of knowledge sources, such as temporal reasoning, geospatial databases and taxonomical classification (Pakray et al., 2011; Jurafsky et al., 2015; Usbeck et al., 2015).

5.3.4 Classification based on forms of answers generated by QA system

There are two categories of answers: extracted and generated.

i. Extracted answers

Extracted answers are divided into three categories (Mishra et al., 2015): answers in the form of sentences, paragraphs and multimedia. The form of the answer depends mainly on the user’s query. Answers in sentence form are drawn from retrieved documents that are divided into individual sentences, and the highest ranked sentence is returned to the user. Usually, factoid or confirmation questions have extracted answers (Ng and Kan 2010; Baudiš et al., 2015; Juan et al., 2016). For example, questions like “Who is the highest paid coach in the premiership?” or “Who built the first Aeroplane?” are factoid questions. Answers in paragraph form are likewise drawn from retrieved documents that are divided into individual paragraphs (Wade et al., 2005). The paragraph that best qualifies as an answer is presented to the user. Causal or hypothetical queries are in this group. Causal questions require clarifications on the entities they contain (Sobrino, Olivas and Puente 2012); such questions, typically “how” or “why” questions, are posed by users who want elaborations, reasons or explanations. Multimedia files such as audio, video or sound clips can also be presented as answers to users (CHUA, Hong, Li, and Tang 2010; Bharat, Vilas, Uddav and Sugriv 2016).

ii. Generated answers

Generated answers are classified into confirmational answers (yes or no), opinionated answers or dialog answers (Mishra et al., 2015). Confirmation questions have generated answers in the form of either yes or no, produced via confirmation and reasoning. Opinionated answers are created by QA systems that give star ratings to an object or to features of the object. Dialog QA systems return answers to the questions asked in a dialog form (Hovy et al., 2000; Hirschman and Gaizauskas 2001; Bosma, Ghijsen, Johnson, Kole, Rizzo and Welbergen 2004).

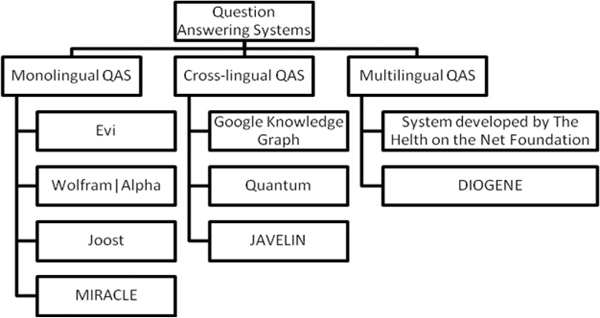

Figure 2 Language paradigm based classification of Question Answering systems (Lebedeva and Zaitseva 2015).

5.3.5 Classification based on language paradigm

Classification based on language paradigm divides QA systems by the number of languages used within query processing into three groups (Kaur et al., 2013) (as described in Figure 2).

5.3.5.1 Monolingual question answering systems

In this system, the user’s query, the resource documents and the system’s answer are expressed in a single language. START, Freebase and others process user queries expressed in English, using facts from a built-in database that are also expressed in English, and they return answers in the language in which the question was asked. Joost is applied to answer medical questions in Dutch (Fahmi 2009), and the MIRACLE Question Answering System answers questions in Spanish (Sanchez, Martinez, Ledesma, Samy, Martinez, Sandoval and Al-Jumaily 2007). A major problem of such systems is that they limit the type of question that can be asked (Pérez, Gómez and Pineda 2007), a limitation addressed by cross-lingual and multilingual QA systems. However, substantial work exists on merging the capabilities of several monolingual QA systems (Chu-Carroll et al., 2003; Sangoi-Pizzato and Molla-Aliod 2005; Jijkoun et al., 2006).

5.3.5.2 Cross-lingual question answering systems

In this system, the user’s query and the resource documents are expressed in different languages, and the query is translated into the language of the resource documents before the search. Google Knowledge Graph translates queries entered in other languages into English and returns an answer also in English. Quantum, an English-French cross-lingual system, translates the user’s query from French to English, processes it in English and translates the results from English to French (Foster et al., 2003). The JAVELIN system performs cross-lingual question answering between English and Chinese or Japanese (Kaur et al., 2013). The problem of translating queries between languages has been widely studied in the context of cross-language QA (Neumann and Sacaleanu 2005; Sutcliffe, Mulcahy, Gabbay, O’Gorman, White and Slatter 2005; Rosso, Buscaldi and Iskra 2007; Pérez et al., 2007). In contrast, integrating information obtained from different languages has not been fully explored in QA (Pérez et al., 2007).

5.3.5.3 Multilingual question answering systems

In this system, the user’s query and the resource documents are expressed in the same language, and the search is performed in the language in which the query was expressed. For example, the system developed by The Health on the Net Foundation supports English, French and Italian, while the DIOGENE system supports only two languages, English and Italian. In contrast to cross-lingual systems, questions are asked in either Italian or English, information retrieval is carried out in Italian or English, and the answer is given in the language of the query (Magnini et al., 2003).

This classification is useful for a user who is searching for information in a specific language that he or she does not know. An important issue in multilingual QA is the incorporation of information acquired from different languages into a graded list (Pérez et al., 2007).

5.3.6 Classification based on approaches

5.3.6.1 Linguistic approach

A full syntactic analysis of a text collection can be achieved with linguistic techniques such as Part of Speech Tagging, Tokenization, parsing and Lemmatization (Pundge et al., 2016), and with these capabilities it becomes realistic to exploit linguistic information in Question Answering systems, especially as a database for IR (Bouma 2005). This has helped many researchers combine AI and NLP techniques to organize knowledge in the form of logics, production rules, templates (represented with triple relations), frames, semantic networks and ontologies, which are useful for analyzing query-answer pairs (Dwivedi et al., 2013).

The linguistic approach uses these techniques to understand natural language text (Pundge et al., 2016), and this understanding has been exploited in different parts of a question answering system. For example, with the Apache Lucene IR system, the text collection can be indexed along various linguistic dimensions, such as NE classes, POS tags and dependency relations (Bouma 2005). Tiedemann (2005) used a genetic algorithm to optimize the use of such an extended Information Retrieval index and reported improvements in the results. Linguistic methods have also been applied to the user’s query, transforming it into a precise query that retrieves the corresponding answer from a structured database (Sasikumar and Sindhu 2014).

However, deploying linguistic techniques on a particular domain database poses a portability constraint, as a different application domain requires a total rewrite of the mapping rules and grammar (Dwivedi et al., 2013). Also, building a knowledge base is a slow process, so linguistic solutions are preferred for problems with long-term information needs in a domain (Dwivedi et al., 2013).

More recently, the limitation of the knowledge base has come to be seen as a capability to offer situation-specific answers. Clark, Thompson and Porter (1999) created a method for supplementing online text with knowledge-based QA capacity.

Existing QA systems using linguistic approach are highlighted in Table 2.

5.3.6.2 Statistical approach

The availability of a huge amount of data on the internet has amplified the importance of statistical methods (Pundge et al., 2016), because this approach offers techniques that can deal with very large amounts of data and with the heterogeneity that such data possess. Statistical methods do not depend on structured query languages and can formulate queries in natural language form. This approach requires sufficient data for accurate statistical learning, and once properly trained it produces better outcomes than other competing methods (Dwivedi et al., 2013). Also, the learned model can easily be adapted to a new domain, since it is independent of any language form.

Watson’s DeepQA architecture has shown that statistical learning methods give better results than other approaches (Murdock and Tesauro 2016). At each stage of the DeepQA processing pipeline, statistical analysis of many alternatives is performed as part of a massively parallel computation.

Statistical methods have also been able to cope with the incomplete or unstructured nature of web documents by using a source expansion algorithm that automatically extends a given text body with related information from unstructured sources (Schlaefer 2011). The expanded text body can then be used in QA and other IR or IE tasks to collect additional evidence for evaluating hypotheses.

Table 2 Linguistic based QA systems

| QA System | Description |

|---|---|

| Green et al. (1961) | This is a database-centered system that translates an NL query into a canonical query to answer questions. It answered queries on games played in a US league over a single season. It analyses the user query through linguistic knowledge against a structured database. |

| Woods (1973) | It parses English questions into a database query. This it achieves in two steps: syntactic analysis via augmented transition network parser and heuristics, and semantic analysis mapping parsed request into query language. LUNAR answered questions on rocks and soil types. |

| Weizenbaum (1966) | ELIZA was a system designed to mimic basic human question and answer exchanges. It used a structured database as its knowledge source. It simulated conversations using pattern matching and substitution methodologies. |

| Bobrow, Kaplan, Kay, Norman, Thompson and Winograd (1977) | The Genial Understander System (GUS) is a frame-driven dialog system that answers questions using a structured database as the knowledge source. The system achieves a semantic understanding of user inputs and, using a rule based method, creates an appropriate query to interact with a structured database. |

| Clark et al. (1999) | Knowledge-based question answering system that tries to answer questions using an inference engine component, while a rule based method is implemented for the inferencing. |

| Katz (2017) | Syntactic analysis using reversible transformations (STARTQA) is a Web-based Question Answering system that tries to answer questions asked in natural language in all domains. It uses a pattern matching technique to match queries produced from parse trees against a knowledge base. |

| Riloff et al. (2000) | This system uses some set of generated rules to answer reading comprehension tests over a structured base. |

| Hao, Chang and Liu (2007) | This is a Rule-based Chinese QA system for reading Comprehension test. |

| Androutsopoulos et al. (1993) | A QA system that represents natural language queries in a logic form and then converts the logical representation into a database query in order to retrieve information. |

| Fu, Jia and Xu (2009) | This work was based on frequent queries and music ontologies. The system answers musical questions using the methods listed. |

| Bhoir and Potey (2014) | This work developed a QA system for a particular domain of tourism. The system returns exact answers to tourism related queries. It uses a pattern matching approach. |

However, statistical methods still treat each term of a query individually and fail to recognize linguistic features that join words or phrases, which is one of their major drawbacks.

Most statistical QA systems apply a statistical technique such as an SVM classifier, Bayesian classifiers or maximum entropy models (Sangodiah Muniandy and Heng 2015).

5.3.6.3 Pattern matching approach

The pattern matching method exploits the expressive power of text patterns. It replaces the sophisticated processing used in other computing methods. Many QA systems learn text structures automatically from passages instead of using complex linguistic knowledge for retrieving answers (Dwivedi et al., 2013). The simplicity of such systems makes them quite favorable for small-scale implementation and use. Most pattern matching Question Answering systems use surface text patterns, while others rely on templates for response generation. The surface pattern based approach is used to find answers to factual questions (Ravichandran et al., 2002), while templates are used for closed domain systems (Guda, Sanampudi and Manikyamba 2011).

i. Surface pattern based

This method extracts answers from the surface structure of retrieved texts by using an extensive list of patterns, which can be handcrafted or automatically generated. The answer to a query is recognized based on the similarity between patterns that share certain semantics. Because of the level of human involvement in designing such a set of patterns, the approach shows high precision. It replaces the sophisticated processing involved in other competing methods (Ravichandran et al., 2002), but its downside is that rules must be written for each domain based on that domain’s constructs and requirements. Some existing works include: Hovy et al. (2000), Soubbotin (2001), Zhang and Lee (2002) and Cui et al. (2007).
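A minimal sketch of surface pattern matching for one question type (birth year) follows. The two patterns and the function names are handcrafted assumptions for this example; they are in the spirit of, but not copied from, the patterns learned in the cited works.

```python
import re
from collections import Counter
from typing import List, Optional, Pattern


def birth_year_patterns(name: str) -> List[Pattern[str]]:
    """Build surface patterns for a birth-year question about the given name."""
    n = re.escape(name)
    return [
        re.compile(n + r" \((\d{4})\s*-"),        # e.g. "Mozart (1756 - 1791)"
        re.compile(n + r" was born in (\d{4})"),  # e.g. "Mozart was born in 1756"
    ]


def extract_birth_year(name: str, passages: List[str]) -> Optional[str]:
    """Return the most frequently matched year, or None if no pattern matches."""
    hits: List[str] = []
    for passage in passages:
        for pattern in birth_year_patterns(name):
            hits.extend(pattern.findall(passage))
    if not hits:
        return None
    return Counter(hits).most_common(1)[0][0]


if __name__ == "__main__":
    passages = [
        "Mozart (1756 - 1791) was a composer.",
        "Mozart was born in 1756 in Salzburg.",
        "Salzburg is a city in Austria.",
    ]
    print(extract_birth_year("Mozart", passages))
```

No parsing or semantic analysis is involved, which is the source of both the simplicity and the domain dependence noted above: a new question type needs a new set of patterns.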

ii. Template based approach

A template based method uses preformatted patterns for queries. The focus of this method is on illustration rather than on the interpretation of queries and answers. The set of templates is designed to contain the ideal number of templates needed to ensure adequate coverage of the problem space (Saxena, Sambhu, Kaushik and Subramaniam 2007; Unger et al., 2012). Previous works include an automated QA system using query templates that cover the abstract model of the database, built on Frequently Asked Questions (FAQ) (Chung et al., 2004). More information can be found in Gunawardena, Lokuhetti, Pathirana, Ragel and Deegalla (2010) and Unger et al. (2012).

Table 3 Statistical QA systems

| QA System | Description |

|---|---|

| Ittycheriah, Franz, Zhu, Ratnaparkhi and Mammone (2000) | This system performs question and answer classification using Maximum entropy model based on numerous N-gram or bag of words features. |

| Cai, Dong, Lv, Zhang and Miao (2005) | They proposed a Question Answering System in Chinese, by training a Sentence Similarity Model for answer validation. |

| Suzuki, Sasaki and Maeda (2002) | This is an open domain QA system that performs answer selection using Support Vector Machine. |

| Moschitti (2003) | This work proposed an approach for Query and Answer classification. The approach tested on Reuters-21578. Classification was achieved using Support Vector Machine. |

| Zhang and Zhao (2010) | This work proposed Support Vector Machine for question classification and answer clustering in Chinese using word features. |

| Quarteroni et al. (2009) | This work designed an Interactive Question Answering System using SVM classifier for question classification. |

| Berger, Caruana, Cohn, Freitag and Mittal (2000) | This work performs answer finding using a Statistical approach (N-gram Mining technique). |

| Radev, Fan, Qi, Wu and Grewal (2002) | This work proposed probabilistic phrase re-ranking algorithm (PPR), which uses proximity and query type features to extend the capability of search engines to support NL question answering. |

| Li et al. (2002) | Li and Roth developed a machine learning approach which uses a hierarchical classifier. |

| Wang, Zhang, Wang and Huang (2009) | This work proposed the use of semantic gram and n-gram model to achieve high accuracy in question classification. |

| Mollaei, Rahati-Quchani and Estaji (2012) | This work proposed to classify Persian based language questions using Conditional Random Fields (CRF) classifier. |

iii. Hybrid approach

Different works have tried to overcome the downsides of individual approaches by constructing a larger system that combines some of the approaches mentioned. In some of these systems, the answer is decided by a voting system (Pakray et al., 2011), while others assign decisions to the approach that best suits the type of question asked (Jurafsky et al., 2015). Results from the experiments carried out by Chu-Carroll et al. (2003) illustrate substantial performance enhancement from merging statistical and linguistic methods in QA. Some other hybrid systems are listed in Table 3.
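The voting idea can be sketched as a simple majority vote over the answers proposed by several components. This is an assumption-laden illustration: the function `vote` and the normalization by lowercasing are choices made here, and the cited systems may weight or combine candidate answers differently.

```python
from collections import Counter
from typing import List, Optional


def vote(candidate_answers: List[Optional[str]]) -> Optional[str]:
    """Return the most common normalized answer; components may abstain with None."""
    normalized = [a.strip().lower() for a in candidate_answers if a]
    if not normalized:
        return None
    return Counter(normalized).most_common(1)[0][0]


if __name__ == "__main__":
    # Hypothetical outputs of a linguistic, a statistical and a pattern based component.
    print(vote(["Abuja", "abuja ", "Lagos", None]))
```

The alternative strategy mentioned above, routing each question to the approach best suited to its type, would replace the vote with a dispatch on the detected question type.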

6 Comparison with Other Surveys

QA reviews have taken different perspectives over the years. This section highlights other QA reviews, their approaches and how this work differs from them.

Hovy et al. (2000) classified QA systems based on approaches. The approaches identified are Information Retrieval and Natural Language Processing. The IR approach retrieves the document that best matches the query, while the pure NLP approach focuses on understanding, syntactically and semantically, both the question asked and the sentences in the documents being considered, and then generates the best matched sentence as the answer.

Vicedo and Molla (2001) grouped QA systems based on the level of involvement of NLP techniques in answer generation. The groupings are:

a. QA systems without NLP methods.

b. QA systems that use shallow NLP methods.

c. QA systems that use deep NLP methods.

Benamara (2004) surveyed QA systems based on generated responses. The categories are: Questions with short answers (factoid, yes or no question) and Questions with long answers (causal, descriptive, comparison questions).

Goh and Cemal (2005) classified QA systems based on the approaches they use. The two types are:

a. Information retrieval (IR) and Natural language processing (NLP): this embodies the use of semantic analysis, syntax processing, information retrieval and named entity recognition techniques.

b. Reasoning and Natural language understanding (NLU): some of the techniques used are discourse or pragmatical analysis along with syntax processing and semantic analysis.

Vanitha et al. (2010) based their review on QA classification. They classified QA systems as Information Retrieval based QA systems, Web based QA systems, Restricted domain QA systems and rule based QA systems.

Lopeza et al. (2011) performed a classification review based on: Question type, Scope (open domain or restricted domain), data sources, and Flexibility to various basic problems (such as heterogeneity and ambiguity).

Tirpude (2015) provides an overview of QA and its system architecture (framework), as well as the previous related work comparing each research against the others with respect to the components that were covered and the approaches that were followed.

Mishra et al. (2015) did a comprehensive review on QA system classification based on all commonly known approaches. The approaches include: Question Type, Data Source, Processing Methods, Retrieval Model, Answer form and Data Source characteristics.

Saini et al. (2017) surveyed the QA framework, evaluating its current and emerging status and visualizing its future scope and trends. They identified the three core components of a QA system as the information retrieval, question classification and answer extraction modules.

Pundge et al. (2016) briefly introduced the QA framework, listed the QA issues and finally carried out a comprehensive review of QA approaches. The approaches include: linguistic, statistical, pattern matching and hybrid.

Our work captures a much broader scope which includes a review of: the generic QA framework, issues in QA systems and finally an adequate classification of QA systems based on commonly known approaches used in surveyed literature.

7 Conclusions

In this paper, we take a short study of the generic QA framework (Question Analysis, Passage Retrieval and Answer extraction), issues of QA system (Question Processing, Question Classes, Data Sources for QA, Context and QA, Answer Extraction, Real time Question Answering, Answer Formulation, Interactive QA, Multilingual (or cross-lingual) question answering, Information clustering for QA, Advanced reasoning for QA and User profiling for QA) and finally we classify QA systems based on some identified criteria (Application domain, Question type, Data source, Form of answer generated, Language paradigm and Approaches) in literature. We make an informed judgement of the basis for each classification criterion through literature on QA systems.

The generic QA architecture can be modified by including some validation modules or removing some modules to fit the question environment under consideration. The performance of each module in the framework depends on the one before. That is, a proper query formulation from the Question Analysis phase increases the likelihood of retrieving important passages in the passage retrieval phase and finally enhancing the probability of extracting the right answers in the answer extraction module. This then calls for the implementation of efficient methods in each module in the QA framework.

The issues of QA systems identified in this work have become the backbone of new research areas in Question Answering. A lot of work has been done to address these issues, such as covering question classes manually and automatically, TREC’s introduction of the live question answering track for real time questions, the ability of IBM Watson to answer questions based on context and many more. But in the light of new research, some issues still remain a challenge in the QA domain. The inability to access knowledge in a natural way still calls for more research. Many languages still lack resources, forcing the need to convert to another language for processing. Problems like disambiguation, learning context and many more still exist and could be explored in further research.

The performance of QA systems is increased by combining different techniques to offset the inefficiency of individual implementations, as seen in hybridized systems. However, such a combination is expensive for a simple QA system and even more expensive and time consuming for complex ones, hence the need for better ways of merging techniques in QA systems. The output of a QA system is also reliant on a good knowledge base and a clear understanding of the user’s question. This understanding is extracted using NLP techniques, and failure on the part of these techniques affects the answers generated by the system. Future research should be directed at the optimization and modularization of existing, better performing QA techniques. This will motivate the re-use of quality modules and help researchers focus on and isolate core research problems.

References

Agichtein, E., and Savenkov, D. 2016. CRQA: Crowd-Powered Real-Time Automatic Question Answering System. HCOMP.

Ahmed, W., and Babu, P. A. 2016. Answer Extraction and Passage Retrieval for Question Answering Systems. International Journal of Advanced Research in Computer Engineering and Technology (IJARCET), 5(12). ISSN: 2278-1323.

Androutsopoulos, I., Ritchie, G. D., and Thanisch, P. 1993. MASQUE/SQL – an efficient and portable natural language query interface for relational databases, In: Proc. of the 6th International Conference on Industrial and Engineering Applications of Artificial Intelligence and Expert Systems, Gordon and Breach Publisher Inc., Edinburgh, pp. 327–30.

Attardi, G., Cisternino, A., Formica, F., Simi, M., and Tommasi, A. 2001. PiQAS: Pisa Question Answering System. TREC.

Banerjee, S., and Bandyopadhyay, S. 2012. Bengali Question Classification: Towards Developing QA System. In: Proceedings of the 3rd Workshop on South and Southeast Asian Natural Language Processing (SANLP), pp. 25–40, COLING 2012, Mumbai.

Basic, B. D., Lombarovic, T., and Snajder, J. 2011. Question Classification for a Croatian QA System. In: Habernal I., Matousek V. (eds) Text, Speech and Dialogue. TSD 2011. Lecture Notes in Computer Science, 6836. Springer, Berlin, Heidelberg.

Baudiš, P., and Šedivy, J. 2015. Modeling of the Question Answering task in the YodaQA system. In Proceedings, Lecture Notes in Computer Science, 9283, Springer, pp. 222–8.

Benamara, F. 2004. Cooperative question answering in restricted domains: the WEBCOOP experiments, In: Workshop on Question Answering in Restricted Domains, 42nd Annual Meeting of the Association for Computational Linguistics, Barcelona, Spain, pp. 31–8.

Benamara, F., and Kaci, S. 2006. Preference Reasoning in Advanced Question Answering Systems, In: A. Sattar, B. Kang (eds) Advances in Artificial Intelligence, AI 2006, Lecture Notes in Computer Science, vol. 4304, Springer, Berlin, Heidelberg.

Berger, A., Caruana, R., Cohn, D., Freitag, D., and Mittal, V. 2000. Bridging the lexical chasm: statistical approaches to answer-finding, In Proceedings of the 23rd annual international ACM SIGIR conference on Research and development in information retrieval, pp. 192–99.

Bergeron, J., Schmidt, A., Khoury, R., and Lamontagne, L. 2016. Building User Interest Profiles Using DBpedia in a Question Answering System. AAAI Publications, The Twenty-Ninth International Flairs Conference.

Bharat, V. K., Vilas, D. P., Uddav, D. S., and Sugriv, D. V. 2016. Multimedia Question Answering System. International Journal of Engineering and Techniques, 2(2).

Bhaskar, P., Banerjee, S., Pakray, P., Banerjee, S., Bandyopadhyay, S., and Gelbukh, A. 2013. A Hybrid Question Answering System for Multiple Choice Question (MCQ). QA4MRE@CLEF 2013.

Bhoir, V., and Potey, M. A. 2014. Question answering system: A heuristic approach. The Fifth International Conference on the Applications of Digital Information and Web Technologies (ICADIWT), pp. 165–70.

Biplab, C. D. 2014. A Survey on Question Answering System. Department of Computer Science and Engineering, Indian Institute of Technology, Bombay.

Bizer, C., Lehmann, J., Kobilarov, G., Auer, S., Becker, C., Cyganiak, R., and Hellmann, S. 2009. DBpedia-A crystallization point for the Web of Data. Web Semantics: Science, Services and Agents on the World Wide Web, 7(3):154–65.

Bobrow, D. G., Kaplan, R. M., Kay, M., Norman, D. A., Thompson, H., and Winograd, T. 1977. GUS, a frame-driven dialog system. Artificial Intelligence, 8(2):155–73.

Bollacker, K., Evans, C., Paritosh, P., Sturge, T., and Taylor, J. 2008. Freebase: a collaboratively created graph database for structuring human knowledge, In SIGMOD, pp. 1247–50.

Bosma, W., Ghijsen, M., Johnson, W. L., Kole, S., Rizzo, P., and Welbergen, H. V. 2004. Generating Socially Appropriate Tutorial Dialog. ADS 2004, pp. 254–64.

Bouma, G. 2005. Linguistic Knowledge and Question Answering. In: Proceedings of the Workshop KRAQ’06 on Knowledge and Reasoning for Language Processing, pp. 2–3.

Bouziane, A., Bouchiha, D., Doumi, N., and Malki, M. 2015. Question Answering Systems: Survey and Trends, Procedia Computer Science 73:366–75.

Brill, E., Lin, J., Banko, M., Dumais, S., and Ng, A. 2001. Data-intensive question answering, In TREC, pp. 393–400.

Burger, J., Cardie, C., Chaudhri, V., and Weischedel, R. 2001. Issues, task and program structure to roadmap research in question answering (Q&A). https://www.researchgate.net/publication/228770683_Issues_tasks_and_program_structures_to_roadmap_research_in_question_answering_QA.

Burke, R. D., Hammond, K. J., and Kulyukin, V. 1997. Question Answering from Frequently-Asked Question Files: Experiences with the FAQ Finder system. Tech. Rep. TR-97-05.

Cai, D., Dong, Y., Lv, D., Zhang, G., and Miao, X. 2005. A Web-based Chinese question answering with answer validation. In Proceedings of IEEE International Conference on Natural Language Processing and Knowledge Engineering, pp. 499–502.

Carbonell, J., Harman, D., and Hovy, E. 2000. Vision statement to guide research in question and answering (Q&A) and text summarization. https://www-nlpir.nist.gov/projects/duc/papers/Final-Vision-Paper-v1a.ps

Chai, J. Y., and Jin, R. 2004. Discourse structure for context question answering. Department of Computer Science and Engineering Michigan State University East Lansing, MI 48864.

Chen, W., Zeng, Q., Wenyin, L., and Hao, T. 2007. A user reputation model for a user-interactive question answering system. Concurrency Computat.: Pract. Exper., pp. 2091–103.

Chua, T. S., Hong, R., Li, G., and Tang, J. 2010. Multimedia Question Answering. Scholarpedia, 5(5):9546.

Chu-Carroll, J., and Fan, J. 2011. Leveraging Wikipedia characteristics for search and candidate generation in question answering, AAAI’11 Proceedings of the twenty-fifth AAAI conference on Artificial Intelligence, pp. 872–7.

Chu-Carroll, J., Ferrucci, D., Prager, J., and Welty, C. 2003. Hybridization in Question Answering Systems. From: AAAI Technical Report SS-03-07.

Chung, H., Song, Y. I., Han, K. S., Yoon, D. S., Lee, J. Y., and Rim, H. C. 2004. A Practical QA System in Restricted Domains. In Workshop on Question Answering in Restricted Domains, 42nd Annual Meeting of the Association for Computational Linguistics (ACL), pp. 39–45.

Clark, P., Thompson, J., and Porter, B. 1999. A knowledge-based approach to question answering, In Proceedings of AAAI’99 Fall Symposium on Question-Answering Systems, pp. 43–51.

Clarke, C., Cormack, G., and Lynam, T. 2001. Exploiting redundancy in question answering. In Proceedings of the 24th SIGIR Conference, pp. 358–65.

Cui, H., Kan, M. Y., and Chua, T. S. 2007. Soft pattern matching models for definitional question answering. ACM Trans. Inf. Syst. (TOIS) 25(2).

Datla, V., Hasan, S. A., Liu, J., Lee, Y. B. K., Qadir, A., Prakash, A., and Farri, O. 2016. Open Domain Real-Time Question Answering Based on Semantic and Syntactic Question Similarity. Artificial Intelligence Laboratory, Philips Research North America, Cambridge, MA, USA.

Dong, T., Furbach, U., Glockner, I., and Pelzer, B. 2016. A Natural Language Question Answering System as a Participant in Human Q&A Portals. In: Proceedings of the Twenty-Second International Joint Conference on Artificial Intelligence (IJCAI’11), Barcelona, Catalonia, Spain, 3:2430–5.

Dwivedi, S. K., and Singh, V. 2013. Research and reviews in question answering system. International Conference on Computational Intelligence: Modeling Techniques and Applications (CIMTA), Procedia Technology, pp. 417–24.

Echihabi, A., Hermjakob, U., Hovy, E., Marcu, D., Melz, E., and Ravichandran, D. 2008. How to Select Answer String? In: Strzalkowski T., Harabagiu S.M. (eds) Advances in Open Domain Question Answering. Text, Speech and Language Technology, 32, Springer, Dordrecht.

Fader, Zettlemoyer, L. S., and Etzioni, O. 2013. Paraphrase-driven learning for open Question Answering, In Proceedings of the 51st Annual Meeting of the Association for Computational Linguistics, ACL, pp. 4–9.

Fahmi. 2009. Automatic Term and Relation Extraction for Medical Question Answering System, PhD Thesis, University of Groningen, Netherlands, pp.13–4.

Fan, B., Xingwei, Z., and Hau, Y. 2010. Function based question classification for general QA, In: Proceedings of Conference on Empirical Methods in Natural Language Processing, MIT USA, pp. 1119–28.

Ferrucci, D., Brown, E., Chu-Carroll, J., Fan, J., Gondek, D., Kalyanpur, A. A., and Lally, A. 2010. Building Watson: An overview of the DeepQA project. AI Magazine, 31(3):59–79.

Forner, P., Giampiccolo, D., Magnini, B., Peñas, A., Rodrigo, Á., and Sutcliffe, R. 2008. Evaluating Multilingual Question Answering Systems at CLEF.

Foster, G. F., and Plamondon, L. 2003. Quantum, a French/English Cross-Language Question Answering System. CLEF.

Fu, J., Jia, K., and Xu, J. 2009. Domain Ontology Based Automatic Question Answering, International Conference on Computer Engineering and Technology, pp. 346–349.

Giampiccolo, D., Forner, P., Herrera, J., Peñas, A., Ayache, C., Forascu, C., Jijkoun, V., Osenova, P., Rocha, P., Sacaleanu, B., and Sutcliffe, R. 2007. Overview of the CLEF 2007 Multilingual Question Answering Track, In: Peters, C., Jijkoun, V., Mandl, T., Müller, H., Oard, D. W., Peñas, A., and Santos, D. (Eds.), Advances in Multilingual and Multimodal Information Retrieval, 8th Workshop of the Cross-Language Evaluation Forum, CLEF, Revised Selected Papers.

Gliozzo, A. M., and Kalyanpur, A. 2012. Predicting Lexical Answer Types in Open Domain QA, Int. J. Semantic Web Inf. Syst., 8:74–88.

Goh, O. S., and Cemal, A. 2005. Response quality evaluation in heterogeneous question answering system: a black-box approach. World Academy of Sciences Engineering and Technology.

Green, B. F., Wolf, A. K., Chomsky, C., and Laughery, K. 1961. Baseball: An automatic question answerer. Readings in natural language processing. pp. 545–9. Morgan Kaufmann Publishers Inc.

Guda, V., Sanampudi, S. K., and Manikyamba, I. L. 2011. Approaches for question answering systems. International Journal of Engineering science and technology (IJEST), ISSN:0975-5462, 3(2).

Gunawardena, T., Lokuhetti, M., Pathirana, N., Ragel, R., and Deegalla, S. 2010. An automatic answering system with template matching for natural language questions. In Proceedings of 5th IEEE International Conference on Information and Automation for Sustainability (ICIAFs), pp. 353–8.

Hakimov, S., Tunc, H., Akimaliev, M., and Dogdu, E. 2013. Semantic Question Answering system over Linked Data using relational patterns, In Workshop Proceedings, New York, USA, pp. 83–8.

Hans, A. F., Feiyu, U. K., Uszkoreit, X. H., Brigitte, B. C., and Schäfer, J. U. 2006. Question answering from structured knowledge sources, German Research Center for Artificial Intelligence, DFKI.

Hao, X., Chang, X., and Liu, K. 2007. A Rule-based Chinese question Answering System for reading Comprehension Tests, In 3rd International Conference on International Information hiding and Multimedia Signal Processing, 2:325–9.

Harabagiu, S., Moldovan, D., Pasca, M., Mihalcea, R., Surdeanu, M., Bunescu, R., Girju, R., Rus, V., and Morarescu, P. 2001. The Role of Lexico-Semantic Feedback in Open-Domain Textual Question Answering. In Proceedings of ACL.

Herrera, J., Peñas, A., and Verdejo, F. 2005. Question Answering Pilot Task at CLEF 2004, Multilingual Information Access for Text, Speech and Images, 3491. Lecture Notes in Computer Science, pp. 581–90.

Higashinaka, R., and Isozaki, H. 2008. Corpus-based question answering for why-questions, In: Proceedings of the International Joint Conference on Natural Language Processing (IJCNLP), pp. 418–25.

Hirschman, L., and Gaizauskas, R. 2001. Natural Language Engineering Cambridge University Press. http://start.csail.mit.edu/index.php.

Hoffner, K., Walter, S., Marx, E., Usbeck, R., Lehmann, J., and Ngomo, A. N. 2016. Survey on Challenges of Question Answering in the Semantic Web Proceedings. Semantic Web, pp. 1–26.

Hovy, E., Gerber, L., Hermjakob, U., Junk, M., and Lin, C. Y. 2000. Question Answering in Webclopedia. In Proceedings of TREC, Gaithersburg, MD.

Iftene, A. 2009. Textual Entailment. Ph.D Thesis, Al. I. Cuza University, Faculty of Computer Science, Iasi, Romania.

Indurkhya, N., and Damerau, F. J. 2010. Handbook of Natural Language Processing. Second ed. Chapman and Hall/CRC, Boca Raton.

Ittycheriah, A., Franz, M., Zhu, W. J., Ratnaparkhi, A., and Mammone, R. J. 2000. IBM’s statistical question answering system, In Proceedings of the Text Retrieval Conference TREC-9.

Jijkoun, V., and de Rijke, M. 2006. Overview of the WiQA Task at CLEF 2006, In 7th Workshop of the Cross-Language Evaluation Forum, CLEF, Revised Selected Papers.

Jones, S. 1972. A statistical interpretation of term specificity and its application in retrieval. Journal of Documentation, 28(1):11–21.

Juan, L., Chunxia, Z., and Zhendong, N. 2016. Answer Extraction Based on Merging Score Strategy of Hot Terms. Chinese Journal of Electronics, 25(4).

Jurafsky, D., and Martin, J. H. 2015. Question Answering. In: Computational Linguistics and speech recognition. Speech and Language Processing. Colorado.

Kan, K. L., and Lam, W. 2006. Using semantic relations with world knowledge for question answering. In: Proceedings of TREC.

Katz, B. 2017. http://start.csail.mit.edu/index.php.

Katz, Felshin, B., Yuret, S., Ibrahim, D., and Temelkuran, B. 2002. Omnibase: uniform access to heterogeneous data for question answering, In: Proceedings of the 7th International Workshop on Applications of Natural Language to Information Systems (NLDB).

Kaur, and Gupta, V. 2013. Effective Question Answering Techniques and their Evaluation Metrics. International Journal of Computer Applications, 65(12):30–7.

Kolomiyets, O. 2011. A survey on question answering technology from an information retrieval perspective. Inf. Sci. 181(24):5412–34.

Kosseim, L., Plamondon, L., and Guillemette, L. J. 2003. Answer Formulation for Question-Answering. In: Xiang Y., Chaib-draa B. (eds) Advances in Artificial Intelligence, AI 2003, Lecture Notes in Computer Science, 2671.

Kumar, S. G., and Zayaraz, G. 2014. Concept relation extraction using Naive Bayes classifier for ontology-based question answering systems. J. King Saud Univ.

Kwok, C., Etzioni, O., and Weld, D. S. 2001. Scaling question answering to the Web. ACM Transactions on Information Systems (TOIS), 19(3):242–62.

Lally, Prager, J. M., McCord, M. C., Boguraev, B. K., Patwardhan, S., Fan, J., Fodor, P., and Chu-Carroll, J. 2012. Question analysis: How Watson Reads a clue. IBM J. Res. and Dev., 56(3/4), pp. 1–14.

Lamel, L., Rosset, S., Ayache, C., Mostefa, D., Turmo, J., and Comas, P. 2007. Question Answering on Speech Transcriptions: the QAST Evaluation in CLEF, In 8th Workshop of the Cross-Language Evaluation Forum, CLEF 2007, Budapest, Hungary, Revised Selected Papers.

Lebedeva, O., and Zaitseva, L. 2015. Question Answering Systems in Education and their Classifications. In: Proceedings of Joint International Conference on Engineering Education and International Conference on Information Technology (ICEE/ICIT 2014), Riga, Latvia. Riga: RTU Press, pp. 359–66.

Lee, Y. H., Lee, C. W., Sung, C. L., Tzou, M. T., Wang, C. C., Liu, S. H., Shih, C. W., Yang, P. Y., and Hsu, W. L. 2008. Complex question answering with ASQA at NTCIR-7 ACLIA, In Proceedings of NTCIR-7 Workshop Meetings, Entropy, 1(10).

Li, X., and Roth, D. 2002. Learning question classifiers, In: Proceedings of the 19th International Conference on Computational Linguistics (COLING’02).

Li, X., and Roth, D. 2005. Learning question classifiers: The role of semantic information. Journal of Natural Language Engineering, 11(4):2005.

Lin, J., Quan, D., Sinha, V., Bakshi, K., Huynh, D., Katz, B., and Karger, D. R. 2003. What Makes a Good Answer? The Role of Context in Question Answering, MIT AI Laboratory/LCS Cambridge, MA 02139, USA.